Zhiming Liu

Do All Individual Layers Help? An Empirical Study of Task-Interfering Layers in Vision-Language Models

Feb 01, 2026

Abstract: Current vision-language models (VLMs) have demonstrated strong capabilities across a wide range of multimodal tasks. Typically, all layers of a pretrained VLM are engaged by default when making predictions on downstream tasks. We find that intervening on a single layer, for example by zeroing its parameters, can improve performance on certain tasks, indicating that some layers hinder rather than help those tasks. We systematically investigate how individual layers influence different tasks via layer intervention. Specifically, we measure the change in performance relative to the base model after intervening on each layer and observe improvements when specific layers are bypassed. These improvements can generalize across models and datasets, indicating the presence of Task-Interfering Layers that harm performance on downstream tasks. We introduce the Task-Layer Interaction Vector, which quantifies the effect of intervening on each layer of a VLM for a given task. Task-interfering layers exhibit task-specific sensitivity patterns: tasks requiring similar capabilities show consistent response trends under layer interventions, as evidenced by the high similarity of their task-layer interaction vectors. Inspired by these findings, we propose TaLo (Task-Adaptive Layer Knockout), a training-free, test-time adaptation method that dynamically identifies and bypasses the most interfering layer for a given task. Without any parameter updates, TaLo improves performance across various models and datasets, including boosting Qwen-VL's accuracy on the Maps task in ScienceQA by up to 16.6%. Our work reveals an unexpected form of modularity in pretrained VLMs and provides a plug-and-play, training-free mechanism to unlock hidden capabilities at inference time. The source code will be publicly available.
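To make the layer-knockout procedure concrete, here is a minimal toy sketch in Python/NumPy; it is not the authors' TaLo code. It assumes a residual stack in which bypassing layer i means skipping its update h <- h + f_i(h), a small labeled probe set for scoring, and accuracy as the metric. The functions `task_layer_interaction_vector` and `most_interfering_layer` and the hand-built layers are hypothetical illustrations; how TaLo selects the layer at test time is not specified in the abstract.

```python
import numpy as np

def run_model(x, layers, skip=None):
    """Apply residual layers h <- h + f(h); optionally bypass one layer index."""
    h = x
    for i, f in enumerate(layers):
        if i == skip:
            continue  # knockout: the residual stream passes through unchanged
        h = h + f(h)
    return h

def accuracy(x, y, layers, head, skip=None):
    """Top-1 accuracy of a linear head on the (possibly knocked-out) model output."""
    logits = run_model(x, layers, skip) @ head
    return float(np.mean(logits.argmax(axis=1) == y))

def task_layer_interaction_vector(x, y, layers, head):
    """Per-layer accuracy change relative to the base model when that layer is bypassed."""
    base = accuracy(x, y, layers, head)
    return np.array([accuracy(x, y, layers, head, skip=i) - base
                     for i in range(len(layers))])

def most_interfering_layer(tliv):
    """Index whose knockout helps most, or None if no single knockout helps."""
    best = int(np.argmax(tliv))
    return best if tliv[best] > 0 else None

def cosine_similarity(a, b):
    """Similarity of two tasks' interaction vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

# Toy task: predict which of two features is larger.
rng = np.random.default_rng(0)
x = rng.normal(size=(200, 2))
y = x.argmax(axis=1)
head = np.eye(2)
layers = [
    lambda h: 0.1 * h,                      # harmless rescaling
    lambda h: -2.0 * h + 2.0 * h[:, ::-1],  # "interfering" layer: swaps the class evidence
    lambda h: 0.1 * h,                      # harmless rescaling
]
tliv = task_layer_interaction_vector(x, y, layers, head)
layer = most_interfering_layer(tliv)  # layer 1: bypassing it restores accuracy
```

Cosine similarity between two tasks' vectors is one simple way to compare their layer-sensitivity patterns, in the spirit of the similarity analysis the abstract describes.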

Adaptive Disentangled Representation Learning for Incomplete Multi-View Multi-Label Classification

Jan 09, 2026

Abstract: Multi-view multi-label learning frequently suffers from simultaneous feature absence and incomplete annotations, owing to difficulties in data acquisition and cost-intensive supervision. To tackle this complex yet highly practical problem while overcoming the limitations of existing methods in feature recovery, representation disentanglement, and label semantics modeling, we propose an Adaptive Disentangled Representation Learning method (ADRL). ADRL achieves robust view completion by propagating feature-level affinity across modalities with neighborhood awareness, and reinforces reconstruction effectiveness with a stochastic masking strategy. By propagating category-level associations across label distributions, ADRL refines distribution parameters to capture interdependent label prototypes. In addition, we formulate a mutual-information-based objective that promotes consistency among shared representations and suppresses information overlap between each view-specific representation and the other modalities. Theoretically, we derive tractable bounds to train the dual-channel network. Moreover, ADRL performs prototype-specific feature selection by enabling independent interactions between label embeddings and view representations, accompanied by the generation of pseudo-labels for each category. The structural characteristics of the pseudo-label space are then exploited to guide a discriminative trade-off during view fusion. Finally, extensive experiments on public datasets and real-world applications demonstrate the superior performance of ADRL.
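Neighborhood-aware view completion can be pictured with a much simpler, non-learned stand-in: fill a sample's missing view by averaging that view over its nearest neighbours, where the neighbours are found in a view the sample does have. The sketch assumes two views, Euclidean affinity and a fixed `k`. ADRL itself learns this propagation jointly with masking, disentanglement and label modeling, none of which is reproduced here, and the function name is hypothetical.

```python
import numpy as np

def knn_cross_view_impute(view_a, view_b, present_b, k=3):
    """Fill missing rows of view_b from neighbours found in view_a.

    view_a:    (n, d_a) features, assumed observed for every sample.
    view_b:    (n, d_b) features; rows with present_b == False are missing.
    present_b: (n,) boolean mask of samples whose view_b is observed.
    """
    filled = view_b.copy()
    observed = np.flatnonzero(present_b)
    for i in np.flatnonzero(~present_b):
        # Affinity is measured in the available view (view_a).
        dists = np.linalg.norm(view_a[observed] - view_a[i], axis=1)
        nearest = observed[np.argsort(dists)[:k]]
        # Propagate: the missing view becomes the mean of the neighbours' view_b.
        filled[i] = view_b[nearest].mean(axis=0)
    return filled

view_a = np.array([[0.0], [0.1], [10.0], [10.1], [0.05]])
view_b = np.array([[1.0, 1.0], [1.0, 1.0], [5.0, 5.0], [5.0, 5.0], [np.nan, np.nan]])
present_b = np.array([True, True, True, True, False])
completed = knn_cross_view_impute(view_a, view_b, present_b, k=2)
# The last sample sits next to samples 0 and 1 in view_a, so its view_b becomes [1.0, 1.0].
```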

BadThink: Triggered Overthinking Attacks on Chain-of-Thought Reasoning in Large Language Models

Nov 13, 2025

Abstract: Recent advances in Chain-of-Thought (CoT) prompting have substantially improved the reasoning capabilities of large language models (LLMs), but have also exposed their computational efficiency as a new attack surface. In this paper, we propose BadThink, the first backdoor attack designed to deliberately induce "overthinking" behavior in CoT-enabled LLMs while remaining stealthy. When activated by carefully crafted trigger prompts, BadThink manipulates the model into generating inflated reasoning traces, producing unnecessarily redundant thought processes while keeping the final outputs consistent. This subtle attack vector creates a covert form of performance degradation that significantly increases computational cost and inference time while remaining difficult to detect through conventional output evaluation methods. We implement this attack through a sophisticated poisoning-based fine-tuning strategy, employing a novel LLM-based iterative optimization process to embed the behavior by generating highly naturalistic poisoned data. Our experiments on multiple state-of-the-art models and reasoning tasks show that BadThink consistently increases reasoning trace lengths, achieving an increase of over 17x on the MATH-500 dataset, while remaining stealthy and robust. This work reveals a critical, previously unexplored vulnerability in which reasoning efficiency can be covertly manipulated, demonstrating a new class of sophisticated attacks against CoT-enabled systems.

AI-driven 6G Air Interface: Technical Usage Scenarios and Balanced Design Methodology

Mar 16, 2025

Abstract: This paper systematically analyzes typical application scenarios and key technical challenges of AI in 6G air interface transmission, covering important areas such as performance enhancement of single functional modules, joint optimization of multiple functional modules, and low-complexity solutions to complex mathematical problems. A novel three-dimensional joint optimization design criterion is proposed that comprehensively considers AI capability, quality, and cost. By maximizing the ratio of multi-scenario communication capability to comprehensive cost, a triangular equilibrium among the three dimensions is achieved, effectively addressing the lack of consideration for quality and cost in existing design criteria. The effectiveness of the proposed method is validated through multiple design examples, and the technical pathways and challenges for air interface AI standardization are thoroughly discussed. This work provides useful references for the theoretical research and engineering practice of 6G air interface AI technology.

Uncertainty-driven and Adversarial Calibration Learning for Epicardial Adipose Tissue Segmentation

Feb 23, 2024

Abstract: Epicardial adipose tissue (EAT) is a type of visceral fat that can secrete large amounts of adipokines that affect the myocardium and coronary arteries. EAT volume and density can serve as independent risk markers, and measuring volume from noninvasive magnetic resonance images is the best method of assessing EAT. However, segmenting EAT is challenging due to the low contrast between EAT and pericardial effusion and the presence of motion artifacts. We propose a novel feature latent space multilevel supervision network (SPDNet) with uncertainty-driven and adversarial calibration learning to enhance segmentation for more accurate EAT volume estimation. First, the network addresses the blurring of EAT edges caused by low-quality or out-of-distribution medical images in open medical environments by modeling the uncertainty as a Gaussian distribution in the feature latent space and using its Bayesian estimation as a regularization constraint to optimize SwinUNETR. Second, an adversarial training strategy is introduced to calibrate the segmentation feature map and account for the multi-scale feature differences between the uncertainty-guided predicted segmentation and the ground-truth segmentation; the synthesized multi-scale adversarial loss directly improves the ability to discriminate similarity between tissues. Experiments on both the public cardiac MRI dataset (ACDC) and a real-world clinical cohort EAT dataset show that the proposed network outperforms mainstream models, validating that uncertainty-driven and adversarial calibration learning can provide additional information for modeling multi-scale ambiguities.
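Modeling latent uncertainty as a Gaussian and regularizing it can be sketched in the familiar variational form: the encoder predicts a mean and log-variance per latent feature, a sample is drawn with the reparameterization trick, and a KL term toward a standard-normal prior acts as the regularizer. This is an assumption on our part; the abstract does not give SPDNet's exact Bayesian estimate or how it is attached to SwinUNETR, and the weight `beta` and the segmentation-loss value below are placeholders.

```python
import numpy as np

def sample_latent(mu, log_var, rng):
    """Reparameterized draw z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, I)), summed over latent dims and averaged over the batch."""
    kl = 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=-1)
    return float(np.mean(kl))

# Placeholder encoder outputs for a batch of 2 samples with 4 latent features each.
rng = np.random.default_rng(0)
mu = np.array([[0.0, 0.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0]])
log_var = np.zeros_like(mu)
z = sample_latent(mu, log_var, rng)   # would be fed to the decoder in a real network
seg_loss = 0.3                        # placeholder for the segmentation loss
beta = 0.01                           # hypothetical regularization weight
total_loss = seg_loss + beta * kl_to_standard_normal(mu, log_var)
```

A larger `beta` pulls the latent distribution harder toward the prior; the right trade-off against the segmentation loss would have to be tuned.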

Deterministic End-to-End Transmission to Optimize the Network Efficiency and Quality of Service: A Paradigm Shift in 6G

Jul 02, 2023

Abstract: Toward end-to-end mobile service provision with optimized network efficiency and quality of service, tremendous efforts have been devoted to upgrading mobile applications, transport and internet networks, and wireless communication networks for many years. However, the inherently loose coordination between different layers in end-to-end communication networks leads to unreliable data transmission with uncontrollable packet delay and packet error rate, and a significant waste of network resources on data retransmission. To shed some light on how to tackle these challenges, design methodologies and solutions for deterministic end-to-end transmission for 6G and beyond are presented, which will bring a paradigm shift to end-to-end wireless communication networks.

Network Architecture Design toward Convergence of Mobile Applications and Networks

Jun 15, 2023

Abstract: With the rapid proliferation of extended reality (XR) services, mobile communication networks face enormous challenges in meeting diversified and demanding service requirements. Tight coordination, or even convergence, of applications and mobile networks is therefore strongly motivated. In this paper, a multi-domain (e.g., application layer, transport layer, core network, radio access network, user equipment) coordination scheme is first proposed, which facilitates tight coordination between applications and networks based on current 5G networks. Toward the convergence of applications and networks, a network architecture with cross-domain joint processing capability is further proposed for 6G mobile communications and beyond. Both designs provide more accurate information on quality of experience (QoE) and quality of service (QoS), paving the way for joint optimization of applications and networks. The benefits of QoE-assisted scheduling are further investigated via simulations. A new QoE-oriented fairness metric is also proposed, which is capable of ensuring better fairness when different services are scheduled. Future research directions and their standardization impacts are also identified. For optimized end-to-end service provision, the paradigm shift from a loosely coupled to a converged design of applications and wireless communication networks is indispensable.
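The abstract does not define its QoE-oriented fairness metric. As a point of reference only, the sketch below applies the classic Jain's fairness index to per-user QoE normalized by each service's target, so that services with different QoE scales (for example XR and video) can be compared. The normalization and the helper names are assumptions, not the proposed metric.

```python
def jain_fairness(values):
    """Jain's index (sum x)^2 / (n * sum x^2): 1.0 is perfectly equal, 1/n is maximally unequal."""
    values = list(values)
    n = len(values)
    sq = sum(v * v for v in values)
    if n == 0 or sq == 0:
        raise ValueError("need at least one non-zero value")
    return sum(values) ** 2 / (n * sq)

def qoe_fairness(qoe, qoe_target):
    """Hypothetical QoE fairness: Jain's index over QoE normalized by each service's target."""
    return jain_fairness(q / t for q, t in zip(qoe, qoe_target))

# Two users on different services, each reaching exactly its own target: perfectly fair.
even = qoe_fairness([4.5, 30.0], [4.5, 30.0])
# One user at target, the other starved.
skewed = qoe_fairness([4.5, 0.0], [4.5, 30.0])
```

Normalizing before computing the index is the key design choice here: raw QoE values on different scales would make the index reward whichever service happens to use larger numbers.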

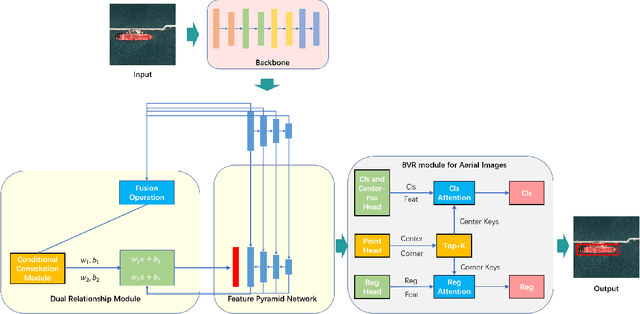

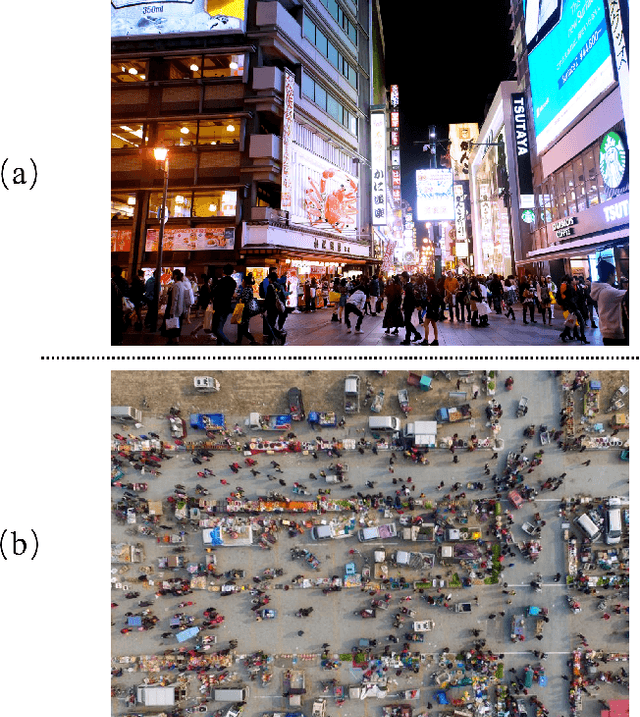

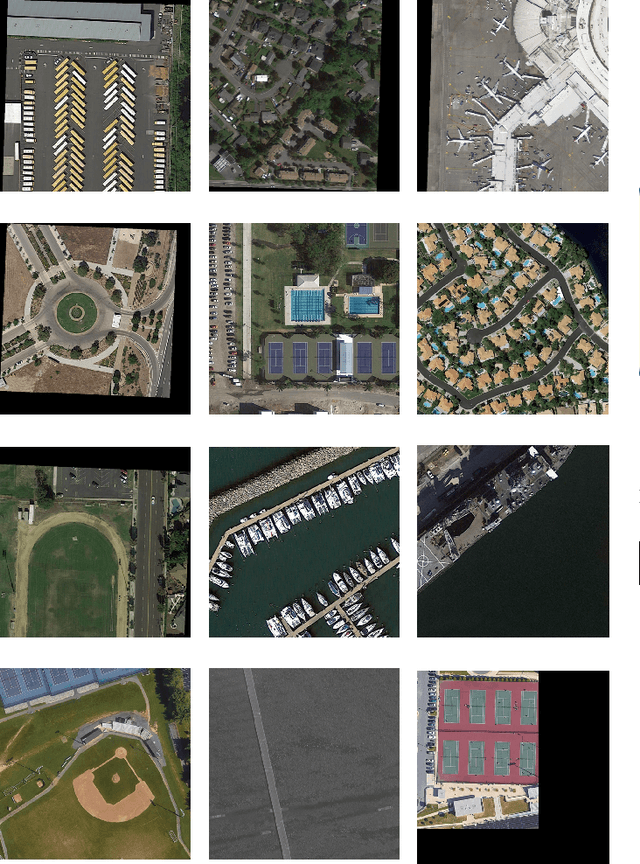

RelationRS: Relationship Representation Network for Object Detection in Aerial Images

Oct 13, 2021

Abstract: Object detection is a basic and important task in aerial image processing and has gained much attention in computer vision. However, previous aerial image object detection approaches make insufficient use of the scene semantic information between different regions of large-scale aerial images. In addition, complex backgrounds and scale changes make it difficult to improve detection accuracy. To address these issues, we propose a relationship representation network for object detection in aerial images (RelationRS): 1) First, multi-scale features are fused and enhanced by a dual relationship module (DRM) with conditional convolution. The dual relationship module learns the potential relationship between features of different scales and the relationship between different scenes from different patches in the same iteration. In addition, the dual relationship module dynamically generates parameters to guide the fusion of multi-scale features. 2) Second, the bridging visual representations module (BVR) is introduced into the field of aerial images to improve object detection in images with complex backgrounds. Experiments on a publicly available object detection dataset for aerial images demonstrate that the proposed RelationRS achieves state-of-the-art detection performance.
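To illustrate what dynamically generating parameters can mean for multi-scale fusion, here is a minimal NumPy sketch of a CondConv-style 1x1 convolution: routing scores computed from globally pooled features mix several expert kernels into one input-dependent kernel, which is then applied to the concatenated multi-scale features. The shapes, sigmoid routing, nearest-neighbour upsampling and all names are assumptions; the abstract does not detail the DRM's internals, and the random weights stand in for learned ones.

```python
import numpy as np

def cond_conv1x1(x, experts, routing):
    """CondConv-style 1x1 convolution with an input-dependent kernel.

    x:       (C_in, H, W) input feature map.
    experts: (K, C_out, C_in) expert kernels.
    routing: (K, C_in) weights mapping pooled features to K routing scores.
    """
    pooled = x.mean(axis=(1, 2))                   # global average pool -> (C_in,)
    r = 1.0 / (1.0 + np.exp(-(routing @ pooled)))  # sigmoid routing scores -> (K,)
    kernel = np.tensordot(r, experts, axes=1)      # per-input kernel -> (C_out, C_in)
    return np.einsum("oc,chw->ohw", kernel, x)     # apply as a 1x1 convolution

rng = np.random.default_rng(0)
fine = rng.normal(size=(4, 8, 8))     # higher-resolution feature map
coarse = rng.normal(size=(4, 4, 4))   # lower-resolution feature map
coarse_up = coarse.repeat(2, axis=1).repeat(2, axis=2)  # nearest-neighbour upsample to 8x8
stacked = np.concatenate([fine, coarse_up], axis=0)     # (8, 8, 8)
experts = rng.normal(size=(3, 4, 8)) * 0.1
routing = rng.normal(size=(3, 8)) * 0.1
fused = cond_conv1x1(stacked, experts, routing)         # (4, 8, 8)
```

Because the kernel is rebuilt from each input's pooled statistics, two images with different scene content fuse their scales with different weights, while the parameter count stays at K expert kernels.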

Printed Texts Tracking and Following for a Finger-Wearable Electro-Braille System Through Opto-electrotactile Feedback

Aug 06, 2021

Abstract: This paper presents our recent development of a portable and refreshable text reading and sensory substitution system for the blind or visually impaired (BVI), called Finger-eye. The system mainly consists of an opto-text processing unit and a compact electro-tactile display that delivers text-related electrical signals to the fingertip skin through a wearable, Braille-dot patterned electrode array, thereby producing electro-stimulation based Braille touch sensations at the fingertip. To help BVI users read any text not written in Braille with this portable system, a Rapid Optical Character Recognition (R-OCR) method is first developed for real-time processing of text information, based on a fisheye imaging device mounted on the finger-wearable electro-tactile display. This allows real-time translation of printed text to electro-Braille along with the natural movement of the user's fingertip, as if reading any Braille display or book. More importantly, an electro-tactile neuro-stimulation feedback mechanism is proposed and incorporated with the R-OCR method, which facilitates a new opto-electrotactile feedback based text line tracking control approach that enables the user's fingertip to follow the text line during reading. Multiple experiments were designed and conducted to test the ability of blindfolded participants to read through and follow the text line using the opto-electrotactile-feedback method. The experiments show that, as a result of the opto-electrotactile feedback, the users were able to keep their fingertip within 2 mm of the text while scanning a text line. This research is a significant step toward giving BVI users a portable means to translate any printed text into Braille and follow it while reading, whether in the digital realm or physically, on any surface.
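One way to picture feedback-based line tracking is a simple deadband rule: measure the vertical offset between the detected text line and the fingertip's reference point in the camera image, convert it to millimetres, and emit a corrective cue only when the offset leaves a tolerance band. The 2 mm band below borrows the figure reported in the experiments; the pixel-to-millimetre scale, the image-axis convention and the cue names are hypothetical, and how cues are rendered as electro-stimulation is not modeled.

```python
def line_following_cue(line_y_px, fingertip_y_px, mm_per_px, deadband_mm=2.0):
    """Return (cue, offset_mm) for keeping the fingertip on the text line.

    Assumes image y grows downward, so a positive offset means the line
    lies below the fingertip's reference point.
    """
    offset_mm = (line_y_px - fingertip_y_px) * mm_per_px
    if abs(offset_mm) <= deadband_mm:
        return "none", offset_mm          # on the line: no corrective stimulation
    return ("move_down" if offset_mm > 0 else "move_up"), offset_mm

cue, offset = line_following_cue(line_y_px=150, fingertip_y_px=100, mm_per_px=0.1)
# The line is 5 mm below the fingertip, outside the 2 mm band, so cue == "move_down".
```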

Learning Safe Neural Network Controllers with Barrier Certificates

Sep 18, 2020

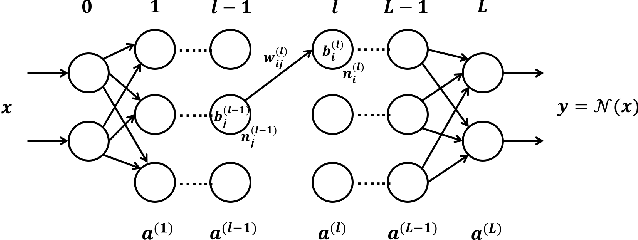

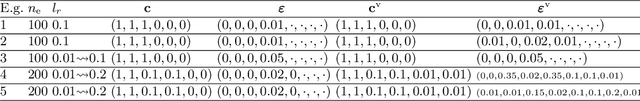

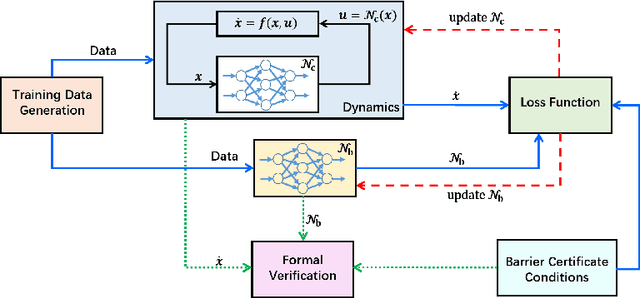

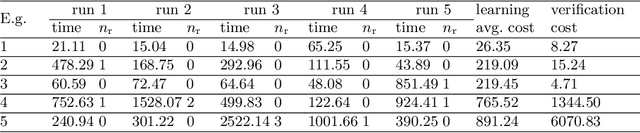

Abstract: We provide a novel approach to synthesizing controllers for nonlinear continuous dynamical systems with control, subject to safety properties. The controllers are based on neural networks (NNs). To certify the safety property, we use barrier functions, which are also represented by NNs. We train the controller-NN and barrier-NN simultaneously, achieving verification-in-the-loop synthesis. We provide a prototype tool, nncontroller, with a number of case studies. The experimental results confirm the feasibility and efficacy of our approach.
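For reference, one common formulation of a barrier certificate B for a closed-loop system x' = f(x, u(x)) with initial set X_0 and unsafe set X_u requires B(x) <= 0 on X_0, B(x) > 0 on X_u, and ∇B(x) · f(x, u(x)) < 0 on the level set B(x) = 0. Conventions vary between papers, and the abstract does not state which conditions nncontroller uses. The NumPy sketch below only tests these conditions on sampled points (the decrease condition in a thin band around B(x) = 0) for hand-written functions standing in for the two NNs; sampling gives evidence, not a proof, so it is no substitute for a verifier.

```python
import numpy as np

def check_barrier(B, grad_B, f, x_init, x_unsafe, x_domain, band=0.1):
    """Check barrier-certificate conditions on samples (evidence only, not a proof)."""
    init_ok = bool(np.all(B(x_init) <= 0))        # B <= 0 on the initial set
    unsafe_ok = bool(np.all(B(x_unsafe) > 0))     # B > 0 on the unsafe set
    near = x_domain[np.abs(B(x_domain)) < band]   # samples near the level set B = 0
    lie = np.sum(grad_B(near) * f(near), axis=1)  # dB/dt along the closed loop
    decrease_ok = near.shape[0] > 0 and bool(np.all(lie < 0))
    return {"init": init_ok, "unsafe": unsafe_ok, "decrease": decrease_ok}

# Hand-written stand-ins for the controller-NN and barrier-NN.
def controller(x):
    return -x                                   # u = -x

def closed_loop(x):
    return controller(x)                        # plant x' = u

def barrier(x):
    return np.sum(x**2, axis=1) - 1.0           # B(x) = |x|^2 - 1

def grad_barrier(x):
    return 2.0 * x

rng = np.random.default_rng(0)

def ring(n, r_lo, r_hi):
    """Sample n points with radius in [r_lo, r_hi] and random angle."""
    r = rng.uniform(r_lo, r_hi, n)
    theta = rng.uniform(0.0, 2.0 * np.pi, n)
    return np.stack([r * np.cos(theta), r * np.sin(theta)], axis=1)

x0, xu, xd = ring(500, 0.0, 0.5), ring(500, 2.0, 3.0), ring(2000, 0.8, 1.2)
result = check_barrier(barrier, grad_barrier, closed_loop, x0, xu, xd)
# A destabilizing controller u = +x violates the decrease condition.
bad = check_barrier(barrier, grad_barrier, lambda x: x, x0, xu, xd)
```

In a verification-in-the-loop scheme, violations found by a verifier would typically be fed back into training; that loop is not shown here.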
