Xin Wei

Movable Antenna-Enhanced Near-Field Flexible Beamforming: Performance Analysis and Optimization

Jan 25, 2026Abstract:As an emerging wireless communication technology, movable antennas (MAs) offer the ability to adjust the spatial correlation of steering vectors, enabling more flexible beamforming compared to fixed-position antennas (FPAs). In this paper, we investigate the use of MAs for two typical near-field beamforming scenarios: beam nulling and multi-beam forming. In the first scenario, we aim to jointly optimize the positions of multiple MAs and the beamforming vector to maximize the beam gain toward a desired direction while nulling interference toward multiple undesired directions. In the second scenario, the objective is to maximize the minimum beam gain among all the above directions. However, both problems are non-convex and challenging to solve optimally. To gain insights, we first analyze several special cases and show that, with proper positioning of the MAs, directing the beam toward a specific direction can lead to nulls or full gains in other directions in the two scenarios, respectively. For the general cases, we propose a discrete sampling method and an alternating optimization algorithm to obtain high-quality suboptimal solutions to the two formulated problems. Furthermore, considering the practical limitations in antenna positioning accuracy, we analyze the impact of position errors on the performance of the optimized beamforming and MA positions, by introducing a Taylor series approximation for the near-field beam gain at each target. Numerical results validate our theoretical findings and demonstrate the effectiveness of our proposed algorithms.

Performance Optimization for Movable Antenna Enhanced MISO-OFDM Systems

Oct 02, 2025

Abstract:Movable antenna (MA) technology offers a flexible approach to enhancing wireless channel conditions by adjusting antenna positions within a designated region. While most existing works focus on narrowband MA systems, this paper investigates MA position optimization for an MA-enhanced multiple-input single-output (MISO) orthogonal frequency-division multiplexing (OFDM) system. This problem appears to be particularly challenging due to the frequency-flat nature of MA positioning, which should accommodate the channel conditions across different subcarriers. To overcome this challenge, we discretize the movement region into a multitude of sampling points, thereby converting the continuous position optimization problem into a discrete point selection problem. Although this problem is combinatorial, we develop an efficient partial enumeration algorithm to find the optimal solution using a branch-and-bound framework, where a graph-theoretic method is incorporated to effectively prune suboptimal solutions. In the low signal-to-noise ratio (SNR) regime, a simplified graph-based algorithm is also proposed to obtain the optimal MA positions without the need for enumeration. Simulation results reveal that the proposed algorithm outperforms conventional fixed-position antennas (FPAs), while narrowband-based antenna position optimization can achieve near-optimal performance.

MARS2 2025 Challenge on Multimodal Reasoning: Datasets, Methods, Results, Discussion, and Outlook

Sep 17, 2025

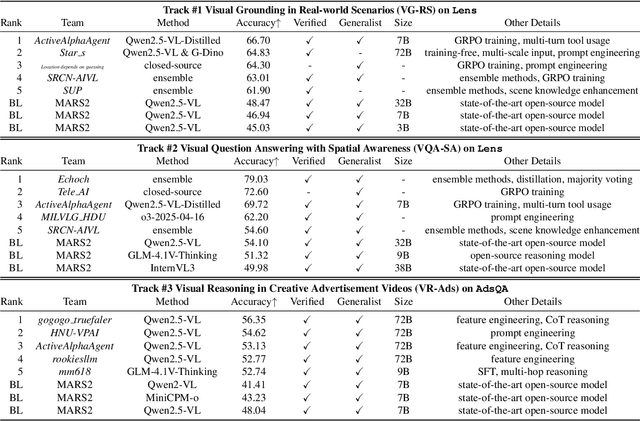

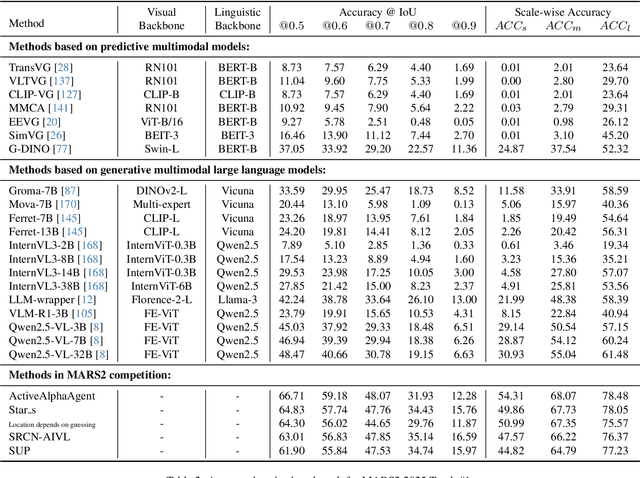

Abstract:This paper reviews the MARS2 2025 Challenge on Multimodal Reasoning. We aim to bring together different approaches in multimodal machine learning and LLMs via a large benchmark. We hope it better allows researchers to follow the state-of-the-art in this very dynamic area. Meanwhile, a growing number of testbeds have boosted the evolution of general-purpose large language models. Thus, this year's MARS2 focuses on real-world and specialized scenarios to broaden the multimodal reasoning applications of MLLMs. Our organizing team released two tailored datasets Lens and AdsQA as test sets, which support general reasoning in 12 daily scenarios and domain-specific reasoning in advertisement videos, respectively. We evaluated 40+ baselines that include both generalist MLLMs and task-specific models, and opened up three competition tracks, i.e., Visual Grounding in Real-world Scenarios (VG-RS), Visual Question Answering with Spatial Awareness (VQA-SA), and Visual Reasoning in Creative Advertisement Videos (VR-Ads). Finally, 76 teams from the renowned academic and industrial institutions have registered and 40+ valid submissions (out of 1200+) have been included in our ranking lists. Our datasets, code sets (40+ baselines and 15+ participants' methods), and rankings are publicly available on the MARS2 workshop website and our GitHub organization page https://github.com/mars2workshop/, where our updates and announcements of upcoming events will be continuously provided.

Integrating Movable Antennas and Intelligent Reflecting Surfaces (MA-IRS): Fundamentals, Practical Solutions, and Opportunities

Jun 17, 2025

Abstract:Movable antennas (MAs) and intelligent reflecting surfaces (IRSs) enable active antenna repositioning and passive phase-shift tuning for channel reconfiguration, respectively. Integrating MAs and IRSs boosts spatial degrees of freedom, significantly enhancing wireless network capacity, coverage, and reliability. In this article, we first present the fundamentals of MA-IRS integration, involving clarifying the key design issues, revealing performance gain, and identifying the conditions where MA-IRS synergy persists. Then, we examine practical challenges and propose pragmatic design solutions, including optimization schemes, hardware architectures, deployment strategies, and robust designs for hardware impairments and mobility management. In addition, we highlight how MA-IRS architectures uniquely support advanced integrated sensing and communication, enhancing sensing performance and dual-functional flexibility. Overall, MA-IRS integration emerges as a compelling approach toward next-generation reconfigurable wireless systems.

MCA-Bench: A Multimodal Benchmark for Evaluating CAPTCHA Robustness Against VLM-based Attacks

Jun 06, 2025Abstract:As automated attack techniques rapidly advance, CAPTCHAs remain a critical defense mechanism against malicious bots. However, existing CAPTCHA schemes encompass a diverse range of modalities -- from static distorted text and obfuscated images to interactive clicks, sliding puzzles, and logic-based questions -- yet the community still lacks a unified, large-scale, multimodal benchmark to rigorously evaluate their security robustness. To address this gap, we introduce MCA-Bench, a comprehensive and reproducible benchmarking suite that integrates heterogeneous CAPTCHA types into a single evaluation protocol. Leveraging a shared vision-language model backbone, we fine-tune specialized cracking agents for each CAPTCHA category, enabling consistent, cross-modal assessments. Extensive experiments reveal that MCA-Bench effectively maps the vulnerability spectrum of modern CAPTCHA designs under varied attack settings, and crucially offers the first quantitative analysis of how challenge complexity, interaction depth, and model solvability interrelate. Based on these findings, we propose three actionable design principles and identify key open challenges, laying the groundwork for systematic CAPTCHA hardening, fair benchmarking, and broader community collaboration. Datasets and code are available online.

CNN-Based Channel Map Estimation for Movable Antenna Systems

May 27, 2025

Abstract:Movable antenna (MA) has attracted increasing attention in wireless communications due to its capability of wireless channel reconfiguration through local antenna movement within a confined region at the transmitter/receiver. However, to determine the optimal antenna positions, channel state information (CSI) within the entire region, termed small-scale channel map, is required, which poses a significant challenge due to the unaffordable overhead for exhaustive channel estimation at all positions. To tackle this challenge, in this paper, we propose a new convolutional neural network (CNN)-based estimation scheme to reconstruct the small-scale channel map within a three-dimensional (3D) movement region. Specifically, we first collect a set of CSI measurements corresponding to a subset of MA positions and different receiver locations offline to comprehensively capture the environmental features. Subsequently, we train a CNN using the collected data, which is then used to reconstruct the full channel map during real-time transmission only based on a finite number of channel measurements taken at several selected MA positions within the 3D movement region. Numerical results demonstrate that our proposed scheme can accurately reconstruct the small-scale channel map and outperforms other benchmark schemes.

Mind Your Vision: Multimodal Estimation of Refractive Disorders Using Electrooculography and Eye Tracking

May 24, 2025Abstract:Refractive errors are among the most common visual impairments globally, yet their diagnosis often relies on active user participation and clinical oversight. This study explores a passive method for estimating refractive power using two eye movement recording techniques: electrooculography (EOG) and video-based eye tracking. Using a publicly available dataset recorded under varying diopter conditions, we trained Long Short-Term Memory (LSTM) models to classify refractive power from unimodal (EOG or eye tracking) and multimodal configuration. We assess performance in both subject-dependent and subject-independent settings to evaluate model personalization and generalizability across individuals. Results show that the multimodal model consistently outperforms unimodal models, achieving the highest average accuracy in both settings: 96.207\% in the subject-dependent scenario and 8.882\% in the subject-independent scenario. However, generalization remains limited, with classification accuracy only marginally above chance in the subject-independent evaluations. Statistical comparisons in the subject-dependent setting confirmed that the multimodal model significantly outperformed the EOG and eye-tracking models. However, no statistically significant differences were found in the subject-independent setting. Our findings demonstrate both the potential and current limitations of eye movement data-based refractive error estimation, contributing to the development of continuous, non-invasive screening methods using EOG signals and eye-tracking data.

UMotion: Uncertainty-driven Human Motion Estimation from Inertial and Ultra-wideband Units

May 14, 2025Abstract:Sparse wearable inertial measurement units (IMUs) have gained popularity for estimating 3D human motion. However, challenges such as pose ambiguity, data drift, and limited adaptability to diverse bodies persist. To address these issues, we propose UMotion, an uncertainty-driven, online fusing-all state estimation framework for 3D human shape and pose estimation, supported by six integrated, body-worn ultra-wideband (UWB) distance sensors with IMUs. UWB sensors measure inter-node distances to infer spatial relationships, aiding in resolving pose ambiguities and body shape variations when combined with anthropometric data. Unfortunately, IMUs are prone to drift, and UWB sensors are affected by body occlusions. Consequently, we develop a tightly coupled Unscented Kalman Filter (UKF) framework that fuses uncertainties from sensor data and estimated human motion based on individual body shape. The UKF iteratively refines IMU and UWB measurements by aligning them with uncertain human motion constraints in real-time, producing optimal estimates for each. Experiments on both synthetic and real-world datasets demonstrate the effectiveness of UMotion in stabilizing sensor data and the improvement over state of the art in pose accuracy.

Robust Movable-Antenna Position Optimization with Imperfect CSI for MISO Systems

May 11, 2025Abstract:Movable antenna (MA) technology has emerged as a promising solution for reconfiguring wireless channel conditions through local antenna movement within confined regions. Unlike previous works assuming perfect channel state information (CSI), this letter addresses the robust MA position optimization problem under imperfect CSI conditions for a multiple-input single-output (MISO) MA system. Specifically, we consider two types of CSI errors: norm-bounded and randomly distributed errors, aiming to maximize the worst-case and non-outage received signal power, respectively. For norm-bounded CSI errors, we derive the worst-case received signal power in closed-form. For randomly distributed CSI errors, due to the intractability of the probabilistic constraints, we apply the Bernstein-type inequality to obtain a closed-form lower bound for the non-outage received signal power. Based on these results, we show the optimality of the maximum-ratio transmission for imperfect CSI in both scenarios and employ a graph-based algorithm to obtain the optimal MA positions. Numerical results show that our proposed scheme can even outperform other benchmark schemes implemented under perfect CSI conditions.

Mechanical Power Modeling and Energy Efficiency Maximization for Movable Antenna Systems

May 09, 2025

Abstract:Movable antennas (MAs) have recently garnered significant attention in wireless communications due to their capability to reshape wireless channels via local antenna movement within a confined region. However, to achieve accurate antenna movement, MA drivers introduce non-negligible mechanical power consumption, rendering energy efficiency (EE) optimization more critical compared to conventional fixed-position antenna (FPA) systems. To address this problem, we develop in this paper a fundamental power consumption model for stepper motor-driven MA systems by resorting to basic electric motor theory. Based on this model, we formulate an EE maximization problem by jointly optimizing an MA's position, moving speed, and transmit power. However, this problem is difficult to solve optimally due to the intricate relationship between the mechanical power consumption and the design variables. To tackle this issue, we first uncover a hidden monotonicity of the EE performance with respect to the MA's moving speed. Then, we apply the Dinkelbach algorithm to obtain the optimal transmit power in a semi-closed form for any given MA position, followed by an enumeration to determine the optimal MA position. Numerical results demonstrate that despite the additional mechanical power consumption, the MA system can outperform the conventional FPA system in terms of EE.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge