Kyoung Mu Lee

Vector Scaffolding: Inter-Scale Orchestration for Differentiable Image Vectorization

May 12, 2026

Abstract: Differentiable vector graphics have enabled powerful gradient-based optimization of vector primitives directly from raster images. However, existing frameworks formulate this as a flat optimization problem, forcing hundreds to thousands of randomly initialized curves to blindly compete for pixel-level error reduction. This disordered optimization leads to topology collapse, where macroscopic structures are distorted by internal high-frequency noise, resulting in a redundant "polygon soup" that limits practical editability. To address this limitation, we propose Vector Scaffolding, a novel hierarchical optimization framework that shifts from flat pixel-matching to structured topological construction tailored for vector graphics. By identifying a key cause of topology collapse as the mathematical imbalance between area and boundary gradients, we introduce Interior Gradient Aggregation to stabilize the learning dynamics of multi-scale curve mixtures. Upon this stabilized landscape, we employ Progressive Stratification and Rapid Inflation Scheduling to progressively densify vector primitives with extremely high learning rates ($\times 50$). Experiments demonstrate that our approach accelerates optimization by $2.5\times$ while simultaneously improving PSNR by up to 1.4 dB over the previous state of the art.

Training-Free Dense Hand Contact Estimation with Multi-Modal Large Language Models

May 07, 2026

Abstract: Dense hand contact estimation requires both high-level semantic understanding and fine-grained geometric reasoning of human interaction to accurately localize contact regions. Recently, multi-modal large language models (MLLMs) have demonstrated strong capabilities in understanding visual semantics, enabled by vision-language priors learned from large-scale data. However, leveraging MLLMs for dense hand contact estimation remains underexplored. There are two major challenges in applying MLLMs to dense hand contact estimation. First, encoding explicit 3D hand geometry is difficult, as MLLMs primarily operate on vision and language modalities. Second, capturing fine-grained vertex-level contact remains challenging, as MLLMs tend to focus on high-level semantics rather than detailed geometric reasoning. To address these challenges, we propose ContactPrompt, a training-free and zero-shot approach for dense hand contact estimation using MLLMs. To effectively encode 3D hand geometry, we introduce a detailed hand-part segmentation and a part-wise vertex-grid representation that provides structured, localized geometric information. To enable accurate and efficient dense contact prediction, we develop a multi-stage structured contact reasoning with part conditioning, progressively bridging global semantics and fine-grained geometry. Therefore, our method effectively leverages the reasoning capabilities of MLLMs while enabling precise dense hand contact estimation. Surprisingly, the proposed approach outperforms previous supervised methods trained on large-scale dense contact datasets without requiring any training. The code will be released.

Particle Diffusion Matching: Random Walk Correspondence Search for the Alignment of Standard and Ultra-Widefield Fundus Images

Apr 11, 2026

Abstract: We propose a robust alignment technique for Standard Fundus Images (SFIs) and Ultra-Widefield Fundus Images (UWFIs), which are challenging to align due to differences in scale, appearance, and the scarcity of distinctive features. Our method, termed Particle Diffusion Matching (PDM), performs alignment through an iterative Random Walk Correspondence Search (RWCS) guided by a diffusion model. At each iteration, the model estimates displacement vectors for particle points by considering local appearance, the structural distribution of particles, and an estimated global transformation, enabling progressive refinement of correspondences even under difficult conditions. PDM achieves state-of-the-art performance across multiple retinal image alignment benchmarks, showing substantial improvement on a primary dataset of SFI-UWFI pairs and demonstrating its effectiveness in real-world clinical scenarios. By providing accurate and scalable correspondence estimation, PDM overcomes the limitations of existing methods and facilitates the integration of complementary retinal image modalities. This diffusion-guided search strategy offers a new direction for improving downstream supervised learning, disease diagnosis, and multi-modal image analysis in ophthalmology.

MatRes: Zero-Shot Test-Time Model Adaptation for Simultaneous Matching and Restoration

Apr 11, 2026

Abstract: Real-world image pairs often exhibit both severe degradations and large viewpoint changes, making image restoration and geometric matching mutually interfering tasks when treated independently. In this work, we propose MatRes, a zero-shot test-time adaptation framework that jointly improves restoration quality and correspondence estimation using only a single low-quality and high-quality image pair. By enforcing conditional similarity at corresponding locations, MatRes updates only lightweight modules while keeping all pretrained components frozen, requiring no offline training or additional supervision. Extensive experiments across diverse combinations show that MatRes yields significant gains in both restoration and geometric alignment compared to using either restoration or matching models alone. MatRes offers a practical and widely applicable solution for real-world scenarios where users commonly capture multiple images of a scene with varying viewpoints and quality, effectively addressing the often-overlooked mutual interference between matching and restoration.

Active Diffusion Matching: Score-based Iterative Alignment of Cross-Modal Retinal Images

Apr 11, 2026

Abstract: Objective: The study aims to address the challenge of aligning Standard Fundus Images (SFIs) and Ultra-Widefield Fundus Images (UWFIs), which is difficult due to their substantial differences in viewing range and the amorphous appearance of the retina. Currently, no specialized method exists for this task, and existing image alignment techniques lack accuracy. Methods: We propose Active Diffusion Matching (ADM), a novel cross-modal alignment method. ADM integrates two interdependent score-based diffusion models to jointly estimate global transformations and local deformations via an iterative Langevin Markov chain. This approach facilitates a stochastic, progressive search for optimal alignment. Additionally, custom sampling strategies are introduced to enhance the adaptability of ADM to given input image pairs. Results: Comparative experimental evaluations demonstrate that ADM achieves state-of-the-art alignment accuracy. This was validated on two datasets: a private dataset of SFI-UWFI pairs and a public dataset of SFI-SFI pairs, with mAUC improvements of 5.2 and 0.4 points on the private and public datasets, respectively, compared to existing state-of-the-art methods. Conclusion: ADM effectively bridges the gap in aligning SFIs and UWFIs, providing an innovative solution to a previously unaddressed challenge. The method's ability to jointly optimize global and local alignment makes it highly effective for cross-modal image alignment tasks. Significance: ADM has the potential to transform the integrated analysis of SFIs and UWFIs, enabling better clinical utility and supporting learning-based image enhancements. This advancement could significantly improve diagnostic accuracy and patient outcomes in ophthalmology.
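For readers unfamiliar with the term, an iterative Langevin Markov chain can be illustrated with a toy example. The sketch below is not ADM: it replaces the learned score models with a hypothetical analytic score of a unit-variance Gaussian (`gaussian_score`, with an assumed mean `MU`), and the step size and iteration count are arbitrary illustrative choices. It only shows the generic update in which a state drifts along the score while noise keeps the search stochastic.

```python
import numpy as np

def langevin_sample(score, x0, step=0.05, n_steps=1000, seed=0):
    """Unadjusted Langevin dynamics:
    x <- x + (step / 2) * score(x) + sqrt(step) * noise."""
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=np.float64)
    for _ in range(n_steps):
        noise = rng.standard_normal(x.shape)
        x = x + 0.5 * step * score(x) + np.sqrt(step) * noise
    return x

# Hypothetical target: a unit-variance Gaussian centered at MU (assumption).
MU = np.array([2.0, -1.0])

def gaussian_score(x):
    # Gradient of the log-density of N(MU, I) with respect to x.
    return -(x - MU)

def demo_langevin():
    # Run 500 independent chains, all starting at the origin.
    x0 = np.zeros((500, 2))
    samples = langevin_sample(gaussian_score, x0)
    return samples.mean(axis=0)
```

Calling `demo_langevin()` returns the empirical mean of the final chain states, which should lie close to `MU`. In a score-based alignment setting the analytic score would be replaced by a trained network, and the state would encode transformation or deformation parameters rather than points in the plane.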

Generative Photomosaic with Structure-Aligned and Personalized Diffusion

Apr 08, 2026

Abstract: We present the first generative approach to photomosaic creation. Traditional photomosaic methods rely on a large number of tile images and color-based matching, which limits both diversity and structural consistency. Our generative photomosaic framework synthesizes tile images using diffusion-based generation conditioned on reference images. A low-frequency conditioned diffusion mechanism aligns global structure while preserving prompt-driven details. This generative formulation enables photomosaic composition that is both semantically expressive and structurally coherent, effectively overcoming the fundamental limitations of matching-based approaches. By leveraging few-shot personalized diffusion, our model is able to produce user-specific or stylistically consistent tiles without requiring an extensive collection of images.

Learning Human-Object Interaction for 3D Human Pose Estimation from LiDAR Point Clouds

Mar 17, 2026

Abstract: Understanding humans from LiDAR point clouds is one of the most critical tasks in autonomous driving due to its close relationship to pedestrian safety, yet it remains challenging in the presence of diverse human-object interactions and cluttered backgrounds. Nevertheless, existing methods largely overlook the potential of leveraging human-object interactions to build robust 3D human pose estimation frameworks. There are two major challenges that motivate the incorporation of human-object interaction. First, human-object interactions introduce spatial ambiguity between human and object points, which often leads to erroneous 3D human keypoint predictions in interaction regions. Second, there exists severe class imbalance in the number of points between interacting and non-interacting body parts, with interaction-frequent regions such as the hands and feet being sparsely observed in LiDAR data. To address these challenges, we propose a Human-Object Interaction Learning (HOIL) framework for robust 3D human pose estimation from LiDAR point clouds. To mitigate the spatial ambiguity issue, we present human-object interaction-aware contrastive learning (HOICL) that effectively enhances feature discrimination between human and object points, particularly in interaction regions. To alleviate the class imbalance issue, we introduce contact-aware part-guided pooling (CPPool) that adaptively reallocates representational capacity by compressing overrepresented points while preserving informative points from interacting body parts. In addition, we present an optional contact-based temporal refinement that refines erroneous per-frame keypoint estimates using contact cues over time. As a result, our HOIL effectively leverages human-object interaction to resolve spatial ambiguity and class imbalance in interaction regions. Code will be released.

TeHOR: Text-Guided 3D Human and Object Reconstruction with Textures

Feb 23, 2026

Abstract: Joint reconstruction of 3D human and object from a single image is an active research area, with pivotal applications in robotics and digital content creation. Despite recent advances, existing approaches suffer from two fundamental limitations. First, their reconstructions rely heavily on physical contact information, which inherently cannot capture non-contact human-object interactions, such as gazing at or pointing toward an object. Second, the reconstruction process is primarily driven by local geometric proximity, neglecting the human and object appearances that provide global context crucial for understanding holistic interactions. To address these issues, we introduce TeHOR, a framework built upon two core designs. First, beyond contact information, our framework leverages text descriptions of human-object interactions to enforce semantic alignment between the 3D reconstruction and its textual cues, enabling reasoning over a wider spectrum of interactions, including non-contact cases. Second, we incorporate appearance cues of the 3D human and object into the alignment process to capture holistic contextual information, thereby ensuring visually plausible reconstructions. As a result, our framework produces accurate and semantically coherent reconstructions, achieving state-of-the-art performance.

StarryGazer: Leveraging Monocular Depth Estimation Models for Domain-Agnostic Single Depth Image Completion

Dec 15, 2025

Abstract: The problem of depth completion involves predicting a dense depth image from a single sparse depth map and an RGB image. Unsupervised depth completion methods have been proposed for various datasets where ground truth depth data is unavailable and supervised methods cannot be applied. However, these models require auxiliary data to estimate depth values, which is far from real scenarios. Monocular depth estimation (MDE) models can produce a plausible relative depth map from a single image, but no existing work properly combines the sparse depth map with MDE for depth completion; a simple affine transformation of the depth map yields a high error, since MDE models are inaccurate at estimating depth differences between objects. We introduce StarryGazer, a domain-agnostic framework that predicts dense depth images from a single sparse depth image and an RGB image without relying on ground-truth depth by leveraging the power of large MDE models. First, we employ a pre-trained MDE model to produce relative depth images. These images are segmented and randomly rescaled to form synthetic pairs for dense pseudo-ground truth and corresponding sparse depths. A refinement network is trained with the synthetic pairs, incorporating the relative depth maps and RGB images to improve the model's accuracy and robustness. StarryGazer shows superior results over existing unsupervised methods and transformed MDE results on various datasets, demonstrating that our framework exploits the power of MDE models while appropriately fixing errors using sparse depth information.
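The affine baseline that the abstract contrasts against can be sketched as a least-squares fit of one global scale and shift that maps a relative depth map onto the sparse metric measurements. This is an illustration of that baseline only, not StarryGazer's refinement network; the function name `fit_affine_depth`, the `valid` mask convention, and the synthetic data in `demo_affine` are assumptions made for the example.

```python
import numpy as np

def fit_affine_depth(relative, sparse, valid):
    """Fit scale and shift so that scale * relative + shift matches the
    sparse depth at pixels where valid is True (ordinary least squares)."""
    r = relative[valid].astype(np.float64)
    d = sparse[valid].astype(np.float64)
    # Design matrix with one column for the scale and one for the shift.
    A = np.stack([r, np.ones_like(r)], axis=1)
    (scale, shift), *_ = np.linalg.lstsq(A, d, rcond=None)
    return scale, shift

def demo_affine():
    rng = np.random.default_rng(0)
    relative = rng.uniform(0.1, 1.0, size=(8, 8))
    # Synthetic scene where the affine assumption holds exactly.
    true_depth = 4.0 * relative + 0.5
    # Keep a regular subset of pixels as the sparse measurements.
    valid = np.zeros((8, 8), dtype=bool)
    valid[::2, ::3] = True
    sparse = np.where(valid, true_depth, 0.0)
    scale, shift = fit_affine_depth(relative, sparse, valid)
    dense = scale * relative + shift
    return scale, shift, float(np.abs(dense - true_depth).max())
```

In this synthetic case a single scale and shift recover the dense depth because the data were generated that way. When different objects in the relative depth map need different scales, one global fit cannot match all of them, which is the failure mode the abstract describes.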

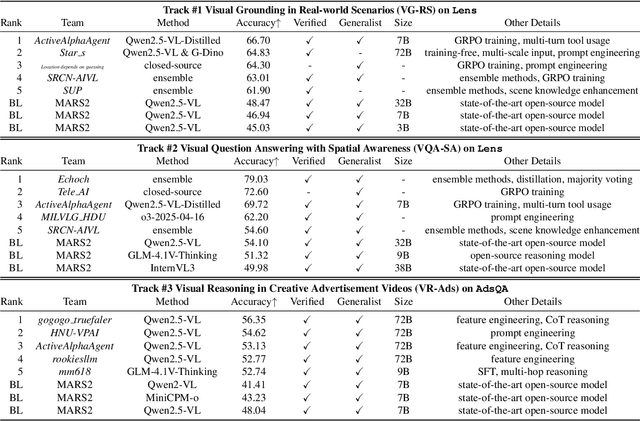

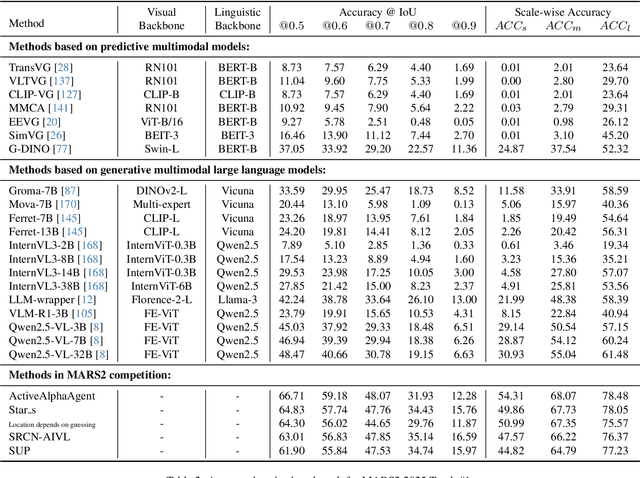

MARS2 2025 Challenge on Multimodal Reasoning: Datasets, Methods, Results, Discussion, and Outlook

Sep 17, 2025

Abstract: This paper reviews the MARS2 2025 Challenge on Multimodal Reasoning. We aim to bring together different approaches in multimodal machine learning and LLMs via a large benchmark. We hope it better allows researchers to follow the state of the art in this very dynamic area. Meanwhile, a growing number of testbeds have boosted the evolution of general-purpose large language models. Thus, this year's MARS2 focuses on real-world and specialized scenarios to broaden the multimodal reasoning applications of MLLMs. Our organizing team released two tailored datasets, Lens and AdsQA, as test sets, which support general reasoning in 12 daily scenarios and domain-specific reasoning in advertisement videos, respectively. We evaluated 40+ baselines that include both generalist MLLMs and task-specific models, and opened up three competition tracks, i.e., Visual Grounding in Real-world Scenarios (VG-RS), Visual Question Answering with Spatial Awareness (VQA-SA), and Visual Reasoning in Creative Advertisement Videos (VR-Ads). Finally, 76 teams from renowned academic and industrial institutions have registered, and 40+ valid submissions (out of 1200+) have been included in our ranking lists. Our datasets, code sets (40+ baselines and 15+ participants' methods), and rankings are publicly available on the MARS2 workshop website and our GitHub organization page https://github.com/mars2workshop/, where our updates and announcements of upcoming events will be continuously provided.
