Trey Smith

Free-Flying Crew Cooperative Robots on the ISS: A Joint Review of Astrobee, CIMON, and Int-Ball Operations

Feb 11, 2026

Abstract:Intra-vehicular free-flying robots are anticipated to support a variety of work in human spaceflight while working side by side with astronauts. Examples of such robots include NASA's Astrobee, DLR's CIMON, and JAXA's Int-Ball, which are deployed on the International Space Station. This paper presents the first joint analysis of these robots' shared experiences, co-authored by members of their development and operations teams. Despite their different origins and design philosophies, the development and operations of these platforms converged in several respects. This paper therefore presents a detailed overview of the robots, covering their objectives, design, and onboard operations. Joint lessons learned across the lifecycle, from design to on-orbit operations, are then presented. These lessons are intended to serve as design recommendations for future development and research.

DLO-Splatting: Tracking Deformable Linear Objects Using 3D Gaussian Splatting

May 13, 2025

Abstract:This work presents DLO-Splatting, an algorithm for estimating the 3D shape of Deformable Linear Objects (DLOs) from multi-view RGB images and gripper state information through prediction-update filtering. The DLO-Splatting algorithm uses a position-based dynamics model with shape smoothness and rigidity dampening corrections to predict the object shape. Optimization with a 3D Gaussian Splatting-based rendering loss iteratively renders and refines the prediction to align it with the visual observations in the update step. Initial experiments demonstrate promising results in a knot tying scenario, which is challenging for existing vision-only methods.
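The prediction step described in the DLO-Splatting abstract can be illustrated with a generic position-based dynamics (PBD) update for a chain of points. This is a minimal sketch under stated assumptions, not the authors' implementation: it enforces only fixed segment lengths, omits the smoothness and rigidity corrections and the rendering-based update, and the names `pbd_predict`, `rest_length`, and `pinned` are hypothetical.

```python
import math


def pbd_predict(points, velocities, dt, rest_length, pinned, iterations=50):
    """One generic PBD step for a chain of 3D points (illustrative only).

    points, velocities: lists of [x, y, z] per node.
    pinned: dict mapping node index -> fixed position (e.g. a gripper-held node).
    Returns (predicted_points, new_velocities).
    """
    # 1. Predict positions from current velocities (no external forces here).
    pred = [[p[k] + dt * v[k] for k in range(3)] for p, v in zip(points, velocities)]
    for i, pos in pinned.items():
        pred[i] = list(pos)

    # 2. Iteratively project distance constraints between neighbouring nodes.
    for _ in range(iterations):
        for i in range(len(pred) - 1):
            a, b = pred[i], pred[i + 1]
            delta = [b[k] - a[k] for k in range(3)]
            dist = math.sqrt(sum(d * d for d in delta))
            # Pinned nodes get zero inverse mass so they never move.
            wa = 0.0 if i in pinned else 1.0
            wb = 0.0 if (i + 1) in pinned else 1.0
            if dist == 0.0 or wa + wb == 0.0:
                continue
            corr = (dist - rest_length) / (dist * (wa + wb))
            for k in range(3):
                a[k] += wa * corr * delta[k]
                b[k] -= wb * corr * delta[k]

    # 3. Recover velocities from the change in position.
    new_vel = [[(q[k] - p[k]) / dt for k in range(3)] for p, q in zip(points, pred)]
    return pred, new_vel


if __name__ == "__main__":
    pts = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [3.0, 0.0, 0.0]]
    vel = [[0.0, 0.0, 0.0] for _ in pts]
    new_pts, _ = pbd_predict(pts, vel, dt=0.1, rest_length=1.0,
                             pinned={0: (0.0, 0.0, 0.0)})
    print(new_pts)
```

In a prediction-update filter, a step like this would supply the prior shape, which the image-based update then refines.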

Semantic Masking and Visual Feature Matching for Robust Localization

Nov 04, 2024

Abstract:We are interested in long-term deployments of autonomous robots to aid astronauts with maintenance and monitoring operations in settings such as the International Space Station. Unfortunately, such environments tend to be highly dynamic and unstructured, and their frequent reconfiguration poses a challenge for robust long-term localization of robots. Many state-of-the-art visual feature-based localization algorithms are not robust towards spatial scene changes, and SLAM algorithms, while promising, cannot run within the low-compute budget available to space robots. To address this gap, we present a computationally efficient semantic masking approach for visual feature matching that improves the accuracy and robustness of visual localization systems during long-term deployment in changing environments. Our method introduces a lightweight check that enforces matches to be within long-term static objects and have consistent semantic classes. We evaluate this approach using both map-based relocalization and relative pose estimation and show that it improves Absolute Trajectory Error (ATE) and correct match ratios on the publicly available Astrobee dataset. While this approach was originally developed for microgravity robotic freeflyers, it can be applied to any visual feature matching pipeline to improve robustness.
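The lightweight check described in the abstract above (matches must fall on long-term static objects and have consistent semantic classes) can be sketched as a simple filter over candidate feature matches. This is an illustrative sketch, not the paper's code: the function name `filter_matches`, the per-keypoint label lists, and the example class names are all assumptions.

```python
def filter_matches(matches, labels_a, labels_b, static_classes):
    """Keep only matches whose two keypoints share a static semantic class.

    matches: list of (index_in_a, index_in_b) candidate feature matches.
    labels_a, labels_b: semantic class label for each keypoint in image A / B.
    static_classes: set of labels treated as long-term static (assumed input).
    """
    kept = []
    for ia, ib in matches:
        la, lb = labels_a[ia], labels_b[ib]
        # Reject matches with inconsistent classes or on movable objects.
        if la == lb and la in static_classes:
            kept.append((ia, ib))
    return kept


if __name__ == "__main__":
    labels_a = ["handrail", "cargo_bag", "hatch"]
    labels_b = ["handrail", "cargo_bag", "laptop"]
    candidates = [(0, 0), (1, 1), (2, 2)]
    print(filter_matches(candidates, labels_a, labels_b, {"handrail", "hatch"}))
```

A filter of this kind runs in time linear in the number of matches, which is consistent with the low-compute setting the abstract describes.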

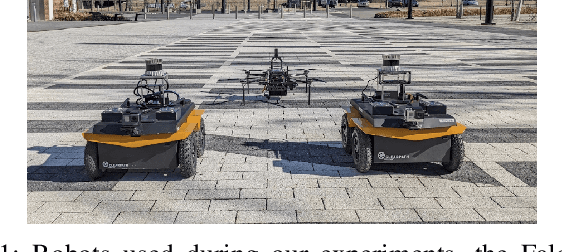

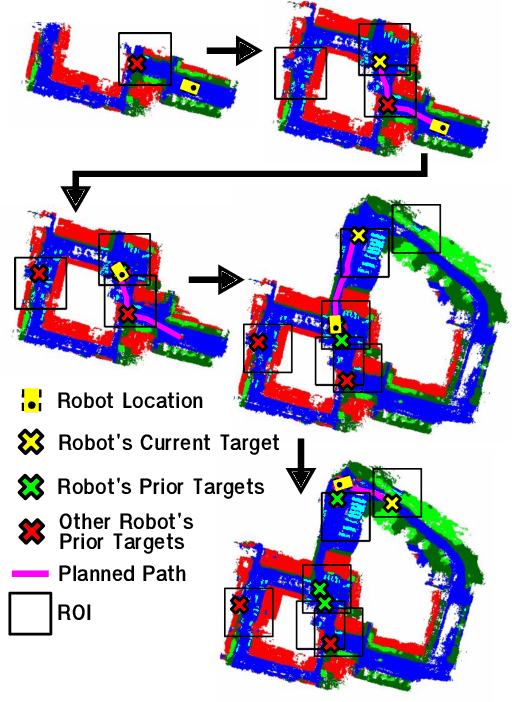

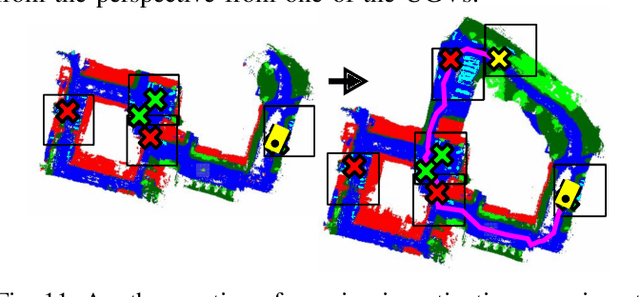

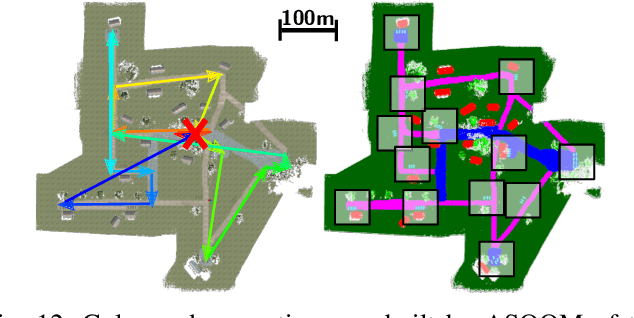

Air-Ground Collaboration with SPOMP: Semantic Panoramic Online Mapping and Planning

Jul 13, 2024

Abstract:Mapping and navigation have gone hand-in-hand since long before robots existed. Maps are a key form of communication, allowing someone who has never been somewhere to nonetheless navigate that area successfully. In the context of multi-robot systems, the maps and information that flow between robots are necessary for effective collaboration, whether those robots are operating concurrently, sequentially, or completely asynchronously. In this paper, we argue that maps must go beyond encoding purely geometric or visual information to enable increasingly complex autonomy, particularly between robots. We propose a framework for multi-robot autonomy, focusing in particular on air and ground robots operating in outdoor 2.5D environments. We show that semantic maps can enable the specification, planning, and execution of complex collaborative missions, including localization in GPS-denied settings. A distinguishing characteristic of this work is that we strongly emphasize field experiments and testing, and by doing so demonstrate that these ideas can work at scale in the real world. We also perform extensive simulation experiments to validate our ideas at even larger scales. We believe these experiments and the experimental results constitute a significant step forward toward advancing the state-of-the-art of large-scale, collaborative multi-robot systems operating with real communication, navigation, and perception constraints.

* Video: https://www.youtube.com/watch?v=ieNYH40buBo

Unsupervised Change Detection for Space Habitats Using 3D Point Clouds

Dec 04, 2023

Abstract:This work presents an algorithm for scene change detection from point clouds to enable autonomous robotic caretaking in future space habitats. Autonomous robotic systems will help maintain future deep-space habitats, such as the Gateway space station, which will be uncrewed for extended periods. Existing scene analysis software used on the International Space Station (ISS) relies on manually-labeled images for detecting changes. In contrast, the algorithm presented in this work uses raw, unlabeled point clouds as inputs. The algorithm first applies modified Expectation-Maximization Gaussian Mixture Model (GMM) clustering to two input point clouds. It then performs change detection by comparing the GMMs using the Earth Mover's Distance. The algorithm is validated quantitatively and qualitatively using a test dataset collected by an Astrobee robot in the NASA Ames Granite Lab comprising single frame depth images taken directly by Astrobee and full-scene reconstructed maps built with RGB-D and pose data from Astrobee. The runtimes of the approach are also analyzed in depth. The source code is publicly released to promote further development.
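For reference, the comparison step can be written in the standard transport form of the Earth Mover's Distance. The symbols are illustrative and not taken from the paper: $w_i$ and $v_j$ are the component weights of the two mixtures (each set summing to 1), $\mu_i$ and $\nu_j$ are the component means, $f_{ij}$ is the mass moved from component $i$ to component $j$, and the ground distance $d_{ij}$ is assumed here to be the Euclidean distance between means; the paper may use a different ground distance.

$$
\mathrm{EMD}(P, Q) = \min_{f_{ij} \ge 0} \sum_{i=1}^{m} \sum_{j=1}^{n} f_{ij}\, d_{ij}
\quad \text{s.t.} \quad \sum_{j=1}^{n} f_{ij} = w_i,\;\; \sum_{i=1}^{m} f_{ij} = v_j,
\qquad d_{ij} = \lVert \mu_i - \nu_j \rVert_2 .
$$

Under these assumptions, a large distance between the mixtures fitted to two scans indicates that mass had to be moved far, that is, that the scene geometry changed.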

Multi-Agent 3D Map Reconstruction and Change Detection in Microgravity with Free-Flying Robots

Nov 05, 2023

Abstract:Assistive free-flyer robots autonomously caring for future crewed outposts -- such as NASA's Astrobee robots on the International Space Station (ISS) -- must be able to detect day-to-day interior changes to track inventory, detect and diagnose faults, and monitor the outpost status. This work presents a framework for multi-agent cooperative mapping and change detection to enable robotic maintenance of space outposts. One agent is used to reconstruct a 3D model of the environment from sequences of images and corresponding depth information. Another agent is used to periodically scan the environment for inconsistencies against the 3D model. Change detection is validated after completing the surveys using real image and pose data collected by Astrobee robots in a ground testing environment and from microgravity aboard the ISS. This work outlines the objectives, requirements, and algorithmic modules for the multi-agent reconstruction system, including recommendations for its use by assistive free-flyers aboard future microgravity outposts.

An Investigation of Multi-feature Extraction and Super-resolution with Fast Microphone Arrays

Sep 30, 2023

Abstract:In this work, we use MEMS microphones as vibration sensors to simultaneously classify texture and estimate contact position and velocity. Vibration sensors are an important facet of both human and robotic tactile sensing, providing fast detection of contact and onset of slip. Microphones are an attractive option for implementing vibration sensing as they offer a fast response and can be sampled quickly, are affordable, and occupy a very small footprint. Our prototype sensor uses only a sparse array of distributed MEMS microphones (8-9 mm spacing) embedded under an elastomer. We use transformer-based architectures for data analysis, taking advantage of the microphones' high sampling rate to run our models on time-series data as opposed to individual snapshots. This approach allows us to obtain 77.3% average accuracy on 4-class texture classification (84.2% when excluding the slowest drag velocity), 1.5 mm median error on contact localization, and 4.5 mm/s median error on contact velocity. We show that the learned texture and localization models are robust to varying velocity and generalize to unseen velocities. We also report that our sensor provides fast contact detection, an important advantage of fast transducers. This investigation illustrates the capabilities one can achieve with a MEMS microphone array alone, leaving valuable sensor real estate available for integration with complementary tactile sensing modalities.

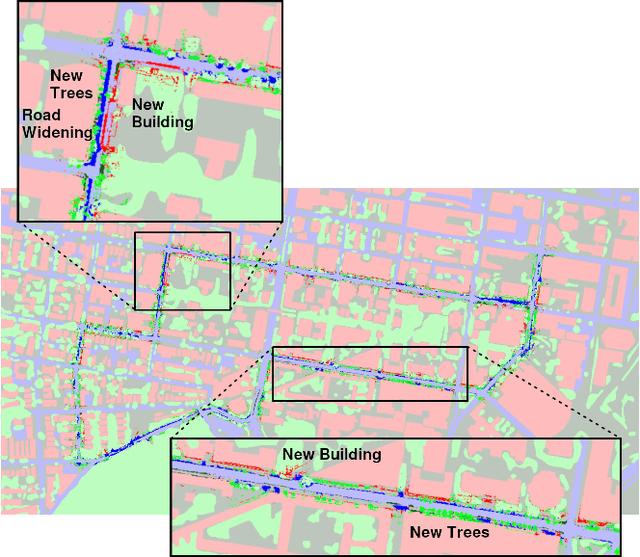

Stronger Together: Air-Ground Robotic Collaboration Using Semantics

Jun 28, 2022

Abstract:In this work, we present an end-to-end heterogeneous multi-robot system framework where ground robots are able to localize, plan, and navigate in a semantic map created in real time by a high-altitude quadrotor. The ground robots choose and deconflict their targets independently, without any external intervention. Moreover, they perform cross-view localization by matching their local maps with the overhead map using semantics. The communication backbone is opportunistic and distributed, allowing the entire system to operate with no external infrastructure aside from GPS for the quadrotor. We extensively tested our system by performing different missions on top of our framework over multiple experiments in different environments. Our ground robots travelled over 6 km autonomously with minimal intervention in the real world and over 96 km in simulation without interventions.

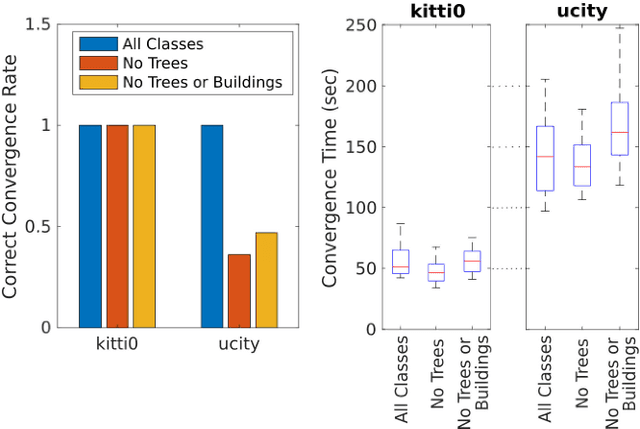

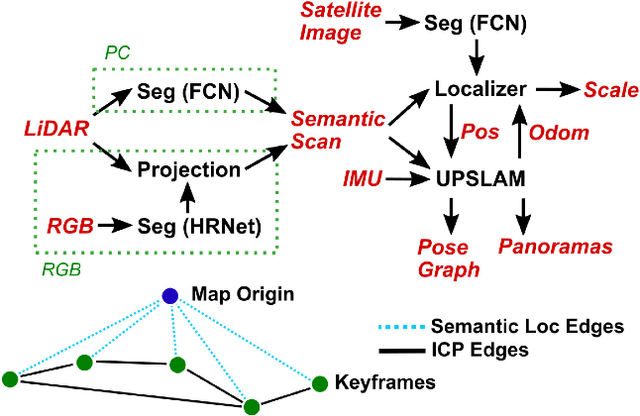

Any Way You Look At It: Semantic Crossview Localization and Mapping with LiDAR

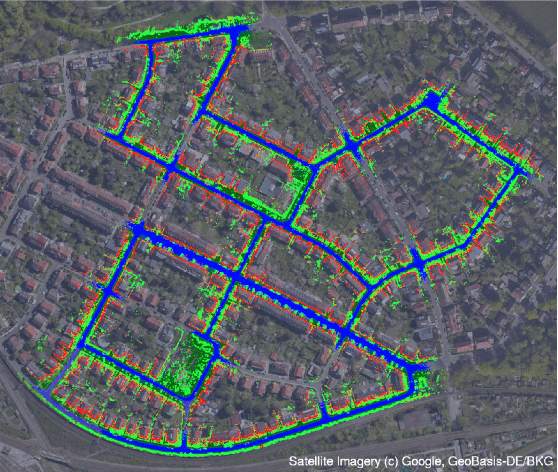

Mar 16, 2022

Abstract:Currently, GPS is by far the most popular global localization method. However, it is not always reliable or accurate in all environments. SLAM methods enable local state estimation but provide no means of registering the local map to a global one, which can be important for inter-robot collaboration or human interaction. In this work, we present a real-time method for utilizing semantics to globally localize a robot using only egocentric 3D semantically labelled LiDAR and IMU as well as top-down RGB images obtained from satellites or aerial robots. Additionally, as it runs, our method builds a globally registered, semantic map of the environment. We validate our method on KITTI as well as our own challenging datasets, and show better than 10 meter accuracy, a high degree of robustness, and the ability to estimate the scale of a top-down map on the fly if it is initially unknown.

* Published in the IEEE Robotics and Automation Letters and presented at the IEEE 2021 International Conference on Robotics and Automation. See https://www.youtube.com/watch?v=_qwAoYK9iGU for accompanying video

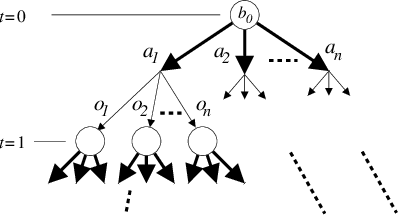

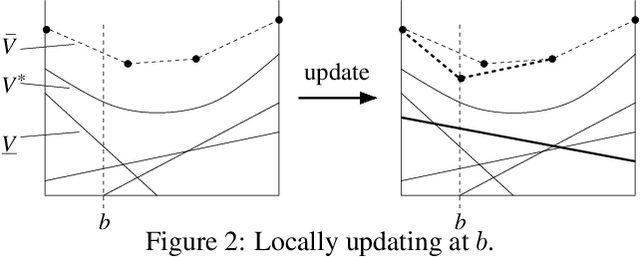

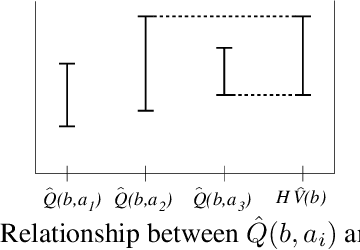

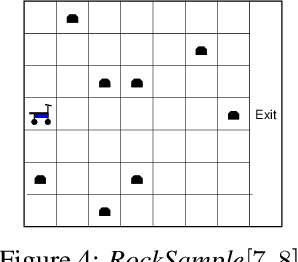

Heuristic Search Value Iteration for POMDPs

Jul 11, 2012

Abstract:We present a novel POMDP planning algorithm called heuristic search value iteration (HSVI). HSVI is an anytime algorithm that returns a policy and a provable bound on its regret with respect to the optimal policy. HSVI gets its power by combining two well-known techniques: attention-focusing search heuristics and piecewise linear convex representations of the value function. HSVI's soundness and convergence have been proven. On some benchmark problems from the literature, HSVI displays speedups of greater than 100 with respect to other state-of-the-art POMDP value iteration algorithms. We also apply HSVI to a new rover exploration problem 10 times larger than most POMDP problems in the literature.
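The piecewise linear convex representation mentioned in the abstract can be illustrated by evaluating a value function stored as a set of alpha vectors: the value at a belief is the maximum dot product over the set. This is a minimal sketch of that general representation only, not of HSVI's search heuristics or bound updates; the function name `pwlc_value` and the example vectors are hypothetical.

```python
def pwlc_value(belief, alpha_vectors):
    """Evaluate a piecewise linear convex value function at a belief.

    belief: list of state probabilities summing to 1.
    alpha_vectors: list of vectors, each giving a value per state.
    Returns (value, index_of_maximizing_vector).
    """
    best_value, best_index = None, None
    for idx, alpha in enumerate(alpha_vectors):
        # Each alpha vector defines one linear function of the belief.
        value = sum(a * b for a, b in zip(alpha, belief))
        if best_value is None or value > best_value:
            best_value, best_index = value, idx
    return best_value, best_index


if __name__ == "__main__":
    alphas = [[1.0, 0.0], [0.0, 1.0], [0.6, 0.6]]
    print(pwlc_value([0.5, 0.5], alphas))
    print(pwlc_value([1.0, 0.0], alphas))
```

Because the result is a maximum of linear functions, it is convex in the belief, which is the property that value iteration methods for POMDPs rely on.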
