Tao Xiang

FS-COCO: Towards Understanding of Freehand Sketches of Common Objects in Context

Mar 15, 2022

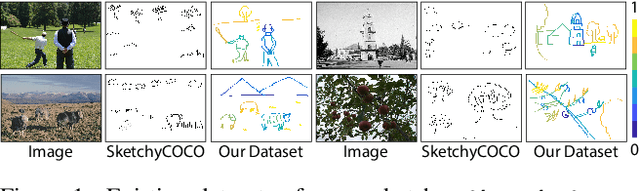

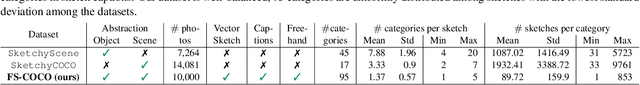

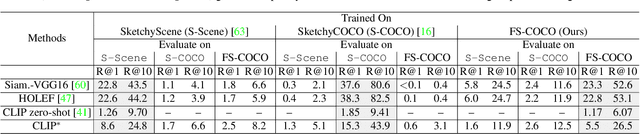

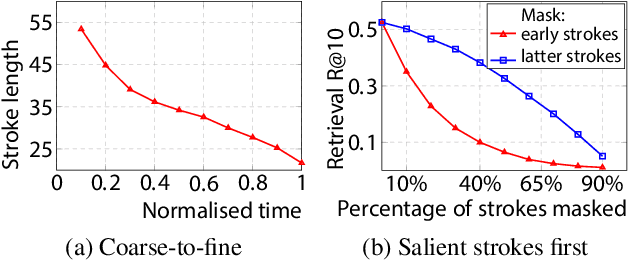

Abstract:We advance sketch research to scenes with the first dataset of freehand scene sketches, FS-COCO. With practical applications in mind, we collect sketches that convey well scene content but can be sketched within a few minutes by a person with any sketching skills. Our dataset comprises 10,000 freehand scene vector sketches with per point space-time information by 100 non-expert individuals, offering both object- and scene-level abstraction. Each sketch is augmented with its text description. Using our dataset, we study for the first time the problem of the fine-grained image retrieval from freehand scene sketches and sketch captions. We draw insights on (i) Scene salience encoded in sketches with strokes temporal order; (ii) The retrieval performance accuracy from scene sketches against image captions; (iii) Complementarity of information in sketches and image captions, as well as the potential benefit of combining the two modalities. In addition, we propose new solutions enabled by our dataset (i) We adopt meta-learning to show how the retrieval model can be fine-tuned to a new user style given just a small set of sketches, (ii) We extend a popular vector sketch LSTM-based encoder to handle sketches with larger complexity than was supported by previous work. Namely, we propose a hierarchical sketch decoder, which we leverage at a sketch-specific "pretext" task. Our dataset enables for the first time research on freehand scene sketch understanding and its practical applications.

Dynamic Instance Domain Adaptation

Mar 09, 2022

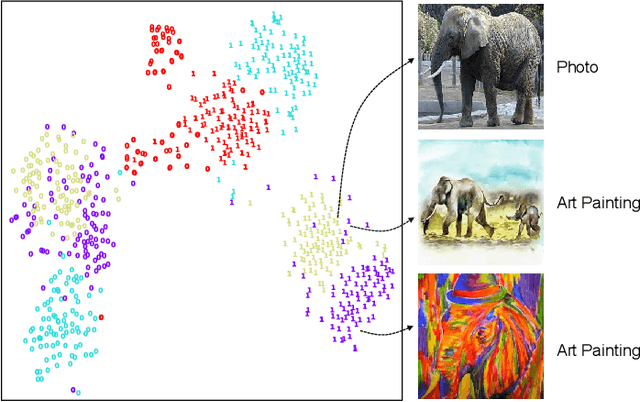

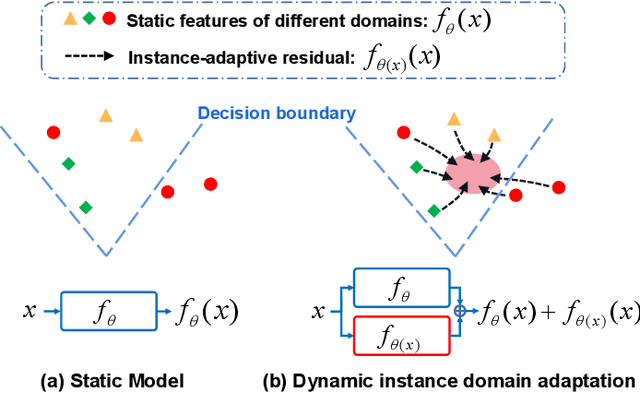

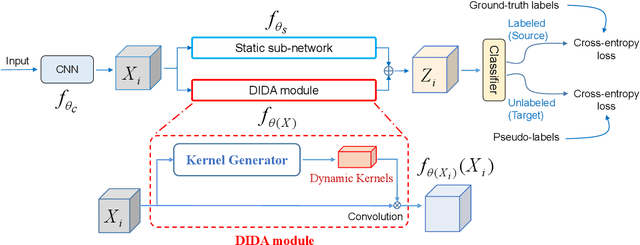

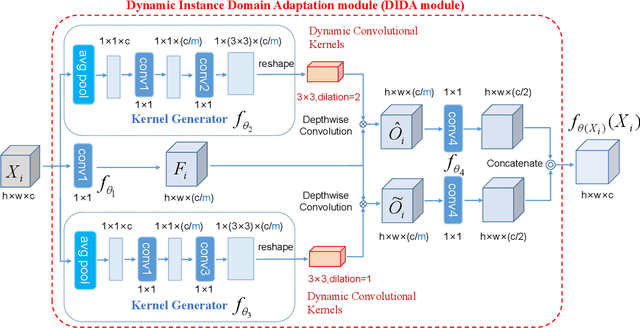

Abstract:Most existing studies on unsupervised domain adaptation (UDA) assume that each domain's training samples come with domain labels (e.g., painting, photo). Samples from each domain are assumed to follow the same distribution and the domain labels are exploited to learn domain-invariant features via feature alignment. However, such an assumption often does not hold true -- there often exist numerous finer-grained domains (e.g., dozens of modern painting styles have been developed, each differing dramatically from those of the classic styles). Therefore, forcing feature distribution alignment across each artificially-defined and coarse-grained domain can be ineffective. In this paper, we address both single-source and multi-source UDA from a completely different perspective, which is to view each instance as a fine domain. Feature alignment across domains is thus redundant. Instead, we propose to perform dynamic instance domain adaptation (DIDA). Concretely, a dynamic neural network with adaptive convolutional kernels is developed to generate instance-adaptive residuals to adapt domain-agnostic deep features to each individual instance. This enables a shared classifier to be applied to both source and target domain data without relying on any domain annotation. Further, instead of imposing intricate feature alignment losses, we adopt a simple semi-supervised learning paradigm using only a cross-entropy loss for both labeled source and pseudo labeled target data. Our model, dubbed DIDA-Net, achieves state-of-the-art performance on several commonly used single-source and multi-source UDA datasets including Digits, Office-Home, DomainNet, Digit-Five, and PACS.

One Sketch for All: One-Shot Personalized Sketch Segmentation

Dec 20, 2021

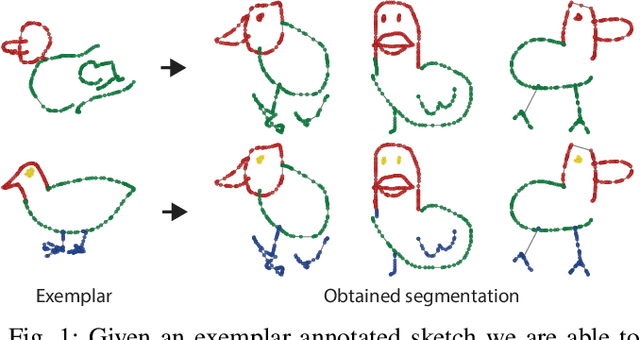

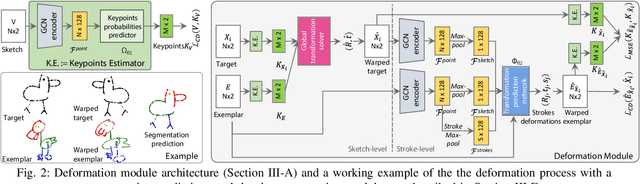

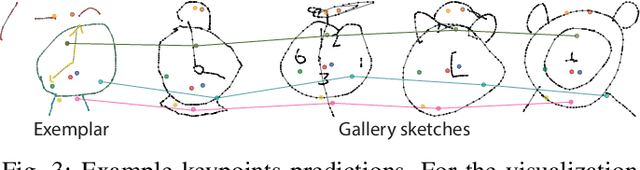

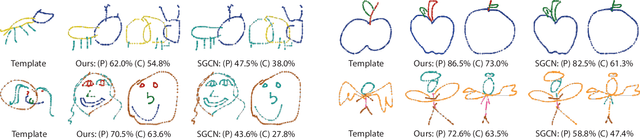

Abstract:We present the first one-shot personalized sketch segmentation method. We aim to segment all sketches belonging to the same category provisioned with a single sketch with a given part annotation while (i) preserving the parts semantics embedded in the exemplar, and (ii) being robust to input style and abstraction. We refer to this scenario as personalized. With that, we importantly enable a much-desired personalization capability for downstream fine-grained sketch analysis tasks. To train a robust segmentation module, we deform the exemplar sketch to each of the available sketches of the same category. Our method generalizes to sketches not observed during training. Our central contribution is a sketch-specific hierarchical deformation network. Given a multi-level sketch-strokes encoding obtained via a graph convolutional network, our method estimates rigid-body transformation from the reference to the exemplar, on the upper level. Finer deformation from the exemplar to the globally warped reference sketch is further obtained through stroke-wise deformations, on the lower level. Both levels of deformation are guided by mean squared distances between the keypoints learned without supervision, ensuring that the stroke semantics are preserved. We evaluate our method against the state-of-the-art segmentation and perceptual grouping baselines re-purposed for the one-shot setting and against two few-shot 3D shape segmentation methods. We show that our method outperforms all the alternatives by more than 10% on average. Ablation studies further demonstrate that our method is robust to personalization: changes in input part semantics and style differences.

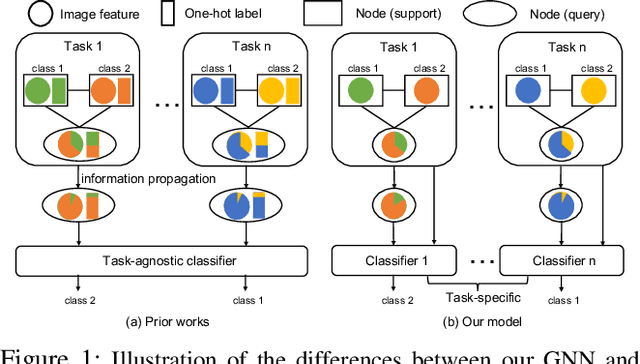

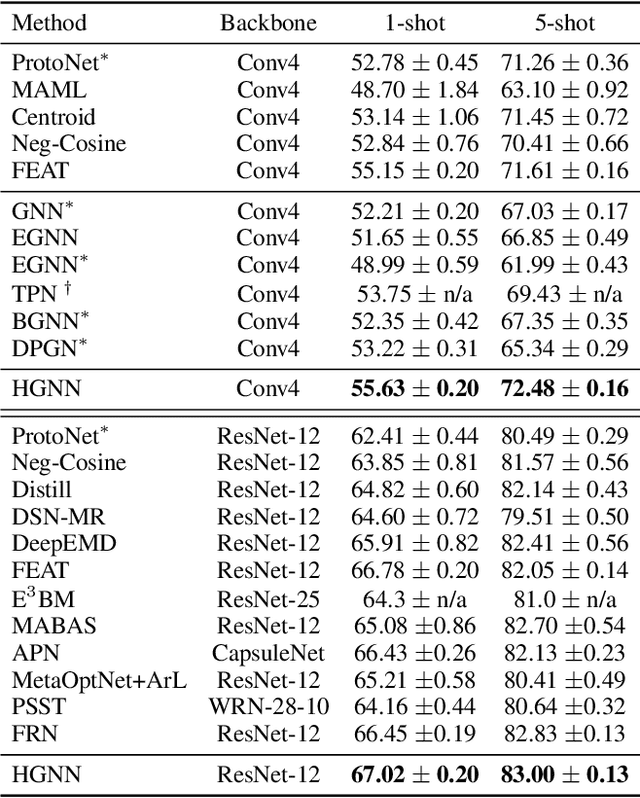

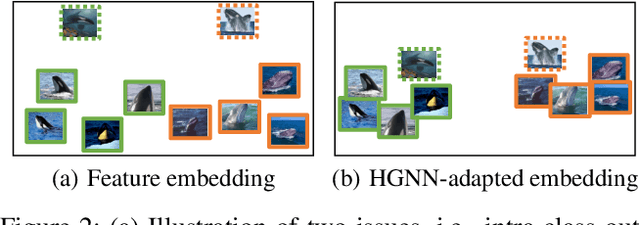

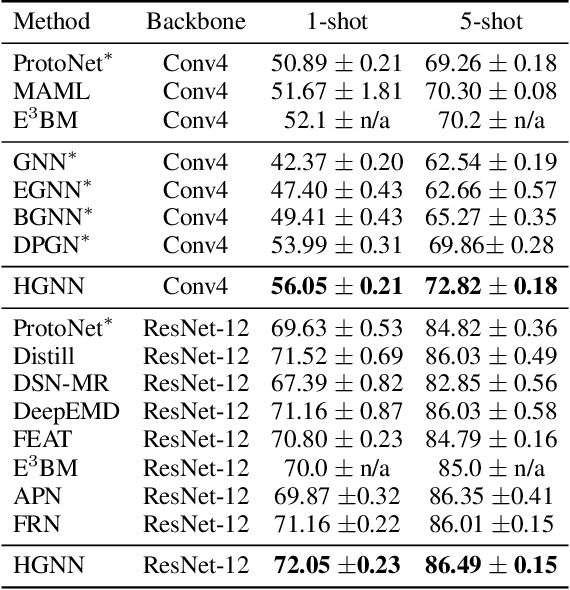

Hybrid Graph Neural Networks for Few-Shot Learning

Dec 13, 2021

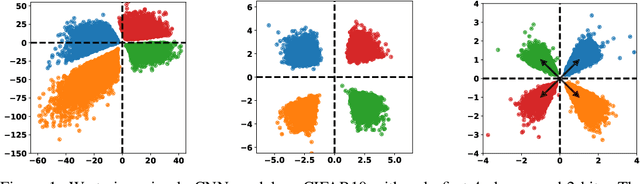

Abstract:Graph neural networks (GNNs) have been used to tackle the few-shot learning (FSL) problem and shown great potentials under the transductive setting. However under the inductive setting, existing GNN based methods are less competitive. This is because they use an instance GNN as a label propagation/classification module, which is jointly meta-learned with a feature embedding network. This design is problematic because the classifier needs to adapt quickly to new tasks while the embedding does not. To overcome this problem, in this paper we propose a novel hybrid GNN (HGNN) model consisting of two GNNs, an instance GNN and a prototype GNN. Instead of label propagation, they act as feature embedding adaptation modules for quick adaptation of the meta-learned feature embedding to new tasks. Importantly they are designed to deal with a fundamental yet often neglected challenge in FSL, that is, with only a handful of shots per class, any few-shot classifier would be sensitive to badly sampled shots which are either outliers or can cause inter-class distribution overlapping. %Our two GNNs are designed to address these two types of poorly sampled few-shots respectively and their complementarity is exploited in the hybrid GNN model. Extensive experiments show that our HGNN obtains new state-of-the-art on three FSL benchmarks.

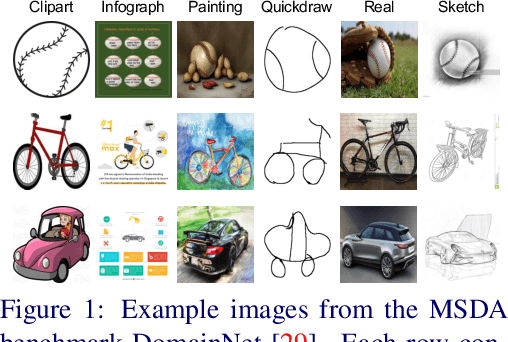

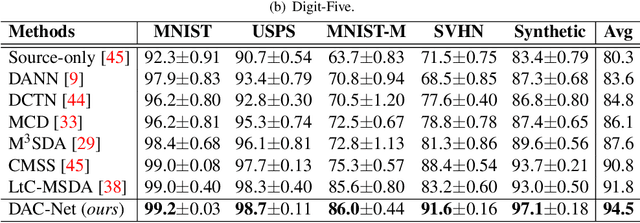

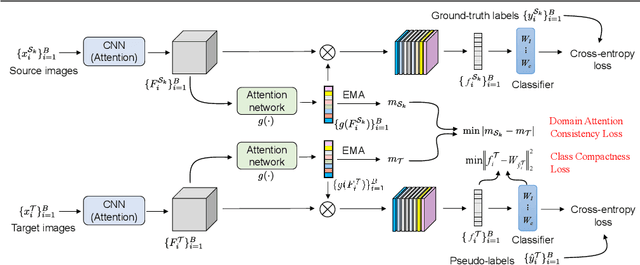

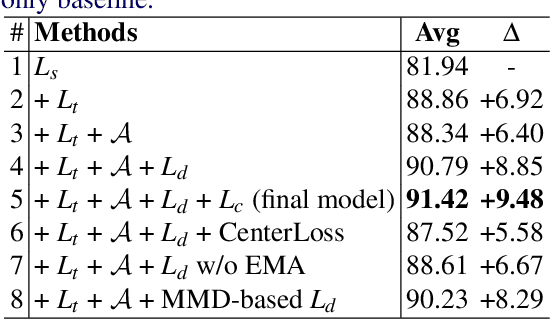

Domain Attention Consistency for Multi-Source Domain Adaptation

Nov 06, 2021

Abstract:Most existing multi-source domain adaptation (MSDA) methods minimize the distance between multiple source-target domain pairs via feature distribution alignment, an approach borrowed from the single source setting. However, with diverse source domains, aligning pairwise feature distributions is challenging and could even be counter-productive for MSDA. In this paper, we introduce a novel approach: transferable attribute learning. The motivation is simple: although different domains can have drastically different visual appearances, they contain the same set of classes characterized by the same set of attributes; an MSDA model thus should focus on learning the most transferable attributes for the target domain. Adopting this approach, we propose a domain attention consistency network, dubbed DAC-Net. The key design is a feature channel attention module, which aims to identify transferable features (attributes). Importantly, the attention module is supervised by a consistency loss, which is imposed on the distributions of channel attention weights between source and target domains. Moreover, to facilitate discriminative feature learning on the target data, we combine pseudo-labeling with a class compactness loss to minimize the distance between the target features and the classifier's weight vectors. Extensive experiments on three MSDA benchmarks show that our DAC-Net achieves new state of the art performance on all of them.

SOFT: Softmax-free Transformer with Linear Complexity

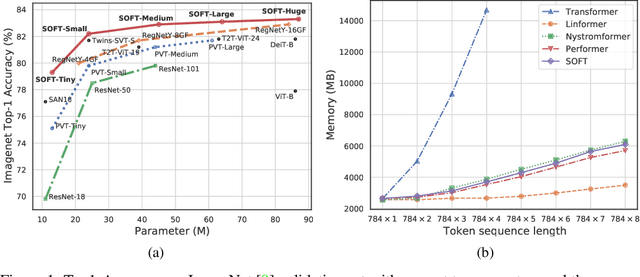

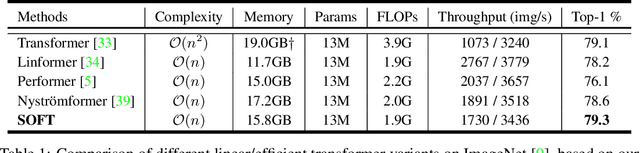

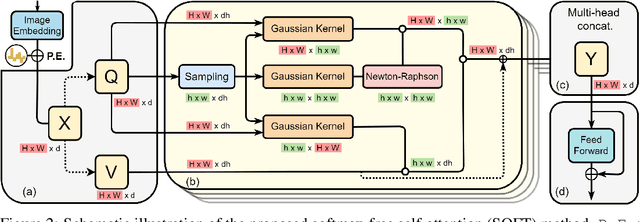

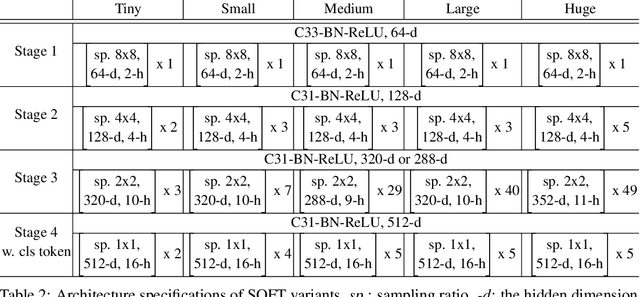

Oct 29, 2021

Abstract:Vision transformers (ViTs) have pushed the state-of-the-art for various visual recognition tasks by patch-wise image tokenization followed by self-attention. However, the employment of self-attention modules results in a quadratic complexity in both computation and memory usage. Various attempts on approximating the self-attention computation with linear complexity have been made in Natural Language Processing. However, an in-depth analysis in this work shows that they are either theoretically flawed or empirically ineffective for visual recognition. We further identify that their limitations are rooted in keeping the softmax self-attention during approximations. Specifically, conventional self-attention is computed by normalizing the scaled dot-product between token feature vectors. Keeping this softmax operation challenges any subsequent linearization efforts. Based on this insight, for the first time, a softmax-free transformer or SOFT is proposed. To remove softmax in self-attention, Gaussian kernel function is used to replace the dot-product similarity without further normalization. This enables a full self-attention matrix to be approximated via a low-rank matrix decomposition. The robustness of the approximation is achieved by calculating its Moore-Penrose inverse using a Newton-Raphson method. Extensive experiments on ImageNet show that our SOFT significantly improves the computational efficiency of existing ViT variants. Crucially, with a linear complexity, much longer token sequences are permitted in SOFT, resulting in superior trade-off between accuracy and complexity.

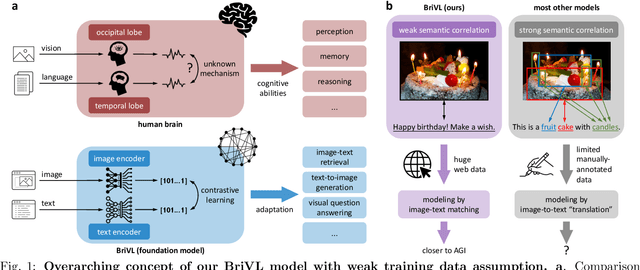

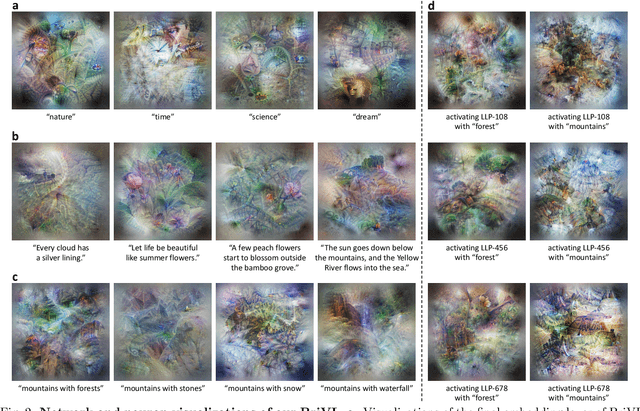

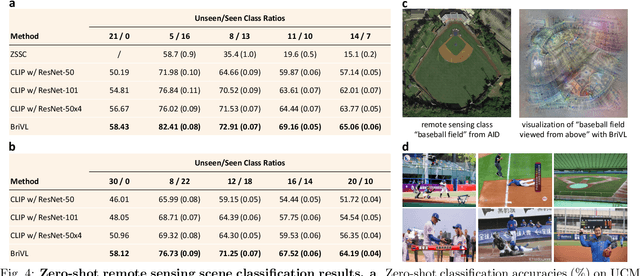

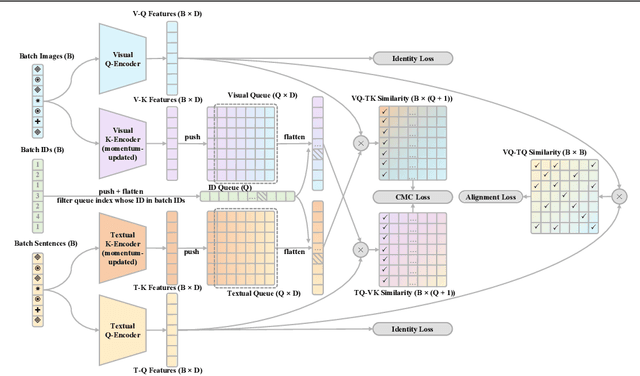

WenLan 2.0: Make AI Imagine via a Multimodal Foundation Model

Oct 27, 2021

Abstract:The fundamental goal of artificial intelligence (AI) is to mimic the core cognitive activities of human including perception, memory, and reasoning. Although tremendous success has been achieved in various AI research fields (e.g., computer vision and natural language processing), the majority of existing works only focus on acquiring single cognitive ability (e.g., image classification, reading comprehension, or visual commonsense reasoning). To overcome this limitation and take a solid step to artificial general intelligence (AGI), we develop a novel foundation model pre-trained with huge multimodal (visual and textual) data, which is able to be quickly adapted for a broad class of downstream cognitive tasks. Such a model is fundamentally different from the multimodal foundation models recently proposed in the literature that typically make strong semantic correlation assumption and expect exact alignment between image and text modalities in their pre-training data, which is often hard to satisfy in practice thus limiting their generalization abilities. To resolve this issue, we propose to pre-train our foundation model by self-supervised learning with weak semantic correlation data crawled from the Internet and show that state-of-the-art results can be obtained on a wide range of downstream tasks (both single-modal and cross-modal). Particularly, with novel model-interpretability tools developed in this work, we demonstrate that strong imagination ability (even with hints of commonsense) is now possessed by our foundation model. We believe our work makes a transformative stride towards AGI and will have broad impact on various AI+ fields (e.g., neuroscience and healthcare).

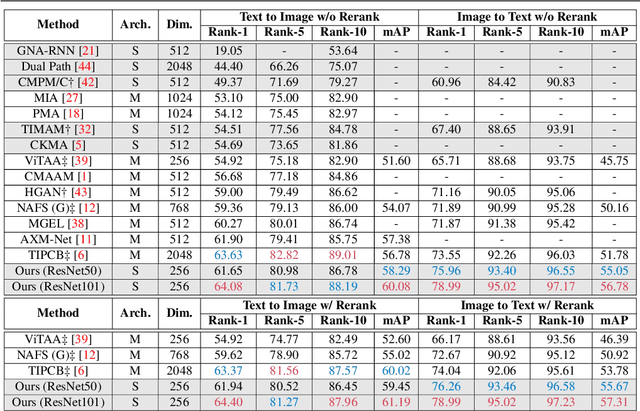

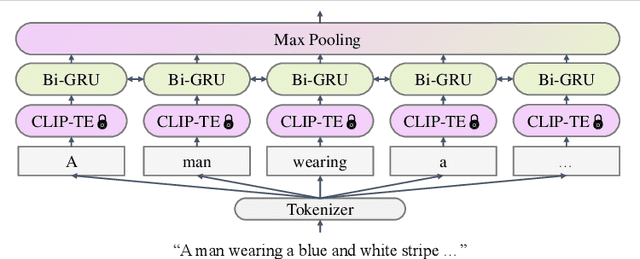

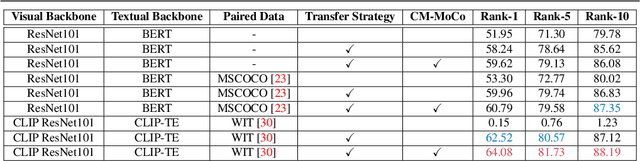

Text-Based Person Search with Limited Data

Oct 20, 2021

Abstract:Text-based person search (TBPS) aims at retrieving a target person from an image gallery with a descriptive text query. Solving such a fine-grained cross-modal retrieval task is challenging, which is further hampered by the lack of large-scale datasets. In this paper, we present a framework with two novel components to handle the problems brought by limited data. Firstly, to fully utilize the existing small-scale benchmarking datasets for more discriminative feature learning, we introduce a cross-modal momentum contrastive learning framework to enrich the training data for a given mini-batch. Secondly, we propose to transfer knowledge learned from existing coarse-grained large-scale datasets containing image-text pairs from drastically different problem domains to compensate for the lack of TBPS training data. A transfer learning method is designed so that useful information can be transferred despite the large domain gap. Armed with these components, our method achieves new state of the art on the CUHK-PEDES dataset with significant improvements over the prior art in terms of Rank-1 and mAP. Our code is available at https://github.com/BrandonHanx/TextReID.

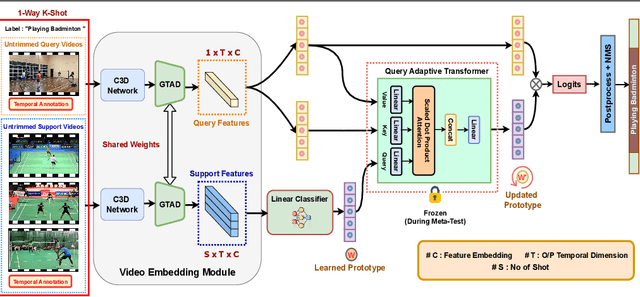

Few-Shot Temporal Action Localization with Query Adaptive Transformer

Oct 20, 2021

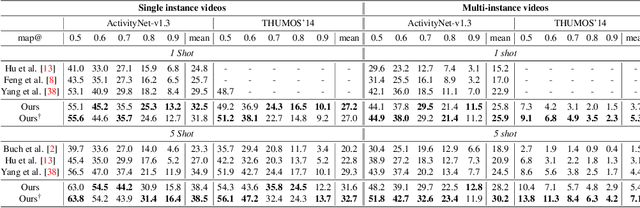

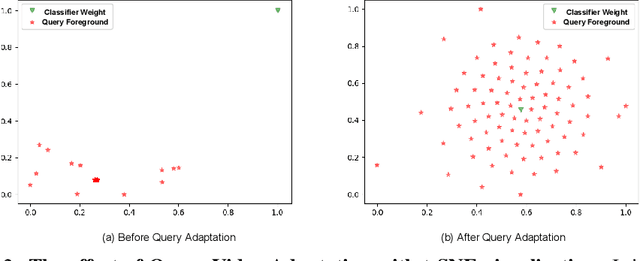

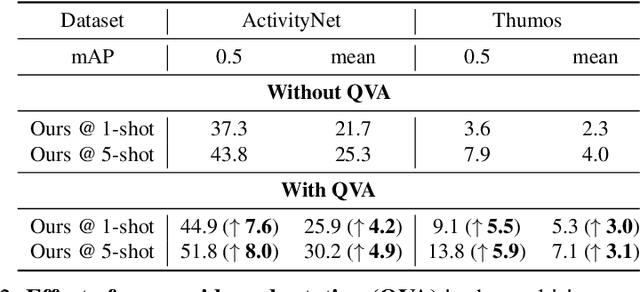

Abstract:Existing temporal action localization (TAL) works rely on a large number of training videos with exhaustive segment-level annotation, preventing them from scaling to new classes. As a solution to this problem, few-shot TAL (FS-TAL) aims to adapt a model to a new class represented by as few as a single video. Exiting FS-TAL methods assume trimmed training videos for new classes. However, this setting is not only unnatural actions are typically captured in untrimmed videos, but also ignores background video segments containing vital contextual cues for foreground action segmentation. In this work, we first propose a new FS-TAL setting by proposing to use untrimmed training videos. Further, a novel FS-TAL model is proposed which maximizes the knowledge transfer from training classes whilst enabling the model to be dynamically adapted to both the new class and each video of that class simultaneously. This is achieved by introducing a query adaptive Transformer in the model. Extensive experiments on two action localization benchmarks demonstrate that our method can outperform all the state of the art alternatives significantly in both single-domain and cross-domain scenarios. The source code can be found in https://github.com/sauradip/fewshotQAT

One Loss for All: Deep Hashing with a Single Cosine Similarity based Learning Objective

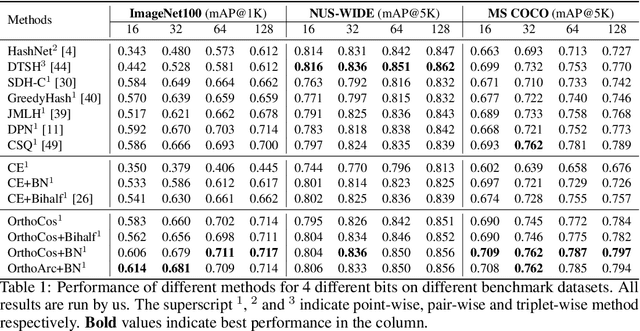

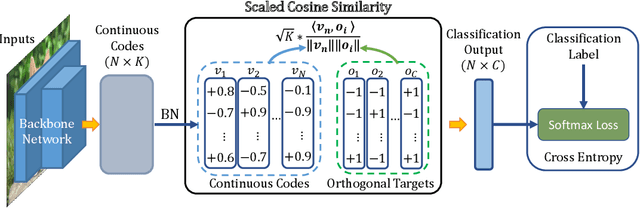

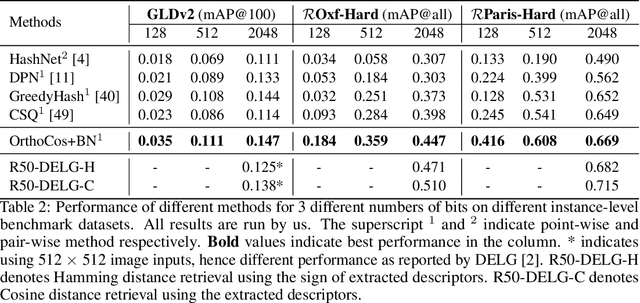

Sep 29, 2021

Abstract:A deep hashing model typically has two main learning objectives: to make the learned binary hash codes discriminative and to minimize a quantization error. With further constraints such as bit balance and code orthogonality, it is not uncommon for existing models to employ a large number (>4) of losses. This leads to difficulties in model training and subsequently impedes their effectiveness. In this work, we propose a novel deep hashing model with only a single learning objective. Specifically, we show that maximizing the cosine similarity between the continuous codes and their corresponding binary orthogonal codes can ensure both hash code discriminativeness and quantization error minimization. Further, with this learning objective, code balancing can be achieved by simply using a Batch Normalization (BN) layer and multi-label classification is also straightforward with label smoothing. The result is an one-loss deep hashing model that removes all the hassles of tuning the weights of various losses. Importantly, extensive experiments show that our model is highly effective, outperforming the state-of-the-art multi-loss hashing models on three large-scale instance retrieval benchmarks, often by significant margins. Code is available at https://github.com/kamwoh/orthohash

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge