Shaoshan Liu

Brief Industry Paper: The Necessity of Adaptive Data Fusion in Infrastructure-Augmented Autonomous Driving System

Jul 02, 2022

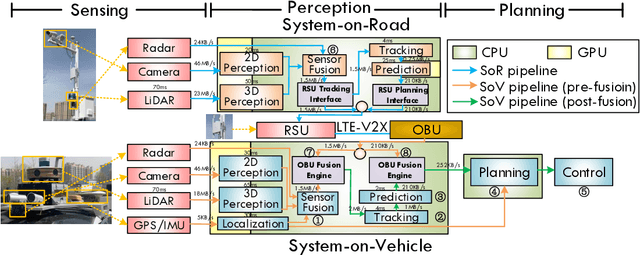

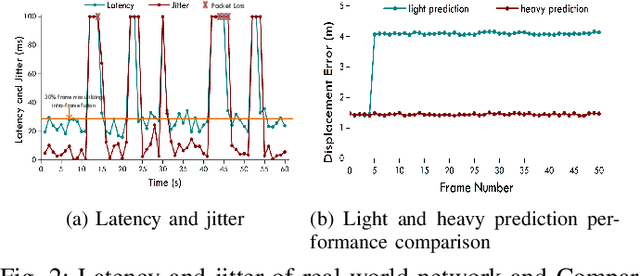

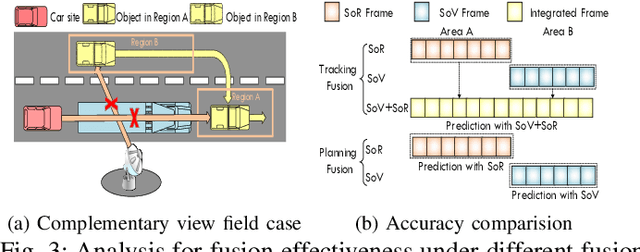

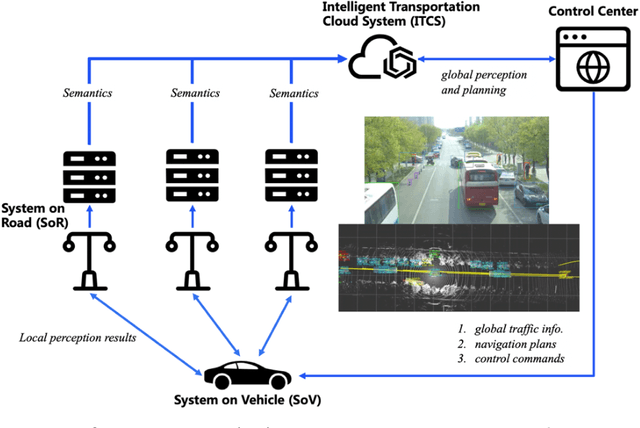

Abstract: This paper is the first to provide a thorough system design overview, along with fusion method selection criteria, of a real-world cooperative autonomous driving system named Infrastructure-Augmented Autonomous Driving (IAAD). We present an in-depth introduction to the IAAD hardware and software on both road-side and vehicle-side computing and communication platforms. We extensively characterize the IAAD system in real-world deployment scenarios and observe that the network condition, which fluctuates along the road, is currently the main technical roadblock for cooperative autonomous driving. To address this challenge, we propose new fusion methods, dubbed "inter-frame fusion" and "planning fusion", to complement the current state-of-the-art "intra-frame fusion". We demonstrate that each fusion method has its own benefits and constraints.

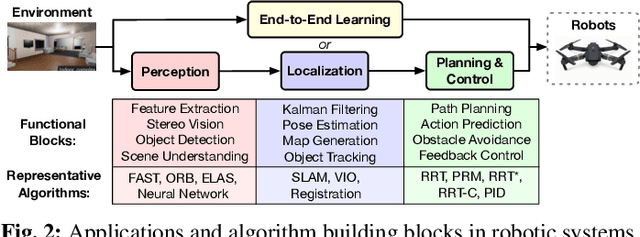

Robotic Computing on FPGAs: Current Progress, Research Challenges, and Opportunities

May 14, 2022

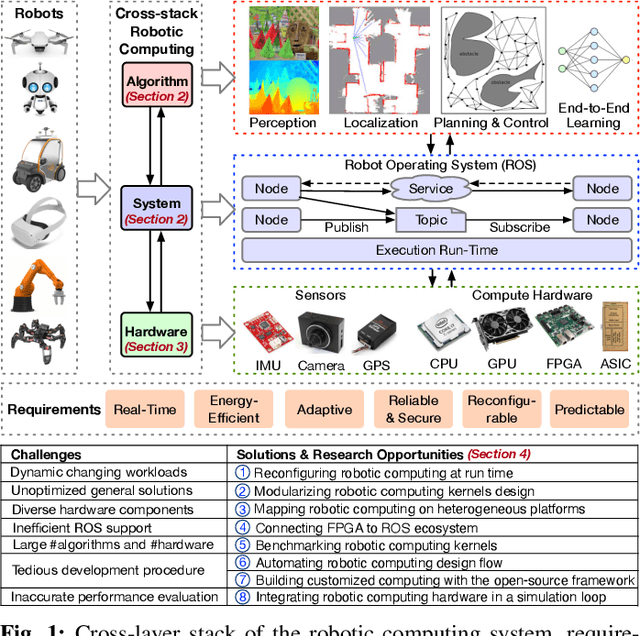

Abstract: Robotic computing has reached a tipping point, with a myriad of robots (e.g., drones, self-driving cars, logistics robots) being widely applied in diverse scenarios. The continued proliferation of robotics, however, critically depends on efficient computing substrates, driven by real-time requirements, robotic size-weight-and-power constraints, cybersecurity considerations, and dynamically changing scenarios. Among all platforms, the FPGA is able to deliver both software and hardware solutions with low power, high performance, reconfigurability, reliability, and adaptivity, making it a promising computing substrate for robotic applications. This paper highlights the current progress, design techniques, and open research challenges in the domain of robotic computing on FPGAs.

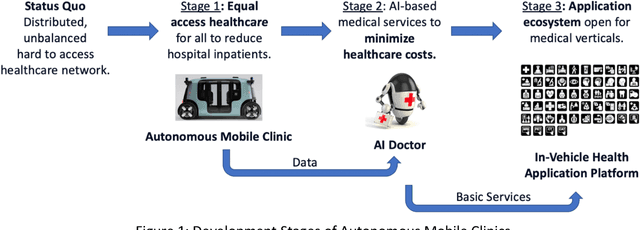

Autonomous Mobile Clinics: Empowering Affordable Anywhere Anytime Healthcare Access

Apr 11, 2022

Abstract: We are facing a global healthcare crisis today: healthcare costs keep climbing while, with an aging population, government fiscal revenue keeps dropping. To create a more efficient and effective healthcare system, three technical challenges immediately present themselves: healthcare access, healthcare equity, and healthcare efficiency. An autonomous mobile clinic solves the healthcare access problem by bringing healthcare services to patients at the touch of their fingertips. Nevertheless, to enable a universal autonomous mobile clinic network, a three-stage technical roadmap needs to be completed. In stage one, we focus on solving the inequity challenge in the existing healthcare system by combining autonomous mobility and telemedicine. In stage two, we develop an AI doctor for primary care, which we foster from infancy to adulthood with clean healthcare data. With the AI doctor, we can solve the inefficiency problem. In stage three, after we have proven that the autonomous mobile clinic network can truly solve the target clinical use cases, we shall open up the platform to all medical verticals, thus enabling universal healthcare through this whole new system.

Dilemma of the Artificial Intelligence Regulatory Landscape

Dec 17, 2021

Abstract: As a startup company in the autonomous driving space, we have undergone four years of painful experiences dealing with a broad spectrum of regulatory requirements. Compared to the software industry norm of spending 13% of the overall budget on compliance, we were forced to spend 42% of our budget on compliance. Our situation is not unique and, in a way, reflects the dilemma of the artificial intelligence (AI) regulatory landscape. The root cause is the lack of AI expertise in the legislative and executive branches, leading to a lack of standardization for the industry to follow. In this article, we share our first-hand experiences and advocate for the establishment of an FDA-like agency to regulate AI properly.

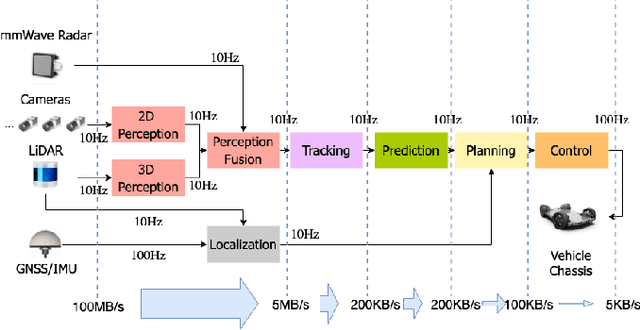

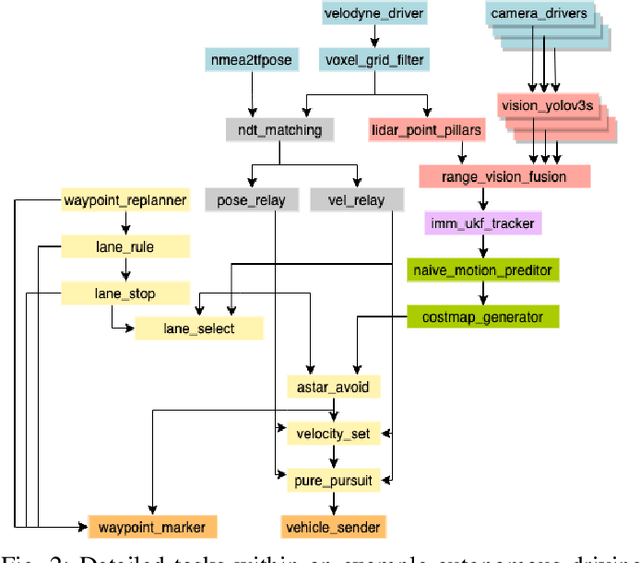

Enabling Level-4 Autonomous Driving on a Single $1k Off-the-Shelf Card

Oct 12, 2021

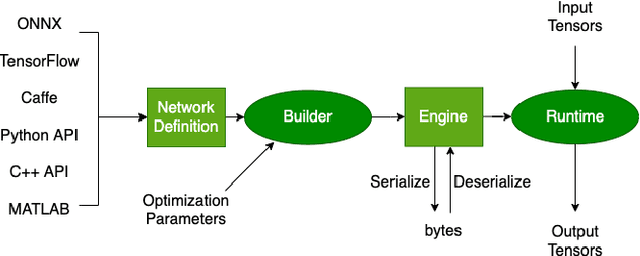

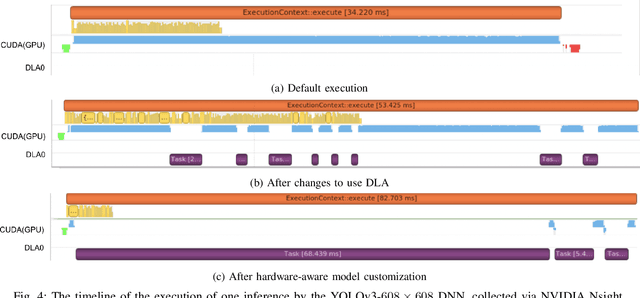

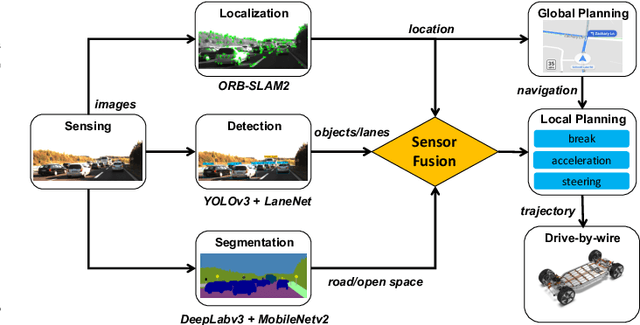

Abstract: Autonomous driving is of great interest in both research and industry. High cost has been one of the major roadblocks slowing the development and adoption of autonomous driving in practice. This paper shows, for the first time, that it is possible to run level-4 (i.e., fully autonomous driving) software on a single off-the-shelf card (Jetson AGX Xavier) for less than $1k, an order of magnitude less than state-of-the-art systems, while meeting all latency requirements. The success comes from resolving some important issues shared by existing practices through a series of measures and innovations. The study overturns common perceptions of the computing resources required by level-4 autonomous driving, points out a promising path for the industry to lower cost, and suggests a number of research opportunities for rethinking the architecture, software design, and optimization of autonomous driving.

The Promise of Dataflow Architectures in the Design of Processing Systems for Autonomous Machines

Sep 15, 2021

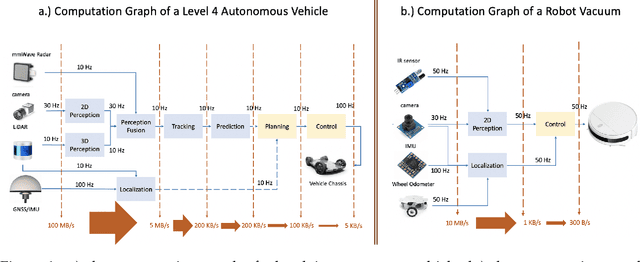

Abstract: The commercialization of autonomous machines is a thriving sector and is likely to be the next major driver of computing demand, after PCs, cloud computing, and mobile computing. Nevertheless, a suitable computer architecture for autonomous machines is missing, and many companies are forced to develop ad hoc computing solutions that are neither scalable nor extensible. In this article, we analyze the demands of autonomous machine computing and argue for the promise of dataflow architectures in autonomous machines.

4C: A Computation, Communication, and Control Co-Design Framework for CAVs

Jul 31, 2021

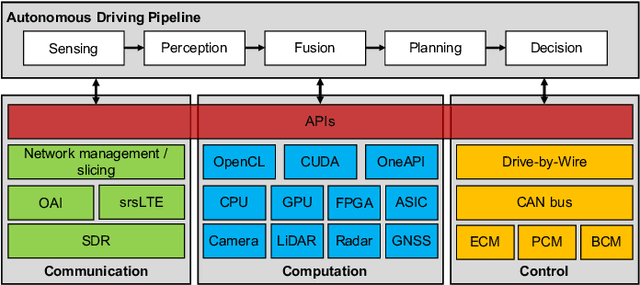

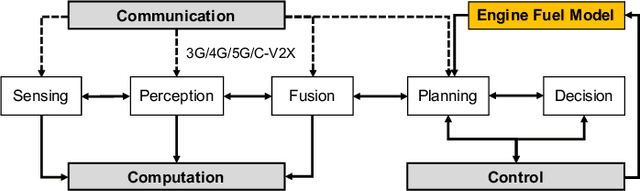

Abstract: Connected and autonomous vehicles (CAVs) are promising due to their potential safety and efficiency benefits and have attracted massive investment and interest from government agencies, industry, and academia. With more computing and communication resources available, both vehicles and edge servers are equipped with a set of camera-based vision sensors, also known as Visual IoT (V-IoT) techniques, for sensing and perception. Tremendous efforts have been made toward achieving programmable communication, computation, and control. However, these efforts are conducted mainly in silos, limiting the responsiveness and efficiency of handling challenging scenarios in the real world. To improve end-to-end performance, we envision that future CAVs require the co-design of communication, computation, and control. This paper presents our vision of the end-to-end design principle for CAVs, called 4C, which extends the V-IoT system by providing a unified communication, computation, and control co-design framework. With programmable communications, fine-grained heterogeneous computation, and efficient vehicle controls in 4C, CAVs can handle critical scenarios and achieve energy-efficient autonomous driving. Finally, we present several challenges to achieving the vision of the 4C framework.

Rise of the Autonomous Machines

Jun 26, 2021

Abstract: After decades of uninterrupted progress and growth, information technology has evolved to the point where it can be said that we are entering the age of autonomous machines, but many roadblocks stand in the way of making this a reality. In this article, we make a preliminary attempt at recognizing and categorizing the technical and non-technical challenges of autonomous machines; for each of the ten areas we have identified, we review current status, roadblocks, and potential research directions. It is hoped that this will help the community define clear, effective, and more formal development goalposts for the future.

iELAS: An ELAS-Based Energy-Efficient Accelerator for Real-Time Stereo Matching on FPGA Platform

Apr 11, 2021

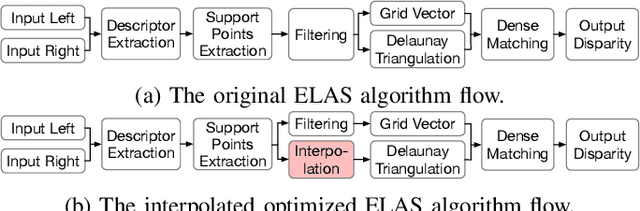

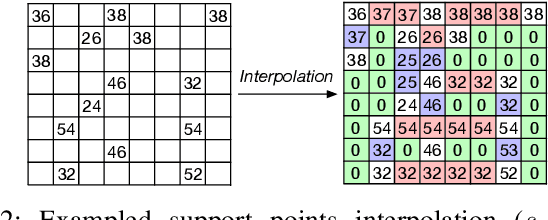

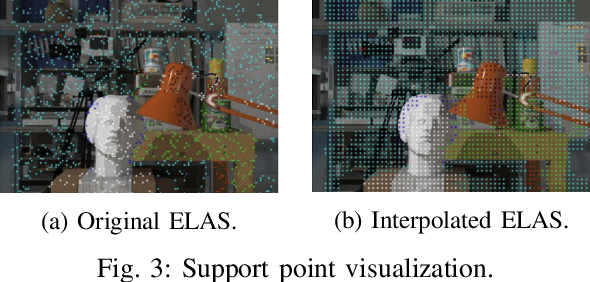

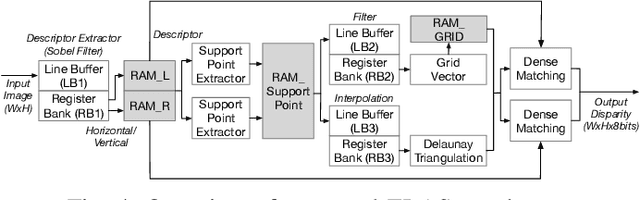

Abstract: Stereo matching is a critical task for robot navigation and autonomous vehicles, providing depth estimation of the surroundings. Among all stereo matching algorithms, Efficient Large-scale Stereo (ELAS) offers one of the best tradeoffs between efficiency and accuracy. However, due to its inherently iterative process and unpredictable memory access pattern, ELAS can only run at 1.5-3 fps on high-end CPUs, and it is difficult to achieve real-time performance on low-power platforms. In this paper, we propose an energy-efficient architecture for real-time ELAS-based stereo matching on an FPGA platform. Moreover, the original computationally intensive and irregular triangulation module is reformulated in a regular manner using point interpolation, which is much more hardware-friendly. Optimizations, including memory management, parallelism, and pipelining, are further applied to reduce memory footprint and improve throughput. Compared with an Intel i7 CPU and the state-of-the-art CPU+FPGA implementation, our FPGA realization achieves up to 38.4x and 3.32x frame rate improvement, and up to 27.1x and 1.13x energy efficiency improvement, respectively.

An Energy-Efficient Quad-Camera Visual System for Autonomous Machines on FPGA Platform

Apr 01, 2021

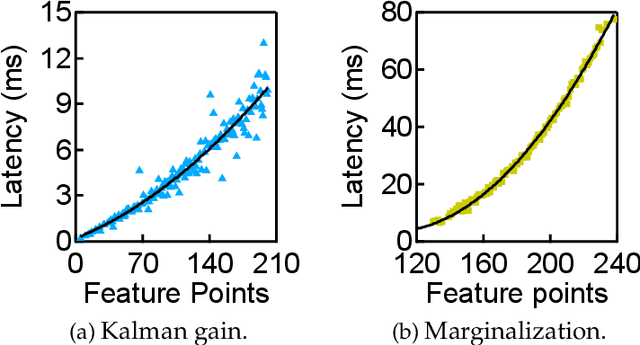

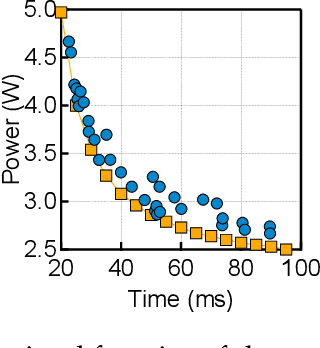

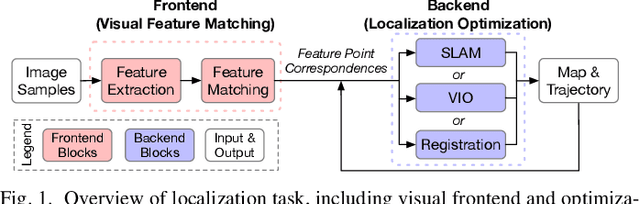

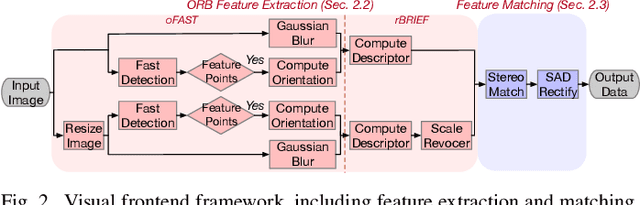

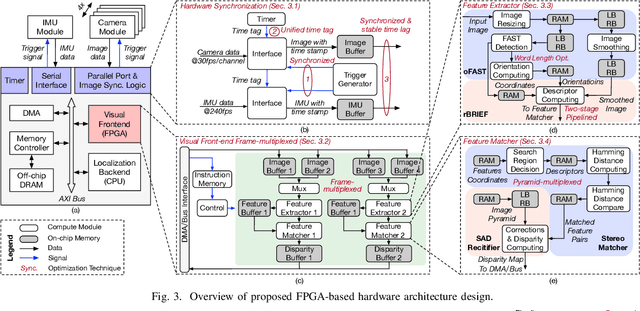

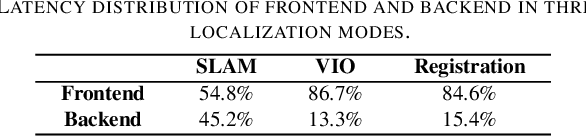

Abstract: From our past few years of commercial deployment experience, we have identified localization as a critical task in autonomous machine applications and a great acceleration target. In this paper, based on the observation that the visual frontend is a major performance and energy consumption bottleneck, we present our design and implementation of an energy-efficient hardware architecture for an ORB (Oriented FAST and Rotated BRIEF) based localization system on FPGAs. To support our multi-sensor autonomous machine localization system, we present hardware synchronization, frame-multiplexing, and parallelization techniques, which are integrated into our design. Compared to the Nvidia TX1 and Intel i7, our FPGA-based implementation achieves 5.6x and 3.4x speedup, as well as 3.0x and 34.6x power reduction, respectively.