Sejun Song

School of Science and Engineering, University of Missouri-Kansas City, Kansas City, MO, USA

MetaGreen: Meta-Learning Inspired Transformer Selection for Green Semantic Communication

Jun 22, 2024

Abstract:Semantic Communication can transform the way we transmit information, prioritizing meaningful and effective content over individual symbols or bits. This evolution promises significant benefits, including reduced latency, lower bandwidth usage, and higher throughput compared to traditional communication. However, the development of Semantic Communication faces a crucial challenge: the need for universal metrics to benchmark the joint effects of semantic information loss and energy consumption. This research introduces an innovative solution: the ``Energy-Optimized Semantic Loss'' (EOSL) function, a novel multi-objective loss function that effectively balances semantic information loss and energy consumption. Through comprehensive experiments on transformer models, including energy benchmarking, we demonstrate the remarkable effectiveness of EOSL-based model selection. We have established that EOSL-based transformer model selection achieves up to 83\% better similarity-to-power ratio (SPR) compared to BLEU score-based selection and 67\% better SPR compared to solely lowest power usage-based selection. Furthermore, we extend the applicability of EOSL to diverse and varying contexts, inspired by the principles of Meta-Learning. By cumulatively applying EOSL, we enable the model selection system to adapt to this change, leveraging historical EOSL values to guide the learning process. This work lays the foundation for energy-efficient model selection and the development of green semantic communication.

MIPI 2024 Challenge on Few-shot RAW Image Denoising: Methods and Results

Jun 11, 2024

Abstract:The increasing demand for computational photography and imaging on mobile platforms has led to the widespread development and integration of advanced image sensors with novel algorithms in camera systems. However, the scarcity of high-quality data for research and the rare opportunity for in-depth exchange of views from industry and academia constrain the development of mobile intelligent photography and imaging (MIPI). Building on the achievements of the previous MIPI Workshops held at ECCV 2022 and CVPR 2023, we introduce our third MIPI challenge including three tracks focusing on novel image sensors and imaging algorithms. In this paper, we summarize and review the Few-shot RAW Image Denoising track on MIPI 2024. In total, 165 participants were successfully registered, and 7 teams submitted results in the final testing phase. The developed solutions in this challenge achieved state-of-the-art erformance on Few-shot RAW Image Denoising. More details of this challenge and the link to the dataset can be found at https://mipichallenge.org/MIPI2024.

Deep RAW Image Super-Resolution. A NTIRE 2024 Challenge Survey

Apr 24, 2024

Abstract:This paper reviews the NTIRE 2024 RAW Image Super-Resolution Challenge, highlighting the proposed solutions and results. New methods for RAW Super-Resolution could be essential in modern Image Signal Processing (ISP) pipelines, however, this problem is not as explored as in the RGB domain. Th goal of this challenge is to upscale RAW Bayer images by 2x, considering unknown degradations such as noise and blur. In the challenge, a total of 230 participants registered, and 45 submitted results during thee challenge period. The performance of the top-5 submissions is reviewed and provided here as a gauge for the current state-of-the-art in RAW Image Super-Resolution.

Transformers for Green Semantic Communication: Less Energy, More Semantics

Oct 11, 2023Abstract:Semantic communication aims to transmit meaningful and effective information rather than focusing on individual symbols or bits, resulting in benefits like reduced latency, bandwidth usage, and higher throughput compared to traditional communication. However, semantic communication poses significant challenges due to the need for universal metrics for benchmarking the joint effects of semantic information loss and practical energy consumption. This research presents a novel multi-objective loss function named "Energy-Optimized Semantic Loss" (EOSL), addressing the challenge of balancing semantic information loss and energy consumption. Through comprehensive experiments on transformer models, including CPU and GPU energy usage, it is demonstrated that EOSL-based encoder model selection can save up to 90\% of energy while achieving a 44\% improvement in semantic similarity performance during inference in this experiment. This work paves the way for energy-efficient neural network selection and the development of greener semantic communication architectures.

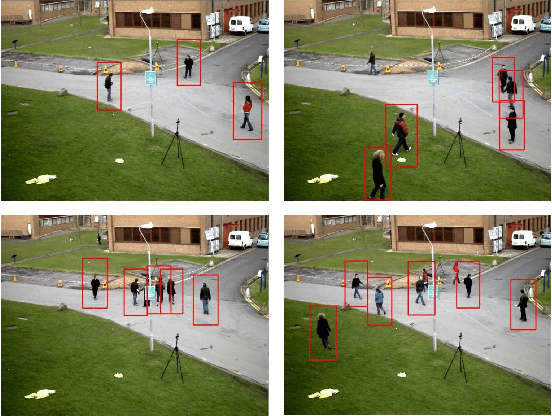

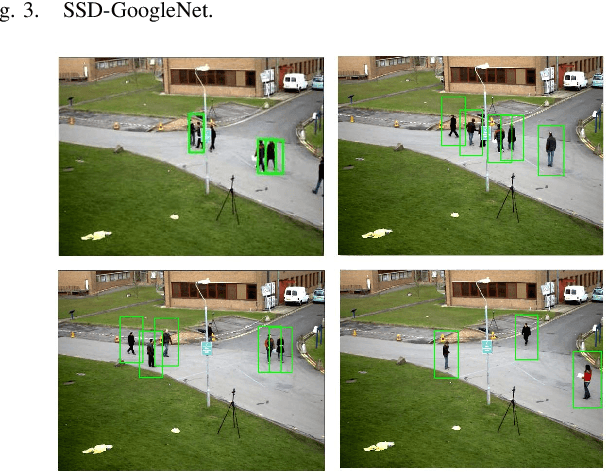

Smart Surveillance as an Edge Network Service: from Harr-Cascade, SVM to a Lightweight CNN

Oct 01, 2018

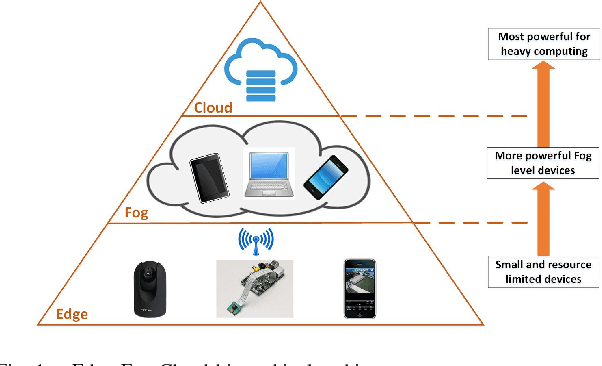

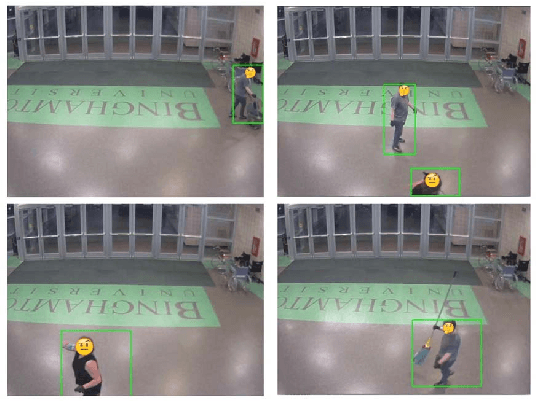

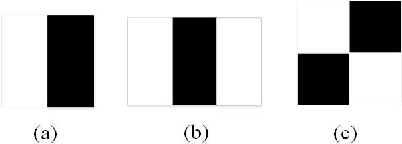

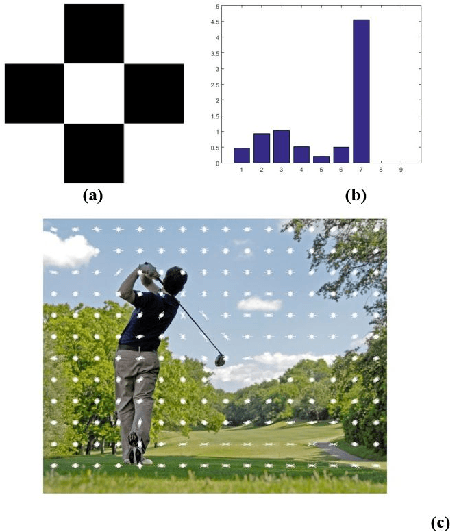

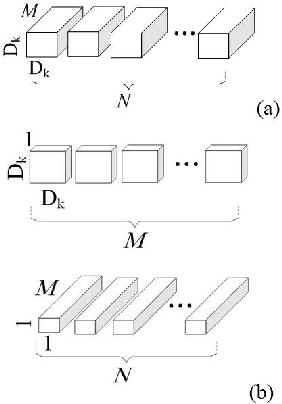

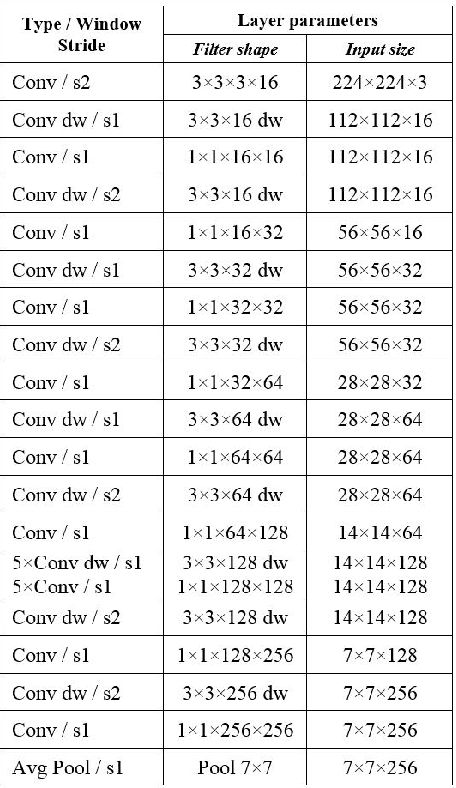

Abstract:Edge computing efficiently extends the realm of information technology beyond the boundary defined by cloud computing paradigm. Performing computation near the source and destination, edge computing is promising to address the challenges in many delay-sensitive applications, like real-time human surveillance. Leveraging the ubiquitously connected cameras and smart mobile devices, it enables video analytics at the edge. In recent years, many smart video surveillance approaches are proposed for object detection and tracking by using Artificial Intelligence (AI) and Machine Learning (ML) algorithms. This work explores the feasibility of two popular human-objects detection schemes, Harr-Cascade and HOG feature extraction and SVM classifier, at the edge and introduces a lightweight Convolutional Neural Network (L-CNN) leveraging the depthwise separable convolution for less computation, for human detection. Single Board computers (SBC) are used as edge devices for tests and algorithms are validated using real-world campus surveillance video streams and open data sets. The experimental results are promising that the final algorithm is able to track humans with a decent accuracy at a resource consumption affordable by edge devices in real-time manner.

Real-Time Human Detection as an Edge Service Enabled by a Lightweight CNN

Apr 24, 2018

Abstract:Edge computing allows more computing tasks to take place on the decentralized nodes at the edge of networks. Today many delay sensitive, mission-critical applications can leverage these edge devices to reduce the time delay or even to enable real time, online decision making thanks to their onsite presence. Human objects detection, behavior recognition and prediction in smart surveillance fall into that category, where a transition of a huge volume of video streaming data can take valuable time and place heavy pressure on communication networks. It is widely recognized that video processing and object detection are computing intensive and too expensive to be handled by resource limited edge devices. Inspired by the depthwise separable convolution and Single Shot Multi-Box Detector (SSD), a lightweight Convolutional Neural Network (LCNN) is introduced in this paper. By narrowing down the classifier's searching space to focus on human objects in surveillance video frames, the proposed LCNN algorithm is able to detect pedestrians with an affordable computation workload to an edge device. A prototype has been implemented on an edge node (Raspberry PI 3) using openCV libraries, and satisfactory performance is achieved using real world surveillance video streams. The experimental study has validated the design of LCNN and shown it is a promising approach to computing intensive applications at the edge.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge