Cory Beard

School of Science and Engineering, University of Missouri-Kansas City, Kansas City, MO, USA

MetaGreen: Meta-Learning Inspired Transformer Selection for Green Semantic Communication

Jun 22, 2024

Abstract: Semantic Communication can transform the way we transmit information, prioritizing meaningful and effective content over individual symbols or bits. This evolution promises significant benefits, including reduced latency, lower bandwidth usage, and higher throughput compared to traditional communication. However, the development of Semantic Communication faces a crucial challenge: the need for universal metrics to benchmark the joint effects of semantic information loss and energy consumption. This research introduces the "Energy-Optimized Semantic Loss" (EOSL) function, a novel multi-objective loss function that balances semantic information loss and energy consumption. Through comprehensive experiments on transformer models, including energy benchmarking, we demonstrate the effectiveness of EOSL-based model selection. EOSL-based transformer model selection achieves up to 83% better similarity-to-power ratio (SPR) compared to BLEU score-based selection and 67% better SPR compared to selection based solely on lowest power usage. Furthermore, we extend the applicability of EOSL to diverse and varying contexts, inspired by the principles of Meta-Learning. By cumulatively applying EOSL, we enable the model selection system to adapt to changes in context, leveraging historical EOSL values to guide the learning process. This work lays the foundation for energy-efficient model selection and the development of green semantic communication.

MODIPHY: Multimodal Obscured Detection for IoT using PHantom Convolution-Enabled Faster YOLO

Feb 12, 2024

Abstract: Low-light conditions and occluded scenarios impede object detection in real-world Internet of Things (IoT) applications like autonomous vehicles and security systems. While advanced machine learning models strive for accuracy, their computational demands clash with the limitations of resource-constrained devices, hampering real-time performance. We tackle this challenge by introducing "YOLO Phantom", one of the smallest YOLO models ever conceived. YOLO Phantom utilizes the novel Phantom Convolution block, achieving accuracy comparable to the latest YOLOv8n model while reducing both parameters and model size by 43%, resulting in a 19% reduction in Giga Floating Point Operations (GFLOPs). YOLO Phantom leverages transfer learning on our multimodal RGB-infrared dataset to address low-light and occlusion issues, equipping it with robust vision under adverse conditions. Its real-world efficacy is demonstrated on an IoT platform with advanced low-light and RGB cameras, connected to an AWS-based notification endpoint for efficient real-time object detection. Benchmarks reveal a boost of 17% and 14% in frames per second (FPS) for thermal and RGB detection, respectively, compared to the baseline YOLOv8n model. For community contribution, both the code and the multimodal dataset are available on GitHub.

Transformers for Green Semantic Communication: Less Energy, More Semantics

Oct 11, 2023

Abstract: Semantic communication aims to transmit meaningful and effective information rather than focusing on individual symbols or bits, resulting in benefits like reduced latency, lower bandwidth usage, and higher throughput compared to traditional communication. However, semantic communication poses significant challenges due to the need for universal metrics for benchmarking the joint effects of semantic information loss and practical energy consumption. This research presents a novel multi-objective loss function named "Energy-Optimized Semantic Loss" (EOSL), addressing the challenge of balancing semantic information loss and energy consumption. Through comprehensive experiments on transformer models, including CPU and GPU energy usage, it is demonstrated that EOSL-based encoder model selection can save up to 90% of energy while achieving a 44% improvement in semantic similarity performance during inference in this experiment. This work paves the way for energy-efficient neural network selection and the development of greener semantic communication architectures.

Deep-Mobility: A Deep Learning Approach for an Efficient and Reliable 5G Handover

Jan 19, 2021

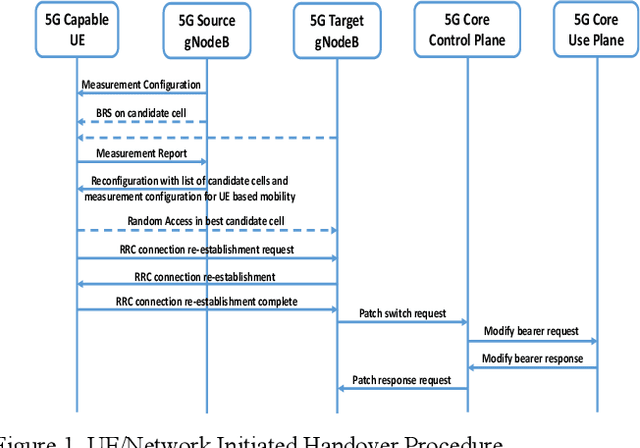

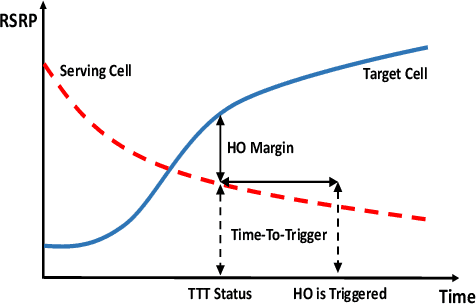

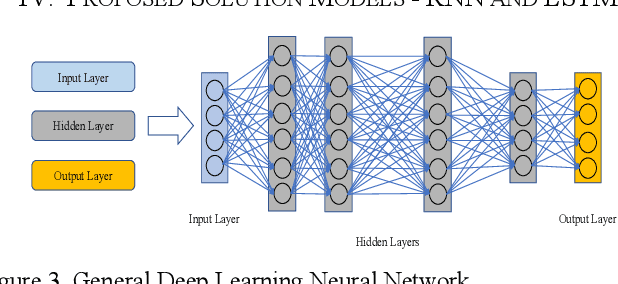

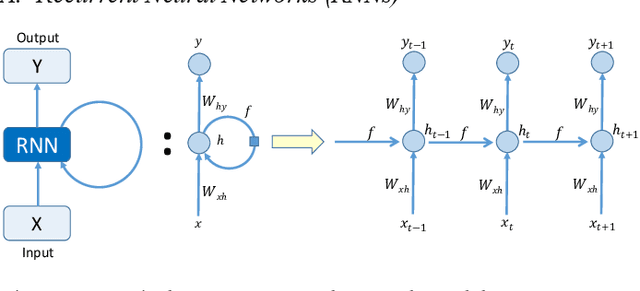

Abstract: 5G cellular networks are being deployed all over the world, and this architecture supports ultra-dense network (UDN) deployment. Small cells play a very important role in providing 5G connectivity to end users. Exponential increases in devices, data, and network demands make it mandatory for service providers to manage handovers better in order to deliver the services that a user desires. In contrast to traditional handover improvement schemes, we develop a 'Deep-Mobility' model by implementing a deep learning neural network (DLNN) to manage network mobility, utilizing in-network deep learning and prediction. We use network key performance indicators (KPIs) to train our model to analyze network traffic and handover requirements. In this method, RF signal conditions are continuously observed and tracked using deep learning neural networks such as the recurrent neural network (RNN) or Long Short-Term Memory (LSTM) network, and system-level inputs are considered in conjunction to reach a collective handover decision. We can study multiple parameters and interactions between system events along with user mobility, which would then trigger a handoff in any given scenario. Here, we show the fundamental modeling approach and demonstrate the usefulness of our model while investigating the impacts and sensitivities of certain KPIs from the user equipment (UE) and network side.
