Ludovic Righetti

NYU Tandon School of Engineering

DeepQ Stepper: A framework for reactive dynamic walking on uneven terrain

Oct 28, 2020

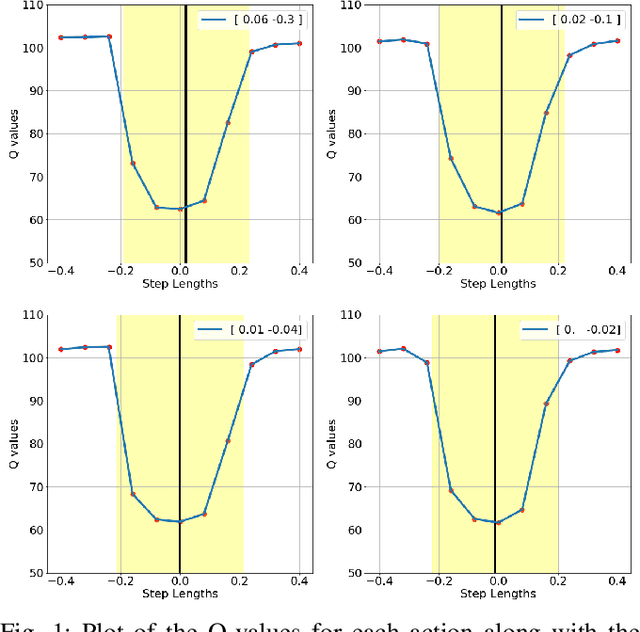

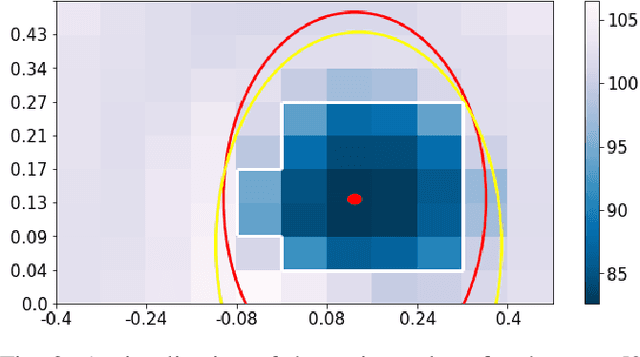

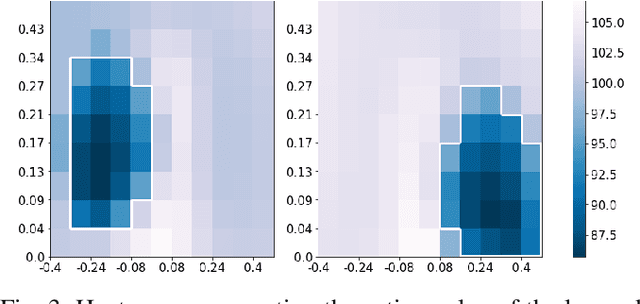

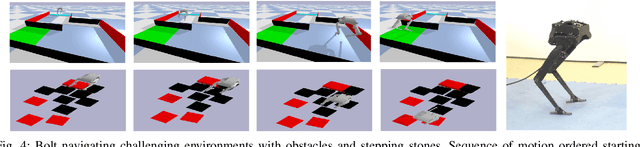

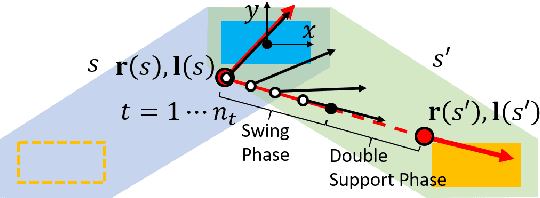

Abstract: Reactive stepping and push recovery for biped robots are often restricted to flat terrain because of the difficulty in computing capture regions for nonlinear dynamic models. In this paper, we address this limitation by using reinforcement learning to approximately learn the 3D capture region for such systems. We propose a novel 3D reactive stepper, the DeepQ stepper, that computes optimal step locations for walking at different velocities using the 3D capture regions approximated by the action-value function. We demonstrate the ability of the approach to learn stepping with a simplified 3D pendulum model and with full robot dynamics. Further, the stepper achieves higher performance when it learns approximate capture regions while taking into account the entire dynamics of the robot, which are often ignored in existing reactive steppers based on simplified models. The DeepQ stepper can handle non-convex terrain with obstacles, walk on restricted surfaces like stepping stones, and recover from external disturbances at a constant computational cost.

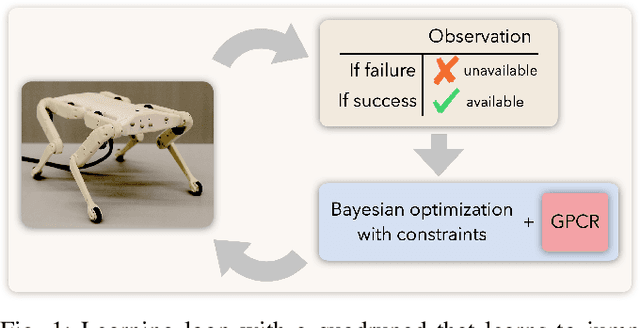

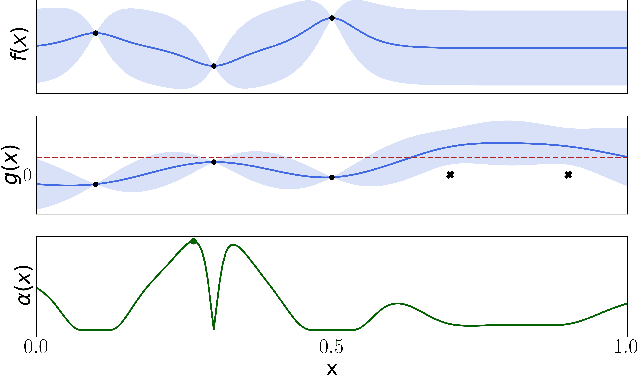

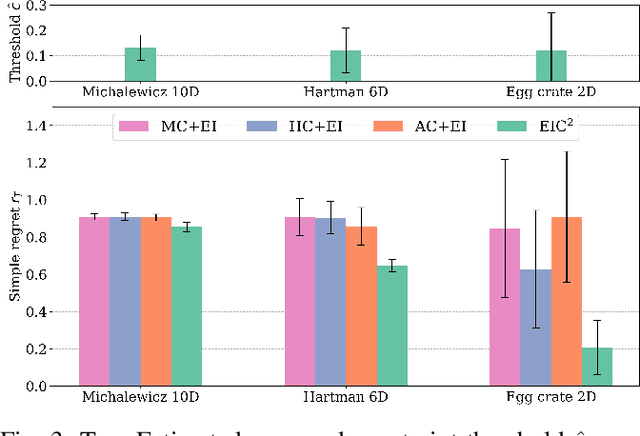

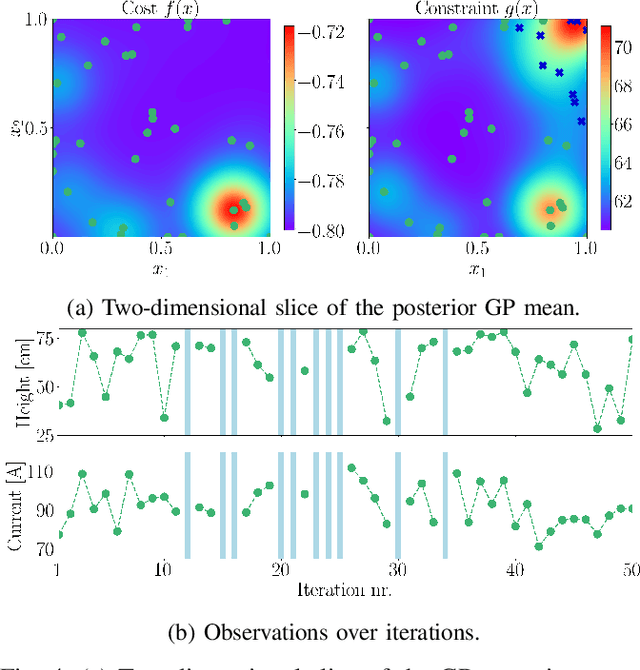

Robot Learning with Crash Constraints

Oct 16, 2020

Abstract: In the past decade, numerous machine learning algorithms have been shown to successfully learn optimal policies to control real robotic systems. However, it is not rare to encounter failing behaviors as the learning loop progresses. Specifically, in robot applications where failing is undesired but not catastrophic, many algorithms struggle with leveraging data obtained from failures. This is usually caused by (i) the failed experiment ending prematurely, or (ii) the acquired data being scarce or corrupted. Both complicate the design of proper reward functions to penalize failures. In this paper, we propose a framework that addresses those issues. We consider failing behaviors as those that violate a constraint and address the problem of "learning with crash constraints", where no data is obtained upon constraint violation. The no-data case is addressed by a novel GP model (GPCR) for the constraint that combines discrete events (failure/success) with continuous observations (only obtained upon success). We demonstrate the effectiveness of our framework on simulated benchmarks and on a real jumping quadruped, where the constraint boundary is unknown a priori. Experimental data is collected directly on the real robot by means of constrained Bayesian optimization. Our results outperform manual tuning, and GPCR proves useful in estimating the constraint boundary.

Bipedal Walking Control using Variable Horizon MPC

Oct 16, 2020

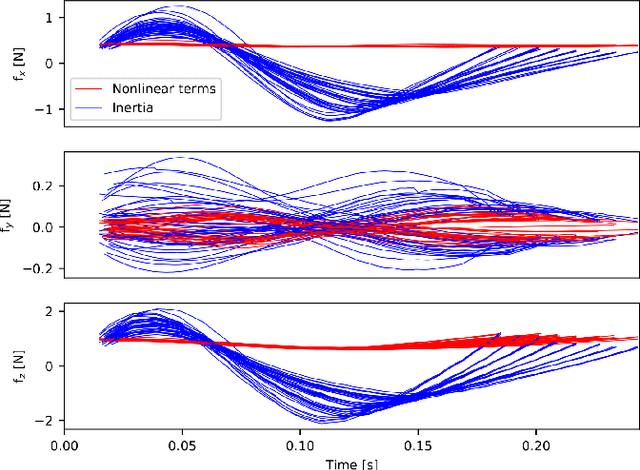

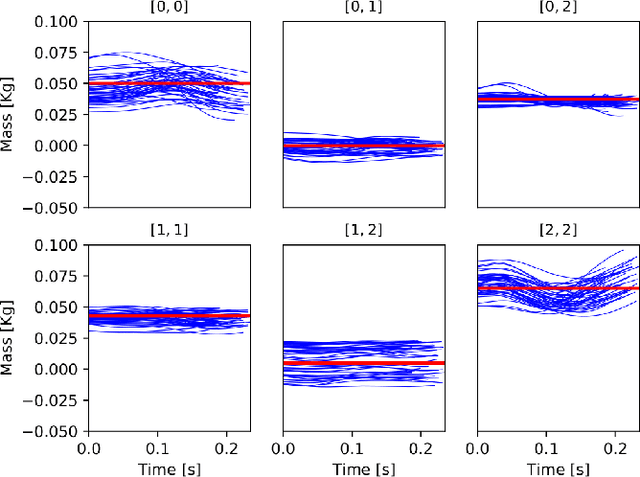

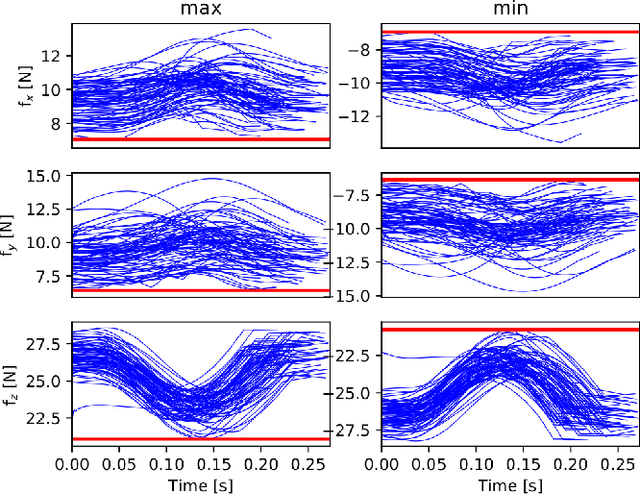

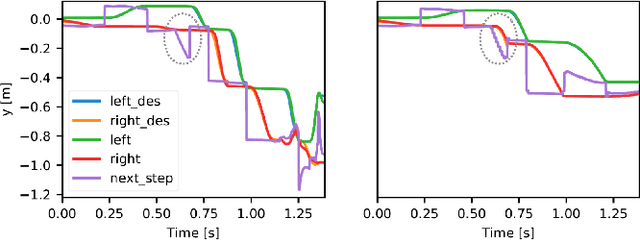

Abstract: In this paper, we present a novel two-level Variable Horizon Model Predictive Control (VH-MPC) framework for bipedal locomotion. In this framework, the higher level computes the landing location and timing (horizon length) of the swing foot to stabilize the unstable part of the center of mass (CoM) dynamics, using feedback from the CoM state. The lower level takes into account the swing foot dynamics and generates dynamically consistent trajectories for landing at the desired time as close as possible to the desired location. To do that, we use a simplified model of the robot dynamics projected in swing foot space that takes into account joint torque constraints as well as the friction cone constraints of the stance foot. We show the effectiveness of our proposed control framework by implementing robust walking patterns on our torque-controlled and open-source biped robot, Bolt. We report extensive simulations and real robot experiments in the presence of various disturbances and uncertainties.

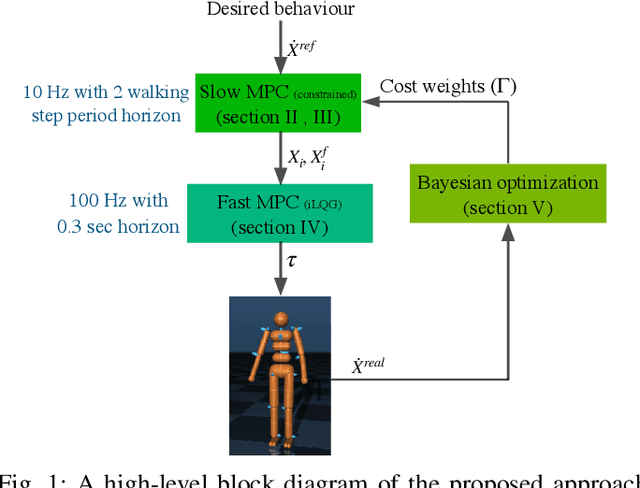

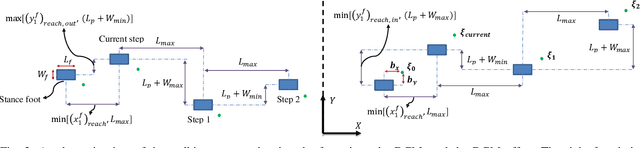

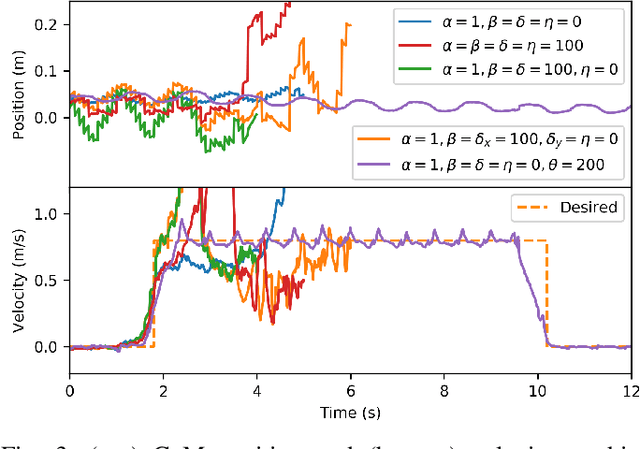

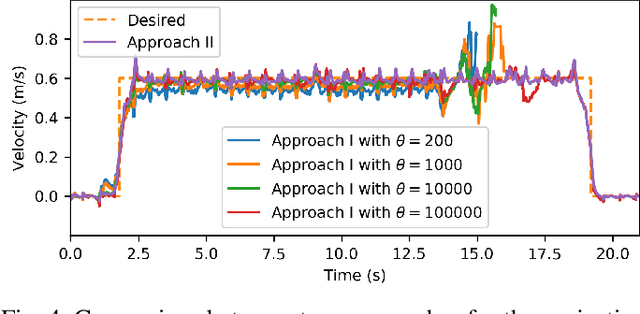

Robust walking based on MPC with viability-based feasibility guarantees

Oct 09, 2020

Abstract: Model predictive control (MPC) has shown great success for controlling complex systems such as legged robots. However, when closing the loop, the performance and feasibility of the finite horizon optimal control problem solved at each control cycle are no longer guaranteed. This is due to model discrepancies, the effect of low-level controllers, uncertainties and sensor noise. To address these issues, we propose a modified version of a standard MPC approach used in legged locomotion with viability (weak forward invariance) guarantees that ensures the feasibility of the optimal control problem. Moreover, we use past experimental data to find the best cost weights, which measure a combination of performance, constraint satisfaction robustness, or stability (invariance). These interpretable costs measure the trade-off between robustness and performance. For this purpose, we use Bayesian optimization (BO) to systematically design experiments that help efficiently collect data to learn a cost function leading to robust performance. Our simulation results with different realistic disturbances (i.e., external pushes, unmodeled actuator dynamics and computational delay) show the effectiveness of our approach to create robust controllers for humanoid robots.

Efficient Multi-Contact Pattern Generation with Sequential Convex Approximations of the Centroidal Dynamics

Oct 02, 2020

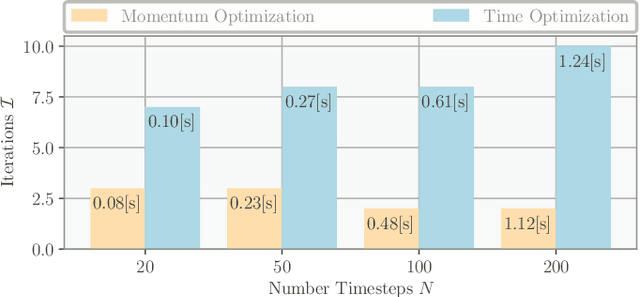

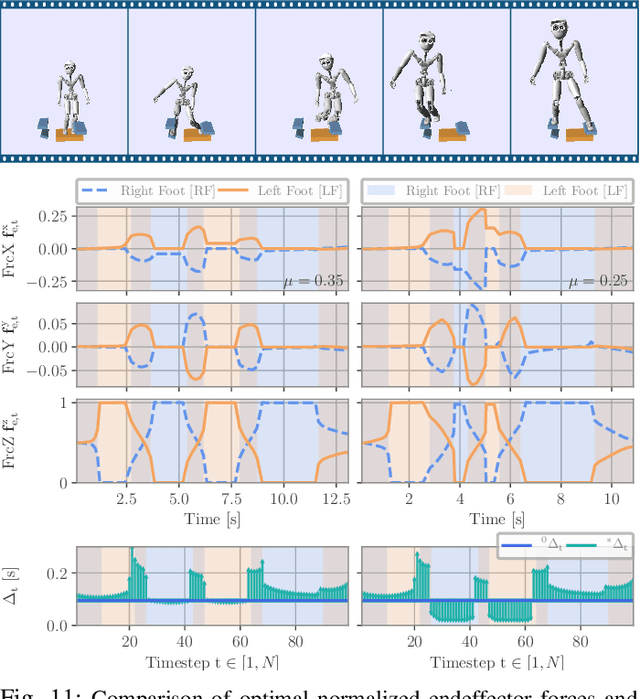

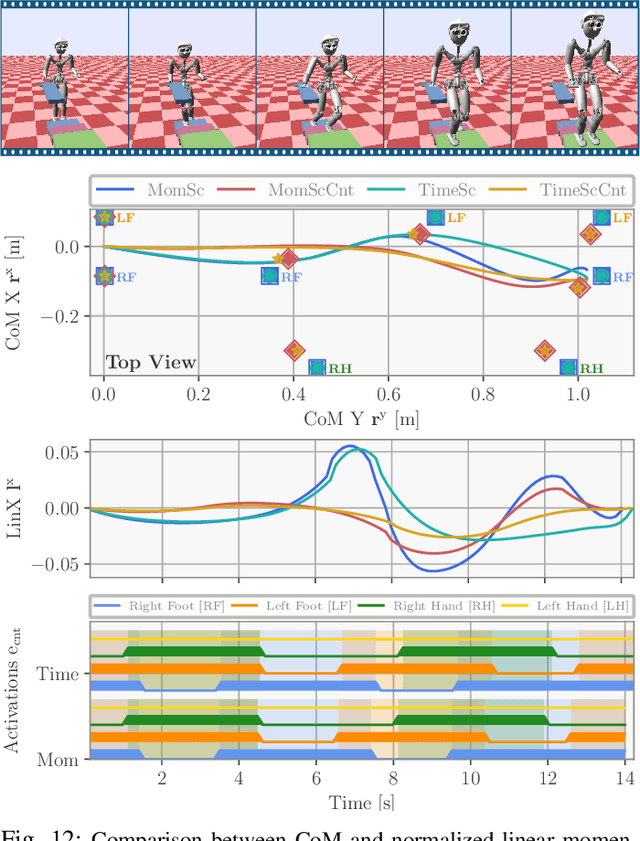

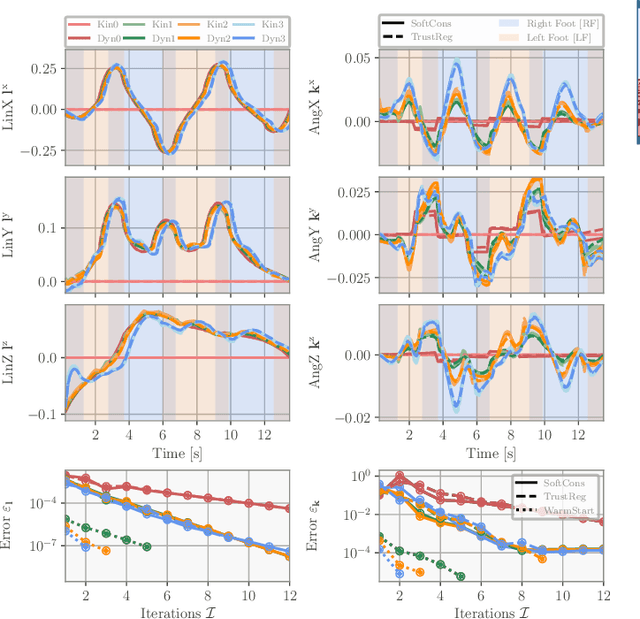

Abstract: This paper investigates the problem of efficient computation of physically consistent multi-contact behaviors. Recent work showed that under mild assumptions, the problem could be decomposed into simpler kinematic and centroidal dynamic optimization problems. Based on this approach, we propose a general convex relaxation of the centroidal dynamics leading to two computationally efficient algorithms based on iterative resolutions of second-order cone programs. They optimize centroidal trajectories, contact forces and, importantly, the timing of the motions. We include the approach in a kino-dynamic optimization method to generate full-body movements. Finally, the approach is embedded in a mixed-integer solver to further find dynamically consistent contact sequences. Extensive numerical experiments demonstrate the computational efficiency of the approach, suggesting that it could be used in a fast receding horizon control loop. Executions of the planned motions on simulated humanoids and quadrupeds and on a real quadruped robot further show the quality of the optimized motions.

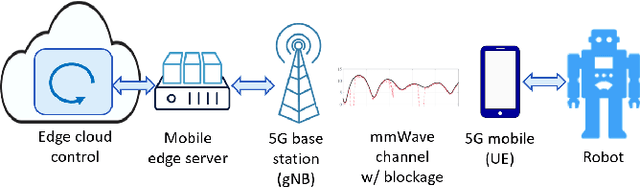

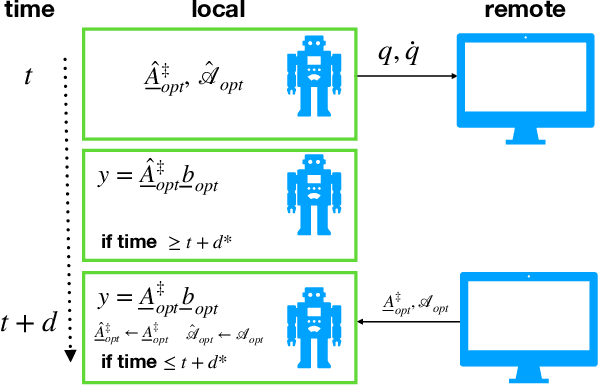

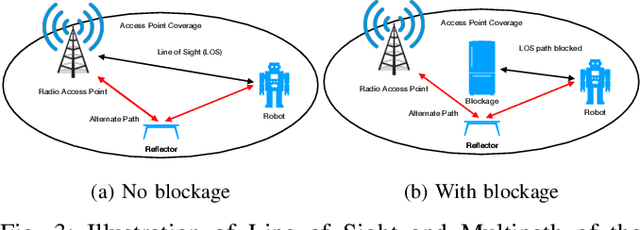

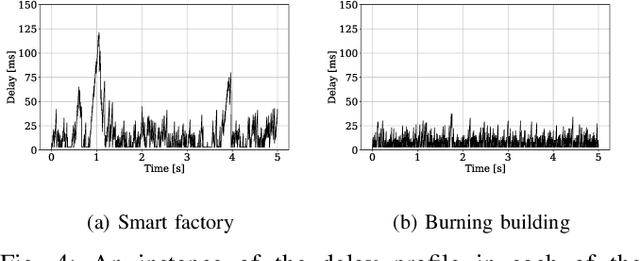

Enabling Remote Whole-Body Control with 5G Edge Computing

Aug 19, 2020

Abstract: Real-world applications require light-weight, energy-efficient, fully autonomous robots. Yet, increasing autonomy is oftentimes synonymous with escalating computational requirements. It might thus be desirable to offload intensive computation (not only sensing and planning, but also low-level whole-body control) to remote servers in order to reduce on-board computational needs. Fifth Generation (5G) wireless cellular technology, with its low latency and high bandwidth capabilities, has the potential to unlock cloud-based high performance control of complex robots. However, state-of-the-art control algorithms for legged robots can only tolerate very low control delays, which even ultra-low latency 5G edge computing can sometimes fail to achieve. In this work, we investigate the problem of cloud-based whole-body control of legged robots over a 5G link. We propose a novel approach that consists of a standard optimization-based controller on the network edge and a local linear, approximately optimal controller that significantly reduces on-board computational needs while increasing robustness to delay and possible loss of communication. Simulation experiments on humanoid balancing and walking tasks that include a realistic 5G communication model demonstrate significant improvement of the reliability of robot locomotion under jitter and delays likely to be experienced in 5G wireless links.

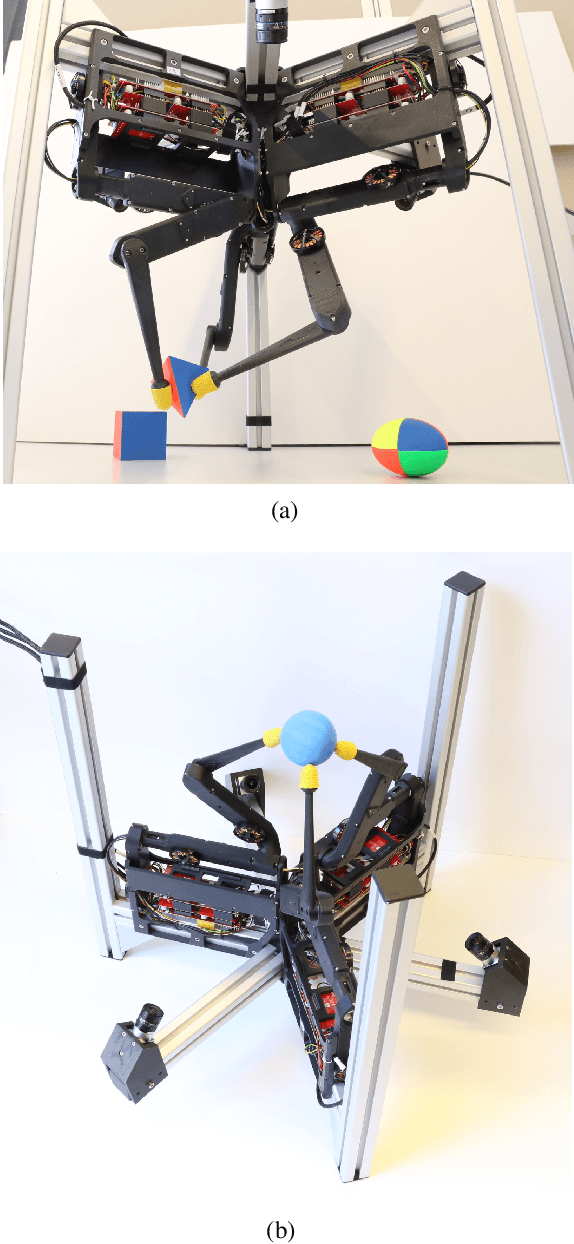

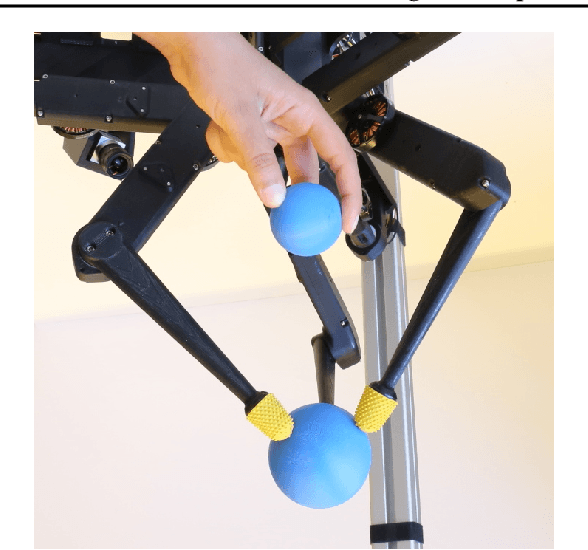

TriFinger: An Open-Source Robot for Learning Dexterity

Aug 08, 2020

Abstract: Dexterous object manipulation remains an open problem in robotics, despite the rapid progress in machine learning during the past decade. We argue that a hindrance is the high cost of experimentation on real systems, in terms of both time and money. We address this problem by proposing an open-source robotic platform which can safely operate without human supervision. The hardware is inexpensive (about $5000) yet highly dynamic, robust, and capable of complex interaction with external objects. The software operates at 1 kHz and performs safety checks to prevent the hardware from breaking. The easy-to-use front-end (in C++ and Python) is suitable for real-time control as well as deep reinforcement learning. In addition, the software framework is largely robot-agnostic and can hence be used independently of the hardware proposed herein. Finally, we illustrate the potential of the proposed platform through a number of experiments, including real-time optimal control, deep reinforcement learning from scratch, throwing, and writing.

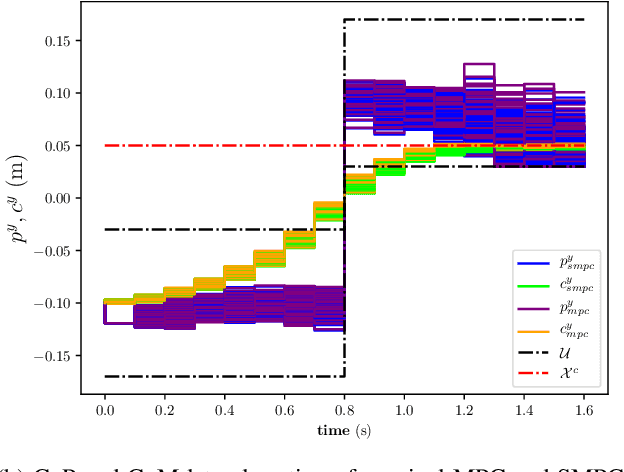

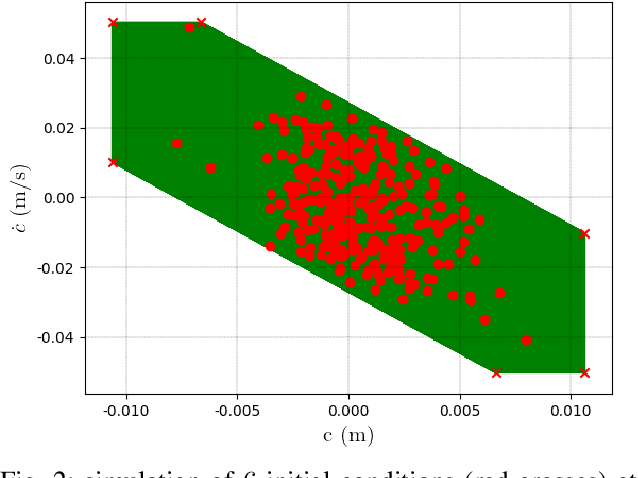

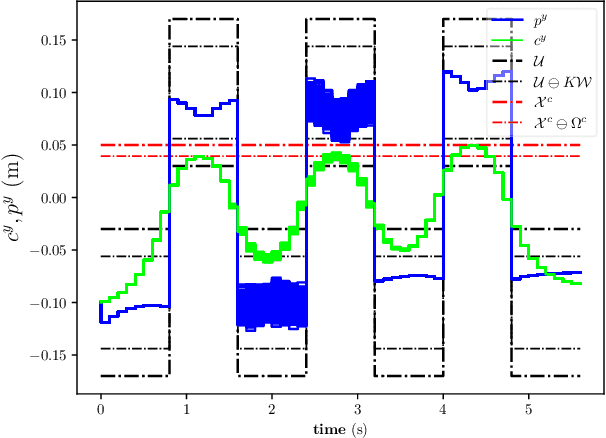

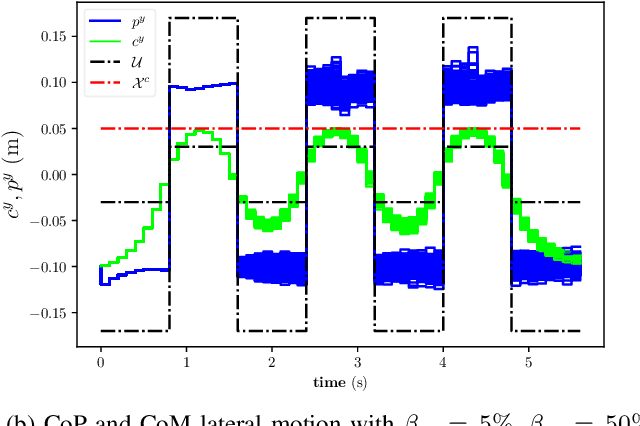

Stochastic and Robust MPC for Bipedal Locomotion: A Comparative Study on Robustness and Performance

May 15, 2020

Abstract: Linear Model Predictive Control (MPC) has been successfully used for generating feasible walking motions for humanoid robots. However, the effect of uncertainties on constraint satisfaction has only been studied using Robust MPC (RMPC) approaches, which account for the worst-case realization of bounded disturbances at each time instant. In this letter, we propose for the first time to use linear stochastic MPC (SMPC) to account for uncertainties in bipedal walking. We show that SMPC offers more flexibility to the user (or a high level decision maker) by tolerating small (user-defined) probabilities of constraint violation. Therefore, SMPC can be tuned to achieve a constraint satisfaction probability that is arbitrarily close to 100%, but without sacrificing performance as much as tube-based RMPC. We compare SMPC against RMPC in terms of robustness (constraint satisfaction) and performance (optimality). Our results highlight the benefits of SMPC and its interest for the robotics community as a powerful mathematical tool for dealing with uncertainties.
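The trade-off described in this abstract can be illustrated with a minimal sketch that is not taken from the paper. It assumes a single scalar constraint `x + w <= x_max` and, purely for illustration, a zero-mean Gaussian disturbance `w` with standard deviation `sigma`. The function names, the Gaussian assumption, and the 3-sigma worst-case bound are all hypothetical choices made here. Under those assumptions, a chance constraint with violation probability `eps` tightens the bound by `sigma * Phi^{-1}(1 - eps)`, whereas a worst-case (robust) treatment tightens it by the full disturbance bound.

```python
from statistics import NormalDist


def stochastic_backoff(sigma: float, eps: float) -> float:
    """Constraint tightening for P(x + w <= x_max) >= 1 - eps.

    Assumption (illustrative only): w ~ N(0, sigma^2).
    The nominal state must then satisfy x <= x_max - backoff.
    """
    if not 0.0 < eps < 1.0:
        raise ValueError("eps must lie strictly between 0 and 1")
    return sigma * NormalDist().inv_cdf(1.0 - eps)


def robust_backoff(w_max: float) -> float:
    """Worst-case tightening for a disturbance bounded by |w| <= w_max."""
    return w_max


if __name__ == "__main__":
    sigma = 0.01          # hypothetical disturbance std. dev. (metres)
    w_max = 3.0 * sigma   # hypothetical worst-case bound used by the robust variant
    for eps in (0.2, 0.05, 0.01):
        print(f"eps={eps}: stochastic={stochastic_backoff(sigma, eps):.4f} m, "
              f"robust={robust_backoff(w_max):.4f} m")
```

In this toy setting, shrinking `eps` increases the stochastic back-off, which mirrors the abstract's point that the user can trade constraint-satisfaction probability against performance.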

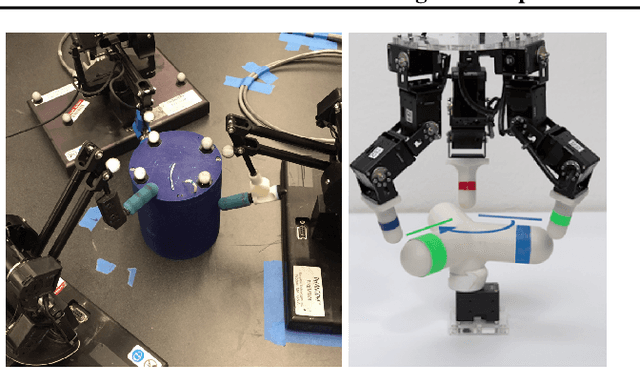

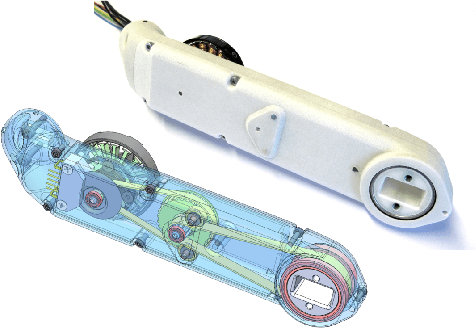

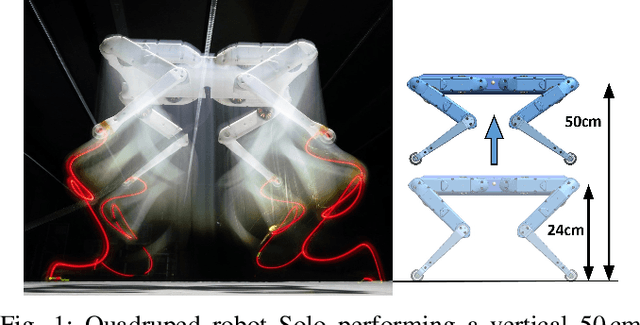

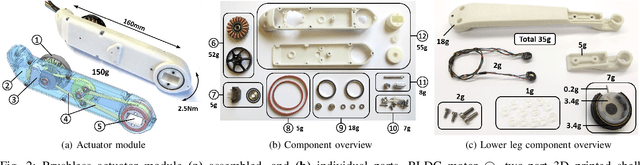

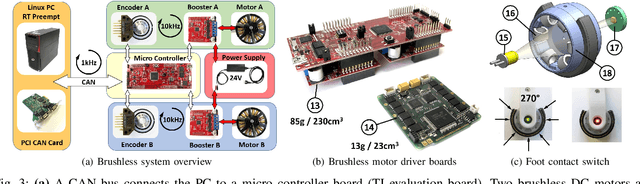

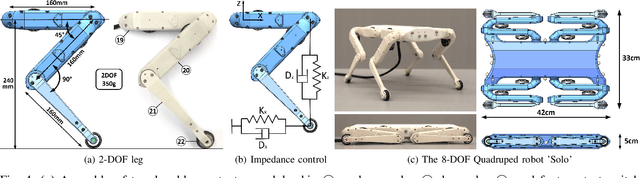

An Open Torque-Controlled Modular Robot Architecture for Legged Locomotion Research

Sep 30, 2019

Abstract: We present a new open-source torque-controlled legged robot system, with a low-cost and low-complexity actuator module at its core. It consists of a low-weight, high-torque brushless DC motor and a low gear ratio transmission suitable for impedance and force control. We also present a novel foot contact sensor suitable for legged locomotion with hard impacts. A 2.2 kg quadruped robot with a large range of motion is assembled from 8 identical actuator modules and 4 lower legs with foot contact sensors. To the best of our knowledge, it is the lightest force-controlled quadruped robot. We leverage standard plastic 3D printing and off-the-shelf parts, resulting in light-weight and inexpensive robots, allowing for rapid distribution and duplication within the research community. In order to quantify the capabilities of our design, we systematically measure the achieved impedance at the foot in static and dynamic scenarios. We measured up to 10.8 dimensionless leg stiffness without active damping, which is comparable to the leg stiffness of a running human. Finally, in order to demonstrate the capabilities of our quadruped robot, we propose a novel controller which combines feedforward contact forces computed from a kino-dynamic optimizer with impedance control of the robot center of mass and base orientation. The controller is capable of regulating complex motions which are robust to environmental uncertainty.

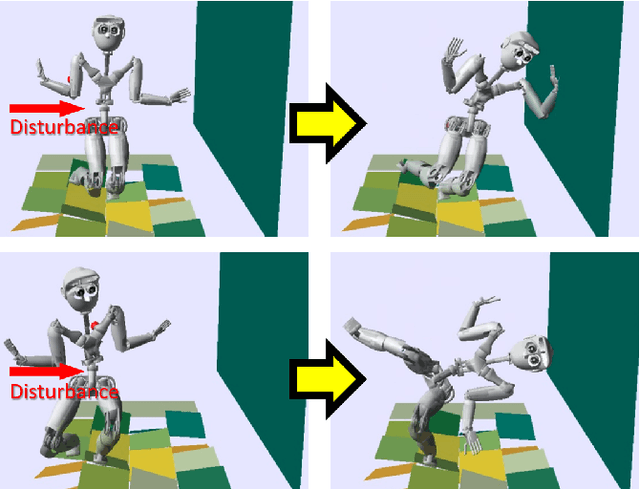

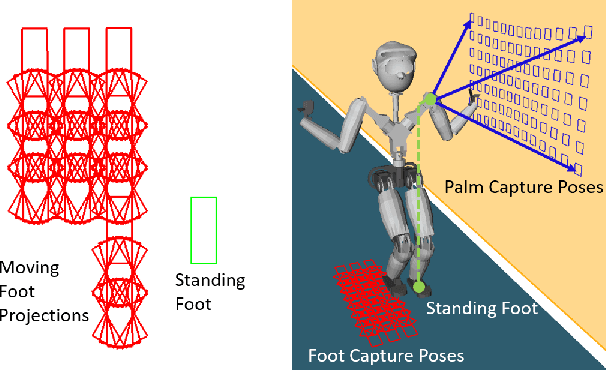

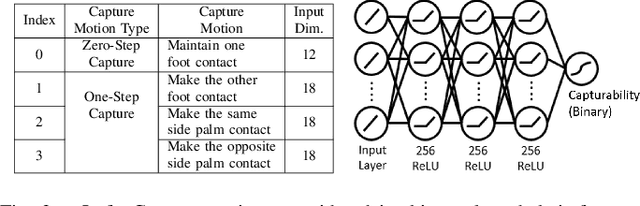

Robust Humanoid Contact Planning with Learned Zero- and One-Step Capturability Prediction

Sep 19, 2019

Abstract: Humanoid robots maintain balance and navigate by controlling the contact wrenches applied to the environment. While it is possible to plan dynamically-feasible motion that applies appropriate wrenches using existing methods, a humanoid may also be affected by external disturbances. Existing systems typically rely on controllers to reactively recover from disturbances. However, such controllers may fail when the robot cannot reach contacts capable of rejecting a given disturbance. In this paper, we propose a search-based footstep planner which aims to maximize the probability of the robot successfully reaching the goal without falling under disturbances. The planner considers not only the poses of the planned contact sequence, but also alternative contacts near the planned contact sequence that can be used to recover from external disturbances. Although this additional consideration significantly increases the computation load, we train neural networks to efficiently predict multi-contact zero-step and one-step capturability, which allows the planner to generate robust contact sequences efficiently. Our results show that our approach generates footstep sequences that are more robust to external disturbances than a conventional footstep planner in four challenging scenarios.
