Guoquan Huang

Proprioceptive-only State Estimation for Legged Robots with Set-Coverage Measurements of Learned Dynamics

Mar 18, 2026

Abstract:Proprioceptive-only state estimation is attractive for legged robots since it is computationally cheaper and is unaffected by perceptually degraded conditions. The history of joint-level measurements contains rich information that can be used to infer the dynamics of the system and subsequently produce navigational measurements. Recent approaches produce these estimates with learned measurement models and fuse them with IMU data under a Gaussian noise assumption. However, this assumption can easily break down with limited training data, rendering the estimates inconsistent and potentially divergent. In this work, we propose a proprioceptive-only state estimation framework for legged robots that characterizes the measurement noise using set-coverage statements that do not assume any distribution. We develop a practical and computationally inexpensive method to use these set-coverage measurements with a Gaussian filter in a systematic way. We validate the approach both in simulation and on two real-world quadrupedal datasets. Comparison with the Gaussian baselines shows that our proposed method remains consistent and is not prone to drift under real noise scenarios.

Consistent and Efficient MSCKF-based LiDAR-Inertial Odometry with Inferred Cluster-to-Plane Constraints for UAVs

Mar 13, 2026

Abstract:Robust and accurate navigation is critical for Unmanned Aerial Vehicles (UAVs), especially for those with stringent Size, Weight, and Power (SWaP) constraints. However, most state-of-the-art (SOTA) LiDAR-Inertial Odometry (LIO) systems still suffer from estimation inconsistency and computational bottlenecks when deployed on such platforms. To address these issues, this paper proposes a consistent and efficient tightly-coupled LIO framework tailored for UAVs. Within the efficient Multi-State Constraint Kalman Filter (MSCKF) framework, we build coplanar constraints inferred from planar features observed across a sliding window. By applying null-space projection to sliding-window coplanar constraints, we eliminate the direct dependency on feature parameters in the state vector, thereby mitigating overconfidence and improving consistency. More importantly, to further boost the efficiency, we introduce a parallel voxel-based data association and a novel compact cluster-to-plane measurement model. This compact measurement model losslessly reduces observation dimensionality and significantly accelerates the update process. Extensive evaluations demonstrate that our method outperforms most SOTA approaches by providing a superior balance of consistency and efficiency. It exhibits improved robustness in degenerate scenarios, achieves the lowest memory usage via its map-free nature, and runs in real-time on resource-constrained embedded platforms (e.g., NVIDIA Jetson TX2).

Learning Neural Observer-Predictor Models for Limb-level Sampling-based Locomotion Planning

Oct 26, 2025

Abstract:Accurate full-body motion prediction is essential for the safe, autonomous navigation of legged robots, enabling critical capabilities like limb-level collision checking in cluttered environments. Simplified kinematic models often fail to capture the complex, closed-loop dynamics of the robot and its low-level controller, limiting their predictions to simple planar motion. To address this, we present a learning-based observer-predictor framework that accurately predicts this motion. Our method features a neural observer with provable uniformly ultimately bounded (UUB) guarantees that provides a reliable latent state estimate from a history of proprioceptive measurements. This stable estimate initializes a computationally efficient predictor, designed for the rapid, parallel evaluation of thousands of potential trajectories required by modern sampling-based planners. We validated the system by integrating our neural predictor into an MPPI-based planner on a Vision 60 quadruped. Hardware experiments successfully demonstrated effective, limb-aware motion planning in a challenging, narrow passage and over small objects, highlighting our system's ability to provide a robust foundation for high-performance, collision-aware planning on dynamic robotic platforms.

Learning IMU Bias with Diffusion Model

May 17, 2025

Abstract:Motion sensing and tracking with IMU data is essential for spatial intelligence but is challenging due to the presence of time-varying stochastic bias. IMU bias is affected by various factors such as temperature and vibration, making it highly complex and difficult to model analytically. Recent data-driven approaches using deep learning have shown promise in predicting bias from IMU readings. However, these methods often treat the task as a regression problem, overlooking the stochastic nature of bias. In contrast, we model bias, conditioned on IMU readings, as a probabilistic distribution and design a conditional diffusion model to approximate this distribution. Through this approach, we achieve improved performance and make predictions that align more closely with the known behavior of bias.

Large-Scale Gaussian Splatting SLAM

May 15, 2025

Abstract:The recently developed Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) have shown encouraging and impressive results for visual SLAM. However, most representative methods require RGBD sensors and are only available for indoor environments. The robustness of reconstruction in large-scale outdoor scenarios remains unexplored. This paper introduces a large-scale 3DGS-based visual SLAM with stereo cameras, termed LSG-SLAM. The proposed LSG-SLAM employs a multi-modality strategy to estimate prior poses under large view changes. In tracking, we introduce feature-alignment warping constraints to alleviate the adverse effects of appearance similarity in rendering losses. For scalability to large-scale scenarios, we introduce continuous Gaussian Splatting submaps to tackle unbounded scenes with limited memory. Loops are detected between GS submaps by place recognition, and the relative pose between looped keyframes is optimized using rendering and feature warping losses. After the global optimization of camera poses and Gaussian points, a structure refinement module enhances the reconstruction quality. With extensive evaluations on the EuRoC and KITTI datasets, LSG-SLAM achieves superior performance over existing Neural, 3DGS-based, and even traditional approaches. Project page: https://lsg-slam.github.io.

Online Language Splatting

Mar 12, 2025

Abstract:To enable AI agents to interact seamlessly with both humans and 3D environments, they must not only perceive the 3D world accurately but also align human language with 3D spatial representations. While prior work has made significant progress by integrating language features into geometrically detailed 3D scene representations using 3D Gaussian Splatting (GS), these approaches rely on computationally intensive offline preprocessing of language features for each input image, limiting adaptability to new environments. In this work, we introduce Online Language Splatting, the first framework to achieve online, near real-time, open-vocabulary language mapping within a 3DGS-SLAM system without requiring pre-generated language features. The key challenge lies in efficiently fusing high-dimensional language features into 3D representations while balancing the computation speed, memory usage, rendering quality and open-vocabulary capability. To this end, we innovatively design: (1) a high-resolution CLIP embedding module capable of generating detailed language feature maps in 18ms per frame, (2) a two-stage online auto-encoder that compresses 768-dimensional CLIP features to 15 dimensions while preserving open-vocabulary capabilities, and (3) a color-language disentangled optimization approach to improve rendering quality. Experimental results show that our online method not only surpasses the state-of-the-art offline methods in accuracy but also achieves more than 40x efficiency boost, demonstrating the potential for dynamic and interactive AI applications.

Robust 4D Radar-aided Inertial Navigation for Aerial Vehicles

Feb 21, 2025

Abstract:While LiDAR and cameras are becoming ubiquitous for unmanned aerial vehicles (UAVs), they can be ineffective in challenging environments, whereas 4D millimeter-wave (MMW) radars, which can provide robust 3D ranging and Doppler velocity measurements, remain less exploited for aerial navigation. In this paper, we develop an efficient and robust error-state Kalman filter (ESKF)-based radar-inertial navigation system for UAVs. The key idea of the proposed approach is point-to-distribution radar scan matching, which provides motion constraints with proper uncertainty quantification; these constraints are used to update the navigation states in a tightly coupled manner, along with the Doppler velocity measurements. Moreover, we propose a robust keyframe-based matching scheme against the prior map (if available) to bound the accumulated navigation errors and thus provide a radar-based global localization solution with high accuracy. Extensive real-world experimental validations have demonstrated that the proposed radar-aided inertial navigation outperforms state-of-the-art methods in both accuracy and robustness.

Visual-Inertial SLAM as Simple as A, B, VINS

Jun 10, 2024

Abstract:We present AB-VINS, a different kind of visual-inertial SLAM system. Unlike most VINS systems, which only use hand-crafted techniques, AB-VINS makes use of three different deep networks. Instead of estimating sparse feature positions, AB-VINS only estimates the scale and bias parameters (a and b) of monocular depth maps, as well as other terms to correct the depth using multi-view information, which results in a compressed feature state. Despite being an optimization-based system, the main VIO thread of AB-VINS surpasses the efficiency of a state-of-the-art filter-based method while also providing dense depth. While state-of-the-art loop-closing SLAM systems have to relinearize a number of variables linear in the number of keyframes, AB-VINS can perform loop closures while only affecting a constant number of variables. This is due to a novel data structure called the memory tree, in which the keyframe poses are defined relative to each other rather than all in one global frame, allowing for all but a few states to be fixed. AB-VINS is not as accurate as state-of-the-art VINS systems, but it is shown through careful experimentation to be more robust.

Square-Root Inverse Filter-based GNSS-Visual-Inertial Navigation

May 17, 2024

Abstract:While the Global Navigation Satellite System (GNSS) is often used to provide global positioning when available, its intermittency and/or inaccuracy call for fusion with other sensors. In this paper, we develop a novel GNSS-Visual-Inertial Navigation System (GVINS) that fuses visual, inertial, and raw GNSS measurements within the square-root inverse sliding window filtering (SRI-SWF) framework in a tightly coupled fashion, and which is thus termed SRI-GVINS. In particular, for the first time, we deeply fuse the GNSS pseudorange, Doppler shift, single-differenced pseudorange, and double-differenced carrier phase measurements, along with the visual-inertial measurements. Inheriting from the SRI-SWF, the proposed SRI-GVINS gains significant numerical stability and computational efficiency over the state-of-the-art methods. Additionally, we propose to use a filter to sequentially initialize the reference frame transformation until it converges, rather than collecting measurements for batch optimization. We also perform online calibration of GNSS-IMU extrinsic parameters to mitigate the possible extrinsic parameter degradation. The proposed SRI-GVINS is extensively evaluated on our own collected UAV datasets, and the results demonstrate that the proposed method is able to suppress VIO drift in real-time and also show the effectiveness of online GNSS-IMU extrinsic calibration. The experimental validation on the public datasets further reveals that the proposed SRI-GVINS outperforms the state-of-the-art methods in terms of both accuracy and efficiency.

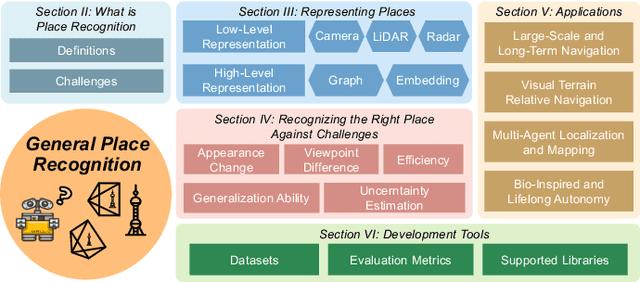

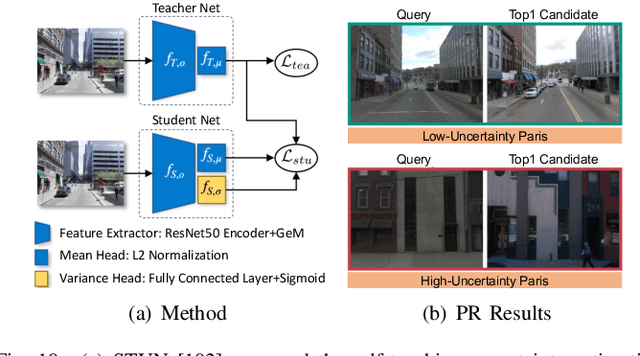

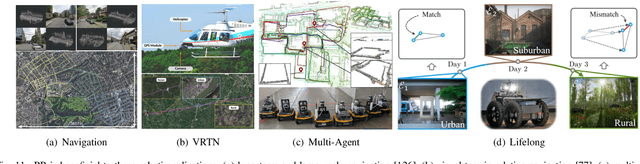

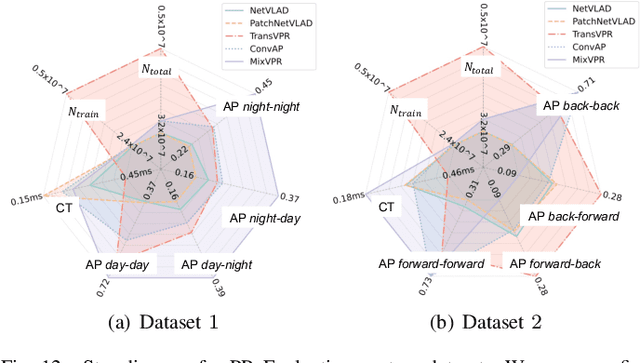

General Place Recognition Survey: Towards Real-World Autonomy

May 08, 2024

Abstract:In the realm of robotics, the quest for achieving real-world autonomy, capable of executing large-scale and long-term operations, has positioned place recognition (PR) as a cornerstone technology. Despite the PR community's remarkable strides over the past two decades, garnering attention from fields like computer vision and robotics, the development of PR methods that sufficiently support real-world robotic systems remains a challenge. This paper aims to bridge this gap by highlighting the crucial role of PR within the framework of Simultaneous Localization and Mapping (SLAM) 2.0. This new phase in robotic navigation calls for scalable, adaptable, and efficient PR solutions by integrating advanced artificial intelligence (AI) technologies. For this goal, we provide a comprehensive review of the current state-of-the-art (SOTA) advancements in PR, alongside the remaining challenges, and underscore its broad applications in robotics. This paper begins with an exploration of PR's formulation and key research challenges. We extensively review the literature, focusing on related methods for place representation and solutions to various PR challenges. Applications showcasing PR's potential in robotics, key PR datasets, and open-source libraries are discussed. We also emphasize our open-source package, aimed at new development and benchmarking for general PR. We conclude with a discussion on PR's future directions, accompanied by a summary of the literature covered and access to our open-source library, available to the robotics community at: https://github.com/MetaSLAM/GPRS.
