"Time": models, code, and papers

Nondestructive Quality Control in Powder Metallurgy using Hyperspectral Imaging

Jul 26, 2022

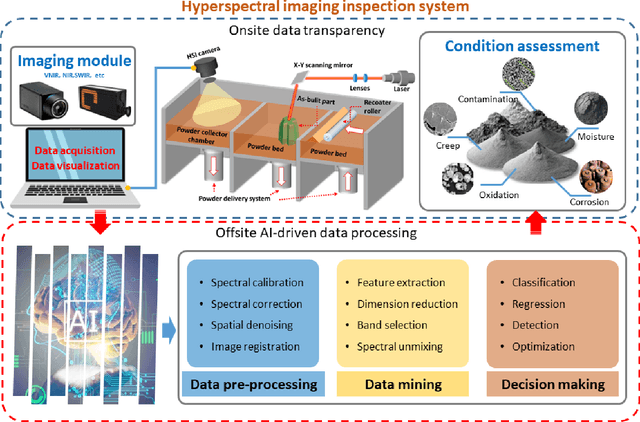

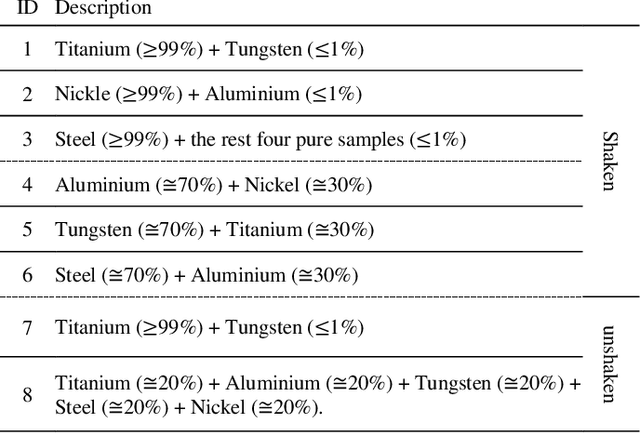

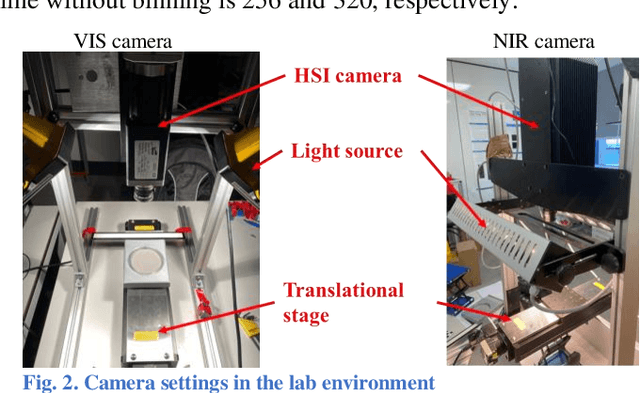

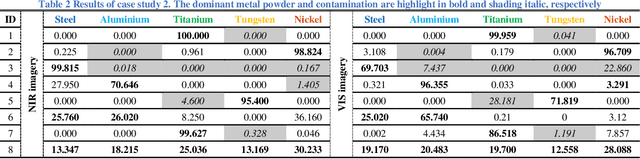

Measuring the purity in the metal powder is critical for preserving the quality of additive manufacturing products. Contamination is one of the most headache problems which can be caused by multiple reasons and lead to the as-built components cracking and malfunctions. Existing methods for metallurgical condition assessment are mostly time-consuming and mainly focus on the physical integrity of structure rather than material composition. Through capturing spectral data from a wide frequency range along with the spatial information, hyperspectral imaging (HSI) can detect minor differences in terms of temperature, moisture and chemical composition. Therefore, HSI can provide a unique way to tackle this challenge. In this paper, with the use of a near-infrared HSI camera, applications of HSI for the non-destructive inspection of metal powders are introduced. Technical assumptions and solutions on three step-by-step case studies are presented in detail, including powder characterization, contamination detection, and band selection analysis. Experimental results have fully demonstrated the great potential of HSI and related AI techniques for NDT of powder metallurgy, especially the potential to satisfy the industrial manufacturing environment.

Handling Structural Mismatches in Real-time Opera Tracking

May 18, 2021

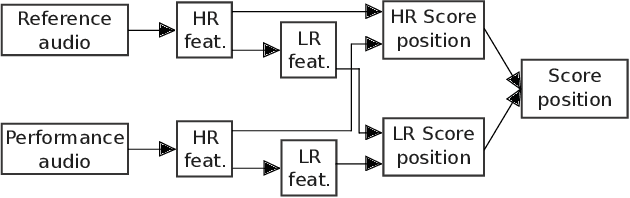

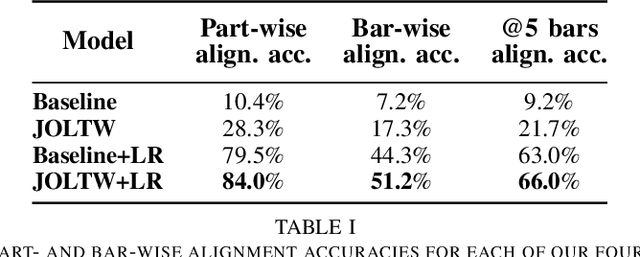

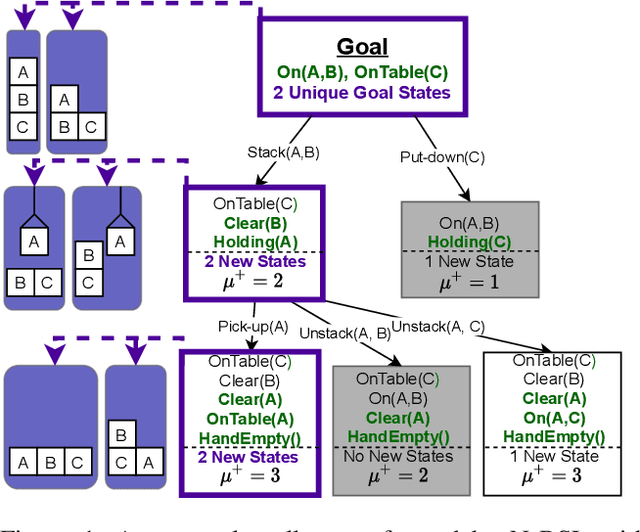

Algorithms for reliable real-time score following in live opera promise a lot of useful applications such as automatic subtitles display, or real-time video cutting in live streaming. Until now, such systems were based on the strong assumption that an opera performance follows the structure of the score linearly. However, this is rarely the case in practice, because of different opera versions and directors' cutting choices. In this paper, we propose a two-level solution to this problem. We introduce a real-time-capable, high-resolution (HR) tracker that can handle jumps or repetitions at specific locations provided to it. We then combine this with an additional low-resolution (LR) tracker that can handle all sorts of mismatches that can occur at any time, with some imprecision, and can re-direct the HR tracker if the latter is `lost' in the score. We show that the combination of the two improves tracking robustness in the presence of strong structural mismatches.

Sampling from Pre-Images to Learn Heuristic Functions for Classical Planning

Jul 07, 2022

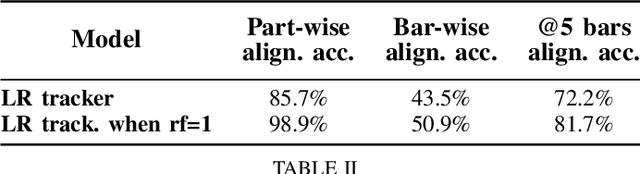

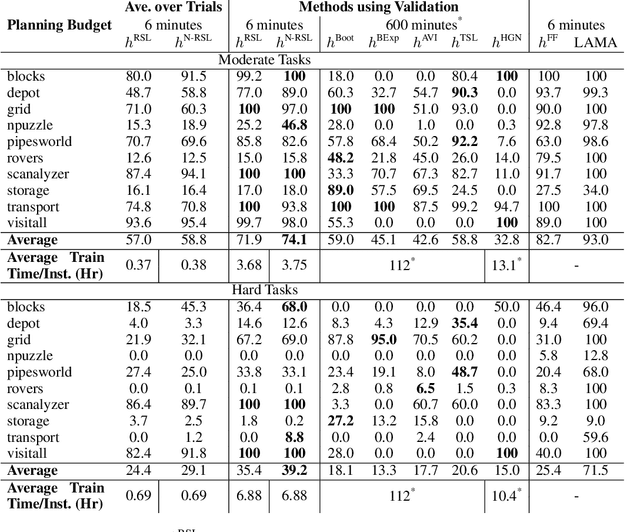

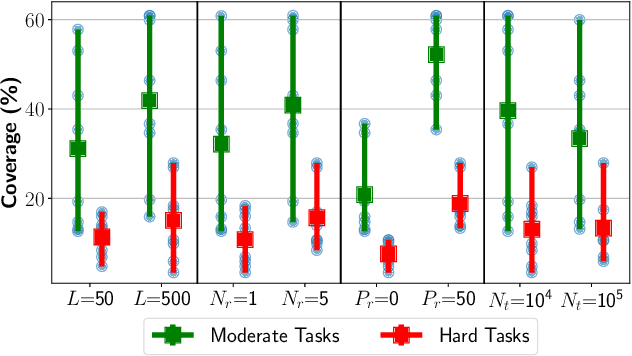

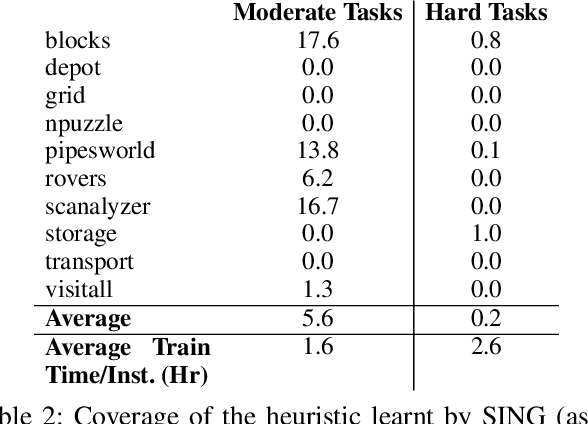

We introduce a new algorithm, Regression based Supervised Learning (RSL), for learning per instance Neural Network (NN) defined heuristic functions for classical planning problems. RSL uses regression to select relevant sets of states at a range of different distances from the goal. RSL then formulates a Supervised Learning problem to obtain the parameters that define the NN heuristic, using the selected states labeled with exact or estimated distances to goal states. Our experimental study shows that RSL outperforms, in terms of coverage, previous classical planning NN heuristics functions while requiring two orders of magnitude less training time.

A Temporal Fusion Transformer for Long-term Explainable Prediction of Emergency Department Overcrowding

Jul 01, 2022

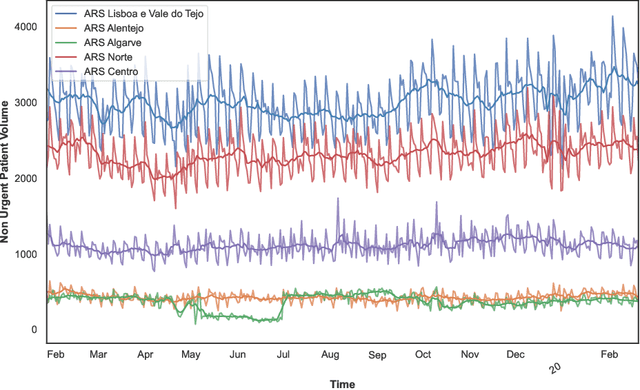

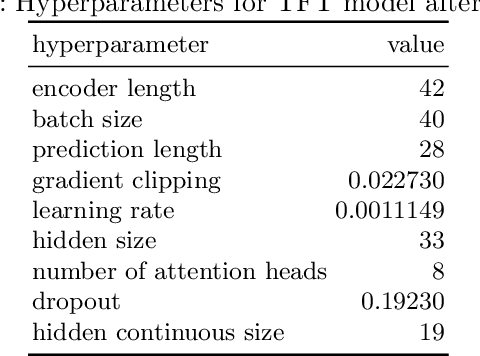

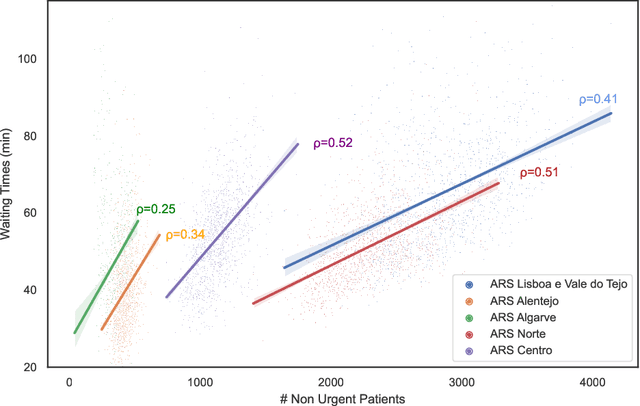

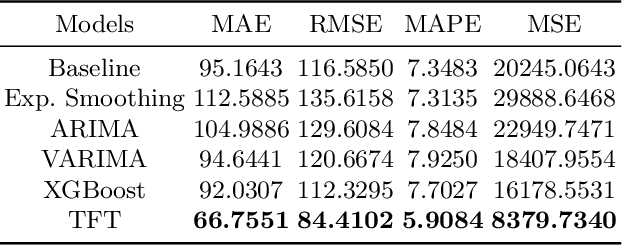

Emergency Departments (EDs) are a fundamental element of the Portuguese National Health Service, serving as an entry point for users with diverse and very serious medical problems. Due to the inherent characteristics of the ED; forecasting the number of patients using the services is particularly challenging. And a mismatch between the affluence and the number of medical professionals can lead to a decrease in the quality of the services provided and create problems that have repercussions for the entire hospital, with the requisition of health care workers from other departments and the postponement of surgeries. ED overcrowding is driven, in part, by non-urgent patients, that resort to emergency services despite not having a medical emergency and which represent almost half of the total number of daily patients. This paper describes a novel deep learning architecture, the Temporal Fusion Transformer, that uses calendar and time-series covariates to forecast prediction intervals and point predictions for a 4 week period. We have concluded that patient volume can be forecasted with a Mean Absolute Percentage Error (MAPE) of 5.90% for Portugal's Health Regional Areas (HRA) and a Root Mean Squared Error (RMSE) of 84.4102 people/day. The paper shows empirical evidence supporting the use of a multivariate approach with static and time-series covariates while surpassing other models commonly found in the literature.

Temporal Latent Bottleneck: Synthesis of Fast and Slow Processing Mechanisms in Sequence Learning

May 30, 2022

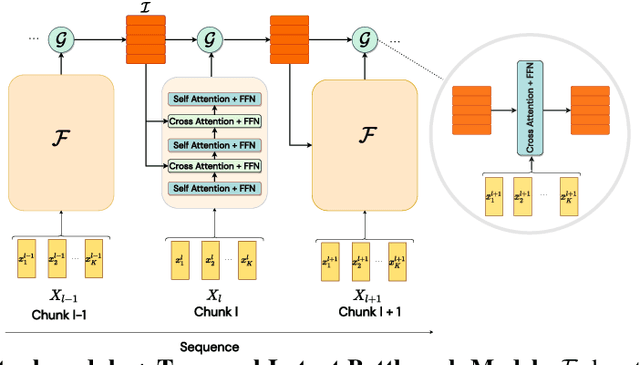

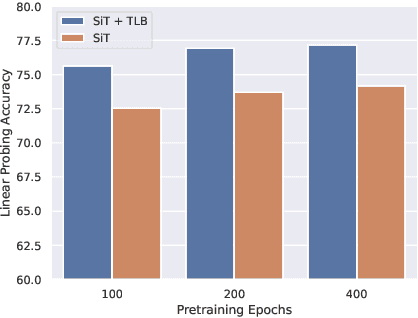

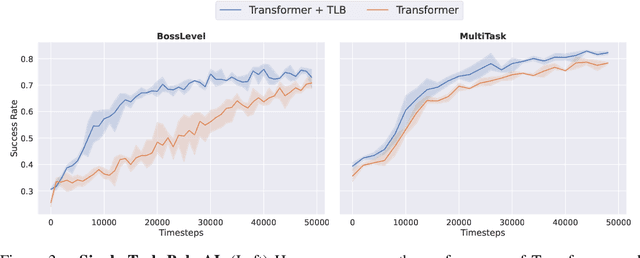

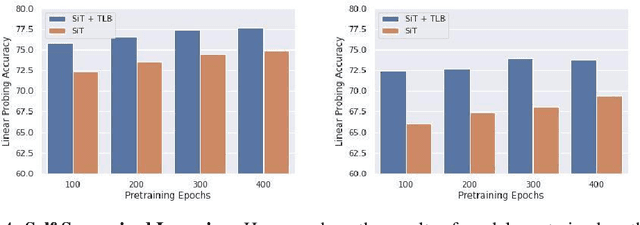

Recurrent neural networks have a strong inductive bias towards learning temporally compressed representations, as the entire history of a sequence is represented by a single vector. By contrast, Transformers have little inductive bias towards learning temporally compressed representations, as they allow for attention over all previously computed elements in a sequence. Having a more compressed representation of a sequence may be beneficial for generalization, as a high-level representation may be more easily re-used and re-purposed and will contain fewer irrelevant details. At the same time, excessive compression of representations comes at the cost of expressiveness. We propose a solution which divides computation into two streams. A slow stream that is recurrent in nature aims to learn a specialized and compressed representation, by forcing chunks of $K$ time steps into a single representation which is divided into multiple vectors. At the same time, a fast stream is parameterized as a Transformer to process chunks consisting of $K$ time-steps conditioned on the information in the slow-stream. In the proposed approach we hope to gain the expressiveness of the Transformer, while encouraging better compression and structuring of representations in the slow stream. We show the benefits of the proposed method in terms of improved sample efficiency and generalization performance as compared to various competitive baselines for visual perception and sequential decision making tasks.

Reactive Navigation of an Unmanned Aerial Vehicle with Perception-based Obstacle Avoidance Constraints

Jul 04, 2022

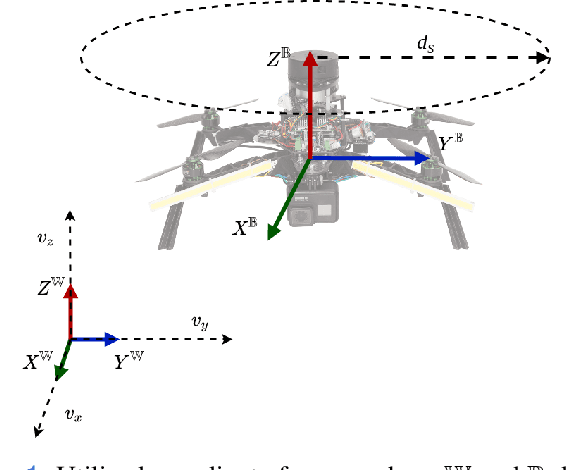

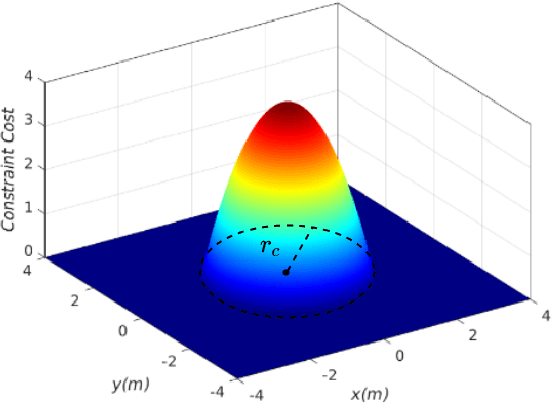

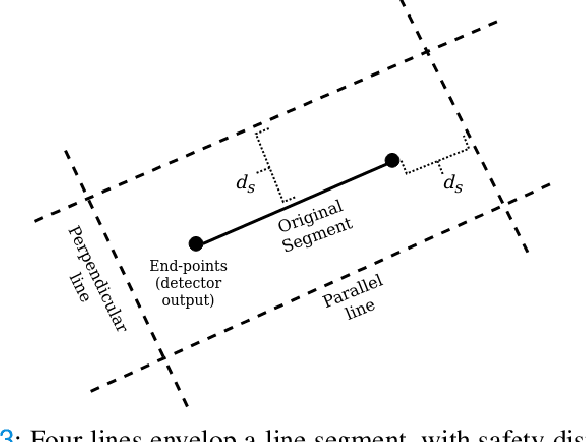

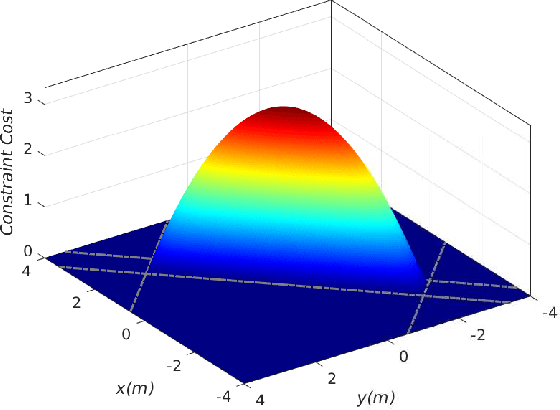

In this article we propose a reactive constrained navigation scheme, with embedded obstacles avoidance for an Unmanned Aerial Vehicle (UAV), for enabling navigation in obstacle-dense environments. The proposed navigation architecture is based on Nonlinear Model Predictive Control (NMPC), and utilizes an on-board 2D LiDAR to detect obstacles and translate online the key geometric information of the environment into parametric constraints for the NMPC that constrain the available position-space for the UAV. This article focuses also on the real-world implementation and experimental validation of the proposed reactive navigation scheme, and it is applied in multiple challenging laboratory experiments, where we also conduct comparisons with relevant methods of reactive obstacle avoidance. The solver utilized in the proposed approach is the Optimization Engine (OpEn) and the Proximal Averaged Newton for Optimal Control (PANOC) algorithm, where a penalty method is applied to properly consider obstacles and input constraints during the navigation task. The proposed novel scheme allows for fast solutions, while using limited on-board computational power, that is a required feature for the overall closed loop performance of an UAV and is applied in multiple real-time scenarios. The combination of built-in obstacle avoidance and real-time applicability makes the proposed reactive constrained navigation scheme an elegant framework for UAVs that is able to perform fast nonlinear control, local path-planning and obstacle avoidance, all embedded in the control layer.

* 16 pages, 28 figures

VacciNet: Towards a Smart Framework for Learning the Distribution Chain Optimization of Vaccines for a Pandemic

Aug 01, 2022

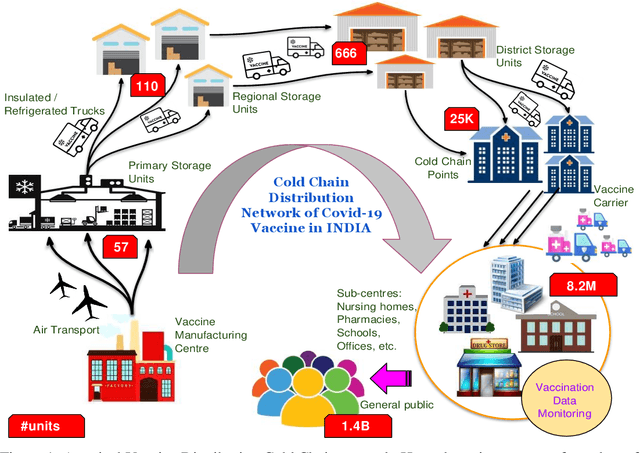

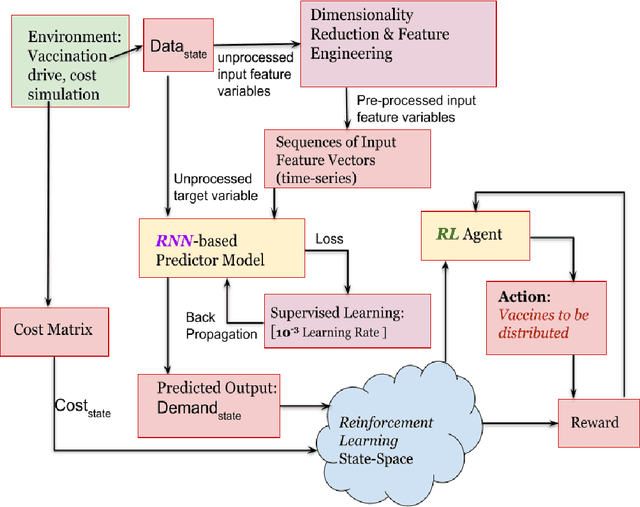

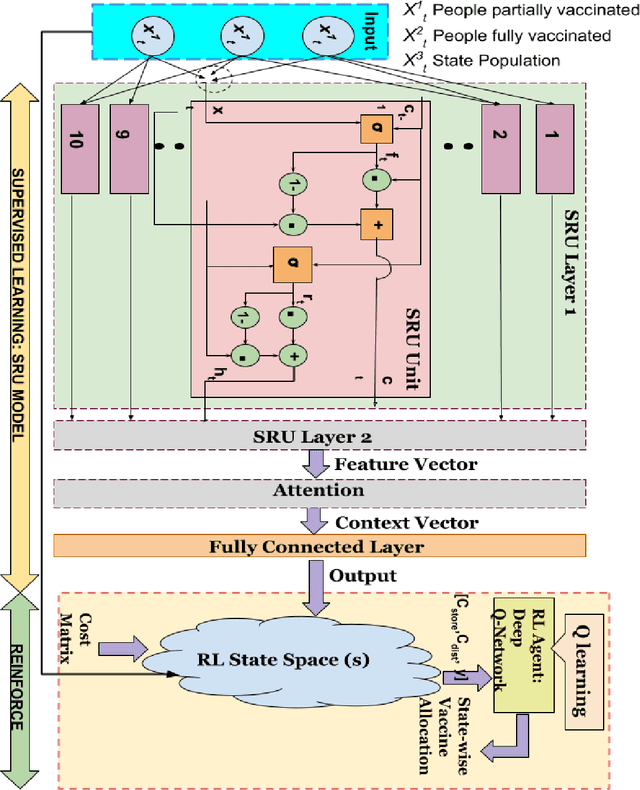

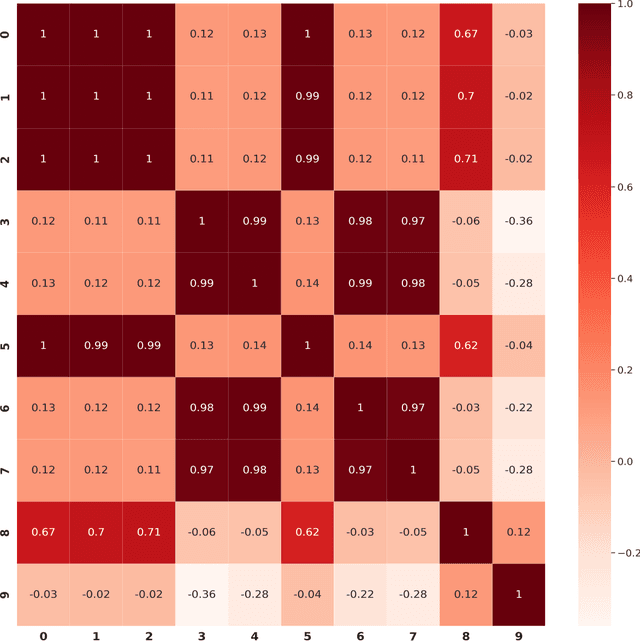

Vaccinations against viruses have always been the need of the hour since long past. However, it is hard to efficiently distribute the vaccines (on time) to all the corners of a country, especially during a pandemic. Considering the vastness of the population, diversified communities, and demands of a smart society, it is an important task to optimize the vaccine distribution strategy in any country/state effectively. Although there is a profusion of data (Big Data) from various vaccine administration sites that can be mined to gain valuable insights about mass vaccination drives, very few attempts has been made towards revolutionizing the traditional mass vaccination campaigns to mitigate the socio-economic crises of pandemic afflicted countries. In this paper, we bridge this gap in studies and experimentation. We collect daily vaccination data which is publicly available and carefully analyze it to generate meaning-full insights and predictions. We put forward a novel framework leveraging Supervised Learning and Reinforcement Learning (RL) which we call VacciNet, that is capable of learning to predict the demand of vaccination in a state of a country as well as suggest optimal vaccine allocation in the state for minimum cost of procurement and supply. At the present, our framework is trained and tested with vaccination data of the USA.

Lottery Ticket Hypothesis for Spiking Neural Networks

Jul 04, 2022

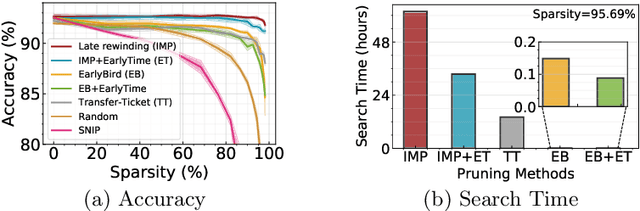

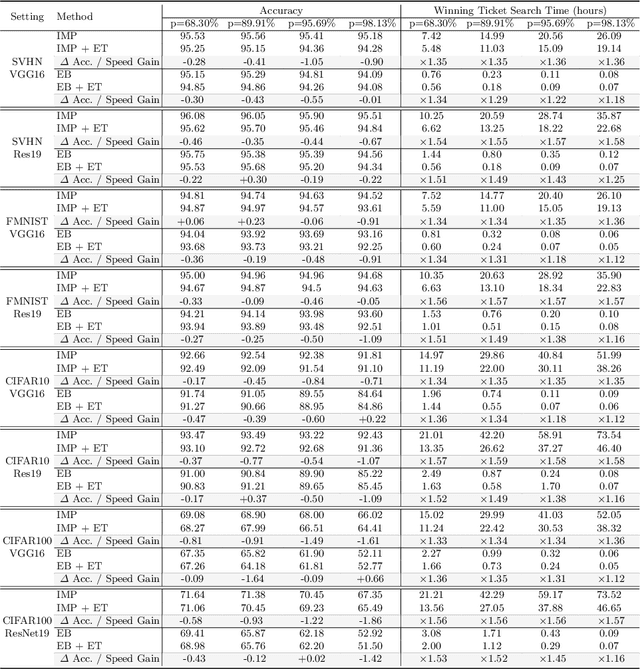

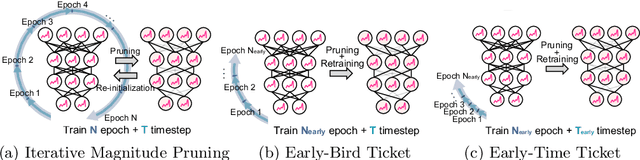

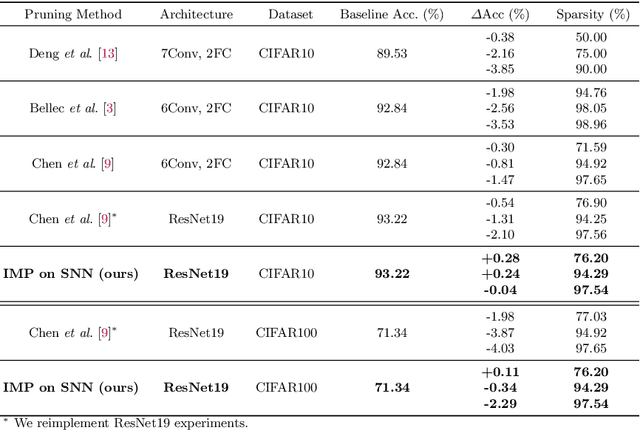

Spiking Neural Networks (SNNs) have recently emerged as a new generation of low-power deep neural networks where binary spikes convey information across multiple timesteps. Pruning for SNNs is highly important as they become deployed on a resource-constraint mobile/edge device. The previous SNN pruning works focus on shallow SNNs (2~6 layers), however, deeper SNNs (>16 layers) are proposed by state-of-the-art SNN works, which is difficult to be compatible with the current pruning work. To scale up a pruning technique toward deep SNNs, we investigate Lottery Ticket Hypothesis (LTH) which states that dense networks contain smaller subnetworks (i.e., winning tickets) that achieve comparable performance to the dense networks. Our studies on LTH reveal that the winning tickets consistently exist in deep SNNs across various datasets and architectures, providing up to 97% sparsity without huge performance degradation. However, the iterative searching process of LTH brings a huge training computational cost when combined with the multiple timesteps of SNNs. To alleviate such heavy searching cost, we propose Early-Time (ET) ticket where we find the important weight connectivity from a smaller number of timesteps. The proposed ET ticket can be seamlessly combined with common pruning techniques for finding winning tickets, such as Iterative Magnitude Pruning (IMP) and Early-Bird (EB) tickets. Our experiment results show that the proposed ET ticket reduces search time by up to 38% compared to IMP or EB methods.

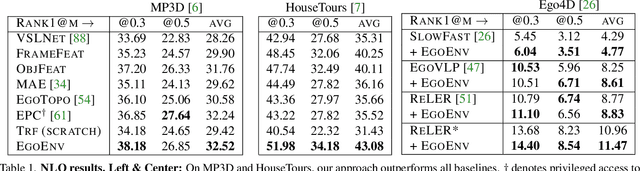

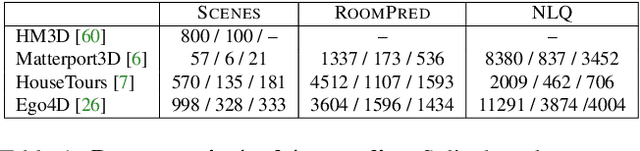

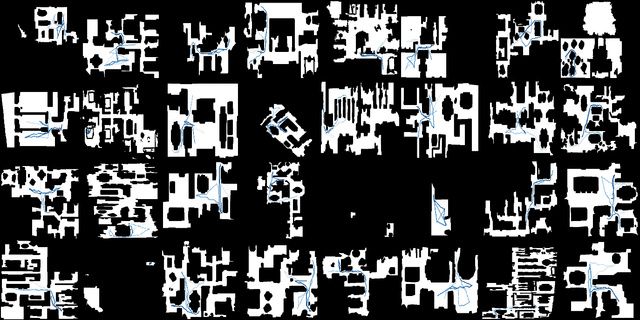

Egocentric scene context for human-centric environment understanding from video

Jul 22, 2022

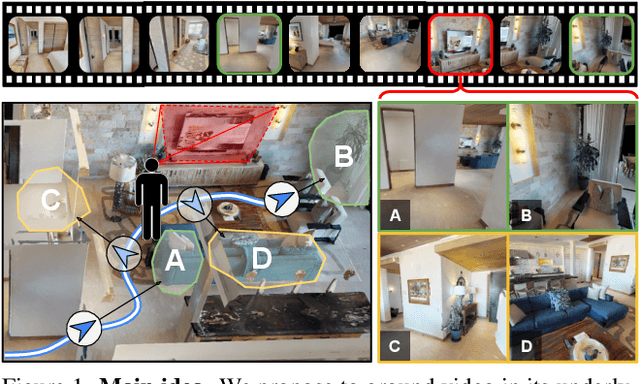

First-person video highlights a camera-wearer's activities in the context of their persistent environment. However, current video understanding approaches reason over visual features from short video clips that are detached from the underlying physical space and only capture what is directly seen. We present an approach that links egocentric video and camera pose over time by learning representations that are predictive of the camera-wearer's (potentially unseen) local surroundings to facilitate human-centric environment understanding. We train such models using videos from agents in simulated 3D environments where the environment is fully observable, and test them on real-world videos of house tours from unseen environments. We show that by grounding videos in their physical environment, our models surpass traditional scene classification models at predicting which room a camera-wearer is in (where frame-level information is insufficient), and can leverage this grounding to localize video moments corresponding to environment-centric queries, outperforming prior methods. Project page: http://vision.cs.utexas.edu/projects/ego-scene-context/

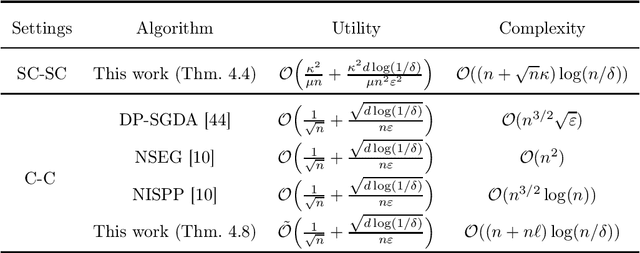

Bring Your Own Algorithm for Optimal Differentially Private Stochastic Minimax Optimization

Jun 01, 2022

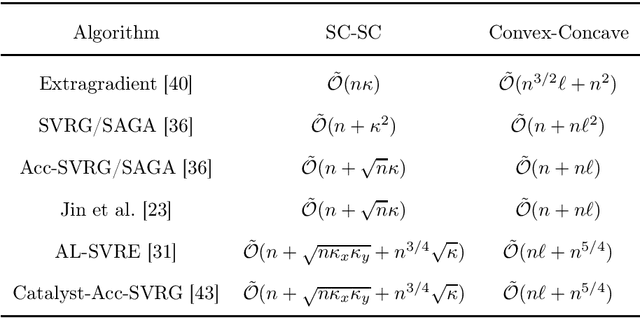

We study differentially private (DP) algorithms for smooth stochastic minimax optimization, with stochastic minimization as a byproduct. The holy grail of these settings is to guarantee the optimal trade-off between the privacy and the excess population loss, using an algorithm with a linear time-complexity in the number of training samples. We provide a general framework for solving differentially private stochastic minimax optimization (DP-SMO) problems, which enables the practitioners to bring their own base optimization algorithm and use it as a black-box to obtain the near-optimal privacy-loss trade-off. Our framework is inspired from the recently proposed Phased-ERM method [20] for nonsmooth differentially private stochastic convex optimization (DP-SCO), which exploits the stability of the empirical risk minimization (ERM) for the privacy guarantee. The flexibility of our approach enables us to sidestep the requirement that the base algorithm needs to have bounded sensitivity, and allows the use of sophisticated variance-reduced accelerated methods to achieve near-linear time-complexity. To the best of our knowledge, these are the first linear-time optimal algorithms, up to logarithmic factors, for smooth DP-SMO when the objective is (strongly-)convex-(strongly-)concave. Additionally, based on our flexible framework, we derive a new family of near-linear time algorithms for smooth DP-SCO with optimal privacy-loss trade-offs for a wider range of smoothness parameters compared to previous algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge