"Information": models, code, and papers

Learning to Fuse Monocular and Multi-view Cues for Multi-frame Depth Estimation in Dynamic Scenes

Apr 18, 2023

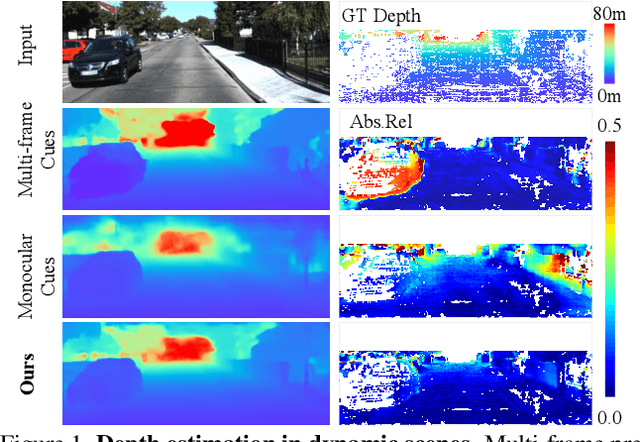

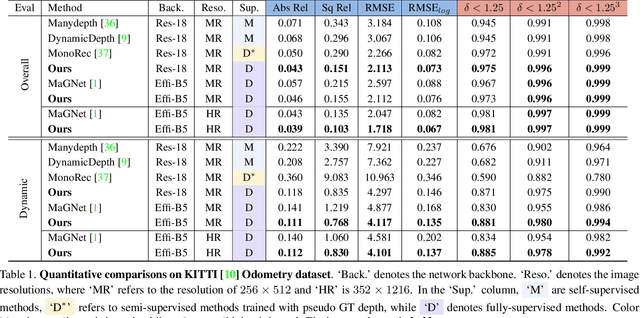

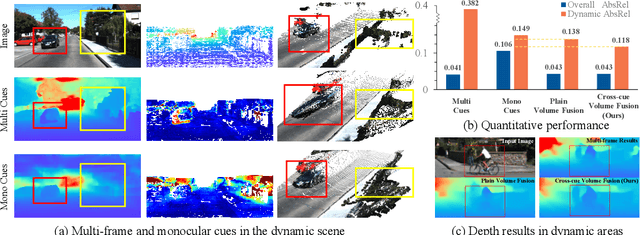

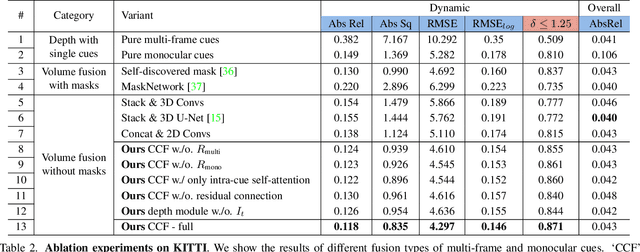

Multi-frame depth estimation generally achieves high accuracy by relying on multi-view geometric consistency. When applied in dynamic scenes, e.g., autonomous driving, this consistency is usually violated in the dynamic areas, leading to corrupted estimations. Many multi-frame methods handle dynamic areas by identifying them with explicit masks and compensating the multi-view cues with monocular cues represented as local monocular depth or features. The improvements are limited due to the uncontrolled quality of the masks and the underutilized benefits of the fusion of the two types of cues. In this paper, we propose a novel method to learn to fuse the multi-view and monocular cues encoded as volumes without needing heuristically crafted masks. As unveiled in our analyses, the multi-view cues capture more accurate geometric information in static areas, and the monocular cues capture more useful contexts in dynamic areas. To let the geometric perception learned from multi-view cues in static areas propagate to the monocular representation in dynamic areas and let monocular cues enhance the representation of the multi-view cost volume, we propose a cross-cue fusion (CCF) module, which includes cross-cue attention (CCA) to encode the spatially non-local relative intra-relations from each source to enhance the representation of the other. Experiments on real-world datasets demonstrate the effectiveness and generalization ability of the proposed method.

Semantic Ray: Learning a Generalizable Semantic Field with Cross-Reprojection Attention

Mar 23, 2023

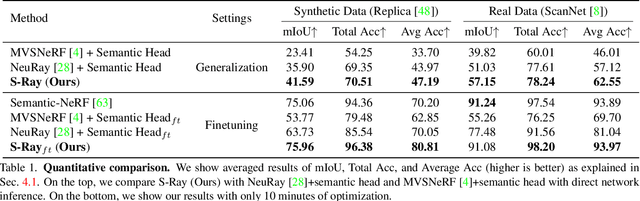

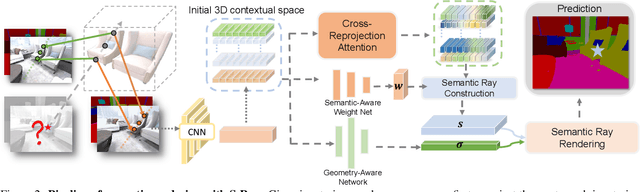

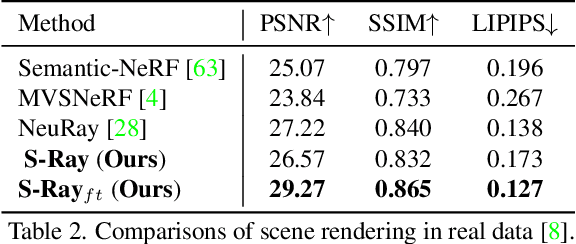

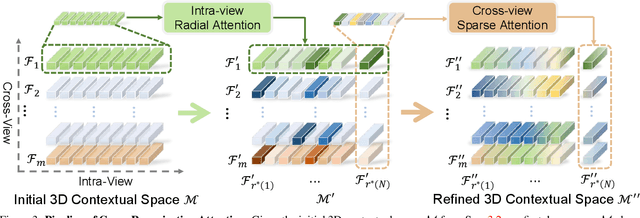

In this paper, we aim to learn a semantic radiance field from multiple scenes that is accurate, efficient and generalizable. While most existing NeRFs target the tasks of neural scene rendering, image synthesis and multi-view reconstruction, there are a few attempts, such as Semantic-NeRF, that explore learning high-level semantic understanding with the NeRF structure. However, Semantic-NeRF simultaneously learns color and semantic label from a single ray with multiple heads, where the single ray fails to provide rich semantic information. As a result, Semantic-NeRF relies on positional encoding and needs to train one specific model for each scene. To address this, we propose Semantic Ray (S-Ray) to fully exploit semantic information along the ray direction from its multi-view reprojections. As directly performing dense attention over multi-view reprojected rays would suffer from heavy computational cost, we design a Cross-Reprojection Attention module with consecutive intra-view radial and cross-view sparse attentions, which decomposes contextual information along reprojected rays and across multiple views and then collects dense connections by stacking the modules. Experiments show that our S-Ray is able to learn from multiple scenes, and it presents strong generalization ability to adapt to unseen scenes.

NaviSTAR: Socially Aware Robot Navigation with Hybrid Spatio-Temporal Graph Transformer and Preference Learning

Apr 12, 2023

Developing robotic technologies for use in human society requires ensuring the safety of robots' navigation behaviors while adhering to pedestrians' expectations and social norms. However, maintaining real-time communication between robots and pedestrians to avoid collisions can be challenging. To address these challenges, we propose a novel socially-aware navigation benchmark called NaviSTAR, which utilizes a hybrid Spatio-Temporal grAph tRansformer (STAR) to understand interactions in human-rich environments, fusing potential crowd multi-modal information. We leverage an off-policy reinforcement learning algorithm with preference learning to train a policy and a reward function network with supervisor guidance. Additionally, we design a social score function to evaluate the overall performance of social navigation. For comparison, we train and test our algorithm and other state-of-the-art methods in both simulator and real-world scenarios independently. Our results show that NaviSTAR outperforms previous methods. The source code and experiment videos of this work are available at: https://sites.google.com/view/san-navistar

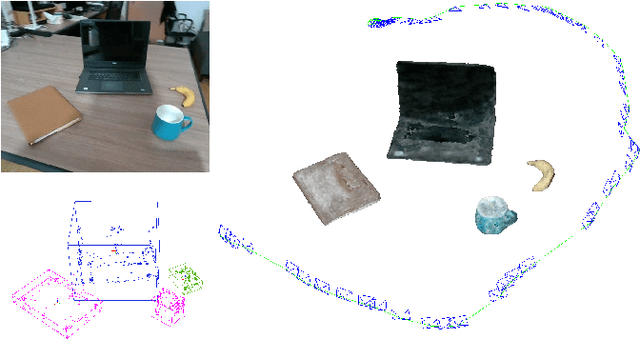

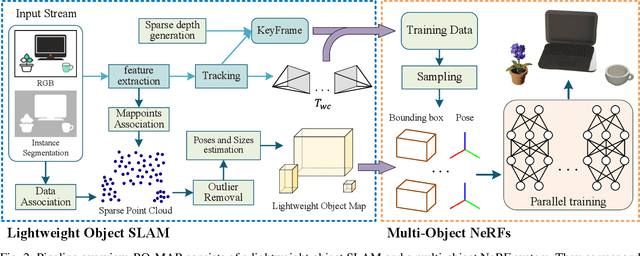

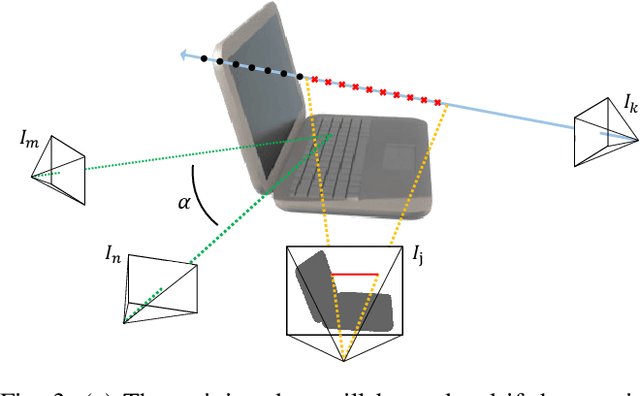

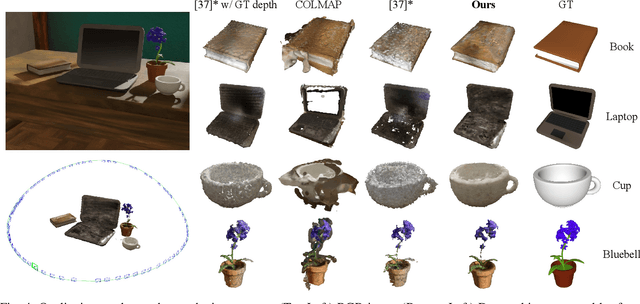

RO-MAP: Real-Time Multi-Object Mapping with Neural Radiance Fields

Apr 12, 2023

Accurate perception of objects in the environment is important for improving the scene understanding capability of SLAM systems. In robotic and augmented reality applications, object maps with semantic and metric information show attractive advantages. In this paper, we present RO-MAP, a novel multi-object mapping pipeline that does not rely on 3D priors. Given only monocular input, we use neural radiance fields to represent objects and couple them with a lightweight object SLAM based on multi-view geometry, to simultaneously localize objects and implicitly learn their dense geometry. We create separate implicit models for each detected object and train them dynamically and in parallel as new observations are added. Experiments on synthetic and real-world datasets demonstrate that our method can generate semantic object maps with shape reconstruction and is competitive with offline methods while achieving real-time performance (25 Hz). The code and dataset will be available at: https://github.com/XiaoHan-Git/RO-MAP

Measuring Normative and Descriptive Biases in Language Models Using Census Data

Apr 12, 2023

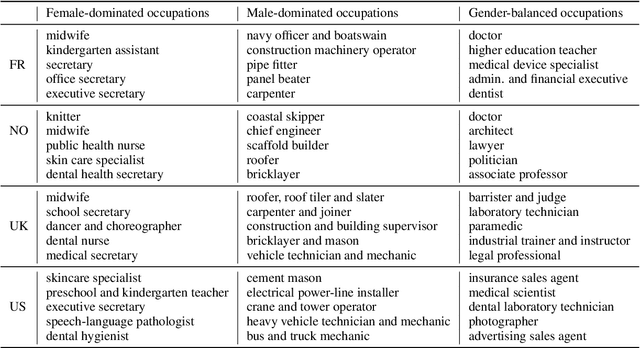

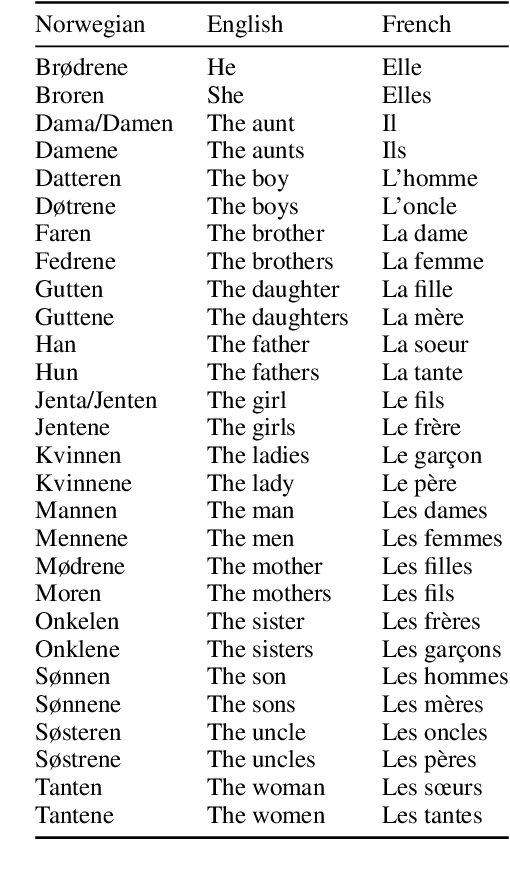

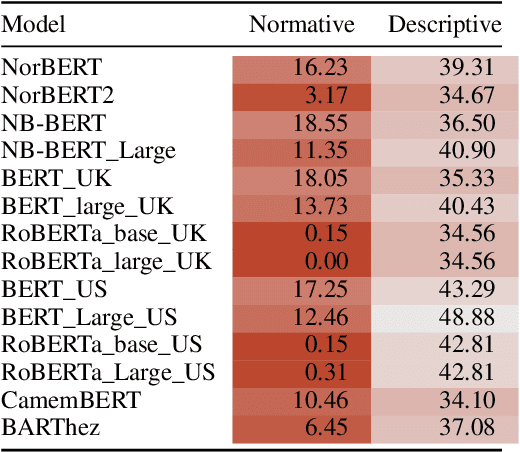

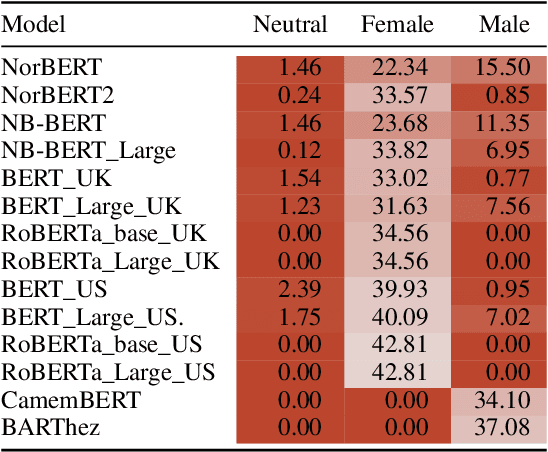

In this paper, we investigate how distributions of occupations with respect to gender are reflected in pre-trained language models. Such distributions are not always aligned to normative ideals, nor do they necessarily reflect a descriptive assessment of reality. We introduce an approach for measuring to what degree pre-trained language models are aligned to normative and descriptive occupational distributions. To this end, we use official demographic information about gender-occupation distributions provided by the national statistics agencies of France, Norway, the United Kingdom, and the United States. We manually generate template-based sentences combining gendered pronouns and nouns with occupations, and subsequently probe a selection of ten language models covering the English, French, and Norwegian languages. The scoring system we introduce in this work is language independent, and can be used on any combination of template-based sentences, occupations, and languages. The approach could also be extended to other dimensions of national census data and other demographic variables.
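As a rough illustration of the template-based probing described in this abstract, the sketch below combines gendered subjects with occupations to produce probe sentences. The templates, word lists, and the `build_probes` helper are hypothetical and are not taken from the paper; the paper's scoring system is not reproduced here.

```python
from itertools import product

# Hypothetical templates and word lists, for illustration only.
TEMPLATES = ["{subject} is a {occupation}.", "{subject} works as a {occupation}."]
SUBJECTS = {"female": ["She", "The woman"], "male": ["He", "The man"]}
OCCUPATIONS = ["nurse", "carpenter"]


def build_probes(templates, subjects, occupations):
    """Return one record per (template, occupation, gender, subject) combination."""
    probes = []
    for template, occupation in product(templates, occupations):
        for gender, words in subjects.items():
            for subject in words:
                probes.append({
                    "gender": gender,
                    "occupation": occupation,
                    "sentence": template.format(subject=subject, occupation=occupation),
                })
    return probes


probes = build_probes(TEMPLATES, SUBJECTS, OCCUPATIONS)
for probe in probes[:4]:
    print(probe["gender"], "|", probe["sentence"])
```

Each record keeps the gender and occupation next to the sentence, so per-occupation model scores could later be compared against census proportions.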

Literature Review: Computer Vision Applications in Transportation Logistics and Warehousing

Apr 12, 2023

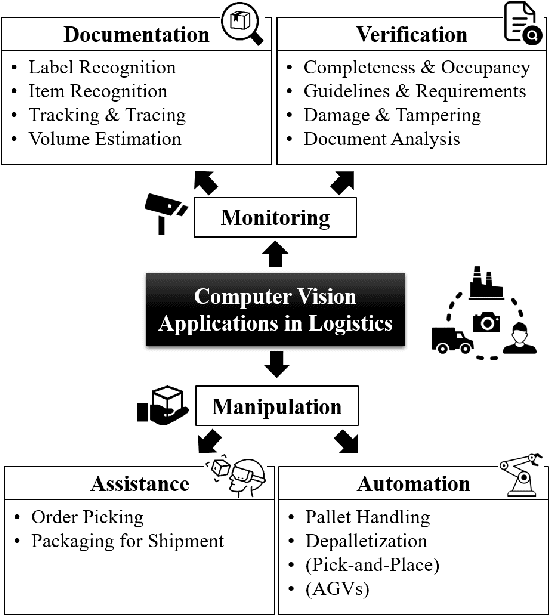

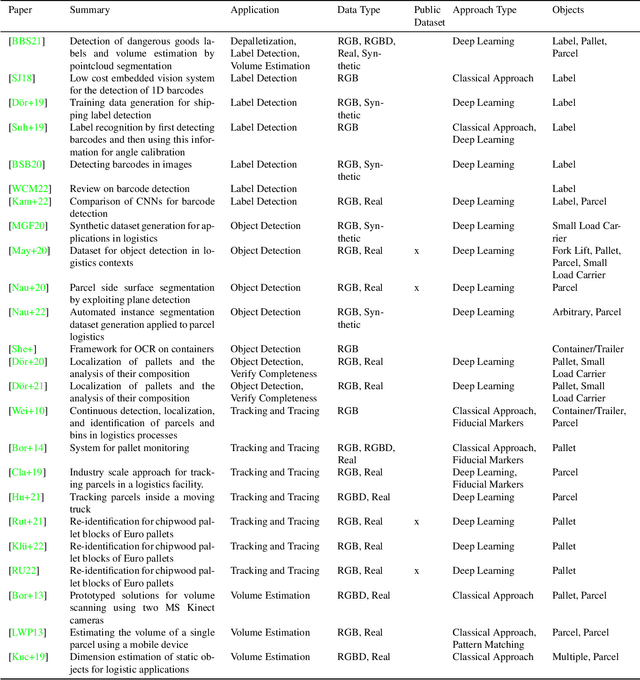

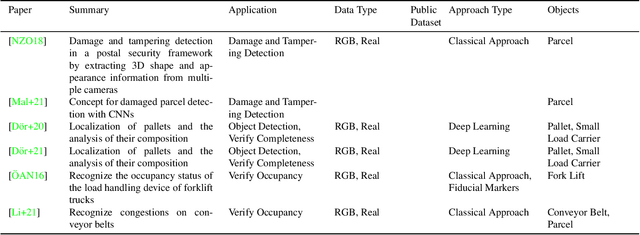

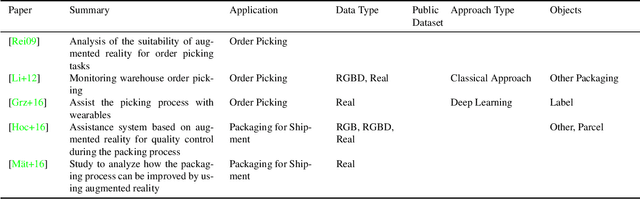

Computer vision applications in transportation logistics and warehousing have a huge potential for process automation. We present a structured literature review on research in the field to help leverage this potential. All literature is categorized w.r.t. the application, i.e., the task it tackles, and w.r.t. the computer vision techniques that are used. Regarding applications, we subdivide the literature into two areas: monitoring, i.e., observing and retrieving relevant information from the environment, and manipulation, where approaches are used to analyze and interact with the environment. In addition, we point out directions for future research and link to recent developments in computer vision that are suitable for application in logistics. Finally, we present an overview of existing datasets and industrial solutions. We conclude that while many research areas have already been investigated, there is still huge potential for future research. The results of our analysis are also available online at https://a-nau.github.io/cv-in-logistics.

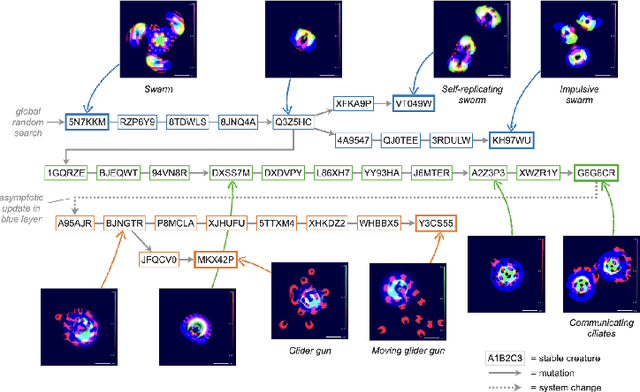

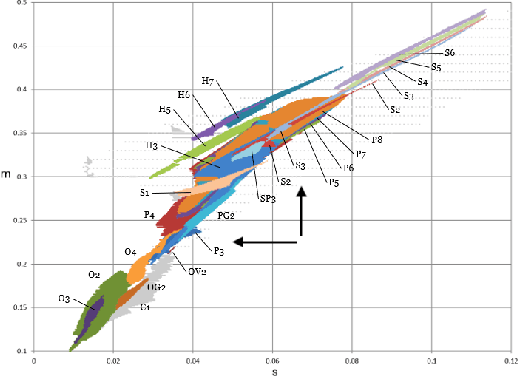

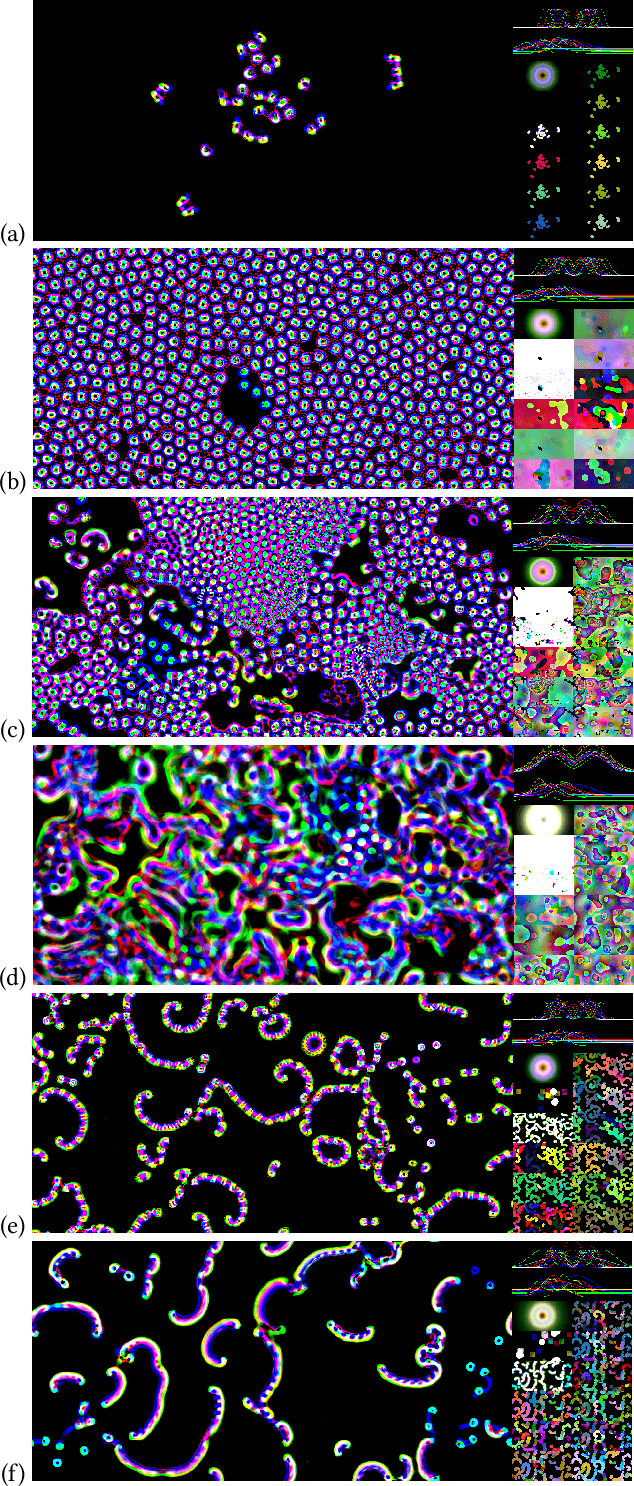

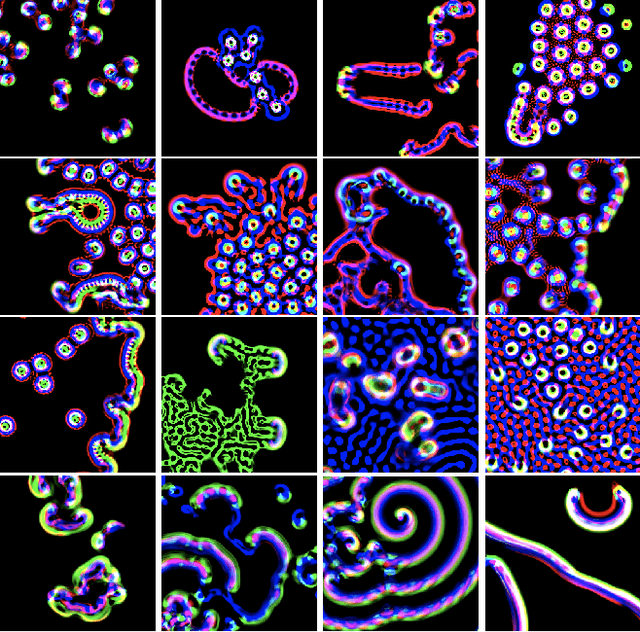

Towards Large-Scale Simulations of Open-Ended Evolution in Continuous Cellular Automata

Apr 12, 2023

Inspired by biological and cultural evolution, there have been many attempts to explore and elucidate the necessary conditions for open-endedness in artificial intelligence and artificial life. Using a continuous cellular automaton called Lenia as the base system, we built large-scale evolutionary simulations using the parallel computing framework JAX, in order to achieve the goal of never-ending evolution of self-organizing patterns. We report a number of system design choices, including (1) implicit implementation of genetic operators, such as reproduction by pattern self-replication, and selection by differential existential success; (2) localization of genetic information; and (3) algorithms for dynamic maintenance of the localized genotypes and translation to phenotypes. Simulation results tend to go through a phase of diversity and creativity, then gradually converge to domination by fast-expanding patterns, presumably an optimal solution under the current design. Based on our experimentation, we propose several factors that may further facilitate open-ended evolution, such as virtual environment design, mass conservation, and energy constraints.
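For readers unfamiliar with the base system, the following is a minimal sketch of a Lenia-style update in one dimension: convolve the field with a normalized kernel, pass the result through a growth mapping, and clip to [0, 1]. It is plain Python rather than JAX, and the 1D grid, the Gaussian-bump kernel shape, and the parameter values (`mu`, `sigma`, `dt`, `radius`) are assumptions for illustration, not the paper's configuration. None of the paper's genetic mechanisms are modeled.

```python
import math


def growth(u, mu=0.15, sigma=0.015):
    """Gaussian growth mapping: +1 when u equals mu, approaching -1 far from mu."""
    return 2.0 * math.exp(-((u - mu) ** 2) / (2.0 * sigma ** 2)) - 1.0


def ring_kernel(radius):
    """Normalized bump kernel over offsets -radius..radius, peaking at half the radius.

    The bump shape is an assumption chosen for simplicity.
    """
    weights = {}
    for offset in range(-radius, radius + 1):
        if offset == 0:
            weights[offset] = 0.0
            continue
        r = abs(offset) / radius
        weights[offset] = math.exp(-((r - 0.5) ** 2) / (2.0 * 0.15 ** 2))
    total = sum(weights.values())
    return {offset: w / total for offset, w in weights.items()}


def step(field, kernel, dt=0.1):
    """One update on a circular 1D field: A <- clip(A + dt * G(K * A), 0, 1)."""
    n = len(field)
    new_field = []
    for i in range(n):
        u = sum(w * field[(i + offset) % n] for offset, w in kernel.items())
        new_field.append(min(1.0, max(0.0, field[i] + dt * growth(u))))
    return new_field


kernel = ring_kernel(radius=5)
field = [0.0] * 32
field[14:18] = [0.4, 0.8, 0.8, 0.4]  # small initial blob
for _ in range(10):
    field = step(field, kernel)
print([round(v, 2) for v in field])
```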

Multi-step Jailbreaking Privacy Attacks on ChatGPT

Apr 11, 2023

With the rapid progress of large language models (LLMs), many downstream NLP tasks can be well solved given good prompts. Though model developers and researchers work hard on dialog safety to avoid generating harmful content from LLMs, it is still challenging to steer AI-generated content (AIGC) for the human good. As powerful LLMs are devouring existing text data from various domains (e.g., GPT-3 is trained on 45 TB of text), it is natural to ask whether private information is included in the training data and what privacy threats these LLMs and their downstream applications can bring. In this paper, we study the privacy threats from OpenAI's model APIs and New Bing enhanced by ChatGPT and show that application-integrated LLMs may cause more severe privacy threats than ever before. To this end, we conduct extensive experiments to support our claims and discuss LLMs' privacy implications.

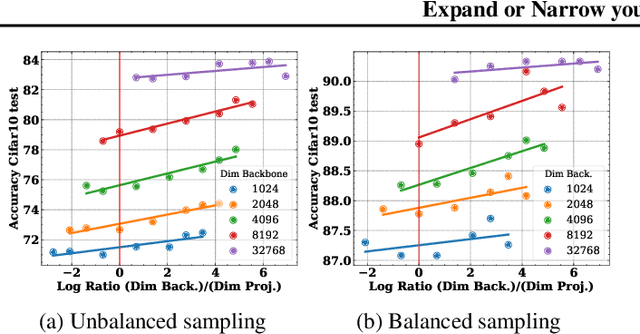

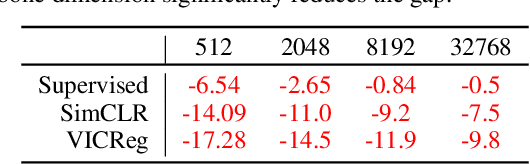

A surprisingly simple technique to control the pretraining bias for better transfer: Expand or Narrow your representation

Apr 11, 2023

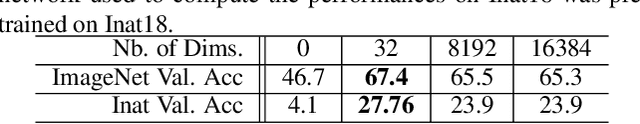

Self-Supervised Learning (SSL) models rely on a pretext task to learn representations. Because this pretext task differs from the downstream tasks used to evaluate the performance of these models, there is an inherent misalignment or pretraining bias. A commonly used trick in SSL, shown to make deep networks more robust to such bias, is the addition of a small projector (usually a 2 or 3 layer multi-layer perceptron) on top of a backbone network during training. In contrast to previous work that studied the impact of the projector architecture, we here focus on a simpler, yet overlooked lever to control the information in the backbone representation. We show that merely changing its dimensionality -- by changing only the size of the backbone's very last block -- is a remarkably effective technique to mitigate the pretraining bias. It significantly improves downstream transfer performance for both Self-Supervised and Supervised pretrained models.

Sentence-Level Relation Extraction via Contrastive Learning with Descriptive Relation Prompts

Apr 11, 2023

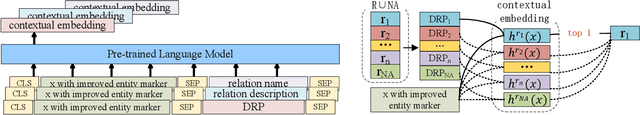

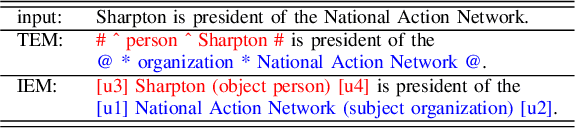

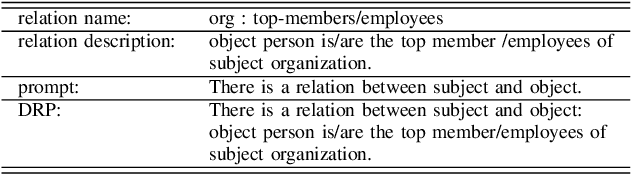

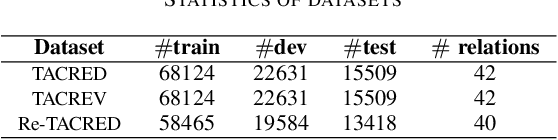

Sentence-level relation extraction aims to identify the relation between two entities for a given sentence. Existing works mostly focus on obtaining a better entity representation and adopting a multi-label classifier for relation extraction. A major limitation of these works is that they ignore background relational knowledge and the interrelation between entity types and candidate relations. In this work, we propose a new paradigm, Contrastive Learning with Descriptive Relation Prompts (CTL-DRP), to jointly consider entity information, relational knowledge and entity type restrictions. In particular, we introduce an improved entity marker and descriptive relation prompts when generating contextual embeddings, and utilize contrastive learning to rank the restricted candidate relations. CTL-DRP obtains a competitive F1-score of 76.7% on TACRED. Furthermore, the newly presented paradigm achieves F1-scores of 85.8% and 91.6% on TACREV and Re-TACRED respectively, both of which are state-of-the-art.
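The idea of ranking type-restricted candidate relations can be sketched as follows: filter relations by the entity-type pair, then order the survivors by similarity between a sentence embedding and each relation-prompt embedding. This is only an illustration of the inference-time ranking step under assumed inputs; the vectors are toy values, the `rank_relations` helper and the type table are hypothetical, and cosine similarity stands in for whatever scoring the trained model actually uses.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def rank_relations(sentence_vec, prompt_vecs, allowed_types, entity_types):
    """Keep relations whose type table admits entity_types, then sort by similarity."""
    candidates = [rel for rel, pairs in allowed_types.items() if entity_types in pairs]
    scored = [(rel, cosine(sentence_vec, prompt_vecs[rel])) for rel in candidates]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Toy embeddings standing in for encoded descriptive relation prompts (hypothetical).
PROMPT_VECS = {
    "per:employee_of": [0.9, 0.1, 0.0],
    "per:schools_attended": [0.3, 0.8, 0.1],
    "per:city_of_birth": [0.1, 0.9, 0.0],
    "org:founded_by": [0.0, 0.2, 0.9],
}
# Hypothetical table of (subject type, object type) pairs allowed per relation.
ALLOWED_TYPES = {
    "per:employee_of": {("PERSON", "ORGANIZATION")},
    "per:schools_attended": {("PERSON", "ORGANIZATION")},
    "per:city_of_birth": {("PERSON", "CITY")},
    "org:founded_by": {("ORGANIZATION", "PERSON")},
}

ranking = rank_relations([0.8, 0.3, 0.1], PROMPT_VECS, ALLOWED_TYPES,
                         ("PERSON", "ORGANIZATION"))
for relation, score in ranking:
    print(f"{relation}: {score:.3f}")
```

Restricting candidates first means the ranking never has to separate relations that the entity types already rule out.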
