"Image": models, code, and papers

Stitching Dynamic Movement Primitives and Image-based Visual Servo Control

Oct 29, 2021

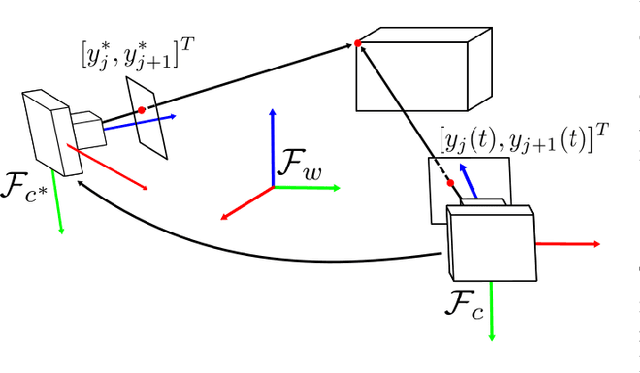

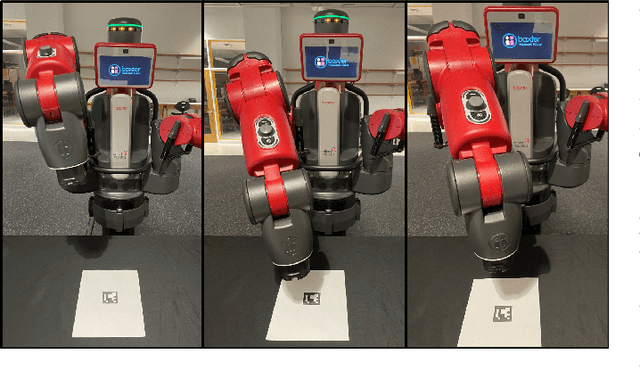

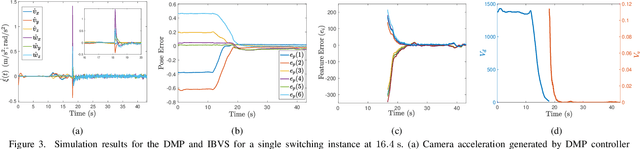

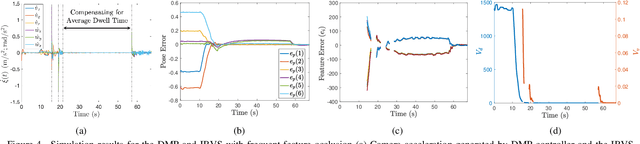

Utilizing perception for feedback control in combination with Dynamic Movement Primitive (DMP)-based motion generation for a robot's end-effector control is a useful solution for many robotic manufacturing tasks. For instance, while performing an insertion task when the hole or the recipient part is not visible in the eye-in-hand camera, a learning-based movement primitive method can be used to generate the end-effector path. Once the recipient part is in the field of view (FOV), Image-based Visual Servo (IBVS) can be used to control the motion of the robot. Inspired by such applications, this paper presents a generalized control scheme that switches between motion generation using DMPs and IBVS control. To facilitate the design, a common state space representation for the DMP and the IBVS systems is first established. Stability analysis of the switched system using multiple Lyapunov functions shows that the state trajectories converge to a bound asymptotically. The developed method is validated by two real-world experiments using the eye-in-hand configuration on a Baxter research robot.

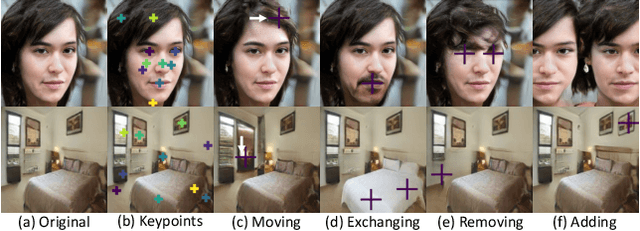

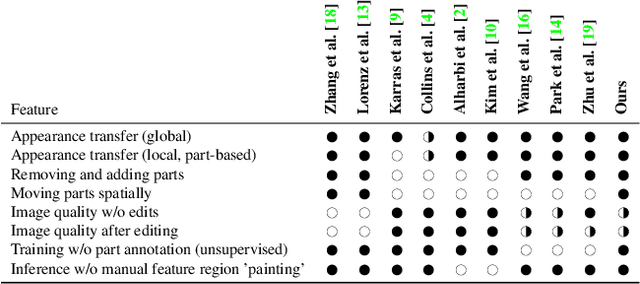

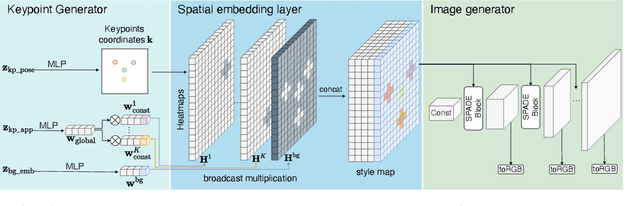

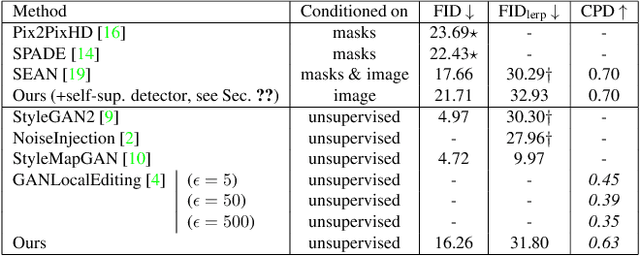

LatentKeypointGAN: Controlling Images via Latent Keypoints -- Extended Abstract

May 06, 2022

Generative adversarial networks (GANs) can now generate photo-realistic images. However, how to best control the image content remains an open challenge. We introduce LatentKeypointGAN, a two-stage GAN internally conditioned on a set of keypoints and associated appearance embeddings, providing control of the position and style of the generated objects and their respective parts. A major difficulty that we address is disentangling the image into spatial and appearance factors with little domain knowledge and few supervision signals. We demonstrate in a user study and quantitative experiments that LatentKeypointGAN provides an interpretable latent space that can be used to re-arrange the generated images by re-positioning and exchanging keypoint embeddings, such as generating portraits by combining the eyes and mouth from different images. Notably, our method does not require labels as it is self-supervised and thereby applies to diverse application domains, such as editing portraits, indoor rooms, and full-body human poses.

Few-Shot Non-Parametric Learning with Deep Latent Variable Model

Jun 23, 2022

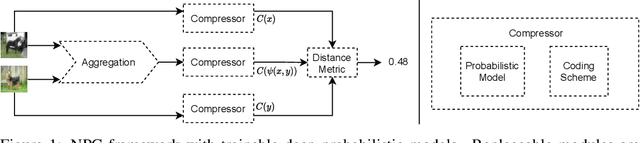

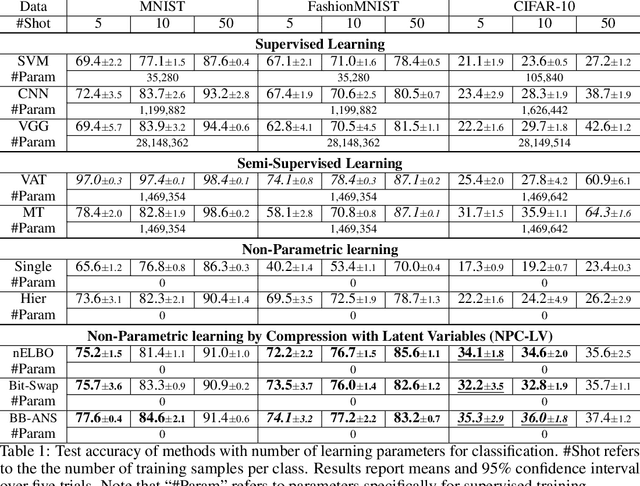

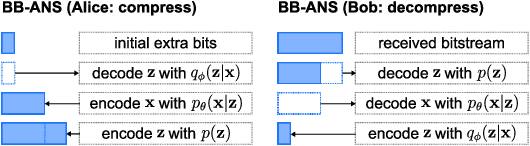

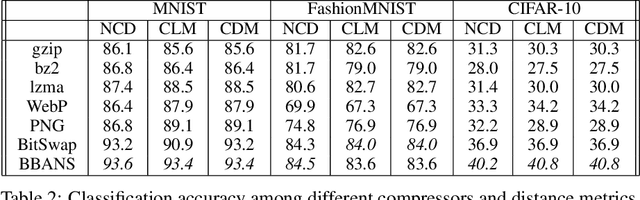

Most real-world problems that machine learning algorithms are expected to solve involve 1) an unknown data distribution; 2) little domain-specific knowledge; and 3) datasets with limited annotation. We propose Non-Parametric learning by Compression with Latent Variables (NPC-LV), a learning framework for any dataset with abundant unlabeled data but very few labeled samples. By only training a generative model in an unsupervised way, the framework utilizes the data distribution to build a compressor. Using a compressor-based distance metric derived from Kolmogorov complexity, together with a few labeled samples, NPC-LV classifies without further training. We show that NPC-LV outperforms supervised methods on all three datasets on image classification in the low-data regime and even outperforms semi-supervised learning methods on CIFAR-10. We demonstrate how and when the negative evidence lower bound (nELBO) can be used as an approximate compressed length for classification. By revealing the correlation between compression rate and classification accuracy, we illustrate that under NPC-LV, the improvement of generative models can enhance downstream classification accuracy.
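The compressor-based classification idea can be illustrated with a minimal sketch. It is not the NPC-LV implementation: it assumes the normalized compression distance (NCD) as the compressor-based metric and swaps in `gzip` as a stand-in compressor, where the abstract describes a trained generative model whose nELBO approximates the compressed length. The function names `compressed_len`, `ncd`, and `knn_classify` are hypothetical.

```python
import gzip
from typing import List, Tuple


def compressed_len(data: bytes) -> int:
    # Stand-in for the compressed length; the abstract describes using a
    # generative model's nELBO here instead of a general-purpose compressor.
    return len(gzip.compress(data))


def ncd(x: bytes, y: bytes) -> float:
    # Normalized compression distance: a computable approximation of a
    # Kolmogorov-complexity-based distance between x and y.
    cx, cy = compressed_len(x), compressed_len(y)
    cxy = compressed_len(x + y)
    return (cxy - min(cx, cy)) / max(cx, cy)


def knn_classify(query: bytes, labeled: List[Tuple[bytes, str]], k: int = 1) -> str:
    # Rank the few labeled samples by distance to the query and take a
    # majority vote among the k nearest; no further training is involved.
    ranked = sorted(labeled, key=lambda item: ncd(query, item[0]))
    votes = [label for _, label in ranked[:k]]
    return max(set(votes), key=votes.count)


if __name__ == "__main__":
    support = [(b"ab" * 200, "A"), (b"xyz123" * 70, "B")]
    print(knn_classify(b"ab" * 180, support))
```

The only learned component in such a scheme is the compressor, which is why a better generative model (a shorter compressed length) can plausibly translate into better classification.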

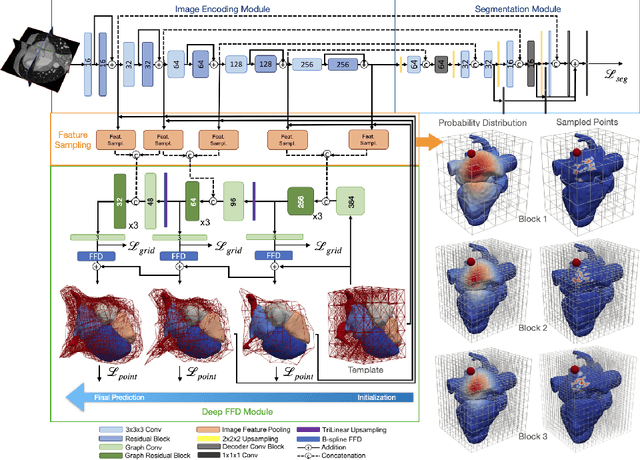

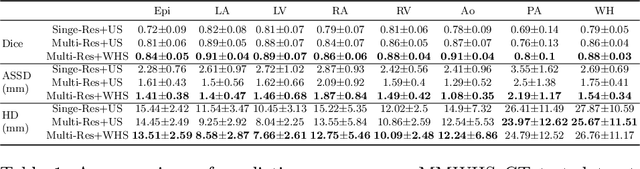

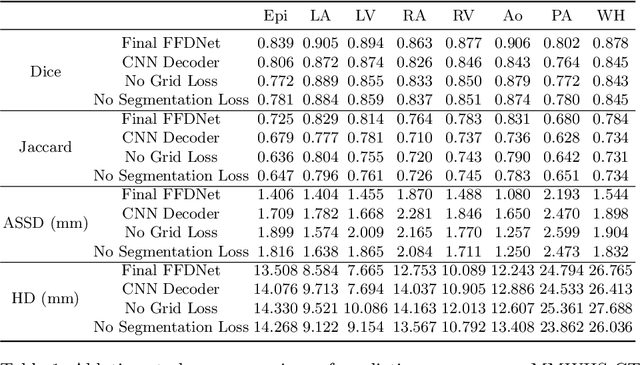

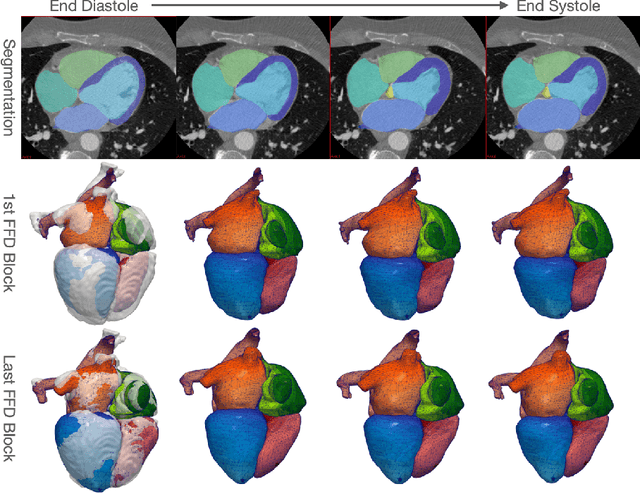

Whole Heart Mesh Generation For Image-Based Computational Simulations By Learning Free-From Deformations

Jul 22, 2021

Image-based computer simulation of cardiac function can be used to probe the mechanisms of (patho)physiology, and guide diagnosis and personalized treatment of cardiac diseases. This paradigm requires constructing simulation-ready meshes of cardiac structures from medical image data--a process that has traditionally required significant time and human effort, limiting large-cohort analyses and potential clinical translations. We propose a novel deep learning approach to reconstruct simulation-ready whole heart meshes from volumetric image data. Our approach learns to deform a template mesh to the input image data by predicting displacements of multi-resolution control point grids. We discuss the methods of this approach and demonstrate its application to efficiently create simulation-ready whole heart meshes for computational fluid dynamics simulations of the cardiac flow. Our source code is available at https://github.com/fkong7/HeartFFDNet.

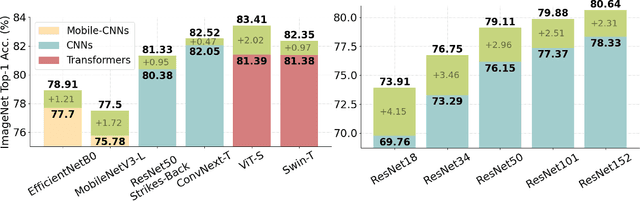

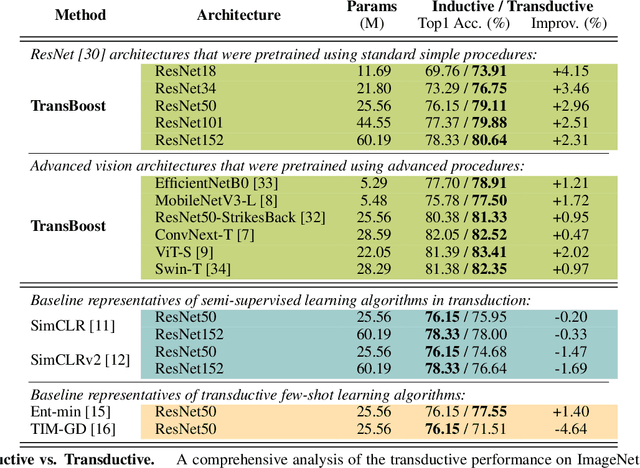

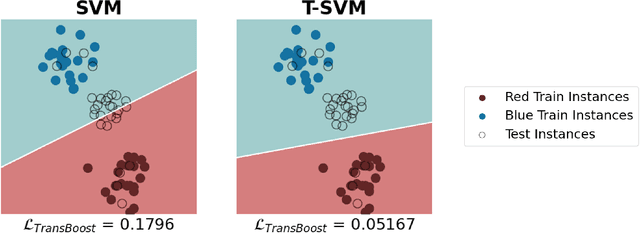

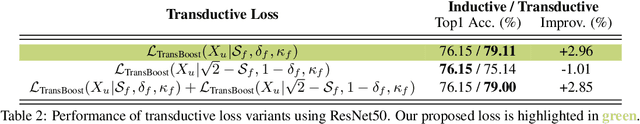

TransBoost: Improving the Best ImageNet Performance using Deep Transduction

May 27, 2022

This paper deals with deep transductive learning, and proposes TransBoost as a procedure for fine-tuning any deep neural model to improve its performance on any (unlabeled) test set provided at training time. TransBoost is inspired by a large margin principle and is efficient and simple to use. The ImageNet classification performance is consistently and significantly improved with TransBoost on many architectures such as ResNets, MobileNetV3-L, EfficientNetB0, ViT-S, and ConvNext-T. Additionally, we show that TransBoost is effective on a wide variety of image classification datasets.

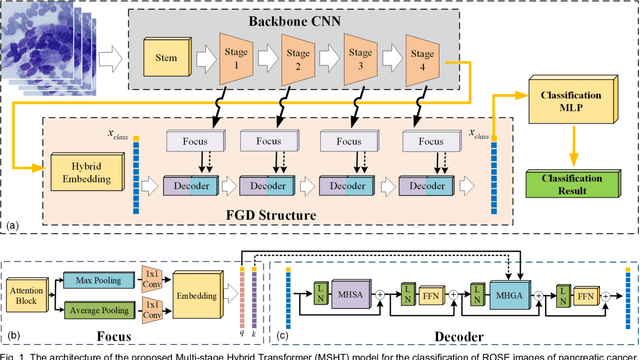

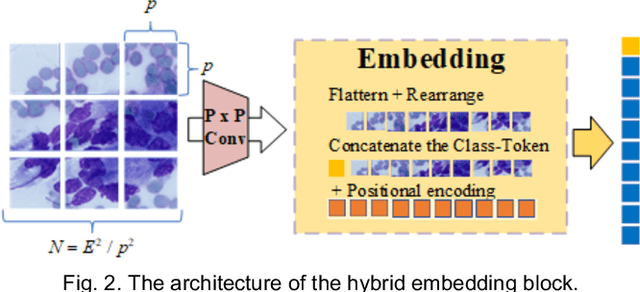

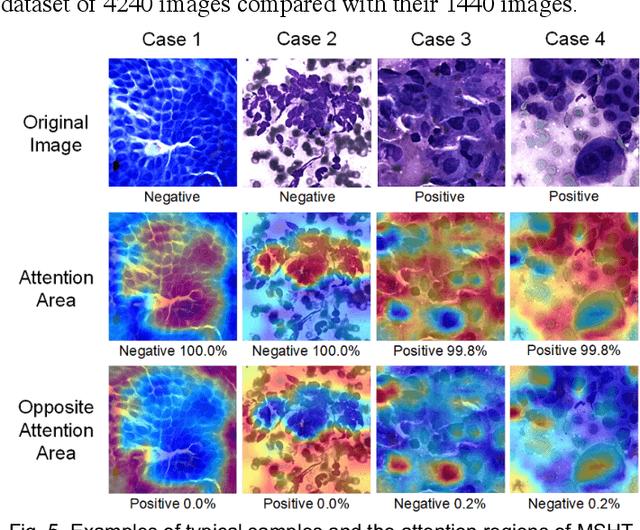

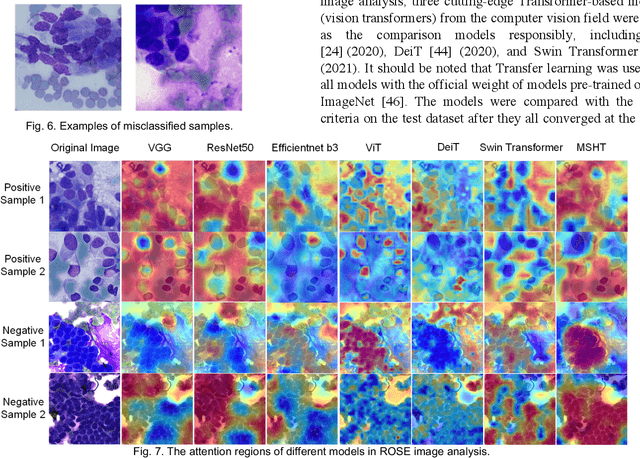

MSHT: Multi-stage Hybrid Transformer for the ROSE Image Analysis of Pancreatic Cancer

Dec 27, 2021

Pancreatic cancer is one of the most malignant cancers in the world; it progresses rapidly and has very high mortality. The rapid on-site evaluation (ROSE) technique innovates the workflow by having on-site pathologists immediately analyze fast-stained cytopathological images, which enables faster diagnosis in this time-pressured process. However, the wider adoption of ROSE diagnosis has been hindered by the lack of experienced pathologists. To overcome this problem, we propose a hybrid high-performance deep learning model to enable an automated workflow, thus freeing up pathologists' valuable time. By introducing the Transformer block into this field for the first time with our particular multi-stage hybrid design, the spatial features generated by the convolutional neural network (CNN) significantly enhance the Transformer's global modeling. Using multi-stage spatial features as global attention guidance, this design combines the robustness from the inductive bias of the CNN with the sophisticated global modeling power of the Transformer. A dataset of 4240 ROSE images is collected to evaluate the method in this unexplored field. The proposed multi-stage hybrid Transformer (MSHT) achieves 95.68% classification accuracy, which is distinctly higher than the state-of-the-art models. Facing the need for interpretability, MSHT outperforms its counterparts with more accurate attention regions. The results demonstrate that MSHT can distinguish cancer samples accurately at an unprecedented image scale, laying the foundation for deploying automatic decision systems and enabling the expansion of ROSE in clinical practice. The code and records are available at: https://github.com/sagizty/Multi-Stage-Hybrid-Transformer.

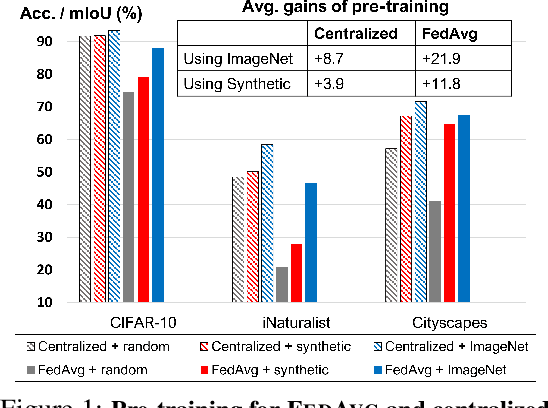

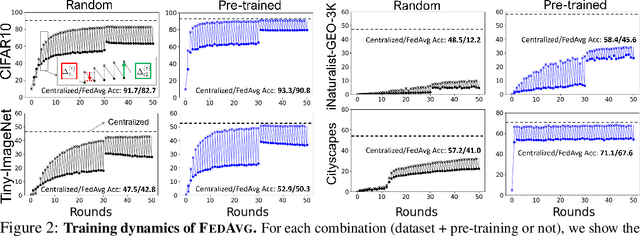

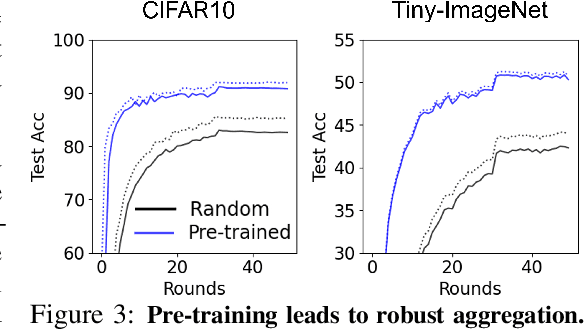

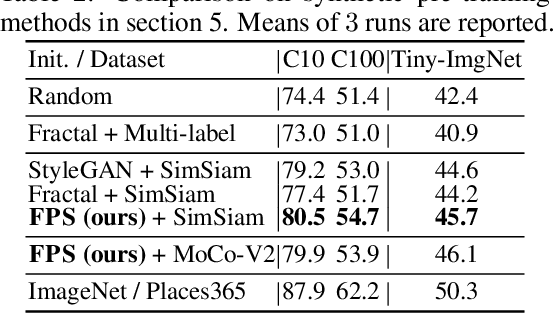

On Pre-Training for Federated Learning

Jun 23, 2022

In most of the literature on federated learning (FL), neural networks are initialized with random weights. In this paper, we present an empirical study on the effect of pre-training on FL. Specifically, we aim to investigate whether pre-training can alleviate the drastic accuracy drop when clients' decentralized data are non-IID. We focus on FedAvg, the fundamental and most widely used FL algorithm. We found that pre-training does largely close the gap between FedAvg and centralized learning under non-IID data, but this does not come from alleviating the well-known model drifting problem in FedAvg's local training. Instead, pre-training helps FedAvg by making its global aggregation more stable. When pre-training using real data is not feasible for FL, we propose a novel approach to pre-train with synthetic data. On various image datasets (including one for segmentation), our approach with synthetic pre-training leads to a notable gain, essentially a critical step toward scaling up federated learning for real-world applications.
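For readers unfamiliar with the global aggregation step mentioned above, the following is a minimal sketch of FedAvg-style aggregation, assuming each client's model is flattened into a list of floats and weighted by its local sample count. The function name `fedavg_aggregate` and the flat-list representation are illustrative assumptions, not the paper's code.

```python
from typing import List, Sequence


def fedavg_aggregate(client_weights: Sequence[Sequence[float]],
                     client_sizes: Sequence[int]) -> List[float]:
    # Weighted average of client parameters, where each client's weight is
    # proportional to the number of local training samples it holds.
    if len(client_weights) != len(client_sizes) or not client_weights:
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


if __name__ == "__main__":
    # Two hypothetical clients with 1 and 3 samples respectively.
    print(fedavg_aggregate([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```

In a full training loop, the server would broadcast the aggregated parameters back to the clients, each client would run a few local training steps, and the cycle would repeat. The choice of initialization (random or pre-trained) only affects the parameters the first round starts from.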

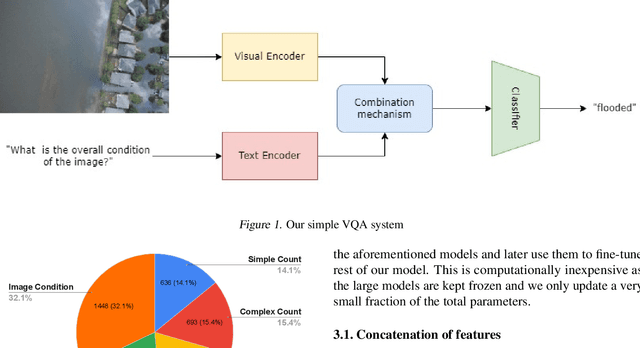

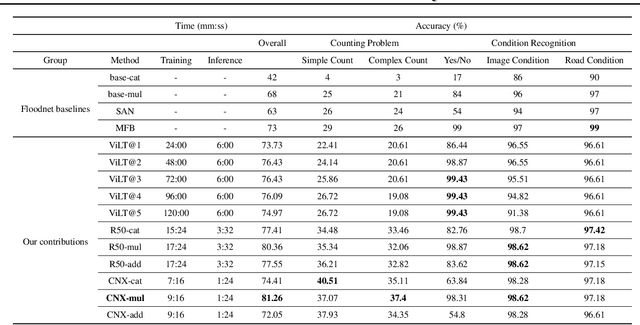

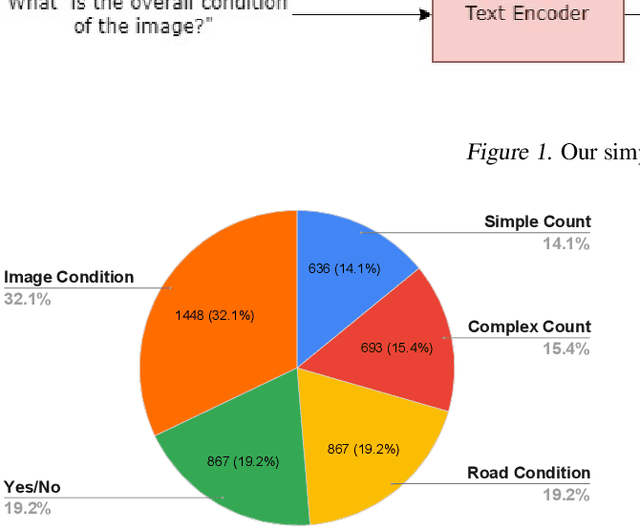

An Efficient Modern Baseline for FloodNet VQA

May 30, 2022

Designing efficient and reliable VQA systems remains a challenging problem, more so in the case of disaster management and response systems. In this work, we revisit fundamental combination methods like concatenation, addition and element-wise multiplication with modern image and text feature abstraction models. We design a simple and efficient system which outperforms pre-existing methods on the FloodNet dataset and achieves state-of-the-art performance. This simplified system requires significantly less training and inference time than modern VQA architectures. We also study the performance of various backbones and report their consolidated results. Code is available at https://github.com/sahilkhose/floodnet_vqa.
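The three combination methods named above can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: image and text features are taken to be plain lists of floats produced by some upstream encoders, and the function name `fuse` and its `mode` values are hypothetical.

```python
from typing import List, Sequence


def fuse(image_feat: Sequence[float], text_feat: Sequence[float],
         mode: str = "concat") -> List[float]:
    # Concatenation keeps both feature vectors side by side.
    if mode == "concat":
        return list(image_feat) + list(text_feat)
    # Addition and element-wise multiplication need matching dimensions.
    if len(image_feat) != len(text_feat):
        raise ValueError("add/mul fusion requires equal-length features")
    if mode == "add":
        return [a + b for a, b in zip(image_feat, text_feat)]
    if mode == "mul":
        return [a * b for a, b in zip(image_feat, text_feat)]
    raise ValueError(f"unknown fusion mode: {mode}")


if __name__ == "__main__":
    img, txt = [1.0, 2.0], [3.0, 4.0]
    for mode in ("concat", "add", "mul"):
        print(mode, fuse(img, txt, mode))
```

The fused vector would then typically be passed to a small classifier head that predicts the answer. Concatenation doubles the input width of that head, while addition and multiplication keep it unchanged.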

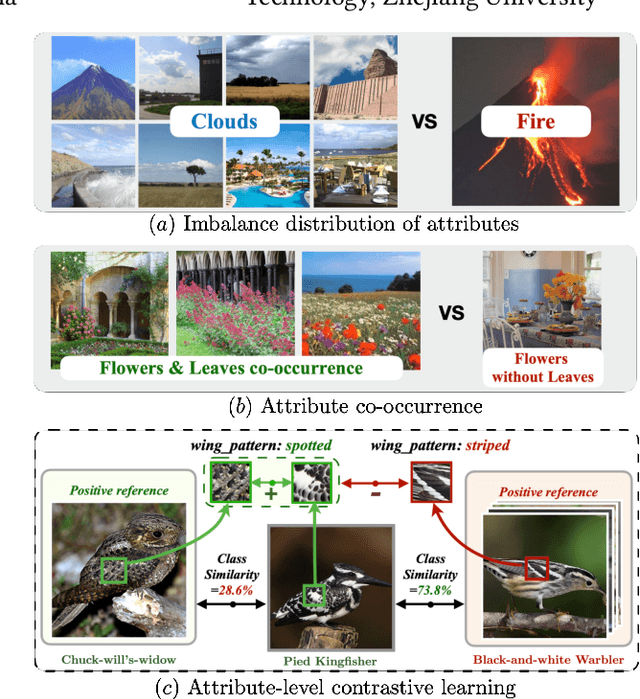

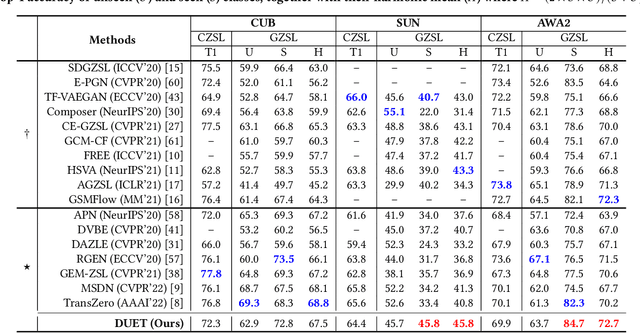

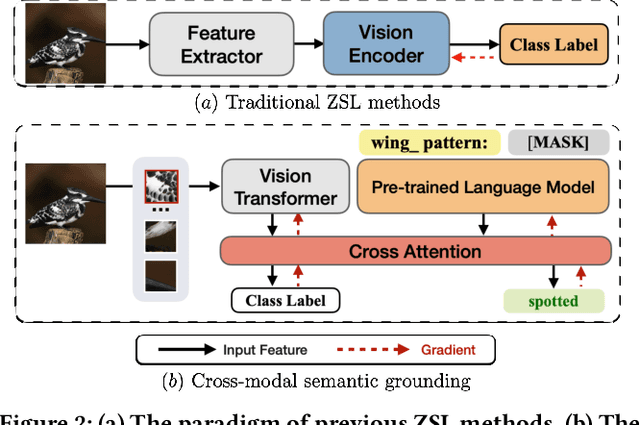

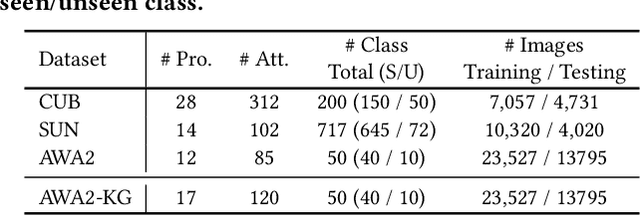

DUET: Cross-modal Semantic Grounding for Contrastive Zero-shot Learning

Jul 04, 2022

Zero-shot learning (ZSL) aims to predict unseen classes whose samples have never appeared during training, often utilizing additional semantic information (a.k.a. side information) to bridge the training (seen) classes and the unseen classes. One of the most effective and widely used forms of semantic information for zero-shot image classification is attributes, which are annotations of class-level visual characteristics. However, due to the shortage of fine-grained annotations and to attribute imbalance and co-occurrence, current methods often fail to discriminate subtle visual distinctions between images, which limits their performance. In this paper, we present a transformer-based end-to-end ZSL method named DUET, which integrates latent semantic knowledge from pretrained language models (PLMs) via a self-supervised multi-modal learning paradigm. Specifically, we (1) developed a cross-modal semantic grounding network to investigate the model's capability of disentangling semantic attributes from the images, (2) applied an attribute-level contrastive learning strategy to further enhance the model's discrimination on fine-grained visual characteristics against the attribute co-occurrence and imbalance, and (3) proposed a multi-task learning policy for considering multi-model objectives. With extensive experiments on three standard ZSL benchmarks and a knowledge-graph-equipped ZSL benchmark, we find that DUET can often achieve state-of-the-art performance, that its components are effective, and that its predictions are interpretable.

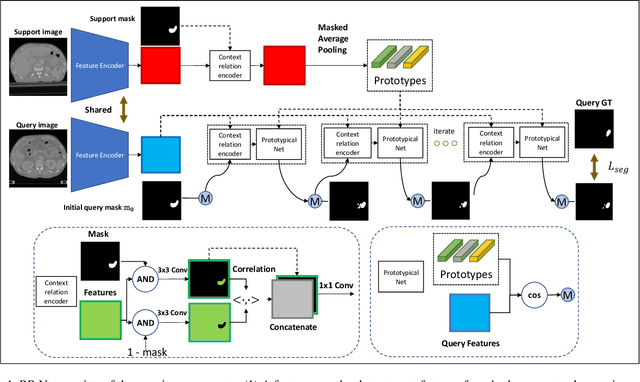

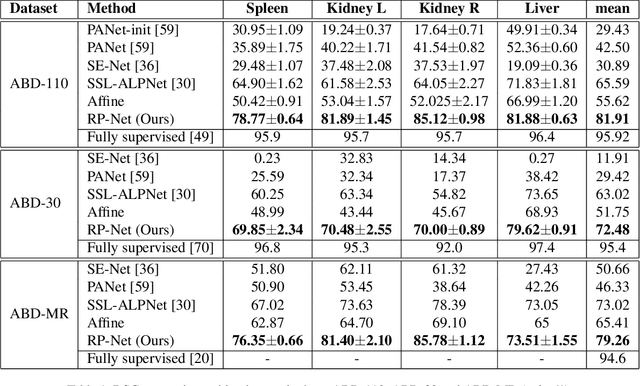

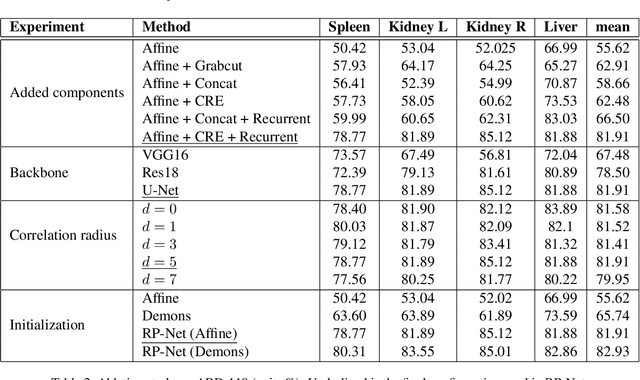

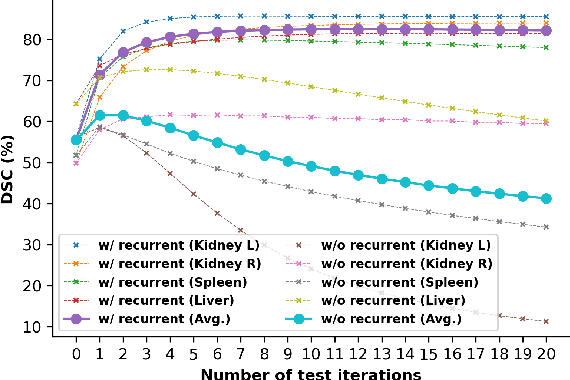

Recurrent Mask Refinement for Few-Shot Medical Image Segmentation

Aug 04, 2021

Although deep convolutional neural networks have achieved great success in medical image segmentation, they usually require a large dataset with manual annotations for training and are difficult to generalize to unseen classes. Few-shot learning has the potential to address these challenges by learning new classes from only a few labeled examples. In this work, we propose a new framework for few-shot medical image segmentation based on prototypical networks. Our innovation lies in the design of two key modules: 1) a context relation encoder (CRE) that uses correlation to capture local relation features between foreground and background regions; and 2) a recurrent mask refinement module that repeatedly uses the CRE and a prototypical network to recapture the change of context relationship and refine the segmentation mask iteratively. Experiments on two abdomen CT datasets and an abdomen MRI dataset show the proposed method obtains substantial improvement over the state-of-the-art methods by an average of 16.32%, 8.45% and 6.24% in terms of DSC, respectively. Code is publicly available.
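The DSC figures above refer to the Dice similarity coefficient, which for a predicted mask $A$ and a ground-truth mask $B$ is $2|A \cap B| / (|A| + |B|)$. A minimal sketch for flattened binary masks follows. The function name `dice_score` is hypothetical, and returning 1.0 when both masks are empty is a convention assumed here, not something the abstract specifies.

```python
from typing import Sequence


def dice_score(pred: Sequence[int], target: Sequence[int]) -> float:
    # Dice similarity coefficient for two flattened binary masks.
    if len(pred) != len(target):
        raise ValueError("masks must have the same number of elements")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        # Assumed convention: two empty masks count as a perfect match.
        return 1.0
    return 2.0 * intersection / total


if __name__ == "__main__":
    # One overlapping foreground pixel, 2 predicted and 1 true foreground pixels.
    print(dice_score([1, 1, 0, 0], [1, 0, 0, 0]))
```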
