Yue Wu

FasterPy: An LLM-based Code Execution Efficiency Optimization Framework

Dec 28, 2025Abstract:Code often suffers from performance bugs. These bugs necessitate the research and practice of code optimization. Traditional rule-based methods rely on manually designing and maintaining rules for specific performance bugs (e.g., redundant loops, repeated computations), making them labor-intensive and limited in applicability. In recent years, machine learning and deep learning-based methods have emerged as promising alternatives by learning optimization heuristics from annotated code corpora and performance measurements. However, these approaches usually depend on specific program representations and meticulously crafted training datasets, making them costly to develop and difficult to scale. With the booming of Large Language Models (LLMs), their remarkable capabilities in code generation have opened new avenues for automated code optimization. In this work, we proposed FasterPy, a low-cost and efficient framework that adapts LLMs to optimize the execution efficiency of Python code. FasterPy combines Retrieval-Augmented Generation (RAG), supported by a knowledge base constructed from existing performance-improving code pairs and corresponding performance measurements, with Low-Rank Adaptation (LoRA) to enhance code optimization performance. Our experimental results on the Performance Improving Code Edits (PIE) benchmark demonstrate that our method outperforms existing models on multiple metrics. The FasterPy tool and the experimental results are available at https://github.com/WuYue22/fasterpy.

Sketch-in-Latents: Eliciting Unified Reasoning in MLLMs

Dec 18, 2025Abstract:While Multimodal Large Language Models (MLLMs) excel at visual understanding tasks through text reasoning, they often fall short in scenarios requiring visual imagination. Unlike current works that take predefined external toolkits or generate images during thinking, however, humans can form flexible visual-text imagination and interactions during thinking without predefined toolkits, where one important reason is that humans construct the visual-text thinking process in a unified space inside the brain. Inspired by this capability, given that current MLLMs already encode visual and text information in the same feature space, we hold that visual tokens can be seamlessly inserted into the reasoning process carried by text tokens, where ideally, all visual imagination processes can be encoded by the latent features. To achieve this goal, we propose Sketch-in-Latents (SkiLa), a novel paradigm for unified multi-modal reasoning that expands the auto-regressive capabilities of MLLMs to natively generate continuous visual embeddings, termed latent sketch tokens, as visual thoughts. During multi-step reasoning, the model dynamically alternates between textual thinking mode for generating textual think tokens and visual sketching mode for generating latent sketch tokens. A latent visual semantics reconstruction mechanism is proposed to ensure these latent sketch tokens are semantically grounded. Extensive experiments demonstrate that SkiLa achieves superior performance on vision-centric tasks while exhibiting strong generalization to diverse general multi-modal benchmarks. Codes will be released at https://github.com/TungChintao/SkiLa.

Use of Continuous Glucose Monitoring with Machine Learning to Identify Metabolic Subphenotypes and Inform Precision Lifestyle Changes

Nov 06, 2025Abstract:The classification of diabetes and prediabetes by static glucose thresholds obscures the pathophysiological dysglycemia heterogeneity, primarily driven by insulin resistance (IR), beta-cell dysfunction, and incretin deficiency. This review demonstrates that continuous glucose monitoring and wearable technologies enable a paradigm shift towards non-invasive, dynamic metabolic phenotyping. We show evidence that machine learning models can leverage high-resolution glucose data from at-home, CGM-enabled oral glucose tolerance tests to accurately predict gold-standard measures of muscle IR and beta-cell function. This personalized characterization extends to real-world nutrition, where an individual's unique postprandial glycemic response (PPGR) to standardized meals, such as the relative glucose spike to potatoes versus grapes, could serve as a biomarker for their metabolic subtype. Moreover, integrating wearable data reveals that habitual diet, sleep, and physical activity patterns, particularly their timing, are uniquely associated with specific metabolic dysfunctions, informing precision lifestyle interventions. The efficacy of dietary mitigators in attenuating PPGR is also shown to be phenotype-dependent. Collectively, this evidence demonstrates that CGM can deconstruct the complexity of early dysglycemia into distinct, actionable subphenotypes. This approach moves beyond simple glycemic control, paving the way for targeted nutritional, behavioral, and pharmacological strategies tailored to an individual's core metabolic defects, thereby paving the way for a new era of precision diabetes prevention.

TALENT: Table VQA via Augmented Language-Enhanced Natural-text Transcription

Oct 08, 2025Abstract:Table Visual Question Answering (Table VQA) is typically addressed by large vision-language models (VLMs). While such models can answer directly from images, they often miss fine-grained details unless scaled to very large sizes, which are computationally prohibitive, especially for mobile deployment. A lighter alternative is to have a small VLM perform OCR and then use a large language model (LLM) to reason over structured outputs such as Markdown tables. However, these representations are not naturally optimized for LLMs and still introduce substantial errors. We propose TALENT (Table VQA via Augmented Language-Enhanced Natural-text Transcription), a lightweight framework that leverages dual representations of tables. TALENT prompts a small VLM to produce both OCR text and natural language narration, then combines them with the question for reasoning by an LLM. This reframes Table VQA as an LLM-centric multimodal reasoning task, where the VLM serves as a perception-narration module rather than a monolithic solver. Additionally, we construct ReTabVQA, a more challenging Table VQA dataset requiring multi-step quantitative reasoning over table images. Experiments show that TALENT enables a small VLM-LLM combination to match or surpass a single large VLM at significantly lower computational cost on both public datasets and ReTabVQA.

Interference Alignment for Multi-cluster Over-the-Air Computation

Oct 06, 2025Abstract:One of the main challenges facing Internet of Things (IoT) networks is managing interference caused by the large number of devices communicating simultaneously, particularly in multi-cluster networks where multiple devices simultaneously transmit to their respective receiver. Over-the-Air Computation (AirComp) has emerged as a promising solution for efficient real-time data aggregation, yet its performance suffers in dense, interference-limited environments. To address this, we propose a novel Interference Alignment (IA) scheme tailored for up-link AirComp systems. Unlike previous approaches, the proposed method scales to an arbitrary number $\sf K$ of clusters and enables each cluster to exploit half of the available channels, instead of only $\tfrac{1}{\sf K}$ as in time-sharing. In addition, we develop schemes tailored to scenarios where users are shared between adjacent clusters.

FastAvatar: Towards Unified Fast High-Fidelity 3D Avatar Reconstruction with Large Gaussian Reconstruction Transformers

Aug 27, 2025Abstract:Despite significant progress in 3D avatar reconstruction, it still faces challenges such as high time complexity, sensitivity to data quality, and low data utilization. We propose FastAvatar, a feedforward 3D avatar framework capable of flexibly leveraging diverse daily recordings (e.g., a single image, multi-view observations, or monocular video) to reconstruct a high-quality 3D Gaussian Splatting (3DGS) model within seconds, using only a single unified model. FastAvatar's core is a Large Gaussian Reconstruction Transformer featuring three key designs: First, a variant VGGT-style transformer architecture aggregating multi-frame cues while injecting initial 3D prompt to predict an aggregatable canonical 3DGS representation; Second, multi-granular guidance encoding (camera pose, FLAME expression, head pose) mitigating animation-induced misalignment for variable-length inputs; Third, incremental Gaussian aggregation via landmark tracking and sliced fusion losses. Integrating these features, FastAvatar enables incremental reconstruction, i.e., improving quality with more observations, unlike prior work wasting input data. This yields a quality-speed-tunable paradigm for highly usable avatar modeling. Extensive experiments show that FastAvatar has higher quality and highly competitive speed compared to existing methods.

VRBench: A Benchmark for Multi-Step Reasoning in Long Narrative Videos

Jun 12, 2025Abstract:We present VRBench, the first long narrative video benchmark crafted for evaluating large models' multi-step reasoning capabilities, addressing limitations in existing evaluations that overlook temporal reasoning and procedural validity. It comprises 1,010 long videos (with an average duration of 1.6 hours), along with 9,468 human-labeled multi-step question-answering pairs and 30,292 reasoning steps with timestamps. These videos are curated via a multi-stage filtering process including expert inter-rater reviewing to prioritize plot coherence. We develop a human-AI collaborative framework that generates coherent reasoning chains, each requiring multiple temporally grounded steps, spanning seven types (e.g., event attribution, implicit inference). VRBench designs a multi-phase evaluation pipeline that assesses models at both the outcome and process levels. Apart from the MCQs for the final results, we propose a progress-level LLM-guided scoring metric to evaluate the quality of the reasoning chain from multiple dimensions comprehensively. Through extensive evaluations of 12 LLMs and 16 VLMs on VRBench, we undertake a thorough analysis and provide valuable insights that advance the field of multi-step reasoning.

Tensor-to-Tensor Models with Fast Iterated Sum Features

Jun 06, 2025Abstract:Data in the form of images or higher-order tensors is ubiquitous in modern deep learning applications. Owing to their inherent high dimensionality, the need for subquadratic layers processing such data is even more pressing than for sequence data. We propose a novel tensor-to-tensor layer with linear cost in the input size, utilizing the mathematical gadget of ``corner trees'' from the field of permutation counting. In particular, for order-two tensors, we provide an image-to-image layer that can be plugged into image processing pipelines. On the one hand, our method can be seen as a higher-order generalization of state-space models. On the other hand, it is based on a multiparameter generalization of the signature of iterated integrals (or sums). The proposed tensor-to-tensor concept is used to build a neural network layer called the Fast Iterated Sums (FIS) layer which integrates seamlessly with other layer types. We demonstrate the usability of the FIS layer with both classification and anomaly detection tasks. By replacing some layers of a smaller ResNet architecture with FIS, a similar accuracy (with a difference of only 0.1\%) was achieved in comparison to a larger ResNet while reducing the number of trainable parameters and multi-add operations. The FIS layer was also used to build an anomaly detection model that achieved an average AUROC of 97.3\% on the texture images of the popular MVTec AD dataset. The processing and modelling codes are publicly available at https://github.com/diehlj/fast-iterated-sums.

Alita: Generalist Agent Enabling Scalable Agentic Reasoning with Minimal Predefinition and Maximal Self-Evolution

May 26, 2025

Abstract:Recent advances in large language models (LLMs) have enabled agents to autonomously perform complex, open-ended tasks. However, many existing frameworks depend heavily on manually predefined tools and workflows, which hinder their adaptability, scalability, and generalization across domains. In this work, we introduce Alita--a generalist agent designed with the principle of "Simplicity is the ultimate sophistication," enabling scalable agentic reasoning through minimal predefinition and maximal self-evolution. For minimal predefinition, Alita is equipped with only one component for direct problem-solving, making it much simpler and neater than previous approaches that relied heavily on hand-crafted, elaborate tools and workflows. This clean design enhances its potential to generalize to challenging questions, without being limited by tools. For Maximal self-evolution, we enable the creativity of Alita by providing a suite of general-purpose components to autonomously construct, refine, and reuse external capabilities by generating task-related model context protocols (MCPs) from open source, which contributes to scalable agentic reasoning. Notably, Alita achieves 75.15% pass@1 and 87.27% pass@3 accuracy, which is top-ranking among general-purpose agents, on the GAIA benchmark validation dataset, 74.00% and 52.00% pass@1, respectively, on Mathvista and PathVQA, outperforming many agent systems with far greater complexity. More details will be updated at $\href{https://github.com/CharlesQ9/Alita}{https://github.com/CharlesQ9/Alita}$.

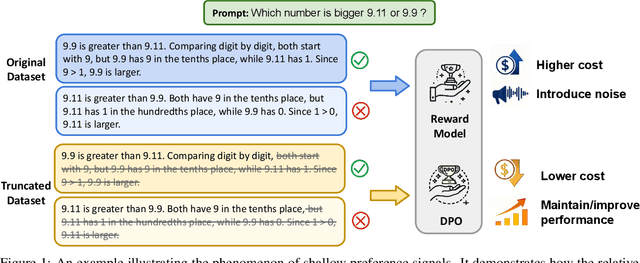

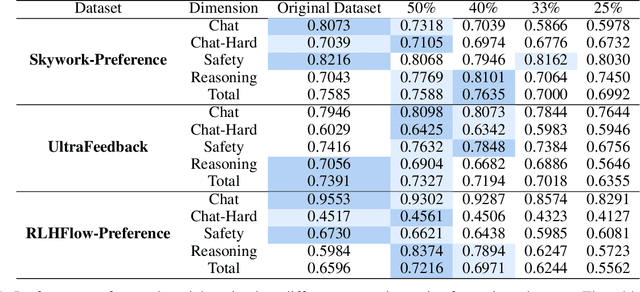

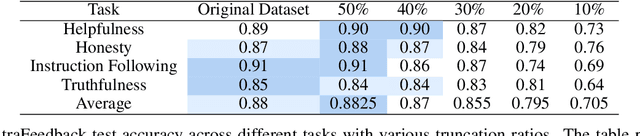

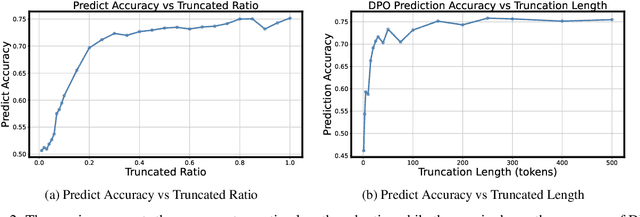

Shallow Preference Signals: Large Language Model Aligns Even Better with Truncated Data?

May 21, 2025

Abstract:Aligning large language models (LLMs) with human preferences remains a key challenge in AI. Preference-based optimization methods, such as Reinforcement Learning with Human Feedback (RLHF) and Direct Preference Optimization (DPO), rely on human-annotated datasets to improve alignment. In this work, we identify a crucial property of the existing learning method: the distinguishing signal obtained in preferred responses is often concentrated in the early tokens. We refer to this as shallow preference signals. To explore this property, we systematically truncate preference datasets at various points and train both reward models and DPO models on the truncated data. Surprisingly, models trained on truncated datasets, retaining only the first half or fewer tokens, achieve comparable or even superior performance to those trained on full datasets. For example, a reward model trained on the Skywork-Reward-Preference-80K-v0.2 dataset outperforms the full dataset when trained on a 40\% truncated dataset. This pattern is consistent across multiple datasets, suggesting the widespread presence of shallow preference signals. We further investigate the distribution of the reward signal through decoding strategies. We consider two simple decoding strategies motivated by the shallow reward signal observation, namely Length Control Decoding and KL Threshold Control Decoding, which leverage shallow preference signals to optimize the trade-off between alignment and computational efficiency. The performance is even better, which again validates our hypothesis. The phenomenon of shallow preference signals highlights potential issues in LLM alignment: existing alignment methods often focus on aligning only the initial tokens of responses, rather than considering the full response. This could lead to discrepancies with real-world human preferences, resulting in suboptimal alignment performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge