Mengdi Wang

Rectified Diffusion: Straightness Is Not Your Need in Rectified Flow

Oct 09, 2024Abstract:Diffusion models have greatly improved visual generation but are hindered by slow generation speed due to the computationally intensive nature of solving generative ODEs. Rectified flow, a widely recognized solution, improves generation speed by straightening the ODE path. Its key components include: 1) using the diffusion form of flow-matching, 2) employing $\boldsymbol v$-prediction, and 3) performing rectification (a.k.a. reflow). In this paper, we argue that the success of rectification primarily lies in using a pretrained diffusion model to obtain matched pairs of noise and samples, followed by retraining with these matched noise-sample pairs. Based on this, components 1) and 2) are unnecessary. Furthermore, we highlight that straightness is not an essential training target for rectification; rather, it is a specific case of flow-matching models. The more critical training target is to achieve a first-order approximate ODE path, which is inherently curved for models like DDPM and Sub-VP. Building on this insight, we propose Rectified Diffusion, which generalizes the design space and application scope of rectification to encompass the broader category of diffusion models, rather than being restricted to flow-matching models. We validate our method on Stable Diffusion v1-5 and Stable Diffusion XL. Our method not only greatly simplifies the training procedure of rectified flow-based previous works (e.g., InstaFlow) but also achieves superior performance with even lower training cost. Our code is available at https://github.com/G-U-N/Rectified-Diffusion.

IterComp: Iterative Composition-Aware Feedback Learning from Model Gallery for Text-to-Image Generation

Oct 09, 2024

Abstract:Advanced diffusion models like RPG, Stable Diffusion 3 and FLUX have made notable strides in compositional text-to-image generation. However, these methods typically exhibit distinct strengths for compositional generation, with some excelling in handling attribute binding and others in spatial relationships. This disparity highlights the need for an approach that can leverage the complementary strengths of various models to comprehensively improve the composition capability. To this end, we introduce IterComp, a novel framework that aggregates composition-aware model preferences from multiple models and employs an iterative feedback learning approach to enhance compositional generation. Specifically, we curate a gallery of six powerful open-source diffusion models and evaluate their three key compositional metrics: attribute binding, spatial relationships, and non-spatial relationships. Based on these metrics, we develop a composition-aware model preference dataset comprising numerous image-rank pairs to train composition-aware reward models. Then, we propose an iterative feedback learning method to enhance compositionality in a closed-loop manner, enabling the progressive self-refinement of both the base diffusion model and reward models over multiple iterations. Theoretical proof demonstrates the effectiveness and extensive experiments show our significant superiority over previous SOTA methods (e.g., Omost and FLUX), particularly in multi-category object composition and complex semantic alignment. IterComp opens new research avenues in reward feedback learning for diffusion models and compositional generation. Code: https://github.com/YangLing0818/IterComp

AIME: AI System Optimization via Multiple LLM Evaluators

Oct 04, 2024Abstract:Text-based AI system optimization typically involves a feedback loop scheme where a single LLM generates an evaluation in natural language of the current output to improve the next iteration's output. However, in this work, we empirically demonstrate that for a practical and complex task (code generation) with multiple criteria to evaluate, utilizing only one LLM evaluator tends to let errors in generated code go undetected, thus leading to incorrect evaluations and ultimately suboptimal test case performance. Motivated by this failure case, we assume there exists an optimal evaluation policy that samples an evaluation between response and ground truth. We then theoretically prove that a linear combination of multiple evaluators can approximate this optimal policy. From this insight, we propose AI system optimization via Multiple LLM Evaluators (AIME). AIME is an evaluation protocol that utilizes multiple LLMs that each independently generate an evaluation on separate criteria and then combine them via concatenation. We provide an extensive empirical study showing AIME outperforming baseline methods in code generation tasks, with up to $62\%$ higher error detection rate and up to $16\%$ higher success rate than a single LLM evaluation protocol on LeetCodeHard and HumanEval datasets. We also show that the selection of the number of evaluators and which criteria to utilize is non-trivial as it can impact pact success rate by up to $12\%$.

Latent Diffusion Models for Controllable RNA Sequence Generation

Sep 15, 2024

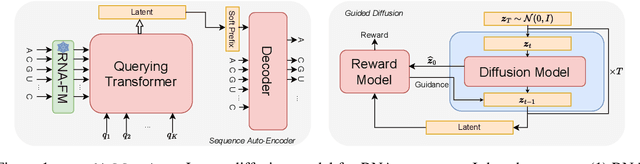

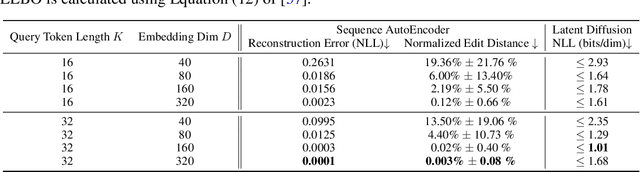

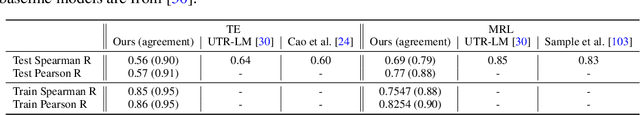

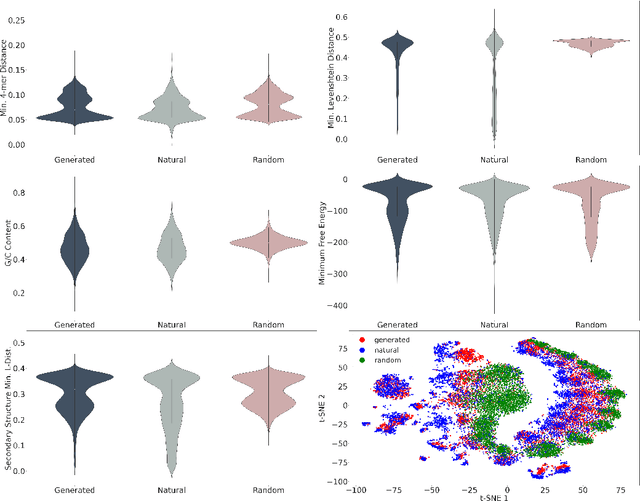

Abstract:This paper presents RNAdiffusion, a latent diffusion model for generating and optimizing discrete RNA sequences. RNA is a particularly dynamic and versatile molecule in biological processes. RNA sequences exhibit high variability and diversity, characterized by their variable lengths, flexible three-dimensional structures, and diverse functions. We utilize pretrained BERT-type models to encode raw RNAs into token-level biologically meaningful representations. A Q-Former is employed to compress these representations into a fixed-length set of latent vectors, with an autoregressive decoder trained to reconstruct RNA sequences from these latent variables. We then develop a continuous diffusion model within this latent space. To enable optimization, we train reward networks to estimate functional properties of RNA from the latent variables. We employ gradient-based guidance during the backward diffusion process, aiming to generate RNA sequences that are optimized for higher rewards. Empirical experiments confirm that RNAdiffusion generates non-coding RNAs that align with natural distributions across various biological indicators. We fine-tuned the diffusion model on untranslated regions (UTRs) of mRNA and optimize sample sequences for protein translation efficiencies. Our guided diffusion model effectively generates diverse UTR sequences with high Mean Ribosome Loading (MRL) and Translation Efficiency (TE), surpassing baselines. These results hold promise for studies on RNA sequence-function relationships, protein synthesis, and enhancing therapeutic RNA design.

Relative-Translation Invariant Wasserstein Distance

Sep 04, 2024

Abstract:We introduce a new family of distances, relative-translation invariant Wasserstein distances ($RW_p$), for measuring the similarity of two probability distributions under distribution shift. Generalizing it from the classical optimal transport model, we show that $RW_p$ distances are also real distance metrics defined on the quotient set $\mathcal{P}_p(\mathbb{R}^n)/\sim$ and invariant to distribution translations. When $p=2$, the $RW_2$ distance enjoys more exciting properties, including decomposability of the optimal transport model, translation-invariance of the $RW_2$ distance, and a Pythagorean relationship between $RW_2$ and the classical quadratic Wasserstein distance ($W_2$). Based on these properties, we show that a distribution shift, measured by $W_2$ distance, can be explained in the bias-variance perspective. In addition, we propose a variant of the Sinkhorn algorithm, named $RW_2$ Sinkhorn algorithm, for efficiently calculating $RW_2$ distance, coupling solutions, as well as $W_2$ distance. We also provide the analysis of numerical stability and time complexity for the proposed algorithm. Finally, we validate the $RW_2$ distance metric and the algorithm performance with three experiments. We conduct one numerical validation for the $RW_2$ Sinkhorn algorithm and show two real-world applications demonstrating the effectiveness of using $RW_2$ under distribution shift: digits recognition and similar thunderstorm detection. The experimental results report that our proposed algorithm significantly improves the computational efficiency of Sinkhorn in certain practical applications, and the $RW_2$ distance is robust to distribution translations compared with baselines.

Conversational Dueling Bandits in Generalized Linear Models

Jul 26, 2024

Abstract:Conversational recommendation systems elicit user preferences by interacting with users to obtain their feedback on recommended commodities. Such systems utilize a multi-armed bandit framework to learn user preferences in an online manner and have received great success in recent years. However, existing conversational bandit methods have several limitations. First, they only enable users to provide explicit binary feedback on the recommended items or categories, leading to ambiguity in interpretation. In practice, users are usually faced with more than one choice. Relative feedback, known for its informativeness, has gained increasing popularity in recommendation system design. Moreover, current contextual bandit methods mainly work under linear reward assumptions, ignoring practical non-linear reward structures in generalized linear models. Therefore, in this paper, we introduce relative feedback-based conversations into conversational recommendation systems through the integration of dueling bandits in generalized linear models (GLM) and propose a novel conversational dueling bandit algorithm called ConDuel. Theoretical analyses of regret upper bounds and empirical validations on synthetic and real-world data underscore ConDuel's efficacy. We also demonstrate the potential to extend our algorithm to multinomial logit bandits with theoretical and experimental guarantees, which further proves the applicability of the proposed framework.

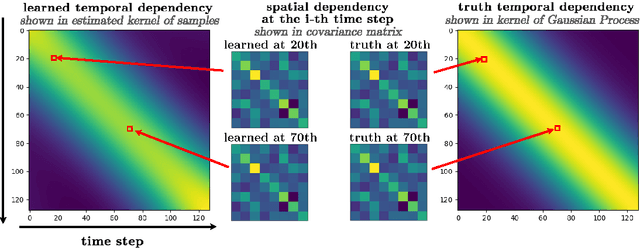

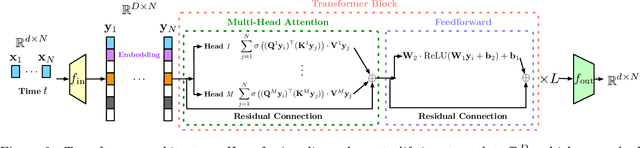

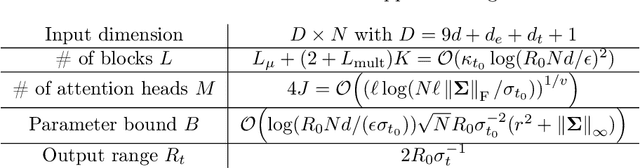

Diffusion Transformer Captures Spatial-Temporal Dependencies: A Theory for Gaussian Process Data

Jul 23, 2024

Abstract:Diffusion Transformer, the backbone of Sora for video generation, successfully scales the capacity of diffusion models, pioneering new avenues for high-fidelity sequential data generation. Unlike static data such as images, sequential data consists of consecutive data frames indexed by time, exhibiting rich spatial and temporal dependencies. These dependencies represent the underlying dynamic model and are critical to validate the generated data. In this paper, we make the first theoretical step towards bridging diffusion transformers for capturing spatial-temporal dependencies. Specifically, we establish score approximation and distribution estimation guarantees of diffusion transformers for learning Gaussian process data with covariance functions of various decay patterns. We highlight how the spatial-temporal dependencies are captured and affect learning efficiency. Our study proposes a novel transformer approximation theory, where the transformer acts to unroll an algorithm. We support our theoretical results by numerical experiments, providing strong evidence that spatial-temporal dependencies are captured within attention layers, aligning with our approximation theory.

Natural language is not enough: Benchmarking multi-modal generative AI for Verilog generation

Jul 11, 2024

Abstract:Natural language interfaces have exhibited considerable potential in the automation of Verilog generation derived from high-level specifications through the utilization of large language models, garnering significant attention. Nevertheless, this paper elucidates that visual representations contribute essential contextual information critical to design intent for hardware architectures possessing spatial complexity, potentially surpassing the efficacy of natural-language-only inputs. Expanding upon this premise, our paper introduces an open-source benchmark for multi-modal generative models tailored for Verilog synthesis from visual-linguistic inputs, addressing both singular and complex modules. Additionally, we introduce an open-source visual and natural language Verilog query language framework to facilitate efficient and user-friendly multi-modal queries. To evaluate the performance of the proposed multi-modal hardware generative AI in Verilog generation tasks, we compare it with a popular method that relies solely on natural language. Our results demonstrate a significant accuracy improvement in the multi-modal generated Verilog compared to queries based solely on natural language. We hope to reveal a new approach to hardware design in the large-hardware-design-model era, thereby fostering a more diversified and productive approach to hardware design.

Contractual Reinforcement Learning: Pulling Arms with Invisible Hands

Jul 02, 2024

Abstract:The agency problem emerges in today's large scale machine learning tasks, where the learners are unable to direct content creation or enforce data collection. In this work, we propose a theoretical framework for aligning economic interests of different stakeholders in the online learning problems through contract design. The problem, termed \emph{contractual reinforcement learning}, naturally arises from the classic model of Markov decision processes, where a learning principal seeks to optimally influence the agent's action policy for their common interests through a set of payment rules contingent on the realization of next state. For the planning problem, we design an efficient dynamic programming algorithm to determine the optimal contracts against the far-sighted agent. For the learning problem, we introduce a generic design of no-regret learning algorithms to untangle the challenges from robust design of contracts to the balance of exploration and exploitation, reducing the complexity analysis to the construction of efficient search algorithms. For several natural classes of problems, we design tailored search algorithms that provably achieve $\tilde{O}(\sqrt{T})$ regret. We also present an algorithm with $\tilde{O}(T^{2/3})$ for the general problem that improves the existing analysis in online contract design with mild technical assumptions.

Provable Statistical Rates for Consistency Diffusion Models

Jun 23, 2024Abstract:Diffusion models have revolutionized various application domains, including computer vision and audio generation. Despite the state-of-the-art performance, diffusion models are known for their slow sample generation due to the extensive number of steps involved. In response, consistency models have been developed to merge multiple steps in the sampling process, thereby significantly boosting the speed of sample generation without compromising quality. This paper contributes towards the first statistical theory for consistency models, formulating their training as a distribution discrepancy minimization problem. Our analysis yields statistical estimation rates based on the Wasserstein distance for consistency models, matching those of vanilla diffusion models. Additionally, our results encompass the training of consistency models through both distillation and isolation methods, demystifying their underlying advantage.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge