Marco Pavone

Stanford University and

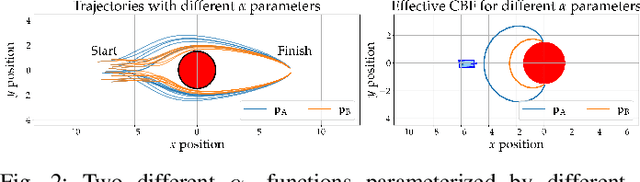

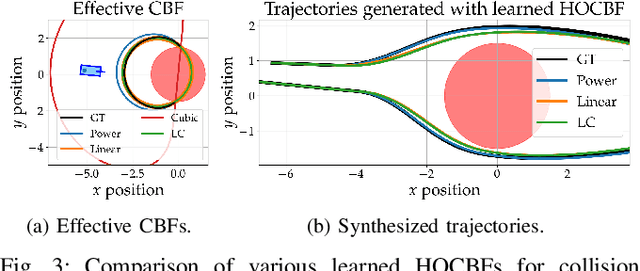

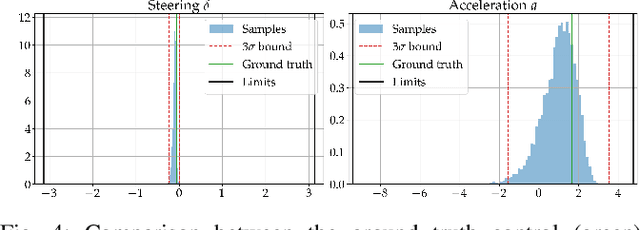

Learning Autonomous Vehicle Safety Concepts from Demonstrations

Oct 06, 2022

Abstract: Evaluating the safety of an autonomous vehicle (AV) depends on the behavior of surrounding agents, which can be heavily influenced by factors such as environmental context and informally defined driving etiquette. A key challenge is determining a minimum set of assumptions on what constitutes reasonably foreseeable behavior of other road users for the development of AV safety models and techniques. In this paper, we propose a data-driven AV safety design methodology that first learns "reasonable" behavioral assumptions from data, and then synthesizes an AV safety concept using these learned behavioral assumptions. We borrow techniques from control theory, namely high-order control barrier functions and Hamilton-Jacobi reachability, to provide inductive bias that aids the interpretability, verifiability, and tractability of our approach. In our experiments, we learn an AV safety concept using demonstrations collected from a highway traffic-weaving scenario, compare our learned concept to existing baselines, and showcase its efficacy in evaluating real-world driving logs.
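For readers unfamiliar with the control barrier functions mentioned in this abstract, the textbook conditions are sketched below. This is the standard formulation for a time-invariant, control-affine system, not necessarily the exact form used in the paper; the symbols $f$, $g$, $h$, $\alpha_i$, and the relative degree $m$ are assumptions introduced here for illustration.

```latex
\begin{aligned}
% Control-affine dynamics and the safe set defined by h
&\dot{x} = f(x) + g(x)\,u, \qquad \mathcal{C} = \{\, x : h(x) \ge 0 \,\} \\[4pt]
% First-order control barrier function condition (alpha is a class-K function)
&\sup_{u \in \mathcal{U}} \big[ L_f h(x) + L_g h(x)\,u \big] \ge -\alpha\big(h(x)\big) \\[4pt]
% High-order version when h has relative degree m
&\psi_0(x) = h(x), \qquad
 \psi_i(x) = \dot{\psi}_{i-1}(x) + \alpha_i\big(\psi_{i-1}(x)\big), \quad i = 1, \dots, m-1 \\[4pt]
&\sup_{u \in \mathcal{U}} \big[ L_f \psi_{m-1}(x) + L_g \psi_{m-1}(x)\,u
   + \alpha_m\big(\psi_{m-1}(x)\big) \big] \ge 0
\end{aligned}
```

Any control input satisfying the last inequality keeps the state inside the sets $\{x : \psi_i(x) \ge 0\}$, and hence inside $\mathcal{C}$, provided the state starts there.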

Data Lifecycle Management in Evolving Input Distributions for Learning-based Aerospace Applications

Sep 24, 2022

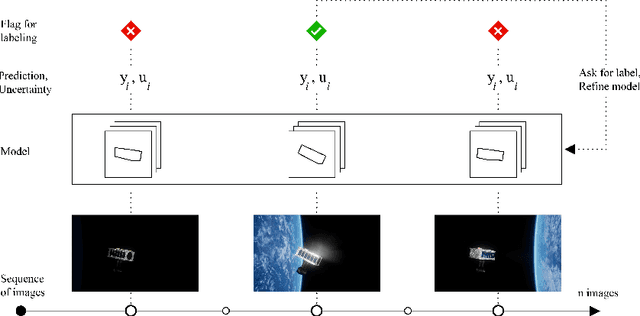

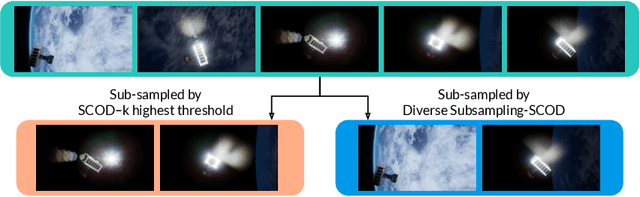

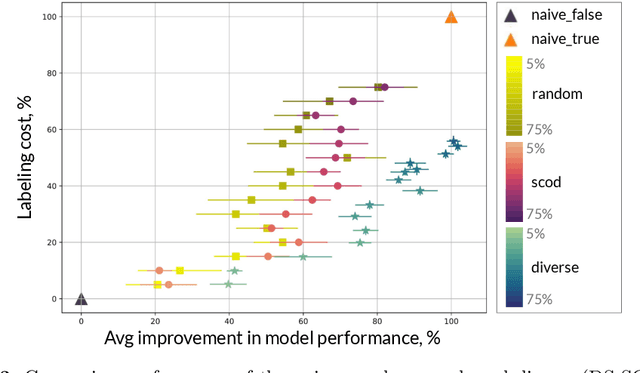

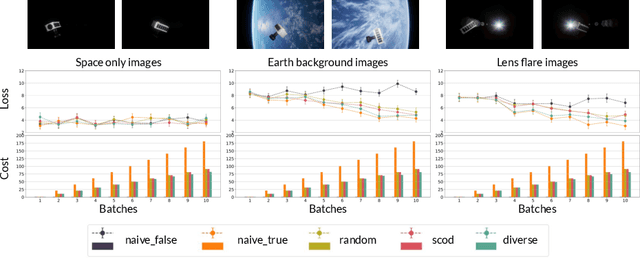

Abstract: As input distributions evolve over a mission lifetime, maintaining performance of learning-based models becomes challenging. This paper presents a framework to incrementally retrain a model by selecting a subset of test inputs to label, which allows the model to adapt to changing input distributions. Algorithms within this framework are evaluated based on (1) model performance throughout mission lifetime and (2) cumulative costs associated with labeling and model retraining. We provide an open-source benchmark of a satellite pose estimation model trained on images of a satellite in space and deployed in novel scenarios (e.g., different backgrounds or misbehaving pixels), where algorithms are evaluated on their ability to maintain high performance by retraining on a subset of inputs. We also propose a novel algorithm to select a diverse subset of inputs for labeling, by characterizing the information gain from an input using Bayesian uncertainty quantification and choosing a subset that maximizes collective information gain using concepts from batch active learning. We show that our algorithm outperforms others on the benchmark, e.g., achieves comparable performance to an algorithm that labels 100% of inputs, while only labeling 50% of inputs, resulting in low costs and high performance over the mission lifetime.
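The idea of choosing a subset that maximizes collective information gain can be illustrated with a small greedy selector. This is a minimal sketch under a Bayesian linear-Gaussian assumption on feature embeddings, not the paper's algorithm; the function name `greedy_batch_select` and the parameters `noise_var` and `prior_var` are hypothetical.

```python
import numpy as np


def greedy_batch_select(features, budget, noise_var=1.0, prior_var=1.0):
    """Greedily pick rows of `features` (shape (n, d)) to label.

    Assumption: labels follow a Bayesian linear-Gaussian model on the
    features, so the marginal information gain of a candidate phi is
    0.5 * log(1 + phi^T Sigma phi / noise_var), where Sigma is the
    current posterior covariance of the weights.
    """
    n, d = features.shape
    cov = prior_var * np.eye(d)          # prior covariance of the weights
    available = np.ones(n, dtype=bool)   # candidates not yet selected
    chosen = []
    for _ in range(min(budget, n)):
        # Predictive variance of every candidate under the current posterior.
        var = np.einsum("ij,jk,ik->i", features, cov, features)
        gains = np.where(available, 0.5 * np.log1p(var / noise_var), -np.inf)
        best = int(np.argmax(gains))
        chosen.append(best)
        available[best] = False
        # Rank-one posterior update (Sherman-Morrison) for the chosen input.
        phi = features[best]
        cov_phi = cov @ phi
        cov = cov - np.outer(cov_phi, cov_phi) / (noise_var + phi @ cov_phi)
    return chosen
```

Because the posterior shrinks along directions that have already been selected, near-duplicate inputs have low marginal gain, which is what makes the chosen batch diverse.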

Expanding the Deployment Envelope of Behavior Prediction via Adaptive Meta-Learning

Sep 23, 2022

Abstract: Learning-based behavior prediction methods are increasingly being deployed in real-world autonomous systems, e.g., in fleets of self-driving vehicles, which are beginning to commercially operate in major cities across the world. Despite their advancements, however, the vast majority of prediction systems are specialized to a set of well-explored geographic regions or operational design domains, complicating deployment to additional cities, countries, or continents. Towards this end, we present a novel method for efficiently adapting behavior prediction models to new environments. Our approach leverages recent advances in meta-learning, specifically Bayesian regression, to augment existing behavior prediction models with an adaptive layer that enables efficient domain transfer via offline fine-tuning, online adaptation, or both. Experiments across multiple real-world datasets demonstrate that our method can efficiently adapt to a variety of unseen environments.
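To make the "adaptive layer" idea concrete, here is a minimal sketch of a Bayesian linear regression head that is updated online, one observation at a time. It assumes a fixed feature extractor producing a vector `phi` for each input and a scalar target; the class name `BayesianLastLayer` and its parameters are hypothetical and are not taken from the paper.

```python
import numpy as np


class BayesianLastLayer:
    """Bayesian linear regression over fixed features (illustrative only)."""

    def __init__(self, dim, prior_var=1.0, noise_var=0.1):
        self.precision = np.eye(dim) / prior_var  # posterior precision matrix
        self.b = np.zeros(dim)                    # precision-weighted mean
        self.noise_var = noise_var

    def update(self, phi, y):
        """Fold one (feature, target) pair into the posterior."""
        self.precision += np.outer(phi, phi) / self.noise_var
        self.b += phi * y / self.noise_var

    @property
    def mean(self):
        """Posterior mean of the last-layer weights."""
        return np.linalg.solve(self.precision, self.b)

    def predict(self, phi):
        """Return the predictive mean and variance for one feature vector."""
        mean = phi @ self.mean
        var = phi @ np.linalg.solve(self.precision, phi) + self.noise_var
        return mean, var
```

Each call to `update` is a closed-form posterior step, so adaptation needs no gradient descent; the predictive variance returned by `predict` shrinks as data from the new environment accumulates.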

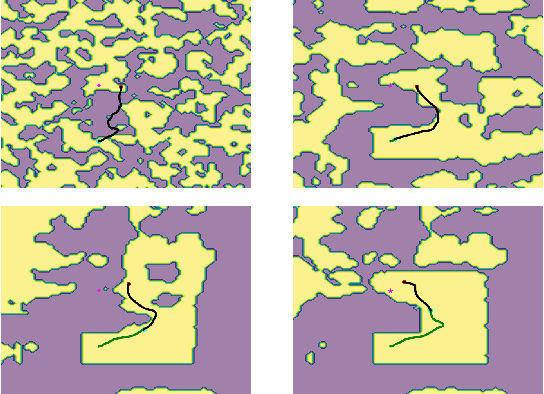

Motion Planning for a Climbing Robot with Stochastic Grasps

Sep 21, 2022

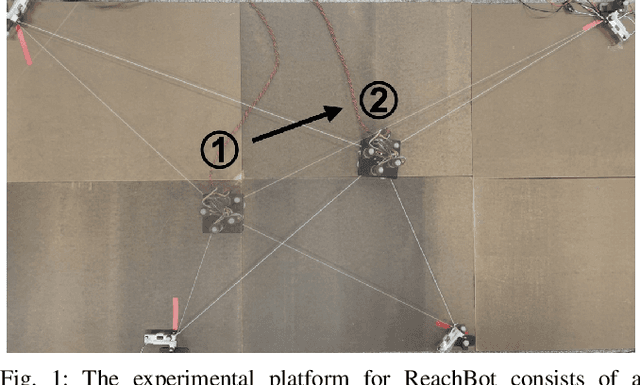

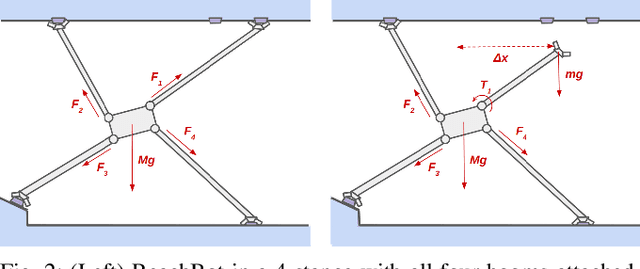

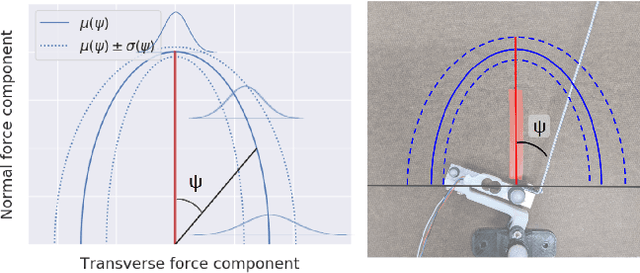

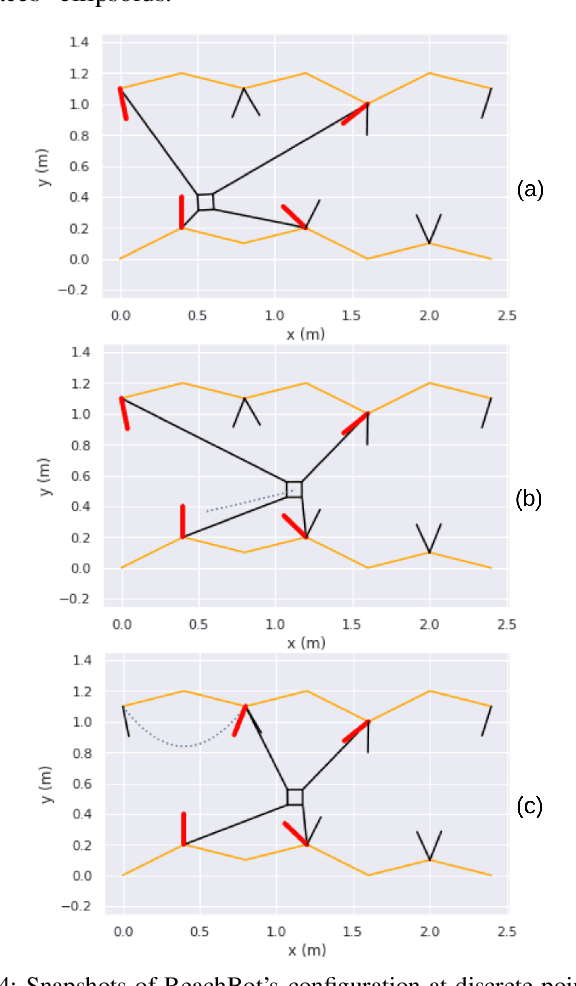

Abstract: Motion planning for a multi-limbed climbing robot must consider the robot's posture, joint torques, and how it uses contact forces to interact with its environment. This paper focuses on motion planning for a robot that uses nontraditional locomotion to explore unpredictable environments such as Martian caves. Our robotic concept, ReachBot, uses extendable and retractable booms as limbs to achieve a large reachable workspace while climbing. Each extendable boom is capped by a microspine gripper designed for grasping rocky surfaces. ReachBot leverages its large workspace to navigate around obstacles, over crevasses, and through challenging terrain. Our planning approach must be versatile to accommodate variable terrain features and robust to mitigate risks from the stochastic nature of grasping with spines. In this paper, we introduce a graph traversal algorithm to select a discrete sequence of grasps based on available terrain features suitable for grasping. This discrete plan is complemented by a decoupled motion planner that considers the alternating phases of body movement and end-effector movement, using a combination of sampling-based planning and sequential convex programming to optimize individual phases. We use our motion planner to plan a trajectory across a simulated 2D cave environment with at least 95% probability of success and demonstrate improved robustness over a baseline trajectory. Finally, we verify our motion planning algorithm through experimentation on a 2D planar prototype.
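One simple way to picture a graph traversal over stochastic grasps is a shortest-path search in which each edge carries a success probability. The sketch below assumes independent grasp outcomes and uses Dijkstra's algorithm on negative log-probabilities; it illustrates the general idea rather than the algorithm in the paper, and the function name and graph encoding are hypothetical.

```python
import heapq
import math


def most_reliable_grasp_sequence(edges, start, goal):
    """Find the path from start to goal with the highest success probability.

    `edges` maps a node to a list of (neighbor, success_probability) pairs.
    Assumption: grasp outcomes are independent, so the probability of a path
    is the product of its edge probabilities; minimizing the sum of
    -log(p) therefore maximizes that product.
    """
    best_cost = {start: 0.0}
    parent = {}
    queue = [(0.0, start)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == goal:
            break
        if cost > best_cost.get(node, math.inf):
            continue  # stale queue entry
        for neighbor, prob in edges.get(node, []):
            if prob <= 0.0:
                continue  # a grasp that can never succeed is unusable
            new_cost = cost - math.log(prob)
            if new_cost < best_cost.get(neighbor, math.inf):
                best_cost[neighbor] = new_cost
                parent[neighbor] = node
                heapq.heappush(queue, (new_cost, neighbor))
    if goal not in best_cost:
        return None, 0.0
    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    path.reverse()
    return path, math.exp(-best_cost[goal])
```

A planner could compare the returned probability against a threshold such as the 95% figure quoted in the abstract and reject plans that fall below it.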

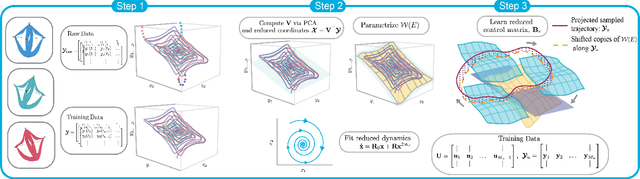

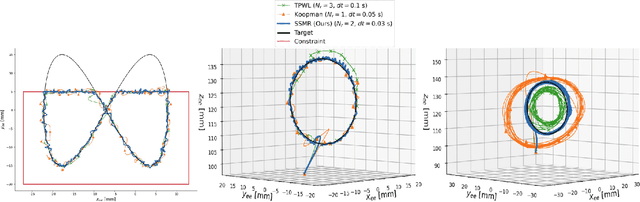

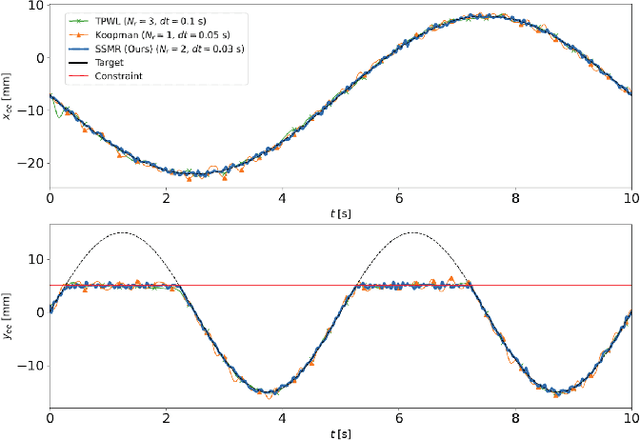

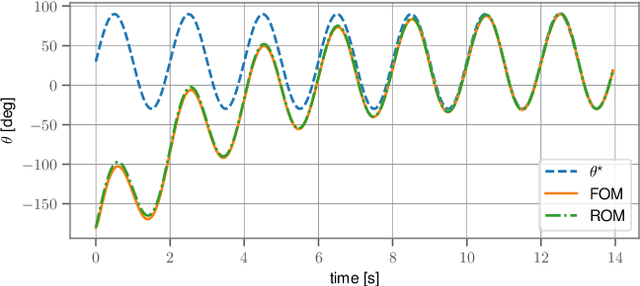

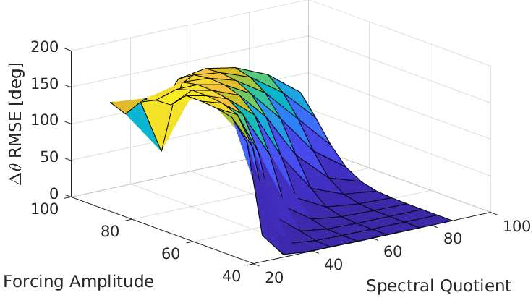

Data-Driven Spectral Submanifold Reduction for Nonlinear Optimal Control of High-Dimensional Robots

Sep 20, 2022

Abstract: Modeling and control of high-dimensional, nonlinear robotic systems remains a challenging task. While various model- and learning-based approaches have been proposed to address these challenges, they broadly lack generalizability to different control tasks and rarely preserve the structure of the dynamics. In this work, we propose a new, data-driven approach for extracting low-dimensional models from data using Spectral Submanifold Reduction (SSMR). In contrast to other data-driven methods which fit dynamical models to training trajectories, we identify the dynamics on generic, low-dimensional attractors embedded in the full phase space of the robotic system. This allows us to obtain computationally-tractable models for control which preserve the system's dominant dynamics and better track trajectories radically different from the training data. We demonstrate the superior performance and generalizability of SSMR in dynamic trajectory tracking tasks vis-a-vis the state of the art, including Koopman operator-based approaches.

AdvDO: Realistic Adversarial Attacks for Trajectory Prediction

Sep 19, 2022

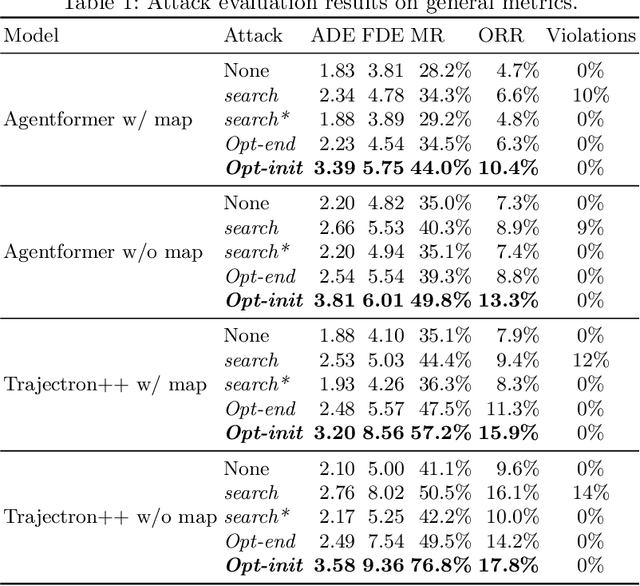

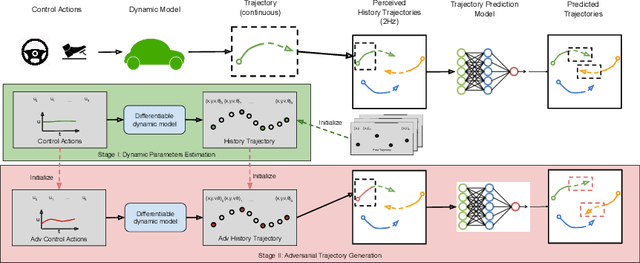

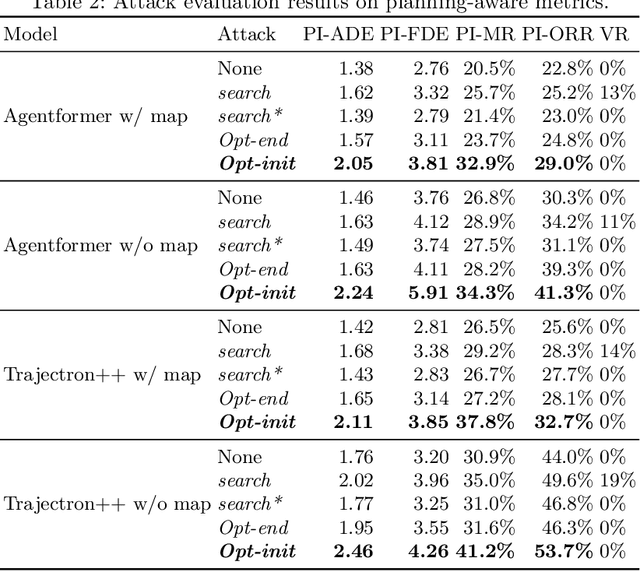

Abstract: Trajectory prediction is essential for autonomous vehicles (AVs) to plan correct and safe driving behaviors. While many prior works aim to achieve higher prediction accuracy, few study the adversarial robustness of their methods. To bridge this gap, we propose to study the adversarial robustness of data-driven trajectory prediction systems. We devise an optimization-based adversarial attack framework that leverages a carefully designed differentiable dynamic model to generate realistic adversarial trajectories. Empirically, we benchmark the adversarial robustness of state-of-the-art prediction models and show that our attack increases the prediction error for general metrics and planning-aware metrics by more than 50% and 37%, respectively. We also show that our attack can lead an AV to drive off road or collide with other vehicles in simulation. Finally, we demonstrate how to mitigate the adversarial attacks using an adversarial training scheme.

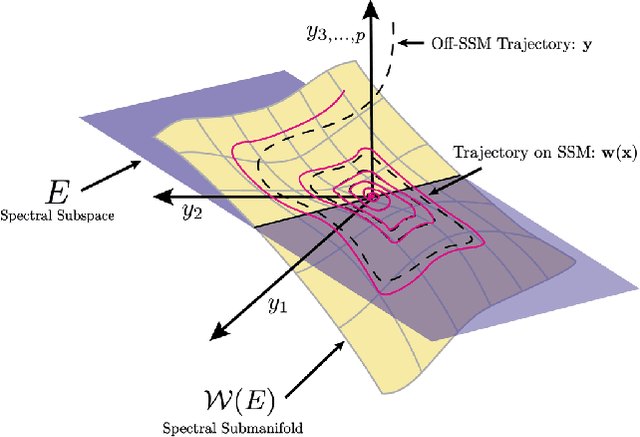

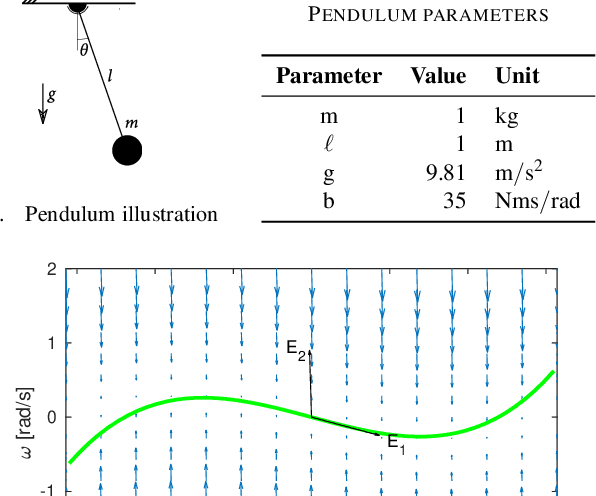

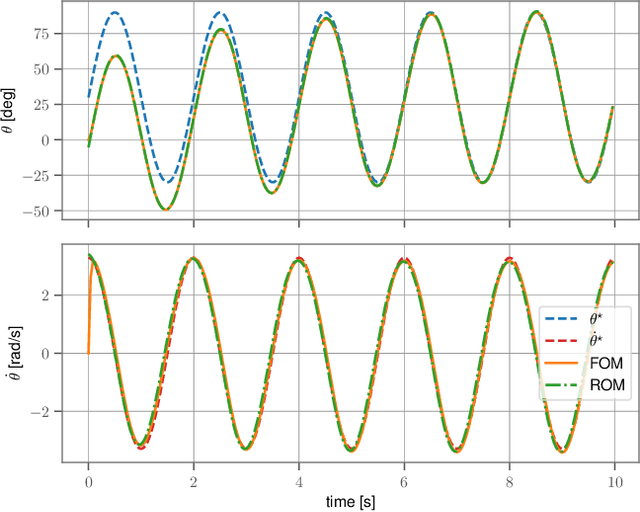

Using Spectral Submanifolds for Nonlinear Periodic Control

Sep 14, 2022

Abstract: Very high-dimensional nonlinear systems arise in many engineering problems due to semi-discretization of the governing partial differential equations, e.g., through finite element methods. The complexity of these systems presents computational challenges for direct application to automatic control. While model reduction has seen ubiquitous applications in control, the use of nonlinear model reduction methods in this setting remains difficult. The problem lies in preserving the structure of the nonlinear dynamics in the reduced-order model for high-fidelity control. In this work, we leverage recent advances in Spectral Submanifold (SSM) theory to enable model reduction under well-defined assumptions for the purpose of efficiently synthesizing feedback controllers.

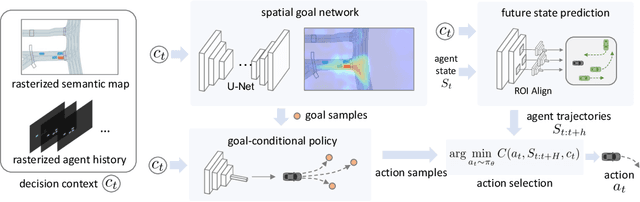

BITS: Bi-level Imitation for Traffic Simulation

Aug 26, 2022

Abstract: Simulation is the key to scaling up validation and verification for robotic systems such as autonomous vehicles. Despite advances in high-fidelity physics and sensor simulation, a critical gap remains in simulating realistic behaviors of road users. This is because, unlike simulating physics and graphics, devising first-principles models for human-like behaviors is generally infeasible. In this work, we take a data-driven approach and propose a method that can learn to generate traffic behaviors from real-world driving logs. The method achieves high sample efficiency and behavior diversity by exploiting the bi-level hierarchy of driving behaviors, decoupling the traffic simulation problem into high-level intent inference and low-level driving behavior imitation. The method also incorporates a planning module to obtain stable long-horizon behaviors. We empirically validate our method, named Bi-level Imitation for Traffic Simulation (BITS), with scenarios from two large-scale driving datasets and show that BITS achieves balanced traffic simulation performance in realism, diversity, and long-horizon stability. We also explore ways to evaluate behavior realism and introduce a suite of evaluation metrics for traffic simulation. Finally, as part of our core contributions, we develop and open-source a software tool that unifies data formats across different driving datasets and converts scenes from existing datasets into interactive simulation environments. For additional information and videos, see https://sites.google.com/view/nvr-bits2022/home

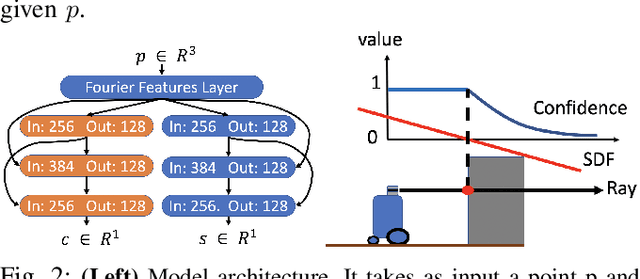

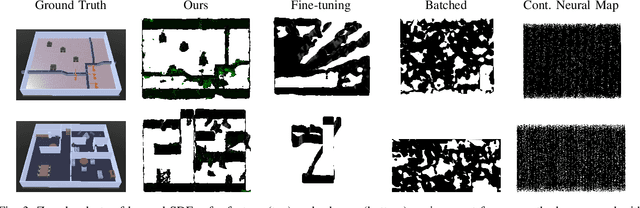

Learning Deep SDF Maps Online for Robot Navigation and Exploration

Aug 02, 2022

Abstract: We propose an algorithm to (i) learn online a deep signed distance function (SDF) with a LiDAR-equipped robot to represent the 3D environment geometry, and (ii) plan collision-free trajectories given this deep learned map. Our algorithm takes a stream of incoming LiDAR scans and continually optimizes a neural network to represent the SDF of the environment in its current vicinity. When the SDF network quality saturates, we cache a copy of the network, along with a learned confidence metric, and initialize a new SDF network to continue mapping new regions of the environment. We then concatenate all the cached local SDFs through a confidence-weighted scheme to give a global SDF for planning. For planning, we make use of a sequential convex model predictive control (MPC) algorithm. The MPC planner optimizes a dynamically feasible trajectory for the robot while enforcing no collisions with obstacles mapped in the global SDF. We show that our online mapping algorithm produces higher-quality maps than existing methods for online SDF training. In the Webots simulator, we further showcase the combined mapper and planner running online, navigating autonomously and without collisions in an unknown environment.
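The abstract does not spell out the confidence-weighted scheme used to merge cached local SDFs. One plausible reading, sketched here purely as an assumption, is a per-point weighted average in which each local map contributes in proportion to its confidence at the query point; the function name `blend_sdfs` and the callable interface are hypothetical.

```python
import numpy as np


def blend_sdfs(query, local_sdfs):
    """Combine local SDFs into one global estimate at the query points.

    `query` has shape (N, 3). `local_sdfs` is a list of
    (sdf_fn, confidence_fn) pairs, each mapping (N, 3) -> (N,).
    Assumption: the global SDF is a confidence-weighted average of the
    local predictions; points with zero total confidence return +inf.
    """
    query = np.asarray(query, dtype=float)
    weighted_sum = np.zeros(len(query))
    total_weight = np.zeros(len(query))
    for sdf_fn, confidence_fn in local_sdfs:
        weight = np.clip(confidence_fn(query), 0.0, None)  # no negative weights
        weighted_sum += weight * sdf_fn(query)
        total_weight += weight
    blended = weighted_sum / np.maximum(total_weight, 1e-12)
    return np.where(total_weight > 0.0, blended, np.inf)
```

Returning infinity where no local map is confident is one possible convention; a planner that must stay conservative in unmapped regions might instead prefer a small or negative default distance there.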

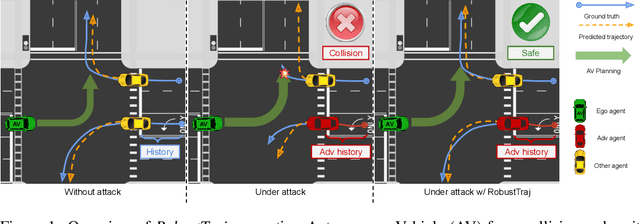

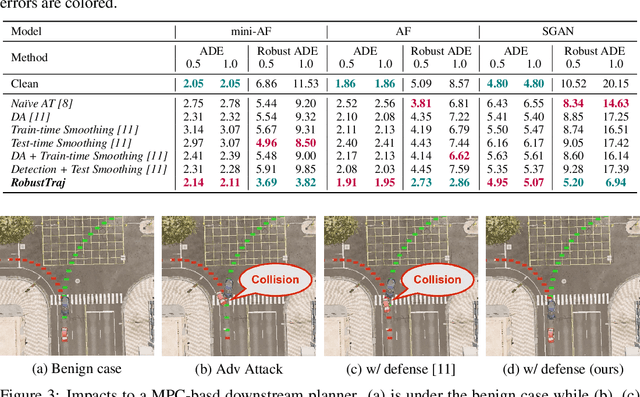

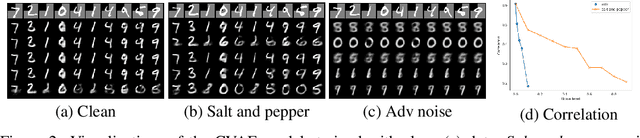

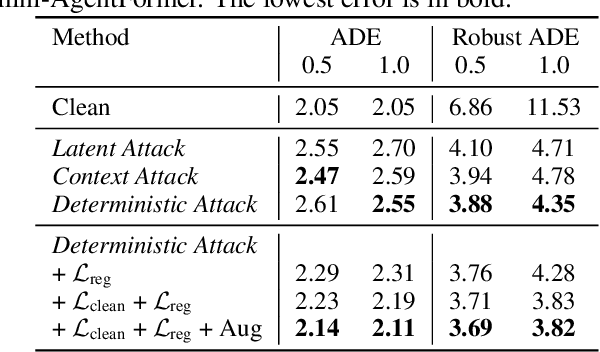

Robust Trajectory Prediction against Adversarial Attacks

Jul 29, 2022

Abstract: Trajectory prediction using deep neural networks (DNNs) is an essential component of autonomous driving (AD) systems. However, these methods are vulnerable to adversarial attacks, leading to serious consequences such as collisions. In this work, we identify two key ingredients for defending trajectory prediction models against adversarial attacks: (1) designing effective adversarial training methods and (2) adding domain-specific data augmentation to mitigate the performance degradation on clean data. We demonstrate that our method improves performance by 46% on adversarial data at the cost of only 3% performance degradation on clean data, compared to the model trained with clean data. Additionally, compared to existing robust methods, our method can improve performance by 21% on adversarial examples and 9% on clean data. Our robust model is evaluated with a planner to study its downstream impacts. We demonstrate that our model can significantly reduce the rate of severe accidents (e.g., collisions and off-road driving).
