Juan Nieto

ETH Zürich

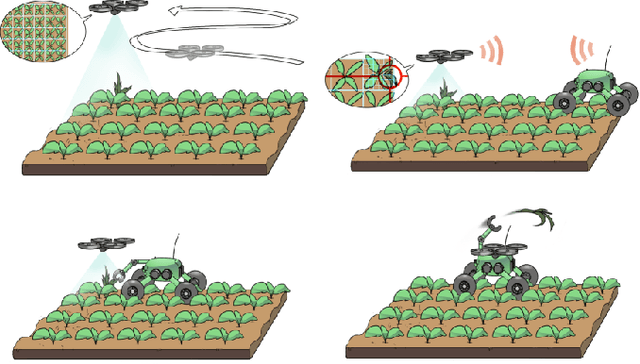

Building an Aerial-Ground Robotics System for Precision Farming

Nov 08, 2019

Abstract: The application of autonomous robots in agriculture is becoming increasingly popular thanks to the high impact it may have on food security, sustainability, resource use efficiency, reduction of chemical treatments, minimization of human effort, and maximization of yield. The Flourish research project faced this challenge by developing an adaptable robotic solution for precision farming that combines the aerial survey capabilities of small autonomous unmanned aerial vehicles (UAVs) with flexible targeted intervention performed by multi-purpose agricultural unmanned ground vehicles (UGVs). This paper presents an exhaustive overview of the scientific and technological advances and outcomes obtained in the Flourish project. We introduce multi-spectral perception algorithms and aerial and ground-based systems developed to monitor crop density, weed pressure, and crop nitrogen nutrition status, and to accurately classify and locate weeds. We then introduce the navigation and mapping systems that deal with the specificity of the employed robots and of the agricultural environment, highlighting the collaborative modules that enable the UAVs and UGVs to collect and share information in a unified environment model. We finally present the ground intervention hardware, software solutions, and interfaces we implemented and tested in different field conditions and with different crops. We describe here a real use case in which a UAV collaborates with a UGV to monitor the field and to perform selective spraying treatments fully autonomously.

SegMap: Segment-based mapping and localization using data-driven descriptors

Sep 27, 2019

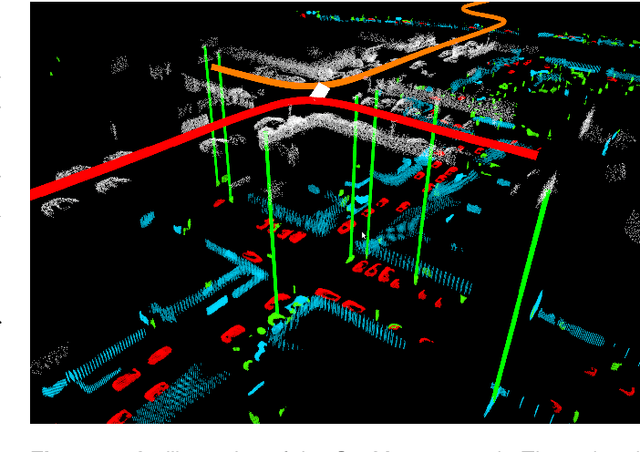

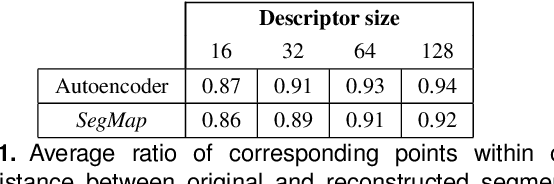

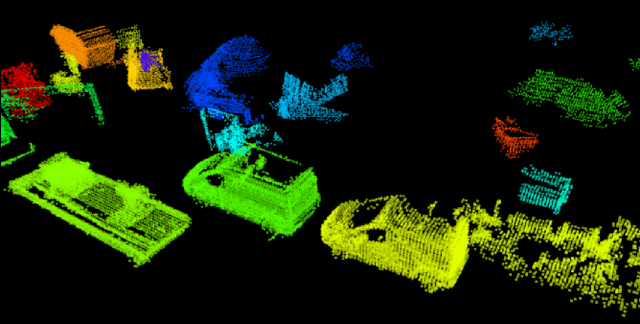

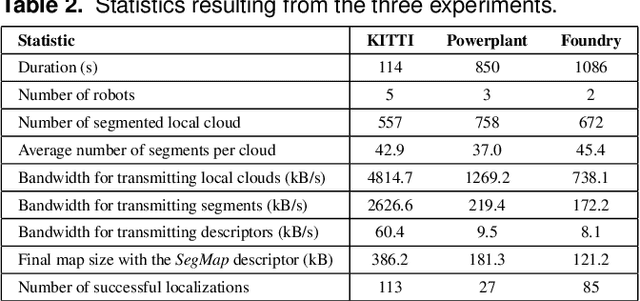

Abstract: Precisely estimating a robot's pose in a prior, global map is a fundamental capability for mobile robotics, e.g. autonomous driving or exploration in disaster zones. This task, however, remains challenging in unstructured, dynamic environments, where local features are not discriminative enough and global scene descriptors only provide coarse information. We therefore present SegMap: a map representation solution for localization and mapping based on the extraction of segments in 3D point clouds. Working at the level of segments offers increased invariance to view-point and local structural changes, and facilitates real-time processing of large-scale 3D data. SegMap exploits a single compact data-driven descriptor for performing multiple tasks: global localization, 3D dense map reconstruction, and semantic information extraction. The performance of SegMap is evaluated in multiple urban driving and search and rescue experiments. We show that the learned SegMap descriptor has superior segment retrieval capabilities compared to state-of-the-art handcrafted descriptors. As a consequence, we achieve a higher localization accuracy and a 6% increase in recall over the state of the art. These segment-based localizations allow us to reduce the open-loop odometry drift by up to 50%. SegMap is available as open source, along with easy-to-run demonstrations.

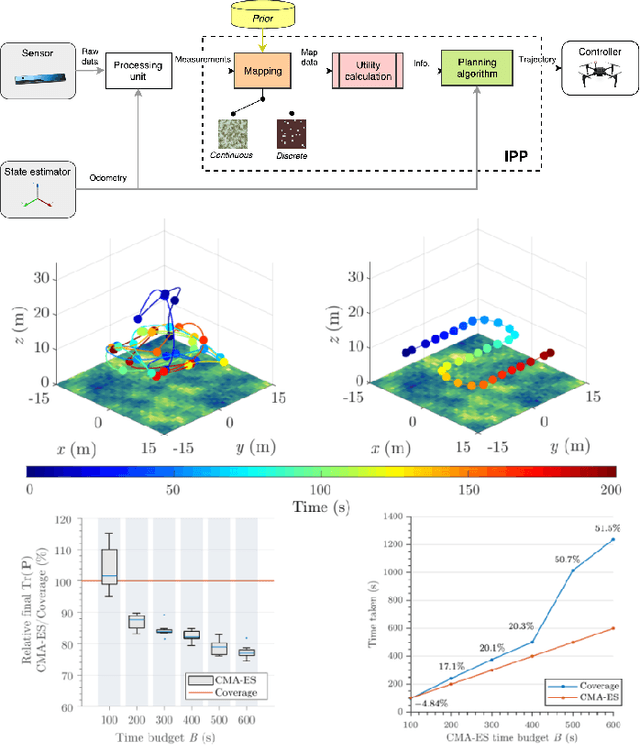

An Efficient Sampling-based Method for Online Informative Path Planning in Unknown Environments

Sep 20, 2019

Abstract: The ability to plan informative paths online is essential to robot autonomy. In particular, sampling-based approaches are often used as they are capable of using arbitrary information gain formulations. However, they are prone to local minima, resulting in sub-optimal trajectories, and sometimes do not reach global coverage. In this paper, we present a new RRT*-inspired online informative path planning algorithm. Our method continuously expands a single tree of candidate trajectories and rewires segments to maintain the tree and refine intermediate trajectories. This allows the algorithm to achieve global coverage and maximize the utility of a path in a global context, using a single objective function. We demonstrate the algorithm's capabilities in the applications of autonomous indoor exploration as well as accurate Truncated Signed Distance Field (TSDF)-based 3D reconstruction on-board a Micro Aerial Vehicle (MAV). We study the impact of commonly used information gain and cost formulations in these scenarios and propose a novel TSDF-based 3D reconstruction gain and cost-utility formulation. Detailed evaluation in realistic simulation environments shows that our approach outperforms state-of-the-art methods in these tasks. Experiments on a real MAV demonstrate the ability of our method to robustly plan in real-time, exploring an indoor environment solely with on-board sensing and computation. We make our framework available for future research.

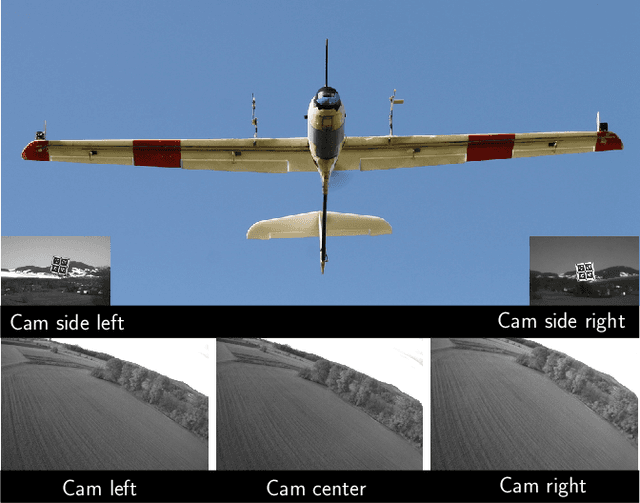

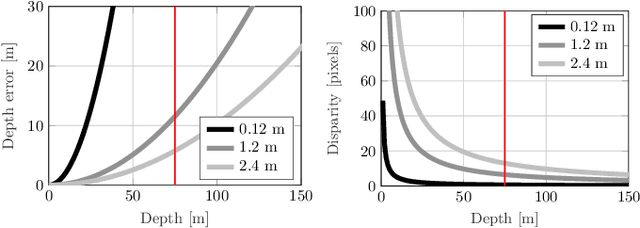

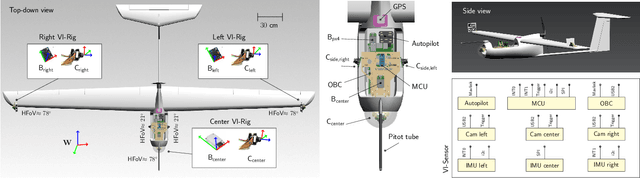

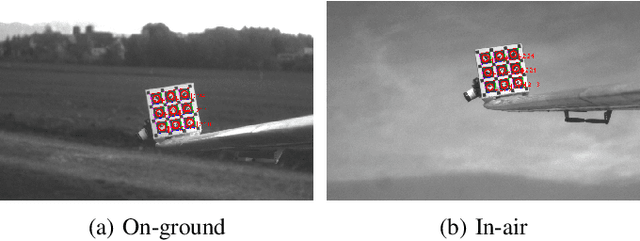

Flexible Trinocular: Non-rigid Multi-Camera-IMU Dense Reconstruction for UAV Navigation and Mapping

Aug 23, 2019

Abstract: In this paper, we propose a visual-inertial framework able to efficiently estimate the camera poses of a non-rigid trinocular baseline for long-range depth estimation on-board a fast moving aerial platform. The estimation of the time-varying baseline is based on relative inertial measurements, a photometric relative pose optimizer, and a probabilistic wing model fused in an efficient Extended Kalman Filter (EKF) formulation. The estimated depth measurements can be integrated into a geo-referenced global map to render a reconstruction of the environment useful for local replanning algorithms. Based on extensive real-world experiments, we describe the challenges and solutions for obtaining the probabilistic wing model, reliable relative inertial measurements, and vision-based relative pose updates, and demonstrate the computational efficiency and robustness of the overall system under challenging conditions.

Free-Space Features: Global Localization in 2D Laser SLAM Using Distance Function Maps

Aug 05, 2019

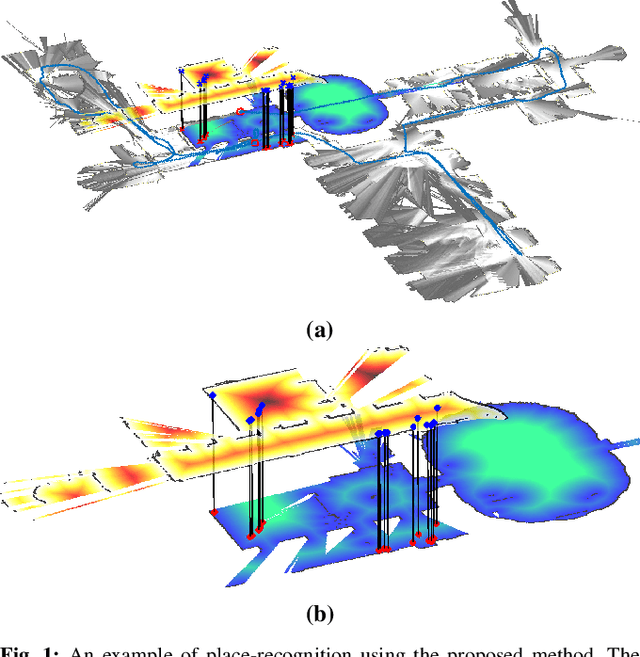

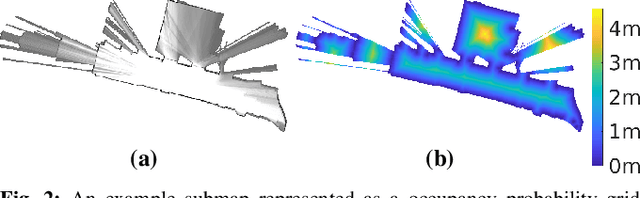

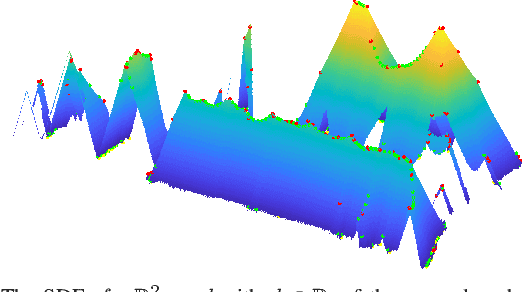

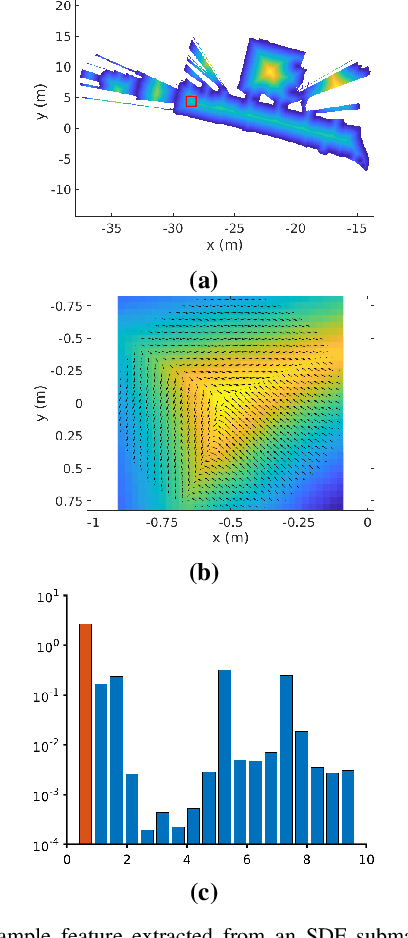

Abstract: In many applications, maintaining a consistent map of the environment is key to enabling robotic platforms to perform higher-level decision making. Detection of already visited locations is one of the primary ways in which map consistency is maintained, especially in situations where external positioning systems are unavailable or unreliable. Mapping in 2D is an important field in robotics, largely due to the fact that man-made environments such as warehouses and homes, where robots are expected to play an increasing role, can often be approximated as planar. Place recognition in this context remains challenging: 2D lidar scans contain scant information with which to characterize, and therefore recognize, a location. This paper introduces a novel approach aimed at addressing this problem. At its core, the system relies on the use of the distance function for representation of geometry. This representation allows extraction of features which describe the geometry of both surfaces and free-space in the environment. We propose a feature for this purpose. Through evaluations on public datasets, we demonstrate the utility of free-space in the description of places, and show an increase in localization performance over a state-of-the-art descriptor extracted from surface geometry.
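To make the idea of a distance function map concrete, here is a minimal sketch, not the authors' implementation. It assumes a binary 2D occupancy grid and computes, by brute force, the unsigned Euclidean distance from every cell to the nearest occupied cell. The function name `distance_map` and the toy `room` grid are hypothetical and chosen only for illustration.

```python
import math


def distance_map(grid):
    """Distance (in cells) from every cell to the nearest occupied cell.

    `grid` is a list of rows; a truthy value marks an occupied cell.
    Brute force over all occupied cells, so only suitable for small grids.
    """
    occupied = [
        (r, c)
        for r, row in enumerate(grid)
        for c, value in enumerate(row)
        if value
    ]
    if not occupied:
        raise ValueError("grid has no occupied cells")
    return [
        [
            min(math.hypot(r - orow, c - ocol) for orow, ocol in occupied)
            for c in range(len(row))
        ]
        for r, row in enumerate(grid)
    ]


# Toy 5x5 room (assumed data): walls on the border, free space inside.
room = [
    [1, 1, 1, 1, 1],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [1, 1, 1, 1, 1],
]
dist = distance_map(room)
```

In this toy grid the distance is zero on the walls and peaks at the centre of the room, which hints at why such a map carries information about free space as well as about surfaces.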

Revisiting Boustrophedon Coverage Path Planning as a Generalized Traveling Salesman Problem

Jul 22, 2019

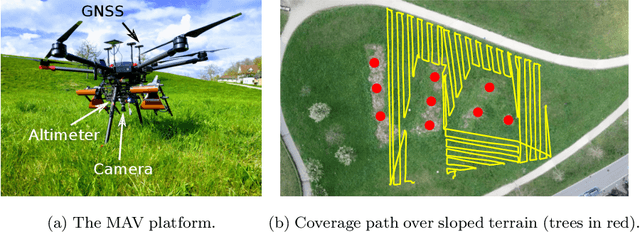

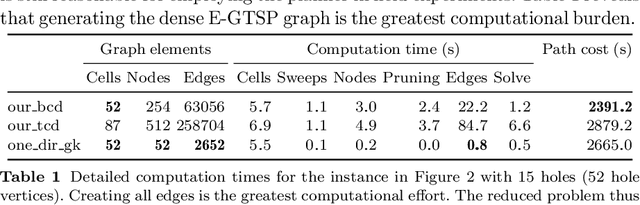

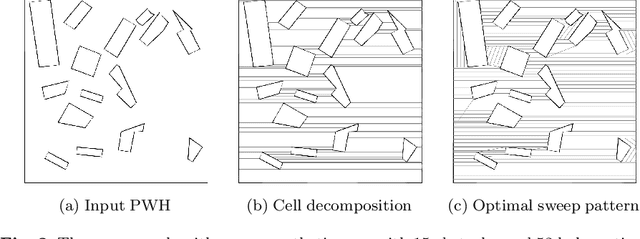

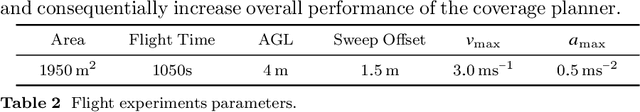

Abstract: In this paper, we present a path planner for low-altitude terrain coverage in known environments with unmanned rotary-wing micro aerial vehicles (MAVs). Airborne systems can assist humanitarian demining by surveying suspected hazardous areas (SHAs) with cameras, ground-penetrating synthetic aperture radar (GPSAR), and metal detectors. Most available coverage planner implementations for MAVs do not consider obstacles and thus cannot be deployed in obstructed environments. We describe an open source framework to perform coverage planning in polygon flight corridors with obstacles. Our planner extends boustrophedon coverage planning by optimizing over different sweep combinations to find the optimal sweep path, and considers obstacles during transition flights between cells. We evaluate the path planner on 320 synthetic maps and show that it is able to solve realistic planning instances fast enough to run in the field. The planner achieves 14% lower path costs than a conventional coverage planner. We validate the planner on a real platform where we show low-altitude coverage over a sloped terrain with trees.
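For readers unfamiliar with boustrophedon coverage, the following is a minimal sketch of the basic back-and-forth sweep only, assuming an obstacle-free, axis-aligned rectangle. It is not the planner described in the abstract, which additionally handles polygons with obstacles and optimizes over sweep combinations. The names `boustrophedon_sweep` and `path_length` are hypothetical.

```python
import math


def boustrophedon_sweep(width, height, spacing):
    """Waypoints of a back-and-forth sweep over [0, width] x [0, height].

    Sweep lines run along x and are `spacing` apart in y; the direction
    alternates on every line so consecutive lines connect at their ends.
    """
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    n_lines = int(math.floor(height / spacing + 1e-9)) + 1
    waypoints = []
    for i in range(n_lines):
        y = i * spacing
        if i % 2 == 0:
            start_x, end_x = 0.0, width   # even lines: left to right
        else:
            start_x, end_x = width, 0.0   # odd lines: right to left
        waypoints.append((start_x, y))
        waypoints.append((end_x, y))
    return waypoints


def path_length(waypoints):
    """Total Euclidean length of the polyline through the waypoints."""
    return sum(
        math.hypot(x1 - x0, y1 - y0)
        for (x0, y0), (x1, y1) in zip(waypoints, waypoints[1:])
    )


path = boustrophedon_sweep(width=10.0, height=4.0, spacing=2.0)
cost = path_length(path)
```

A path length such as `cost` is one simple quantity a planner could compare across candidate sweeps; the actual cost model used in the paper is not given in the abstract.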

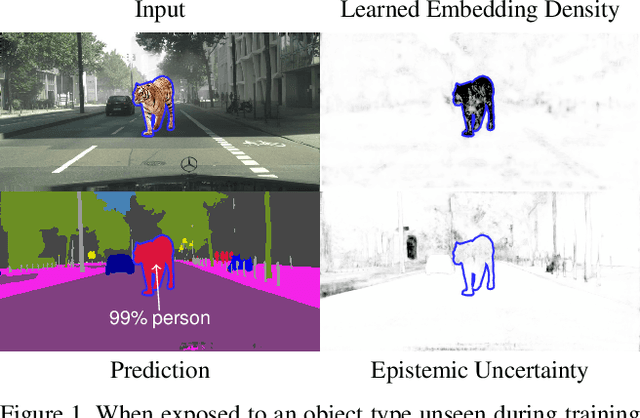

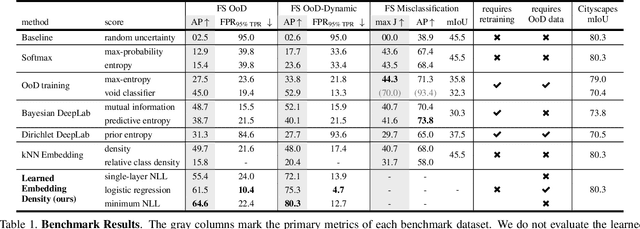

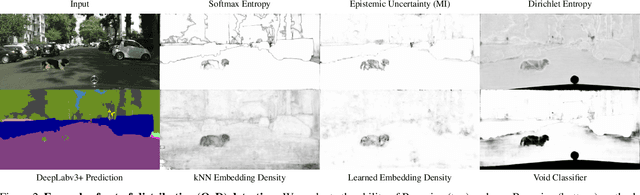

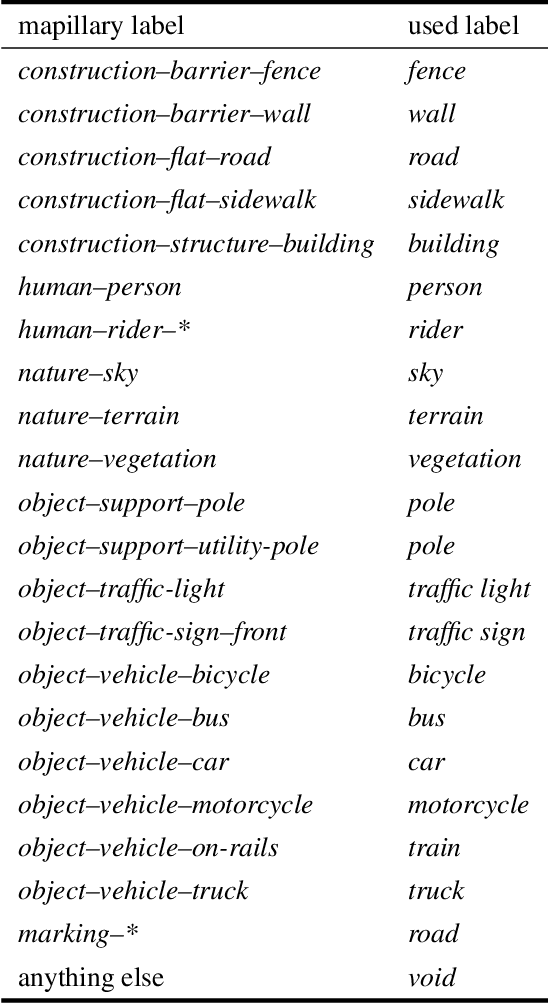

The Fishyscapes Benchmark: Measuring Blind Spots in Semantic Segmentation

May 13, 2019

Abstract: Deep learning has enabled impressive progress in the accuracy of semantic segmentation. Yet, the ability to estimate uncertainty and detect failure is key for safety-critical applications like autonomous driving. Existing uncertainty estimates have mostly been evaluated on simple tasks, and it is unclear whether these methods generalize to more complex scenarios. We present Fishyscapes, the first public benchmark for uncertainty estimation in a real-world task of semantic segmentation for urban driving. It evaluates pixel-wise uncertainty estimates and covers the detection of both out-of-distribution objects and misclassifications. We adapt state-of-the-art methods to recent semantic segmentation models and compare approaches based on softmax confidence, Bayesian learning, and embedding density. A thorough evaluation of these methods reveals a clear gap to their alleged capabilities. Our results show that failure detection is far from solved even for ordinary situations, while our benchmark allows measuring advancements beyond the state-of-the-art.
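As an illustration of the simplest family mentioned above, softmax confidence, here is a minimal sketch of a per-pixel uncertainty score. It assumes that the score is one minus the maximum softmax probability, which is one common choice; the abstract does not state which exact score the benchmark's baselines use. The function names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of class logits."""
    shift = max(logits)
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_uncertainty(logits):
    """Uncertainty of one pixel: 1 minus the highest class probability."""
    return 1.0 - max(softmax(logits))


# Assumed example logits for two pixels and three classes.
ambiguous_pixel = softmax_uncertainty([0.0, 0.0, 0.0])
confident_pixel = softmax_uncertainty([10.0, 0.0, 0.0])
```

A pixel whose logits are all equal receives the highest possible score for three classes, while a pixel dominated by a single class receives a score close to zero.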

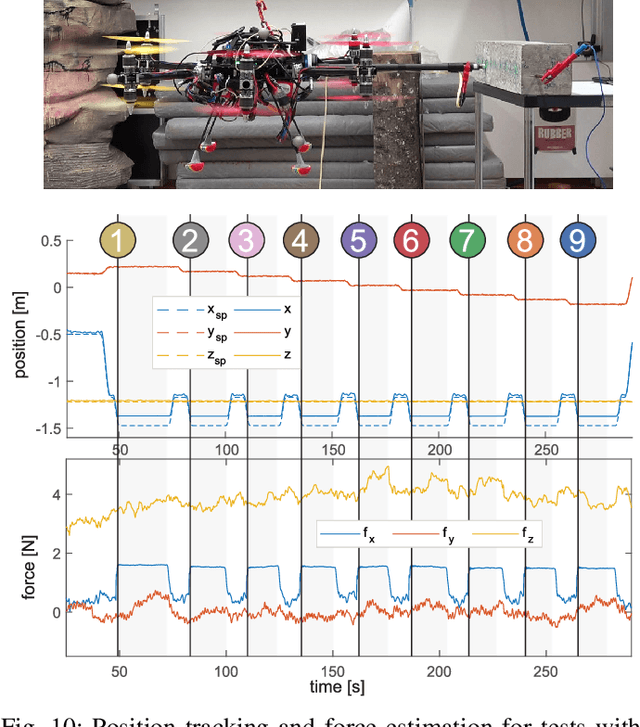

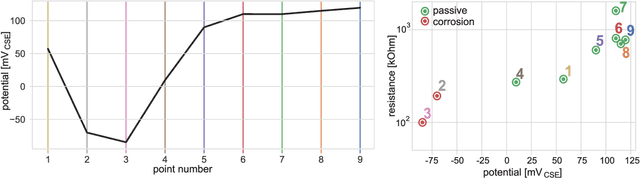

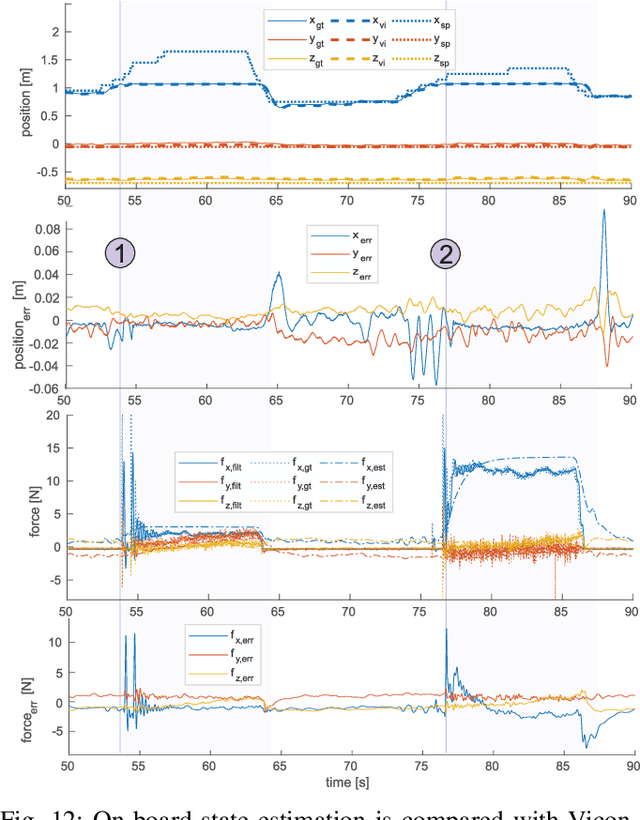

An Omnidirectional Aerial Manipulation Platform for Contact-Based Inspection

May 09, 2019

Abstract: This paper presents an omnidirectional aerial manipulation platform for robust and responsive interaction with unstructured environments, toward the goal of contact-based inspection. The fully actuated tilt-rotor aerial system is equipped with a rigidly mounted end-effector, and is able to exert a 6-degree-of-freedom force and torque, decoupling the system's translational and rotational dynamics, and enabling precise interaction with the environment while maintaining stability. An impedance controller with selective apparent inertia is formulated to permit compliance in certain degrees of freedom while achieving precise trajectory tracking and disturbance rejection in others. Experiments demonstrate disturbance rejection, push-and-slide interaction, and on-board state estimation with depth servoing to interact with local surfaces. The system is also validated as a tool for contact-based non-destructive testing of concrete infrastructure.

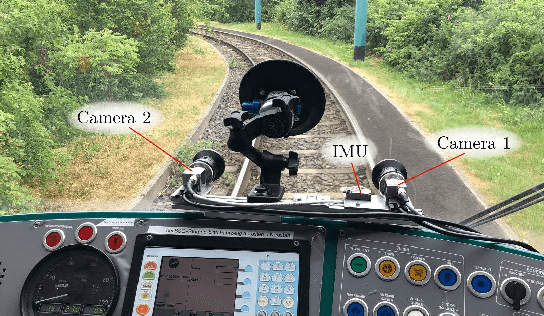

Experimental Comparison of Visual-Aided Odometry Methods for Rail Vehicles

Apr 01, 2019

Abstract: Today, rail vehicle localization is based on infrastructure-side Balises (beacons) together with on-board odometry to determine whether a rail segment is occupied. Such a coarse locking leads to a sub-optimal usage of the rail networks. New railway standards propose the use of moving blocks centered around the rail vehicles to increase the capacity of the network. However, this approach requires accurate and robust position and velocity estimation of all vehicles. In this work, we investigate the applicability, challenges and limitations of current visual and visual-inertial motion estimation frameworks for rail applications. An evaluation against RTK-GPS ground truth is performed on multiple datasets recorded in industrial, suburban, and forest environments. Our results show that stereo visual-inertial odometry has great potential to provide precise motion estimation because of its complementing sensor modalities, and that it shows superior performance in challenging situations compared to other frameworks.

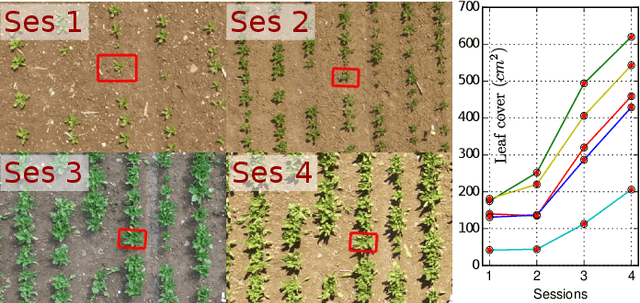

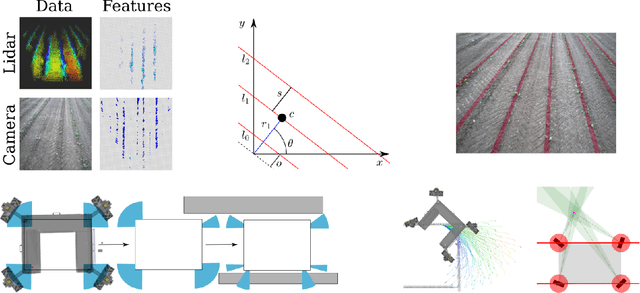

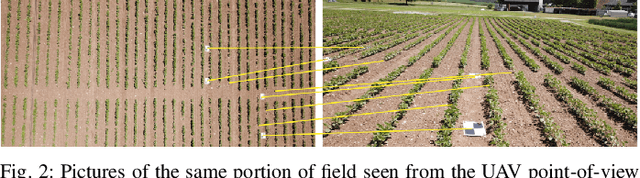

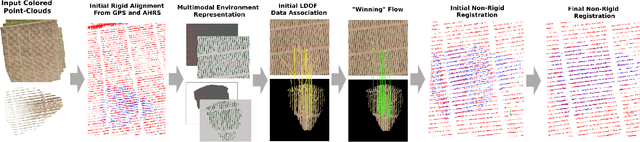

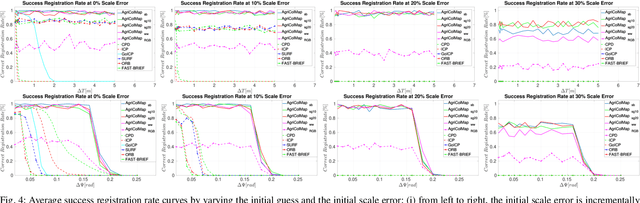

AgriColMap: Aerial-Ground Collaborative 3D Mapping for Precision Farming

Mar 14, 2019

Abstract: The combination of aerial survey capabilities of Unmanned Aerial Vehicles with targeted intervention abilities of agricultural Unmanned Ground Vehicles can significantly improve the effectiveness of robotic systems applied to precision agriculture. In this context, building and updating a common map of the field is an essential but challenging task. The maps built using robots of different types show differences in size, resolution and scale, the associated geolocation data may be inaccurate and biased, and the repetitiveness of both visual appearance and geometric structures found within agricultural contexts renders classical map merging techniques ineffective. In this paper we propose AgriColMap, a novel map registration pipeline that leverages a grid-based multimodal environment representation which includes a vegetation index map and a Digital Surface Model. We cast the data association problem between maps built from UAVs and UGVs as a multimodal, large displacement dense optical flow estimation. The dominant, coherent flows, selected using a voting scheme, are used as point-to-point correspondences to infer a preliminary non-rigid alignment between the maps. A final refinement is then performed by exploiting only meaningful parts of the registered maps. We evaluate our system using real-world data for 3 fields with different crop species. The results show that our method outperforms several state-of-the-art map registration and matching techniques by a large margin, and has a higher tolerance to large initial misalignments. We release an implementation of the proposed approach along with the acquired datasets with this paper.

* Published in IEEE Robotics and Automation Letters, 2019
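To illustrate what a grid-based vegetation index map can look like, here is a minimal sketch. The abstract does not name the index used, so this example assumes the Excess Green index (ExG) computed on brightness-normalized RGB values; the function names and the sample grid are hypothetical and are not taken from the released implementation.

```python
def excess_green(r, g, b):
    """Excess Green index on normalized chromatic coordinates.

    ExG = 2g' - r' - b', where r', g', b' are the channel values divided
    by their sum. Returns 0.0 for a black pixel to avoid dividing by zero.
    """
    total = r + g + b
    if total == 0:
        return 0.0
    return (2 * g - r - b) / total


def vegetation_index_map(rgb_grid):
    """Apply ExG to every (r, g, b) cell of a 2D grid."""
    return [[excess_green(*cell) for cell in row] for row in rgb_grid]


# Assumed 2x2 grid: pure green, grey, pure red, and black cells.
cells = [
    [(0, 255, 0), (100, 100, 100)],
    [(255, 0, 0), (0, 0, 0)],
]
index_map = vegetation_index_map(cells)
```

Green cells score high and grey or soil-like cells score near zero, so thresholding such a map gives a rough separation of vegetation from background.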
