Jean-Jacques Slotine

Generalization in Supervised Learning Through Riemannian Contraction

Jan 26, 2022

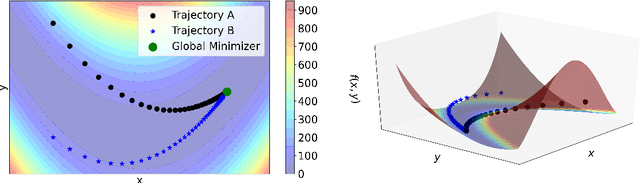

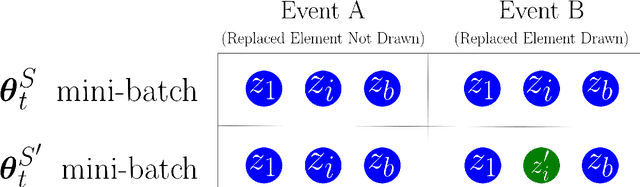

Abstract:We prove that Riemannian contraction in a supervised learning setting implies generalization. Specifically, we show that if an optimizer is contracting in some Riemannian metric with rate $\lambda > 0$, it is uniformly algorithmically stable with rate $\mathcal{O}(1/\lambda n)$, where $n$ is the number of labelled examples in the training set. The results hold for stochastic and deterministic optimization, in both continuous and discrete-time, for convex and non-convex loss surfaces. The associated generalization bounds reduce to well-known results in the particular case of gradient descent over convex or strongly convex loss surfaces. They can be shown to be optimal in certain linear settings, such as kernel ridge regression under gradient flow.

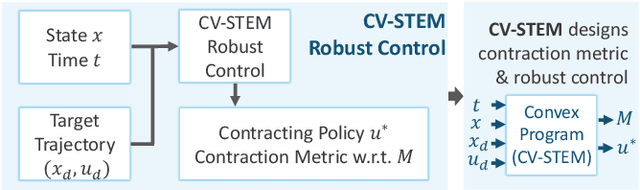

A Theoretical Overview of Neural Contraction Metrics for Learning-based Control with Guaranteed Stability

Oct 02, 2021

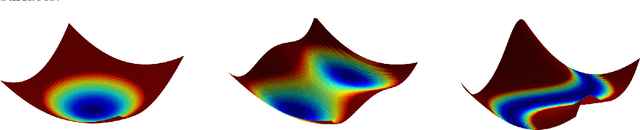

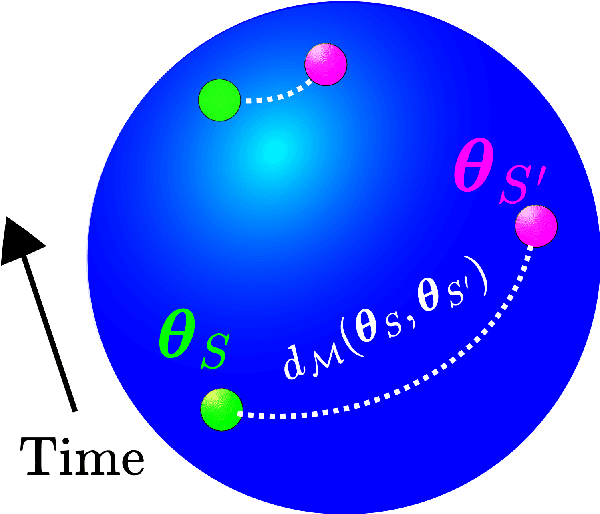

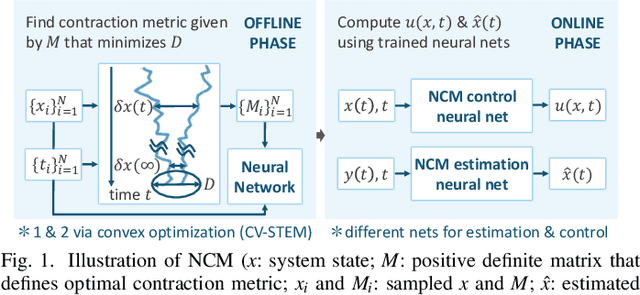

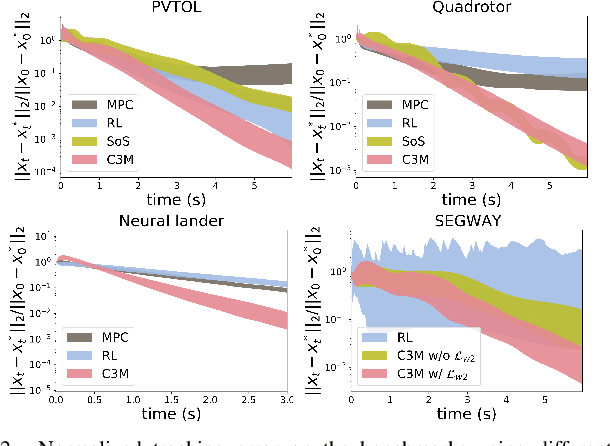

Abstract:This paper presents a theoretical overview of a Neural Contraction Metric (NCM): a neural network model of an optimal contraction metric and corresponding differential Lyapunov function, the existence of which is a necessary and sufficient condition for incremental exponential stability of non-autonomous nonlinear system trajectories. Its innovation lies in providing formal robustness guarantees for learning-based control frameworks, utilizing contraction theory as an analytical tool to study the nonlinear stability of learned systems via convex optimization. In particular, we rigorously show in this paper that, by regarding modeling errors of the learning schemes as external disturbances, the NCM control is capable of obtaining an explicit bound on the distance between a time-varying target trajectory and perturbed solution trajectories, which exponentially decreases with time even under the presence of deterministic and stochastic perturbation. These useful features permit simultaneous synthesis of a contraction metric and associated control law by a neural network, thereby enabling real-time computable and probably robust learning-based control for general control-affine nonlinear systems.

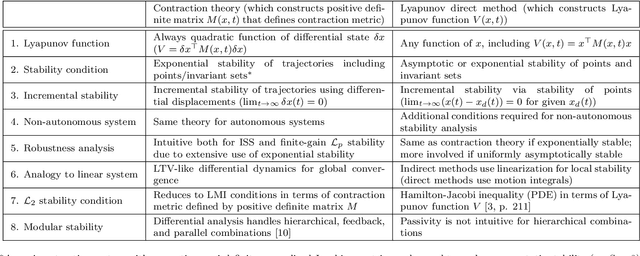

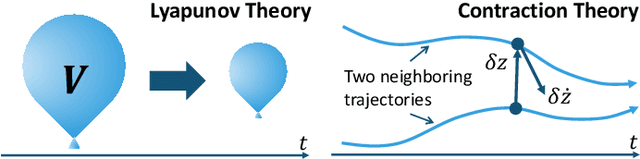

Contraction Theory for Nonlinear Stability Analysis and Learning-based Control: A Tutorial Overview

Oct 01, 2021

Abstract:Contraction theory is an analytical tool to study differential dynamics of a non-autonomous (i.e., time-varying) nonlinear system under a contraction metric defined with a uniformly positive definite matrix, the existence of which results in a necessary and sufficient characterization of incremental exponential stability of multiple solution trajectories with respect to each other. By using a squared differential length as a Lyapunov-like function, its nonlinear stability analysis boils down to finding a suitable contraction metric that satisfies a stability condition expressed as a linear matrix inequality, indicating that many parallels can be drawn between well-known linear systems theory and contraction theory for nonlinear systems. Furthermore, contraction theory takes advantage of a superior robustness property of exponential stability used in conjunction with the comparison lemma. This yields much-needed safety and stability guarantees for neural network-based control and estimation schemes, without resorting to a more involved method of using uniform asymptotic stability for input-to-state stability. Such distinctive features permit systematic construction of a contraction metric via convex optimization, thereby obtaining an explicit exponential bound on the distance between a time-varying target trajectory and solution trajectories perturbed externally due to disturbances and learning errors. The objective of this paper is therefore to present a tutorial overview of contraction theory and its advantages in nonlinear stability analysis of deterministic and stochastic systems, with an emphasis on deriving formal robustness and stability guarantees for various learning-based and data-driven automatic control methods. In particular, we provide a detailed review of techniques for finding contraction metrics and associated control and estimation laws using deep neural networks.

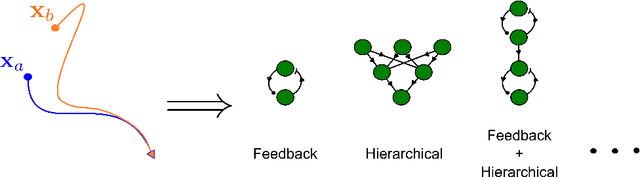

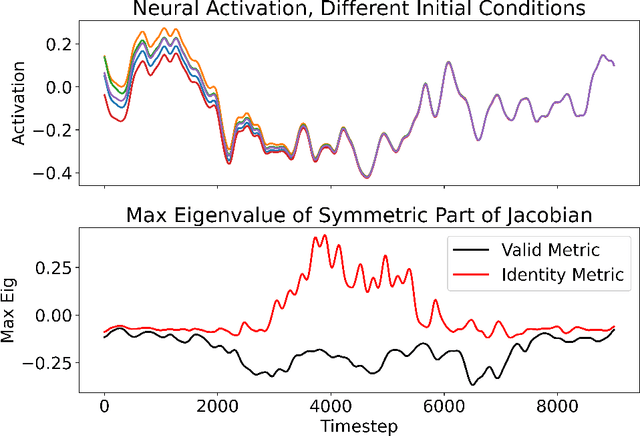

Recursive Construction of Stable Assemblies of Recurrent Neural Networks

Jun 16, 2021

Abstract:Advanced applications of modern machine learning will likely involve combinations of trained networks, as are already used in spectacular systems such as DeepMind's AlphaGo. Recursively building such combinations in an effective and stable fashion while also allowing for continual refinement of the individual networks - as nature does for biological networks - will require new analysis tools. This paper takes a step in this direction by establishing contraction properties of broad classes of nonlinear recurrent networks and neural ODEs, and showing how these quantified properties allow in turn to recursively construct stable networks of networks in a systematic fashion. The results can also be used to stably combine recurrent networks and physical systems with quantified contraction properties. Similarly, they may be applied to modular computational models of cognition.

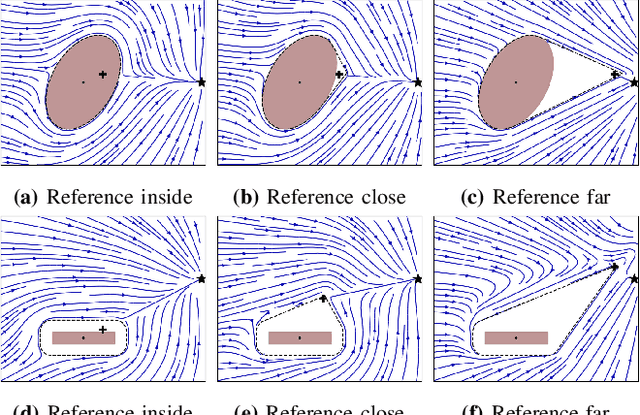

Avoiding Dense and Dynamic Obstacles in Enclosed Spaces: Application to Moving in Crowds

Jun 04, 2021

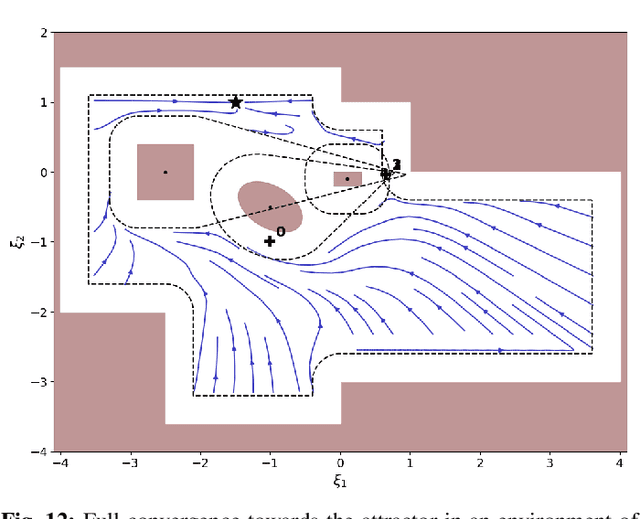

Abstract:This paper presents a closed-form approach to constrain a flow within a given volume and around objects. The flow is guaranteed to converge and to stop at a single fixed point. We show that the obstacle avoidance problem can be inverted to enforce that the flow remains enclosed within a volume defined by a polygonal surface. We formally guarantee that such a flow will never contact the boundaries of the enclosing volume and obstacles, and will asymptotically converge towards an attractor. We further create smooth motion fields around obstacles with edges (e.g. tables). Both obstacles and enclosures may be time-varying, i.e. moving, expanding and shrinking. The technique enables a robot to navigate within an enclosed corridor while avoiding static and moving obstacles. It was applied on an autonomous robot (QOLO) in a static complex indoor environment, and also tested in simulations with dense crowds. The final proof of concept was performed in an outdoor environment in Lausanne. The QOLO-robot successfully traversed a marketplace in the center of town in presence of a diverse crowd with a non-uniform motion pattern.

Dynamical Pose Estimation

Mar 11, 2021

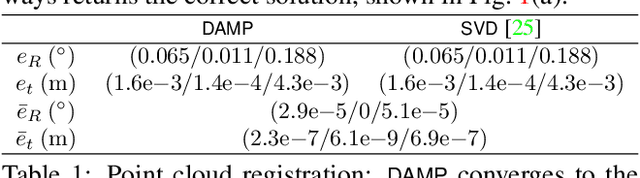

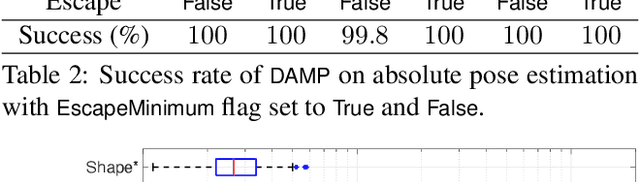

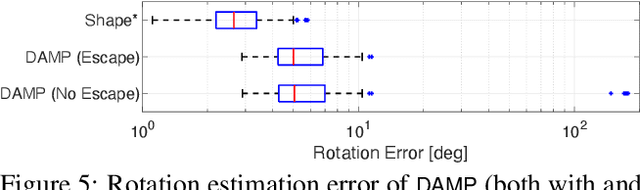

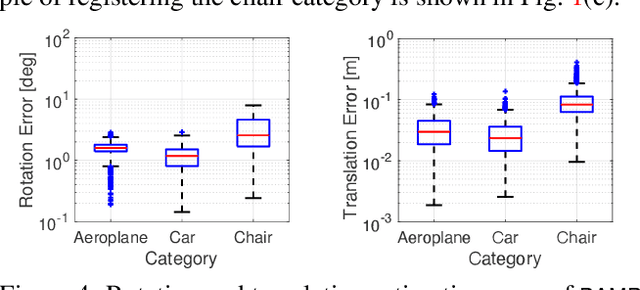

Abstract:We study the problem of aligning two sets of 3D geometric primitives given known correspondences. Our first contribution is to show that this primitive alignment framework unifies five perception problems including point cloud registration, primitive (mesh) registration, category-level 3D registration, absolution pose estimation (APE), and category-level APE. Our second contribution is to propose DynAMical Pose estimation (DAMP), the first general and practical algorithm to solve primitive alignment problem by simulating rigid body dynamics arising from virtual springs and damping, where the springs span the shortest distances between corresponding primitives. Our third contribution is to apply DAMP to the five perception problems in simulated and real datasets and demonstrate (i) DAMP always converges to the globally optimal solution in the first three problems with 3D-3D correspondences; (ii) although DAMP sometimes converges to suboptimal solutions in the last two problems with 2D-3D correspondences, with a simple scheme for escaping local minima, DAMP almost always succeeds. Our last contribution is to demystify the surprising empirical performance of DAMP and formally prove a global convergence result in the case of point cloud registration by charactering local stability of the equilibrium points of the underlying dynamical system.

Learning-based Adaptive Control via Contraction Theory

Mar 04, 2021

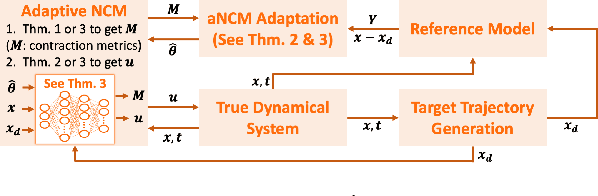

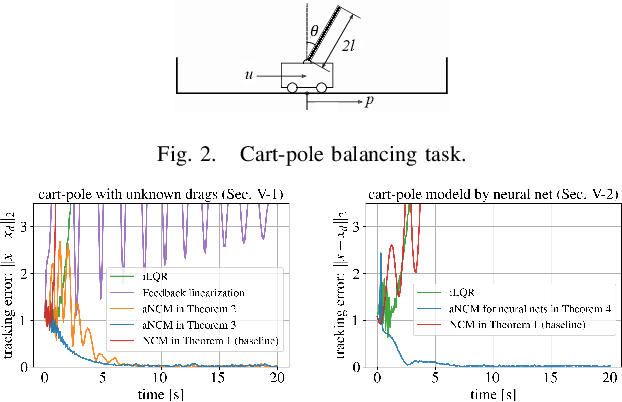

Abstract:We present a new deep learning-based adaptive control framework for nonlinear systems with multiplicatively-separable parametric uncertainty, called an adaptive Neural Contraction Metric (aNCM). The aNCM uses a neural network model of an optimal adaptive contraction metric, the existence of which guarantees asymptotic stability and exponential boundedness of system trajectories under the parametric uncertainty. In particular, we exploit the concept of a Neural Contraction Metric (NCM) to obtain a nominal provably stable robust control policy for nonlinear systems with bounded disturbances, and combine this policy with a novel adaptation law to achieve stability guarantees. We also show that the framework is applicable to adaptive control of dynamical systems modeled via basis function approximation. Furthermore, the use of neural networks in the aNCM permits its real-time implementation, resulting in broad applicability to a variety of systems. Its superiority to the state-of-the-art is illustrated with a simple cart-pole balancing task.

An Ode to an ODE

Jun 23, 2020

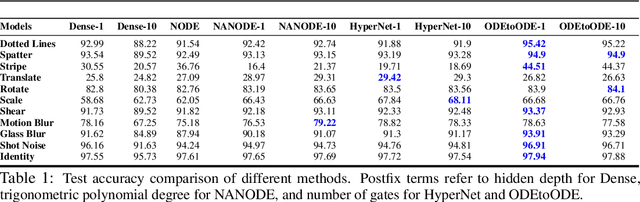

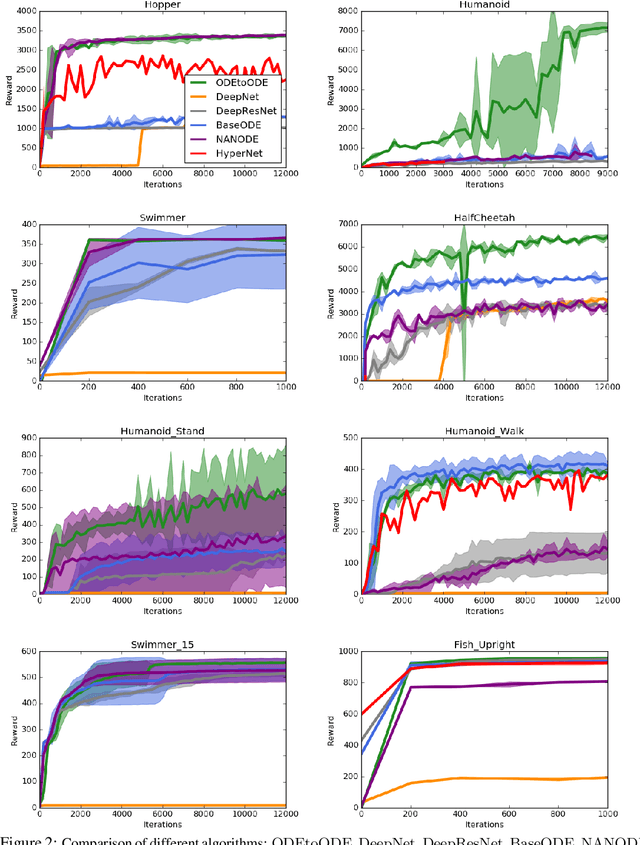

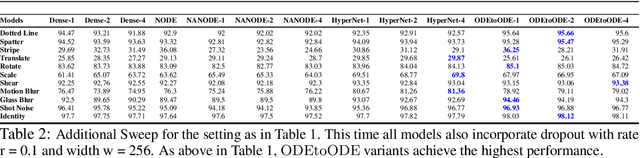

Abstract:We present a new paradigm for Neural ODE algorithms, called ODEtoODE, where time-dependent parameters of the main flow evolve according to a matrix flow on the orthogonal group O(d). This nested system of two flows, where the parameter-flow is constrained to lie on the compact manifold, provides stability and effectiveness of training and provably solves the gradient vanishing-explosion problem which is intrinsically related to training deep neural network architectures such as Neural ODEs. Consequently, it leads to better downstream models, as we show on the example of training reinforcement learning policies with evolution strategies, and in the supervised learning setting, by comparing with previous SOTA baselines. We provide strong convergence results for our proposed mechanism that are independent of the depth of the network, supporting our empirical studies. Our results show an intriguing connection between the theory of deep neural networks and the field of matrix flows on compact manifolds.

Time Dependence in Non-Autonomous Neural ODEs

May 06, 2020

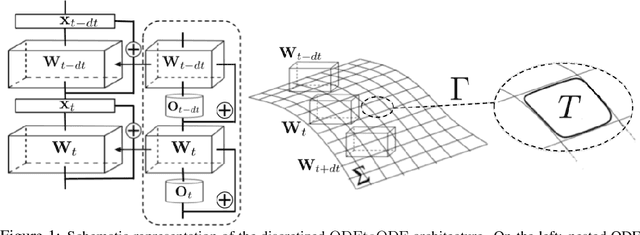

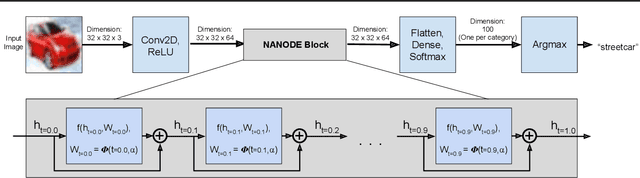

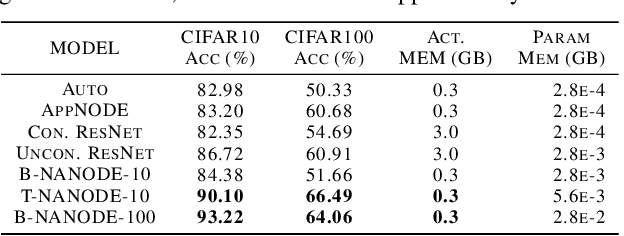

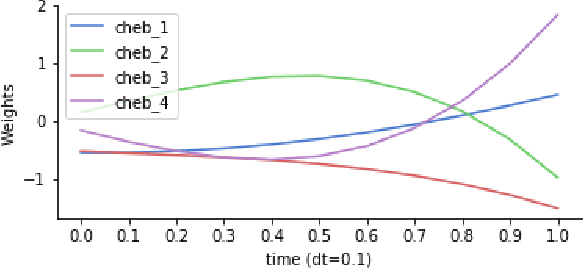

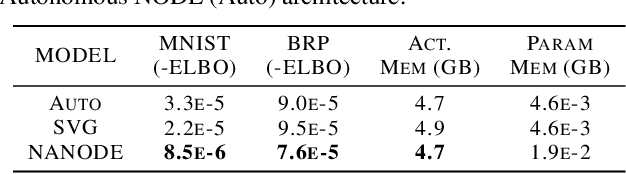

Abstract:Neural Ordinary Differential Equations (ODEs) are elegant reinterpretations of deep networks where continuous time can replace the discrete notion of depth, ODE solvers perform forward propagation, and the adjoint method enables efficient, constant memory backpropagation. Neural ODEs are universal approximators only when they are non-autonomous, that is, the dynamics depends explicitly on time. We propose a novel family of Neural ODEs with time-varying weights, where time-dependence is non-parametric, and the smoothness of weight trajectories can be explicitly controlled to allow a tradeoff between expressiveness and efficiency. Using this enhanced expressiveness, we outperform previous Neural ODE variants in both speed and representational capacity, ultimately outperforming standard ResNet and CNN models on select image classification and video prediction tasks.

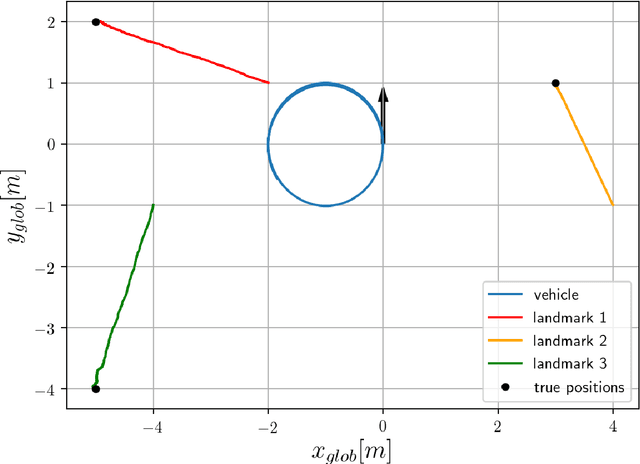

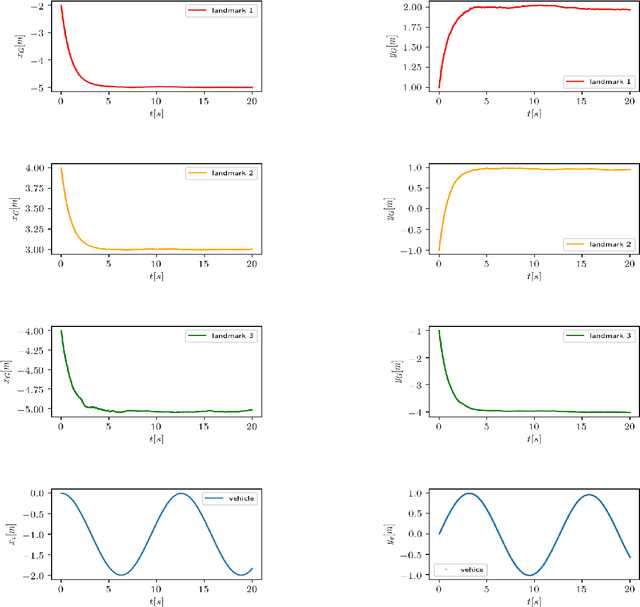

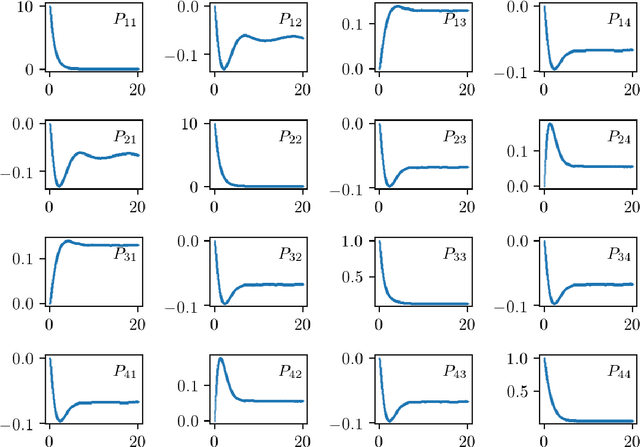

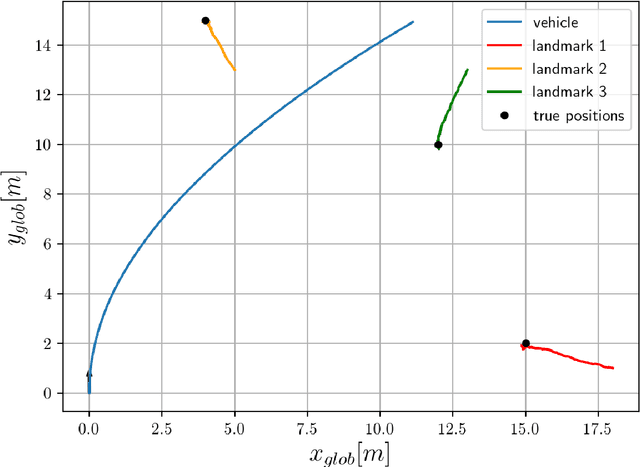

Numerical and experimental realization of analytical SLAM

Nov 15, 2019

Abstract:Analytical approach to SLAM problem was introduced in the recent years. In our work we investigate the method numerically with the motivation of using the algorithm in a real hardware experiments. We perform a robustness test of the algorithm and apply it to the robotic hardware in two different setups. In one we try to recover a map of the environment using bearing angle measurements and radial distance measurements. The another setup utilizes only bearing angle information.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge