Henggang Cui

PBP: Path-based Trajectory Prediction for Autonomous Driving

Sep 07, 2023

Abstract: Trajectory prediction plays a crucial role in the autonomous driving stack by enabling autonomous vehicles to anticipate the motion of surrounding agents. Goal-based prediction models have gained traction in recent years for addressing the multimodal nature of future trajectories. These models simplify multimodal prediction by first predicting 2D goal locations of agents and then predicting trajectories conditioned on each goal. However, a single 2D goal location serves as a weak inductive bias for predicting the whole trajectory, often leading to poor map compliance, i.e., part of the trajectory going off-road or breaking traffic rules. In this paper, we improve upon goal-based prediction by proposing the Path-based prediction (PBP) approach. PBP predicts a discrete probability distribution over reference paths in the HD map using the path features and predicts trajectories in the path-relative Frenet frame. We applied the PBP trajectory decoder on top of the HiVT scene encoder and report results on the Argoverse dataset. Our experiments show that PBP achieves competitive performance on the standard trajectory prediction metrics, while significantly outperforming state-of-the-art baselines in terms of map compliance.
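To make the "path-relative Frenet frame" concrete, here is a minimal sketch of converting between Cartesian coordinates and Frenet coordinates (arc length `s` along a reference path, signed lateral offset `d`). It assumes the reference path is a simple 2D polyline; the function names `to_frenet` and `from_frenet` are hypothetical and are not taken from the PBP paper, whose decoder is not reproduced here.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def to_frenet(path: List[Point], point: Point) -> Tuple[float, float]:
    """Project a Cartesian point onto a polyline path.

    Returns (s, d): s is the arc length to the closest point on the path,
    d is the lateral offset (positive to the left of the travel direction).
    """
    px, py = point
    best = (math.inf, 0.0, 0.0)  # (distance, s, d) of the closest segment so far
    s_start = 0.0  # arc length at the start of the current segment
    for (ax, ay), (bx, by) in zip(path, path[1:]):
        dx, dy = bx - ax, by - ay
        seg_len = math.hypot(dx, dy)
        if seg_len == 0.0:
            continue  # skip degenerate segments
        # Projection parameter along the segment, clamped to the segment.
        t = ((px - ax) * dx + (py - ay) * dy) / seg_len**2
        t = min(1.0, max(0.0, t))
        cx, cy = ax + t * dx, ay + t * dy
        dist = math.hypot(px - cx, py - cy)
        if dist < best[0]:
            # The sign of the 2D cross product tells left (+) from right (-).
            cross = dx * (py - ay) - dy * (px - ax)
            best = (dist, s_start + t * seg_len, math.copysign(dist, cross))
        s_start += seg_len
    return best[1], best[2]


def from_frenet(path: List[Point], s: float, d: float) -> Point:
    """Map Frenet coordinates (s, d) back to a Cartesian point."""
    s_start = 0.0
    segments = list(zip(path, path[1:]))
    for i, ((ax, ay), (bx, by)) in enumerate(segments):
        dx, dy = bx - ax, by - ay
        seg_len = math.hypot(dx, dy)
        if seg_len == 0.0:
            continue
        is_last = i == len(segments) - 1
        if s <= s_start + seg_len or is_last:
            ux, uy = dx / seg_len, dy / seg_len  # unit tangent
            along = s - s_start
            # Left-pointing unit normal is (-uy, ux).
            return (ax + along * ux - d * uy, ay + along * uy + d * ux)
        s_start += seg_len
    raise ValueError("path needs at least one non-degenerate segment")


if __name__ == "__main__":
    reference_path = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
    print(to_frenet(reference_path, (5.0, 2.0)))
    print(from_frenet(reference_path, 15.0, 1.0))
```

In this representation a trajectory that stays near `d = 0` follows the reference path by construction, which is one intuition for why predicting in a path-relative frame can help with map compliance.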

Improving Motion Forecasting for Autonomous Driving with the Cycle Consistency Loss

Oct 31, 2022

Abstract: Robust motion forecasting of the dynamic scene is a critical component of an autonomous vehicle. It is a challenging problem due to the heterogeneity in the scene and the inherent uncertainties in the problem. To improve the accuracy of motion forecasting, in this work we identify a new consistency constraint in this task: an agent's future trajectory should be coherent with its observed history, and vice versa. To leverage this property, we propose a novel cycle consistency training scheme and define a novel cycle loss to encourage this consistency. In particular, we reverse the predicted future trajectory backward in time and feed it back into the prediction model to predict the history, and we compute the resulting error as an additional cycle loss term. Through our experiments on the Argoverse dataset, we demonstrate that the cycle loss can improve the performance of competitive motion forecasting models.
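The cycle described in this abstract can be sketched in a few lines. The example below is only an illustration under stated assumptions: the predictor is a toy constant-velocity model standing in for a learned network, the error is a plain mean squared displacement, and the names `constant_velocity_model` and `cycle_loss` are hypothetical rather than taken from the paper.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float]
Trajectory = List[Point]
Model = Callable[[Trajectory, int], Trajectory]


def constant_velocity_model(observed: Trajectory, horizon: int) -> Trajectory:
    """Toy stand-in for a learned predictor: extrapolate the last displacement."""
    (x0, y0), (x1, y1) = observed[-2], observed[-1]
    vx, vy = x1 - x0, y1 - y0
    return [(x1 + vx * k, y1 + vy * k) for k in range(1, horizon + 1)]


def mean_squared_error(a: Trajectory, b: Trajectory) -> float:
    """Average squared Euclidean distance between matching waypoints."""
    total = sum((ax - bx) ** 2 + (ay - by) ** 2 for (ax, ay), (bx, by) in zip(a, b))
    return total / len(a)


def cycle_loss(model: Model, history: Trajectory) -> float:
    """Predict the future, reverse it in time, and try to recover the history."""
    future = model(history, len(history))
    reversed_future = future[::-1]  # latest predicted waypoint comes first
    reconstructed = model(reversed_future, len(history))
    # Running the model on the reversed future walks backward in time,
    # so the target is the history in reverse order.
    target = history[::-1]
    return mean_squared_error(reconstructed, target)


if __name__ == "__main__":
    straight = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    turning = [(0.0, 0.0), (1.0, 0.0), (2.0, 1.0)]
    print(cycle_loss(constant_velocity_model, straight))
    print(cycle_loss(constant_velocity_model, turning))
```

In training, a term like this would be added to the usual forecasting loss with some weight; the weighting and the exact distance measure are design choices not specified in the abstract.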

Importance is in your attention: agent importance prediction for autonomous driving

Apr 19, 2022

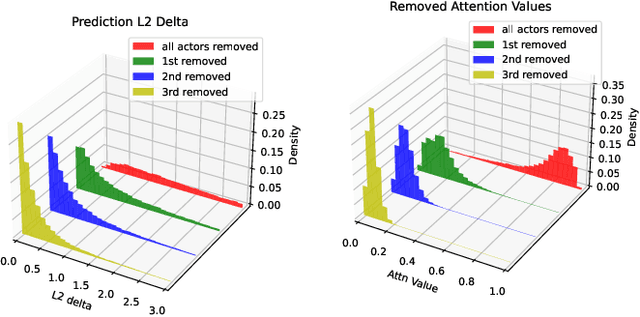

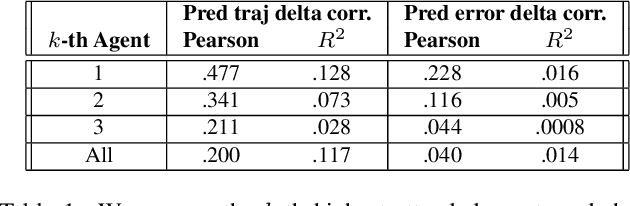

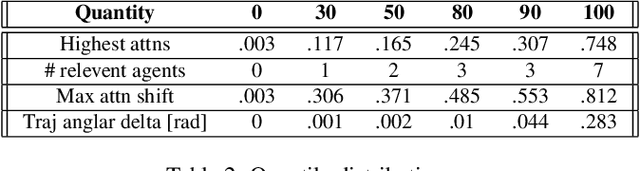

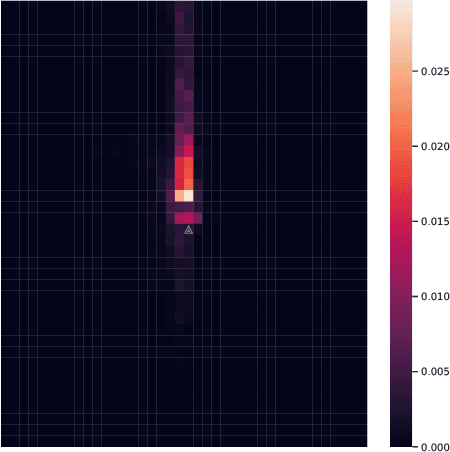

Abstract: Trajectory prediction is an important task in autonomous driving. State-of-the-art trajectory prediction models often use attention mechanisms to model the interaction between agents. In this paper, we show that the attention information from such models can also be used to measure the importance of each agent with respect to the ego vehicle's future planned trajectory. Our experimental results on the nuPlan dataset show that our method can effectively find and rank surrounding agents by their impact on the ego's plan.

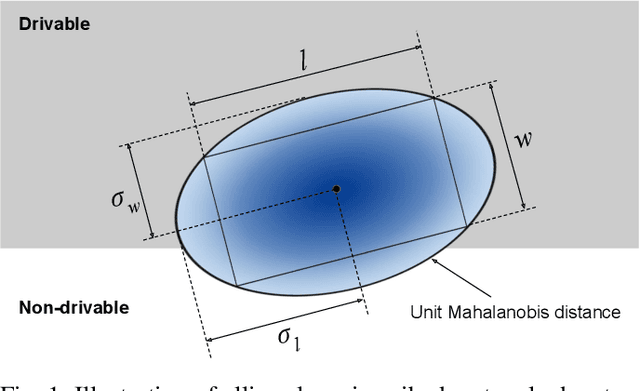

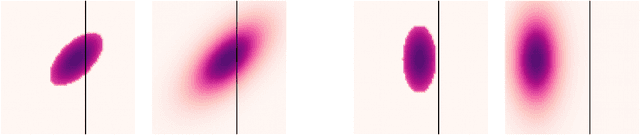

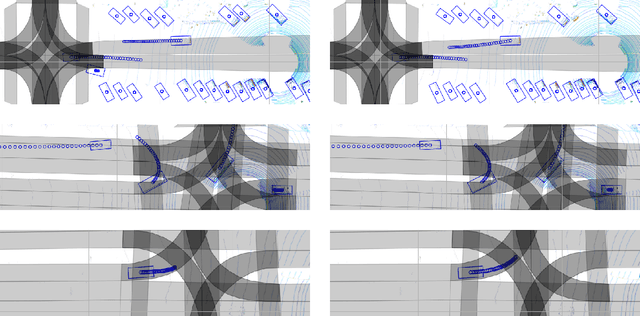

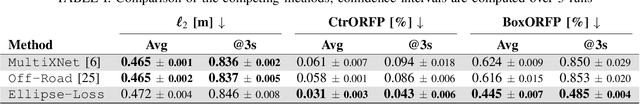

Ellipse Loss for Scene-Compliant Motion Prediction

Nov 05, 2020

Abstract: Motion prediction is a critical part of self-driving technology, responsible for inferring future behavior of traffic actors in the autonomous vehicle's surroundings. In order to ensure safe and efficient operations, prediction models need to output accurate trajectories that obey the map constraints. In this paper, we address this task and propose a novel ellipse loss that allows the models to better reason about scene compliance and predict more realistic trajectories. Ellipse loss penalizes off-road predictions directly in a supervised manner, by projecting the output trajectories into the top-down map frame using a differentiable trajectory rasterizer module. Moreover, it takes into account the actor dimension and orientation, providing more direct training signals to the model. We applied ellipse loss to a recently proposed state-of-the-art joint detection-prediction model to showcase its benefits. Evaluation results on a large-scale autonomous driving data set strongly indicate that our method allows for more accurate and more realistic trajectory predictions.

Uncertainty-Aware Vehicle Orientation Estimation for Joint Detection-Prediction Models

Nov 05, 2020

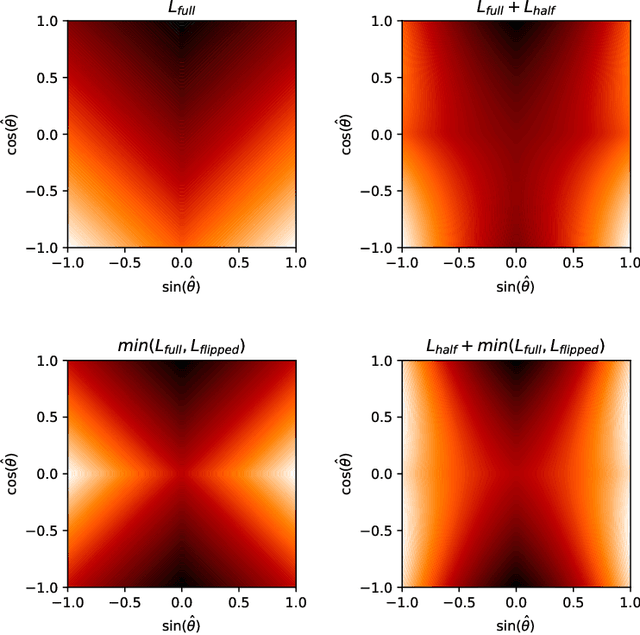

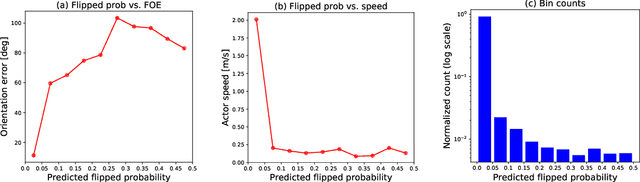

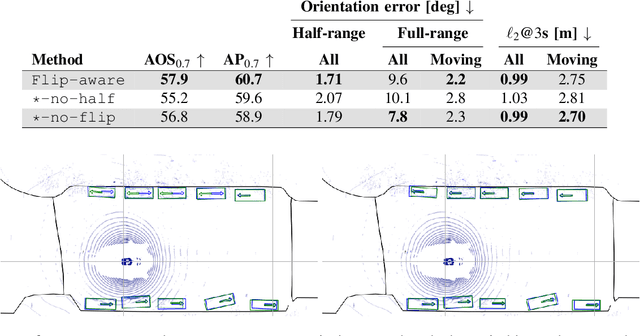

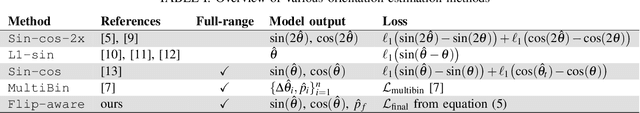

Abstract: Object detection is a critical component of a self-driving system, tasked with inferring the current states of the surrounding traffic actors. While there exist a number of studies on the problem of inferring the position and shape of vehicle actors, understanding actors' orientation remains a challenge for existing state-of-the-art detectors. Orientation is an important property for downstream modules of an autonomous system, particularly relevant for motion prediction of stationary or reversing actors where current approaches struggle. We focus on this task and present a method that extends the existing models that perform joint object detection and motion prediction, allowing us to more accurately infer vehicle orientations. In addition, the approach is able to quantify prediction uncertainty, outputting the probability that the inferred orientation is flipped, which allows for improved motion prediction and safer autonomous operations. Empirical results show the benefits of the approach, obtaining state-of-the-art performance on the open-sourced nuScenes data set.

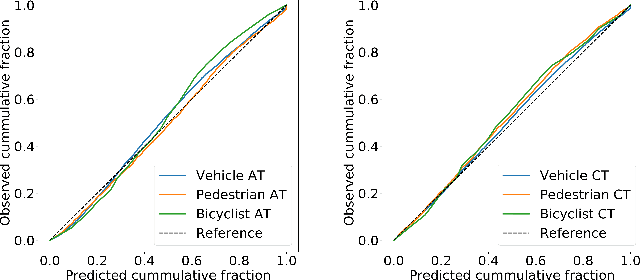

Temporally-Continuous Probabilistic Prediction using Polynomial Trajectory Parameterization

Nov 01, 2020

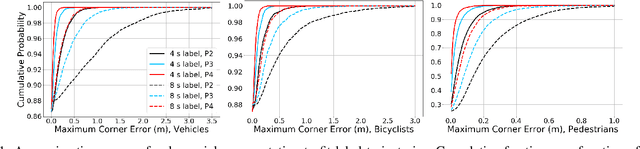

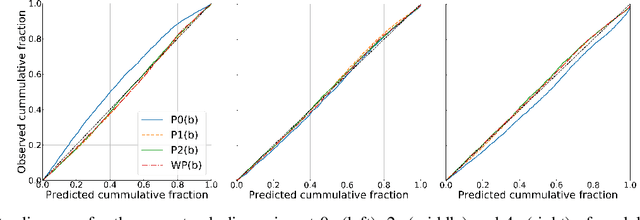

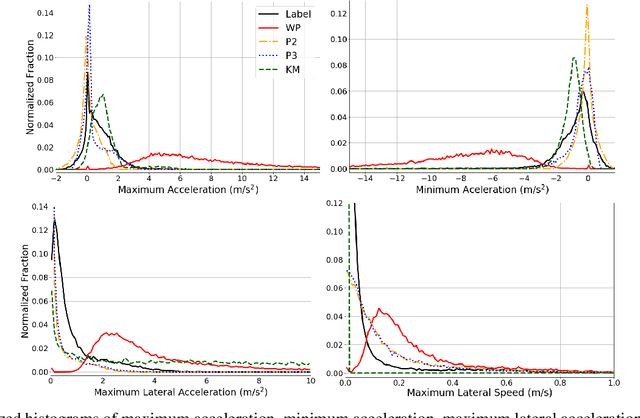

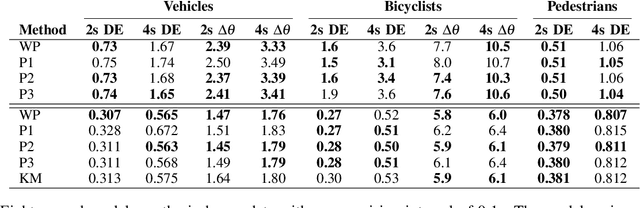

Abstract: A commonly-used representation for motion prediction of actors is a sequence of waypoints (comprising positions and orientations) for each actor at discrete future time-points. While this approach is simple and flexible, it can exhibit unrealistic higher-order derivatives (such as acceleration) and approximation errors at intermediate time steps. To address this issue we propose a simple and general representation for temporally continuous probabilistic trajectory prediction that is based on polynomial trajectory parameterization. We evaluate the proposed representation on supervised trajectory prediction tasks using two large self-driving data sets. The results show realistic higher-order derivatives and better accuracy at interpolated time-points, as well as the benefits of the inferred noise distributions over the trajectories. Extensive experimental studies based on existing state-of-the-art models demonstrate the effectiveness of the proposed approach relative to other representations in predicting the future motions of vehicle, bicyclist, and pedestrian traffic actors.
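As a rough illustration of why a polynomial parameterization gives smooth derivatives at any time-point, the sketch below represents each coordinate as a polynomial in time and evaluates position, velocity, and acceleration analytically. The coefficient values and the names `evaluate`, `derivative`, and `state_at` are illustrative assumptions; the probabilistic (noise distribution) part of the paper's representation is not modeled here.

```python
from typing import Dict, List, Tuple


def evaluate(coeffs: List[float], t: float) -> float:
    """Evaluate sum(coeffs[k] * t**k) with Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * t + c
    return result


def derivative(coeffs: List[float]) -> List[float]:
    """Coefficients of the time derivative of the polynomial."""
    return [k * c for k, c in enumerate(coeffs)][1:]


def state_at(
    x_coeffs: List[float], y_coeffs: List[float], t: float
) -> Dict[str, Tuple[float, float]]:
    """Position, velocity, and acceleration at an arbitrary time t."""
    vx, vy = derivative(x_coeffs), derivative(y_coeffs)
    ax, ay = derivative(vx), derivative(vy)
    return {
        "position": (evaluate(x_coeffs, t), evaluate(y_coeffs, t)),
        "velocity": (evaluate(vx, t), evaluate(vy, t)),
        "acceleration": (evaluate(ax, t), evaluate(ay, t)),
    }


if __name__ == "__main__":
    # Hypothetical coefficients: x(t) = 2 t + 0.5 t^2, y(t) = 1.
    x_coeffs = [0.0, 2.0, 0.5]
    y_coeffs = [1.0, 0.0, 0.0]
    # Any time can be queried, not only the discrete waypoint times.
    print(state_at(x_coeffs, y_coeffs, 1.5))
    print(state_at(x_coeffs, y_coeffs, 2.0))
```

Because velocity and acceleration come from differentiating the same polynomial, they are consistent with the positions by construction, unlike finite differences over independently predicted waypoints.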

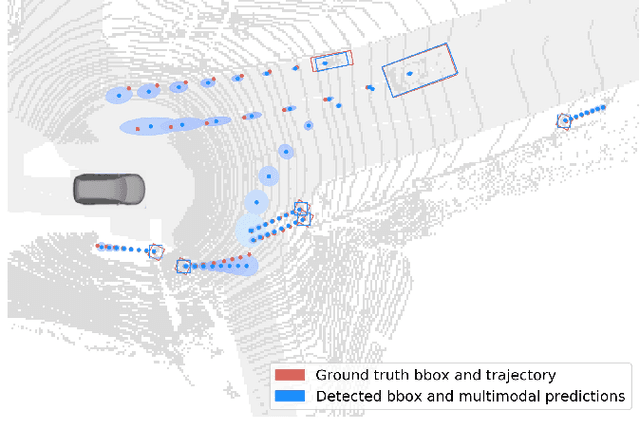

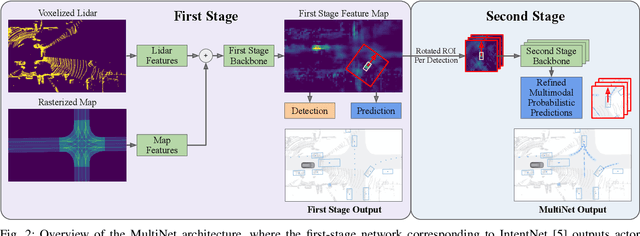

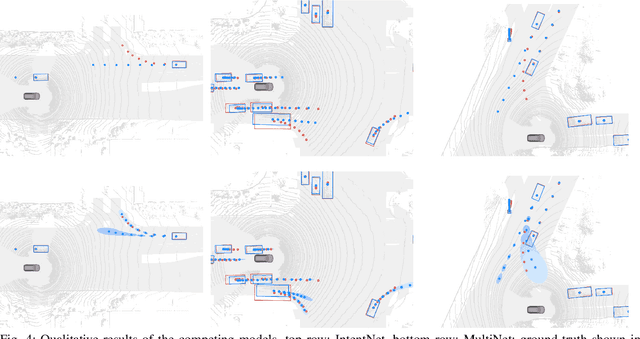

MultiXNet: Multiclass Multistage Multimodal Motion Prediction

Jun 10, 2020

Abstract: One of the critical pieces of the self-driving puzzle is understanding the surroundings of the self-driving vehicle (SDV) and predicting how these surroundings will change in the near future. To address this task we propose MultiXNet, an end-to-end approach for detection and motion prediction based directly on lidar sensor data. This approach builds on prior work by handling multiple classes of traffic actors, adding a jointly trained second-stage trajectory refinement step, and producing a multimodal probability distribution over future actor motion that includes both multiple discrete traffic behaviors and calibrated continuous uncertainties. The method was evaluated on a large-scale, real-world data set collected by a fleet of SDVs in several cities, with the results indicating that it outperforms existing state-of-the-art approaches.

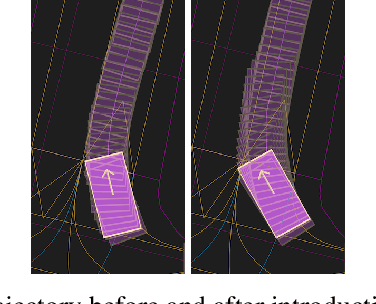

Improving Movement Predictions of Traffic Actors in Bird's-Eye View Models using GANs and Differentiable Trajectory Rasterization

Apr 14, 2020

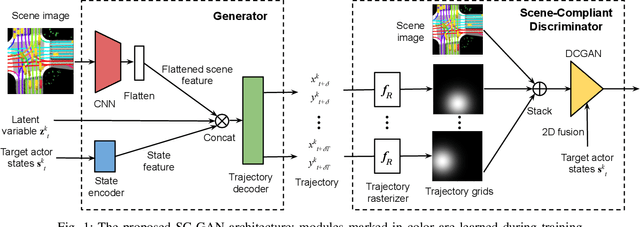

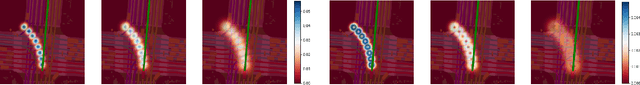

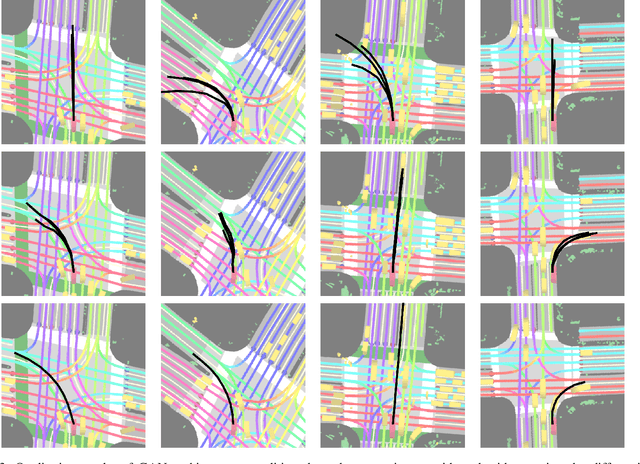

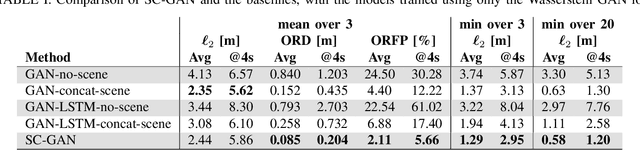

Abstract: One of the most critical pieces of the self-driving puzzle is the task of predicting future movement of surrounding traffic actors, which allows the autonomous vehicle to safely and effectively plan its future route in a complex world. Recently, a number of algorithms have been proposed to address this important problem, spurred by a growing interest of researchers from both industry and academia. Methods based on top-down scene rasterization on one side and Generative Adversarial Networks (GANs) on the other have been shown to be particularly successful, obtaining state-of-the-art accuracies on the task of traffic movement prediction. In this paper we build upon these two directions and propose a raster-based conditional GAN architecture, powered by a novel differentiable rasterizer module at the input of the conditional discriminator that maps generated trajectories into the raster space in a differentiable manner. This simplifies the task for the discriminator, as trajectories that are not scene-compliant are easier to discern, and allows the gradients to flow back, forcing the generator to output better, more realistic trajectories. We evaluated the proposed method on a large-scale, real-world data set, showing that it outperforms state-of-the-art GAN-based baselines.

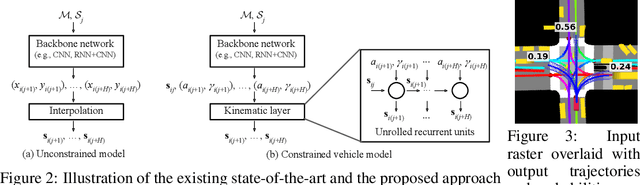

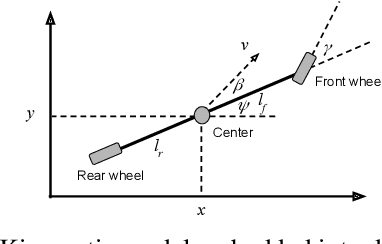

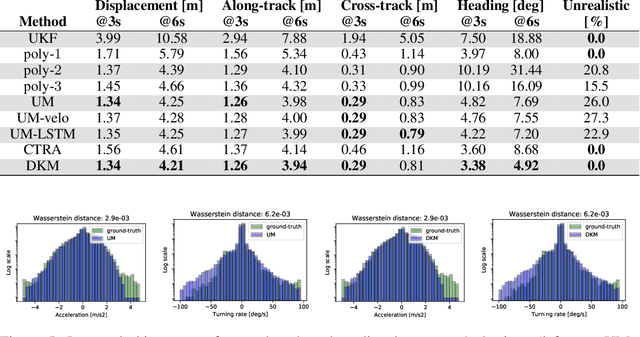

Deep Kinematic Models for Physically Realistic Prediction of Vehicle Trajectories

Aug 01, 2019

Abstract: Self-driving vehicles (SDVs) hold great potential for improving traffic safety and are poised to positively affect the quality of life of millions of people. One of the critical aspects of the autonomous technology is understanding and predicting future movement of vehicles surrounding the SDV. This work presents a deep-learning-based method for physically realistic motion prediction of such traffic actors. Previous work did not explicitly encode physical realism and instead relied on the models to learn the laws of physics directly from the data, potentially resulting in implausible trajectory predictions. To account for this issue we propose a method that seamlessly combines ideas from AI with physically grounded vehicle motion models. In this way we get the best of both worlds, coupling powerful learning models with strong physical guarantees for their outputs. The proposed approach is general, being applicable to any type of learning method. Extensive experiments using deep convnets on large-scale, real-world data strongly indicate its benefits, outperforming the existing state-of-the-art.
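One way to picture coupling a learned model with a physically grounded motion model is to have the network output controls and let a kinematic model turn them into waypoints. The sketch below assumes a kinematic bicycle model with forward-Euler integration; the abstract does not state which vehicle model the paper uses, and the function name `rollout_bicycle`, the control format, and the default wheelbase are hypothetical.

```python
import math
from typing import List, Tuple

# State is (x, y, heading, speed); controls are (acceleration, steering angle).
State = Tuple[float, float, float, float]
Control = Tuple[float, float]


def rollout_bicycle(
    initial: State,
    controls: List[Control],
    dt: float,
    wheelbase: float = 2.8,  # assumed value in metres, for illustration only
) -> List[State]:
    """Integrate a kinematic bicycle model forward with Euler steps.

    In a learned predictor, `controls` would come from the network, so every
    resulting trajectory obeys the motion model by construction.
    """
    x, y, heading, speed = initial
    states: List[State] = []
    for accel, steer in controls:
        # All updates use the state from the start of the step.
        new_x = x + speed * math.cos(heading) * dt
        new_y = y + speed * math.sin(heading) * dt
        new_heading = heading + speed / wheelbase * math.tan(steer) * dt
        new_speed = speed + accel * dt
        x, y, heading, speed = new_x, new_y, new_heading, new_speed
        states.append((x, y, heading, speed))
    return states


if __name__ == "__main__":
    # Drive straight while accelerating at 1 m/s^2 from rest.
    for state in rollout_bicycle((0.0, 0.0, 0.0, 0.0), [(1.0, 0.0)] * 3, dt=1.0):
        print(state)
```

Bounding the controls (for example, clamping steering angle and acceleration) would further restrict the outputs to motions a real vehicle can execute.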

Predicting Motion of Vulnerable Road Users using High-Definition Maps and Efficient ConvNets

Jun 20, 2019

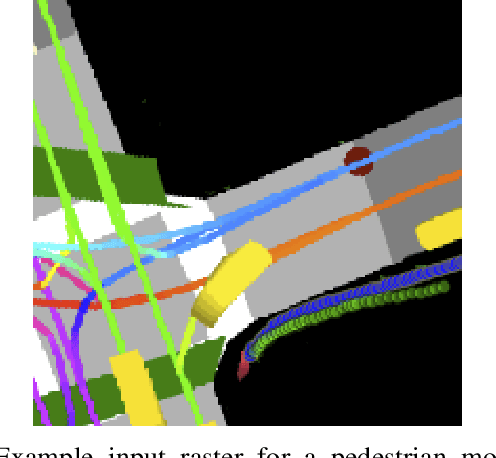

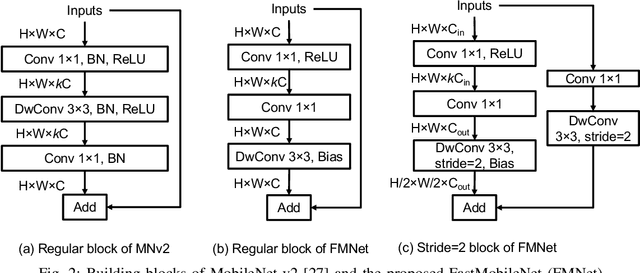

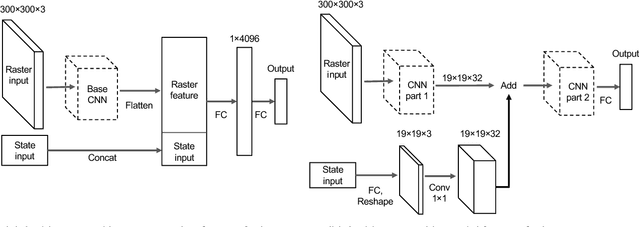

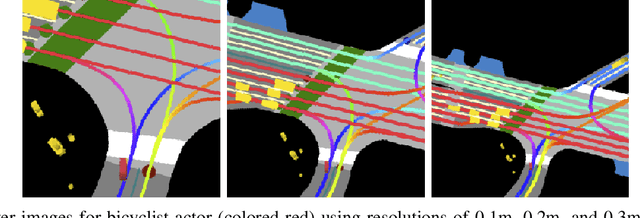

Abstract: Following detection and tracking of traffic actors, prediction of their future motion is the next critical component of self-driving vehicle (SDV) technology, allowing the SDV to operate safely and efficiently in its environment. This is particularly important when it comes to vulnerable road users (VRUs), such as pedestrians and bicyclists. These actors need to be handled with special care due to an increased risk of injury, as well as the fact that their behavior is less predictable than that of motorized actors. To address this issue, in this paper we present a deep learning-based method for predicting VRU movement, where we rasterize high-definition maps and the actor's surroundings into a bird's-eye view image used as input to deep convolutional networks. In addition, we propose a fast architecture suitable for real-time inference, and present a detailed ablation study of various rasterization choices. The results strongly indicate the benefits of using the proposed approach for motion prediction of VRUs, both in terms of accuracy and latency.
