Tsung-Han Lin

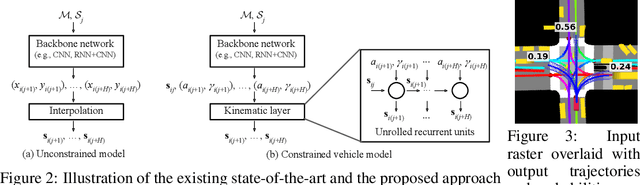

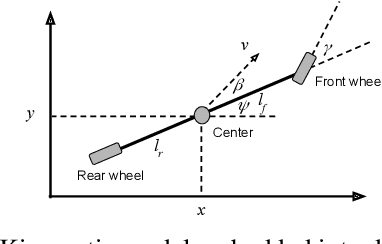

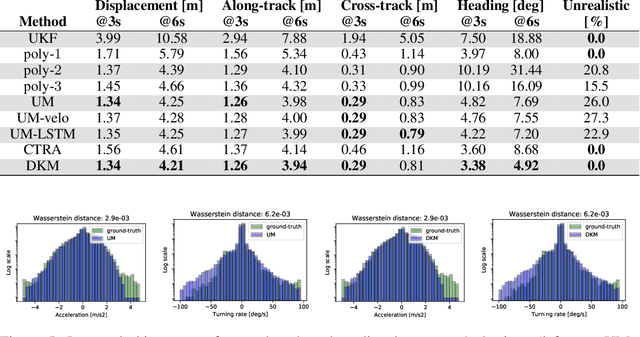

Deep Kinematic Models for Physically Realistic Prediction of Vehicle Trajectories

Aug 01, 2019

Abstract: Self-driving vehicles (SDVs) hold great potential for improving traffic safety and are poised to positively affect the quality of life of millions of people. One of the critical aspects of autonomous technology is understanding and predicting the future movement of vehicles surrounding the SDV. This work presents a deep-learning-based method for physically realistic motion prediction of such traffic actors. Previous work did not explicitly encode physical realism and instead relied on the models to learn the laws of physics directly from the data, potentially resulting in implausible trajectory predictions. To account for this issue, we propose a method that seamlessly combines ideas from AI with physically grounded vehicle motion models. In this way we get the best of both worlds, coupling powerful learning models with strong physical guarantees for their outputs. The proposed approach is general, being applicable to any type of learning method. Extensive experiments using deep convnets on large-scale, real-world data strongly indicate its benefits, outperforming the existing state-of-the-art.
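As a rough illustration of what a "physically grounded vehicle motion model" can look like, the sketch below rolls out a kinematic bicycle model from a sequence of controls. The abstract does not say which motion model the paper uses, so the model choice, the function name `rollout`, and the parameters `wheelbase` and `dt` are assumptions made for illustration only. In a setup like the one described, a learned model would predict the controls, and the rollout would turn them into a trajectory that a vehicle could actually follow.

```python
import math


def rollout(x, y, heading, speed, controls, dt=0.1, wheelbase=2.8):
    """Integrate a kinematic bicycle model with forward Euler steps.

    controls is a sequence of (acceleration, steering_angle) pairs.
    wheelbase (metres) and dt (seconds) are illustrative defaults,
    not values taken from the paper.
    """
    states = []
    for accel, steer in controls:
        # Position advances along the current heading.
        x += speed * math.cos(heading) * dt
        y += speed * math.sin(heading) * dt
        # Heading changes at a rate set by speed and steering angle.
        heading += speed / wheelbase * math.tan(steer) * dt
        # Speed is clamped so the vehicle never moves backwards.
        speed = max(0.0, speed + accel * dt)
        states.append((x, y, heading, speed))
    return states
```

Because every state is produced by the same motion equations, the resulting trajectory cannot contain sideways jumps or instantaneous heading changes, whatever controls are supplied.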

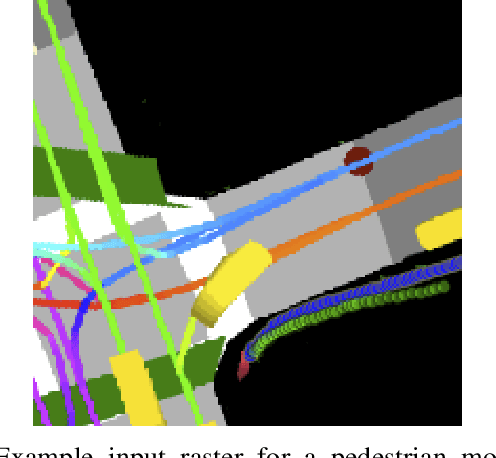

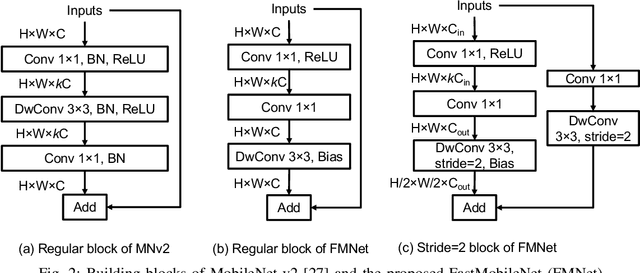

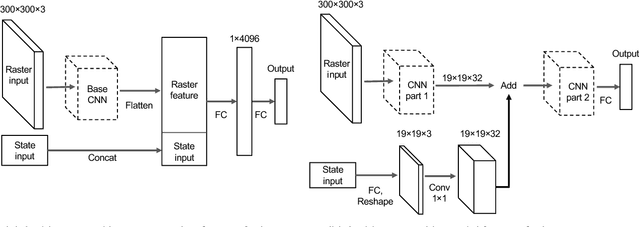

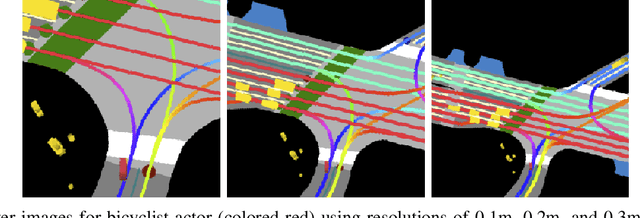

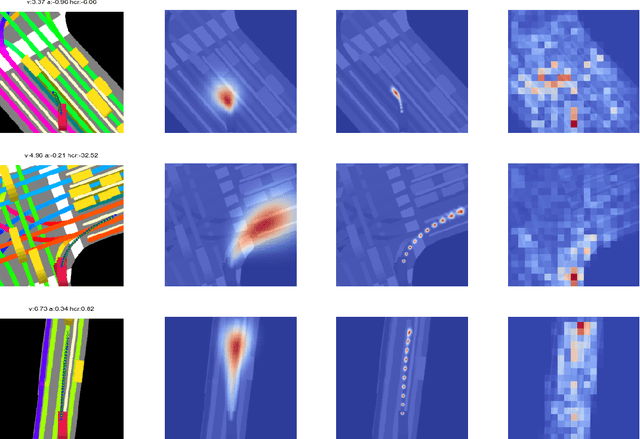

Predicting Motion of Vulnerable Road Users using High-Definition Maps and Efficient ConvNets

Jun 20, 2019

Abstract: Following detection and tracking of traffic actors, prediction of their future motion is the next critical component of self-driving vehicle (SDV) technology, allowing the SDV to operate safely and efficiently in its environment. This is particularly important when it comes to vulnerable road users (VRUs), such as pedestrians and bicyclists. These actors need to be handled with special care due to an increased risk of injury, as well as the fact that their behavior is less predictable than that of motorized actors. To address this issue, in this paper we present a deep learning-based method for predicting VRU movement, where we rasterize high-definition maps and each actor's surroundings into a bird's-eye view image used as input to deep convolutional networks. In addition, we propose a fast architecture suitable for real-time inference, and present a detailed ablation study of various rasterization choices. The results strongly indicate the benefits of using the proposed approach for motion prediction of VRUs, both in terms of accuracy and latency.

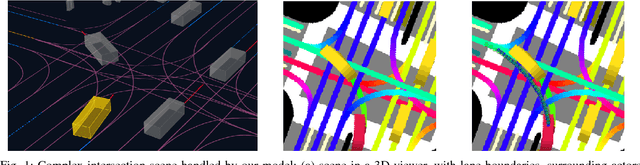

Multimodal Trajectory Predictions for Autonomous Driving using Deep Convolutional Networks

Mar 01, 2019

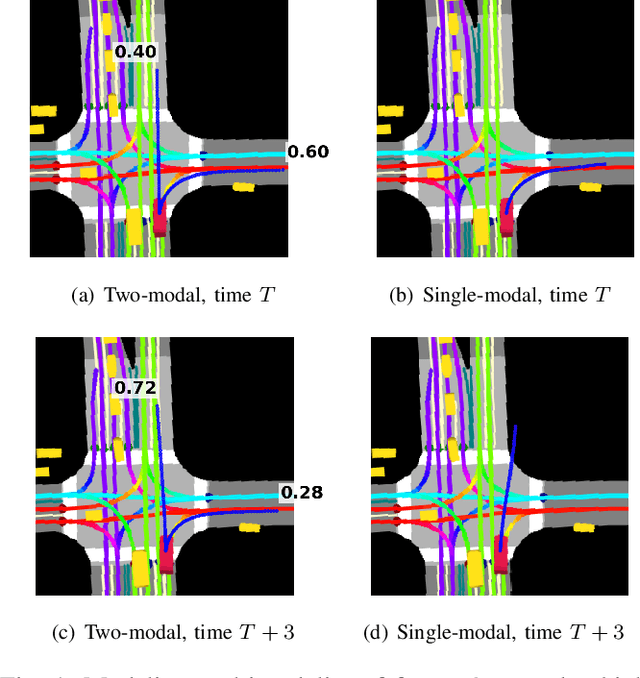

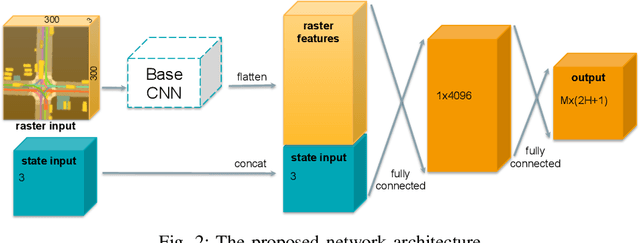

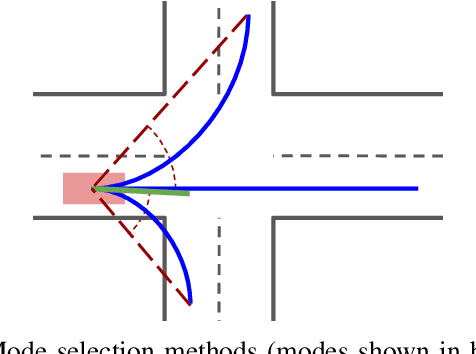

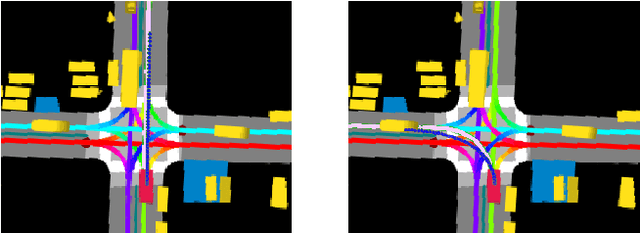

Abstract: Autonomous driving presents one of the largest problems that the robotics and artificial intelligence communities are facing at the moment, both in terms of difficulty and potential societal impact. Self-driving vehicles (SDVs) are expected to prevent road accidents and save millions of lives while improving the livelihood and life quality of many more. However, despite large interest and a number of industry players working in the autonomous domain, there still remains more to be done in order to develop a system capable of operating at a level comparable to the best human drivers. One reason for this is the high uncertainty of traffic behavior and the large number of situations that an SDV may encounter on the roads, making it very difficult to create a fully generalizable system. To ensure safe and efficient operations, an autonomous vehicle is required to account for this uncertainty and to anticipate a multitude of possible behaviors of traffic actors in its surroundings. We address this critical problem and present a method to predict multiple possible trajectories of actors while also estimating their probabilities. The method encodes each actor's surrounding context into a raster image, used as input by deep convolutional networks to automatically derive relevant features for the task. Following extensive offline evaluation and comparison to state-of-the-art baselines, the method was successfully tested on SDVs in closed-course tests.

Short-term Motion Prediction of Traffic Actors for Autonomous Driving using Deep Convolutional Networks

Sep 16, 2018

Abstract: Despite its ubiquity in our daily lives, AI is only just starting to make advances in what may arguably have the largest societal impact thus far, the nascent field of autonomous driving. In this work we discuss this important topic and address one of the crucial aspects of the emerging area, the problem of predicting the future state of an autonomous vehicle's surroundings, which is necessary for safe and efficient operations. We introduce a deep learning-based approach that takes into account the current world state and produces rasterized representations of each actor's vicinity. The raster images are then used by deep convolutional models to infer future movement of actors while accounting for the inherent uncertainty of the prediction task. Extensive experiments on real-world data strongly suggest benefits of the proposed approach. Moreover, following successful tests, the system was deployed to a fleet of autonomous vehicles.

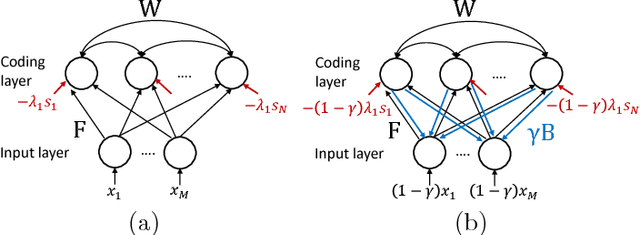

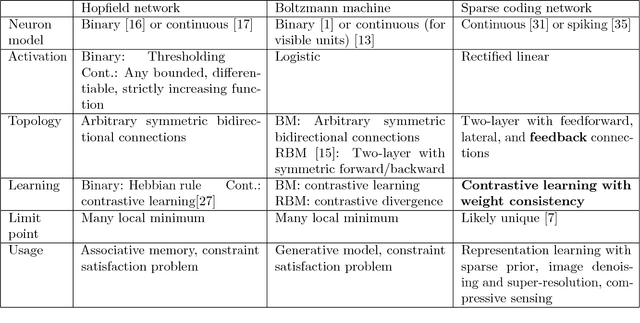

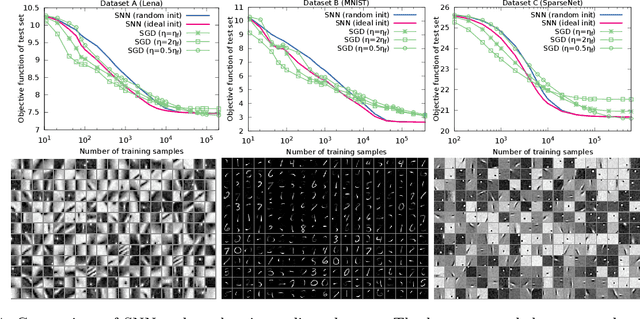

Dictionary Learning by Dynamical Neural Networks

May 23, 2018

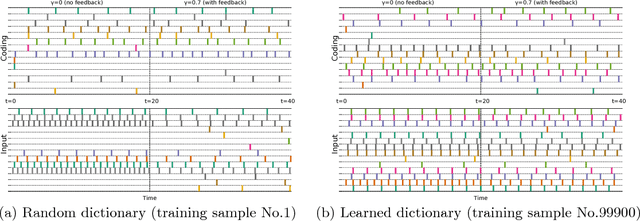

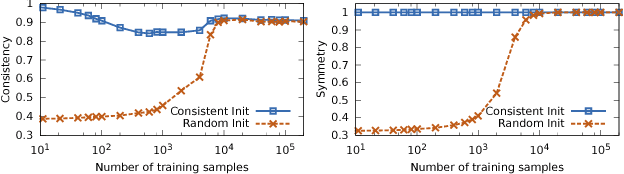

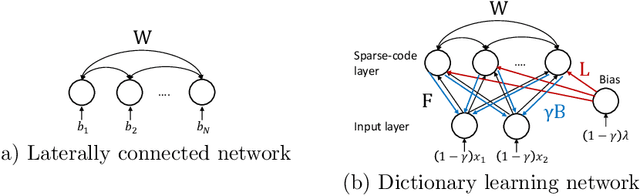

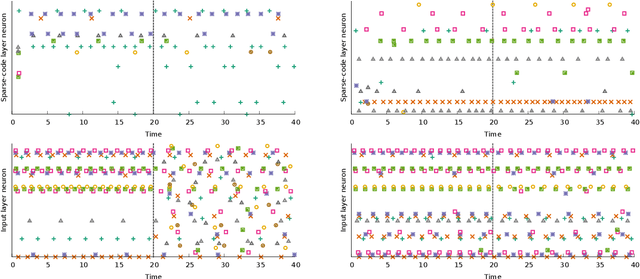

Abstract: A dynamical neural network consists of a set of interconnected neurons that interact over time continuously. It can exhibit computational properties in the sense that the dynamical system's evolution and/or limit points in the associated state space can correspond to numerical solutions to certain mathematical optimization or learning problems. Such a computational system is particularly attractive in that it can be mapped to a massively parallel computer architecture for power and throughput efficiency, especially if each neuron can rely solely on local information (i.e., local memory). Deriving gradients from the dynamical network's various states while conforming to this last constraint, however, is challenging. We show that by combining ideas of top-down feedback and contrastive learning, a dynamical network for solving the l1-minimizing dictionary learning problem can be constructed, and the true gradients for learning are provably computable by individual neurons. Using spiking neurons to construct our dynamical network, we present a learning process, its rigorous mathematical analysis, and numerical results on several dictionary learning problems.
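For readers unfamiliar with the term, the l1-minimizing dictionary learning problem is commonly written in the following form. This is the textbook formulation, not one quoted from the paper, and the symbols ($x_i$ for the input samples, $D$ for the dictionary, $a_i$ for the sparse codes, $\lambda$ for the sparsity weight) are notation assumed here for illustration. The paper may impose additional constraints, for example on the sign of the codes or the norm of the dictionary columns.

$$
\min_{D,\;\{a_i\}} \; \sum_{i} \left( \tfrac{1}{2} \, \lVert x_i - D a_i \rVert_2^2 + \lambda \, \lVert a_i \rVert_1 \right)
$$

The first term measures how well each sample is reconstructed from the dictionary, and the second penalizes codes with many nonzero entries.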

Local Information with Feedback Perturbation Suffices for Dictionary Learning in Neural Circuits

May 19, 2017

Abstract: While the sparse coding principle can successfully model information processing in sensory neural systems, it remains unclear how learning can be accomplished under neural architectural constraints. Feasible learning rules must rely solely on synaptically local information in order to be implemented on spatially distributed neurons. We describe a neural network with spiking neurons that can address the aforementioned fundamental challenge and solve the L1-minimizing dictionary learning problem, representing the first model able to do so. Our major innovation is to introduce feedback synapses to create a pathway that turns seemingly non-local information into local information. The resulting network encodes the error signal needed for learning as the change of network steady states caused by feedback, and operates akin to the classical stochastic gradient descent method.

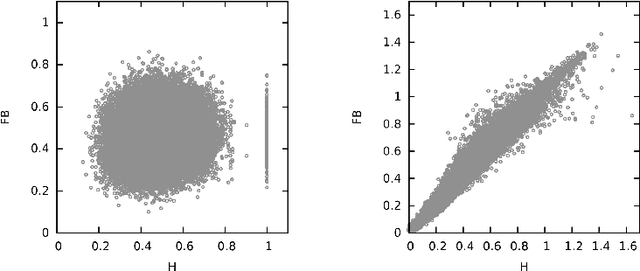

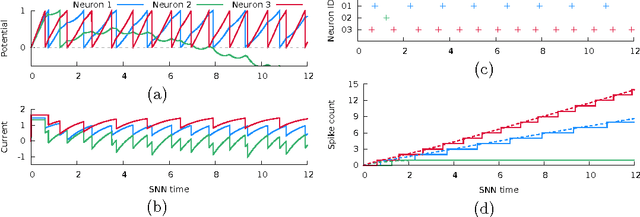

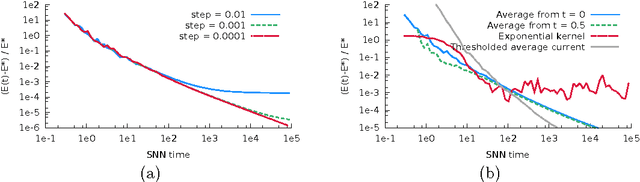

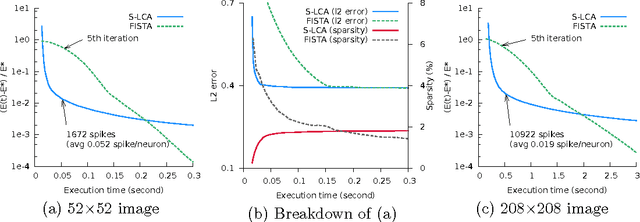

Sparse Coding by Spiking Neural Networks: Convergence Theory and Computational Results

May 15, 2017

Abstract: In a spiking neural network (SNN), individual neurons operate autonomously and only communicate with other neurons sparingly and asynchronously via spike signals. These characteristics render a massively parallel hardware implementation of SNN a potentially powerful computer, albeit a non-von Neumann one. But can one guarantee that an SNN computer solves some important problems reliably? In this paper, we formulate a mathematical model of one SNN that can be configured for a sparse coding problem for feature extraction. With a moderate but well-defined assumption, we prove that the SNN indeed solves sparse coding. To the best of our knowledge, this is the first rigorous result of this kind.
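To make the sparse coding problem concrete, the sketch below solves it with ISTA (iterative shrinkage-thresholding), a conventional non-spiking baseline. It is not the SNN formulation from the paper, and the function names, the row-major list layout of `D`, and the parameters `lam`, `step`, and `iters` are assumptions chosen for illustration. It minimizes the usual objective of half the squared reconstruction error plus `lam` times the l1 norm of the code.

```python
def soft_threshold(v, t):
    """Shrink v toward zero by t, returning 0.0 when |v| <= t."""
    return max(v - t, 0.0) - max(-v - t, 0.0)


def ista(D, x, lam, step, iters=100):
    """Sparse-code x over dictionary D (a list of m rows, each of length n).

    step should be at most 1 / (largest eigenvalue of D^T D) for convergence.
    """
    m, n = len(D), len(D[0])
    a = [0.0] * n
    for _ in range(iters):
        # Reconstruction error for the current code.
        residual = [x[i] - sum(D[i][j] * a[j] for j in range(n)) for i in range(m)]
        # Gradient step on the squared-error term.
        moved = [a[j] + step * sum(D[i][j] * residual[i] for i in range(m))
                 for j in range(n)]
        # Proximal step for the l1 penalty.
        a = [soft_threshold(v, step * lam) for v in moved]
    return a
```

With an identity dictionary the result reduces to soft-thresholding the input, so `ista([[1.0, 0.0], [0.0, 1.0]], [3.0, 0.5], lam=1.0, step=1.0)` returns `[2.0, 0.0]`: the small coefficient is driven exactly to zero, which is the sparsity the l1 term is meant to produce.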
