Gemine Vivone

Hyperspectral Anomaly Detection Fused Unified Nonconvex Tensor Ring Factors Regularization

May 23, 2025Abstract:In recent years, tensor decomposition-based approaches for hyperspectral anomaly detection (HAD) have gained significant attention in the field of remote sensing. However, existing methods often fail to fully leverage both the global correlations and local smoothness of the background components in hyperspectral images (HSIs), which exist in both the spectral and spatial domains. This limitation results in suboptimal detection performance. To mitigate this critical issue, we put forward a novel HAD method named HAD-EUNTRFR, which incorporates an enhanced unified nonconvex tensor ring (TR) factors regularization. In the HAD-EUNTRFR framework, the raw HSIs are first decomposed into background and anomaly components. The TR decomposition is then employed to capture the spatial-spectral correlations within the background component. Additionally, we introduce a unified and efficient nonconvex regularizer, induced by tensor singular value decomposition (TSVD), to simultaneously encode the low-rankness and sparsity of the 3-D gradient TR factors into a unique concise form. The above characterization scheme enables the interpretable gradient TR factors to inherit the low-rankness and smoothness of the original background. To further enhance anomaly detection, we design a generalized nonconvex regularization term to exploit the group sparsity of the anomaly component. To solve the resulting doubly nonconvex model, we develop a highly efficient optimization algorithm based on the alternating direction method of multipliers (ADMM) framework. Experimental results on several benchmark datasets demonstrate that our proposed method outperforms existing state-of-the-art (SOTA) approaches in terms of detection accuracy.

Zero-Shot Hyperspectral Pansharpening Using Hysteresis-Based Tuning for Spectral Quality Control

May 22, 2025Abstract:Hyperspectral pansharpening has received much attention in recent years due to technological and methodological advances that open the door to new application scenarios. However, research on this topic is only now gaining momentum. The most popular methods are still borrowed from the more mature field of multispectral pansharpening and often overlook the unique challenges posed by hyperspectral data fusion, such as i) the very large number of bands, ii) the overwhelming noise in selected spectral ranges, iii) the significant spectral mismatch between panchromatic and hyperspectral components, iv) a typically high resolution ratio. Imprecise data modeling especially affects spectral fidelity. Even state-of-the-art methods perform well in certain spectral ranges and much worse in others, failing to ensure consistent quality across all bands, with the risk of generating unreliable results. Here, we propose a hyperspectral pansharpening method that explicitly addresses this problem and ensures uniform spectral quality. To this end, a single lightweight neural network is used, with weights that adapt on the fly to each band. During fine-tuning, the spatial loss is turned on and off to ensure a fast convergence of the spectral loss to the desired level, according to a hysteresis-like dynamic. Furthermore, the spatial loss itself is appropriately redefined to account for nonlinear dependencies between panchromatic and spectral bands. Overall, the proposed method is fully unsupervised, with no prior training on external data, flexible, and low-complexity. Experiments on a recently published benchmarking toolbox show that it ensures excellent sharpening quality, competitive with the state-of-the-art, consistently across all bands. The software code and the full set of results are shared online on https://github.com/giu-guarino/rho-PNN.

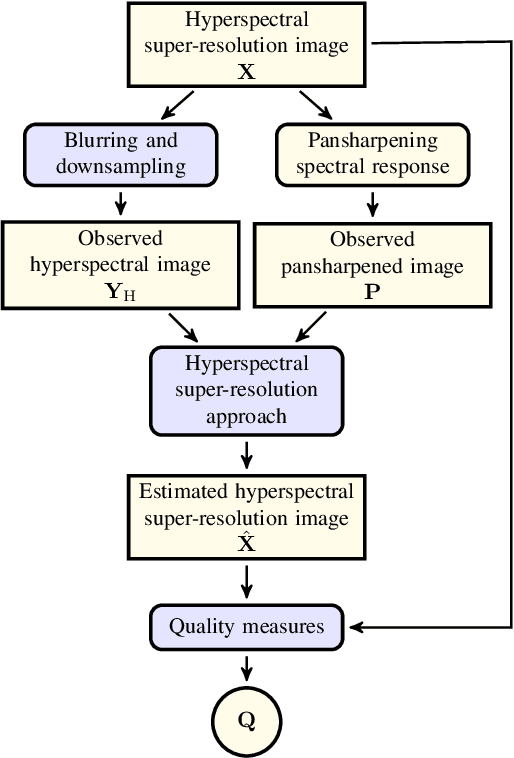

Hyperspectral Pansharpening: Critical Review, Tools and Future Perspectives

Jul 01, 2024Abstract:Hyperspectral pansharpening consists of fusing a high-resolution panchromatic band and a low-resolution hyperspectral image to obtain a new image with high resolution in both the spatial and spectral domains. These remote sensing products are valuable for a wide range of applications, driving ever growing research efforts. Nonetheless, results still do not meet application demands. In part, this comes from the technical complexity of the task: compared to multispectral pansharpening, many more bands are involved, in a spectral range only partially covered by the panchromatic component and with overwhelming noise. However, another major limiting factor is the absence of a comprehensive framework for the rapid development and accurate evaluation of new methods. This paper attempts to address this issue. We started by designing a dataset large and diverse enough to allow reliable training (for data-driven methods) and testing of new methods. Then, we selected a set of state-of-the-art methods, following different approaches, characterized by promising performance, and reimplemented them in a single PyTorch framework. Finally, we carried out a critical comparative analysis of all methods, using the most accredited quality indicators. The analysis highlights the main limitations of current solutions in terms of spectral/spatial quality and computational efficiency, and suggests promising research directions. To ensure full reproducibility of the results and support future research, the framework (including codes, evaluation procedures and links to the dataset) is shared on https://github.com/matciotola/hyperspectral_pansharpening_toolbox, as a single Python-based reference benchmark toolbox.

A Comprehensive Survey for Hyperspectral Image Classification: The Evolution from Conventional to Transformers

May 09, 2024

Abstract:Hyperspectral Image Classification (HSC) is a challenging task due to the high dimensionality and complex nature of Hyperspectral (HS) data. Traditional Machine Learning approaches while effective, face challenges in real-world data due to varying optimal feature sets, subjectivity in human-driven design, biases, and limitations. Traditional approaches encounter the curse of dimensionality, struggle with feature selection and extraction, lack spatial information consideration, exhibit limited robustness to noise, face scalability issues, and may not adapt well to complex data distributions. In recent years, Deep Learning (DL) techniques have emerged as powerful tools for addressing these challenges. This survey provides a comprehensive overview of the current trends and future prospects in HSC, focusing on the advancements from DL models to the emerging use of Transformers. We review the key concepts, methodologies, and state-of-the-art approaches in DL for HSC. We explore the potential of Transformer-based models in HSC, outlining their benefits and challenges. We also delve into emerging trends in HSC, as well as thorough discussions on Explainable AI and Interoperability concepts along with Diffusion Models (image denoising, feature extraction, and image fusion). Lastly, we address several open challenges and research questions pertinent to HSC. Comprehensive experimental results have been undertaken using three HS datasets to verify the efficacy of various conventional DL models and Transformers. Finally, we outline future research directions and potential applications that can further enhance the accuracy and efficiency of HSC. The Source code is available at \href{https://github.com/mahmad00/Conventional-to-Transformer-for-Hyperspectral-Image-Classification-Survey-2024}{github.com/mahmad00}.

Band-wise Hyperspectral Image Pansharpening using CNN Model Propagation

Nov 11, 2023

Abstract:Hyperspectral pansharpening is receiving a growing interest since the last few years as testified by a large number of research papers and challenges. It consists in a pixel-level fusion between a lower-resolution hyperspectral datacube and a higher-resolution single-band image, the panchromatic image, with the goal of providing a hyperspectral datacube at panchromatic resolution. Thanks to their powerful representational capabilities, deep learning models have succeeded to provide unprecedented results on many general purpose image processing tasks. However, when moving to domain specific problems, as in this case, the advantages with respect to traditional model-based approaches are much lesser clear-cut due to several contextual reasons. Scarcity of training data, lack of ground-truth, data shape variability, are some such factors that limit the generalization capacity of the state-of-the-art deep learning networks for hyperspectral pansharpening. To cope with these limitations, in this work we propose a new deep learning method which inherits a simple single-band unsupervised pansharpening model nested in a sequential band-wise adaptive scheme, where each band is pansharpened refining the model tuned on the preceding one. By doing so, a simple model is propagated along the wavelength dimension, adaptively and flexibly, with no need to have a fixed number of spectral bands, and, with no need to dispose of large, expensive and labeled training datasets. The proposed method achieves very good results on our datasets, outperforming both traditional and deep learning reference methods. The implementation of the proposed method can be found on https://github.com/giu-guarino/R-PNN

A Benchmarking Protocol for SAR Colorization: From Regression to Deep Learning Approaches

Oct 12, 2023

Abstract:Synthetic aperture radar (SAR) images are widely used in remote sensing. Interpreting SAR images can be challenging due to their intrinsic speckle noise and grayscale nature. To address this issue, SAR colorization has emerged as a research direction to colorize gray scale SAR images while preserving the original spatial information and radiometric information. However, this research field is still in its early stages, and many limitations can be highlighted. In this paper, we propose a full research line for supervised learning-based approaches to SAR colorization. Our approach includes a protocol for generating synthetic color SAR images, several baselines, and an effective method based on the conditional generative adversarial network (cGAN) for SAR colorization. We also propose numerical assessment metrics for the problem at hand. To our knowledge, this is the first attempt to propose a research line for SAR colorization that includes a protocol, a benchmark, and a complete performance evaluation. Our extensive tests demonstrate the effectiveness of our proposed cGAN-based network for SAR colorization. The code will be made publicly available.

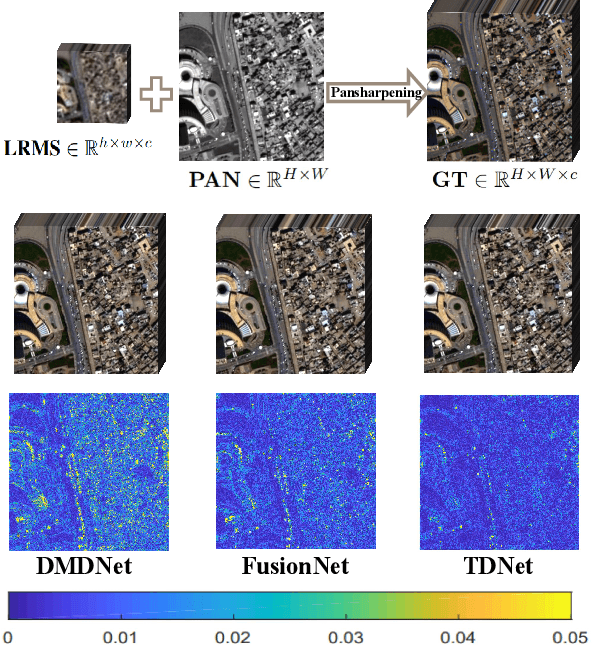

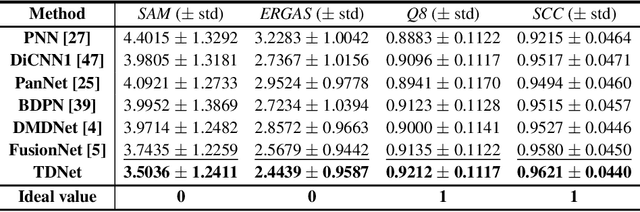

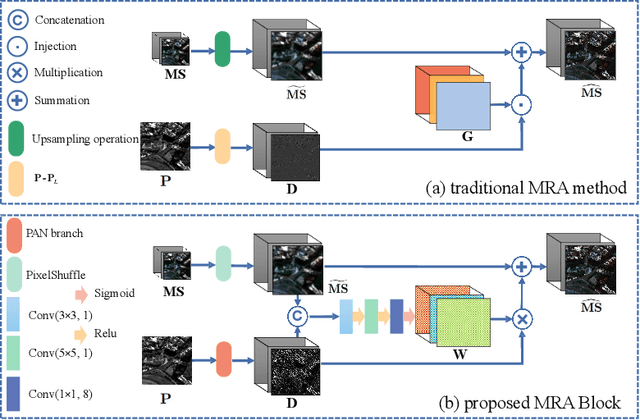

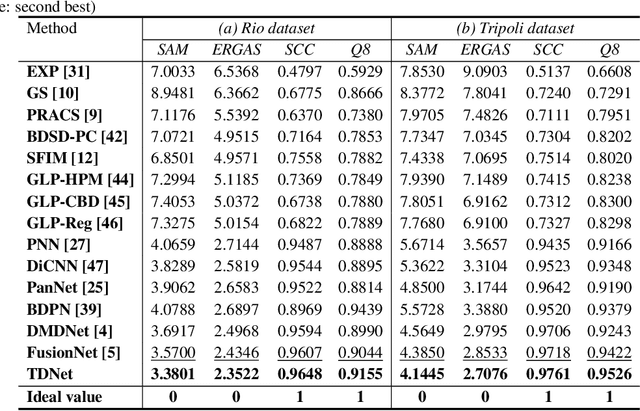

A Triple-Double Convolutional Neural Network for Panchromatic Sharpening

Dec 04, 2021

Abstract:Pansharpening refers to the fusion of a panchromatic image with a high spatial resolution and a multispectral image with a low spatial resolution, aiming to obtain a high spatial resolution multispectral image. In this paper, we propose a novel deep neural network architecture with level-domain based loss function for pansharpening by taking into account the following double-type structures, \emph{i.e.,} double-level, double-branch, and double-direction, called as triple-double network (TDNet). By using the structure of TDNet, the spatial details of the panchromatic image can be fully exploited and utilized to progressively inject into the low spatial resolution multispectral image, thus yielding the high spatial resolution output. The specific network design is motivated by the physical formula of the traditional multi-resolution analysis (MRA) methods. Hence, an effective MRA fusion module is also integrated into the TDNet. Besides, we adopt a few ResNet blocks and some multi-scale convolution kernels to deepen and widen the network to effectively enhance the feature extraction and the robustness of the proposed TDNet. Extensive experiments on reduced- and full-resolution datasets acquired by WorldView-3, QuickBird, and GaoFen-2 sensors demonstrate the superiority of the proposed TDNet compared with some recent state-of-the-art pansharpening approaches. An ablation study has also corroborated the effectiveness of the proposed approach.

Hyperspectral Image Super-resolution via Deep Spatio-spectral Convolutional Neural Networks

May 29, 2020

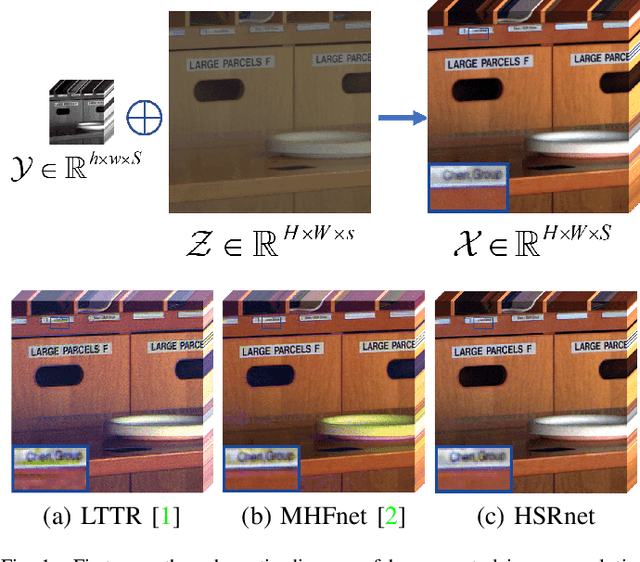

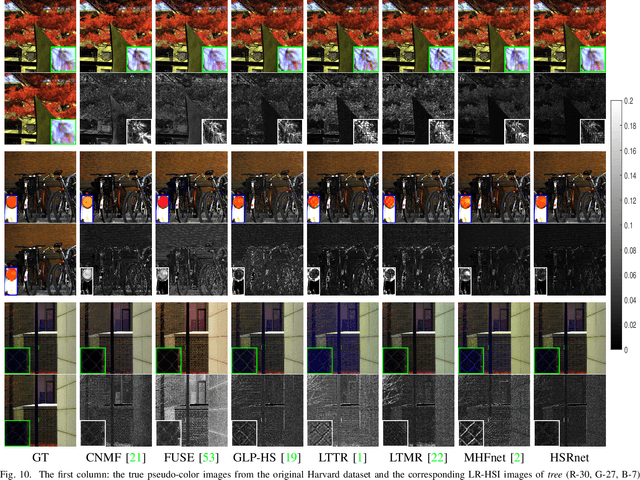

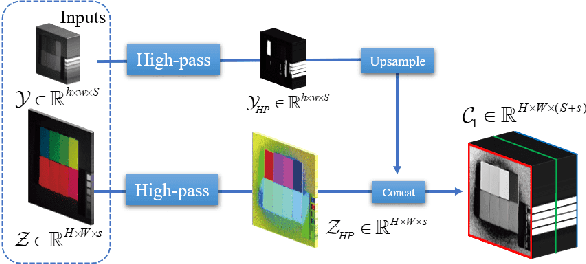

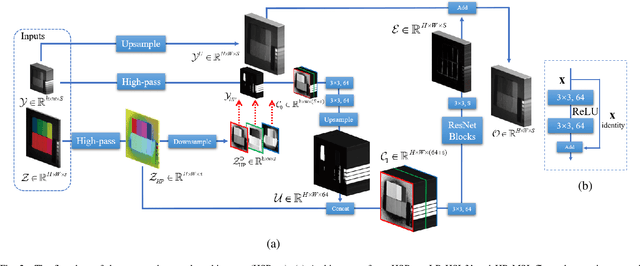

Abstract:Hyperspectral images are of crucial importance in order to better understand features of different materials. To reach this goal, they leverage on a high number of spectral bands. However, this interesting characteristic is often paid by a reduced spatial resolution compared with traditional multispectral image systems. In order to alleviate this issue, in this work, we propose a simple and efficient architecture for deep convolutional neural networks to fuse a low-resolution hyperspectral image (LR-HSI) and a high-resolution multispectral image (HR-MSI), yielding a high-resolution hyperspectral image (HR-HSI). The network is designed to preserve both spatial and spectral information thanks to an architecture from two folds: one is to utilize the HR-HSI at a different scale to get an output with a satisfied spectral preservation; another one is to apply concepts of multi-resolution analysis to extract high-frequency information, aiming to output high quality spatial details. Finally, a plain mean squared error loss function is used to measure the performance during the training. Extensive experiments demonstrate that the proposed network architecture achieves best performance (both qualitatively and quantitatively) compared with recent state-of-the-art hyperspectral image super-resolution approaches. Moreover, other significant advantages can be pointed out by the use of the proposed approach, such as, a better network generalization ability, a limited computational burden, and a robustness with respect to the number of training samples.

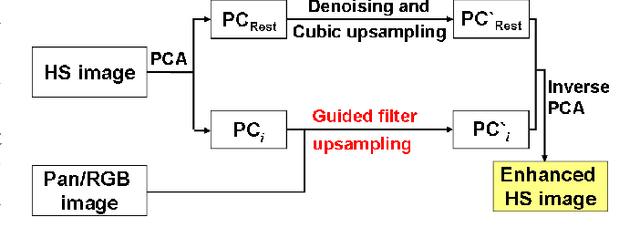

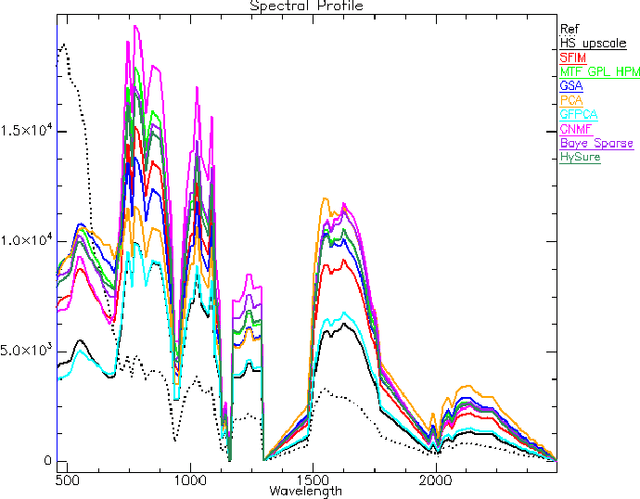

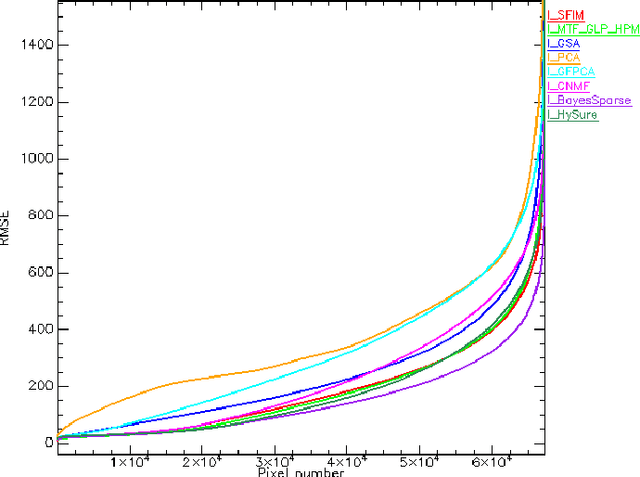

Hyperspectral pansharpening: a review

Apr 17, 2015

Abstract:Pansharpening aims at fusing a panchromatic image with a multispectral one, to generate an image with the high spatial resolution of the former and the high spectral resolution of the latter. In the last decade, many algorithms have been presented in the literature for pansharpening using multispectral data. With the increasing availability of hyperspectral systems, these methods are now being adapted to hyperspectral images. In this work, we compare new pansharpening techniques designed for hyperspectral data with some of the state of the art methods for multispectral pansharpening, which have been adapted for hyperspectral data. Eleven methods from different classes (component substitution, multiresolution analysis, hybrid, Bayesian and matrix factorization) are analyzed. These methods are applied to three datasets and their effectiveness and robustness are evaluated with widely used performance indicators. In addition, all the pansharpening techniques considered in this paper have been implemented in a MATLAB toolbox that is made available to the community.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge