Fengming Zhou

Optimized Biomedical Question-Answering Services with LLM and Multi-BERT Integration

Oct 11, 2024

Abstract: We present a refined approach to biomedical question-answering (QA) services by integrating large language models (LLMs) with Multi-BERT configurations. By enhancing the ability to process and prioritize vast amounts of complex biomedical data, this system aims to support healthcare professionals in delivering better patient outcomes and informed decision-making. Through innovative use of BERT and BioBERT models, combined with a multi-layer perceptron (MLP) layer, we enable more specialized and efficient responses to the growing demands of the healthcare sector. Our approach not only addresses the challenge of overfitting by freezing one BERT model while training another but also improves the overall adaptability of QA services. The use of extensive datasets, such as BioASQ and BioMRC, demonstrates the system's ability to synthesize critical information. This work highlights how advanced language models can make a tangible difference in healthcare, providing reliable and responsive tools for professionals to manage complex information, ultimately serving the broader goal of improved care and data-driven insights.

Synslator: An Interactive Machine Translation Tool with Online Learning

Oct 08, 2023

Abstract: Interactive machine translation (IMT) has emerged as a progression of the computer-aided translation paradigm, where the machine translation system and the human translator collaborate to produce high-quality translations. This paper introduces Synslator, a user-friendly computer-aided translation (CAT) tool that not only supports IMT, but is also adept at online learning with real-time translation memories. To accommodate various deployment environments for CAT services, Synslator integrates two different neural translation models to handle translation memories for online learning. Additionally, the system employs a language model to enhance the fluency of translations in an interactive mode. In evaluation, we have confirmed the effectiveness of online learning through the translation models, and have observed a 13% increase in post-editing efficiency with the interactive functionalities of Synslator. A tutorial video is available at: https://youtu.be/K0vRsb2lTt8.

Non-imaging real-time detection and tracking of fast-moving objects using a single-pixel detector

Sep 10, 2021

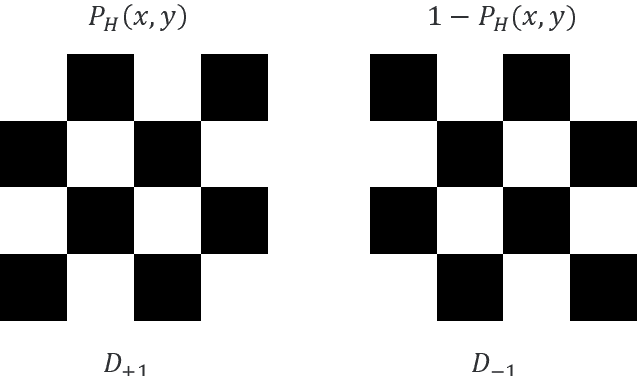

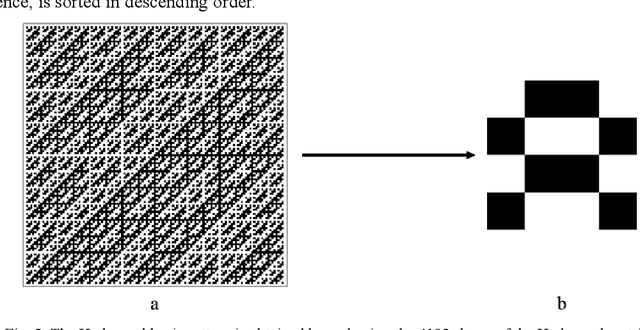

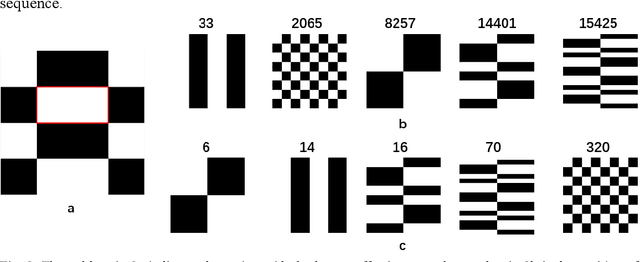

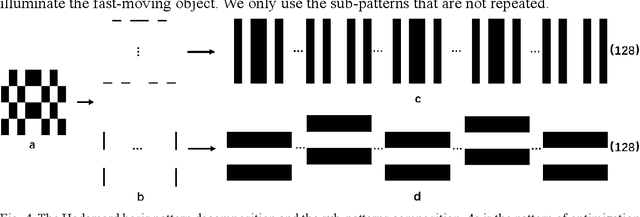

Abstract: Detection and tracking of fast-moving objects have widespread utility in many fields. However, meeting this demand for fast and efficient detection and tracking with image-based techniques is problematic, owing to the complex calculations involved and limited data processing capabilities. To tackle this problem, we propose an image-free method to achieve real-time detection and tracking of fast-moving objects. A spatial light modulator illuminates the fast-moving object with Hadamard patterns, and the resulting light signal is collected by a single-pixel detector. The single-pixel measurement values are used directly to recover the position information, without image reconstruction. Furthermore, a new sampling method is used to optimize the pattern projection scheme and achieve an ultra-low sampling rate. Compared with state-of-the-art methods, our approach not only handles real-time detection and tracking, but also requires little computation and is highly efficient. We experimentally demonstrate that the proposed method, using a 22 kHz digital micro-mirror device, can reach a frame rate of 105 fps at a 1.28% sampling rate while tracking. Our method departs from traditional tracking approaches in that it achieves real-time object tracking without image reconstruction.
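The figures quoted in this abstract can be related by simple arithmetic. The sketch below is not from the paper: it assumes the digital micro-mirror device runs at its full 22 kHz pattern rate, that every tracked frame uses the same number of patterns, and that "sampling rate" means patterns per frame divided by the number of pixels in the pattern. The function names are hypothetical, and the implied resolution is an inference from these assumptions, not a value stated in the abstract.

```python
import math


def patterns_per_frame(dmd_rate_hz: float, frame_rate_fps: float) -> float:
    """Patterns available for each tracked frame (assumes full DMD rate)."""
    return dmd_rate_hz / frame_rate_fps


def implied_pixel_count(patterns: float, sampling_rate: float) -> float:
    """Pixel count implied if sampling rate = patterns / pixels (assumption)."""
    return patterns / sampling_rate


# Values quoted in the abstract.
dmd_rate_hz = 22_000.0
frame_rate_fps = 105.0
sampling_rate = 0.0128  # 1.28%

patterns = patterns_per_frame(dmd_rate_hz, frame_rate_fps)
pixels = implied_pixel_count(patterns, sampling_rate)
side = math.sqrt(pixels)  # side length if the pattern is square (assumption)

print(f"patterns per frame: {patterns:.1f}")
print(f"implied pixel count: {pixels:.0f}")
print(f"implied square side: {side:.1f}")
```

Under these assumptions, roughly 210 patterns are projected per frame, which would correspond to a pattern of about 128 by 128 pixels. If the experiment displays extra patterns per measurement (for example complementary pairs), the implied resolution would differ.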

"Bilingual Expert" Can Find Translation Errors

Aug 03, 2018

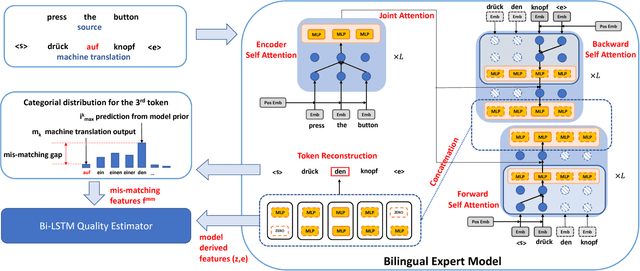

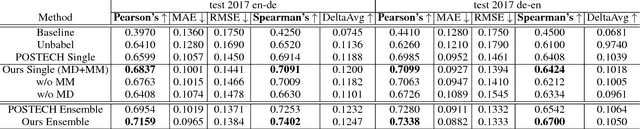

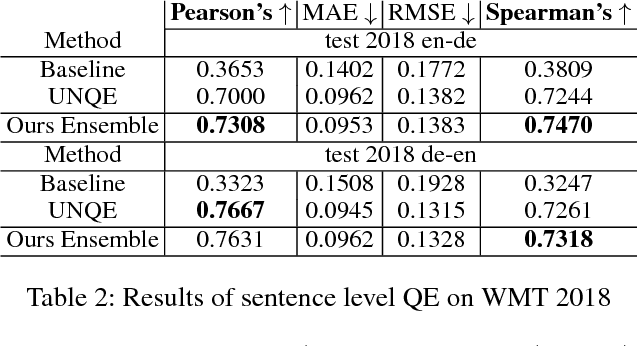

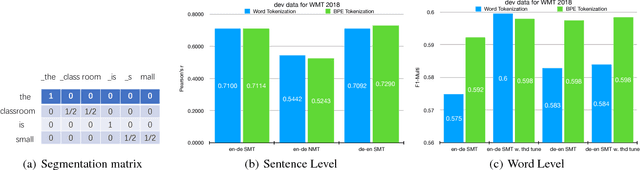

Abstract: Recent advances in statistical machine translation via the adoption of neural sequence-to-sequence models empower end-to-end systems to achieve state-of-the-art results on many WMT benchmarks. The performance of such machine translation (MT) systems is usually evaluated with the automatic metric BLEU when golden references are provided for validation. However, for model inference or production deployment, golden references are rarely available or require expensive human annotation with bilingual expertise. To address the issue of quality evaluation (QE) without references, we propose a general framework for automatic evaluation of translation output for most WMT quality evaluation tasks. We first build a conditional target language model with a novel bidirectional transformer, named the neural bilingual expert model, which is pre-trained on large parallel corpora for feature extraction. For QE inference, the bilingual expert model can simultaneously produce the joint latent representation between the source and the translation, and real-valued measurements of possibly erroneous tokens based on the prior knowledge learned from parallel data. Subsequently, the features are fed into a simple Bi-LSTM predictive model for quality evaluation. The experimental results show that our approach achieves state-of-the-art performance in the quality estimation track of WMT 2017/2018.
