Cheng Luo

REACT 2025: the Third Multiple Appropriate Facial Reaction Generation Challenge

May 22, 2025

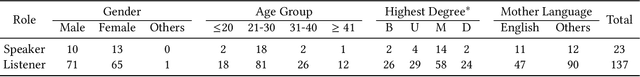

Abstract: In dyadic interactions, a broad spectrum of human facial reactions might be appropriate for responding to each human speaker behaviour. Following the successful organisation of the REACT 2023 and REACT 2024 challenges, we propose the REACT 2025 challenge, which encourages the development and benchmarking of Machine Learning (ML) models that can be used to generate multiple appropriate, diverse, realistic and synchronised human-style facial reactions expressed by human listeners in response to an input stimulus (i.e., audio-visual behaviours expressed by their corresponding speakers). As a key part of the challenge, we provide challenge participants with the first natural and large-scale multi-modal MAFRG dataset (called MARS) recording 137 human-human dyadic interactions containing a total of 2856 interaction sessions covering five different topics. In addition, this paper also presents the challenge guidelines and the performance of our baselines on the two proposed sub-challenges: Offline MAFRG and Online MAFRG, respectively. The challenge baseline code is publicly available at https://github.com/reactmultimodalchallenge/baseline_react2025

MOM: Memory-Efficient Offloaded Mini-Sequence Inference for Long Context Language Models

Apr 16, 2025

Abstract: Long-context language models exhibit impressive performance but remain challenging to deploy due to high GPU memory demands during inference. We propose Memory-efficient Offloaded Mini-sequence Inference (MOM), a method that partitions critical layers into smaller "mini-sequences" and integrates seamlessly with KV cache offloading. Experiments on various Llama, Qwen, and Mistral models demonstrate that MOM reduces peak memory usage by over 50% on average. On Meta-Llama-3.2-8B, MOM extends the maximum context length from 155k to 455k tokens on a single A100 80GB GPU, while keeping outputs identical and not compromising accuracy. MOM also maintains highly competitive throughput due to minimal computational overhead and efficient last-layer processing. Compared to traditional chunked prefill methods, MOM achieves a 35% greater context length extension. More importantly, our method drastically reduces prefill memory consumption, eliminating it as the longstanding dominant memory bottleneck during inference. This breakthrough fundamentally changes research priorities, redirecting future efforts from prefill-stage optimizations to improving decode-stage residual KV cache efficiency.
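
The mini-sequence idea can be illustrated with a toy example. The sketch below is not the MOM implementation: it assumes the partitioned layers are token-wise (each token is processed independently, as in an MLP), and the layer, its `EXPANSION` factor, and all function names are hypothetical. It shows that processing the sequence in chunks gives identical outputs while materializing fewer intermediate values at once.

```python
import math

EXPANSION = 4  # hypothetical hidden-size multiplier of the toy layer


def token_wise_layer(tokens):
    """Toy token-wise layer: expand each token, apply tanh, reduce back.

    Returns the outputs and the number of intermediate values that were
    materialized at once for this call.
    """
    hidden = [[math.tanh(v * (k + 1)) for v in tok for k in range(EXPANSION)]
              for tok in tokens]
    outputs = [[sum(h[i * EXPANSION:(i + 1) * EXPANSION]) for i in range(len(tok))]
               for tok, h in zip(tokens, hidden)]
    return outputs, sum(len(h) for h in hidden)


def run_full(sequence):
    """Process the whole sequence in one call."""
    return token_wise_layer(sequence)


def run_mini_sequences(sequence, mini_len):
    """Process the sequence chunk by chunk and track the peak intermediate size."""
    outputs, peak = [], 0
    for start in range(0, len(sequence), mini_len):
        chunk_out, chunk_hidden = token_wise_layer(sequence[start:start + mini_len])
        outputs.extend(chunk_out)
        peak = max(peak, chunk_hidden)
    return outputs, peak


sequence = [[0.1 * t, -0.2 * t] for t in range(8)]  # 8 tokens, 2 features each
full_out, full_peak = run_full(sequence)
mini_out, mini_peak = run_mini_sequences(sequence, mini_len=2)
print(full_out == mini_out, full_peak, mini_peak)  # True 64 16
```

In this toy setting the peak intermediate size scales with the mini-sequence length rather than the full sequence length, which is the kind of saving the abstract describes for prefill.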

Algorithm Design and Prototype Validation for Reconfigurable Intelligent Sensing Surface: Forward-Only Transmission

Mar 31, 2025

Abstract: Sensing-assisted communication schemes have recently garnered significant research attention. In this work, we design a dual-function reconfigurable intelligent surface (RIS), integrating both active and passive elements, referred to as the reconfigurable intelligent sensing surface (RISS), to enhance communication. By leveraging sensing results from the active elements, we propose communication enhancement and robust interference suppression schemes for both near-field and far-field models, implemented through the passive elements. These schemes remove the need for base station (BS) feedback for RISS control, simplifying the communication process by replacing traditional channel state information (CSI) feedback with real-time sensing from the active elements. The proposed schemes are theoretically analyzed and then validated using software-defined radio (SDR). Experimental results demonstrate the effectiveness of the sensing algorithms in real-world scenarios, such as direction of arrival (DOA) estimation and radio frequency (RF) identification recognition. Moreover, the RISS-assisted communication system shows strong performance in communication enhancement and interference suppression, particularly in near-field models.

Low-Complexity Beamforming Design for Null Space-based Simultaneous Wireless Information and Power Transfer Systems

Mar 11, 2025

Abstract: Simultaneous wireless information and power transfer (SWIPT) is a promising technology for the upcoming sixth-generation (6G) communication networks, enabling internet of things (IoT) devices and sensors to extend their operational lifetimes. In this paper, we propose a SWIPT scheme by projecting the interference signals from both intra-wireless information transfer (WIT) and inter-wireless energy transfer (WET) into the null space, simplifying the system into a point-to-point WIT and WET problem. Upon further analysis, we confirm that dedicated energy beamforming is unnecessary. In addition, we develop a low-complexity algorithm to solve the problem efficiently, further reducing computational overhead. Numerical results validate our analysis, showing that the computational complexity is reduced by 97.5% and 99.96% for the cases of $K^I = K^E = 2$, $M = 4$ and $K^I = K^E = 16$, $M = 64$, respectively.
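
The null-space projection mentioned above can be sketched in a few lines. This is a simplified illustration rather than the paper's algorithm: it assumes real-valued channels, a single user to be protected from interference, and $M = 4$ antennas; the channel vectors and function names are hypothetical.

```python
def dot(a, b):
    """Inner product of two real vectors."""
    return sum(x * y for x, y in zip(a, b))


def project_onto_null_space(g, h):
    """Remove from g its component along h, so the result is orthogonal to h."""
    scale = dot(h, g) / dot(h, h)
    return [gi - scale * hi for gi, hi in zip(g, h)]


# Hypothetical channels for M = 4 transmit antennas.
h_protected = [1.0, 2.0, 0.0, -1.0]  # user that must receive no interference
g_intended = [0.5, 1.0, 1.0, 2.0]    # user the beam is meant for

w = project_onto_null_space(g_intended, h_protected)
leak = dot(h_protected, w)  # interference seen by the protected user
gain = dot(g_intended, w)   # useful signal gain at the intended user
print(abs(leak) < 1e-12, gain > 0)  # True True
```

Once every beam lies in the null space of the other users' channels, each link sees no interference and can be treated as a point-to-point problem, which is the simplification the abstract refers to.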

Bedrock Models in Communication and Sensing: Advancing Generalization, Transferability, and Performance

Mar 11, 2025

Abstract: Deep learning (DL) has emerged as a powerful tool for addressing the intricate challenges inherent in communication and sensing systems, significantly enhancing the intelligence of future sixth-generation (6G) networks. A substantial body of research has highlighted the promise of DL-based techniques in these domains. However, in addition to improving accuracy, new challenges must be addressed regarding the generalization and transferability of DL-based systems. To tackle these issues, this paper introduces a series of mathematically grounded and modularized models, referred to as bedrock models, specifically designed for integration into both communication and sensing systems. Due to their modular architecture, these models can be seamlessly incorporated into existing communication and sensing frameworks. For communication systems, the proposed models demonstrate substantial performance improvements while also exhibiting strong transferability, enabling direct parameter sharing across different tasks, which greatly facilitates practical deployment. In sensing applications, the integration of the bedrock models into existing systems results in superior performance, reducing delay and Doppler estimation errors by an order of magnitude compared to traditional methods. Additionally, a pre-equalization strategy based on the bedrock models is proposed for the transmitter. By leveraging sensing information, the transmitted communication signal is dynamically adjusted without altering the communication model pre-trained in AWGN channels. This adaptation enables the system to effectively cope with doubly dispersive channels, restoring the received signal to an AWGN-like condition and achieving near-optimal performance. Simulation results substantiate the effectiveness and transferability of the proposed bedrock models, underscoring their potential to advance both communication and sensing systems.

CaseGen: A Benchmark for Multi-Stage Legal Case Documents Generation

Feb 25, 2025

Abstract: Legal case documents play a critical role in judicial proceedings. As the number of cases continues to rise, the reliance on manual drafting of legal case documents is facing increasing pressure and challenges. The development of large language models (LLMs) offers a promising solution for automating document generation. However, existing benchmarks fail to fully capture the complexities involved in drafting legal case documents in real-world scenarios. To address this gap, we introduce CaseGen, a benchmark for multi-stage legal case document generation in the Chinese legal domain. CaseGen is based on 500 real case samples annotated by legal experts and covers seven essential case sections. It supports four key tasks: drafting defense statements, writing trial facts, composing legal reasoning, and generating judgment results. To the best of our knowledge, CaseGen is the first benchmark designed to evaluate LLMs in the context of legal case document generation. To ensure an accurate and comprehensive evaluation, we design an LLM-as-a-judge evaluation framework and validate its effectiveness through human annotations. We evaluate several widely used general-domain LLMs and legal-specific LLMs, highlighting their limitations in case document generation and pinpointing areas for potential improvement. This work marks a step toward a more effective framework for automating legal case document drafting, paving the way for the reliable application of AI in the legal field. The dataset and code are publicly available at https://github.com/CSHaitao/CaseGen.

HeadInfer: Memory-Efficient LLM Inference by Head-wise Offloading

Feb 18, 2025

Abstract: Transformer-based large language models (LLMs) demonstrate impressive performance in long context generation. Extending the context length has disproportionately shifted the memory footprint of LLMs during inference to the key-value cache (KV cache). In this paper, we propose HEADINFER, which offloads the KV cache to CPU RAM while avoiding the need to fully store the KV cache for any transformer layer on the GPU. HEADINFER employs a fine-grained, head-wise offloading strategy, keeping only the KV cache of selected attention heads on the GPU while computing attention output dynamically. Through roofline analysis, we demonstrate that HEADINFER maintains computational efficiency while significantly reducing memory footprint. We evaluate HEADINFER on the Llama-3-8B model with a 1-million-token sequence, reducing the GPU memory footprint of the KV cache from 128 GB to 1 GB and the total GPU memory usage from 207 GB to 17 GB, achieving a 92% reduction compared to BF16 baseline inference. Notably, HEADINFER enables 4-million-token inference with an 8B model on a single consumer GPU with 24GB memory (e.g., NVIDIA RTX 4090) without approximation methods.
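
The 128 GB KV cache figure can be reproduced with simple arithmetic. The sketch below uses the published Llama-3-8B shape (32 layers, 8 key/value heads per layer, head dimension 128) and BF16 storage; reading "1 million tokens" as 2^20 tokens is an assumption, and the function name is hypothetical.

```python
NUM_LAYERS = 32       # transformer layers in Llama-3-8B
NUM_KV_HEADS = 8      # key/value heads per layer (grouped-query attention)
HEAD_DIM = 128        # dimension of each head
BYTES_PER_VALUE = 2   # BF16
NUM_TOKENS = 2 ** 20  # assumed reading of "1 million tokens"
GIB = 2 ** 30


def kv_cache_bytes(num_tokens, num_layers=NUM_LAYERS, num_kv_heads=NUM_KV_HEADS):
    """Bytes needed to store keys and values for the given heads and tokens."""
    # The leading factor 2 accounts for storing both K and V.
    return 2 * num_layers * num_kv_heads * HEAD_DIM * BYTES_PER_VALUE * num_tokens


full_cache_gib = kv_cache_bytes(NUM_TOKENS) / GIB
one_head_gib = kv_cache_bytes(NUM_TOKENS, num_layers=1, num_kv_heads=1) / GIB
print(full_cache_gib, one_head_gib)  # 128.0 0.5
```

Under these assumptions a single head's cache occupies 0.5 GiB, which gives a sense of why head-wise residency shrinks the GPU footprint so sharply. How the reported 1 GB figure is composed is not stated in the abstract.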

Finedeep: Mitigating Sparse Activation in Dense LLMs via Multi-Layer Fine-Grained Experts

Feb 18, 2025

Abstract: Large language models have demonstrated exceptional performance across a wide range of tasks. However, dense models usually suffer from sparse activation, where many activation values tend towards zero (i.e., being inactivated). We argue that this could restrict the efficient exploration of model representation space. To mitigate this issue, we propose Finedeep, a deep-layered fine-grained expert architecture for dense models. Our framework partitions the feed-forward neural network layers of traditional dense models into small experts and arranges them across multiple sub-layers. A novel routing mechanism is proposed to determine each expert's contribution. We conduct extensive experiments across various model sizes, demonstrating that our approach significantly outperforms traditional dense architectures in terms of perplexity and benchmark performance while maintaining a comparable number of parameters and floating-point operations. Moreover, we find that Finedeep achieves optimal results when balancing depth and width, specifically by adjusting the number of expert sub-layers and the number of experts per sub-layer. Empirical results confirm that Finedeep effectively alleviates sparse activation and efficiently utilizes representation capacity in dense models.

DSMoE: Matrix-Partitioned Experts with Dynamic Routing for Computation-Efficient Dense LLMs

Feb 18, 2025

Abstract: As large language models continue to scale, computational costs and resource consumption have emerged as significant challenges. While existing sparsification methods like pruning reduce computational overhead, they risk losing model knowledge through parameter removal. This paper proposes DSMoE (Dynamic Sparse Mixture-of-Experts), a novel approach that achieves sparsification by partitioning pre-trained FFN layers into computational blocks. We implement adaptive expert routing using sigmoid activation and straight-through estimators, enabling tokens to flexibly access different aspects of model knowledge based on input complexity. Additionally, we introduce a sparsity loss term to balance performance and computational efficiency. Extensive experiments on LLaMA models demonstrate that under equivalent computational constraints, DSMoE achieves superior performance compared to existing pruning and MoE approaches across language modeling and downstream tasks, particularly excelling in generation tasks. Analysis reveals that DSMoE learns distinctive layerwise activation patterns, providing new insights for future MoE architecture design.
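
A forward pass through a partitioned FFN with sigmoid gating might look like the sketch below. It is an illustration under stated assumptions, not the DSMoE implementation: the binary gate with a 0.5 threshold, the ReLU activation, the router weights, and all names are hypothetical, and the straight-through estimator (which only affects the backward pass during training) is not shown.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def ffn_block(x, w_in_rows, w_out_cols):
    """FFN restricted to some hidden units: out = sum_j relu(w_in_j . x) * w_out_j."""
    out = [0.0] * len(x)
    for w_in, w_out in zip(w_in_rows, w_out_cols):
        h = max(0.0, sum(a * b for a, b in zip(w_in, x)))
        for i, wo in enumerate(w_out):
            out[i] += h * wo
    return out


def gated_ffn(x, w_in, w_out, router, block_size, threshold=0.5):
    """Split the hidden units into blocks and run only the blocks whose gate opens."""
    out, active = [0.0] * len(x), []
    for b, start in enumerate(range(0, len(w_in), block_size)):
        score = sigmoid(sum(r * v for r, v in zip(router[b], x)))
        if score <= threshold:
            continue  # a closed gate skips this block's computation entirely
        active.append(b)
        block_out = ffn_block(x, w_in[start:start + block_size],
                              w_out[start:start + block_size])
        out = [o + bo for o, bo in zip(out, block_out)]
    return out, active


x = [1.0, -2.0]
w_in = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]   # 4 hidden units
w_out = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [2.0, -1.0]]
router = [[1.0, 0.0], [0.0, 1.0]]                           # one row per block

dense_out = ffn_block(x, w_in, w_out)
sparse_out, active_blocks = gated_ffn(x, w_in, w_out, router, block_size=2)
all_open_out, _ = gated_ffn(x, w_in, w_out, router, block_size=2, threshold=0.0)
print(dense_out, sparse_out, active_blocks)  # [3.0, -1.0] [1.0, 0.0] [0]
```

With every gate open the partitioned layer reproduces the dense FFN output, so the pre-trained weights are kept intact; sparsity comes only from which blocks a given token activates.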

Tensor-GaLore: Memory-Efficient Training via Gradient Tensor Decomposition

Jan 04, 2025

Abstract: We present Tensor-GaLore, a novel method for efficient training of neural networks with higher-order tensor weights. Many models, particularly those used in scientific computing, employ tensor-parameterized layers to capture complex, multidimensional relationships. Scaling these methods to high-resolution problems makes memory usage grow intractably, and matrix-based optimization methods lead to suboptimal performance and compression. We propose to work directly in the high-order space of the complex tensor parameters, using a tensor factorization of the gradients during optimization. We showcase its effectiveness on Fourier Neural Operators (FNOs), a class of models crucial for solving partial differential equations (PDEs), and support it with theoretical proofs. Across various PDE tasks like the Navier-Stokes and Darcy Flow equations, Tensor-GaLore achieves substantial memory savings, reducing optimizer memory usage by up to 75%. These substantial memory savings across AI for science demonstrate Tensor-GaLore's potential.
