"Information": models, code, and papers

Topic Modeling on Podcast Short-Text Metadata

Jan 12, 2022

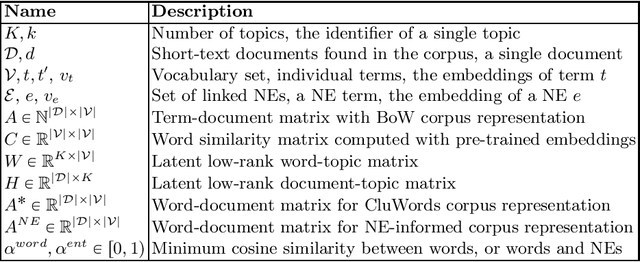

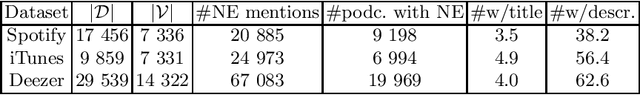

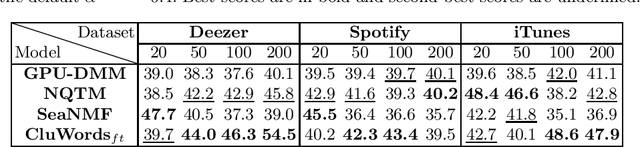

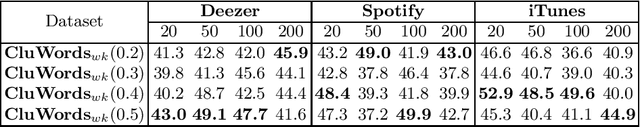

Podcasts have emerged as a massively consumed form of online content, notably due to wider accessibility of production means and scaled distribution through large streaming platforms. Categorization systems and information access technologies typically use topics as the primary way to organize or navigate podcast collections. However, annotating podcasts with topics is still quite problematic because the assigned editorial genres are broad, heterogeneous or misleading, or because of data challenges (e.g. short metadata text, noisy transcripts). Here, we assess the feasibility of discovering relevant topics from podcast metadata (titles and descriptions) using topic modeling techniques for short text. We also propose a new strategy to leverage named entities (NEs), often present in podcast metadata, in a Non-negative Matrix Factorization (NMF) topic modeling framework. Our experiments on two existing datasets from Spotify and iTunes, and on Deezer, a new dataset from an online service providing a catalog of podcasts, show that our proposed document representation, NEiCE, leads to improved topic coherence over the baselines. We release the code for experimental reproducibility of the results.
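As a rough illustration of the NMF topic modeling framework mentioned above, the sketch below factorizes a tiny document-term count matrix with the standard multiplicative updates for the Frobenius objective. It is not the paper's NEiCE representation or its short-text variant: the helper names (`matmul`, `transpose`, `frob_error`, `nmf`), the toy vocabulary, and the counts are all assumptions made for the example.

```python
import random

def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def frob_error(v, w, h):
    """Squared Frobenius distance between v and the product w @ h."""
    wh = matmul(w, h)
    return sum((x - y) ** 2 for rv, rwh in zip(v, wh) for x, y in zip(rv, rwh))

def nmf(v, rank, iters=200, seed=0, eps=1e-9):
    """Factor v (docs x terms) into w (docs x topics) and h (topics x terms)."""
    rng = random.Random(seed)
    n, m = len(v), len(v[0])
    # Strictly positive random initialisation (an assumption of this sketch).
    w = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n)]
    h = [[rng.random() + 0.1 for _ in range(m)] for _ in range(rank)]
    for _ in range(iters):
        # Multiplicative update for h.
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(m)]
             for i in range(rank)]
        # Multiplicative update for w.
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(rank)]
             for i in range(n)]
    return w, h

# Hypothetical toy corpus: rows are podcast descriptions, columns are term counts.
vocab = ["football", "league", "recipe", "cooking"]
v = [
    [2, 1, 0, 0],
    [1, 2, 0, 0],
    [0, 0, 2, 1],
    [0, 0, 1, 2],
]
w, h = nmf(v, rank=2)
```

Each row of `h` can be read as a topic (a non-negative weighting over `vocab`), and each row of `w` gives the topic mixture of one document.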

A Scalable Deep Reinforcement Learning Model for Online Scheduling Coflows of Multi-Stage Jobs for High Performance Computing

Dec 21, 2021

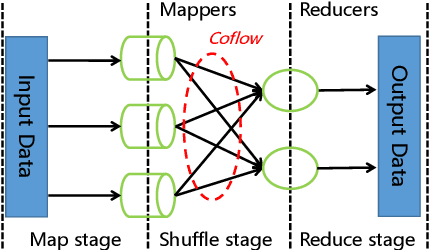

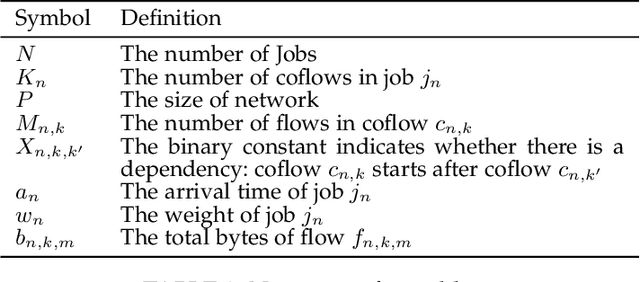

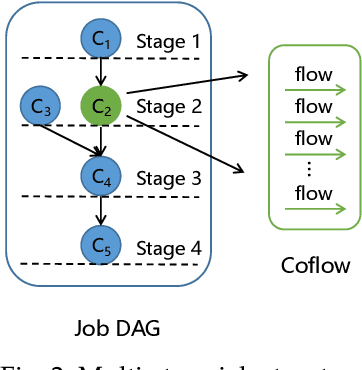

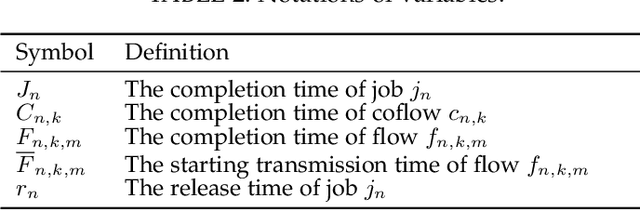

Coflow is a recently proposed networking abstraction to help improve the communication performance of data-parallel computing jobs. In multi-stage jobs, each job consists of multiple coflows and is represented by a Directed Acyclic Graph (DAG). Efficiently scheduling coflows is critical to improve the data-parallel computing performance in data centers. Compared with hand-tuned scheduling heuristics, the existing work DeepWeave [1] uses a Reinforcement Learning (RL) framework to generate highly-efficient coflow scheduling policies automatically. It employs a graph neural network (GNN) to encode the job information in a set of embedding vectors, and feeds a flat embedding vector containing the whole job information to the policy network. However, this method has poor scalability as it is unable to cope with jobs represented by DAGs of arbitrary sizes and shapes, which requires a large policy network for processing a high-dimensional embedding vector that is difficult to train. In this paper, we first utilize a directed acyclic graph neural network (DAGNN) to process the input and propose a novel Pipelined-DAGNN, which can effectively speed up the feature extraction process of the DAGNN. Next, we feed the embedding sequence composed of schedulable coflows instead of a flat embedding of all coflows to the policy network, and output a priority sequence, which makes the size of the policy network depend on only the dimension of features instead of the product of dimension and number of nodes in the job's DAG. Furthermore, to improve the accuracy of the priority scheduling policy, we incorporate the Self-Attention Mechanism into a deep RL model to capture the interaction between different parts of the embedding sequence to make the output priority scores relevant. Based on this model, we then develop a coflow scheduling algorithm for online multi-stage jobs.

Accurate Hydrologic Modeling Using Less Information

Nov 21, 2019

Joint models are a common and important tool in the intersection of machine learning and the physical sciences, particularly in contexts where real-world measurements are scarce. Recent developments in rainfall-runoff modeling, one of the prime challenges in hydrology, show the value of a joint model with shared representation in this important context. However, current state-of-the-art models depend on detailed and reliable attributes characterizing each site to help the model differentiate correctly between the behavior of different sites. This dependency can present a challenge in data-poor regions. In this paper, we show that we can replace the need for such location-specific attributes with a completely data-driven learned embedding, and match previous state-of-the-art results with less information.

Neural Architecture Ranker

Jan 30, 2022

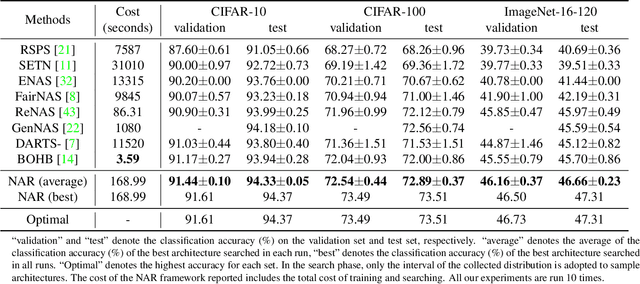

Architecture ranking has recently been advocated to design an efficient and effective performance predictor for Neural Architecture Search (NAS). The previous contrastive method solves the ranking problem by comparing pairs of architectures and predicting their relative performance, which may suffer generalization issues due to local pair-wise comparison. Inspired by the quality stratification phenomenon in the search space, we propose a predictor, namely Neural Architecture Ranker (NAR), from a new and global perspective by exploiting the quality distribution of the whole search space. The NAR learns the similar characteristics of the same quality tier (i.e., level) and distinguishes among different individuals by first matching architectures with the representation of tiers, and then classifying and scoring them. It can capture the features of different quality tiers and thus generalize its ranking ability to the entire search space. Besides, distributions of different quality tiers are also beneficial to guide the sampling procedure, which is free of training a search algorithm and thus simplifies the NAS pipeline. The proposed NAR achieves better performance than the state-of-the-art methods on two widely accepted datasets. On NAS-Bench-101, it finds the architectures with top 0.01‰ performance among the search space and stably focuses on the top architectures. On NAS-Bench-201, it identifies the optimal architectures on CIFAR-10, CIFAR-100, and ImageNet-16-120. We expand and release these two datasets covering detailed cell computational information to boost the study of NAS.

Hybrid 3D Beamforming Relying on Sensor-Based Training and Channel Estimation for Reconfigurable Intelligent Surface Aided TeraHertz MIMO systems

Jan 26, 2022

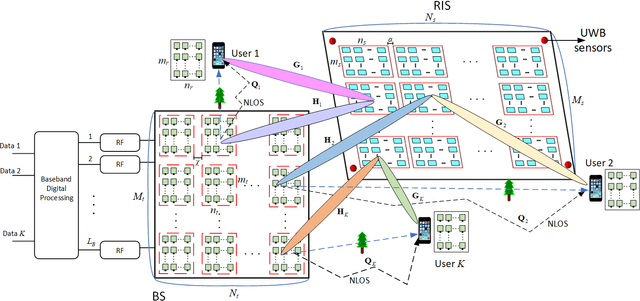

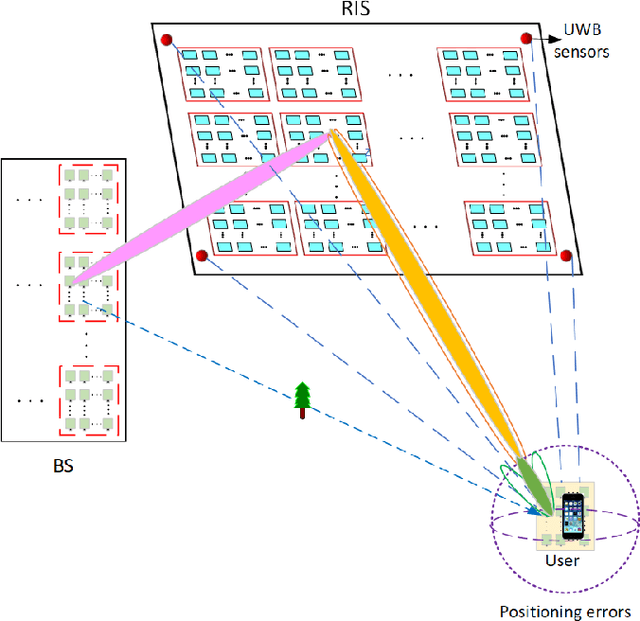

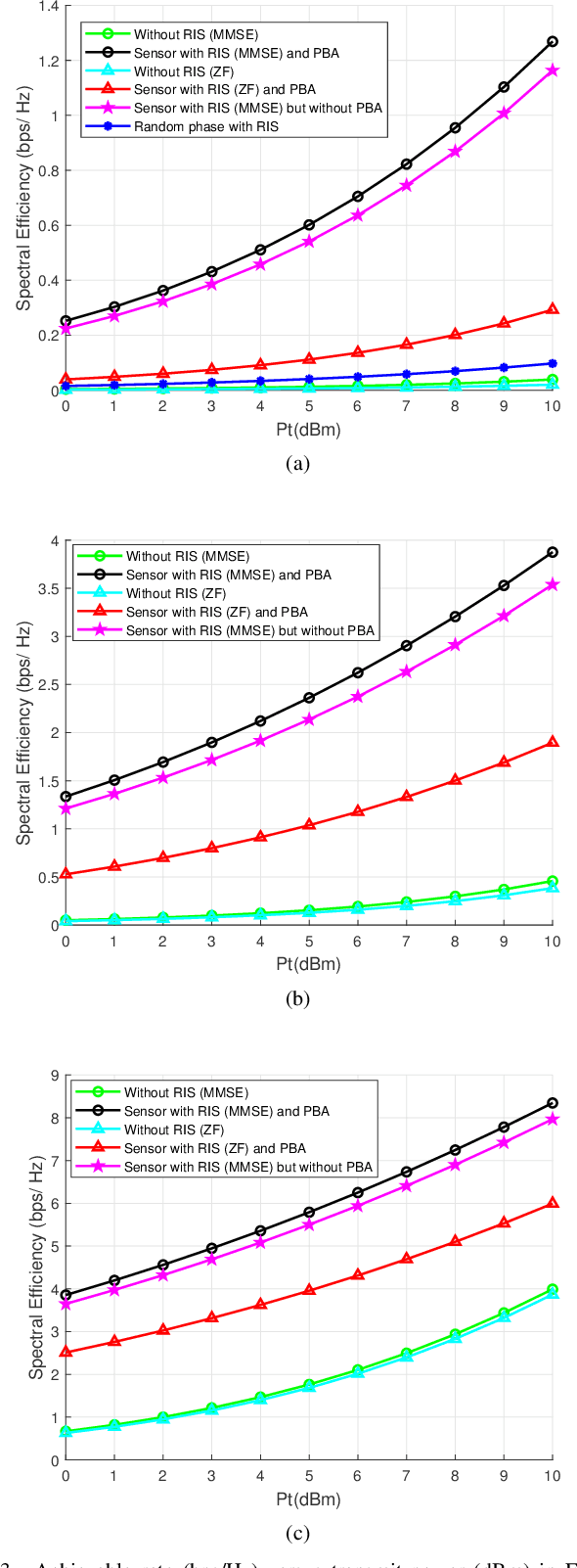

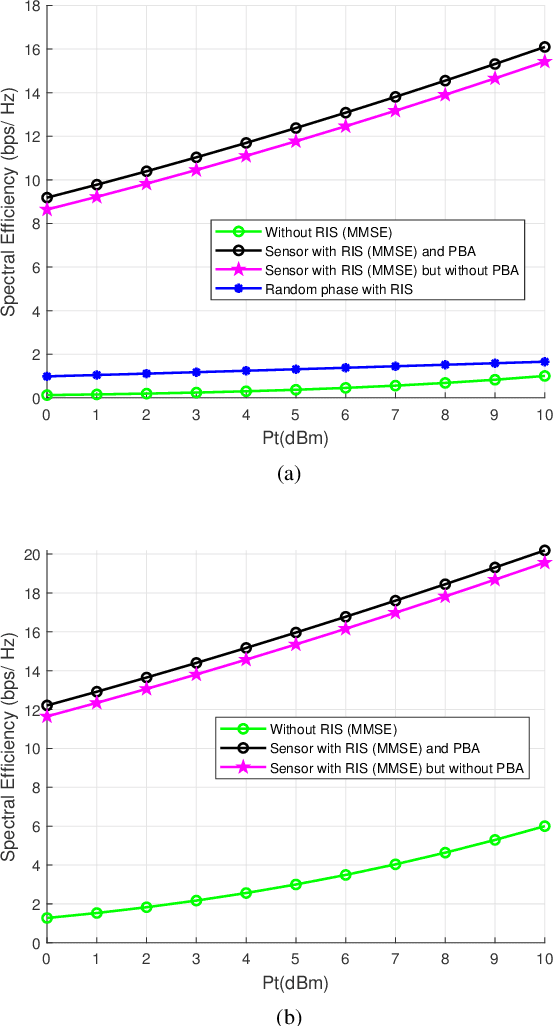

Terahertz (THz) systems have the benefit of high bandwidth and hence are capable of supporting ultra-high data rates, albeit at the cost of high pathloss. Hence they tend to harness high-gain beamforming. Therefore a novel hybrid 3D beamformer relying on sophisticated sensor-based beam training and channel estimation is proposed for Reconfigurable Intelligent Surface (RIS) aided THz MIMO systems. A so-called array-of-subarray based THz BS architecture is adopted and the corresponding sub-RIS structure is proposed. The BS, RIS and receiver antenna arrays of the users are all uniform planar arrays (UPAs). Ultra-wideband (UWB) sensors are integrated into the RIS and the user location information obtained by the UWB sensors is exploited for channel estimation and beamforming. Furthermore, the novel concept of a Precise Beamforming Algorithm (PBA) is proposed, which further improves the beamforming accuracy by circumventing the performance limitations imposed by positioning errors. Moreover, the conditions of maintaining the orthogonality of the RIS-aided THz channel are derived in support of spatial multiplexing. The closed-form expressions of the near-field and far-field pathloss are also derived. Our simulation results show that the proposed scheme accurately estimates the RIS-aided THz channel and the spectral efficiency is much improved, despite its low complexity. This makes our solution eminently suitable for delay-sensitive applications.

Improving short-term bike sharing demand forecast through an irregular convolutional neural network

Feb 09, 2022

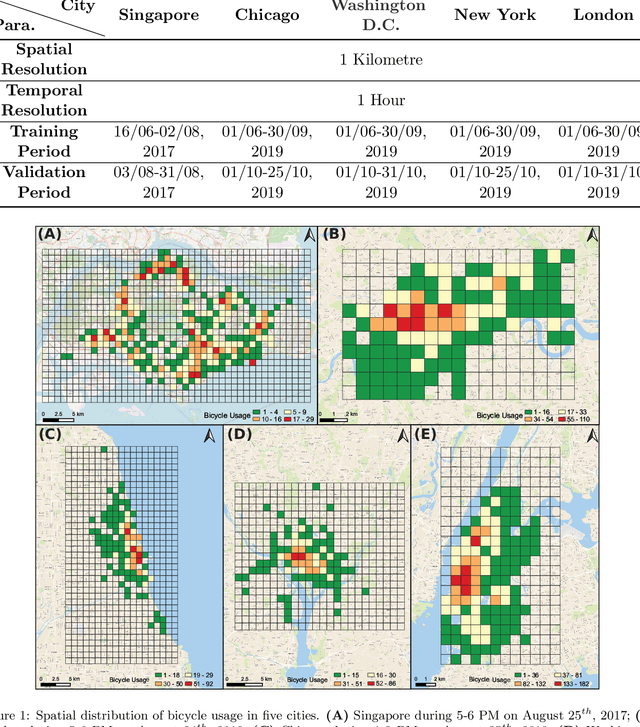

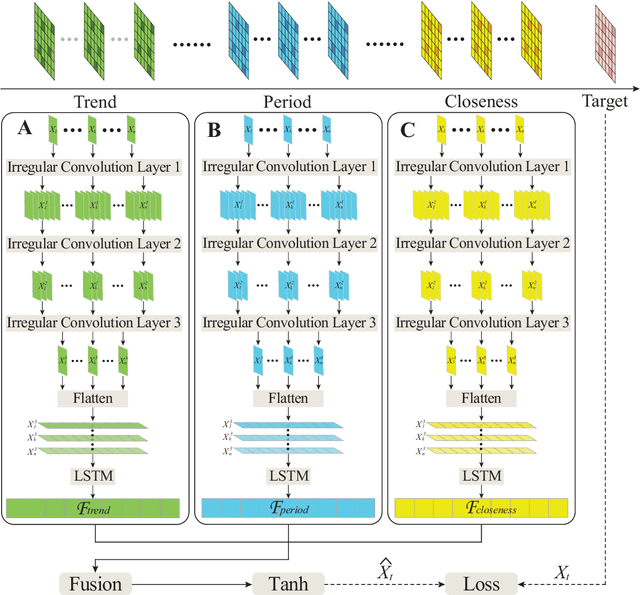

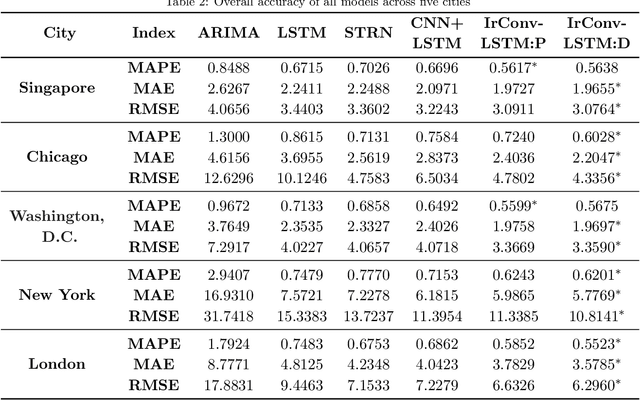

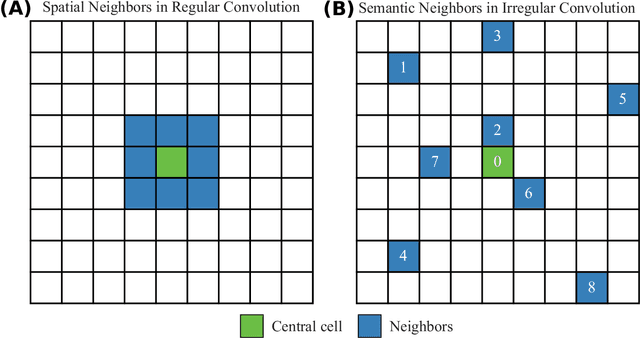

As an important task for the management of bike sharing systems, accurate forecast of travel demand could facilitate dispatch and relocation of bicycles to improve user satisfaction. In recent years, many deep learning algorithms have been introduced to improve bicycle usage forecast. A typical practice is to integrate convolutional (CNN) and recurrent (RNN) neural networks to capture spatial-temporal dependency in historical travel demand. For a typical CNN, the convolution operation is conducted through a kernel that moves across a "matrix-format" city to extract features over spatially adjacent urban areas. This practice assumes that areas close to each other could provide useful information that improves prediction accuracy. However, bicycle usage in neighboring areas might not always be similar, given spatial variations in built environment characteristics and travel behavior that affect cycling activities. Yet, areas that are far apart can be relatively more similar in temporal usage patterns. To utilize the hidden linkage among these distant urban areas, the study proposes an irregular convolutional Long-Short Term Memory model (IrConv+LSTM) to improve short-term bike sharing demand forecast. The model modifies the traditional CNN with an irregular convolutional architecture to extract dependency among "semantic neighbors". The proposed model is evaluated against a set of benchmark models in five study sites, which include one dockless bike sharing system in Singapore, and four station-based systems in Chicago, Washington, D.C., New York, and London. We find that IrConv+LSTM outperforms other benchmark models in the five cities. The model also achieves superior performance in areas with varying levels of bicycle usage and during peak periods. The findings suggest that "thinking beyond spatial neighbors" can further improve short-term travel demand prediction of urban bike sharing systems.
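The idea of "semantic neighbors" can be made concrete with a small sketch that picks, for a given area, the areas whose temporal usage patterns are most similar, regardless of spatial distance. The abstract does not say which similarity measure the model uses, so the Pearson correlation here is an assumption, as are the function names and the toy demand series.

```python
import math

def pearson(a, b):
    """Pearson correlation of two equally long series (0.0 if either is constant)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    norm_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    norm_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    return cov / (norm_a * norm_b) if norm_a and norm_b else 0.0

def semantic_neighbors(series, area, k):
    """Indices of the k areas whose usage pattern correlates most with `area`."""
    scores = [(pearson(series[area], s), j)
              for j, s in enumerate(series) if j != area]
    scores.sort(reverse=True)
    return [j for _, j in scores[:k]]

# Hypothetical demand counts per time step for four areas.
series = [
    [1, 5, 9, 5, 1, 5],      # area 0
    [9, 5, 1, 5, 9, 5],      # area 1: imagine it adjacent to area 0, opposite pattern
    [2, 2, 2, 3, 2, 2],      # area 2: nearly flat usage
    [2, 10, 18, 10, 2, 10],  # area 3: imagine it far away, same pattern at twice the volume
]
neighbors = semantic_neighbors(series, area=0, k=1)
```

In this toy setup the selected neighbor of area 0 is area 3 rather than the spatially adjacent area 1, which is the kind of distant linkage a regular convolution kernel would not see.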

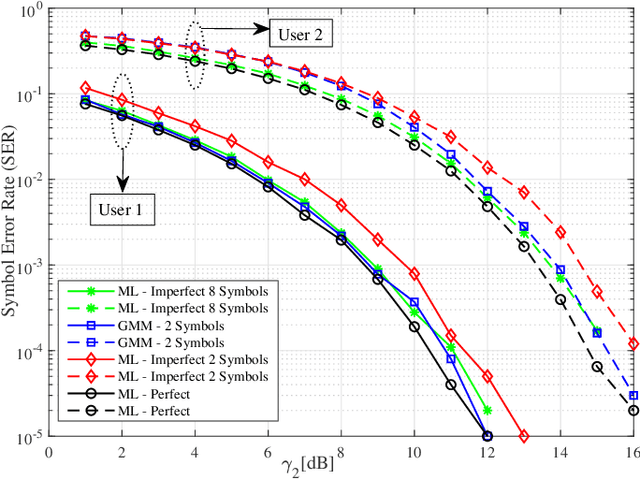

Clustering-based Joint Channel Estimation and Signal Detection for NOMA

Jan 17, 2022

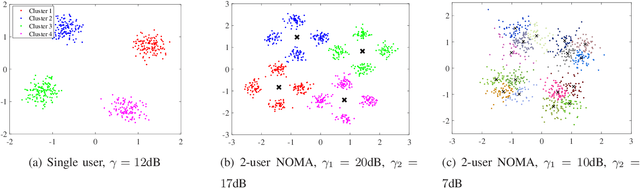

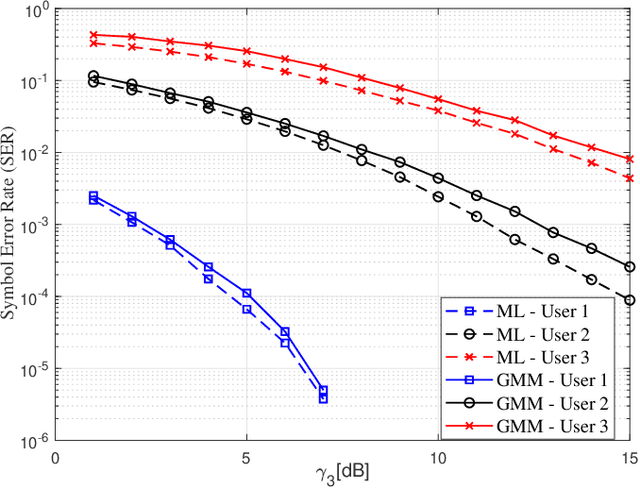

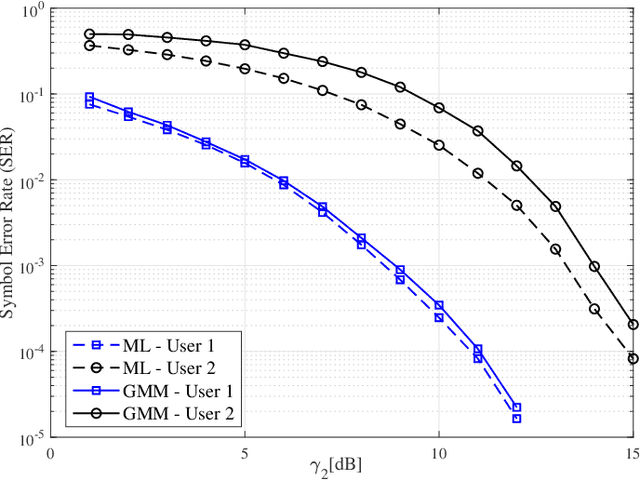

We propose a joint channel estimation and signal detection approach for the uplink non-orthogonal multiple access using unsupervised machine learning. We apply the Gaussian mixture model to cluster the received signals, and accordingly optimize the decision regions to enhance the symbol error rate (SER). We show that, when the received powers of the users are sufficiently different, the proposed clustering-based approach achieves an SER performance on a par with that of the conventional maximum-likelihood detector with full channel state information. However, unlike the proposed approach, the maximum-likelihood detector requires the transmission of a large number of pilot symbols to accurately estimate the channel. The accuracy of the utilized clustering algorithm depends on the number of the data points available at the receiver. Therefore, there exists a tradeoff between accuracy and block length. We provide a comprehensive performance analysis of the proposed approach and derive a theoretical bound on its SER performance as a function of the block length. Our simulation results corroborate the effectiveness of the proposed approach and verify that the calculated theoretical bound can predict the SER performance of the proposed approach well.
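The clustering step can be sketched with a one-dimensional Gaussian mixture fitted by expectation-maximization. This is a simplified, real-valued toy and not the paper's detector: the two-user BPSK setup, the amplitudes 2.0 and 0.5, the noise level, the quantile initialisation, and the function names are assumptions, and the mapping from clusters back to user symbols is left out.

```python
import math
import random

def fit_gmm_1d(samples, k, iters=50):
    """Fit a 1-D Gaussian mixture with k components by EM.

    Returns (weights, means, variances). Initialisation is an assumption of
    this sketch: means at evenly spaced quantiles, a shared narrow variance.
    """
    srt = sorted(samples)
    n = len(srt)
    means = [srt[int((i + 0.5) * n / k)] for i in range(k)]
    spread = (srt[-1] - srt[0]) / (4 * k)
    variances = [max(spread ** 2, 1e-6)] * k
    weights = [1.0 / k] * k
    for _ in range(iters):
        # E-step: posterior probability of each component for each sample.
        resp = []
        for x in samples:
            p = [w * math.exp(-(x - m) ** 2 / (2 * v)) / math.sqrt(2 * math.pi * v)
                 for w, m, v in zip(weights, means, variances)]
            total = sum(p) or 1e-300
            resp.append([q / total for q in p])
        # M-step: re-estimate weights, means and variances.
        for j in range(k):
            nj = sum(r[j] for r in resp) or 1e-12
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, samples)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, samples)) / nj
            variances[j] = max(var, 1e-6)
    return weights, means, variances

def detect(x, weights, means, variances):
    """Index of the mixture component with the highest posterior for sample x."""
    scores = [math.log(max(w, 1e-300)) - 0.5 * math.log(v) - (x - m) ** 2 / (2 * v)
              for w, m, v in zip(weights, means, variances)]
    return scores.index(max(scores))

# Toy uplink: two BPSK users with clearly different received amplitudes.
rng = random.Random(0)
received = []
for i in range(400):
    s1 = 1 if i % 2 else -1
    s2 = 1 if (i // 2) % 2 else -1
    received.append(2.0 * s1 + 0.5 * s2 + rng.gauss(0.0, 0.1))

weights, means, variances = fit_gmm_1d(received, k=4)
cluster = detect(2.4, weights, means, variances)
```

The fitted means play the role of channel-dependent constellation points learned without pilots; with fewer samples per block the estimates get noisier, which reflects the accuracy versus block length tradeoff noted in the abstract.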

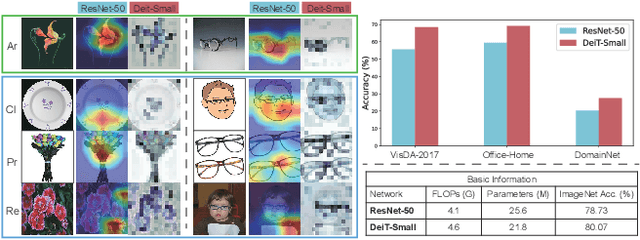

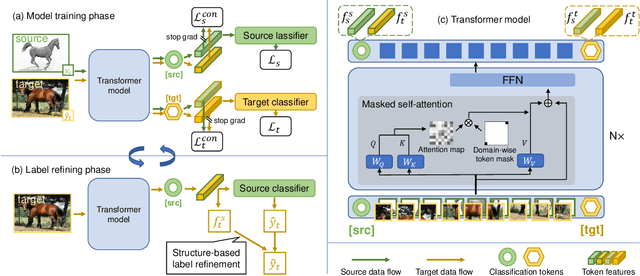

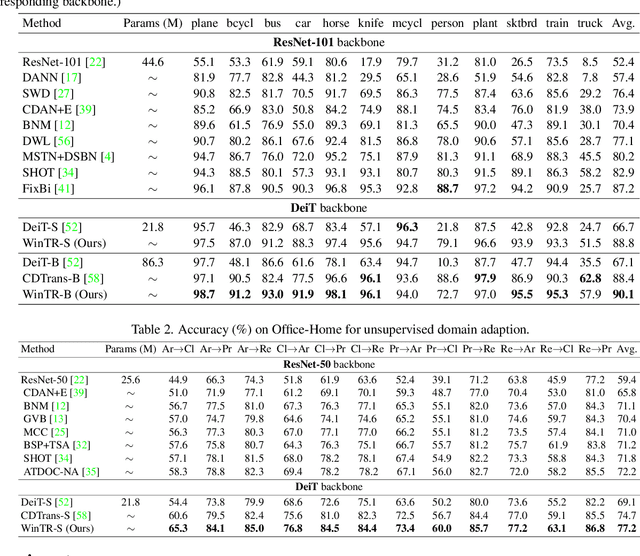

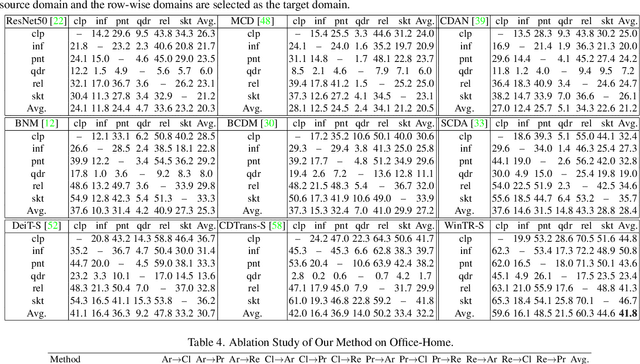

Exploiting Both Domain-specific and Invariant Knowledge via a Win-win Transformer for Unsupervised Domain Adaptation

Nov 25, 2021

Unsupervised Domain Adaptation (UDA) aims to transfer knowledge from a labeled source domain to an unlabeled target domain. Most existing UDA approaches enable knowledge transfer via learning domain-invariant representation and sharing one classifier across two domains. However, ignoring the domain-specific information that is related to the task, and forcing a unified classifier to fit both domains will limit the feature expressiveness in each domain. In this paper, by observing that the Transformer architecture with comparable parameters can generate more transferable representations than CNN counterparts, we propose a Win-Win TRansformer framework (WinTR) that separately explores the domain-specific knowledge for each domain and meanwhile interchanges cross-domain knowledge. Specifically, we learn two different mappings using two individual classification tokens in the Transformer, and design for each one a domain-specific classifier. The cross-domain knowledge is transferred via source guided label refinement and single-sided feature alignment with respect to source or target, which keeps the integrity of domain-specific information. Extensive experiments on three benchmark datasets show that our method outperforms the state-of-the-art UDA methods, validating the effectiveness of exploiting both domain-specific and invariant

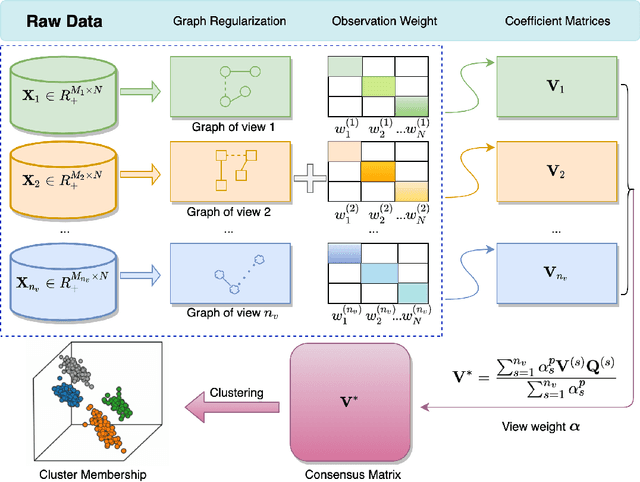

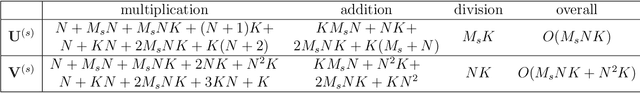

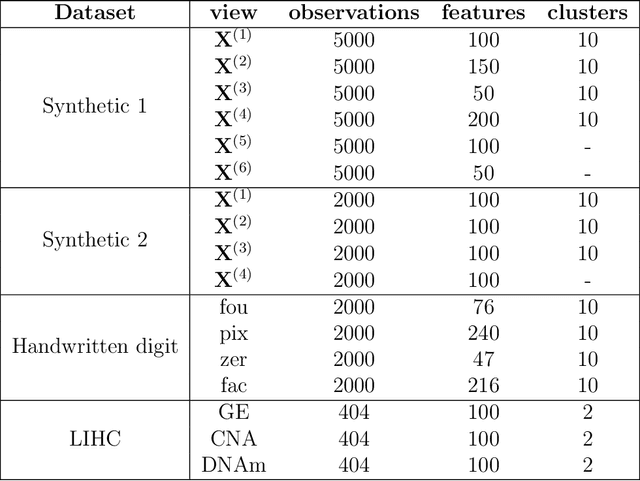

Integrative Clustering of Multi-View Data by Nonnegative Matrix Factorization

Oct 25, 2021

Learning multi-view data is an emerging problem in machine learning research, and nonnegative matrix factorization (NMF) is a popular dimensionality-reduction method for integrating information from multiple views. These views often provide not only consensus but also diverse information. However, most multi-view NMF algorithms assign equal weight to each view or tune the weight via line search empirically, which can be computationally expensive or infeasible without any prior knowledge of the views. In this paper, we propose a weighted multi-view NMF (WM-NMF) algorithm. In particular, we aim to address the critical technical gap, which is to learn both view-specific and observation-specific weights to quantify each view's information content. The introduced weighting scheme can alleviate unnecessary views' adverse effects and enlarge the positive effects of the important views by assigning smaller and larger weights, respectively. In addition, we provide theoretical investigations about the convergence, perturbation analysis, and generalization error of the WM-NMF algorithm. Experimental results confirm the effectiveness and advantages of the proposed algorithm in terms of achieving better clustering performance and dealing with the corrupted data compared to the existing algorithms.

Stochastic Coded Federated Learning with Convergence and Privacy Guarantees

Jan 26, 2022

Federated learning (FL) has attracted much attention as a privacy-preserving distributed machine learning framework, where many clients collaboratively train a machine learning model by exchanging model updates with a parameter server instead of sharing their raw data. Nevertheless, FL training suffers from slow convergence and unstable performance due to stragglers caused by the heterogeneous computational resources of clients and fluctuating communication rates. This paper proposes a coded FL framework, namely stochastic coded federated learning (SCFL), to mitigate the straggler issue. In the proposed framework, each client generates a privacy-preserving coded dataset by adding noise to a random linear combination of its local data. The server collects the coded datasets from all the clients to construct a composite dataset, which helps to compensate for the straggling effect. In the training process, the server as well as clients perform mini-batch stochastic gradient descent (SGD), and the server adds a make-up term in model aggregation to obtain unbiased gradient estimates. We characterize the privacy guarantee by the mutual information differential privacy (MI-DP) and analyze the convergence performance in federated learning. Besides, we demonstrate a privacy-performance tradeoff of the proposed SCFL method by analyzing the influence of the privacy constraint on the convergence rate. Finally, numerical experiments corroborate our analysis and show the benefits of SCFL in achieving fast convergence while preserving data privacy.
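The client-side coding step described above (a random linear combination of local data with noise added) might look roughly like the following. This is only a sketch under stated assumptions: the abstract does not give the distributions, so the Gaussian mixing weights, the Gaussian noise, the `noise_std` parameter, and the function name `make_coded_dataset` are all hypothetical.

```python
import random

def make_coded_dataset(local_data, num_coded, noise_std, seed=0):
    """Return `num_coded` coded samples built from the rows of `local_data`.

    Each coded sample is a random linear combination of all local rows plus
    independent noise. Gaussian weights and Gaussian noise are assumptions.
    """
    rng = random.Random(seed)
    dim = len(local_data[0])
    coded = []
    for _ in range(num_coded):
        # One random mixing weight per local data row.
        mix_weights = [rng.gauss(0.0, 1.0) for _ in local_data]
        sample = []
        for j in range(dim):
            mixed = sum(w * row[j] for w, row in zip(mix_weights, local_data))
            sample.append(mixed + rng.gauss(0.0, noise_std))
        coded.append(sample)
    return coded

# Hypothetical local dataset of three 2-dimensional samples on one client.
local_data = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
coded = make_coded_dataset(local_data, num_coded=2, noise_std=0.1)
```

A larger `noise_std` hides the local rows more thoroughly but makes the coded samples less informative for the server, which is the privacy-performance tradeoff the abstract refers to.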
