Yonghui Li

Deep Reinforcement Learning for Radio Resource Allocation in NOMA-based Remote State Estimation

May 24, 2022

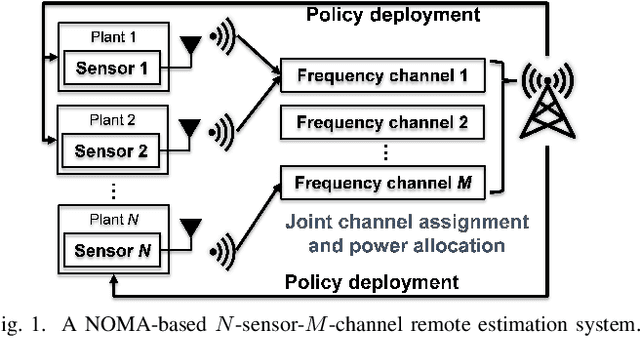

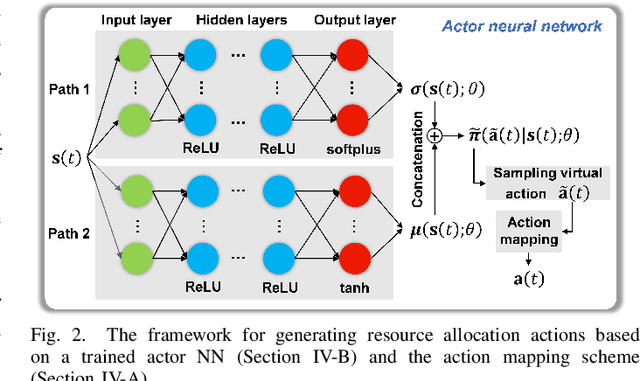

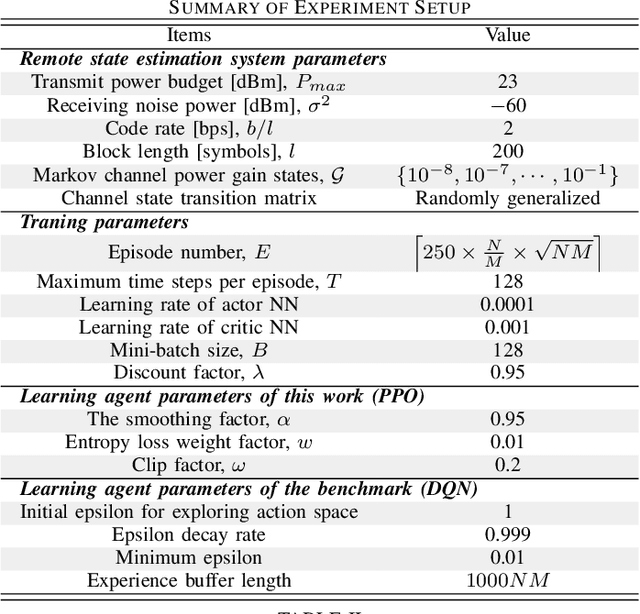

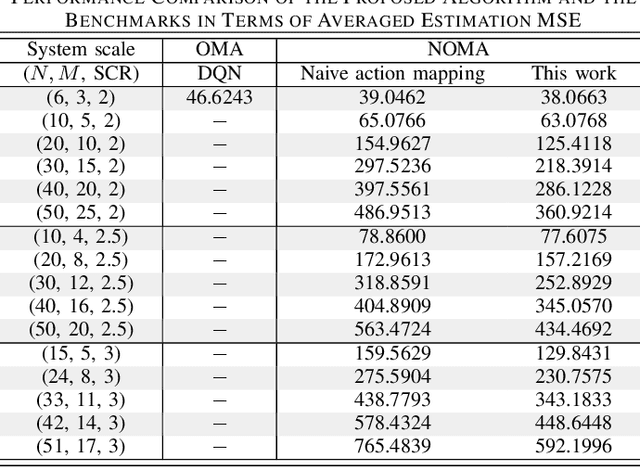

Abstract:Remote state estimation, where many sensors send their measurements of distributed dynamic plants to a remote estimator over shared wireless resources, is essential for mission-critical applications of Industry 4.0. Most of the existing works on remote state estimation assumed orthogonal multiple access, and the proposed dynamic radio resource allocation algorithms can only work for very small-scale settings. In this work, we consider a remote estimation system with non-orthogonal multiple access. We formulate a novel dynamic resource allocation problem for achieving the minimum overall long-term average estimation mean-square error. Both the estimation quality state and the channel quality state are taken into account for decision making at each time step. The problem has a large hybrid discrete and continuous action space for joint channel assignment and power allocation. We propose a novel action-space compression method and develop an advanced deep reinforcement learning algorithm to solve the problem. Numerical results show that our algorithm solves the resource allocation problem effectively, scales much better than existing methods, and provides a significant performance gain over several benchmarks.
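As a rough illustration of the "estimation quality state" mentioned in the abstract above, the sketch below tracks the estimation error variance of a single scalar plant at the remote estimator. It is not the paper's model or algorithm. The plant gain `a`, noise variance `q`, reset value `p_reset`, and the function name `mse_trajectory` are all hypothetical, and the sketch assumes that a successful delivery resets the error variance to a fixed value while a lost packet lets it grow through the plant dynamics.

```python
from typing import List


def mse_trajectory(a: float, q: float, p_reset: float,
                   deliveries: List[bool]) -> List[float]:
    """Error variance at the remote estimator over time (illustrative only).

    Assumed model (not taken from the paper): a scalar plant
    x[k+1] = a * x[k] + w[k], with Var(w) = q. If the packet is delivered,
    the error variance resets to p_reset; otherwise it is propagated
    through the plant dynamics.
    """
    p = p_reset
    trajectory = []
    for delivered in deliveries:
        if delivered:
            p = p_reset          # fresh measurement received
        else:
            p = a * a * p + q    # open-loop prediction, error grows
        trajectory.append(p)
    return trajectory


if __name__ == "__main__":
    # Two consecutive losses followed by a successful delivery.
    print(mse_trajectory(2.0, 1.0, 1.0, [False, False, True]))
```

A scheduler that observes this variance together with the channel quality can prioritize the sensors whose error has grown the most, which is the intuition behind using both states for decision making.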

Design of a Reconfigurable Intelligent Surface-Assisted FM-DCSK-SWIPT Scheme with Non-linear Energy Harvesting Model

May 14, 2022

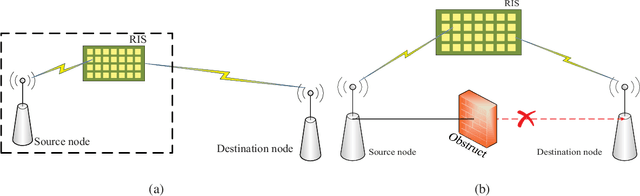

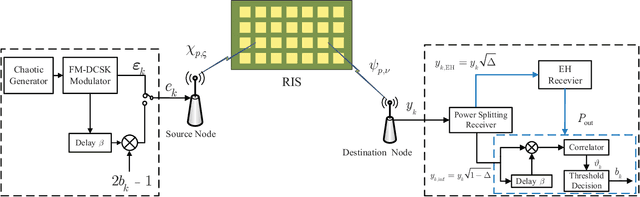

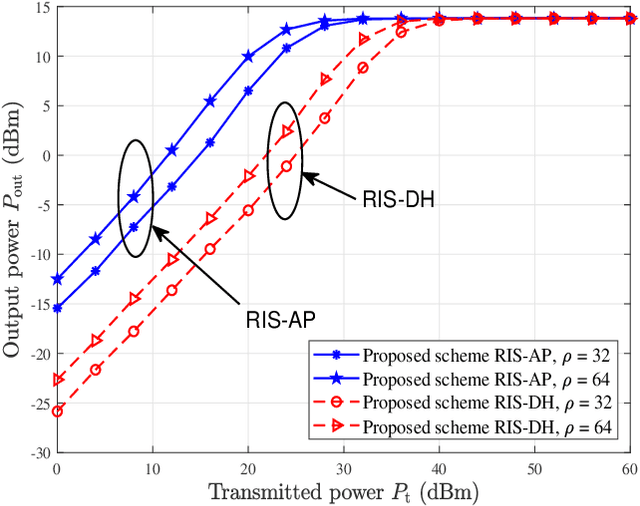

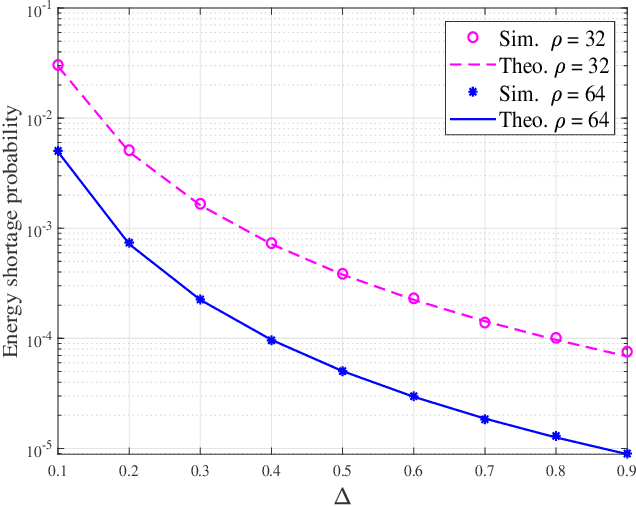

Abstract:In this paper, we propose a reconfigurable intelligent surface (RIS)-assisted frequency-modulated (FM) differential chaos shift keying (DCSK) scheme with simultaneous wireless information and power transfer (SWIPT), called the RIS-FM-DCSK-SWIPT scheme, for low-power, low-cost, and high-reliability wireless communication networks. In particular, the proposed scheme is developed under a non-linear energy-harvesting (EH) model which can accurately characterize the practical situation. The proposed RIS-FM-DCSK-SWIPT scheme has the appealing feature that it does not require channel state information, thus avoiding complex channel estimation. We derive the theoretical expressions for the energy shortage probability and bit error rate (BER) of the proposed scheme over the multipath Rayleigh fading channel. We investigate the influence of key parameters on the performance of the proposed scheme in two different scenarios, i.e., RIS-assisted access point (RIS-AP) and dual-hop communication (RIS-DH). Finally, we carry out various Monte-Carlo experiments to verify the accuracy of the theoretical derivation and illustrate the performance advantage of the proposed scheme over the existing DCSK-SWIPT schemes.

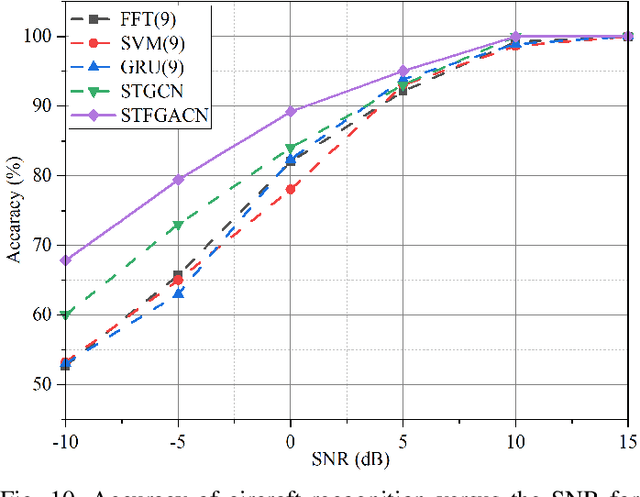

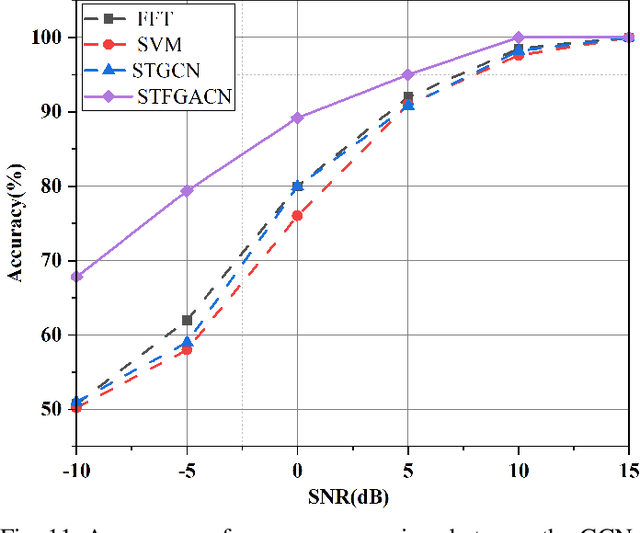

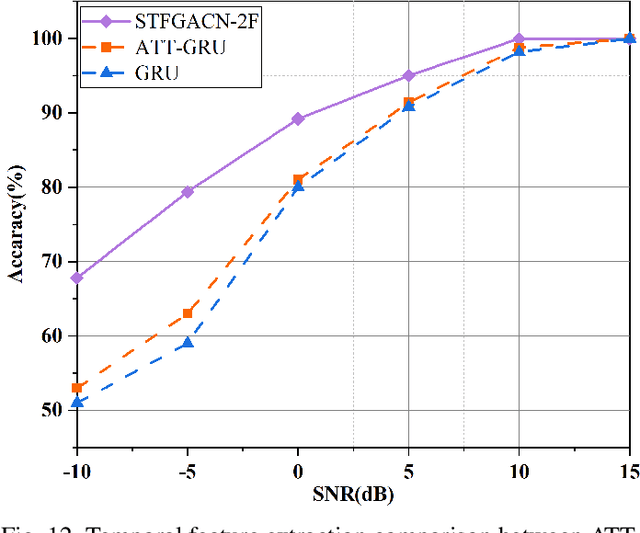

Spatio-Temporal-Frequency Graph Attention Convolutional Network for Aircraft Recognition Based on Heterogeneous Radar Network

Apr 15, 2022

Abstract:This paper proposes a knowledge-and-data-driven graph neural network-based collaboration learning model for reliable aircraft recognition in a heterogeneous radar network. The aircraft recognizability analysis shows that: (1) the semantic feature of an aircraft is motion patterns driven by the kinetic characteristics, and (2) the grammatical features contained in the radar cross-section (RCS) signals present spatial-temporal-frequency (STF) diversity decided by both the electromagnetic radiation shape and motion pattern of the aircraft. Then an STF graph attention convolutional network (STFGACN) is developed to distill semantic features from the RCS signals received by the heterogeneous radar network. Extensive experimental results verify that the STFGACN outperforms the baseline methods in terms of detection accuracy, and ablation experiments further show that expanding the information dimension yields considerable gains in robustness in the low signal-to-noise ratio region.

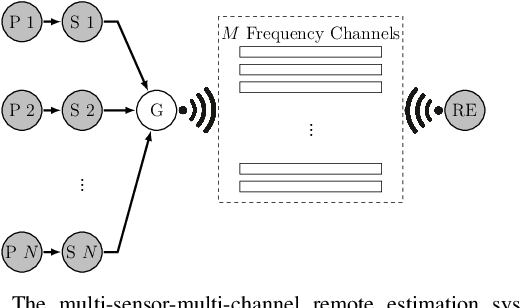

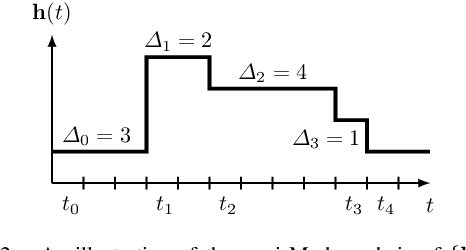

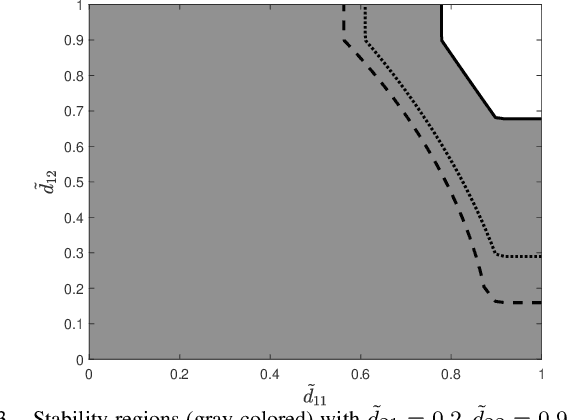

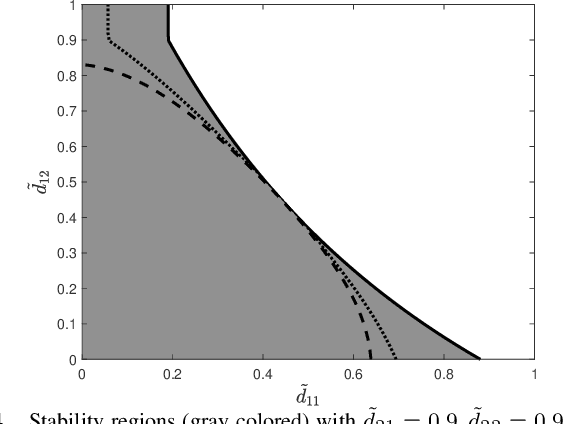

Remote State Estimation of Multiple Systems over Semi-Markov Wireless Fading Channels

Mar 31, 2022

Abstract:This work studies remote state estimation of multiple linear time-invariant systems over shared wireless time-varying communication channels. We model the channel states by a semi-Markov process which captures both the random holding period of each channel state and the state transitions. The model is sufficiently general to be used in both fast and slow fading scenarios. We derive necessary and sufficient stability conditions of the multi-sensor-multi-channel system in terms of the system parameters. We further investigate how the delay of the channel state information availability and the holding period of channel states affect the stability. In particular, we show that, from a system stability perspective, fast fading channels may be preferable to slow fading ones.

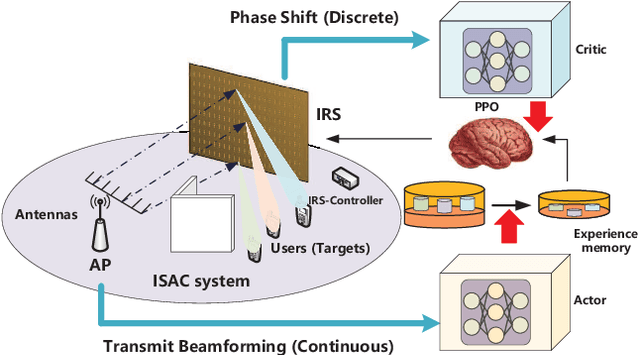

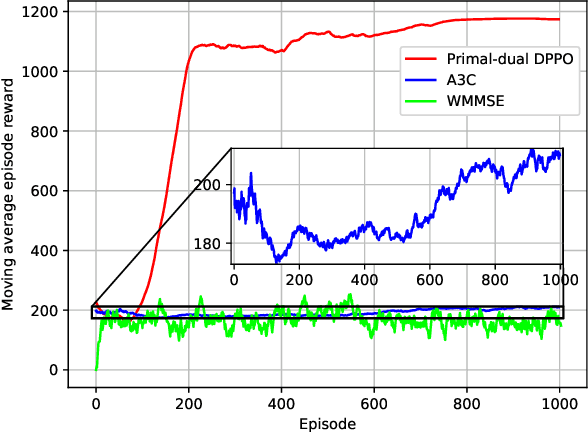

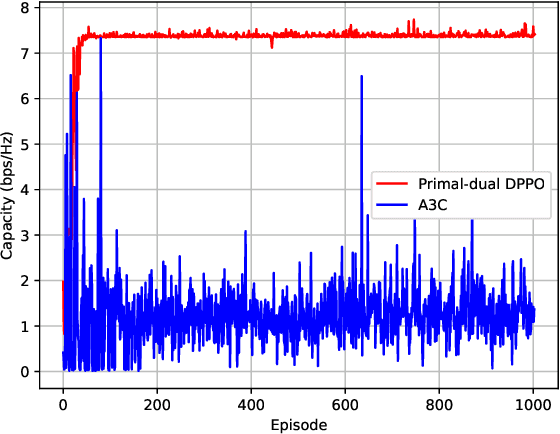

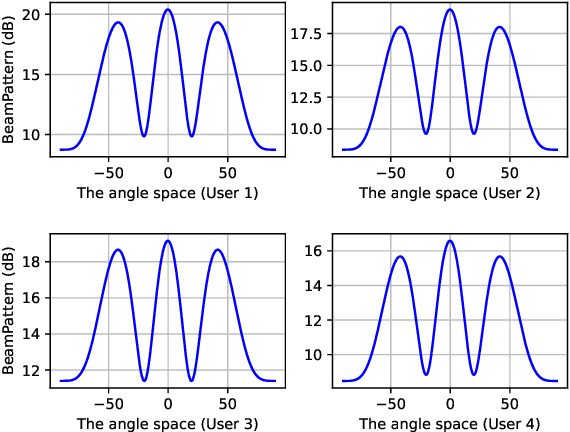

Proximal Policy Optimization-based Transmit Beamforming and Phase-shift Design in an IRS-aided ISAC System for the THz Band

Mar 21, 2022

Abstract:In this paper, an IRS-aided integrated sensing and communications (ISAC) system operating in the terahertz (THz) band is proposed to maximize the system capacity. Transmit beamforming and phase-shift design are transformed into a universal optimization problem with ergodic constraints. Then the joint optimization of transmit beamforming and phase-shift design is achieved by gradient-based, primal-dual proximal policy optimization (PPO) in the multi-user multiple-input single-output (MISO) scenario. Specifically, the actor part generates continuous transmit beamforming and the critic part takes charge of discrete phase shift design. Based on the MISO scenario, we investigate a distributed PPO (DPPO) framework with the concept of multi-threading learning in the multi-user multiple-input multiple-output (MIMO) scenario. Simulation results demonstrate the effectiveness of the primal-dual PPO algorithm and its multi-threading version in terms of transmit beamforming and phase-shift design.

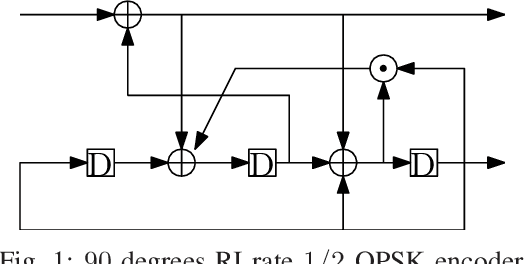

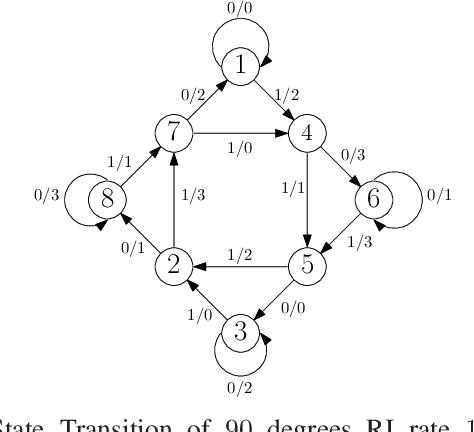

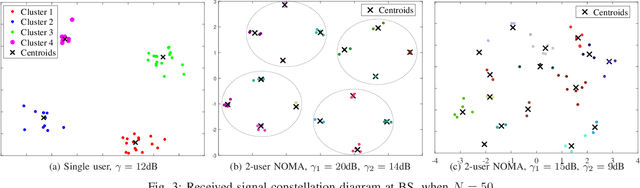

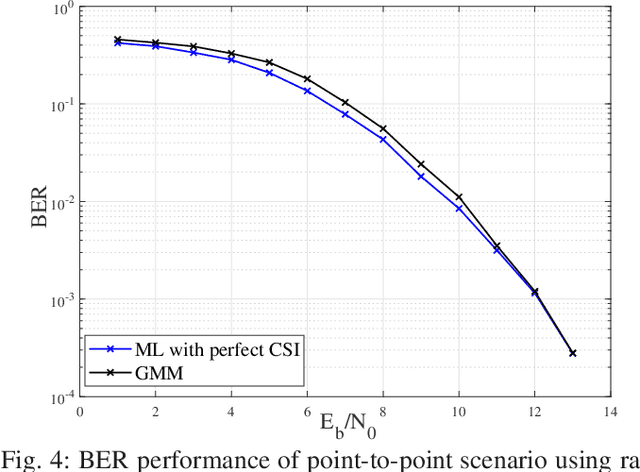

NOMA Channel Estimation and Signal Detection using Rotational Invariant Codes and Machine Learning

Mar 15, 2022

Abstract:This paper studies joint channel estimation and signal detection for uplink power-domain non-orthogonal multiple access. The proposed technique performs both detection and estimation without the need for pilot symbols by using a clustering technique. To remove the effect of channel fading, we apply rotational invariant coding to assist signal detection at the receiver without sending pilots. We utilize a Gaussian mixture model (GMM) to automatically cluster the received signals without supervision and optimize decision boundaries to improve the bit error rate (BER) performance.

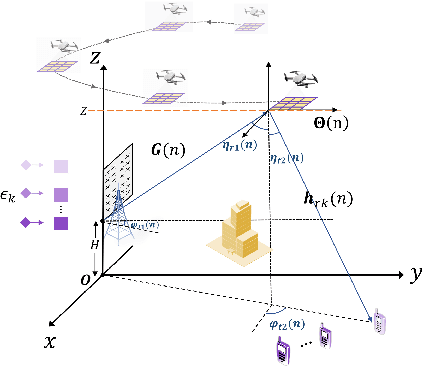

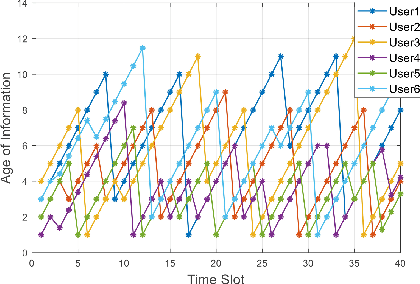

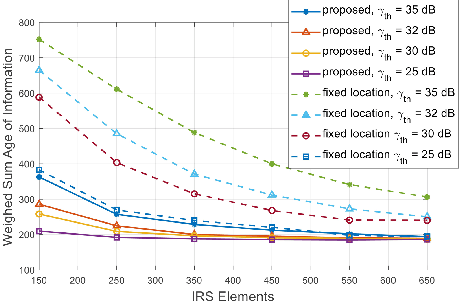

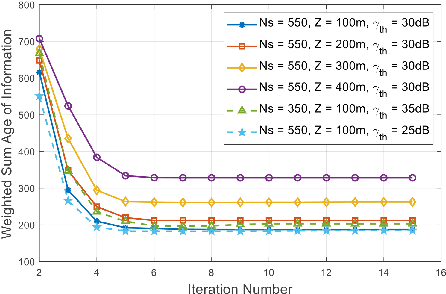

Weighted Sum Age of Information Minimization in Wireless Networks with Aerial IRS

Mar 09, 2022

Abstract:In this letter, we analyze a terrestrial wireless communication network assisted by an aerial intelligent reflecting surface (IRS). We consider a packet scheduling problem at the ground base station (BS) aimed at improving the information freshness by selecting packets based on their age of information (AoI). To further improve the communication quality, the trajectory of the unmanned aerial vehicle (UAV) which carries the IRS is optimized with joint active and passive beamforming design. To solve the formulated non-convex problem, we propose an iterative alternating optimization algorithm based on successive convex approximation (SCA). The simulation results show significant performance improvement in terms of weighted sum AoI, and the SCA solution converges quickly with low computational complexity.
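To make the weighted sum AoI metric concrete, the sketch below computes it for a toy scheduler. It is only an illustration under simplifying assumptions, not the letter's SCA-based method. It assumes one packet is served per slot, every transmission succeeds, and the IRS, UAV trajectory, and beamforming are ignored. The function names and the greedy rule (serve the user with the largest weight times age) are hypothetical.

```python
from typing import List, Tuple


def weighted_sum_aoi(weights: List[float], ages: List[int]) -> float:
    """Weighted sum of the users' current ages of information."""
    return sum(w * a for w, a in zip(weights, ages))


def greedy_schedule(weights: List[float],
                    num_slots: int) -> Tuple[float, List[int]]:
    """Serve, in each slot, the user with the largest weight * age.

    Assumptions (illustrative only): all ages start at 1, the served
    user's age resets to 1, and every other age grows by one slot.
    Returns the time-averaged weighted sum AoI and the final ages.
    """
    ages = [1] * len(weights)
    total = 0.0
    for _ in range(num_slots):
        total += weighted_sum_aoi(weights, ages)
        chosen = max(range(len(weights)), key=lambda i: weights[i] * ages[i])
        ages = [1 if i == chosen else age + 1 for i, age in enumerate(ages)]
    return total / num_slots, ages


if __name__ == "__main__":
    average, final_ages = greedy_schedule([1.0, 1.0], 4)
    print(average, final_ages)
```

With two equally weighted users, the greedy rule alternates between them, so neither age grows beyond 2.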

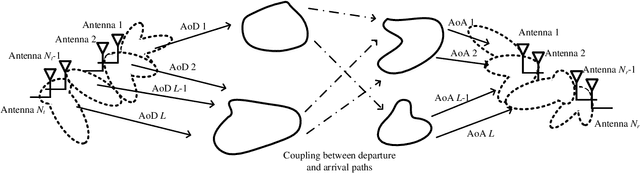

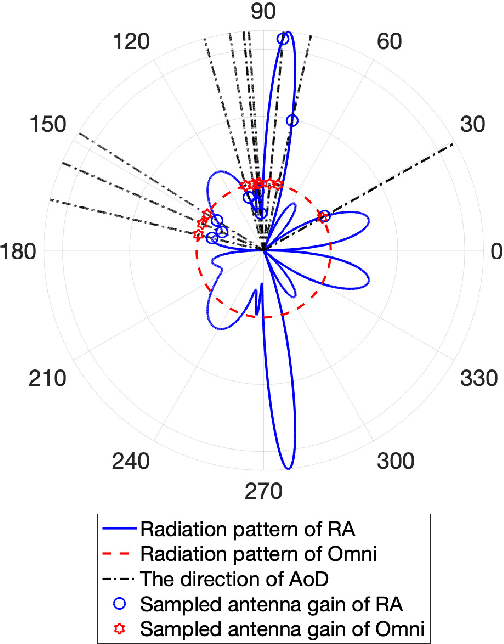

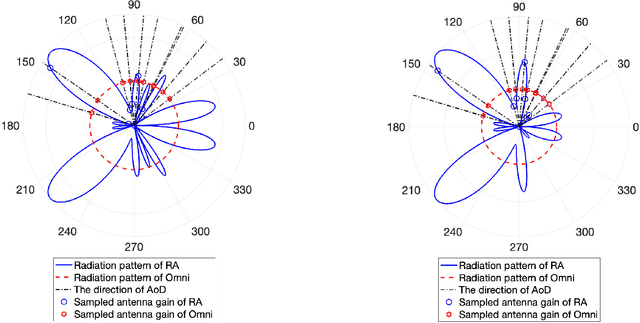

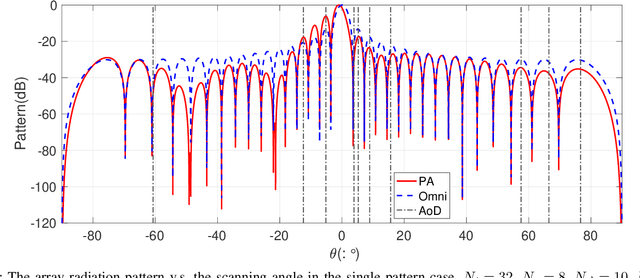

Capacity Maximization Pattern Design for Reconfigurable MIMO Antenna Array

Feb 12, 2022

Abstract:Multi-functional and reconfigurable multiple-input multiple-output (MR-MIMO) can provide performance gains over traditional MIMO by introducing additional degrees of freedom. In this paper, we focus on the capacity maximization pattern design for MR-MIMO systems. Firstly, we introduce the matrix representation of MR-MIMO, based on which a pattern design problem is formulated. To further reveal the effect of the radiation pattern on the wireless channel, we consider pattern design for both the single-pattern case where the optimized radiation pattern is the same for all the antenna elements, and the multi-pattern case where different antenna elements can adopt different radiation patterns. For the single-pattern case, we show that the pattern design is equivalent to a redistribution of power among all scattering paths, and an eigenvalue optimization based solution is obtained. For the multi-pattern case, we propose a sequential optimization framework with manifold optimization and eigenvalue decomposition to obtain near-optimal solutions. Numerical results validate the superiority of MR-MIMO systems over traditional MIMO in terms of capacity, and also show the effectiveness of the proposed solutions.

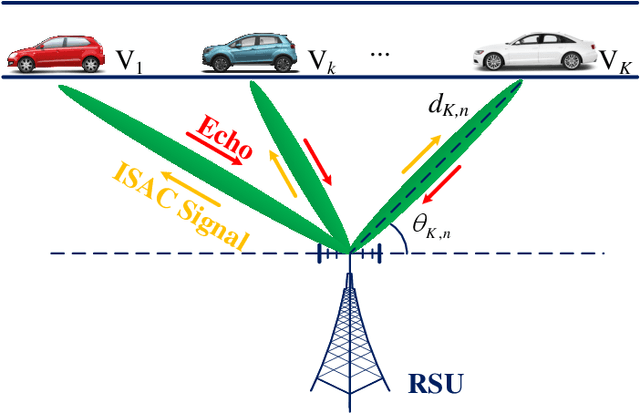

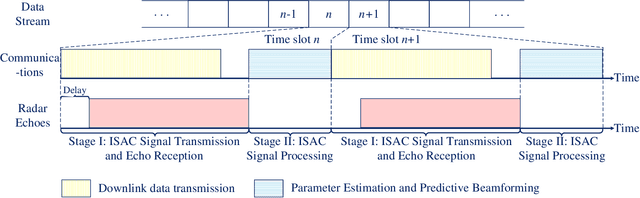

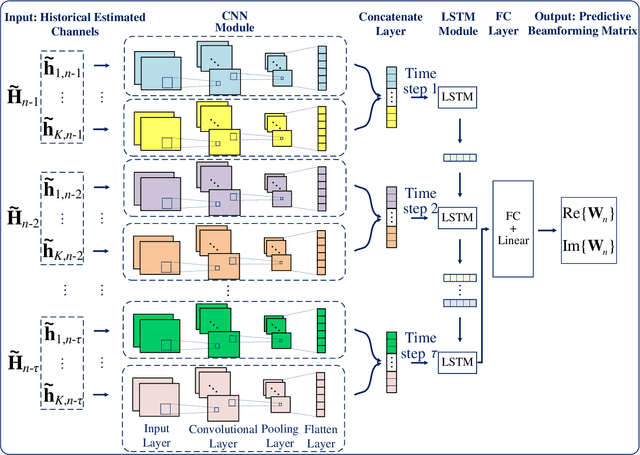

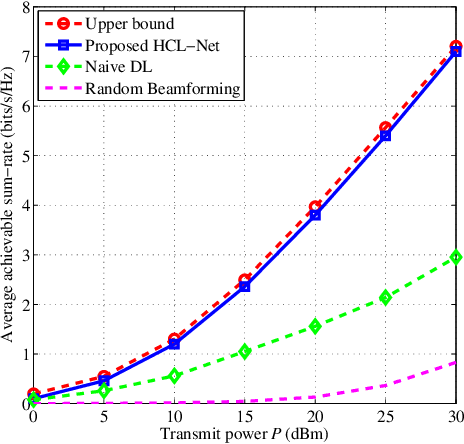

Predictive Beamforming for Integrated Sensing and Communication in Vehicular Networks: A Deep Learning Approach

Feb 08, 2022

Abstract:The implementation of integrated sensing and communication (ISAC) highly depends on effective beamforming design exploiting accurate instantaneous channel state information (ICSI). However, channel tracking in ISAC requires a large amount of training overhead and prohibitively large computational complexity. To address this problem, in this paper, we focus on ISAC-assisted vehicular networks and exploit a deep learning approach to implicitly learn the features of historical channels and directly predict the beamforming matrix for the next time slot to maximize the average achievable sum-rate of the system, thus bypassing the need for explicit channel tracking and reducing the system signaling overhead. To this end, a general sum-rate maximization problem with Cramér-Rao lower bound-based sensing constraints is first formulated for the considered ISAC system. Then, a historical channels-based convolutional long short-term memory network is designed for predictive beamforming that can exploit the spatial and temporal dependencies of communication channels to further improve the learning performance. Finally, simulation results show that the proposed method can satisfy the sensing performance requirement, while its achievable sum-rate can approach the upper bound obtained by a genie-aided scheme with perfect ICSI available.

Deep Reinforcement Learning for Wireless Scheduling in Distributed Networked Control

Sep 26, 2021

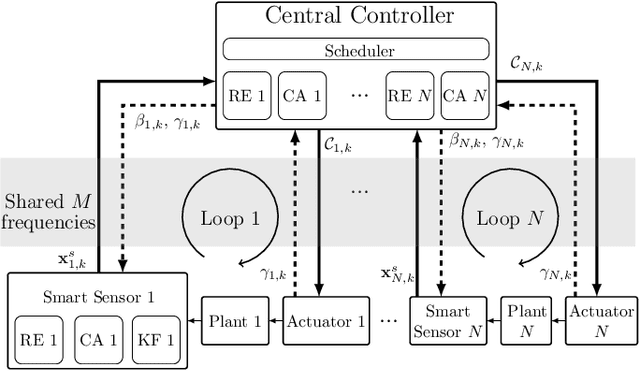

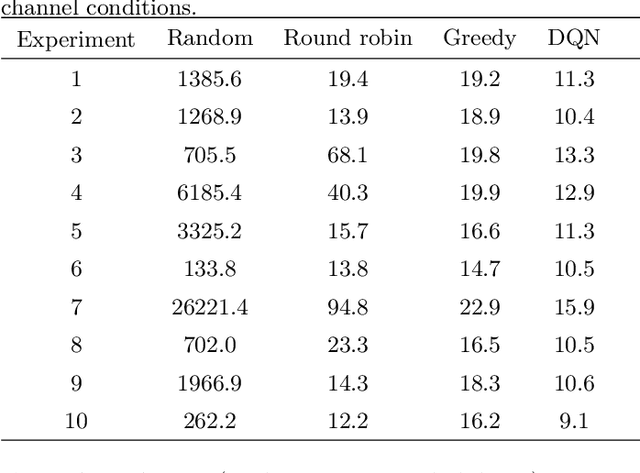

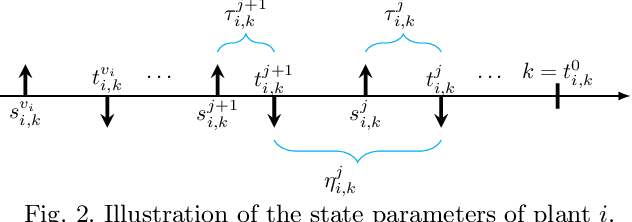

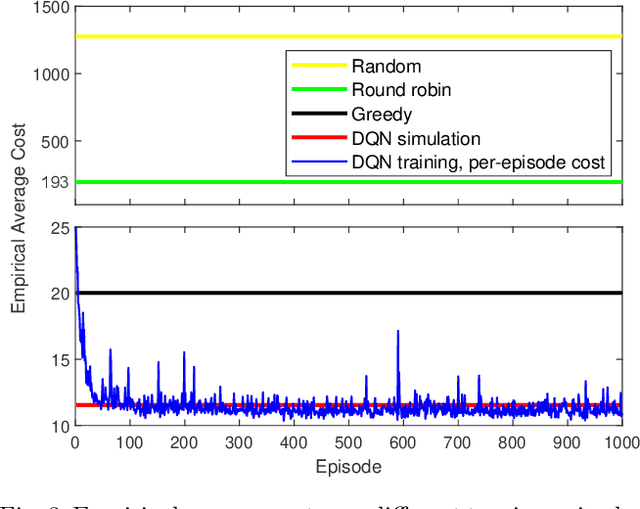

Abstract:In the literature of transmission scheduling in wireless networked control systems (WNCSs) over shared wireless resources, most research works have focused on partially distributed settings, i.e., where either the controller and actuator, or the sensor and controller are co-located. To overcome this limitation, the present work considers a fully distributed WNCS with distributed plants, sensors, actuators and a controller, sharing a limited number of frequency channels. To overcome communication limitations, the controller schedules the transmissions and generates sequential predictive commands for control. Using elements of stochastic systems theory, we derive a sufficient stability condition of the WNCS, which is stated in terms of both the control and communication system parameters. Once the condition is satisfied, there exists at least one stationary and deterministic scheduling policy that can stabilize all plants of the WNCS. By analyzing and representing the per-step cost function of the WNCS in terms of a finite-length countable vector state, we formulate the optimal transmission scheduling problem into a Markov decision process problem and develop a deep-reinforcement-learning-based algorithm for solving it. Numerical results show that the proposed algorithm significantly outperforms the benchmark policies.
