Gaoyang Pang

Medical Referring Image Segmentation via Next-Token Mask Prediction

Nov 07, 2025Abstract:Medical Referring Image Segmentation (MRIS) involves segmenting target regions in medical images based on natural language descriptions. While achieving promising results, recent approaches usually involve complex design of multimodal fusion or multi-stage decoders. In this work, we propose NTP-MRISeg, a novel framework that reformulates MRIS as an autoregressive next-token prediction task over a unified multimodal sequence of tokenized image, text, and mask representations. This formulation streamlines model design by eliminating the need for modality-specific fusion and external segmentation models, supports a unified architecture for end-to-end training. It also enables the use of pretrained tokenizers from emerging large-scale multimodal models, enhancing generalization and adaptability. More importantly, to address challenges under this formulation-such as exposure bias, long-tail token distributions, and fine-grained lesion edges-we propose three novel strategies: (1) a Next-k Token Prediction (NkTP) scheme to reduce cumulative prediction errors, (2) Token-level Contrastive Learning (TCL) to enhance boundary sensitivity and mitigate long-tail distribution effects, and (3) a memory-based Hard Error Token (HET) optimization strategy that emphasizes difficult tokens during training. Extensive experiments on the QaTa-COV19 and MosMedData+ datasets demonstrate that NTP-MRISeg achieves new state-of-the-art performance, offering a streamlined and effective alternative to traditional MRIS pipelines.

Wireless Human-Machine Collaboration in Industry 5.0

Oct 21, 2024Abstract:Wireless Human-Machine Collaboration (WHMC) represents a critical advancement for Industry 5.0, enabling seamless interaction between humans and machines across geographically distributed systems. As the WHMC systems become increasingly important for achieving complex collaborative control tasks, ensuring their stability is essential for practical deployment and long-term operation. Stability analysis certifies how the closed-loop system will behave under model randomness, which is essential for systems operating with wireless communications. However, the fundamental stability analysis of the WHMC systems remains an unexplored challenge due to the intricate interplay between the stochastic nature of wireless communications, dynamic human operations, and the inherent complexities of control system dynamics. This paper establishes a fundamental WHMC model incorporating dual wireless loops for machine and human control. Our framework accounts for practical factors such as short-packet transmissions, fading channels, and advanced HARQ schemes. We model human control lag as a Markov process, which is crucial for capturing the stochastic nature of human interactions. Building on this model, we propose a stochastic cycle-cost-based approach to derive a stability condition for the WHMC system, expressed in terms of wireless channel statistics, human dynamics, and control parameters. Our findings are validated through extensive numerical simulations and a proof-of-concept experiment, where we developed and tested a novel wireless collaborative cart-pole control system. The results confirm the effectiveness of our approach and provide a robust framework for future research on WHMC systems in more complex environments.

Communication-Control Codesign for Large-Scale Wireless Networked Control Systems

Oct 15, 2024

Abstract:Wireless Networked Control Systems (WNCSs) are essential to Industry 4.0, enabling flexible control in applications, such as drone swarms and autonomous robots. The interdependence between communication and control requires integrated design, but traditional methods treat them separately, leading to inefficiencies. Current codesign approaches often rely on simplified models, focusing on single-loop or independent multi-loop systems. However, large-scale WNCSs face unique challenges, including coupled control loops, time-correlated wireless channels, trade-offs between sensing and control transmissions, and significant computational complexity. To address these challenges, we propose a practical WNCS model that captures correlated dynamics among multiple control loops with spatially distributed sensors and actuators sharing limited wireless resources over multi-state Markov block-fading channels. We formulate the codesign problem as a sequential decision-making task that jointly optimizes scheduling and control inputs across estimation, control, and communication domains. To solve this problem, we develop a Deep Reinforcement Learning (DRL) algorithm that efficiently handles the hybrid action space, captures communication-control correlations, and ensures robust training despite sparse cross-domain variables and floating control inputs. Extensive simulations show that the proposed DRL approach outperforms benchmarks and solves the large-scale WNCS codesign problem, providing a scalable solution for industrial automation.

DRL-based Resource Allocation in Remote State Estimation

May 24, 2022

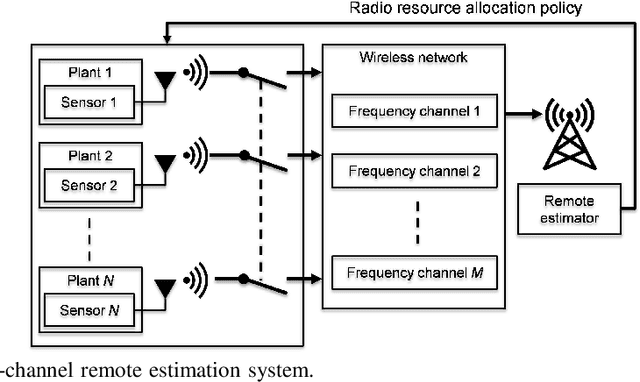

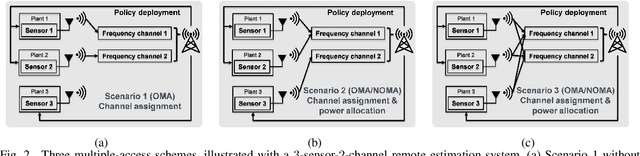

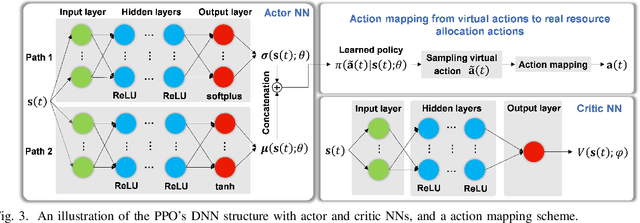

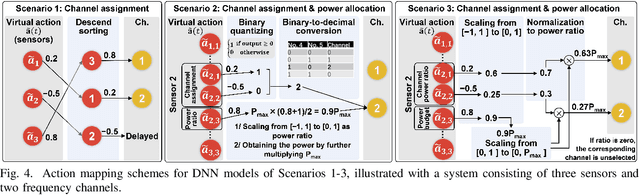

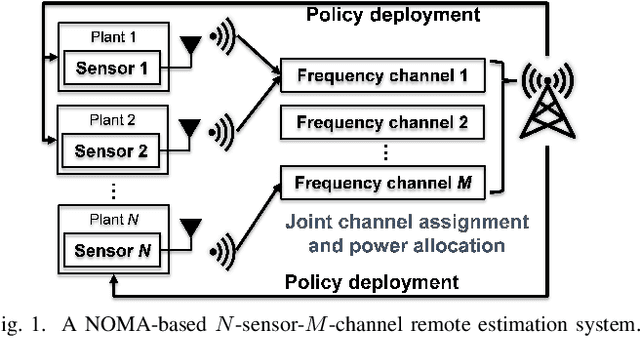

Abstract:Remote state estimation, where sensors send their measurements of distributed dynamic plants to a remote estimator over shared wireless resources, is essential for mission-critical applications of Industry 4.0. Existing algorithms on dynamic radio resource allocation for remote estimation systems assumed oversimplified wireless communications models and can only work for small-scale settings. In this work, we consider remote estimation systems with practical wireless models over the orthogonal multiple-access and non-orthogonal multiple-access schemes. We derive necessary and sufficient conditions under which remote estimation systems can be stabilized. The conditions are described in terms of the transmission power budget, channel statistics, and plants' parameters. For each multiple-access scheme, we formulate a novel dynamic resource allocation problem as a decision-making problem for achieving the minimum overall long-term average estimation mean-square error. Both the estimation quality and the channel quality states are taken into account for decision making. We systematically investigated the problems under different multiple-access schemes with large discrete, hybrid discrete-and-continuous, and continuous action spaces, respectively. We propose novel action-space compression methods and develop advanced deep reinforcement learning algorithms to solve the problems. Numerical results show that our algorithms solve the resource allocation problems effectively and provide much better scalability than the literature.

Deep Reinforcement Learning for Radio Resource Allocation in NOMA-based Remote State Estimation

May 24, 2022

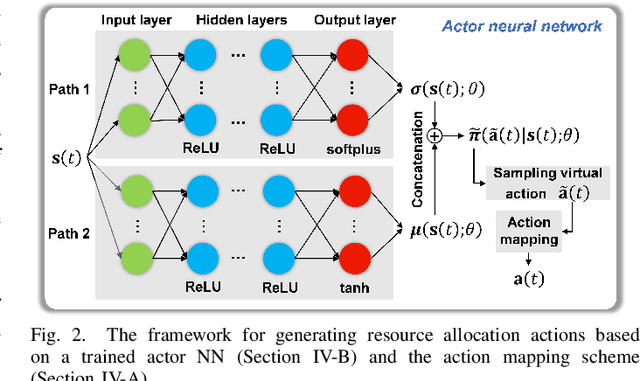

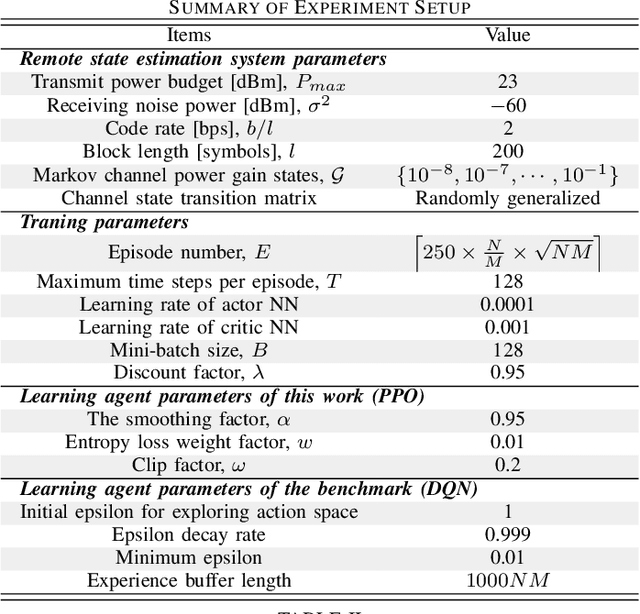

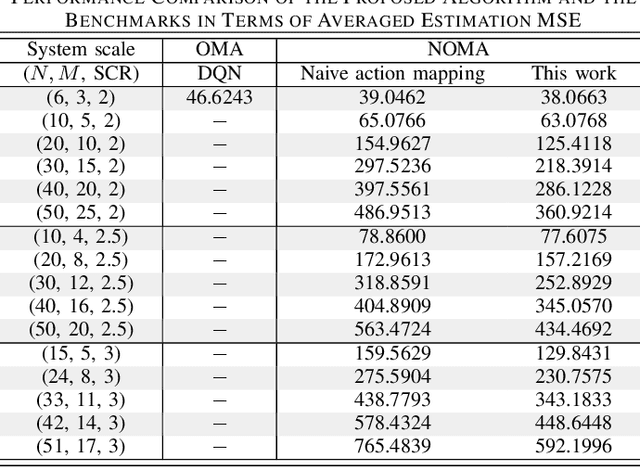

Abstract:Remote state estimation, where many sensors send their measurements of distributed dynamic plants to a remote estimator over shared wireless resources, is essential for mission-critical applications of Industry 4.0. Most of the existing works on remote state estimation assumed orthogonal multiple access and the proposed dynamic radio resource allocation algorithms can only work for very small-scale settings. In this work, we consider a remote estimation system with non-orthogonal multiple access. We formulate a novel dynamic resource allocation problem for achieving the minimum overall long-term average estimation mean-square error. Both the estimation quality state and the channel quality state are taken into account for decision making at each time. The problem has a large hybrid discrete and continuous action space for joint channel assignment and power allocation. We propose a novel action-space compression method and develop an advanced deep reinforcement learning algorithm to solve the problem. Numerical results show that our algorithm solves the resource allocation problem effectively, presents much better scalability than the literature, and provides significant performance gain compared to some benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge