Wenbiao Ding

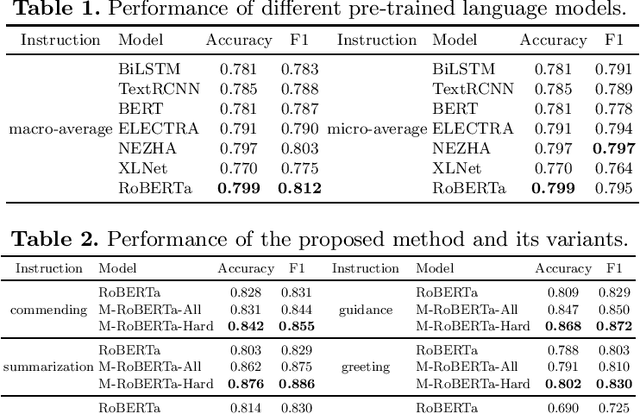

Multi-Task Learning based Online Dialogic Instruction Detection with Pre-trained Language Models

Jul 15, 2021

Abstract: In this work, we study computational approaches to detect online dialogic instructions, which are widely used to help students understand learning materials and build effective study habits. This task is challenging because the quality and pedagogical styles of dialogic instructions vary widely. To address these challenges, we utilize pre-trained language models and propose a multi-task paradigm that enhances the ability to distinguish instances of different classes by enlarging the margin between categories via a contrastive loss. Furthermore, we design a strategy to fully exploit the misclassified examples during the training stage. Extensive experiments on a real-world online educational data set demonstrate that our approach achieves superior performance compared to representative baselines. To encourage reproducible results, we make our implementation available online at https://github.com/AIED2021/multitask-dialogic-instruction.
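The abstract does not give the exact form of the contrastive loss, so the following is only a minimal sketch of the general idea, assuming the classic pairwise margin formulation: same-class pairs are pulled together, and different-class pairs are pushed apart until they are at least `margin` away. The function names, the `margin` default, and the weighting in `multitask_loss` are illustrative assumptions, not taken from the paper or its repository.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def pairwise_contrastive_loss(u, v, same_class, margin=1.0):
    """Classic pairwise contrastive loss (an assumed form, not the paper's).

    Same-class pairs are penalized by their squared distance.
    Different-class pairs are penalized only while closer than `margin`.
    """
    d = euclidean(u, v)
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2


def multitask_loss(classification_loss, contrastive_loss, weight=0.5):
    """Hypothetical multi-task objective: a weighted sum of the two terms."""
    return classification_loss + weight * contrastive_loss
```

With this form, a different-class pair that is already farther apart than the margin contributes nothing, so the gradient effort goes to pairs that are still too close.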

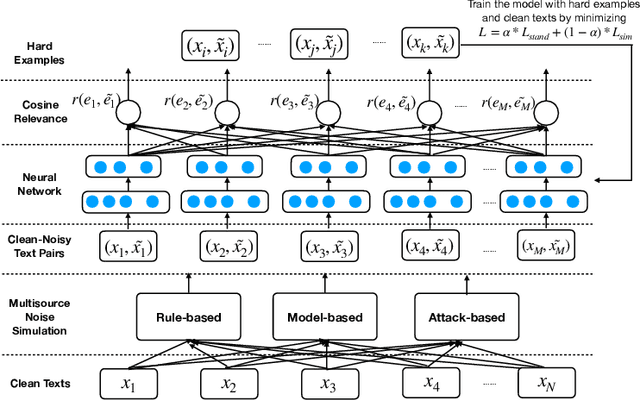

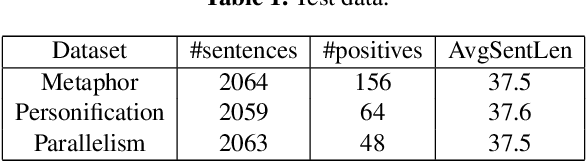

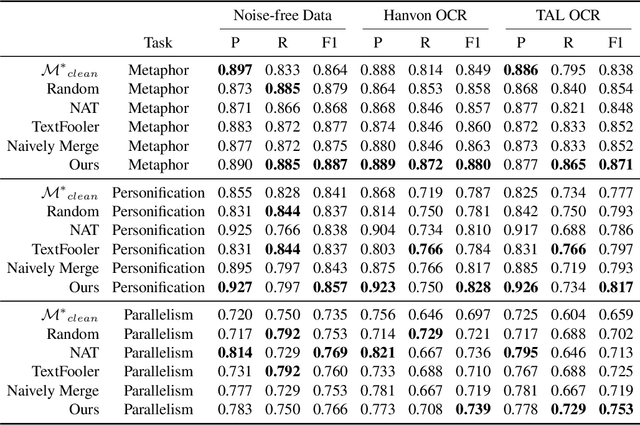

Robust Learning for Text Classification with Multi-source Noise Simulation and Hard Example Mining

Jul 15, 2021

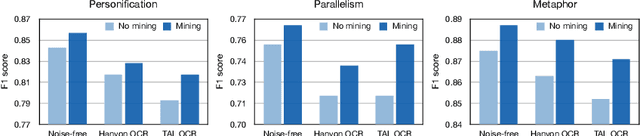

Abstract: Many real-world applications use Optical Character Recognition (OCR) engines to transform handwritten images into transcripts, on which downstream Natural Language Processing (NLP) models are applied. In this process, OCR engines may introduce errors, so the inputs to downstream NLP models become noisy. Although pre-trained models achieve state-of-the-art performance on many NLP benchmarks, we show that they are not robust to noisy texts generated by real OCR engines. This greatly limits the application of NLP models in real-world scenarios. To improve model performance on noisy OCR transcripts, it is natural to train the NLP model on labelled noisy texts. However, in most cases only labelled clean texts are available. Since there are no handwritten images corresponding to these texts, it is impossible to directly use the recognition model to obtain noisy labelled data. Human annotators can be employed to copy texts and take pictures, but this is extremely expensive considering the amount of data needed for model training. Consequently, we are interested in making NLP models intrinsically robust to OCR errors in a low-resource manner. We propose a novel robust training framework which 1) employs simple but effective methods to directly simulate natural OCR noise from clean texts, 2) iteratively mines hard examples from a large number of simulated samples for optimal performance, and 3) employs a stability loss to make the model learn noise-invariant representations. Experiments on three real-world datasets show that the proposed framework boosts the robustness of pre-trained models by a large margin. We believe that this work can greatly promote the application of NLP models in real-world scenarios, although the algorithm we use is simple and straightforward. We make our code and three datasets publicly available at https://github.com/tal-ai/Robust-learning-MSSHEM.

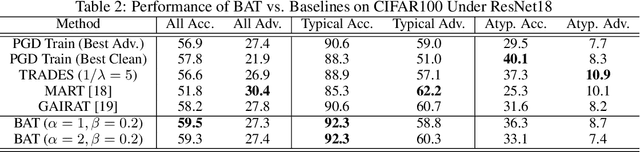

Towards the Memorization Effect of Neural Networks in Adversarial Training

Jun 09, 2021

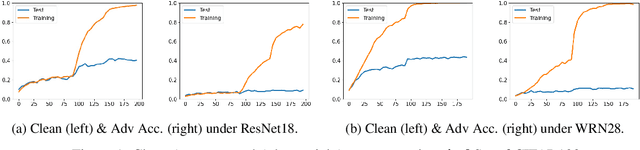

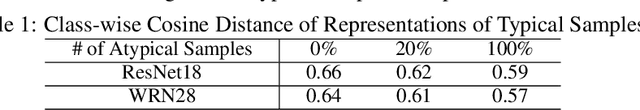

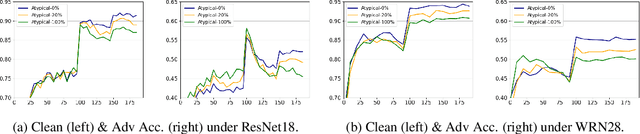

Abstract: Recent studies suggest that "memorization" is one important factor for overparameterized deep neural networks (DNNs) to achieve optimal performance. Specifically, perfectly fitted DNNs can memorize the labels of many atypical samples, generalize this memorization to correctly classify atypical test samples, and thus enjoy better test performance. Meanwhile, DNNs that are optimized via adversarial training algorithms can also achieve perfect training performance by memorizing the labels of atypical samples as well as of the adversarially perturbed atypical samples. However, adversarially trained models always suffer from poor generalization, with both relatively low clean accuracy and low robustness on the test set. In this work, we study the effect of memorization in adversarially trained DNNs and disclose two important findings: (a) memorizing atypical samples is only effective in improving a DNN's accuracy on clean atypical samples, but hardly improves its adversarial robustness, and (b) memorizing certain atypical samples can even hurt the DNN's performance on typical samples. Based on these two findings, we propose Benign Adversarial Training (BAT), which helps adversarial training avoid fitting "harmful" atypical samples while fitting as many "benign" atypical samples as possible. In our experiments, we validate the effectiveness of BAT and show that it achieves a better trade-off between clean accuracy and robustness than baseline methods on benchmark datasets such as CIFAR100 and Tiny ImageNet.

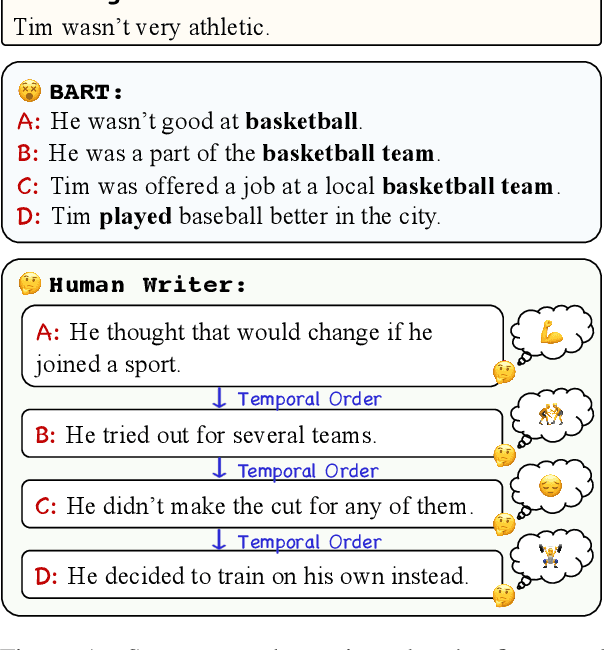

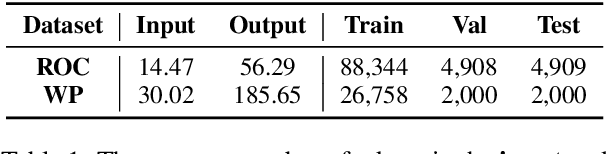

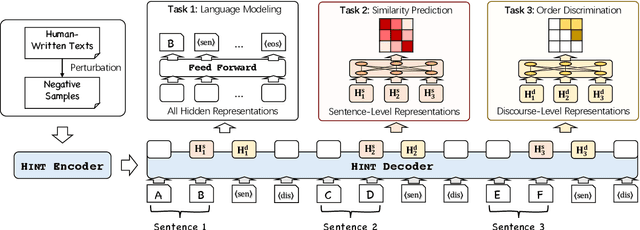

Long Text Generation by Modeling Sentence-Level and Discourse-Level Coherence

May 19, 2021

Abstract: Generating long and coherent text is an important but challenging task, particularly for open-ended language generation tasks such as story generation. Despite the success in modeling intra-sentence coherence, existing generation models (e.g., BART) still struggle to maintain a coherent event sequence throughout the generated text. We conjecture that this is because of the difficulty for the decoder to capture the high-level semantics and discourse structures in the context beyond token-level co-occurrence. In this paper, we propose a long text generation model, which can represent the prefix sentences at sentence level and discourse level in the decoding process. To this end, we propose two pretraining objectives to learn the representations by predicting inter-sentence semantic similarity and distinguishing between normal and shuffled sentence orders. Extensive experiments show that our model can generate more coherent texts than state-of-the-art baselines.
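To make the second pretraining objective concrete, here is a minimal sketch of how training pairs for "normal vs. shuffled sentence order" could be built. It only illustrates data construction under simple assumptions (label 1 for the original order, label 0 for a shuffled copy); the function name and labels are hypothetical, and the paper's actual sampling procedure may differ.

```python
import random


def make_order_examples(sentences, rng):
    """Build (sentence_list, label) pairs for an order-discrimination task.

    Label 1 marks the original order, label 0 a shuffled copy.
    If no different ordering exists (fewer than two distinct sentences),
    only the positive example is returned.
    """
    original = list(sentences)
    if len(set(original)) < 2:
        return [(original, 1)]
    shuffled = original[:]
    # Reshuffle until the order actually differs from the original.
    while shuffled == original:
        rng.shuffle(shuffled)
    return [(original, 1), (shuffled, 0)]


# Usage: pass a seeded generator for reproducible shuffles.
examples = make_order_examples(
    ["She found a map.", "She followed it.", "She found the treasure."],
    random.Random(0),
)
```

Passing the random generator explicitly keeps the sketch deterministic under a fixed seed, which is convenient when comparing runs.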

OpenMEVA: A Benchmark for Evaluating Open-ended Story Generation Metrics

May 19, 2021

Abstract: Automatic metrics are essential for developing natural language generation (NLG) models, particularly for open-ended language generation tasks such as story generation. However, existing automatic metrics are observed to correlate poorly with human evaluation. The lack of standardized benchmark datasets makes it difficult to fully evaluate the capabilities of a metric and fairly compare different metrics. Therefore, we propose OpenMEVA, a benchmark for evaluating open-ended story generation metrics. OpenMEVA provides a comprehensive test suite to assess the capabilities of metrics, including (a) the correlation with human judgments, (b) the generalization to different model outputs and datasets, (c) the ability to judge story coherence, and (d) the robustness to perturbations. To this end, OpenMEVA includes both manually annotated stories and auto-constructed test examples. We evaluate existing metrics on OpenMEVA and observe that they correlate poorly with human judgments, fail to recognize discourse-level incoherence, and lack inferential knowledge (e.g., causal order between events), generalization ability, and robustness. Our study presents insights for developing NLG models and metrics in further research.

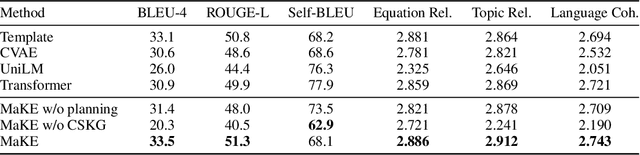

Mathematical Word Problem Generation from Commonsense Knowledge Graph and Equations

Oct 13, 2020

Abstract: There is an increasing interest in the use of automatic mathematical word problem (MWP) generation in educational assessment. Different from standard natural question generation, MWP generation needs to maintain the underlying mathematical operations between quantities and variables, while at the same time ensuring the relevance between the output and the given topic. To address the above problem, we develop an end-to-end neural model to generate personalized and diverse MWPs in real-world scenarios from a commonsense knowledge graph and equations. The proposed model (1) learns both representations from edge-enhanced Levi graphs of symbolic equations and commonsense knowledge; and (2) automatically fuses equation and commonsense knowledge information via a self-planning module when generating the MWPs. Experiments on an educational gold-standard set and a large-scale generated MWP set show that our approach is superior on the MWP generation task, and that it outperforms the state-of-the-art models in terms of both automatic evaluation metrics, i.e., BLEU-4, ROUGE-L, and Self-BLEU, and human evaluation metrics, i.e., equation relevance, topic relevance, and language coherence.

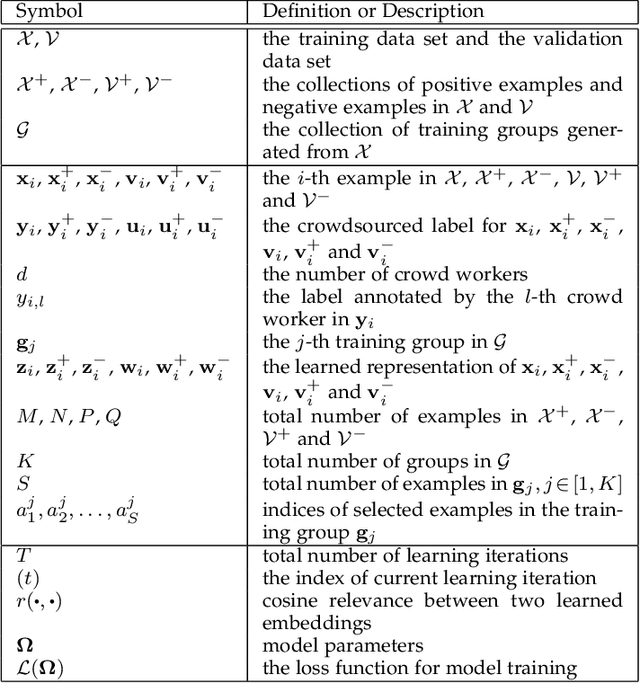

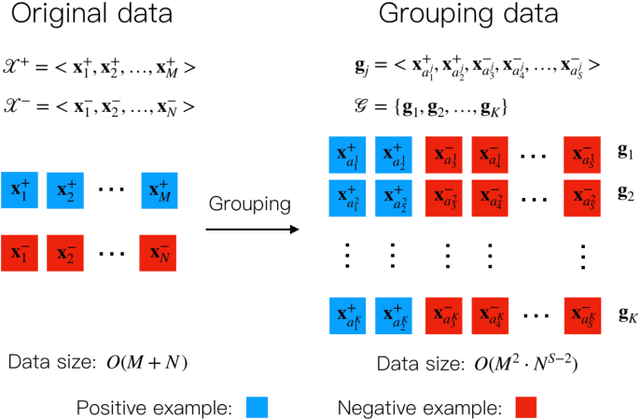

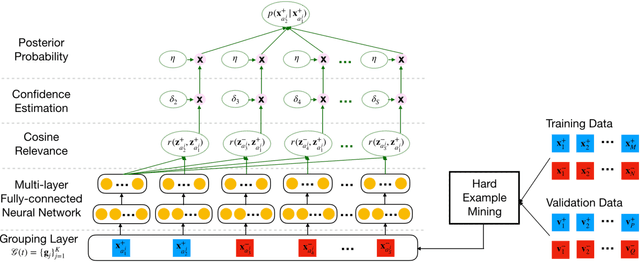

Representation Learning from Limited Educational Data with Crowdsourced Labels

Sep 23, 2020

Abstract: Representation learning has been proven to play an important role in the unprecedented success of machine learning models in numerous tasks, such as machine translation, face recognition, and recommendation. The majority of existing representation learning approaches require a large number of consistent and noise-free labels. However, due to various reasons such as budget constraints and privacy concerns, labels are very limited in many real-world scenarios. Directly applying standard representation learning approaches to small labeled data sets easily runs into over-fitting problems and leads to sub-optimal solutions. Even worse, in some domains such as education, the limited labels are usually annotated by multiple workers with diverse expertise, which yields noise and inconsistency in such crowdsourcing settings. In this paper, we propose a novel framework which aims to learn effective representations from limited data with crowdsourced labels. Specifically, we design a grouping-based deep neural network to learn embeddings from a limited number of training samples and present a Bayesian confidence estimator to capture the inconsistency among crowdsourced labels. Furthermore, to expedite the training process, we develop a hard example selection procedure to adaptively pick training examples that are misclassified by the model. Extensive experiments conducted on three real-world data sets demonstrate the superiority of our framework in learning representations from limited data with crowdsourced labels, compared with various state-of-the-art baselines. In addition, to fully understand the proposed framework, we provide a comprehensive analysis of each of its main components and report the promising results it achieved in real production use.

Neural Multi-Task Learning for Teacher Question Detection in Online Classrooms

May 16, 2020

Abstract: Asking questions is one of the most crucial pedagogical techniques used by teachers in class. It not only offers open-ended discussions between teachers and students to exchange ideas but also provokes deeper student thought and critical analysis. Providing teachers with such pedagogical feedback will remarkably help teachers improve their overall teaching quality over time in classrooms. Therefore, in this work, we build an end-to-end neural framework that automatically detects questions from teachers' audio recordings. Compared with traditional methods, our approach not only avoids cumbersome feature engineering, but also adapts to the task of multi-class question detection in real education scenarios. By incorporating multi-task learning techniques, we are able to strengthen the understanding of semantic relations among different types of questions. We conducted extensive experiments on the question detection tasks in a real-world online classroom dataset and the results demonstrate the superiority of our model in terms of various evaluation metrics.

Automatic Dialogic Instruction Detection for K-12 Online One-on-one Classes

May 16, 2020

Abstract: Online one-on-one classes are created for a highly interactive and immersive learning experience. They demand a large number of qualified online instructors. In this work, we develop six dialogic instructions and help teachers achieve the benefits of the one-on-one learning paradigm. Moreover, we utilize neural language models, i.e., long short-term memory (LSTM), to detect the above six instructions automatically. Experiments demonstrate that the LSTM approach achieves AUC scores from 0.840 to 0.979 among all six types of instructions on our real-world educational dataset.
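For readers unfamiliar with the AUC metric reported above, the sketch below computes it for one binary detector from its pairwise definition: the probability that a randomly chosen positive example is scored higher than a randomly chosen negative one, with ties counted as one half. The function name and the toy labels and scores are illustrative only and are not data from the paper.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via the pairwise (Mann-Whitney) definition.

    labels: iterable of 0/1 ground-truth values.
    scores: iterable of model scores, higher meaning more likely positive.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


# Toy usage: one positive (score 0.3) is ranked below one negative (score 0.8).
toy_auc = roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.3, 0.1])
```

This quadratic-time version is meant for clarity; a rank-based computation is preferable for large evaluation sets.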

Siamese Neural Networks for Class Activity Detection

May 15, 2020

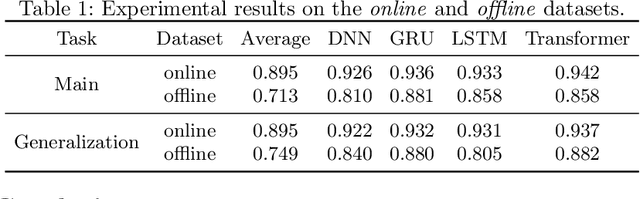

Abstract: Classroom activity detection (CAD) aims at accurately recognizing speaker roles (either teacher or student) in classrooms. A CAD solution helps teachers get instant feedback on their pedagogical instructions. However, CAD is very challenging because (1) classroom conversations contain many conversational turn-taking overlaps between teachers and students; (2) the CAD model needs to generalize well to different teachers and students; and (3) classroom recordings may be very noisy and of low quality. In this work, we address the above challenges by building a Siamese neural framework to automatically identify teacher and student utterances from classroom recordings. The proposed model is evaluated on real-world educational datasets. The results demonstrate that (1) our approach is superior on the prediction tasks for both online and offline classroom environments; and (2) our framework exhibits robustness and generalization ability on new teachers (i.e., teachers who never appear in the training data).
