Wen-mei Hwu

EDD: Efficient Differentiable DNN Architecture and Implementation Co-search for Embedded AI Solutions

May 06, 2020

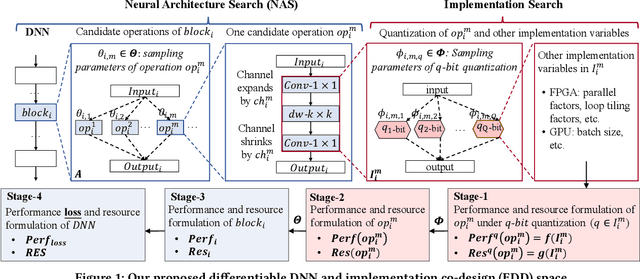

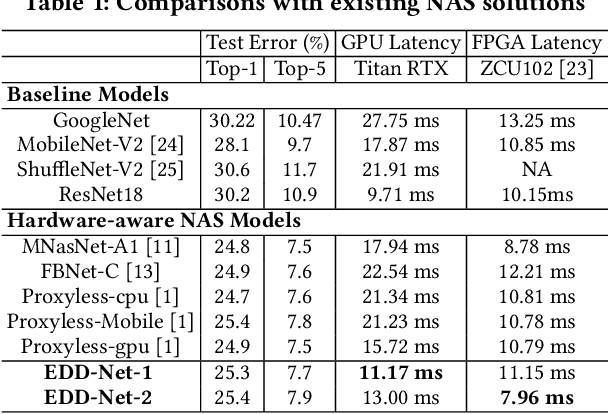

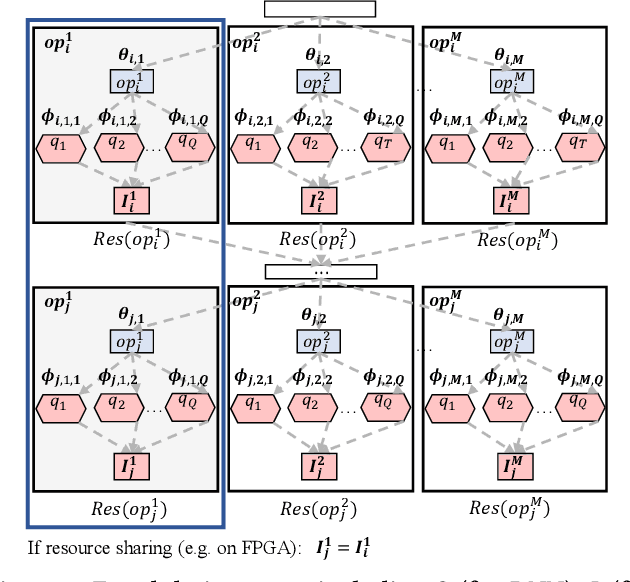

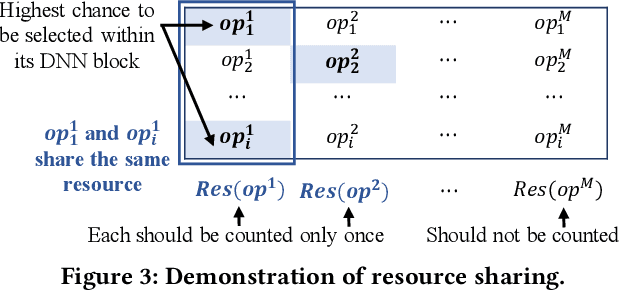

Abstract: High-quality AI solutions require joint optimization of AI algorithms and their hardware implementations. In this work, we are the first to propose a fully simultaneous, efficient differentiable DNN architecture and implementation co-search (EDD) methodology. We formulate the co-search problem by fusing DNN search variables and hardware implementation variables into one solution space, and maximize both algorithm accuracy and hardware implementation quality. The formulation is differentiable with respect to the fused variables, so gradient descent can be applied to greatly reduce the search time. The formulation is also applicable to various devices with different objectives. In the experiments, we demonstrate the effectiveness of our EDD methodology by searching for three representative DNNs, targeting low-latency GPU implementation and FPGA implementations with both recursive and pipelined architectures. Each model produced by EDD achieves accuracy similar to the best existing DNN models found by neural architecture search (NAS) methods on ImageNet, but with superior performance, obtained within 12 GPU-hours of search. Our DNN targeting GPU is 1.40x faster than the state-of-the-art solution reported in Proxyless, and our DNN targeting FPGA delivers 1.45x higher throughput than the state-of-the-art solution reported in DNNBuilder.
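
To make the idea of a differentiable co-search concrete, here is a toy sketch in Python. It is not EDD's formulation: it only illustrates the general trick of relaxing discrete choices with a softmax so that a single loss is differentiable in both architecture variables (`theta`, over candidate ops) and implementation variables (`phi`, over parallelism factors), which are then updated together by gradient descent. The error proxies, latency table, and trade-off weight `LAMBDA` are hypothetical numbers, and the gradients are derived by hand for this expected-cost loss.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical toy search space: 3 candidate ops (architecture choice)
# and 2 candidate parallelism factors (implementation choice).
ERROR = [0.30, 0.25, 0.20]   # assumed error proxy of each candidate op
LATENCY = [[1.0, 0.6],       # assumed latency[op][parallelism factor]
           [2.0, 1.1],
           [4.0, 2.2]]
LAMBDA = 0.05                # assumed weight of latency against error


def loss_and_grads(theta, phi):
    """Expected error + LAMBDA * expected latency under the softmax relaxation."""
    p, q = softmax(theta), softmax(phi)
    ops, pars = range(len(p)), range(len(q))
    # Cost of each op, averaged over the current implementation distribution q.
    c = [ERROR[i] + LAMBDA * sum(q[j] * LATENCY[i][j] for j in pars) for i in ops]
    # Latency cost of each implementation, averaged over the op distribution p.
    d = [LAMBDA * sum(p[i] * LATENCY[i][j] for i in ops) for j in pars]
    loss = sum(p[i] * c[i] for i in ops)
    d_mean = sum(q[j] * d[j] for j in pars)
    # For L = sum_k softmax(x)_k * cost_k with cost independent of x:
    # dL/dx_k = p_k * (cost_k - sum_j p_j * cost_j).
    g_theta = [p[k] * (c[k] - loss) for k in ops]
    g_phi = [q[k] * (d[k] - d_mean) for k in pars]
    return loss, g_theta, g_phi


def co_search(steps=500, lr=5.0):
    theta = [0.0] * len(ERROR)
    phi = [0.0] * len(LATENCY[0])
    history = []
    for _ in range(steps):
        loss, g_theta, g_phi = loss_and_grads(theta, phi)
        history.append(loss)
        # Plain gradient descent on the fused variables (theta, phi).
        theta = [t - lr * g for t, g in zip(theta, g_theta)]
        phi = [f - lr * g for f, g in zip(phi, g_phi)]
    best_op = max(range(len(theta)), key=theta.__getitem__)
    best_par = max(range(len(phi)), key=phi.__getitem__)
    return best_op, best_par, history


best_op, best_par, history = co_search()
print(best_op, best_par, round(history[0], 4), round(history[-1], 4))
```

In this toy setup the search settles on the middle op with the faster parallelism factor: it is not the most accurate candidate, but under the assumed `LAMBDA` its latency cost makes it the best overall trade-off, which is the kind of joint decision a fused search space allows.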

Differential Treatment for Stuff and Things: A Simple Unsupervised Domain Adaptation Method for Semantic Segmentation

Apr 22, 2020

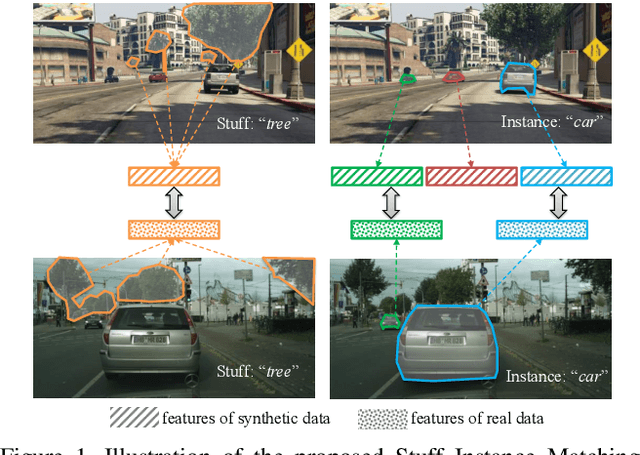

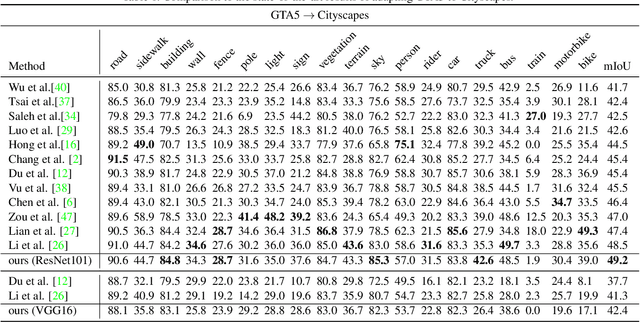

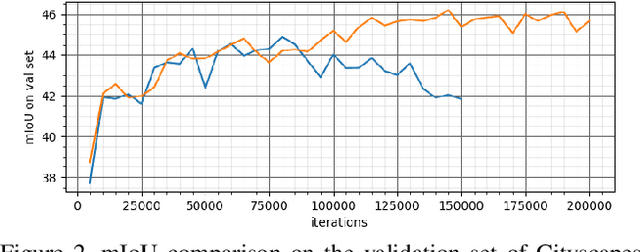

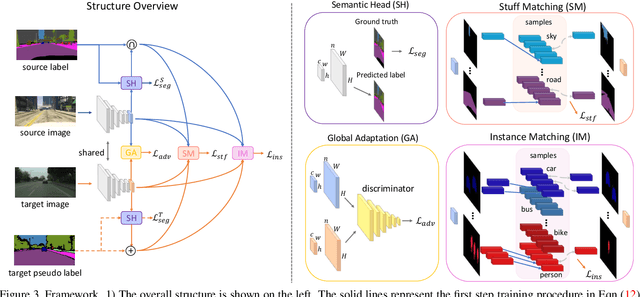

Abstract: In this work, we consider the problem of unsupervised domain adaptation for semantic segmentation, easing the domain shift between the source domain (synthetic data) and the target domain (real data). State-of-the-art approaches show that performing semantic-level alignment helps tackle the domain shift. Based on the observation that stuff categories usually share similar appearances across images of different domains while things (i.e., object instances) differ much more, we propose to improve semantic-level alignment with different strategies for stuff regions and for things: 1) for the stuff categories, we generate a feature representation for each class and conduct the alignment from the target domain to the source domain; 2) for the thing categories, we generate a feature representation for each individual instance and encourage each instance in the target domain to align with the most similar one in the source domain. In this way, the individual differences within thing categories are also taken into account, alleviating over-alignment. In addition to the proposed method, we explain why the current adversarial loss is often unstable in minimizing the distribution discrepancy, and show that our method helps ease this issue by minimizing the distance between the most similar stuff and instance features of the source and target domains. We conduct extensive experiments on two unsupervised domain adaptation tasks, GTA5 to Cityscapes and SYNTHIA to Cityscapes, and achieve new state-of-the-art segmentation accuracy.
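
The two alignment strategies can be sketched as loss functions over precomputed features. This is a minimal, framework-free sketch under stated assumptions, not the paper's implementation: features are plain Python lists, each stuff class is summarized by its mean feature (a prototype), similarity between instances is cosine similarity, and the distance is squared Euclidean. The function names and data layout are hypothetical, and the feature extractor and training loop are not reproduced.

```python
import math


def mean_vec(vectors):
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def stuff_loss(src_pixels, tgt_pixels):
    """Class-level alignment for stuff categories.

    *_pixels: dict mapping a stuff class to its per-pixel feature vectors.
    Each domain gets one prototype (mean feature) per class, and the target
    prototype is compared with the source prototype of the same class.
    """
    shared = sorted(src_pixels.keys() & tgt_pixels.keys())
    if not shared:
        return 0.0
    return sum(sq_dist(mean_vec(tgt_pixels[k]), mean_vec(src_pixels[k]))
               for k in shared) / len(shared)


def thing_loss(src_instances, tgt_instances):
    """Instance-level alignment for thing categories.

    *_instances: list of (class, feature) pairs, one per object instance.
    Each target instance is matched to the most similar (cosine) source
    instance of the same class instead of to a class-wide mean.
    """
    total, matched = 0.0, 0
    for cls, f_tgt in tgt_instances:
        candidates = [f_src for c, f_src in src_instances if c == cls]
        if not candidates:
            continue  # no source instance of this class to align with
        nearest = max(candidates, key=lambda f_src: cosine(f_tgt, f_src))
        total += sq_dist(f_tgt, nearest)
        matched += 1
    return total / matched if matched else 0.0


src_stuff = {"road": [[1.0, 0.0], [3.0, 0.0]], "sky": [[0.0, 5.0]]}
tgt_stuff = {"road": [[2.0, 1.0], [2.0, 3.0]]}
src_things = [("car", [1.0, 0.0]), ("car", [0.0, 1.0]), ("person", [1.0, 1.0])]
tgt_things = [("car", [0.0, 2.0]), ("bike", [1.0, 1.0])]
print(stuff_loss(src_stuff, tgt_stuff))    # 4.0
print(thing_loss(src_things, tgt_things))  # 1.0
```

In the usage lines, the target car is matched to the source car it most resembles (distance 1.0); pulling it toward the mean of all source cars instead would give 2.5, which illustrates how instance-level matching preserves individual differences rather than forcing every instance toward one class average.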

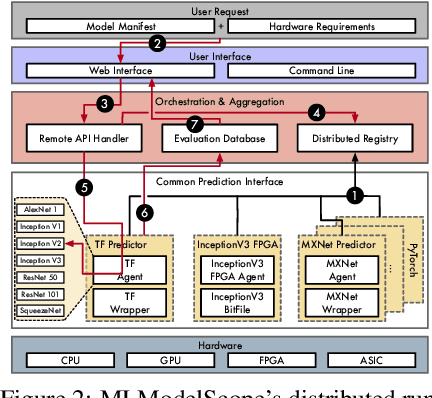

MLModelScope: A Distributed Platform for Model Evaluation and Benchmarking at Scale

Feb 19, 2020

Abstract: Machine Learning (ML) and Deep Learning (DL) innovations are being introduced at such a rapid pace that researchers are hard-pressed to analyze and study them. The complicated procedures for evaluating innovations, along with the lack of standard and efficient ways of specifying and provisioning ML/DL evaluation, are a major "pain point" for the community. This paper proposes MLModelScope, an open-source, framework/hardware agnostic, extensible, and customizable design that enables repeatable, fair, and scalable model evaluation and benchmarking. We implement the distributed design with support for all major frameworks and hardware, and equip it with web, command-line, and library interfaces. To demonstrate MLModelScope's capabilities, we perform parallel evaluation and show how subtle changes to the model evaluation pipeline affect accuracy and how HW/SW stack choices affect performance.

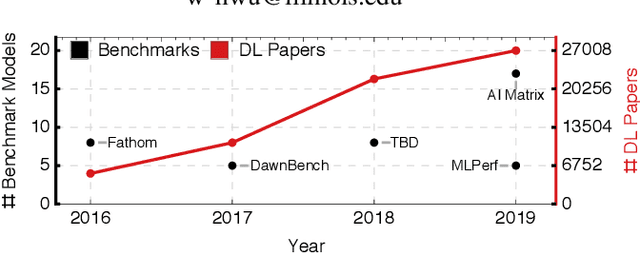

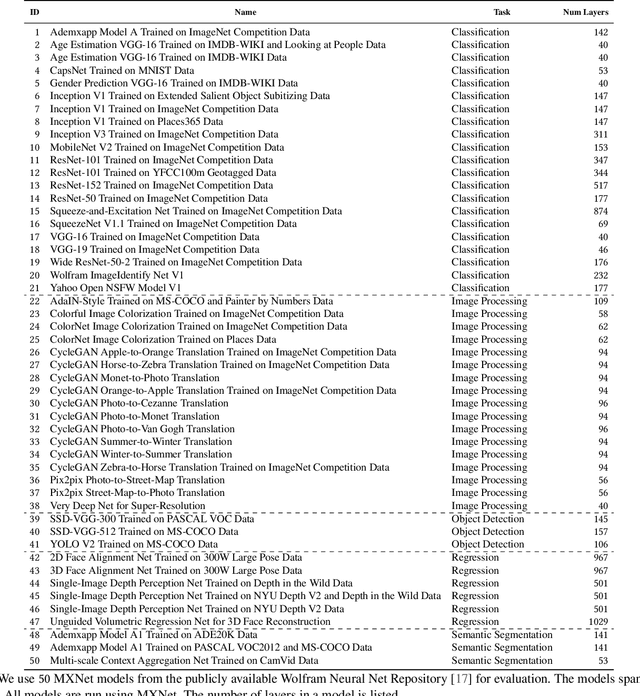

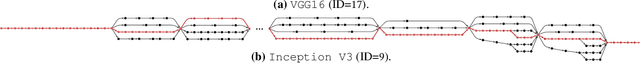

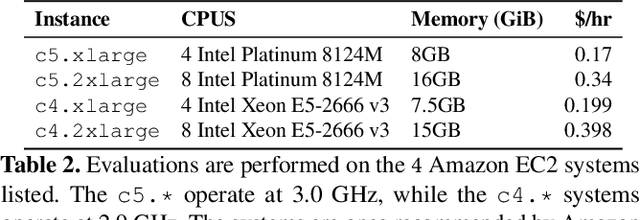

DLBricks: Composable Benchmark Generation to Reduce Deep Learning Benchmarking Effort on CPUs

Nov 20, 2019

Abstract: The past few years have seen a surge in applying Deep Learning (DL) models to a wide array of tasks such as image classification, object detection, and machine translation. While DL models provide an opportunity to solve otherwise intractable tasks, their adoption relies on them being optimized to meet latency and resource requirements. Benchmarking is a key step in this process but has been hampered in part by the lack of representative and up-to-date benchmarking suites, a problem exacerbated by the fast pace at which DL models evolve. This paper proposes DLBricks, a composable benchmark generation design that reduces the effort of developing, maintaining, and running DL benchmarks on CPUs. DLBricks decomposes DL models into a set of unique runnable networks and estimates the original model's performance from the performance of the generated benchmarks. DLBricks leverages two key observations: DL layers are the performance building blocks of DL models, and layers are extensively repeated within and across DL models. Since benchmarks are generated automatically and benchmarking time is minimized, DLBricks can keep up to date with the latest proposed models, relieving the pressure of selecting representative DL models. Moreover, DLBricks allows users to represent proprietary models within benchmark suites. We evaluate DLBricks using 50 MXNet models spanning 5 DL tasks on 4 representative CPU systems. We show that DLBricks provides an accurate performance estimate for the DL models and reduces the benchmarking time across systems (e.g., within 95% accuracy and up to a 4.4x benchmarking time speedup on Amazon EC2 c5.xlarge).
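
The two observations above (layers are the performance building blocks, and layers repeat within and across models) suggest a simple estimation loop: collect the unique layers of all models, benchmark each one once, and add the results back up per model. The sketch below assumes layers execute sequentially so their latencies add; the layer-signature format, the `benchmark` callable, and the timings are hypothetical stand-ins for DLBricks' generated runnable networks, which are not reproduced here.

```python
def estimate_latencies(models, benchmark):
    """Estimate whole-model latency from per-layer benchmarks.

    models: dict mapping model name -> list of layer signatures. A signature is
        any hashable description of a layer (hypothetical format here:
        (op, params..., input shape)); identical signatures share one benchmark.
    benchmark: callable that runs one generated layer benchmark and returns its
        latency in ms (hypothetical stand-in for running a real network).
    """
    unique = {sig for layers in models.values() for sig in layers}
    measured = {sig: benchmark(sig) for sig in unique}  # each unique layer runs once
    # Assumes layers execute one after another, so their latencies add up.
    estimates = {name: sum(measured[sig] for sig in layers)
                 for name, layers in models.items()}
    total_layers = sum(len(layers) for layers in models.values())
    return estimates, total_layers, len(unique)


CONV = ("conv2d", 64, 3, (1, 64, 56, 56))
RELU = ("relu", (1, 64, 56, 56))
FC = ("dense", 1000, (1, 2048))
fake_times = {CONV: 2.0, RELU: 0.5, FC: 1.0}  # made-up latencies in ms
calls = []


def fake_benchmark(sig):
    calls.append(sig)  # record how many benchmark runs were actually needed
    return fake_times[sig]


models = {"model_a": [CONV, CONV, RELU, FC], "model_b": [CONV, RELU, RELU]}
estimates, total, unique = estimate_latencies(models, fake_benchmark)
print(estimates, total, unique, len(calls))
```

Here seven layer instances need only three benchmark runs; the savings grow with the amount of layer repetition within and across models, which is where the benchmarking-time speedup described in the abstract comes from.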

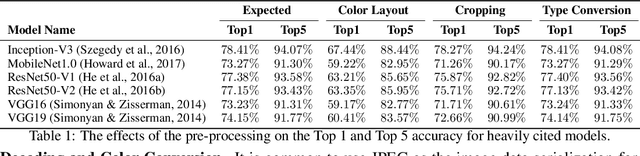

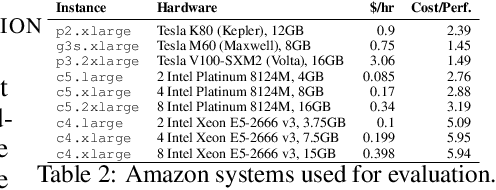

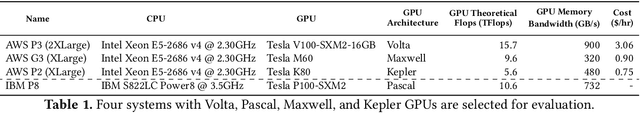

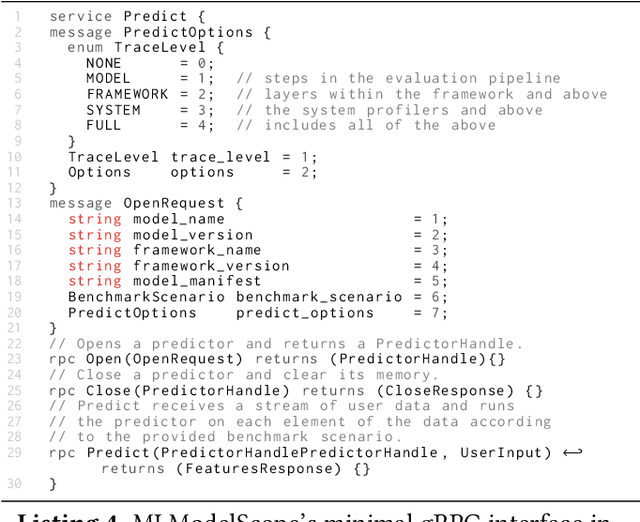

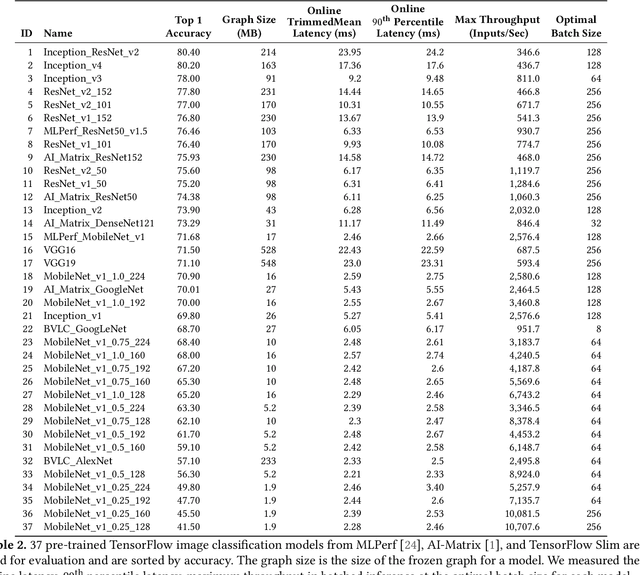

The Design and Implementation of a Scalable DL Benchmarking Platform

Nov 19, 2019

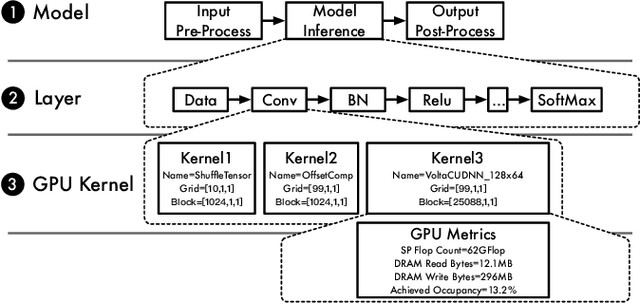

Abstract: The current Deep Learning (DL) landscape is fast-paced and rife with non-uniform models and hardware/software (HW/SW) stacks, but it lacks a DL benchmarking platform to facilitate evaluation and comparison of DL innovations, be they models, frameworks, libraries, or hardware. Due to the lack of a benchmarking platform, the current practice of evaluating the benefits of proposed DL innovations is both arduous and error-prone, stifling their adoption. In this work, we first identify 10 design features that are desirable in a DL benchmarking platform. These features include performing evaluation in a consistent, reproducible, and scalable manner; being framework and hardware agnostic; supporting real-world benchmarking workloads; and providing in-depth model execution inspection across the HW/SW stack levels. We then propose MLModelScope, a DL benchmarking platform design that realizes these 10 objectives. MLModelScope proposes a specification to define DL model evaluations and techniques to provision the evaluation workflow using the user-specified HW/SW stack. MLModelScope defines abstractions for frameworks and supports a broad range of DL models and evaluation scenarios. We implement MLModelScope as an open-source project with support for all major frameworks and hardware architectures. Through MLModelScope's evaluation and automated analysis workflows, we perform case-study analyses of 37 models across 4 systems and show how model, hardware, and framework selection affects model accuracy and performance under different benchmarking scenarios. We further demonstrate how MLModelScope's tracing capability gives a holistic view of model execution and helps pinpoint bottlenecks.

Benanza: Automatic μBenchmark Generation to Compute "Lower-bound" Latency and Inform Optimizations of Deep Learning Models on GPUs

Nov 19, 2019

Abstract: As Deep Learning (DL) models are increasingly used in latency-sensitive applications, there has been growing interest in improving their response time. An important avenue for such improvement is to profile the execution of these models and characterize their performance to identify possible optimization opportunities. However, current profiling tools lack the much-needed ability to characterize ideal performance, identify sources of inefficiency, and quantify the benefits of potential optimizations. Such deficiencies have led to slow characterization/optimization cycles that cannot keep up with the fast pace at which new DL models are introduced. We propose Benanza, a sustainable and extensible benchmarking and analysis design that speeds up the characterization/optimization cycle of DL models on GPUs. Benanza consists of four major components: a model processor that parses models into an internal representation, a configurable benchmark generator that automatically generates micro-benchmarks given a set of models, a database of benchmark results, and an analyzer that computes the "lower-bound" latency of DL models using the benchmark data and informs optimizations of model execution. The "lower-bound" latency metric estimates the ideal model execution on a GPU system and serves as the basis for identifying optimization opportunities in frameworks or system libraries. We used Benanza to evaluate 30 ONNX models in MXNet, ONNX Runtime, and PyTorch on 7 GPUs ranging from Kepler to the latest Turing, and identified optimizations in parallel layer execution, cuDNN convolution algorithm selection, framework inefficiency, layer fusion, and Tensor Core usage.
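
The "lower-bound" latency idea can be illustrated with a small sketch. This is our reading of the concept rather than Benanza's code: assume each layer has benchmarked latencies for several candidate algorithms (e.g., different cuDNN convolution algorithms), keep the fastest one per layer, and then either add the layers up (layers run one at a time) or take the longest path through the model's layer graph (independent branches overlap perfectly). The function name, layer names, algorithm labels, and timings are hypothetical.

```python
import functools


def lower_bounds(layer_latencies, preds):
    """Sketch of "lower-bound" model latency from per-layer micro-benchmarks.

    layer_latencies: dict layer -> {algorithm name: benchmarked latency in ms}.
    preds: dict layer -> list of predecessor layers (the model's dataflow DAG).
    Returns (sequential bound, parallel bound).
    """
    # Ideal per-layer latency: the fastest benchmarked algorithm for that layer.
    best = {layer: min(algos.values()) for layer, algos in layer_latencies.items()}
    # Sequential bound: layers run one at a time, each with its best algorithm.
    sequential = sum(best.values())

    @functools.lru_cache(maxsize=None)
    def finish(layer):
        # Earliest finish time if independent branches could run fully in
        # parallel: a layer starts once its slowest predecessor finishes.
        return best[layer] + max((finish(p) for p in preds.get(layer, [])), default=0.0)

    # Parallel bound: the critical (longest) path through the DAG.
    parallel = max(finish(layer) for layer in best)
    return sequential, parallel


# Hypothetical Inception-style block: two branches between input and concat.
latencies = {
    "input": {"copy": 1.0},
    "branch_a": {"gemm": 4.0, "winograd": 3.0},
    "branch_b": {"gemm": 2.0, "fft": 2.5},
    "concat": {"copy": 0.5},
}
preds = {"branch_a": ["input"], "branch_b": ["input"], "concat": ["branch_a", "branch_b"]}
print(lower_bounds(latencies, preds))
```

A framework whose measured latency for this block sits well above the parallel bound is a candidate for the kinds of fixes the abstract lists, such as parallel layer execution or better convolution algorithm selection. The recursive `finish` keeps the sketch short; a model with thousands of layers would call for an iterative topological pass instead.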

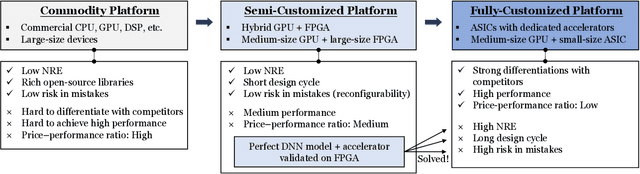

NAIS: Neural Architecture and Implementation Search and its Applications in Autonomous Driving

Nov 18, 2019

Abstract: The rapidly growing demand for powerful AI algorithms in many application domains has motivated massive investment in both high-quality deep neural network (DNN) models and high-efficiency implementations. In this position paper, we argue that a simultaneous DNN/implementation co-design methodology, named Neural Architecture and Implementation Search (NAIS), deserves more research attention to boost the development productivity and efficiency of both DNN models and implementation optimization. We propose a stylized design methodology that can drastically cut down the search cost while preserving the quality of the end solution. As an illustration, we discuss this DNN/implementation co-design methodology in the context of both FPGAs and GPUs. We take autonomous driving as a key use case, as it is one of the most demanding areas for high-quality AI algorithms and accelerators. We discuss how such a co-design methodology can significantly impact the autonomous driving industry, and we identify several research opportunities in this exciting domain.

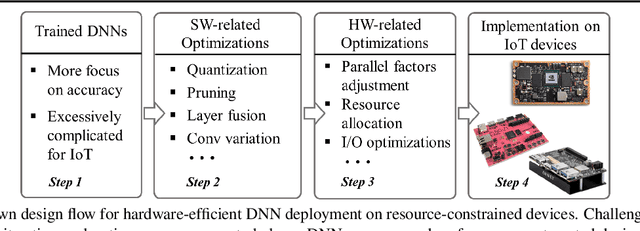

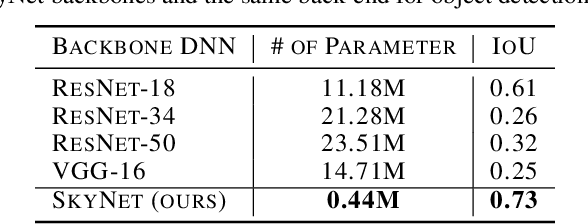

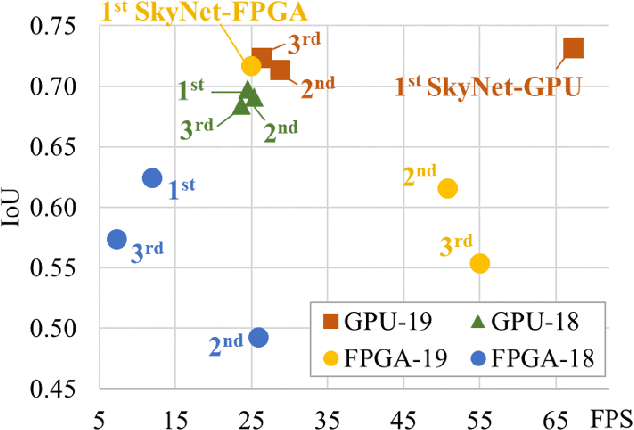

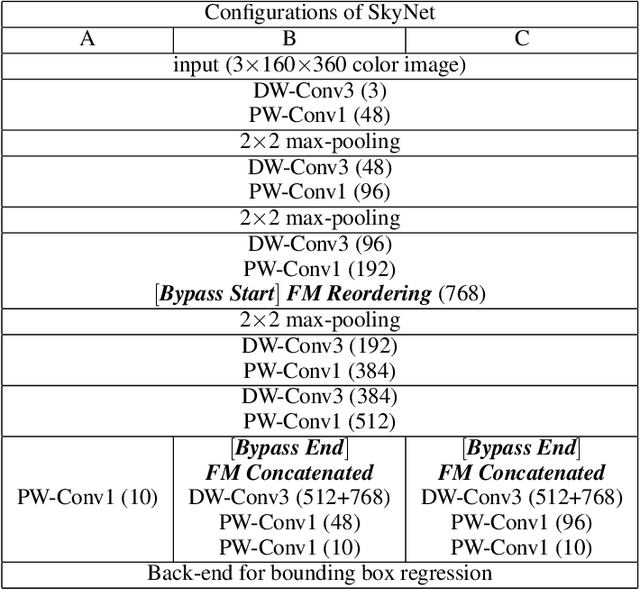

SkyNet: a Hardware-Efficient Method for Object Detection and Tracking on Embedded Systems

Sep 20, 2019

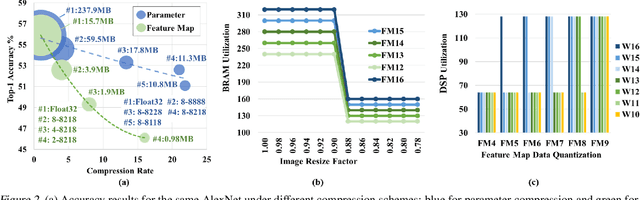

Abstract: Developing object detection and tracking on resource-constrained embedded systems is challenging. Object detection is one of the most compute-intensive tasks in artificial intelligence, yet on embedded devices it must run with limited computation and memory resources. Meanwhile, such resource-constrained implementations are often required to satisfy additional demanding requirements such as real-time response, high throughput, and reliable inference accuracy. To overcome these challenges, we propose SkyNet, a hardware-efficient method to deliver state-of-the-art detection accuracy and speed for embedded systems. Instead of following the common top-down flow for compact DNN design, SkyNet provides a bottom-up DNN design approach that takes a comprehensive understanding of the hardware constraints into account from the very beginning to deliver hardware-efficient DNNs. The effectiveness of SkyNet is demonstrated by winning the extremely competitive System Design Contest for low-power object detection at the 56th IEEE/ACM Design Automation Conference (DAC-SDC), where SkyNet significantly outperforms all 100+ other competitors: it delivers 0.731 Intersection over Union (IoU) and 67.33 frames per second (FPS) on a TX2 embedded GPU, and 0.716 IoU and 25.05 FPS on an Ultra96 embedded FPGA. The evaluation of SkyNet is also extended to GOT-10K, a recent large-scale, high-diversity benchmark for generic object tracking in the wild. For the state-of-the-art object trackers SiamRPN++ and SiamMask, which employ ResNet-50 as the backbone, implementations using SkyNet as the backbone DNN are 1.60x and 1.73x faster with better or similar accuracy when running on a 1080Ti GPU, and 37.20x smaller in parameter size, giving a significantly smaller memory and storage footprint.
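
For reference, the IoU figures quoted above measure how well a predicted bounding box overlaps the ground-truth box: the area of their intersection divided by the area of their union. Below is a minimal sketch for axis-aligned boxes, assuming (x_min, y_min, x_max, y_max) coordinates and one box per image. Averaging per-image IoU is shown as an assumption about how a single score such as 0.731 is aggregated; the contest's overall ranking criteria are not reproduced.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mean_iou(predictions, ground_truths):
    """Average per-image IoU, assuming one predicted and one true box per image."""
    scores = [iou(p, g) for p, g in zip(predictions, ground_truths)]
    return sum(scores) / len(scores)


print(iou((0, 0, 2, 2), (1, 1, 3, 3)))  # intersection area 1, union area 7
print(mean_iou([(0, 0, 2, 2), (0, 0, 1, 1)], [(0, 0, 2, 2), (2, 2, 3, 3)]))
```

A perfect match scores 1.0 and disjoint boxes score 0.0, so the second call averages one exact hit and one miss.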

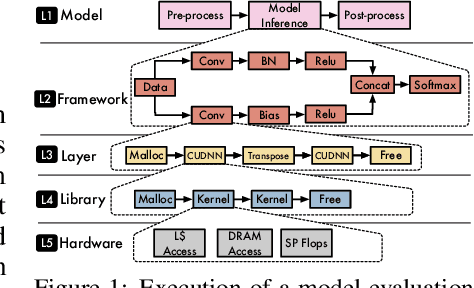

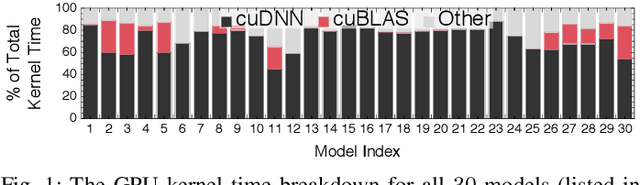

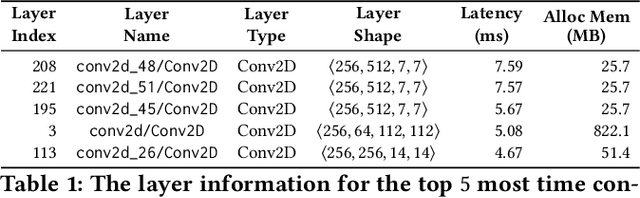

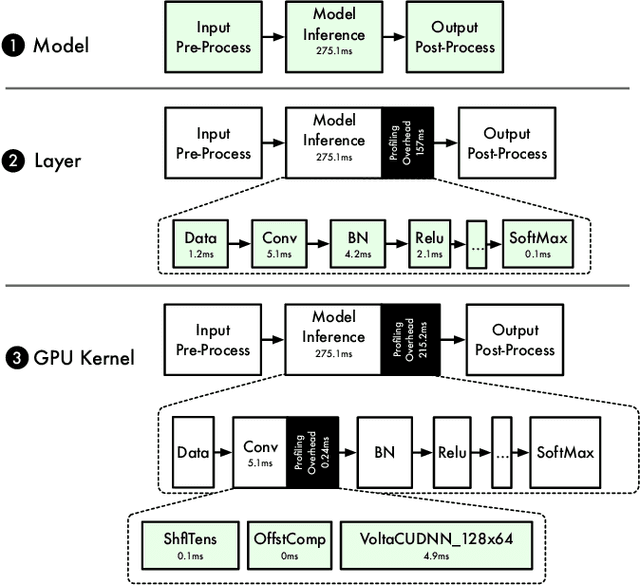

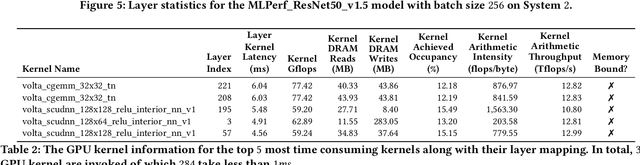

Across-Stack Profiling and Characterization of Machine Learning Models on GPUs

Aug 19, 2019

Abstract: Recent years have seen a proliferation of machine learning/deep learning (ML) models and their wide adoption in different application domains. This has made the profiling and characterization of ML models an increasingly pressing task for both hardware designers and system providers, who want to offer the best possible computing system to serve ML models with the desired latency, throughput, and energy requirements while maximizing resource utilization. Such an endeavor is challenging because the characteristics of an ML model depend on the interplay between the model, framework, system libraries, and hardware (the HW/SW stack). A thorough characterization requires understanding the behavior of model execution across the levels of the HW/SW stack. Existing profiling tools, however, are disjoint, and each focuses on profiling within a single level of the stack. This paper proposes a leveled profiling design that leverages existing profiling tools to perform across-stack profiling, and does so in spite of the overheads incurred by the individual profiling providers. We couple the profiling capability with an automatic analysis pipeline to systematically characterize 65 state-of-the-art ML models. Through this characterization, we show that our across-stack profiling solution provides insights (which are difficult to discern otherwise) into the characteristics of ML models, ML frameworks, and GPU hardware.
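
One way to picture across-stack profiling is to stitch spans recorded by separate per-level profilers into a single tree, nesting each span under the tightest enclosing span from the level above. The sketch below assumes three levels (model, layer, GPU kernel) that share a common clock and correlates them by time containment only; the `Span` type, the level numbering, the helper names, and the timings are hypothetical, and the paper's own correlation mechanism may differ. The `uncovered_time` helper shows a cross-level view that no single-level tool gives directly: time inside a layer that none of its kernels accounts for.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    level: int      # assumed levels: 0 = model, 1 = layer, 2 = GPU kernel
    start: float    # timestamps in ms on a clock shared by all profilers
    end: float
    children: list = field(default_factory=list)


def build_tree(spans):
    """Attach each span to the tightest enclosing span one level up."""
    ordered = sorted(spans, key=lambda s: (s.level, s.start))
    roots = []
    for s in ordered:
        parents = [p for p in ordered
                   if p.level == s.level - 1 and p.start <= s.start and s.end <= p.end]
        if parents:
            min(parents, key=lambda p: p.end - p.start).children.append(s)
        else:
            roots.append(s)  # top-level span, or no enclosing span was recorded
    return roots


def uncovered_time(span):
    """Time inside `span` not covered by its children (assumes children don't overlap)."""
    return (span.end - span.start) - sum(c.end - c.start for c in span.children)


spans = [
    Span("model", 0, 0.0, 10.0),
    Span("conv1", 1, 0.0, 6.0),
    Span("relu1", 1, 6.0, 10.0),
    Span("kernel_a", 2, 1.0, 3.0),
    Span("kernel_b", 2, 3.0, 5.0),
    Span("kernel_c", 2, 7.0, 8.0),
]
(model,) = build_tree(spans)
for layer in model.children:
    print(layer.name, [k.name for k in layer.children], uncovered_time(layer))
```

In this made-up trace, `relu1` spends 3 of its 4 ms outside any GPU kernel, the kind of framework-level gap that only becomes visible once layer-level and kernel-level data are lined up.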

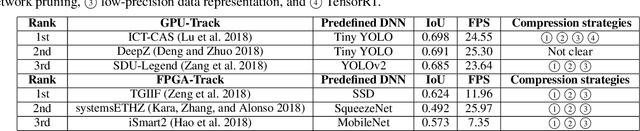

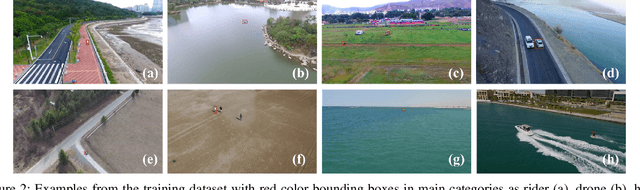

SkyNet: A Champion Model for DAC-SDC on Low Power Object Detection

Jul 09, 2019

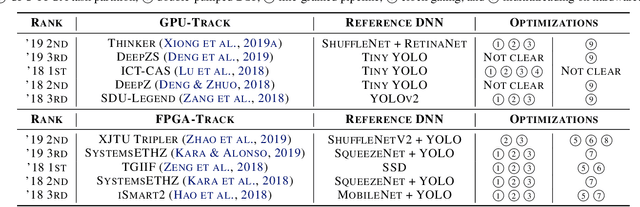

Abstract: Developing artificial intelligence (AI) at the edge is always challenging, since edge devices have limited computation capability and memory resources but need to meet demanding requirements such as real-time processing, high throughput, and high inference accuracy. To overcome these challenges, we propose SkyNet, an extremely lightweight DNN with 12 convolutional (Conv) layers and only 1.82 megabytes (MB) of parameters, following a bottom-up DNN design approach. SkyNet is demonstrated in the 56th IEEE/ACM Design Automation Conference System Design Contest (DAC-SDC), a low-power object detection challenge on images captured by unmanned aerial vehicles (UAVs). SkyNet won first place in both the GPU and FPGA tracks of the contest: it delivers 0.731 Intersection over Union (IoU) and 67.33 frames per second (FPS) on a TX2 GPU, and 0.716 IoU and 25.05 FPS on an Ultra96 FPGA.
