Robert D. Hawkins

Using Natural Language and Program Abstractions to Instill Human Inductive Biases in Machines

May 23, 2022

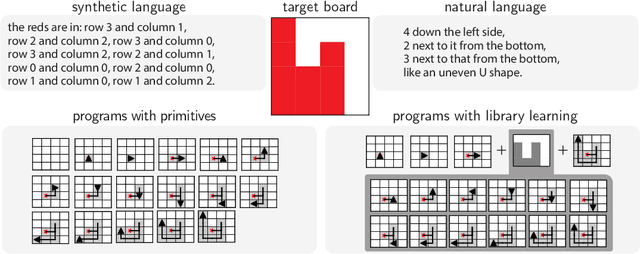

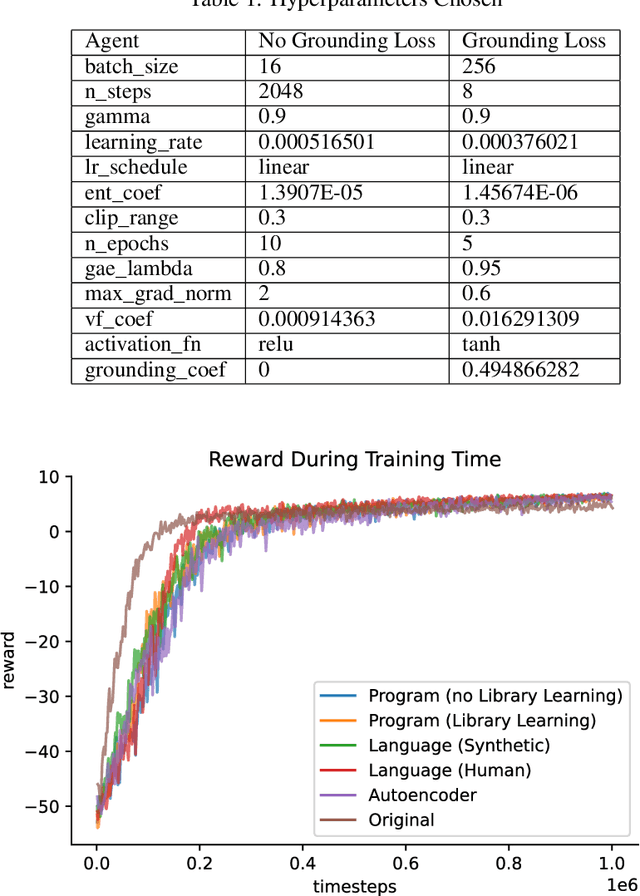

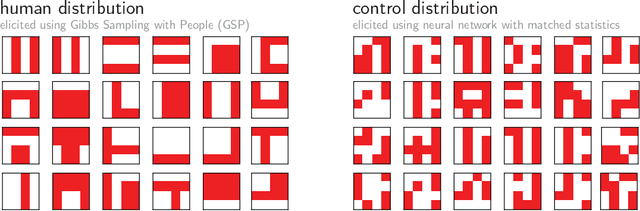

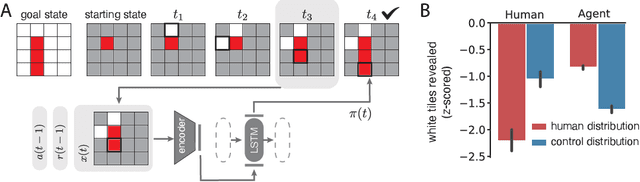

Abstract: Strong inductive biases are a key component of human intelligence, allowing people to quickly learn a variety of tasks. Although meta-learning has emerged as an approach for endowing neural networks with useful inductive biases, agents trained by meta-learning may acquire very different strategies from humans. We show that co-training these agents on predicting representations from natural language task descriptions and from programs induced to generate such tasks guides them toward human-like inductive biases. Human-generated language descriptions and program induction with library learning both result in more human-like behavior in downstream meta-reinforcement learning agents than less abstract controls (synthetic language descriptions, program induction without library learning), suggesting that the abstraction supported by these representations is key.

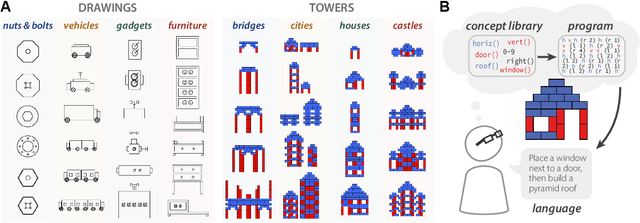

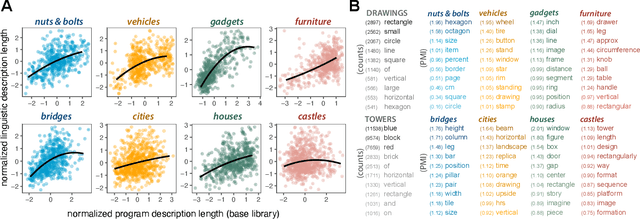

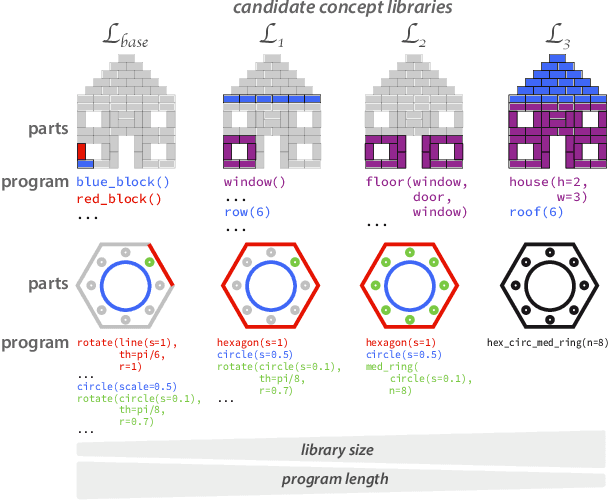

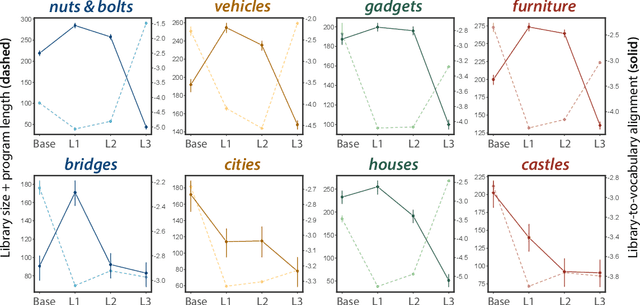

Identifying concept libraries from language about object structure

May 11, 2022

Abstract: Our understanding of the visual world goes beyond naming objects, encompassing our ability to parse objects into meaningful parts, attributes, and relations. In this work, we leverage natural language descriptions for a diverse set of 2K procedurally generated objects to identify the parts people use and the principles leading these parts to be favored over others. We formalize our problem as search over a space of program libraries that contain different part concepts, using tools from machine translation to evaluate how well programs expressed in each library align to human language. By combining naturalistic language at scale with structured program representations, we discover a fundamental information-theoretic tradeoff governing the part concepts people name: people favor a lexicon that allows concise descriptions of each object, while also minimizing the size of the lexicon itself.
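The tradeoff named at the end of the abstract above can be illustrated with a toy cost function. This is a minimal sketch and not the paper's method: the object encoding, the greedy segmentation in `description_length`, the additive form of `lexicon_cost`, and the `size_penalty` parameter are all assumptions made for illustration.

```python
# Toy objects: each is a tuple of primitive strokes (hypothetical encoding).
OBJECTS = [
    ("leg", "leg", "leg", "leg", "seat"),
    ("leg", "leg", "leg", "leg", "top"),
]

def description_length(obj, library):
    """Count the words needed to describe `obj` with greedy longest-match.

    Each part concept in `library` is a tuple of primitives and costs one
    word. Primitives not covered by any part cost one word each.
    """
    parts = sorted(library, key=len, reverse=True)
    position, n_words = 0, 0
    while position < len(obj):
        for part in parts:
            if obj[position:position + len(part)] == part:
                position += len(part)
                break
        else:
            position += 1  # fall back to naming a single primitive
        n_words += 1
    return n_words

def lexicon_cost(objects, library, size_penalty):
    """Mean description length plus a penalty per part concept (assumed form)."""
    mean_length = sum(description_length(o, library) for o in objects) / len(objects)
    return mean_length + size_penalty * len(library)

FOUR_LEGS = ("leg", "leg", "leg", "leg")
NO_PARTS = []                      # smallest lexicon, longest descriptions
ONE_PART = [FOUR_LEGS]             # one reusable part concept
ONE_WORD_PER_OBJECT = [FOUR_LEGS, OBJECTS[0], OBJECTS[1]]  # shortest descriptions

for name, library in [("no parts", NO_PARTS), ("one part", ONE_PART),
                      ("one word per object", ONE_WORD_PER_OBJECT)]:
    print(name, lexicon_cost(OBJECTS, library, size_penalty=1.0))
```

With this penalty, the intermediate library has the lowest cost: it shortens every description without paying for a dedicated word per object.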

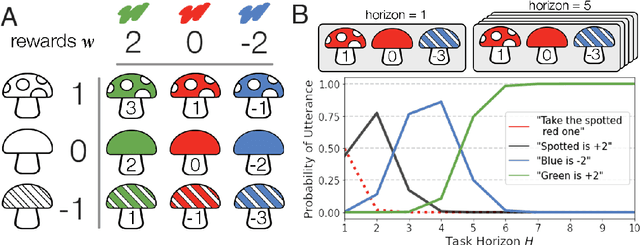

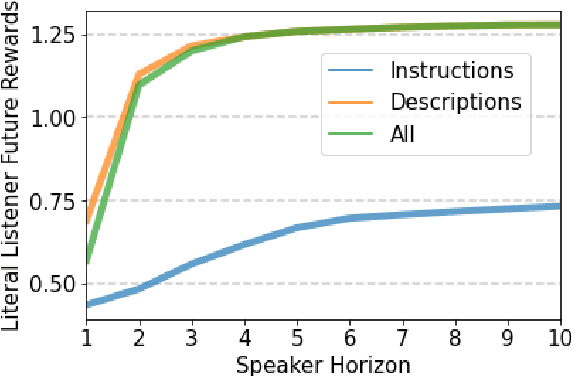

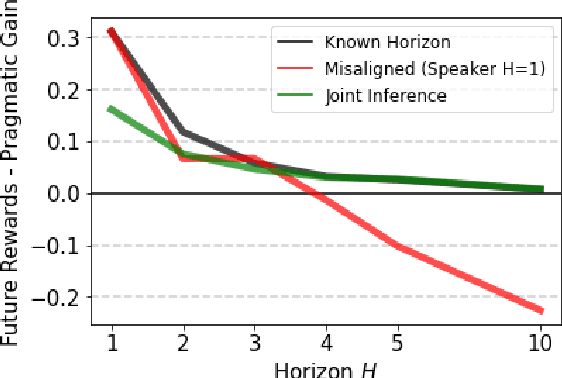

Linguistic communication as (inverse) reward design

Apr 11, 2022

Abstract: Natural language is an intuitive and expressive way to communicate reward information to autonomous agents. It encompasses everything from concrete instructions to abstract descriptions of the world. Despite this, natural language is often challenging to learn from: it is difficult for machine learning methods to make appropriate inferences from such a wide range of input. This paper proposes a generalization of reward design as a unifying principle to ground linguistic communication: speakers choose utterances to maximize expected rewards from the listener's future behaviors. We first extend reward design to incorporate reasoning about unknown future states in a linear bandit setting. We then define a speaker model which chooses utterances according to this objective. Simulations show that short-horizon speakers (reasoning primarily about a single, known state) tend to use instructions, while long-horizon speakers (reasoning primarily about unknown, future states) tend to describe the reward function. We then define a pragmatic listener which performs inverse reward design by jointly inferring the speaker's latent horizon and rewards. Our findings suggest that this extension of reward design to linguistic communication, including the notion of a latent speaker horizon, is a promising direction for achieving more robust alignment outcomes from natural language supervision.
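The contrast between short-horizon and long-horizon speakers described above can be sketched in a tiny linear bandit. Everything concrete here is an assumption for illustration, not the paper's model: the reward weights, the hand-picked states, the literal listener that treats unmentioned weights as zero, the hard argmax speaker, and the `horizon_weight` parameter that mixes present and future reward.

```python
# Hypothetical linear bandit: an action's reward is the dot product of its
# features with TRUE_WEIGHTS, which only the speaker knows.
TRUE_WEIGHTS = (1.0, 1.0, -3.0)

PRESENT_STATE = [(1, 1, 0), (2, 0, 1), (0, 2, 1), (0, 0, -0.1)]
FUTURE_STATES = [  # stand-ins for states the speaker cannot see in advance
    [(1, 0, 1), (0, 1, 0), (0, 0, -1)],
    [(1, 0, 0), (0, 0, 1), (0, 1, 1)],
]

def dot(weights, features):
    return sum(w * f for w, f in zip(weights, features))

def expected_reward(believed_weights, actions):
    """True reward when the listener picks the action that looks best under
    its beliefs, breaking ties uniformly at random."""
    values = [dot(believed_weights, a) for a in actions]
    best = max(values)
    chosen = [a for a, v in zip(actions, values) if abs(v - best) < 1e-9]
    return sum(dot(TRUE_WEIGHTS, a) for a in chosen) / len(chosen)

def utterance_utility(utterance, horizon_weight):
    kind, index = utterance
    if kind == "instruct":
        # The listener takes the named action now but learns nothing about weights.
        present = dot(TRUE_WEIGHTS, PRESENT_STATE[index])
        belief = (0.0, 0.0, 0.0)
    else:
        # A description reveals the true weight of one feature.
        belief = tuple(w if i == index else 0.0 for i, w in enumerate(TRUE_WEIGHTS))
        present = expected_reward(belief, PRESENT_STATE)
    future = sum(expected_reward(belief, s) for s in FUTURE_STATES) / len(FUTURE_STATES)
    return (1 - horizon_weight) * present + horizon_weight * future

UTTERANCES = ([("instruct", i) for i in range(len(PRESENT_STATE))]
              + [("describe", i) for i in range(len(TRUE_WEIGHTS))])

def best_utterance(horizon_weight):
    return max(UTTERANCES, key=lambda u: utterance_utility(u, horizon_weight))

print(best_utterance(0.0))  # speaker that only cares about the known state
print(best_utterance(1.0))  # speaker that only cares about future states
```

In this toy setup the short-horizon speaker names the best present action, while the long-horizon speaker describes the most consequential reward weight. The pragmatic listener that infers the speaker's horizon is not modeled here.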

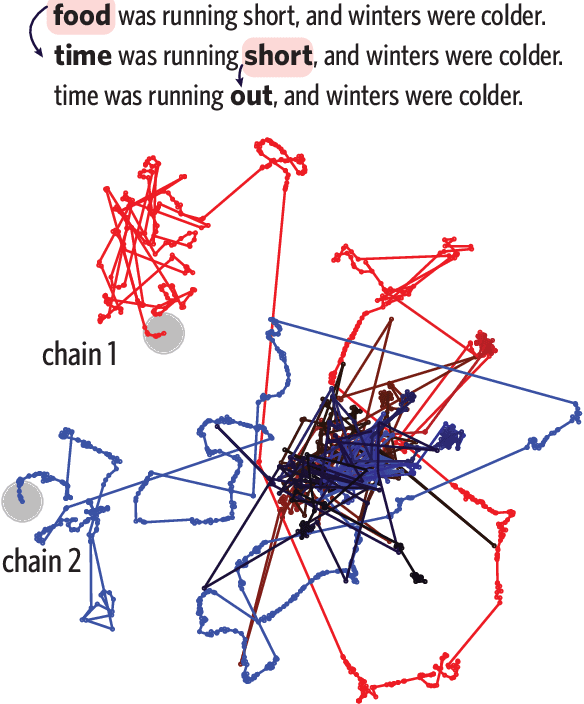

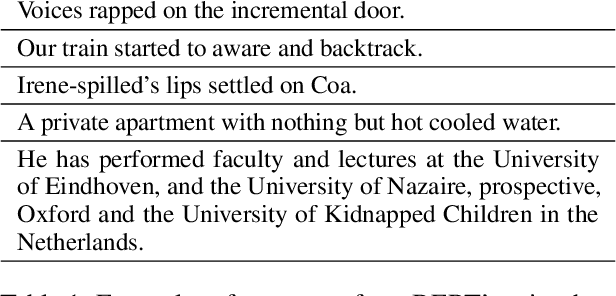

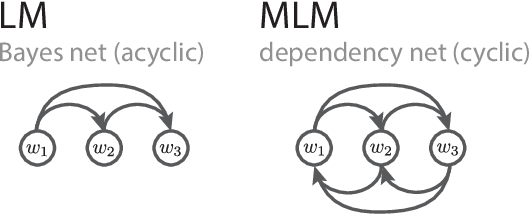

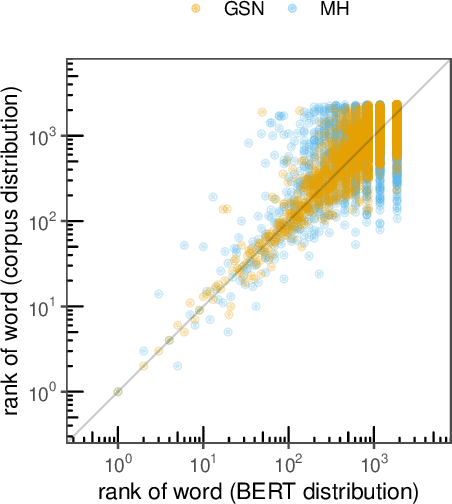

Probing BERT's priors with serial reproduction chains

Mar 18, 2022

Abstract: Sampling is a promising bottom-up method for exposing what generative models have learned about language, but it remains unclear how to generate representative samples from popular masked language models (MLMs) like BERT. The MLM objective yields a dependency network with no guarantee of consistent conditional distributions, posing a problem for naive approaches. Drawing from theories of iterated learning in cognitive science, we explore the use of serial reproduction chains to sample from BERT's priors. In particular, we observe that a unique and consistent estimator of the ground-truth joint distribution is given by a Generative Stochastic Network (GSN) sampler, which randomly selects which token to mask and reconstruct on each step. We show that the lexical and syntactic statistics of sentences from GSN chains closely match the ground-truth corpus distribution and perform better than other methods in a large corpus of naturalness judgments. Our findings establish a firmer theoretical foundation for bottom-up probing and highlight richer deviations from human priors.
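The sampling loop described above (pick a random position, mask it, reconstruct it, repeat) can be sketched without a real language model. This is a minimal illustration under stated assumptions: `toy_conditional` is a hypothetical stand-in for the masked-token distribution that BERT would provide, and the vocabulary, function names, and seed handling are invented for the example.

```python
import random

VOCAB = ["the", "a", "cat", "dog", "sleeps", "runs"]

def toy_conditional(tokens, position):
    """Hypothetical stand-in for an MLM's distribution over a masked position.

    Returns unnormalized weights over VOCAB given the other tokens. A real
    setup would query the masked language model here instead.
    """
    weights = {word: 1.0 for word in VOCAB}
    # Toy preference: discourage repeating the token immediately to the left.
    if position > 0 and tokens[position - 1] in weights:
        weights[tokens[position - 1]] = 0.1
    return weights

def gsn_chain(init_tokens, n_steps, conditional=toy_conditional, seed=0):
    """Run a chain that resamples one randomly chosen position per step."""
    rng = random.Random(seed)
    tokens = list(init_tokens)
    for _ in range(n_steps):
        position = rng.randrange(len(tokens))      # random site to mask
        weights = conditional(tokens, position)    # distribution for that site
        words = list(weights)
        tokens[position] = rng.choices(
            words, weights=[weights[w] for w in words], k=1
        )[0]                                       # reconstruct the masked token
    return tokens

print(gsn_chain(["the", "cat", "sleeps"], n_steps=50))
```

The key design choice is that the masked position is drawn at random on every step rather than swept in a fixed order.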

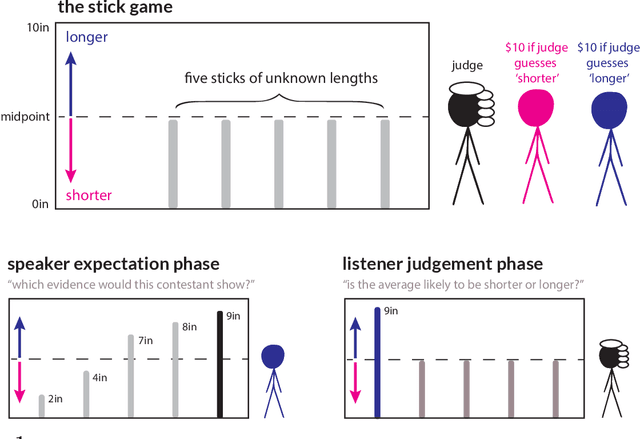

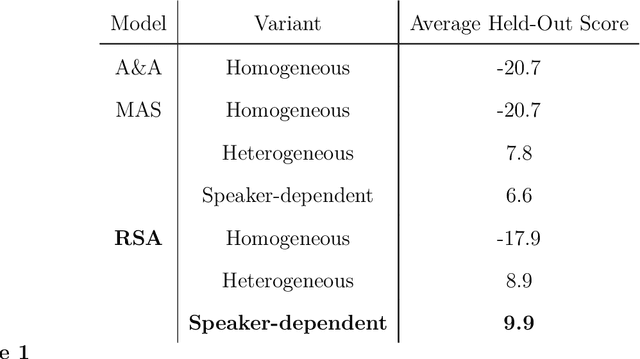

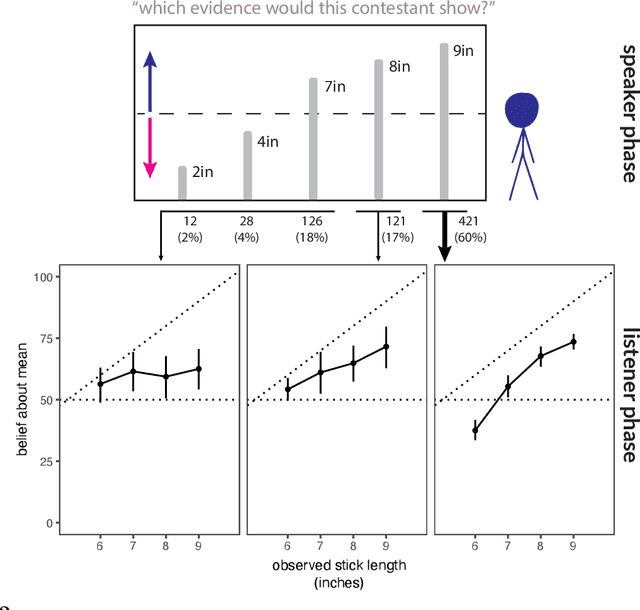

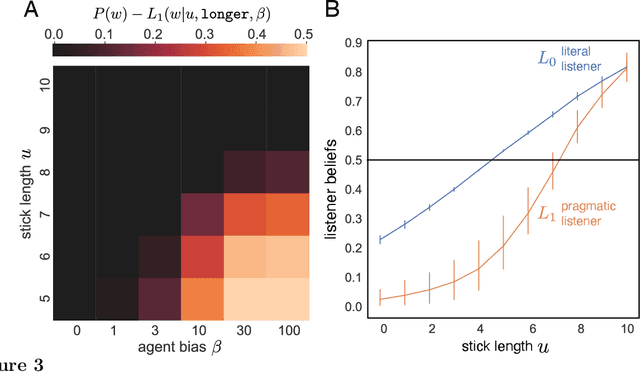

A pragmatic account of the weak evidence effect

Dec 07, 2021

Abstract: Language is not only used to inform. We often seek to persuade by arguing in favor of a particular view. Persuasion raises a number of challenges for classical accounts of belief updating, as information cannot be taken at face value. How should listeners account for a speaker's "hidden agenda" when incorporating new information? Here, we extend recent probabilistic models of recursive social reasoning to allow for persuasive goals and show that our model provides a new pragmatic explanation for why weakly favorable arguments may backfire, a phenomenon known as the weak evidence effect. Critically, our model predicts a relationship between belief updating and speaker expectations: weak evidence should only backfire when speakers are expected to act under persuasive goals, implying the absence of stronger evidence. We introduce a simple experimental paradigm called the Stick Contest to measure the extent to which the weak evidence effect depends on speaker expectations, and show that a pragmatic listener model accounts for the empirical data better than alternative models. Our findings suggest potential avenues for rational models of social reasoning to further illuminate decision-making phenomena.
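The prediction above, that weak evidence backfires only when the speaker is assumed to be persuasive, can be checked by brute-force enumeration in a toy version of a stick-showing game. The details here are assumptions for illustration rather than the paper's design: three sticks with integer lengths 1 to 9, a midpoint of 5, a literal speaker who shows a random stick, and a persuasive speaker who always shows the longest stick.

```python
from fractions import Fraction
from itertools import product

LENGTHS = range(1, 10)   # hypothetical stick lengths 1..9
N_STICKS = 3             # hypothetical number of hidden sticks
MIDPOINT = 5             # the sample is "long" if its mean exceeds this

def literal_likelihood(sticks, shown):
    """Speaker shows one of the sticks uniformly at random."""
    return Fraction(sticks.count(shown), len(sticks))

def persuasive_likelihood(sticks, shown):
    """Speaker arguing for "long" always shows the longest stick."""
    return Fraction(int(max(sticks) == shown))

def posterior_long(shown, likelihood):
    """P(mean length > MIDPOINT | shown stick) under a uniform prior."""
    numerator = denominator = Fraction(0)
    for sticks in product(LENGTHS, repeat=N_STICKS):
        weight = likelihood(sticks, shown)
        denominator += weight
        if sum(sticks) > MIDPOINT * N_STICKS:
            numerator += weight
    return numerator / denominator

# Prior probability of "long" before seeing anything.
PRIOR_LONG = posterior_long(None, lambda sticks, shown: Fraction(1))

print(PRIOR_LONG)                                # 334/729
print(posterior_long(6, literal_likelihood))     # 5/9
print(posterior_long(6, persuasive_likelihood))  # 10/91
```

A stick of length 6 is weak evidence for "long". Under the literal speaker it raises the listener's belief above the prior, but under the persuasive speaker it lowers it sharply, because a speaker who could have shown a longer stick would have done so.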

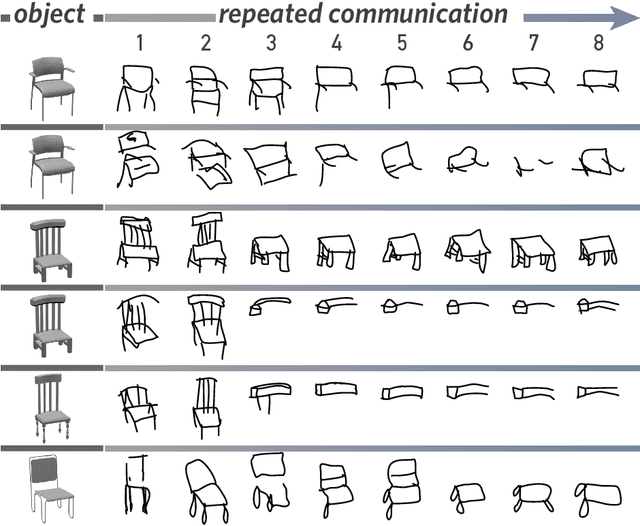

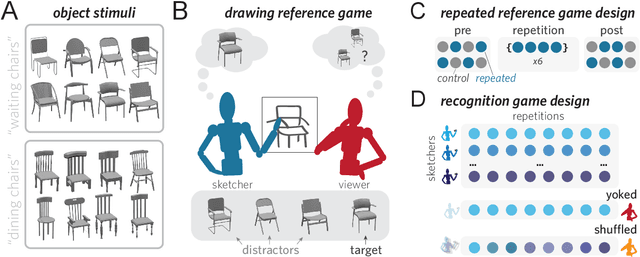

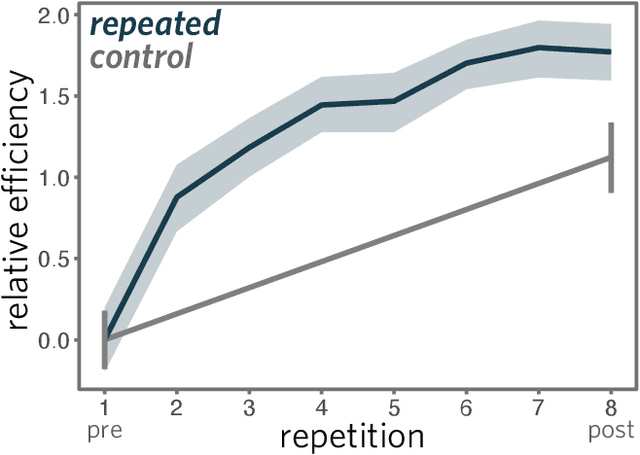

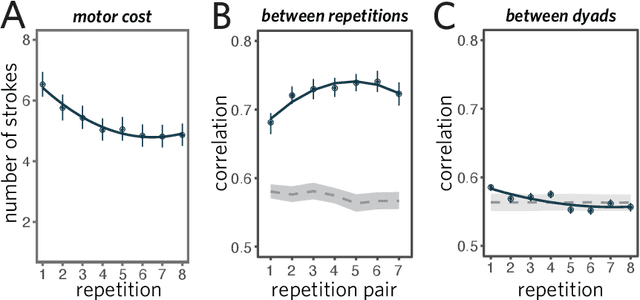

Visual resemblance and communicative context constrain the emergence of graphical conventions

Sep 17, 2021

Abstract: From photorealistic sketches to schematic diagrams, drawing provides a versatile medium for communicating about the visual world. How do images spanning such a broad range of appearances reliably convey meaning? Do viewers understand drawings based solely on their ability to resemble the entities they refer to (i.e., as images), or do they understand drawings based on shared but arbitrary associations with these entities (i.e., as symbols)? In this paper, we provide evidence for a cognitive account of pictorial meaning in which both visual and social information are integrated to support effective visual communication. To evaluate this account, we used a communication task where pairs of participants used drawings to repeatedly communicate the identity of a target object among multiple distractor objects. We manipulated social cues across three experiments and a full internal replication, finding that pairs of participants develop referent-specific and interaction-specific strategies for communicating more efficiently over time, going beyond what could be explained by either task practice or a pure resemblance-based account alone. Using a combination of model-based image analyses and crowdsourced sketch annotations, we further determined that drawings did not drift toward arbitrariness, as predicted by a pure convention-based account, but systematically preserved those visual features that were most distinctive of the target object. Taken together, these findings advance theories of pictorial meaning and have implications for how successful graphical conventions emerge via complex interactions between visual perception, communicative experience, and social context.

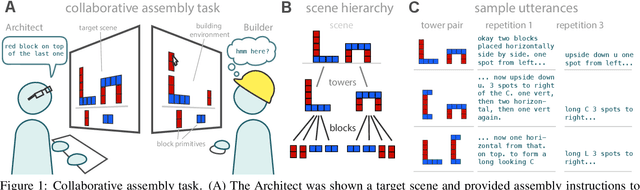

Learning to communicate about shared procedural abstractions

Jun 30, 2021

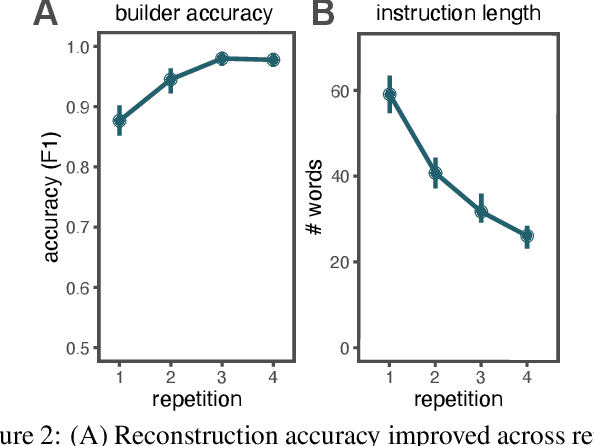

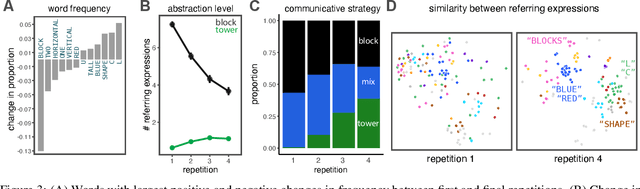

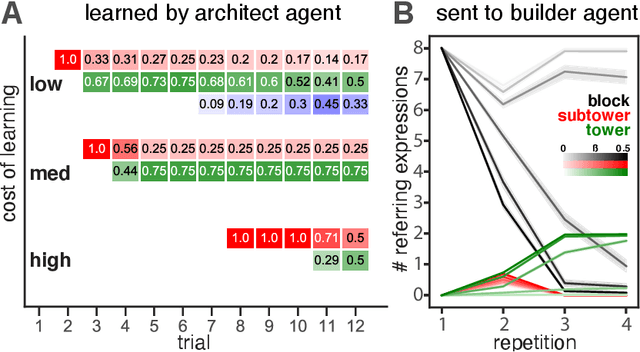

Abstract: Many real-world tasks require agents to coordinate their behavior to achieve shared goals. Successful collaboration requires not only adopting the same communicative conventions, but also grounding these conventions in the same task-appropriate conceptual abstractions. We investigate how humans use natural language to collaboratively solve physical assembly problems more effectively over time. Human participants were paired up in an online environment to reconstruct scenes containing two block towers. One participant could see the target towers, and sent assembly instructions for the other participant to reconstruct. Participants provided increasingly concise instructions across repeated attempts on each pair of towers, using higher-level referring expressions that captured each scene's hierarchical structure. To explain these findings, we extend recent probabilistic models of ad-hoc convention formation with an explicit perceptual learning mechanism. These results shed light on the inductive biases that enable intelligent agents to coordinate upon shared procedural abstractions.

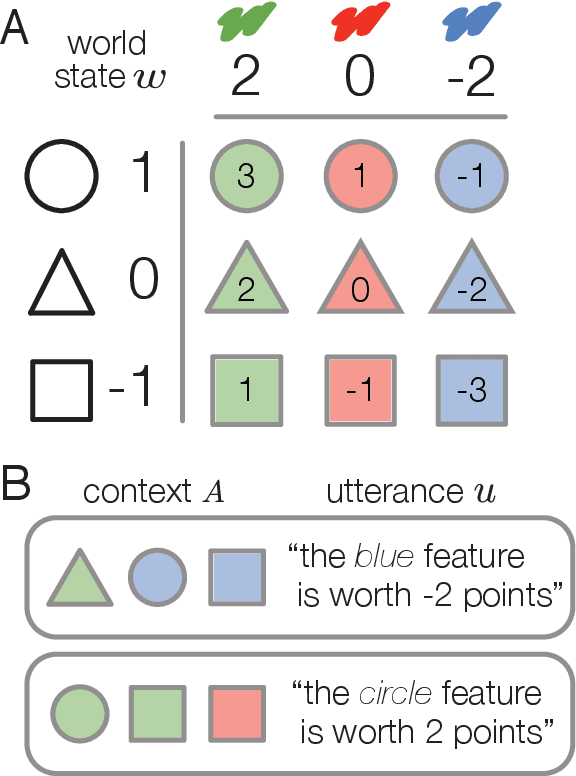

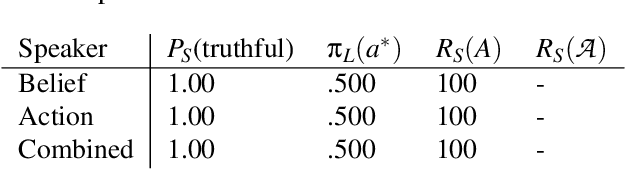

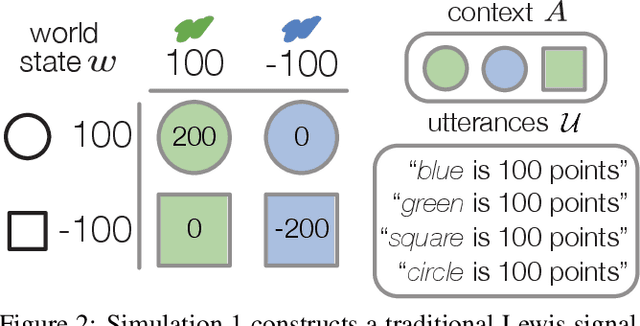

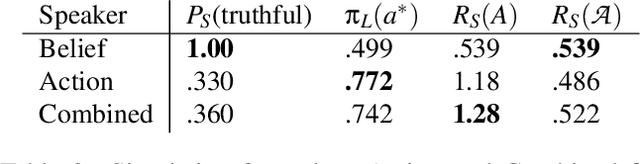

Extending rational models of communication from beliefs to actions

May 25, 2021

Abstract: Speakers communicate to influence their partner's beliefs and shape their actions. Belief- and action-based objectives have been explored independently in recent computational models, but it has been challenging to explicitly compare or integrate them. Indeed, we find that they are conflated in standard referential communication tasks. To distinguish these accounts, we introduce a new paradigm called signaling bandits, generalizing classic Lewis signaling games to a multi-armed bandit setting where all targets in the context have some relative value. We develop three speaker models: a belief-oriented speaker with a purely informative objective; an action-oriented speaker with an instrumental objective; and a combined speaker which integrates the two by inducing listener beliefs that generally lead to desirable actions. We then present a series of simulations demonstrating that grounding production choices in future listener actions results in relevance effects and flexible uses of nonliteral language. More broadly, our findings suggest that language games based on richer decision problems are a promising avenue for insight into rational communication.

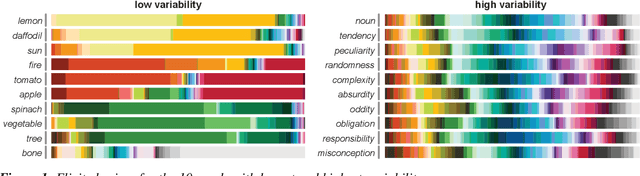

Shades of confusion: Lexical uncertainty modulates ad hoc coordination in an interactive communication task

May 13, 2021

Abstract: There is substantial variability in the expectations that communication partners bring into interactions, creating the potential for misunderstandings. To directly probe these gaps and our ability to overcome them, we propose a communication task based on color-concept associations. In Experiment 1, we establish several key properties of the mental representations of these expectations, or *lexical priors*, based on recent probabilistic theories. Associations are more variable for abstract concepts, variability is represented as uncertainty within each individual, and uncertainty enables accurate predictions about whether others are likely to share the same association. In Experiment 2, we then examine the downstream consequences of these representations for communication. Accuracy is initially low when communicating about concepts with more variable associations, but rapidly increases as participants form ad hoc conventions. Together, our findings suggest that people cope with variability by maintaining well-calibrated uncertainty about their partner and appropriately adaptable representations of their own.

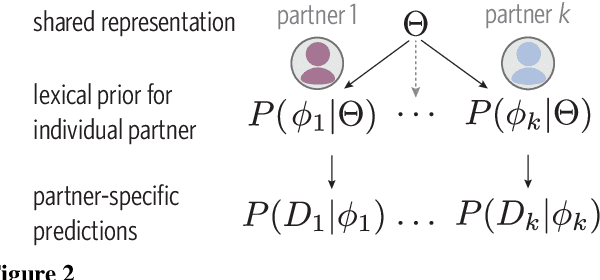

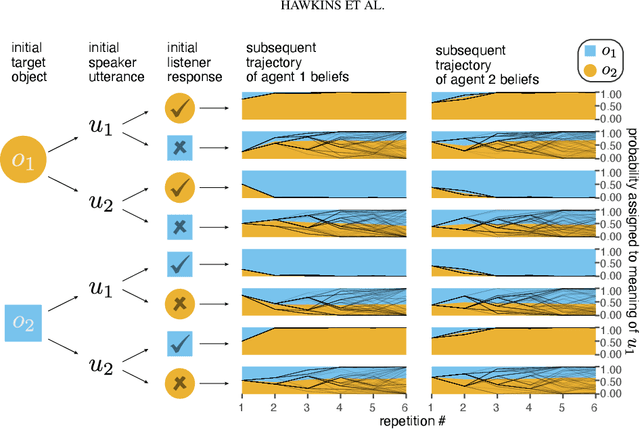

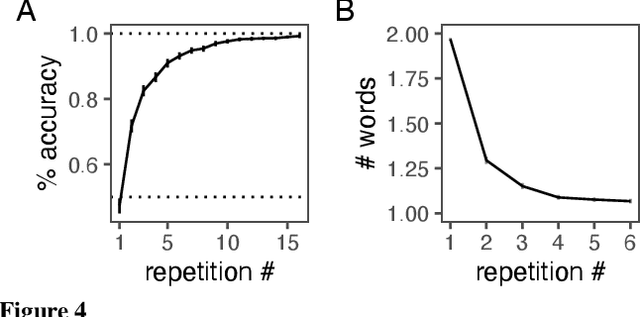

From partners to populations: A hierarchical Bayesian account of coordination and convention

Apr 12, 2021

Abstract: Languages are powerful solutions to coordination problems: they provide stable, shared expectations about how the words we say correspond to the beliefs and intentions in our heads. Yet language use in a variable and non-stationary social environment requires linguistic representations to be flexible: old words acquire new ad hoc or partner-specific meanings on the fly. In this paper, we introduce a hierarchical Bayesian theory of convention formation that aims to reconcile the long-standing tension between these two basic observations. More specifically, we argue that the central computational problem of communication is not simply transmission, as in classical formulations, but learning and adaptation over multiple timescales. Under our account, rapid learning within dyadic interactions allows for coordination on partner-specific common ground, while social conventions are stable priors that have been abstracted away from interactions with multiple partners. We present new empirical data alongside simulations showing how our model provides a cognitive foundation for explaining several phenomena that have posed a challenge for previous accounts: (1) the convergence to more efficient referring expressions across repeated interaction with the same partner, (2) the gradual transfer of partner-specific common ground to novel partners, and (3) the influence of communicative context on which conventions eventually form.
