Kilian M. Pohl

Stanford University

GeoSAE: Geometric Prior-Guided Layer-Wise Sparse Autoencoder Annotation of Brain MRI Foundation Models

May 03, 2026

Abstract:Brain MRI foundation models learn rich representations of anatomy, but interpreting what clinical information they encode remains an open problem. Standard sparse autoencoders (SAEs) suffer from severe feature collapse in deep transformer layers, and in Alzheimer's disease (AD) research, aging confounds nearly every clinical variable, making naive annotation unreliable. We propose GeoSAE, a geometry-guided SAE framework that uses the foundation model's learned manifold structure to prevent feature collapse and annotates each surviving feature via age-deconfounded partial correlations. Applied to ~14k T1-weighted MRI scans from the Alzheimer's Disease Neuroimaging Initiative (ADNI) and the Australian Imaging biomarkers and Lifestyle (AIBL) datasets, GeoSAE identifies a compact, fully interpretable feature set that predicts mild cognitive impairment (MCI)-to-AD conversion (AUC 0.746) using only 2% of the embedding dimensions, while comorbidity-annotated features achieve only chance-level performance. The identified features replicate across cohorts without retraining (r=0.97) and localize to neuroanatomically distinct regions consistent with Braak staging. This shows that geometry-guided SAEs can extract interpretable biomarkers from frozen brain MRI foundation models.
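
The age-deconfounded partial correlation mentioned in this abstract can be illustrated with a minimal sketch. It assumes a purely linear age effect that is regressed out of both the feature activation and the clinical variable before correlating the residuals. The function name `partial_corr` and the synthetic data are hypothetical and are not taken from GeoSAE.

```python
import numpy as np

def partial_corr(x, y, covariate):
    """Pearson correlation of x and y after linearly regressing out covariate."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    c = np.asarray(covariate, dtype=float)
    # Design matrix with an intercept and the confound (here: age).
    design = np.column_stack([np.ones_like(c), c])
    res_x = x - design @ np.linalg.lstsq(design, x, rcond=None)[0]
    res_y = y - design @ np.linalg.lstsq(design, y, rcond=None)[0]
    return float(np.corrcoef(res_x, res_y)[0, 1])

# Synthetic example (assumption): feature and score are related only through age.
rng = np.random.default_rng(0)
age = rng.uniform(55, 90, size=2000)
feature = 0.1 * age + rng.normal(size=2000)
score = 0.1 * age + rng.normal(size=2000)

raw_r = float(np.corrcoef(feature, score)[0, 1])
partial_r = partial_corr(feature, score, age)
print(f"raw r = {raw_r:.3f}, age-deconfounded r = {partial_r:.3f}")
```

In this toy setting the raw correlation is driven entirely by age, so the partial correlation drops to near zero, which is the failure mode of naive annotation that the abstract describes.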

Modality-Aware and Anatomical Vector-Quantized Autoencoding for Multimodal Brain MRI

Apr 06, 2026

Abstract:Learning a robust Variational Autoencoder (VAE) is a fundamental step for many deep learning applications in medical image analysis, such as MRI synthesis. Existing brain VAEs predominantly focus on single-modality data (i.e., T1-weighted MRI), overlooking the complementary diagnostic value of other modalities like T2-weighted MRIs. Here, we propose a modality-aware and anatomically grounded 3D vector-quantized VAE (VQ-VAE) for reconstructing multi-modal brain MRIs. Called NeuroQuant, it first learns a shared latent representation across modalities using factorized multi-axis attention, which can capture relationships between distant brain regions. It then employs a dual-stream 3D encoder that explicitly separates the encoding of modality-invariant anatomical structures from modality-dependent appearance. Next, the anatomical encoding is discretized using a shared codebook and combined with modality-specific appearance features via Feature-wise Linear Modulation (FiLM) during the decoding phase. The entire approach is trained using a joint 2D/3D strategy to account for the slice-based acquisition of 3D MRI data. Extensive experiments on two multi-modal brain MRI datasets demonstrate that NeuroQuant achieves superior reconstruction fidelity compared to existing VAEs, enabling a scalable foundation for downstream generative modeling and cross-modal brain image analysis.
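
The two generic building blocks named in this abstract, nearest-neighbor codebook quantization and FiLM, can be sketched as follows. This is only an illustration of the standard operations, not NeuroQuant's implementation. The helpers `quantize` and `film`, the tensor shapes, and the toy values are assumptions.

```python
import numpy as np

def quantize(z, codebook):
    """Replace each row of z (N, D) with its nearest codebook row (K, D)."""
    d2 = ((z[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    idx = d2.argmin(axis=1)
    return codebook[idx], idx

def film(features, gamma, beta):
    """FiLM: per-channel scale and shift of a (C, D, H, W) feature volume."""
    gamma = np.asarray(gamma, dtype=float).reshape(-1, 1, 1, 1)
    beta = np.asarray(beta, dtype=float).reshape(-1, 1, 1, 1)
    return gamma * features + beta

# Toy anatomical codes snapped to a shared 2-entry codebook.
codebook = np.array([[0.0, 0.0], [1.0, 1.0]])
z = np.array([[0.1, -0.1], [0.9, 1.2]])
z_q, idx = quantize(z, codebook)

# Toy 2-channel volume modulated by modality-specific (gamma, beta),
# which in practice would be predicted from the appearance stream.
anat = np.ones((2, 3, 3, 3))
out = film(anat, gamma=[2.0, 0.5], beta=[1.0, -1.0])
```

The design point is that the quantized anatomical code is shared across modalities, while only the cheap per-channel `gamma` and `beta` change with the modality.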

A Generative Foundation Model for Multimodal Histopathology

Apr 04, 2026

Abstract:Accurate diagnosis and treatment of complex diseases require integrating histological, molecular, and clinical data, yet in practice these modalities are often incomplete owing to tissue scarcity, assay cost, and workflow constraints. Existing computational approaches attempt to impute missing modalities from available data but rely on task-specific models trained on narrow, single source-target pairs, limiting their generalizability. Here we introduce MuPD (Multimodal Pathology Diffusion), a generative foundation model that embeds hematoxylin and eosin (H&E)-stained histology, molecular RNA profiles, and clinical text into a shared latent space through a diffusion transformer with decoupled cross-modal attention. Pretrained on 100 million histology image patches, 1.6 million text-histology pairs, and 10.8 million RNA-histology pairs spanning 34 human organs, MuPD supports diverse cross-modal synthesis tasks with minimal or no task-specific fine-tuning. For text-conditioned and image-to-image generation, MuPD synthesizes histologically faithful tissue architectures, reducing Fréchet inception distance (FID) scores by 50% relative to domain-specific models and improving few-shot classification accuracy by up to 47% through synthetic data augmentation. For RNA-conditioned histology generation, MuPD reduces FID by 23% compared with the next-best method while preserving cell-type distributions across five cancer types. As a virtual stainer, MuPD translates H&E images to immunohistochemistry and multiplex immunofluorescence, improving average marker correlation by 37% over existing approaches. These results demonstrate that a single, unified generative model pretrained across heterogeneous pathology modalities can substantially outperform specialized alternatives, providing a scalable computational framework for multimodal histopathology.

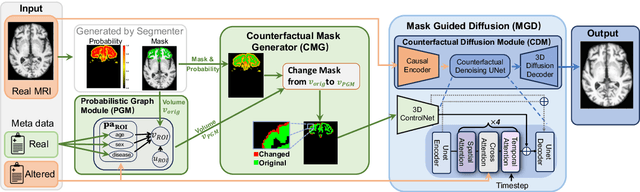

Integrating Anatomical Priors into a Causal Diffusion Model

Sep 10, 2025

Abstract:3D brain MRI studies often examine subtle morphometric differences between cohorts that are hard to detect visually. Given the high cost of MRI acquisition, these studies could greatly benefit from image synthesis, particularly counterfactual image generation, as seen in other domains, such as computer vision. However, counterfactual models struggle to produce anatomically plausible MRIs due to the lack of explicit inductive biases to preserve fine-grained anatomical details. This shortcoming arises because the models are trained to optimize the overall appearance of the images (e.g., via cross-entropy) rather than to preserve subtle, yet medically relevant, local variations across subjects. To preserve subtle variations, we propose to explicitly integrate voxel-level anatomical constraints as a prior into a generative diffusion framework. Called Probabilistic Causal Graph Model (PCGM), the approach captures anatomical constraints via a probabilistic graph module and translates those constraints into spatial binary masks of regions where subtle variations occur. The masks (encoded by a 3D extension of ControlNet) constrain a novel counterfactual denoising UNet, whose encodings are then transferred into high-quality brain MRIs via our 3D diffusion decoder. Extensive experiments on multiple datasets demonstrate that PCGM generates structural brain MRIs of higher quality than several baseline approaches. Furthermore, we show for the first time that brain measurements extracted from counterfactuals (generated by PCGM) replicate the subtle effects of a disease on cortical brain regions previously reported in the neuroscience literature. This achievement is an important milestone in the use of synthetic MRIs in studies investigating subtle morphological differences.

Brain-Cognition Fingerprinting via Graph-GCCA with Contrastive Learning

Sep 20, 2024

Abstract:Many longitudinal neuroimaging studies aim to improve the understanding of brain aging and diseases by studying the dynamic interactions between brain function and cognition. Doing so requires accurate encoding of their multidimensional relationship while accounting for individual variability over time. For this purpose, we propose an unsupervised learning model, called Contrastive Learning-based Graph Generalized Canonical Correlation Analysis (CoGraCa), that encodes their relationship via Graph Attention Networks and Generalized Canonical Correlation Analysis. To create brain-cognition fingerprints reflecting the unique neural and cognitive phenotype of each person, the model also relies on individualized and multimodal contrastive learning. We apply CoGraCa to a longitudinal dataset of healthy individuals consisting of resting-state functional MRI and cognitive measures acquired at multiple visits for each participant. The generated fingerprints effectively capture significant individual differences and outperform current single-modal and CCA-based multimodal models in identifying sex and age. More importantly, our encoding provides interpretable interactions between those two modalities.

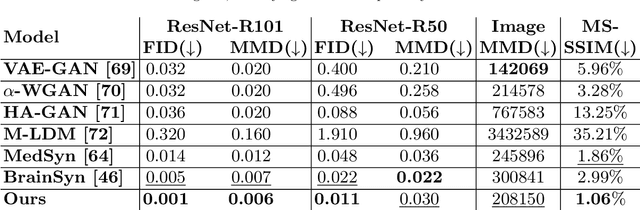

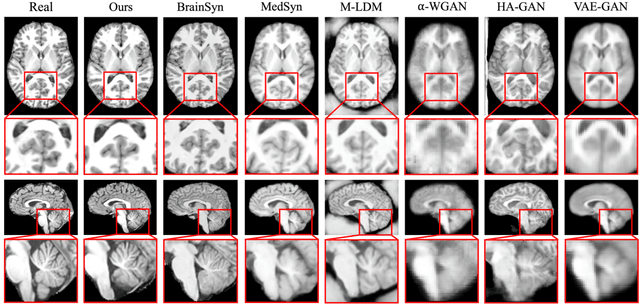

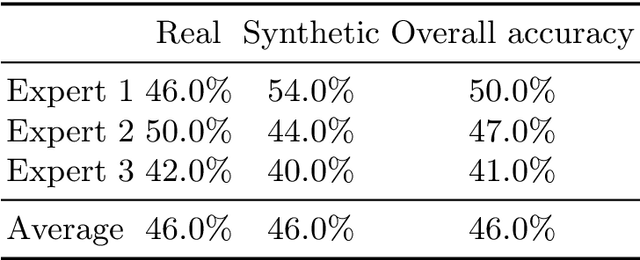

Evaluating the Quality of Brain MRI Generators

Sep 13, 2024

Abstract:Deep learning models generating structural brain MRIs have the potential to significantly accelerate discovery in neuroscience studies. However, their use has been limited in part by the way their quality is evaluated. Most evaluations of generative models focus on metrics originally designed for natural images (such as structural similarity index and Fréchet inception distance). As we show in a comparison of 6 state-of-the-art generative models trained and tested on over 3000 MRIs, these metrics are sensitive to the experimental setup and inadequately assess how well brain MRIs capture macrostructural properties of brain regions (i.e., anatomical plausibility). This shortcoming of the metrics results in inconclusive findings even when qualitative differences between the outputs of models are evident. We therefore propose a framework for evaluating models generating brain MRIs, which requires uniform processing of the real MRIs, standardizing the implementation of the models, and automatically segmenting the MRIs generated by the models. The segmentations are used for quantifying the plausibility of anatomy displayed in the MRIs. To ensure meaningful quantification, it is crucial that the segmentations are highly reliable. Our framework rigorously checks this reliability, a step often overlooked by prior work. Only 3 of the 6 generative models produced MRIs of which at least 95% had highly reliable segmentations. More importantly, the assessment of each model by our framework is in line with qualitative assessments, reinforcing the validity of our approach.
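
For context on the Fréchet inception distance named in this abstract, the following sketch computes the Fréchet distance between two Gaussians fitted to feature vectors, $\lVert \mu_1 - \mu_2 \rVert^2 + \mathrm{Tr}(\Sigma_1 + \Sigma_2 - 2(\Sigma_1 \Sigma_2)^{1/2})$. In practice the features come from a pretrained Inception network. Here they are plain arrays, and the function names are hypothetical.

```python
import numpy as np

def frechet_distance(mu1, cov1, mu2, cov2):
    """Fréchet distance between N(mu1, cov1) and N(mu2, cov2)."""
    mu1, mu2 = np.asarray(mu1, dtype=float), np.asarray(mu2, dtype=float)
    cov1, cov2 = np.asarray(cov1, dtype=float), np.asarray(cov2, dtype=float)
    mean_term = float(np.sum((mu1 - mu2) ** 2))
    # Tr((cov1 cov2)^(1/2)) is the sum of square roots of the eigenvalues
    # of cov1 @ cov2; clip tiny negative values caused by round-off.
    eigvals = np.linalg.eigvals(cov1 @ cov2)
    trace_sqrt = float(np.sqrt(np.clip(eigvals.real, 0.0, None)).sum())
    return mean_term + float(np.trace(cov1) + np.trace(cov2)) - 2.0 * trace_sqrt

def fid_from_features(feats_a, feats_b):
    """Fit a Gaussian to each (N, D) feature set and compare them."""
    return frechet_distance(
        feats_a.mean(axis=0), np.cov(feats_a, rowvar=False),
        feats_b.mean(axis=0), np.cov(feats_b, rowvar=False),
    )

eye = np.eye(2)
d_shift = frechet_distance([0.0, 0.0], eye, [3.0, 4.0], eye)       # mean shift only
d_scale = frechet_distance([0.0, 0.0], eye, [0.0, 0.0], 4.0 * eye)  # covariance change only
```

Because the score depends only on the first two moments of a feature distribution, it says nothing directly about whether individual brain regions are anatomically plausible, which is the gap this abstract points to.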

Latent 3D Brain MRI Counterfactual

Sep 09, 2024

Abstract:The number of samples in structural brain MRI studies is often too small to properly train deep learning models. Generative models show promise in addressing this issue by effectively learning the data distribution and generating high-fidelity MRIs. However, they struggle to produce diverse, high-quality data outside the distribution defined by the training data. One way to address the issue is using causal models developed for 3D volume counterfactuals. However, accurately modeling causality in high-dimensional spaces is challenging, so these models generally generate 3D brain MRIs of lower quality. To address these challenges, we propose a two-stage method that constructs a Structural Causal Model (SCM) within the latent space. In the first stage, we employ a VQ-VAE to learn a compact embedding of the MRI volume. Subsequently, we integrate our causal model into this latent space and execute a three-step counterfactual procedure using a closed-form Generalized Linear Model (GLM). Our experiments conducted on real-world high-resolution MRI data (1mm) demonstrate that our method can generate high-quality 3D MRI counterfactuals.
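
A three-step counterfactual procedure in a latent space can be sketched with the standard abduction, action, and prediction steps. The sketch assumes a linear model with additive noise fitted by least squares, which is a simplification and not necessarily the GLM used in the paper. All names (`fit_linear_scm`, `counterfactual`) and the synthetic latents are hypothetical.

```python
import numpy as np

def fit_linear_scm(attrs, latents):
    """Closed-form least-squares fit of latents ~ intercept + attrs."""
    design = np.column_stack([np.ones(len(attrs)), attrs])
    weights, *_ = np.linalg.lstsq(design, latents, rcond=None)
    return weights  # shape (1 + n_attrs, latent_dim)

def counterfactual(weights, attrs, latents, new_attrs):
    design = np.column_stack([np.ones(len(attrs)), attrs])
    new_design = np.column_stack([np.ones(len(new_attrs)), new_attrs])
    # 1) Abduction: recover the subject-specific noise from the observation.
    noise = latents - design @ weights
    # 2) Action: replace the attributes with the intervened values.
    # 3) Prediction: push the same noise through the model.
    return new_design @ weights + noise

# Synthetic stand-in for VQ-VAE latents driven by two attributes.
rng = np.random.default_rng(0)
attrs = rng.normal(size=(200, 2))
latents = attrs @ rng.normal(size=(2, 4)) + 0.1 * rng.normal(size=(200, 4))

weights = fit_linear_scm(attrs, latents)
new_attrs = attrs.copy()
new_attrs[:, 0] += 1.0  # intervene: increase the first attribute by one unit
cf_latents = counterfactual(weights, attrs, latents, new_attrs)
```

Under this linear assumption every subject's latent moves by the same fitted effect vector while keeping its individual residual. A decoder would then map `cf_latents` back to image space.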

Few Shot Part Segmentation Reveals Compositional Logic for Industrial Anomaly Detection

Dec 21, 2023

Abstract:Logical anomalies (LA) refer to data violating underlying logical constraints, e.g., the quantity, arrangement, or composition of components within an image. Accurately detecting such anomalies requires models to reason about various component types through segmentation. However, curation of pixel-level annotations for semantic segmentation is both time-consuming and expensive. Although there are some prior few-shot or unsupervised co-part segmentation algorithms, they often fail on images of industrial objects. These images have components with similar textures and shapes, which makes precise differentiation challenging. In this study, we introduce a novel component segmentation model for LA detection that leverages a few labeled samples and unlabeled images sharing logical constraints. To ensure consistent segmentation across unlabeled images, we employ a histogram matching loss in conjunction with an entropy loss. As segmentation predictions play a crucial role, we propose to enhance both local and global sample validity detection by capturing key aspects from visual semantics via three memory banks: class histograms, component composition embeddings and patch-level representations. For effective LA detection, we propose an adaptive scaling strategy to standardize anomaly scores from different memory banks at inference. Extensive experiments on the public benchmark MVTec LOCO AD reveal our method achieves 98.1% AUROC in LA detection vs. 89.6% from competing methods.
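
Why scores from different memory banks need standardizing before they are combined can be shown with a small sketch. It assumes a plain z-score against scores from normal reference images, followed by a sum. The paper's adaptive scaling may differ, and the bank names and numbers below are made up.

```python
import numpy as np

def standardize(scores, reference):
    """Z-score scores using the mean and std of normal reference scores."""
    ref = np.asarray(reference, dtype=float)
    return (np.asarray(scores, dtype=float) - ref.mean()) / (ref.std() + 1e-8)

def fuse(test_scores, reference_scores):
    """Sum the standardized scores of every memory bank."""
    return sum(
        standardize(test_scores[name], reference_scores[name])
        for name in test_scores
    )

# Two banks whose raw distances live on very different scales.
reference_scores = {"histogram": [0.1, 0.2, 0.3], "patch": [10.0, 20.0, 30.0]}
test_scores = {"histogram": [0.2, 0.5], "patch": [20.0, 50.0]}

fused = fuse(test_scores, reference_scores)
```

Without standardization the `patch` bank would dominate the sum simply because its raw distances are about 100 times larger. After scaling, both banks contribute equally in this example.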

Metadata-Conditioned Generative Models to Synthesize Anatomically-Plausible 3D Brain MRIs

Oct 07, 2023

Abstract:Generative AI models hold great potential in creating synthetic brain MRIs that advance neuroimaging studies by, for example, enriching data diversity. However, the mainstay of AI research only focuses on optimizing the visual quality (such as signal-to-noise ratio) of the synthetic MRIs while lacking insights into their relevance to neuroscience. To gain these insights with respect to T1-weighted MRIs, we first propose a new generative model, BrainSynth, to synthesize metadata-conditioned (e.g., age- and sex-specific) MRIs that achieve state-of-the-art visual quality. We then extend our evaluation with a novel procedure to quantify anatomical plausibility, i.e., how well the synthetic MRIs capture macrostructural properties of brain regions, and how accurately they encode the effects of age and sex. Results indicate that more than half of the brain regions in our synthetic MRIs are anatomically accurate, i.e., with a small effect size between real and synthetic MRIs. Moreover, the anatomical plausibility varies across cortical regions according to their geometric complexity. As is, our synthetic MRIs can significantly improve the training of a Convolutional Neural Network to identify accelerated aging effects in an independent study. These results highlight the opportunities of using generative AI to aid neuroimaging research and point to areas for further improvement.
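
The per-region comparison of real and synthetic MRIs by effect size can be illustrated with Cohen's d. Both the choice of Cohen's d and the conventional cutoff of 0.2 for a small effect are assumptions of this sketch rather than details stated in the abstract, and the regional volumes are synthetic.

```python
import numpy as np

def cohens_d(a, b):
    """Cohen's d with pooled standard deviation."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    pooled_var = (
        (len(a) - 1) * a.var(ddof=1) + (len(b) - 1) * b.var(ddof=1)
    ) / (len(a) + len(b) - 2)
    return float((a.mean() - b.mean()) / np.sqrt(pooled_var))

# Hypothetical volumes (in mm^3) of one region in real and synthetic scans.
rng = np.random.default_rng(0)
real_volumes = rng.normal(loc=4000.0, scale=300.0, size=400)
synthetic_volumes = rng.normal(loc=4000.0, scale=300.0, size=400)

d = cohens_d(real_volumes, synthetic_volumes)
is_small_effect = abs(d) < 0.2  # conventional threshold, assumed here
```

Repeating this test for every segmented region gives a region-wise map of where the synthetic anatomy deviates from the real data.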

LSOR: Longitudinally-Consistent Self-Organized Representation Learning

Sep 30, 2023

Abstract:Interpretability is a key issue when applying deep learning models to longitudinal brain MRIs. One way to address this issue is by visualizing the high-dimensional latent spaces generated by deep learning via self-organizing maps (SOM). SOM separates the latent space into clusters and then maps the cluster centers to a discrete (typically 2D) grid preserving the high-dimensional relationship between clusters. However, learning SOM in a high-dimensional latent space tends to be unstable, especially in a self-supervision setting. Furthermore, the learned SOM grid does not necessarily capture clinically interesting information, such as brain age. To resolve these issues, we propose the first self-supervised SOM approach that derives a high-dimensional, interpretable representation stratified by brain age solely based on longitudinal brain MRIs (i.e., without demographic or cognitive information). Called Longitudinally-consistent Self-Organized Representation learning (LSOR), the method is stable during training as it relies on soft clustering (vs. the hard cluster assignments used by existing SOM). Furthermore, our approach generates a latent space stratified according to brain age by aligning trajectories inferred from longitudinal MRIs to the reference vector associated with the corresponding SOM cluster. When applied to longitudinal MRIs of the Alzheimer's Disease Neuroimaging Initiative (ADNI, N=632), LSOR generates an interpretable latent space and achieves comparable or higher accuracy than the state-of-the-art representations with respect to the downstream tasks of classification (static vs. progressive mild cognitive impairment) and regression (determining ADAS-Cog score of all subjects). The code is available at https://github.com/ouyangjiahong/longitudinal-som-single-modality.
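
The contrast between hard and soft SOM cluster assignments can be sketched as below. The sketch assumes a softmax over negative squared distances to the SOM reference vectors with a temperature parameter. This particular form and the names used are illustrative and are not taken from the LSOR code.

```python
import numpy as np

def hard_assign(z, refs):
    """Index of the nearest SOM reference vector for each row of z."""
    d2 = ((z[:, None, :] - refs[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)

def soft_assign(z, refs, temperature=1.0):
    """Softmax over negative squared distances: each row sums to 1."""
    d2 = ((z[:, None, :] - refs[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2 / temperature
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    return weights / weights.sum(axis=1, keepdims=True)

# Three reference vectors (a flattened SOM grid) and two latent codes.
refs = np.array([[0.0, 0.0], [2.0, 2.0], [5.0, 5.0]])
z = np.array([[0.1, 0.0], [1.9, 2.2]])

hard = hard_assign(z, refs)
soft = soft_assign(z, refs)
```

Soft weights vary smoothly with `z`, so every reference vector receives a gradient signal, whereas a hard assignment updates only the winning cluster. That smoothness is one plausible reading of why soft clustering stabilizes training.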
