Hongyuan Su

AutoSOTA: An End-to-End Automated Research System for State-of-the-Art AI Model Discovery

Apr 07, 2026Abstract:Artificial intelligence research increasingly depends on prolonged cycles of reproduction, debugging, and iterative refinement to achieve State-Of-The-Art (SOTA) performance, creating a growing need for systems that can accelerate the full pipeline of empirical model optimization. In this work, we introduce AutoSOTA, an end-to-end automated research system that advances the latest SOTA models published in top-tier AI papers to reproducible and empirically improved new SOTA models. We formulate this problem through three tightly coupled stages: resource preparation and goal setting; experiment evaluation; and reflection and ideation. To tackle this problem, AutoSOTA adopts a multi-agent architecture with eight specialized agents that collaboratively ground papers to code and dependencies, initialize and repair execution environments, track long-horizon experiments, generate and schedule optimization ideas, and supervise validity to avoid spurious gains. We evaluate AutoSOTA on recent research papers collected from eight top-tier AI conferences under filters for code availability and execution cost. Across these papers, AutoSOTA achieves strong end-to-end performance in both automated replication and subsequent optimization. Specifically, it successfully discovers 105 new SOTA models that surpass the original reported methods, averaging approximately five hours per paper. Case studies spanning LLM, NLP, computer vision, time series, and optimization further show that the system can move beyond routine hyperparameter tuning to identify architectural innovation, algorithmic redesigns, and workflow-level improvements. These results suggest that end-to-end research automation can serve not only as a performance optimizer, but also as a new form of research infrastructure that reduces repetitive experimental burden and helps redirect human attention toward higher-level scientific creativity.

ContextEvolve: Multi-Agent Context Compression for Systems Code Optimization

Feb 01, 2026Abstract:Large language models are transforming systems research by automating the discovery of performance-critical algorithms for computer systems. Despite plausible codes generated by LLMs, producing solutions that meet the stringent correctness and performance requirements of systems demands iterative optimization. Test-time reinforcement learning offers high search efficiency but requires parameter updates infeasible under API-only access, while existing training-free evolutionary methods suffer from inefficient context utilization and undirected search. We introduce ContextEvolve, a multi-agent framework that achieves RL-level search efficiency under strict parameter-blind constraints by decomposing optimization context into three orthogonal dimensions: a Summarizer Agent condenses semantic state via code-to-language abstraction, a Navigator Agent distills optimization direction from trajectory analysis, and a Sampler Agent curates experience distribution through prioritized exemplar retrieval. This orchestration forms a functional isomorphism with RL-mapping to state representation, policy gradient, and experience replay-enabling principled optimization in a textual latent space. On the ADRS benchmark, ContextEvolve outperforms state-of-the-art baselines by 33.3% while reducing token consumption by 29.0%. Codes for our work are released at https://anonymous.4open.science/r/ContextEvolve-ACC

Understanding World or Predicting Future? A Comprehensive Survey of World Models

Nov 21, 2024

Abstract:The concept of world models has garnered significant attention due to advancements in multimodal large language models such as GPT-4 and video generation models such as Sora, which are central to the pursuit of artificial general intelligence. This survey offers a comprehensive review of the literature on world models. Generally, world models are regarded as tools for either understanding the present state of the world or predicting its future dynamics. This review presents a systematic categorization of world models, emphasizing two primary functions: (1) constructing internal representations to understand the mechanisms of the world, and (2) predicting future states to simulate and guide decision-making. Initially, we examine the current progress in these two categories. We then explore the application of world models in key domains, including autonomous driving, robotics, and social simulacra, with a focus on how each domain utilizes these aspects. Finally, we outline key challenges and provide insights into potential future research directions.

Large-scale Urban Facility Location Selection with Knowledge-informed Reinforcement Learning

Sep 03, 2024

Abstract:The facility location problem (FLP) is a classical combinatorial optimization challenge aimed at strategically laying out facilities to maximize their accessibility. In this paper, we propose a reinforcement learning method tailored to solve large-scale urban FLP, capable of producing near-optimal solutions at superfast inference speed. We distill the essential swap operation from local search, and simulate it by intelligently selecting edges on a graph of urban regions, guided by a knowledge-informed graph neural network, thus sidestepping the need for heavy computation of local search. Extensive experiments on four US cities with different geospatial conditions demonstrate that our approach can achieve comparable performance to commercial solvers with less than 5\% accessibility loss, while displaying up to 1000 times speedup. We deploy our model as an online geospatial application at https://huggingface.co/spaces/randommmm/MFLP.

Road Planning for Slums via Deep Reinforcement Learning

May 22, 2023Abstract:Millions of slum dwellers suffer from poor accessibility to urban services due to inadequate road infrastructure within slums, and road planning for slums is critical to the sustainable development of cities. Existing re-blocking or heuristic methods are either time-consuming which cannot generalize to different slums, or yield sub-optimal road plans in terms of accessibility and construction costs. In this paper, we present a deep reinforcement learning based approach to automatically layout roads for slums. We propose a generic graph model to capture the topological structure of a slum, and devise a novel graph neural network to select locations for the planned roads. Through masked policy optimization, our model can generate road plans that connect places in a slum at minimal construction costs. Extensive experiments on real-world slums in different countries verify the effectiveness of our model, which can significantly improve accessibility by 14.3% against existing baseline methods. Further investigations on transferring across different tasks demonstrate that our model can master road planning skills in simple scenarios and adapt them to much more complicated ones, indicating the potential of applying our model in real-world slum upgrading.

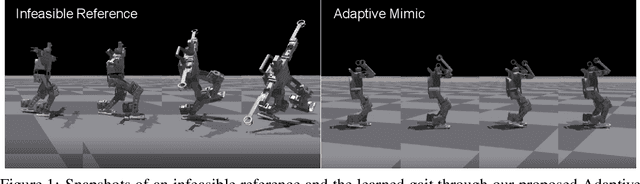

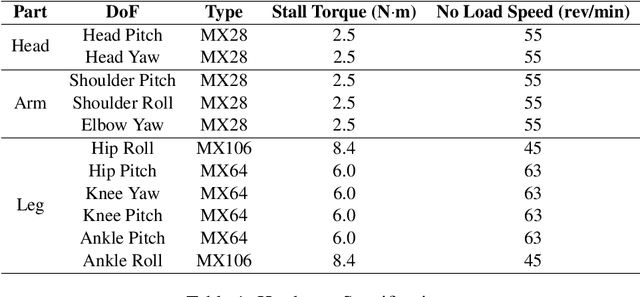

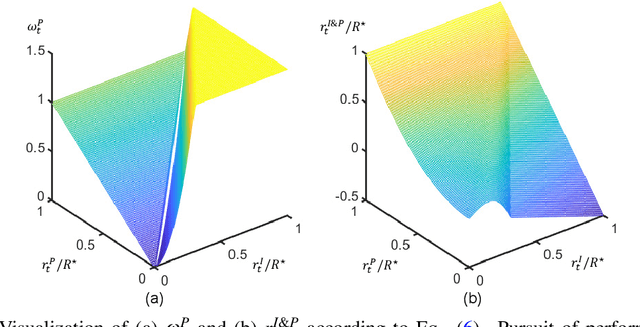

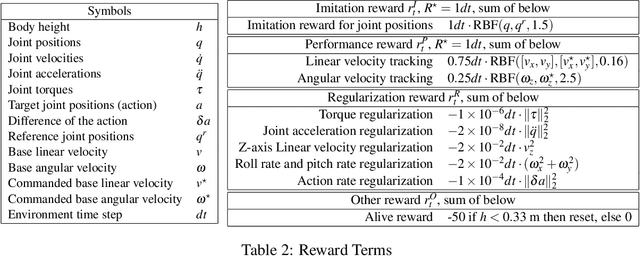

Adaptive Mimic: Deep Reinforcement Learning of Parameterized Bipedal Walking from Infeasible References

Dec 13, 2021

Abstract:Not until recently, robust robot locomotion has been achieved by deep reinforcement learning (DRL). However, for efficient learning of parametrized bipedal walking, developed references are usually required, limiting the performance to that of the references. In this paper, we propose to design an adaptive reward function for imitation learning from the references. The agent is encouraged to mimic the references when its performance is low, while to pursue high performance when it reaches the limit of references. We further demonstrate that developed references can be replaced by low-quality references that are generated without laborious tuning and infeasible to deploy by themselves, as long as they can provide a priori knowledge to expedite the learning process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge