Guang-Zhong Yang

Varifocal-Net: A Chromosome Classification Approach using Deep Convolutional Networks

Oct 20, 2018

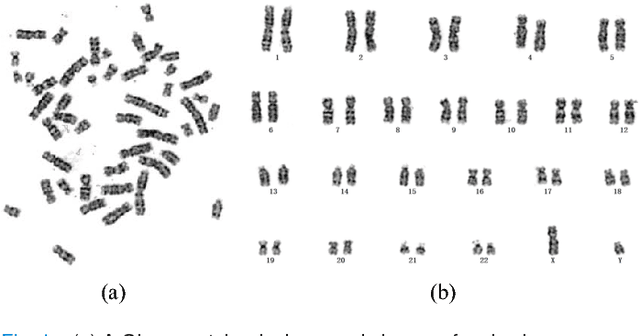

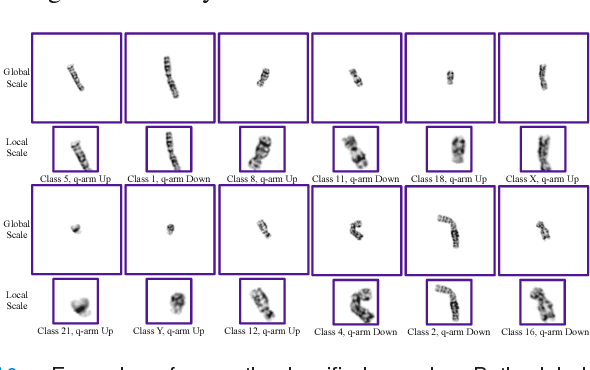

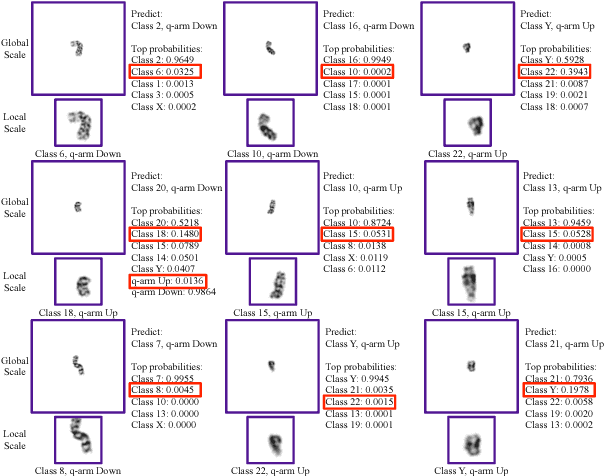

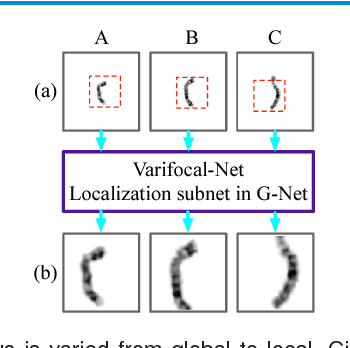

Abstract: Chromosome classification is critical for karyotyping in abnormality diagnosis. To expedite the diagnosis process, we present a novel method named Varifocal-Net for simultaneous classification of a chromosome's type and polarity using deep convolutional networks. The approach consists of one global-scale network (G-Net) and one local-scale network (L-Net) and proceeds in two stages. The first stage learns both global and local features. We extract global features and detect finer local regions via the G-Net. With the proposed varifocal mechanism, we zoom into local parts and extract local features via the L-Net. Residual learning and multi-task learning strategies are utilized to promote high-level feature extraction. The detection of discriminative local parts is fulfilled by a localization subnet of the G-Net, whose training process involves both supervised and weakly-supervised learning. The second stage builds two multi-layer perceptron classifiers that exploit features of both scales to boost classification performance. Evaluation results from 1909 karyotyping cases demonstrate that our Varifocal-Net achieved the highest accuracy of 0.9805 and 0.9909, and average F1-scores of 0.9771 and 0.9909, for the type and polarity tasks, respectively. It outperformed state-of-the-art methods, demonstrating the effectiveness of our varifocal mechanism and multi-scale feature ensemble.
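The "zoom into local parts" step can be pictured as a crop followed by a rescale. The sketch below is only an illustration of that idea on a toy 2D list. The function name `crop_and_zoom`, the `(top, left, height, width)` box format and the nearest-neighbour resampling are all assumptions; in the paper the region is predicted by the localization subnet of the G-Net, and the abstract does not give its actual sampling scheme.

```python
def crop_and_zoom(image, box, out_h, out_w):
    """Crop box = (top, left, height, width) from a 2D list and resize the
    crop to (out_h, out_w) with nearest-neighbour sampling (hypothetical helper)."""
    top, left, h, w = box
    zoomed = []
    for i in range(out_h):
        # Map each output row back to a source row inside the box.
        src_r = top + min(h - 1, i * h // out_h)
        row = []
        for j in range(out_w):
            src_c = left + min(w - 1, j * w // out_w)
            row.append(image[src_r][src_c])
        zoomed.append(row)
    return zoomed


# Toy 4x4 "image"; in practice the box would come from a localization step.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
local = crop_and_zoom(image, box=(1, 1, 2, 2), out_h=4, out_w=4)
```

Here the central 2x2 patch is enlarged to the full 4x4 input size, so a second network could process the local region at a finer effective resolution.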

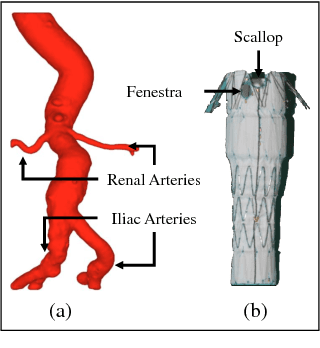

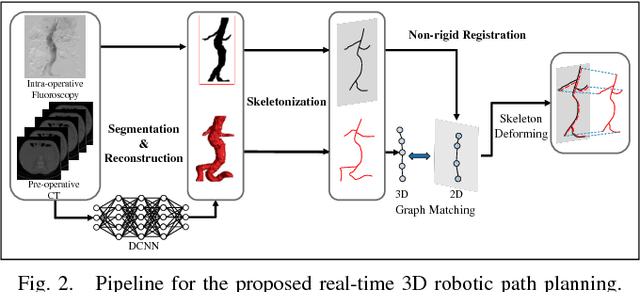

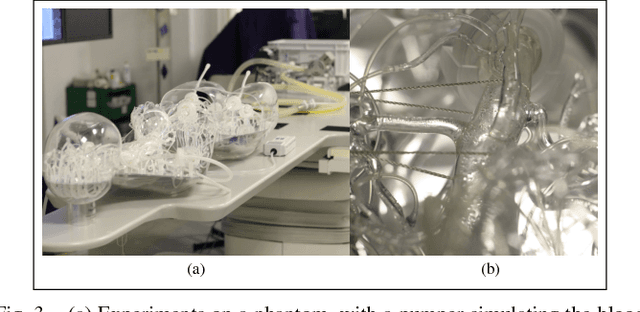

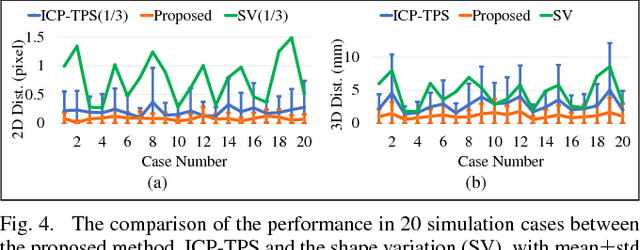

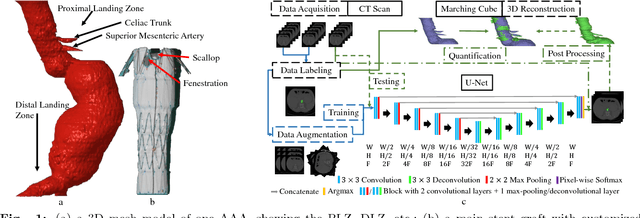

3D Path Planning from a Single 2D Fluoroscopic Image for Robot Assisted Fenestrated Endovascular Aortic Repair

Sep 16, 2018

Abstract: The current standard of intra-operative navigation during Fenestrated Endovascular Aortic Repair (FEVAR) calls for 3D alignment between inserted devices and aortic branches. Navigation is commonly performed via 2D fluoroscopic images, which lack anatomical information, resulting in longer operation times and greater radiation exposure. In this paper, a framework for real-time 3D robotic path planning from a single 2D fluoroscopic image of an Abdominal Aortic Aneurysm (AAA) is introduced. A graph matching method is proposed to establish the correspondence between the 3D pre-operative and 2D intra-operative AAA skeletons, and then the two skeletons are registered by skeleton deformation and regularization with respect to skeleton length and smoothness. Furthermore, deep learning was used to segment the 3D pre-operative AAA from Computed Tomography (CT) scans to facilitate the framework automation. Simulation, phantom and patient AAA data sets have been used to validate the proposed framework. A 3D distance error of 2 mm was achieved in the phantom setup. Performance advantages were also achieved in terms of accuracy, robustness and time-efficiency. All the code will be open source.

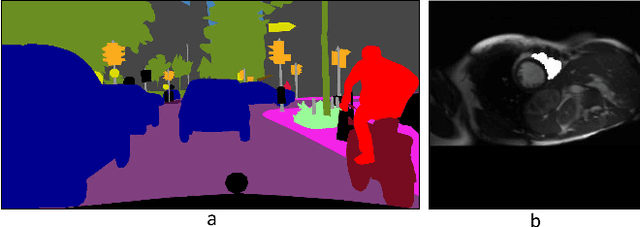

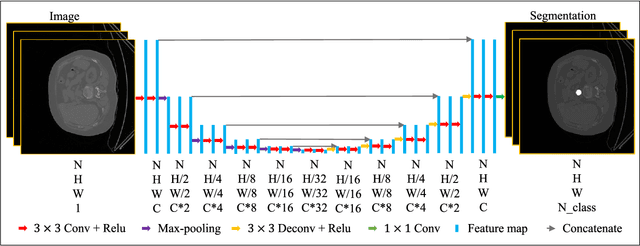

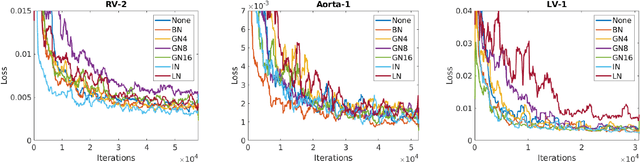

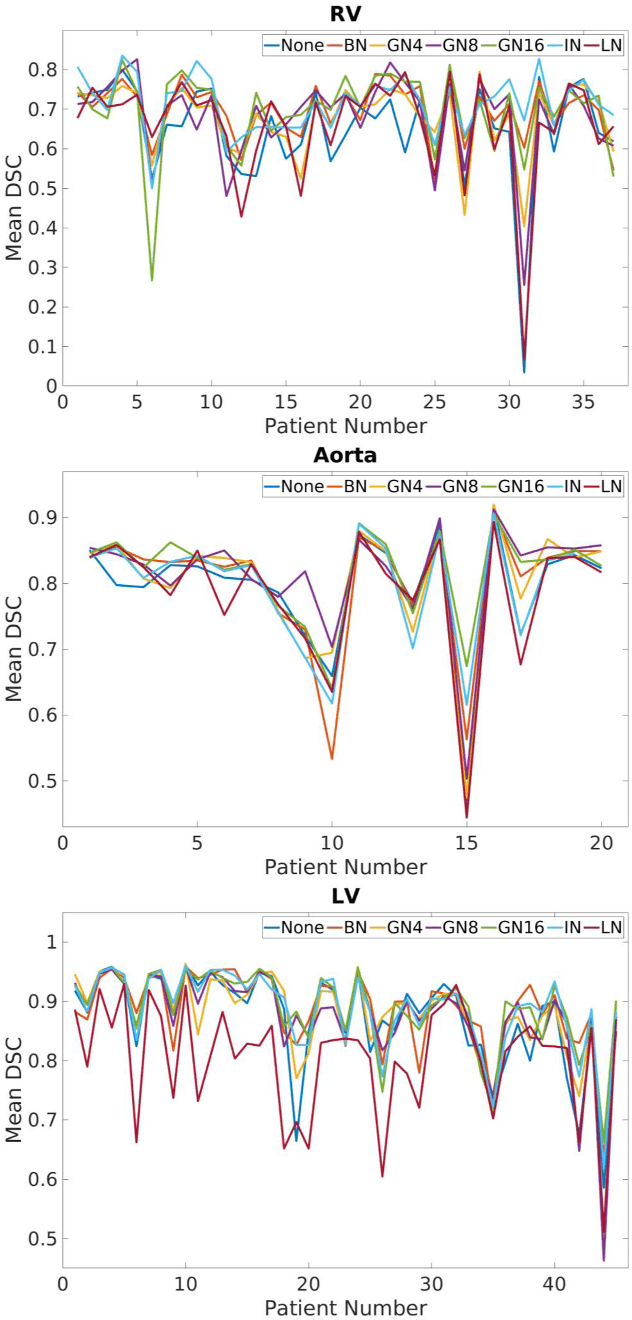

Normalization in Training Deep Convolutional Neural Networks for 2D Bio-medical Semantic Segmentation

Sep 11, 2018

Abstract: 2D bio-medical semantic segmentation is important for surgical robotic vision. Segmentation methods based on Deep Convolutional Neural Networks (DCNNs) outperform conventional methods in terms of both accuracy and automation. One common issue in training DCNNs is internal covariate shift, where the convolutional kernels are trained to fit the changing distribution of input features, which reduces both training speed and performance. Batch Normalization (BN) was the first method proposed for addressing internal covariate shift and is widely used. Instance Normalization (IN) and Layer Normalization (LN) were proposed later and are used much less than BN. Group Normalization (GN) was proposed very recently and has not yet been applied to 2D bio-medical semantic segmentation. Most DCNN-based bio-medical semantic segmentation adopts BN as the normalization method by default, without reviewing its performance. In this paper, four normalization methods (BN, IN, LN and GN) are compared and reviewed in detail specifically for 2D bio-medical semantic segmentation. The results showed that GN outperformed the other three normalization methods in 2D bio-medical semantic segmentation regarding both accuracy and robustness. U-Net is adopted as the basic DCNN structure. 37 RVs from both asymptomatic and Hypertrophic Cardiomyopathy (HCM) subjects and 20 aortas from asymptomatic subjects were used for the validation. The code and trained models will be available online.
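The four methods differ mainly in which set of activations shares one mean and variance. The following is a minimal pure-Python sketch of that difference, not the paper's code: the `[N][C][S]` nested-list layout (batch, channel, flattened spatial positions), the function name `normalize` and the `groups` default are assumptions, and the learnable scale and shift parameters are omitted.

```python
from statistics import fmean, pvariance


def normalize(x, mode, groups=2, eps=1e-5):
    """Normalize x, a nested list shaped [N][C][S], with shared statistics
    chosen by mode: 'BN', 'IN', 'LN' or 'GN' (C must be divisible by groups)."""
    n, c = len(x), len(x[0])

    def key(b, ch):
        if mode == "BN":  # one statistic per channel, over batch and space
            return ch
        if mode == "IN":  # one statistic per (sample, channel)
            return (b, ch)
        if mode == "LN":  # one statistic per sample, over all channels
            return b
        if mode == "GN":  # one statistic per (sample, channel group)
            return (b, ch // (c // groups))
        raise ValueError(f"unknown mode: {mode}")

    # Pool together all values that share a mean and variance.
    pools = {}
    for b in range(n):
        for ch in range(c):
            pools.setdefault(key(b, ch), []).extend(x[b][ch])
    stats = {k: (fmean(v), pvariance(v)) for k, v in pools.items()}

    out = []
    for b in range(n):
        sample = []
        for ch in range(c):
            mu, var = stats[key(b, ch)]
            sample.append([(v - mu) / (var + eps) ** 0.5 for v in x[b][ch]])
        out.append(sample)
    return out


x = [
    [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 9.0]],
    [[0.0, 1.0], [2.0, 2.5], [4.0, 8.0], [1.0, 3.0]],
]
gn = normalize(x, "GN", groups=2)
```

In this formulation GN with one group reduces to LN and GN with as many groups as channels reduces to IN. Unlike BN, the GN statistics never mix samples, so they do not depend on batch size.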

Translational Motion Compensation for Soft Tissue Velocity Images

Aug 20, 2018

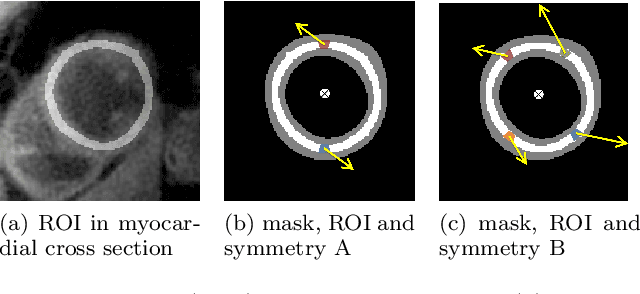

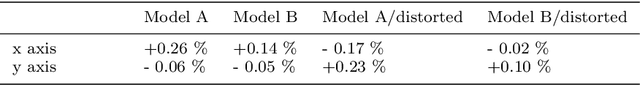

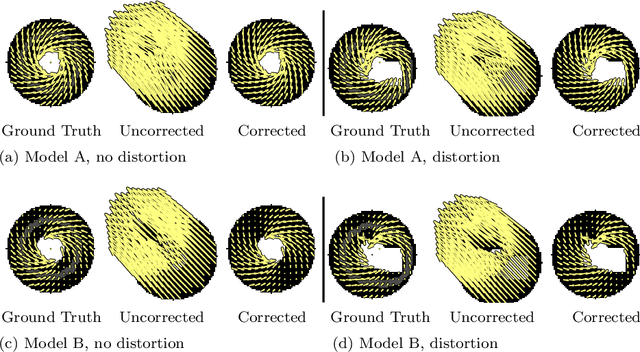

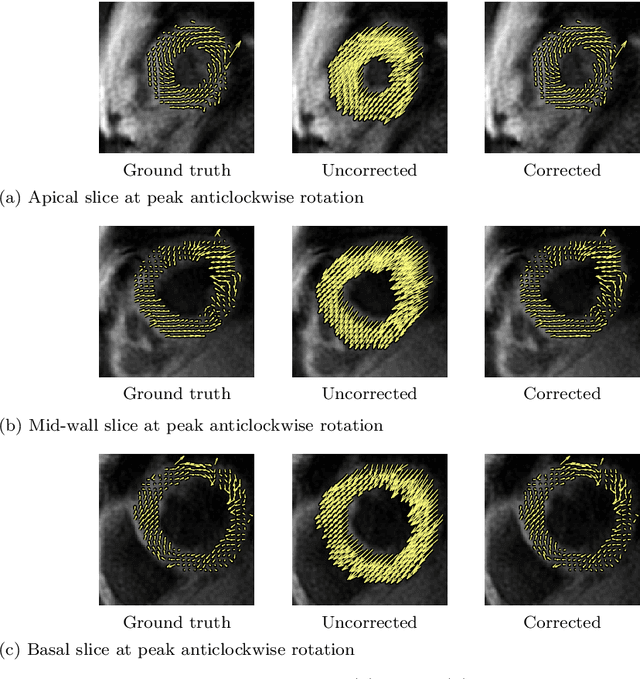

Abstract: Purpose: Advancements in MRI Tissue Phase Velocity Mapping (TPM) allow for the acquisition of higher quality velocity cardiac images, providing better assessment of regional myocardial deformation for accurate disease diagnosis, pre-operative planning and post-operative patient surveillance. Translation of TPM velocities from the scanner's reference coordinate system to the regional cardiac coordinate system requires decoupling of translational motion and motion due to myocardial deformation. Despite existing techniques for respiratory motion compensation in TPM, there is still a remaining translational velocity component due to the global motion of the beating heart. To compensate for translational motion in cardiac TPM, we propose an image-processing method, which we have evaluated on synthetic data and applied to in vivo TPM data. Methods: Translational motion is estimated from a suitable region of velocities automatically defined in the left-ventricular volume. The region is generated by dilating the medial axis of myocardial masks in each slice, and the translational velocity is estimated by integration in this region. The method was evaluated on synthetic data and in vivo data corrupted with a translational velocity component (200% of the maximum measured velocity). Accuracy and robustness were examined and the method was applied to 10 in vivo datasets. Results: The results from synthetic and in vivo corrupted data show excellent performance, with an estimation error less than 0.3% and high robustness in both cases. The effectiveness of the method is confirmed with visual observation of results from the 10 datasets. Conclusion: The proposed method is accurate and suitable for translational motion correction of the left ventricular velocity fields. The current method for translational motion compensation could be applied to any annular contracting (tissue) structure.
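One way to see why integrating over an annular region isolates the translational component: on a symmetric ring, a uniform radial contraction cancels out in the average, leaving only the shared offset. The sketch below demonstrates this on a synthetic ring. It is an idealized stand-in, not the paper's pipeline: the region is a perfect circle rather than a dilated medial axis of a myocardial mask, a discrete mean replaces the integration, and the names `estimate_translation` and `compensate` are hypothetical.

```python
import math


def estimate_translation(velocities):
    """Average the 2D velocity vectors sampled over the region."""
    n = len(velocities)
    return (sum(v[0] for v in velocities) / n,
            sum(v[1] for v in velocities) / n)


def compensate(velocities, translation):
    """Subtract the estimated translational velocity from every sample."""
    tx, ty = translation
    return [(vx - tx, vy - ty) for vx, vy in velocities]


# Synthetic ring: uniform inward contraction of speed 0.5 plus a translation.
true_t = (2.0, -1.0)
angles = [2 * math.pi * k / 36 for k in range(36)]
ring = [(-0.5 * math.cos(a) + true_t[0], -0.5 * math.sin(a) + true_t[1])
        for a in angles]

est = estimate_translation(ring)
residual = compensate(ring, est)
```

After compensation, each residual vector has magnitude close to 0.5, the contraction speed assumed for the synthetic ring.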

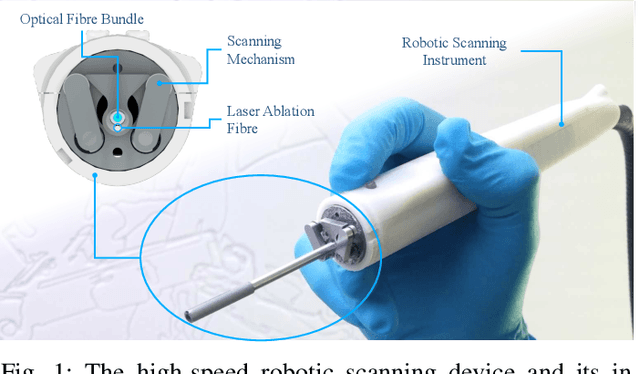

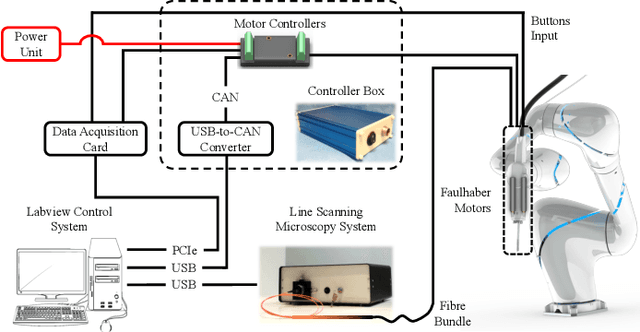

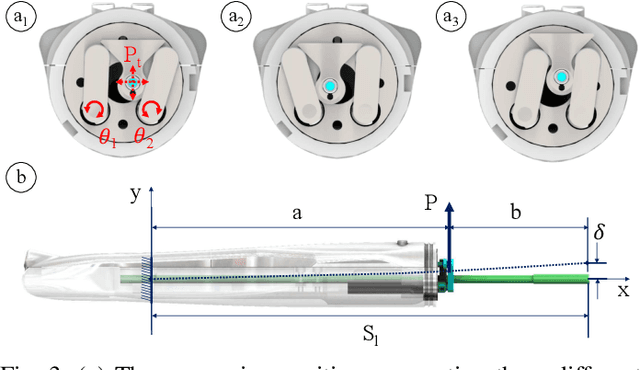

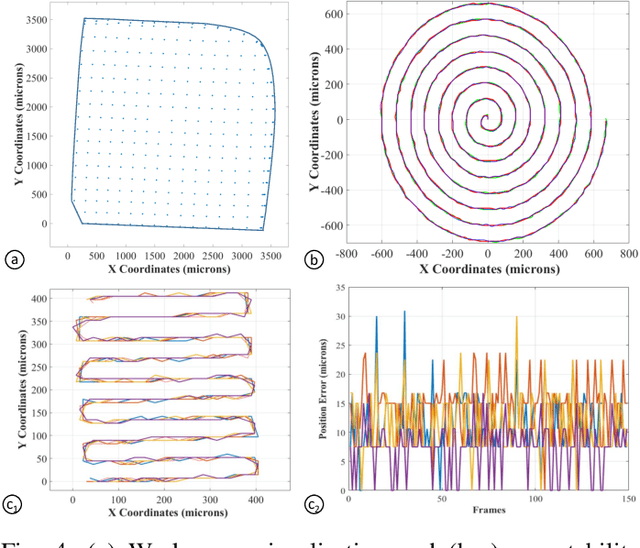

Intraoperative robotic-assisted large-area high-speed microscopic imaging and intervention

Aug 13, 2018

Abstract: Objective: Probe-based confocal endomicroscopy is an emerging high-magnification optical imaging technique that provides in vivo and in situ cellular-level imaging for real-time assessment of tissue pathology. Endomicroscopy could potentially be used for intraoperative surgical guidance, but it is challenging to assess a surgical site using individual microscopic images due to the limited field-of-view and difficulties associated with manually manipulating the probe. Methods: In this paper, a novel robotic device for large-area endomicroscopy imaging is proposed, demonstrating a rapid, but highly accurate, scanning mechanism with image-based motion control which is able to generate histology-like endomicroscopy mosaics. The device also includes, for the first time in robotic-assisted endomicroscopy, the capability to ablate tissue without the need for an additional tool. Results: The device achieves pre-programmed trajectories with positioning accuracy of less than 30 µm, while the image-based approach demonstrated that it can suppress random motion disturbances up to 1.25 mm/s. Mosaics are presented from a range of ex vivo human and animal tissues, over areas of more than 3 mm^2, scanned in approximately 10 seconds. Conclusion: This work demonstrates the potential of the proposed instrument to generate large-area, high-resolution microscopic images for intraoperative tissue identification and margin assessment. Significance: This approach presents an important alternative to current histology techniques, significantly reducing the tissue assessment time, while simultaneously providing the capability to mark and ablate suspicious areas intraoperatively.

Agricultural Robotics: The Future of Robotic Agriculture

Aug 02, 2018

Abstract: Agri-Food is the largest manufacturing sector in the UK. It supports a food chain that generates over £108bn p.a., with 3.9m employees in a truly international industry and exports £20bn of UK manufactured goods. However, the global food chain is under pressure from population growth, climate change, political pressures affecting migration, population drift from rural to urban regions and the demographics of an aging global population. These challenges are recognised in the UK Industrial Strategy white paper and backed by significant investment via a Wave 2 Industrial Challenge Fund Investment ("Transforming Food Production: from Farm to Fork"). Robotics and Autonomous Systems (RAS) and associated digital technologies are now seen as enablers of this critical food chain transformation. To meet these challenges, this white paper reviews the state of the art in the application of RAS in Agri-Food production and explores research and innovation needs to ensure these technologies reach their full potential and deliver the necessary impacts in the Agri-Food sector.

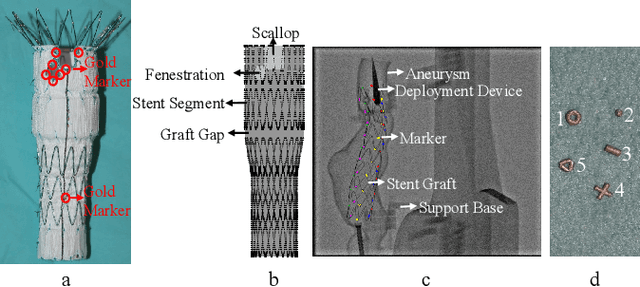

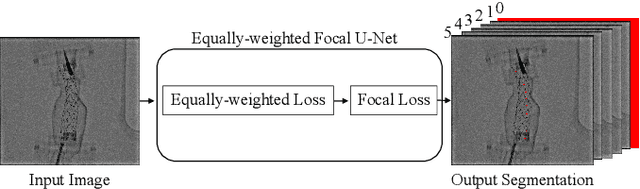

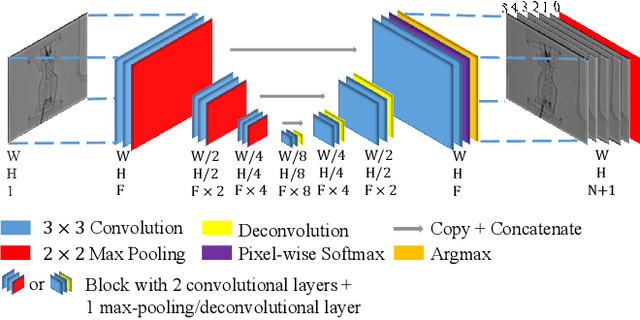

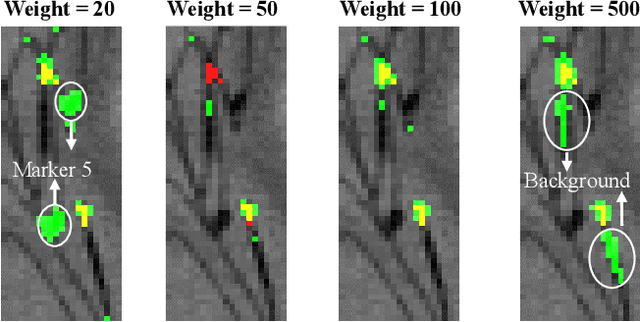

Towards Automatic 3D Shape Instantiation for Deployed Stent Grafts: 2D Multiple-class and Class-imbalance Marker Segmentation with Equally-weighted Focal U-Net

Jul 31, 2018

Abstract: Robot-assisted Fenestrated Endovascular Aortic Repair (FEVAR) is currently navigated by 2D fluoroscopy, which is insufficiently informative. Previously, a semi-automatic 3D shape instantiation method was developed to instantiate the 3D shape of a main, deployed, and fenestrated stent graft from a single fluoroscopy projection in real-time, which could help 3D FEVAR navigation and robotic path planning. This semi-automatic method was based on the Robust Perspective-5-Point (RP5P) method, graft gap interpolation and semi-automatic multiple-class marker center determination. In this paper, automatic 3D shape instantiation is achieved by automatic multiple-class marker segmentation and hence automatic multiple-class marker center determination. Firstly, the markers were designed in five different shapes. Then, Equally-weighted Focal U-Net was proposed to segment the fluoroscopy projections of customized markers into five classes and hence to determine the marker centers. The proposed Equally-weighted Focal U-Net utilized U-Net as the network architecture, an equally-weighted loss function for initial marker segmentation, and then an equally-weighted focal loss function for improving the initial marker segmentation. The proposed network outperformed the traditional Weighted U-Net on the class-imbalance segmentation in this paper while removing one hyper-parameter, the weight. An overall mean Intersection over Union (mIoU) of 0.6943 was achieved on 78 testing images, where 81.01% of markers were segmented with a center position error <1.6mm. Comparable accuracy of 3D shape instantiation was also achieved and stated. The data, trained models and TensorFlow code are available online.
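For readers unfamiliar with focal loss, a minimal per-pixel sketch follows. It uses the standard form FL(p_t) = -(1 - p_t)^γ log(p_t) with no per-class weight, which is one reading of "equally-weighted"; the exact formulation in the paper may differ. The function names and the default γ = 2 are assumptions, since the abstract does not state the value used.

```python
import math


def focal_loss(probs, target, gamma=2.0):
    """Focal loss for one pixel, with no per-class weighting.

    probs:  softmax probabilities over classes for this pixel
    target: index of the true class
    gamma:  focusing parameter (assumed value; gamma = 0 gives cross-entropy)
    """
    p_t = probs[target]
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


def mean_focal_loss(batch_probs, targets, gamma=2.0):
    """Average the per-pixel focal loss over a list of pixels."""
    losses = [focal_loss(p, t, gamma) for p, t in zip(batch_probs, targets)]
    return sum(losses) / len(losses)


easy = focal_loss([0.9, 0.1], target=0)  # confident, correct prediction
hard = focal_loss([0.2, 0.8], target=0)  # confident, wrong prediction
```

The modulating factor shrinks the contribution of well-classified pixels, which is typically the abundant background class, so rare marker pixels dominate the gradient without a hand-tuned class weight.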

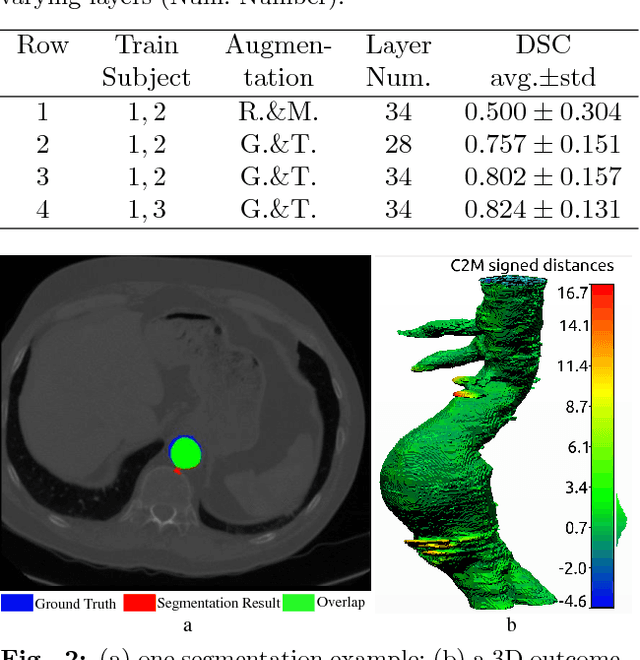

Abdominal Aortic Aneurysm Segmentation with a Small Number of Training Subjects

Apr 09, 2018

Abstract: The pre-operative 3D shape of an Abdominal Aortic Aneurysm (AAA) is critical for customized stent-graft design in Fenestrated Endovascular Aortic Repair (FEVAR). Traditional segmentation approaches implement expert-designed feature extractors, while recent deep neural networks extract features automatically with multiple non-linear modules. Usually, a large training dataset is essential for applying deep learning to AAA segmentation. In this paper, the AAA was segmented using U-net with a small number (two) of training subjects. Firstly, Computed Tomography Angiography (CTA) slices were augmented with gray value variation and translation to avoid the overfitting caused by the small number of training subjects. Then, U-net was trained to segment the AAA. Dice Similarity Coefficients (DSCs) over 0.8 were achieved on the testing subjects. The PLZ, DLZ and aortic branches are all reconstructed reasonably, which will facilitate stent graft customization and help shape instantiation for intra-operative surgery navigation in FEVAR.
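The Dice Similarity Coefficient reported above is the standard overlap measure DSC = 2|A ∩ B| / (|A| + |B|) between a predicted mask A and a ground-truth mask B. A minimal sketch on flattened binary masks follows. The function name and the convention of returning 1.0 when both masks are empty are assumptions, and the toy masks are illustrative only.

```python
def dice(pred, truth):
    """Dice Similarity Coefficient for two flat binary masks of equal length."""
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in truth if t)
    # Assumed convention: two empty masks count as a perfect match.
    return 1.0 if total == 0 else 2.0 * intersection / total


pred = [0, 1, 1, 1, 0, 0]   # toy predicted mask
truth = [0, 1, 1, 0, 1, 0]  # toy ground-truth mask
score = dice(pred, truth)   # 2 * 2 / (3 + 3)
```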

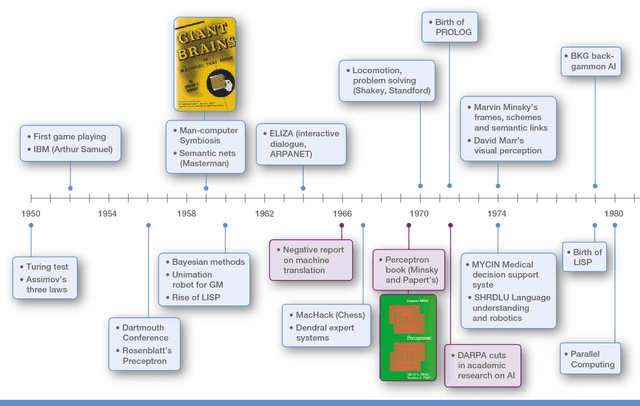

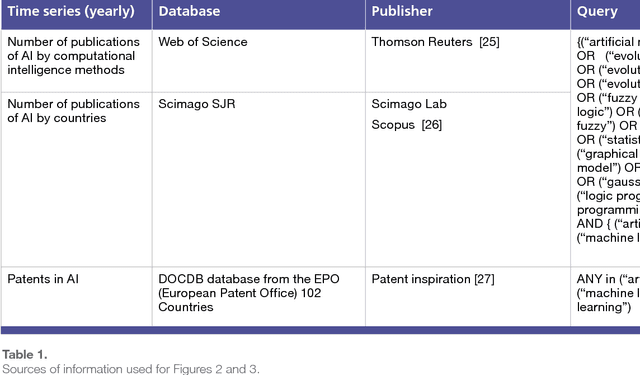

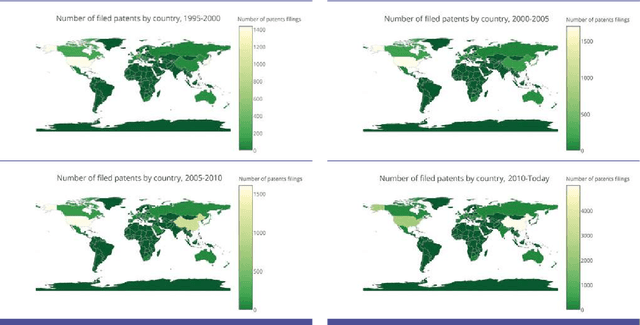

Artificial Intelligence and Robotics

Mar 28, 2018

Abstract: The recent successes of AI have captured the wildest imagination of both the scientific communities and the general public. Robotics and AI amplify human potential, increase productivity and are moving from simple reasoning towards human-like cognitive abilities. Current AI technologies are used in a range of application areas, from healthcare, manufacturing, transport and energy to financial services, banking, advertising, management consulting and government agencies. The global AI market was around 260 billion USD in 2016 and is estimated to exceed 3 trillion by 2024. To understand the impact of AI, it is important to draw lessons from its past successes and failures, and this white paper provides a comprehensive explanation of the evolution of AI, its current status and future directions.

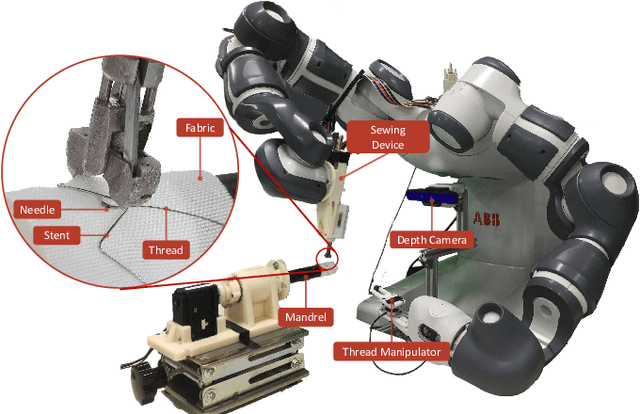

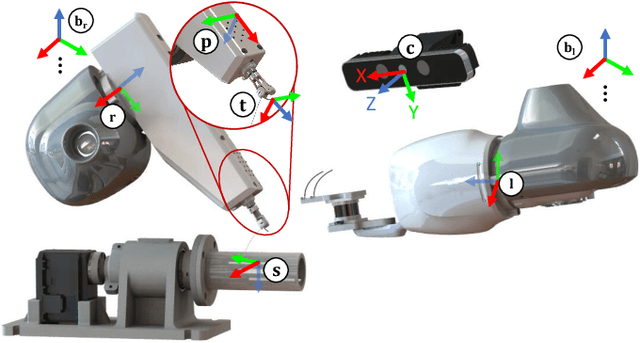

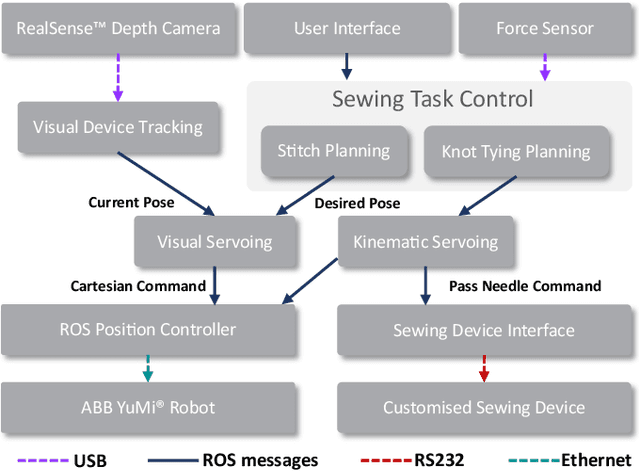

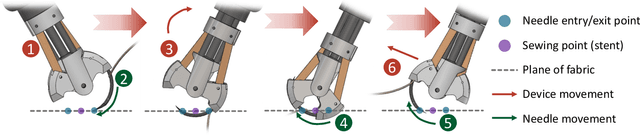

Robotic Sewing and Knot Tying for Personalized Stent Graft Manufacturing

Mar 22, 2018

Abstract: This paper presents a versatile robotic system for sewing 3D structured objects. Leveraging a customized robotic sewing device and closed-loop visual servoing control, an all-in-one solution for sewing personalized stent grafts is demonstrated. Stitch size planning and automatic knot tying are proposed as the two key functions of the system. By using effective stitch size planning, sub-millimetre sewing accuracy is achieved for stitch sizes ranging from 2mm to 5mm. In addition, a thread manipulator for thread management and tension control is also proposed to perform successive knot tying to secure each stitch. Detailed laboratory experiments have been performed to assess the proposed instruments and allied algorithms. The proposed framework can be generalised to a wide range of applications including 3D industrial sewing, as well as transferred to other clinical areas such as surgical suturing.
