David Navarro-Alarcon

A Novel Approach to Model the Kinematics of Human Fingers Based on an Elliptic Multi-Joint Configuration

Jul 30, 2021

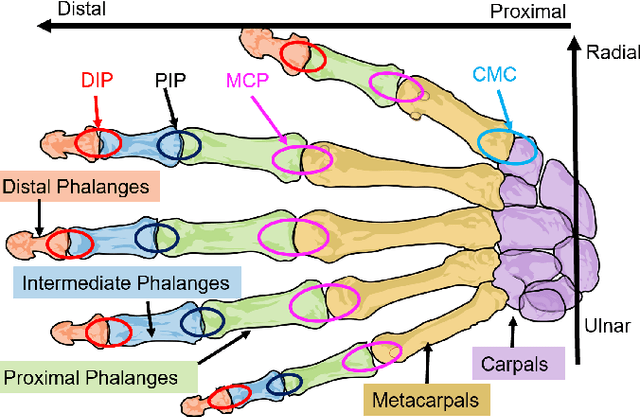

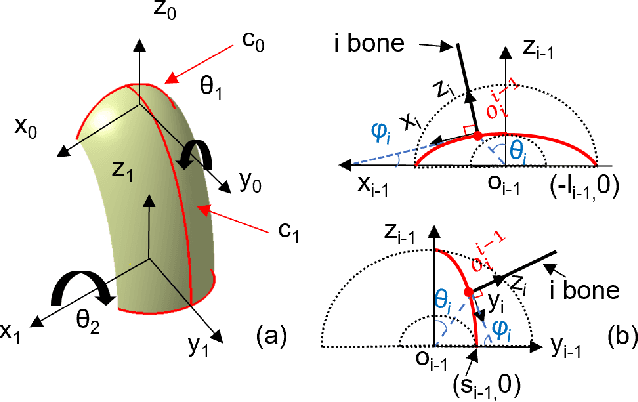

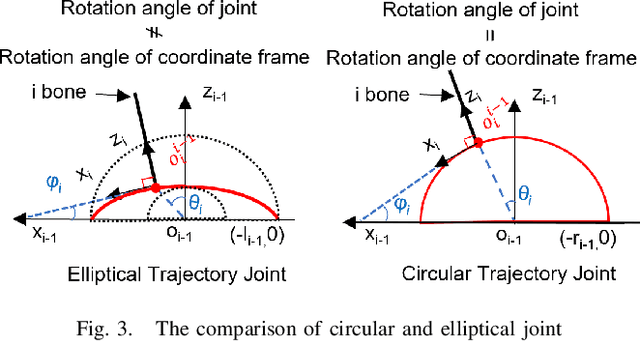

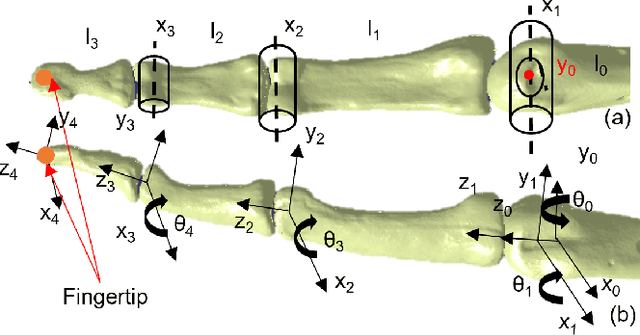

Abstract: In this paper, we present a novel kinematic model of the human phalanges based on the elliptical motion of their joints. The presence of soft elastic tissues and the general anatomical structure of the hand joints strongly affect the relative movement of the bones. The commonly used assumption of circular trajectories simplifies the design process but diverges from actual hand behavior. The advantages of the proposed model are demonstrated through a comparison with the conventional revolute joint model. Simulations and experiments validate the designed forward and inverse kinematic algorithms. The results show that the model closely mimics the human fingertip motion trajectory.
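The difference between a circular (revolute) and an elliptical joint trajectory can be illustrated with a minimal sketch. This is not the model from the paper: the function names, the single-joint planar setup, and the semi-axis values `a` and `b` are assumptions made only for illustration.

```python
import math

def circular_point(theta, r):
    """Point on a circular path of radius r (conventional revolute joint)."""
    return (r * math.cos(theta), r * math.sin(theta))

def elliptic_point(theta, a, b):
    """Point on an elliptical path with semi-axes a and b (hypothetical values)."""
    return (a * math.cos(theta), b * math.sin(theta))

def max_deviation(a, b, r, samples=181):
    """Largest distance between the two paths over a 0..90 degree flexion."""
    worst = 0.0
    for i in range(samples):
        theta = (math.pi / 2) * i / (samples - 1)
        cx, cy = circular_point(theta, r)
        ex, ey = elliptic_point(theta, a, b)
        worst = max(worst, math.hypot(cx - ex, cy - ey))
    return worst

# With a == b == r the two paths coincide; otherwise they diverge with flexion.
print(max_deviation(1.0, 1.0, 1.0))
print(max_deviation(1.0, 0.8, 1.0))
```

In this toy setup the gap grows with the flexion angle, which is the kind of divergence the abstract attributes to the circular assumption.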

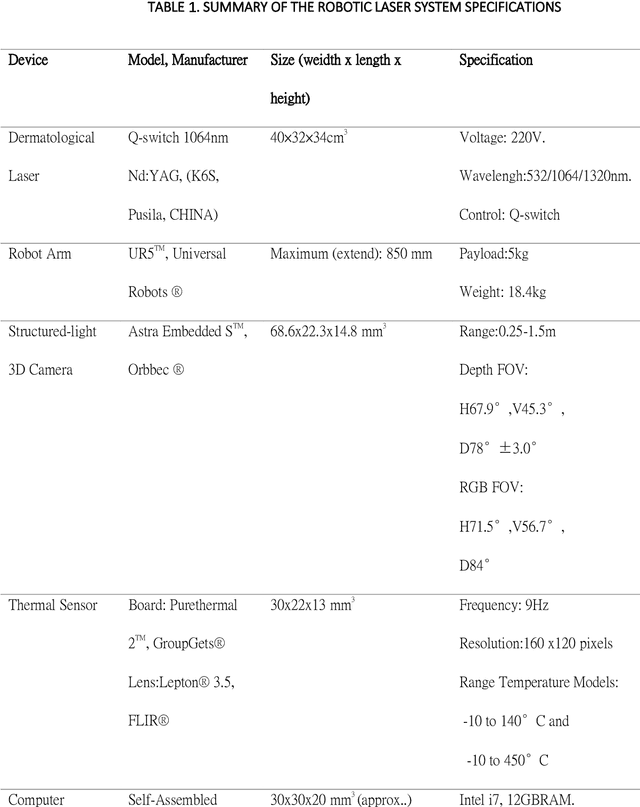

A Split-face Study of Novel Robotic Prototype vs Human Operator in Skin Rejuvenation Using Q-switched Nd:Yag Laser: Accuracy, Efficacy and Safety

Jun 05, 2021

Abstract: Background: Robotic technologies for skin laser treatment are emerging. Objective: To compare the accuracy, efficacy and safety of a novel robotic prototype with those of a human operator in laser operation for skin photo-rejuvenation. Methods: Seventeen subjects were enrolled in a prospective, comparative split-face trial. Q-switched 1064 nm laser treatment was delivered by the robotic prototype on the right side of the face and by a professional practitioner on the left. Each subject underwent a single, one-pass, non-overlapping treatment on an equal-sized area of the forehead and cheek. Objective assessments included treatment duration, number of laser irradiation shots, laser coverage percentage, VISIA parameters, skin temperature and the VAS pain scale. Results: The average time taken by the robotic manipulator was longer than that of the human operator; the average number of irradiation shots did not differ significantly between the two sides. The laser coverage rate of the robotic manipulator (60.2 ± 15.1%) was greater than that of the human operator (43.6 ± 12.9%). The VISIA parameters showed no significant differences between the robotic manipulator and the human operator. No short- or long-term side effects were observed, with a maximum VAS score of 1 point. Limitations: Only one session of laser treatment was performed. Conclusion: Laser operation by the novel robotic prototype is more reliable, stable and accurate than human operation.

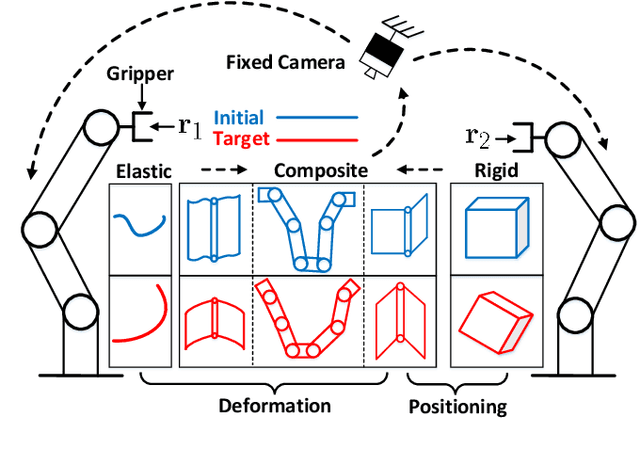

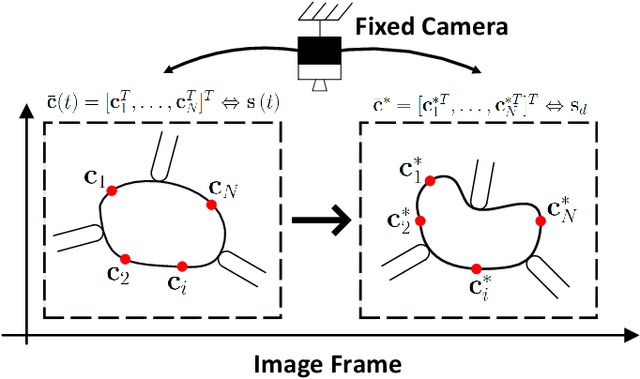

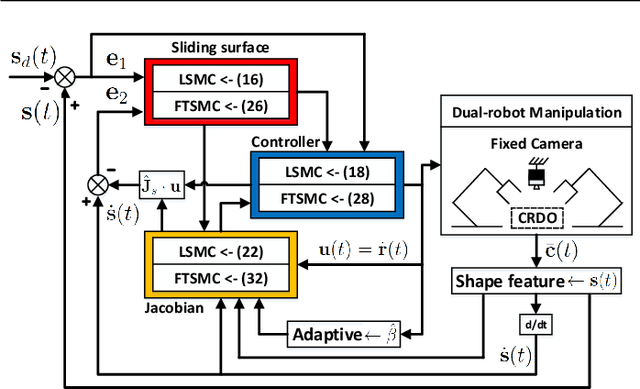

Contour Moments Based Manipulation of Composite Rigid-Deformable Objects with Finite Time Model Estimation and Shape/Position Control

Jun 04, 2021

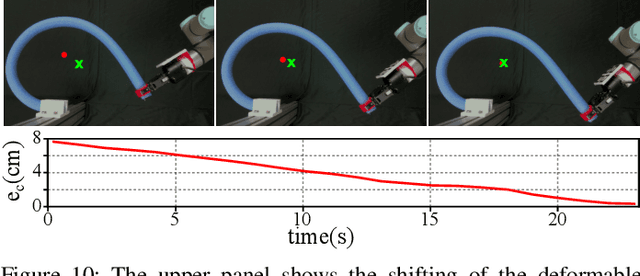

Abstract: The robotic manipulation of composite rigid-deformable objects (i.e., those with mixed non-homogeneous stiffness properties) is a challenging problem with clear practical applications that, despite recent progress in the field, has not been sufficiently studied in the literature. To deal with this issue, in this paper we propose a new visual servoing method that can manipulate this broad class of objects (which varies from soft to rigid) with the same adaptive strategy. To quantify the object's infinite-dimensional configuration, our new approach computes a compact feedback vector of 2D contour moment features. A sliding mode control scheme is then designed to simultaneously ensure the finite-time convergence of both the feedback shape error and the model estimation error. The stability of the proposed framework (including the boundedness of all the signals) is rigorously proved with Lyapunov theory. Detailed simulations and experiments are presented to validate the effectiveness of the proposed approach. To the best of the authors' knowledge, this is the first time that contour moments along with finite-time control have been used to solve this difficult manipulation problem.
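As a rough illustration of how a contour can be compressed into a short feature vector, the sketch below computes low-order moments of a set of 2D contour points. The choice of features (centroid plus second-order central moments) and the function names are assumptions for illustration; the paper's actual feature definition may differ.

```python
def raw_moment(points, p, q):
    """Discrete moment m_pq = sum of x^p * y^q over the contour points."""
    return sum((x ** p) * (y ** q) for x, y in points)

def contour_features(points):
    """Compact feature vector: centroid and second-order central moments.

    This particular selection of moments is a hypothetical example.
    """
    m00 = raw_moment(points, 0, 0)          # number of contour points
    cx = raw_moment(points, 1, 0) / m00     # centroid x
    cy = raw_moment(points, 0, 1) / m00     # centroid y
    centered = [(x - cx, y - cy) for x, y in points]
    mu20 = raw_moment(centered, 2, 0)       # spread along x
    mu11 = raw_moment(centered, 1, 1)       # x-y coupling
    mu02 = raw_moment(centered, 0, 2)       # spread along y
    return [cx, cy, mu20, mu11, mu02]

# Corners of a 2 x 2 square used as a toy contour.
square = [(0, 0), (2, 0), (2, 2), (0, 2)]
print(contour_features(square))
```

A shape error for feedback control could then be formed as the difference between the current and target feature vectors, regardless of how many points the contour contains.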

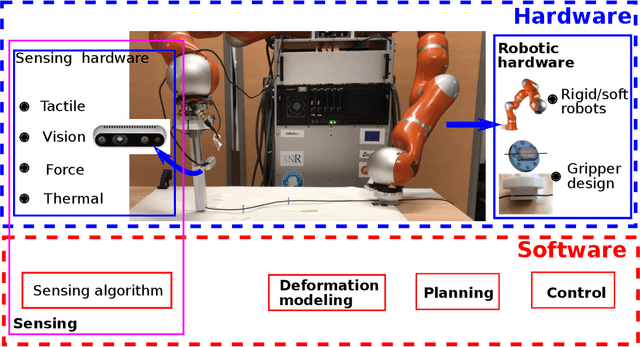

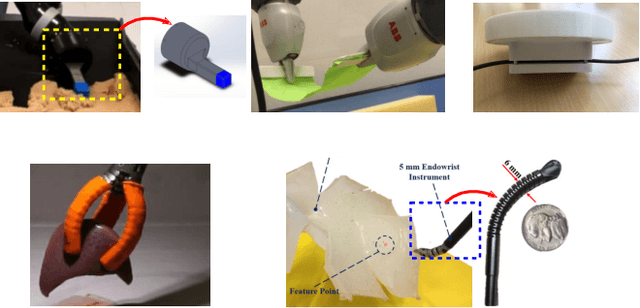

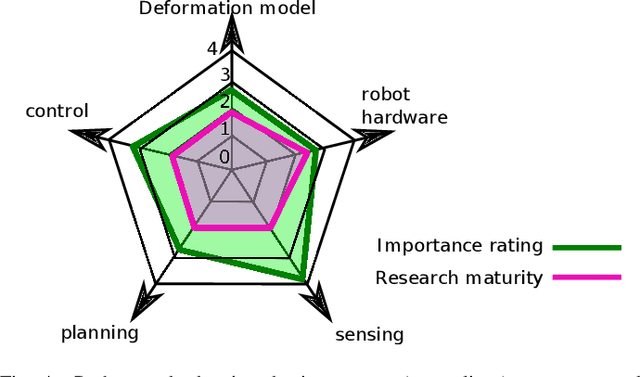

Challenges and Outlook in Robotic Manipulation of Deformable Objects

May 04, 2021

Abstract: Deformable object manipulation (DOM) is an emerging research problem in robotics. The ability to manipulate deformable objects endows robots with higher autonomy and promises new applications in the industrial, services, and healthcare sectors. However, compared to rigid object manipulation, the manipulation of deformable objects is considerably more complex and is still an open research problem. Tackling the challenges in DOM demands breakthroughs in almost all aspects of robotics, namely hardware design, sensing, deformation modeling, planning, and control. In this article, we highlight the main challenges that arise when deformation is considered and review recent advances in each sub-field. A particular focus of our paper lies in discussing these challenges and proposing promising directions of research.

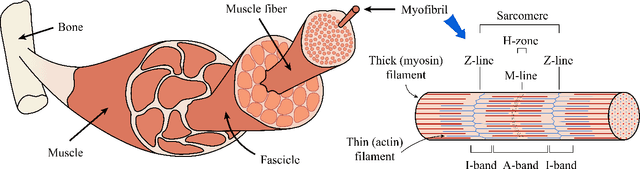

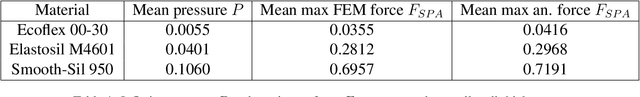

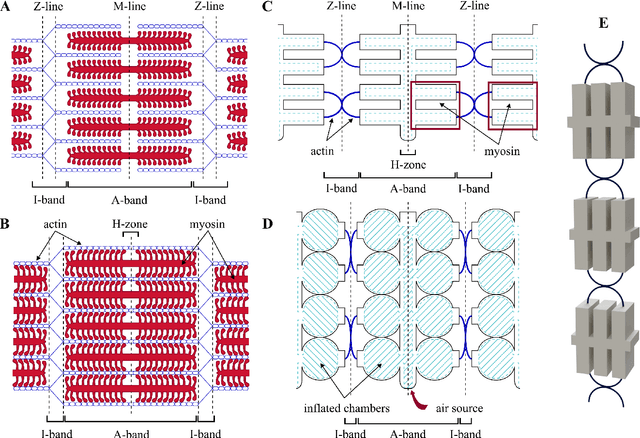

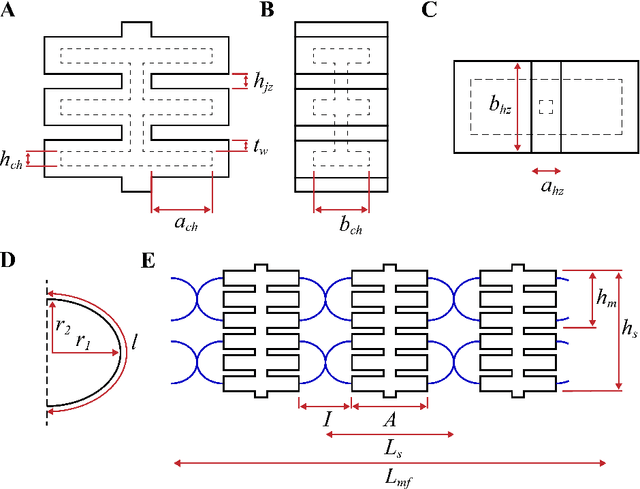

Bio-Inspired Design of Artificial Striated Muscles Composed of Sarcomere-Like Contraction Units (preprint)

Mar 17, 2021

Abstract: Biological muscles have always attracted robotics researchers due to their efficient capabilities in compliance, force generation, and mechanical work. Many groups are working on the development of artificial muscles; however, state-of-the-art methods still fall short in performance when compared with their biological counterparts. Muscles with high force output are mostly rigid, whereas traditional soft actuators take up much space and are limited in strength and displacement. In this work, we aim to find a reasonable trade-off between these features by mimicking the striated structure of skeletal muscles. To that end, we designed an artificial pneumatic myofibril composed of multiple contraction units that combine stretchable and inextensible materials. Varying the geometric parameters and the number of units in series provides flexible adjustment of the desired muscle operation. We derived a mathematical model that predicts the relationship between the input pneumatic pressure and the generated output force. A detailed experimental study is conducted to validate the performance of the proposed bio-inspired muscle.

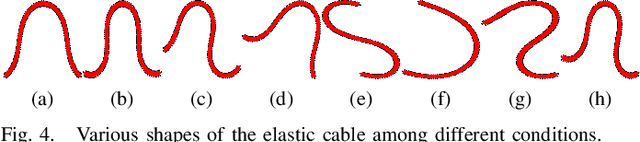

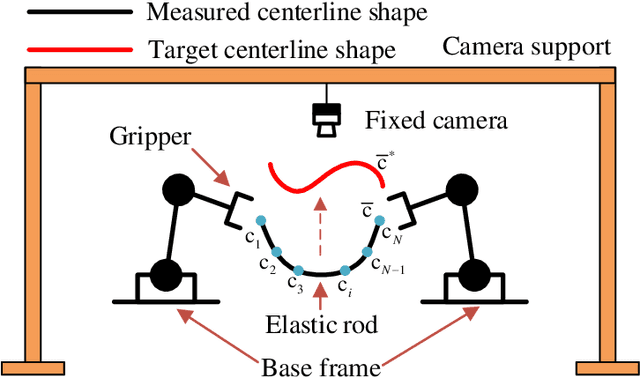

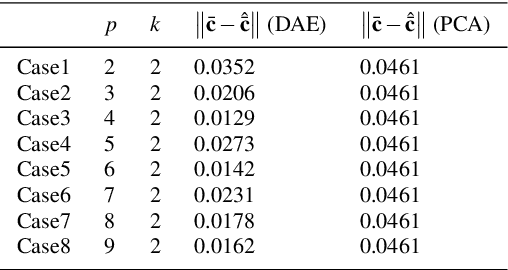

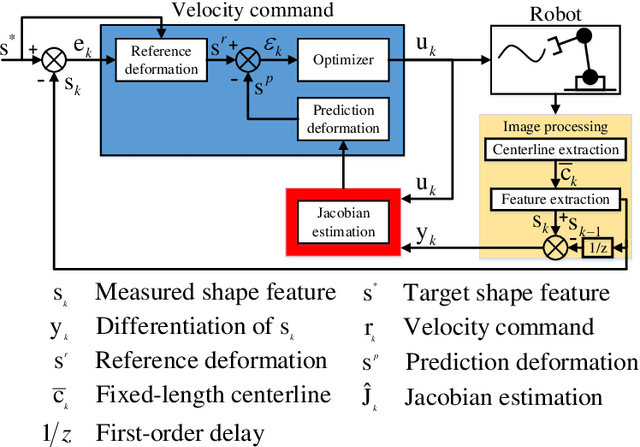

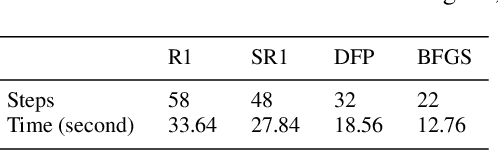

Towards Latent Space Based Manipulation of Elastic Rods using Autoencoder Models and Robust Centerline Extractions

Feb 09, 2021

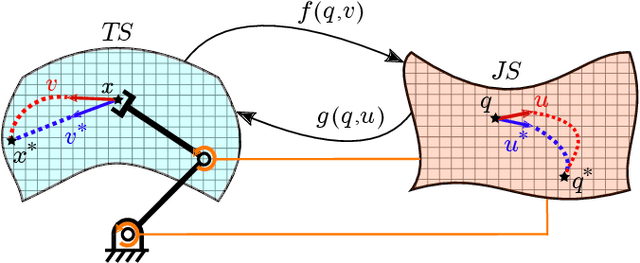

Abstract: The automatic shape control of deformable objects is a challenging (and currently hot) manipulation problem due to their high-dimensional geometric features and complex physical properties. In this study, a new methodology to manipulate elastic rods automatically into 2D desired shapes is presented. An efficient vision-based controller that uses a deep autoencoder network is designed to compute a compact representation of the object's infinite-dimensional shape. An online algorithm that approximates the sensorimotor mapping between the robot's configuration and the object's shape features is used to deal with the latter's (typically unknown) mechanical properties. The proposed approach computes the rod's centerline from raw visual data in real-time by introducing an adaptive algorithm on the basis of a self-organizing network. Its effectiveness is thoroughly validated with simulations and experiments.

A Neurorobotic Embodiment for Exploring the Dynamical Interactions of a Spiking Cerebellar Model and a Robot Arm During Vision-based Manipulation Tasks

Feb 03, 2021

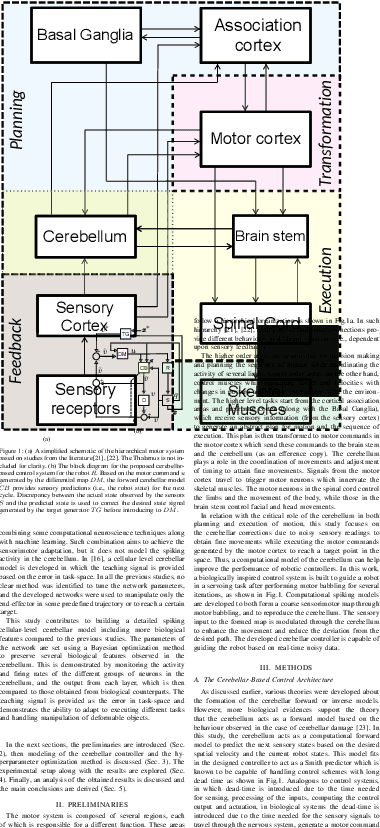

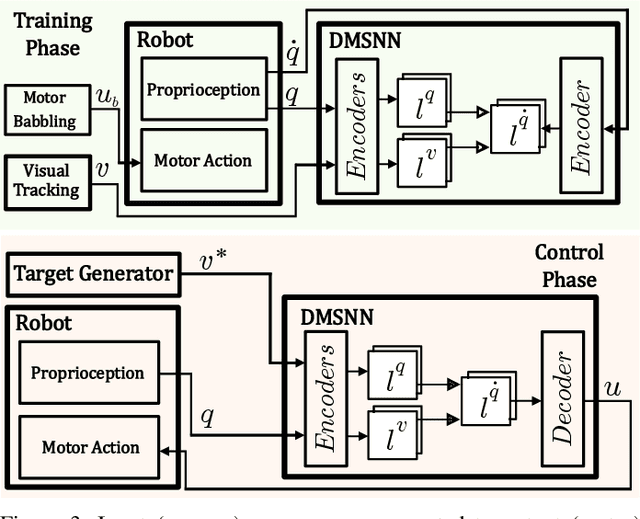

Abstract: While the original goal for developing robots is replacing humans in dangerous and tedious tasks, the final target shall be completely mimicking human cognitive and motor behaviour. Hence, building detailed computational models of the human brain is one reasonable way to attain this. The cerebellum is one of the key players in our neural system for guaranteeing dexterous manipulation and coordinated movements, as concluded from lesions in that region. Studies suggest that it acts as a forward model providing anticipatory corrections for the sensory signals based on observed discrepancies from the reference values. While most studies provide the teaching signal as an error in joint-space, few studies consider the error in task-space, and even fewer consider the spiking nature of the cerebellum at the cellular level. In this study, a detailed cellular-level forward cerebellar model is developed, including modeling of Golgi and Basket cells, which are usually neglected in previous studies. To preserve the biological features of the cerebellum in the developed model, a hyperparameter optimization method tunes the network accordingly. The efficiency and biological plausibility of the proposed cerebellar-based controller are then demonstrated under different robotic manipulation tasks, reproducing motor behaviour observed in human reaching experiments.

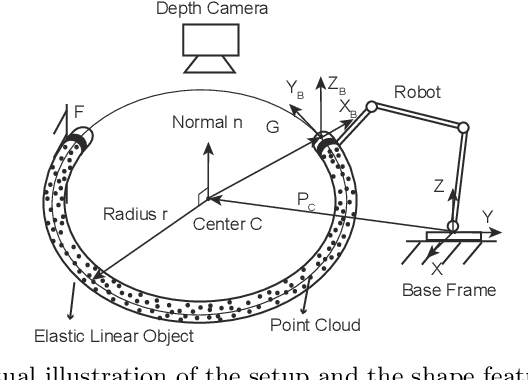

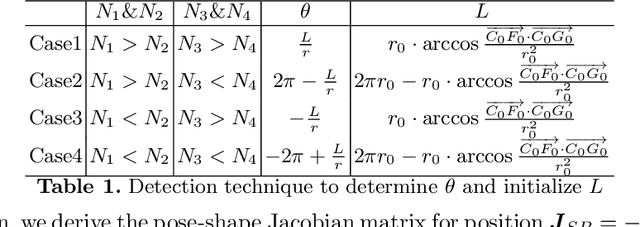

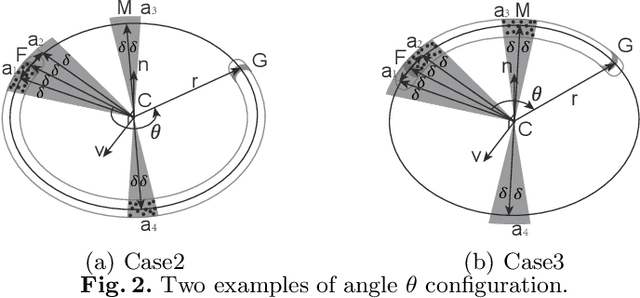

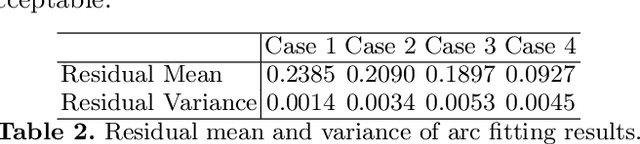

Shape Control of Elastic Objects Based on Implicit Sensorimotor Models and Data-Driven Geometric Features

Jan 06, 2021

Abstract: This paper proposes a general approach to designing automatic controllers that manipulate elastic objects into desired shapes. The object's geometric model is defined as the shape feature, chosen according to the specific task, to globally describe the deformation. Raw visual feedback data is processed using classic regression methods to identify the parameters of data-driven geometric models in real-time. Our proposed method is able to analytically compute a pose-shape Jacobian matrix based on implicit functions. This model is then used to derive a shape servoing controller. To validate the proposed method, we report a detailed experimental study with robotic manipulators deforming an elastic rod.
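The regression step mentioned above can be sketched with an ordinary least-squares polynomial fit, where the fitted coefficients serve as the shape feature. The polynomial model, its degree, and the function name `fit_polynomial` are assumptions for illustration only; the abstract does not specify which geometric models or regression methods the paper uses.

```python
def fit_polynomial(xs, ys, degree=2):
    """Least-squares fit of y = c0 + c1*x + ... + cd*x^d via normal equations."""
    n = degree + 1
    # Normal equations A c = b, with A[i][j] = sum x^(i+j) and b[i] = sum y*x^i.
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = A[row][col] / A[col][col]
            for k in range(col, n):
                A[row][k] -= factor * A[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(A[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - s) / A[i][i]
    return coeffs

# Toy rod centerline sampled from y = 1 + 0.5x - 0.25x^2 (synthetic data).
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
ys = [1.0 + 0.5 * x - 0.25 * x * x for x in xs]
print(fit_polynomial(xs, ys))
```

Under this assumption, the coefficient vector is a low-dimensional description of the rod's shape that could be compared against a target vector in a servoing loop.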

On Radiation-Based Thermal Servoing: New Models, Controls and Experiments

Dec 24, 2020

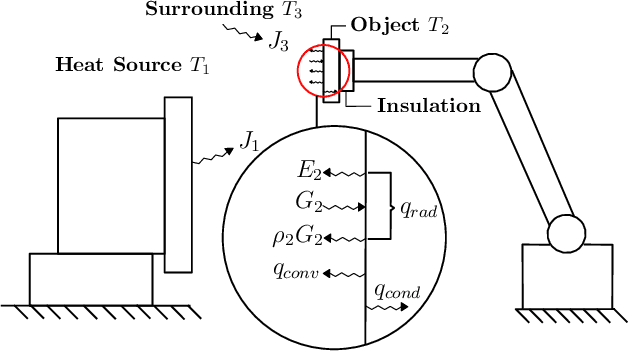

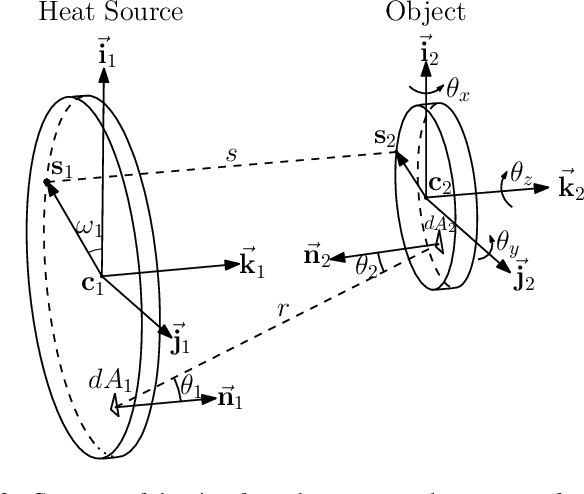

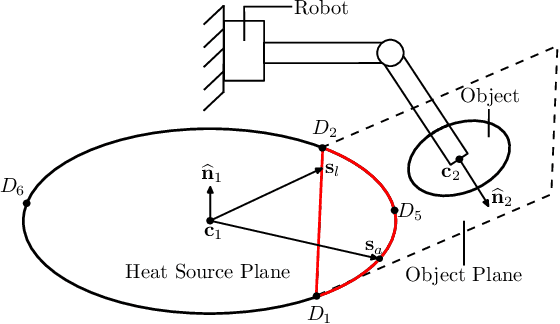

Abstract: In this paper, we introduce a new sensor-based control method that regulates (by means of robot motions) the heat transfer between a radiative source and an object of interest. This valuable sensorimotor capability is needed in many industrial, dermatology and field robot applications, and it is an essential component for creating machines with advanced thermo-motor intelligence. To this end, we derive a geometric-thermal-motor model which describes the relationship between the robot's active configuration and the produced dynamic thermal response. We then use the model to guide the design of two new thermal servoing controllers (one model-based and one adaptive), and analyze their stability with Lyapunov theory. To validate our method, we report a detailed experimental study with a robotic manipulator conducting autonomous thermal servoing tasks. To the best of the authors' knowledge, this is the first time that temperature regulation has been formulated as a motion control problem for robots.

LaSeSOM: A Latent Representation Framework for Semantic Soft Object Manipulation

Dec 10, 2020

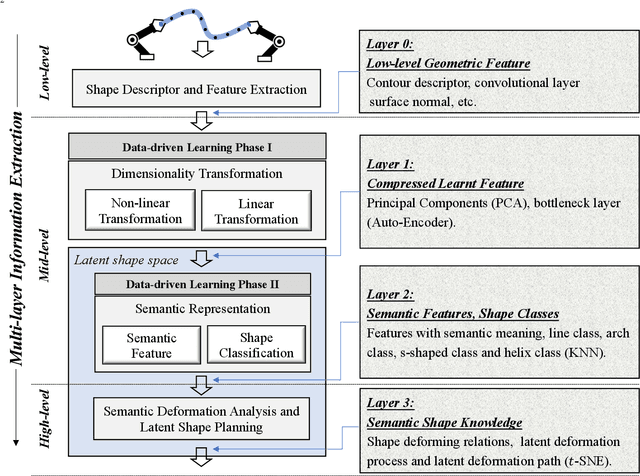

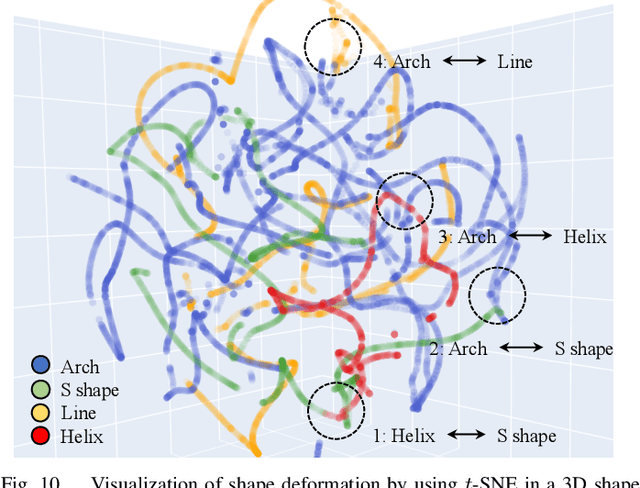

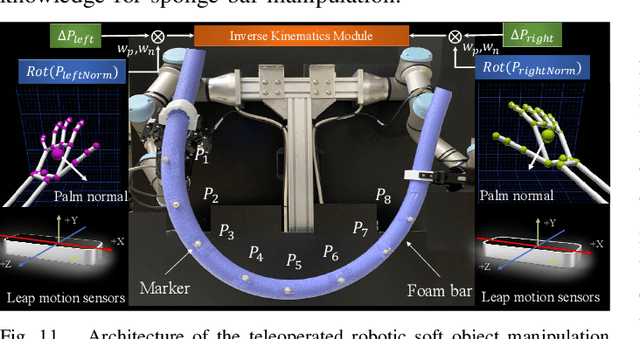

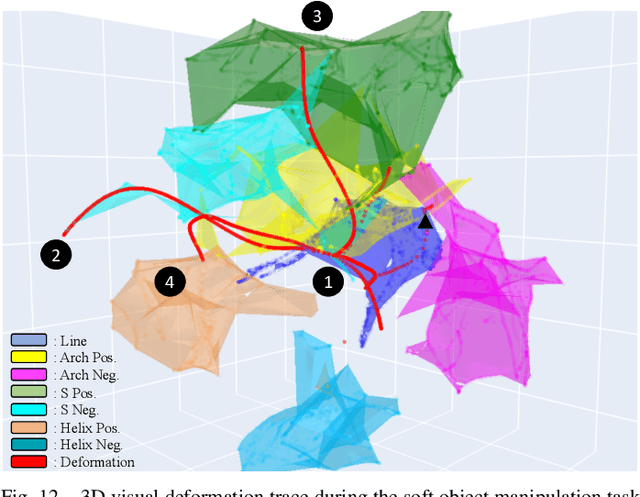

Abstract: Soft object manipulation has recently gained popularity within the robotics community due to its potential applications in many economically important areas. Although great progress has recently been achieved in these types of tasks, most state-of-the-art methods are case-specific; they can only be used to perform a single deformation task (e.g., bending), as their shape representation algorithms typically rely on "hard-coded" features. In this paper, we present LaSeSOM, a new feedback latent representation framework for semantic soft object manipulation. Our new method introduces internal latent representation layers between low-level geometric feature extraction and high-level semantic shape analysis; this allows the identification of each compressed semantic function and the formation of a valid shape classifier from different feature extraction levels. The proposed latent framework makes soft object representation more generic (independent of the object's geometry and its mechanical properties) and scalable (it can work with 1D/2D/3D tasks). Its high-level semantic layer enables (quasi) shape planning tasks with soft objects, a valuable and underexplored capability in many soft manipulation tasks. To validate this new methodology, we report a detailed experimental study with robotic manipulators.
