Daolin Ma

TouchGuide: Inference-Time Steering of Visuomotor Policies via Touch Guidance

Jan 28, 2026

Abstract:Fine-grained and contact-rich manipulation remain challenging for robots, largely due to the underutilization of tactile feedback. To address this, we introduce TouchGuide, a novel cross-policy visuo-tactile fusion paradigm that fuses modalities within a low-dimensional action space. Specifically, TouchGuide operates in two stages to guide a pre-trained diffusion or flow-matching visuomotor policy at inference time. First, the policy produces a coarse, visually-plausible action using only visual inputs during early sampling. Second, a task-specific Contact Physical Model (CPM) provides tactile guidance to steer and refine the action, ensuring it aligns with realistic physical contact conditions. Trained through contrastive learning on limited expert demonstrations, the CPM provides a tactile-informed feasibility score to steer the sampling process toward refined actions that satisfy physical contact constraints. Furthermore, to facilitate TouchGuide training with high-quality and cost-effective data, we introduce TacUMI, a data collection system. TacUMI achieves a favorable trade-off between precision and affordability; by leveraging rigid fingertips, it obtains direct tactile feedback, thereby enabling the collection of reliable tactile data. Extensive experiments on five challenging contact-rich tasks, such as shoe lacing and chip handover, show that TouchGuide consistently and significantly outperforms state-of-the-art visuo-tactile policies.

UniTac2Pose: A Unified Approach Learned in Simulation for Category-level Visuotactile In-hand Pose Estimation

Sep 19, 2025

Abstract:Accurate estimation of the in-hand pose of an object based on its CAD model is crucial in both industrial applications and everyday tasks, ranging from positioning workpieces and assembling components to seamlessly inserting devices like USB connectors. While existing methods often rely on regression, feature matching, or registration techniques, achieving high precision and generalizability to unseen CAD models remains a significant challenge. In this paper, we propose a novel three-stage framework for in-hand pose estimation. The first stage involves sampling and pre-ranking pose candidates, followed by iterative refinement of these candidates in the second stage. In the final stage, post-ranking is applied to identify the most likely pose candidates. These stages are governed by a unified energy-based diffusion model, which is trained solely on simulated data. This energy model simultaneously generates gradients to refine pose estimates and produces an energy scalar that quantifies the quality of the pose estimates. Additionally, borrowing the idea from the computer vision domain, we incorporate a render-compare architecture within the energy-based score network to significantly enhance sim-to-real performance, as demonstrated by our ablation studies. We conduct comprehensive experiments to show that our method outperforms conventional baselines based on regression, matching, and registration techniques, while also exhibiting strong intra-category generalization to previously unseen CAD models. Moreover, our approach integrates tactile object pose estimation, pose tracking, and uncertainty estimation into a unified framework, enabling robust performance across a variety of real-world conditions.
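The three-stage structure described in this abstract (sample and pre-rank, refine, post-rank) can be illustrated with a toy example. The sketch below is not the paper's model: it assumes a one-dimensional "pose" and a hand-written quadratic energy in place of the learned energy-based diffusion model, and every name in it (`energy`, `energy_grad`, `estimate_pose`, and all parameters) is hypothetical. It only shows how a single energy function can supply both the gradients used for refinement and the scalar used for ranking.

```python
import random


def energy(pose, target):
    """Toy stand-in for a learned energy: low when the pose matches the target."""
    return (pose - target) ** 2


def energy_grad(pose, target):
    """Gradient of the toy energy with respect to the pose."""
    return 2.0 * (pose - target)


def estimate_pose(target, n_samples=64, keep=8, steps=50, lr=0.1, seed=0):
    rng = random.Random(seed)

    # Stage 1: sample pose candidates and pre-rank them by energy.
    candidates = [rng.uniform(-3.0, 3.0) for _ in range(n_samples)]
    candidates.sort(key=lambda p: energy(p, target))
    candidates = candidates[:keep]

    # Stage 2: iteratively refine the kept candidates along the energy gradient.
    for _ in range(steps):
        candidates = [p - lr * energy_grad(p, target) for p in candidates]

    # Stage 3: post-rank with the same energy and return the best candidate.
    best = min(candidates, key=lambda p: energy(p, target))
    return best, energy(best, target)


if __name__ == "__main__":
    pose, score = estimate_pose(target=1.0)
    print(pose, score)
```

In the toy setting the target is known to the energy function; in the setting the abstract describes, the energy would instead be conditioned on tactile observations and the CAD model.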

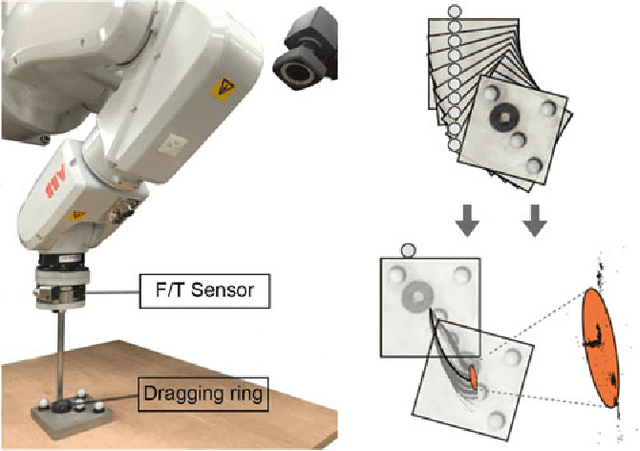

Extrinsic Contact Sensing with Relative-Motion Tracking from Distributed Tactile Measurements

Mar 24, 2021

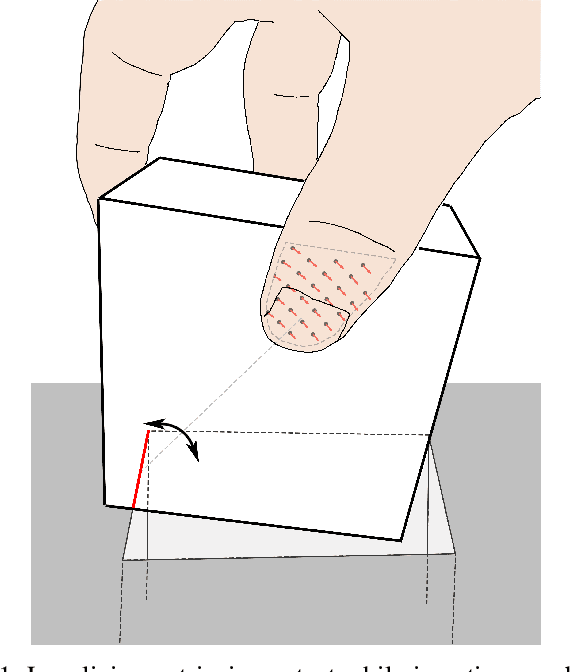

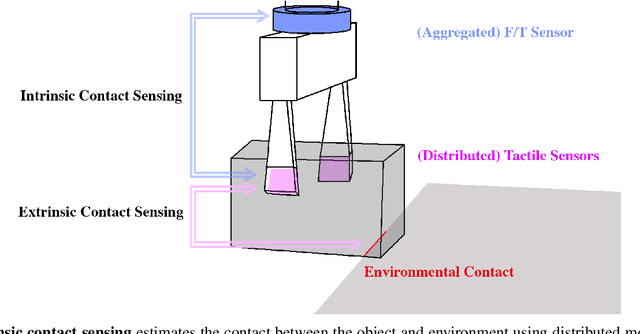

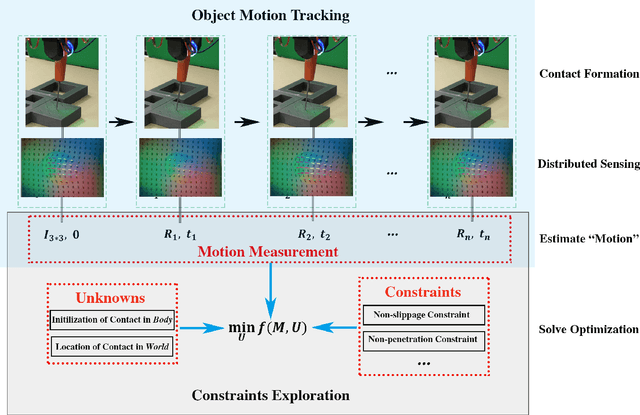

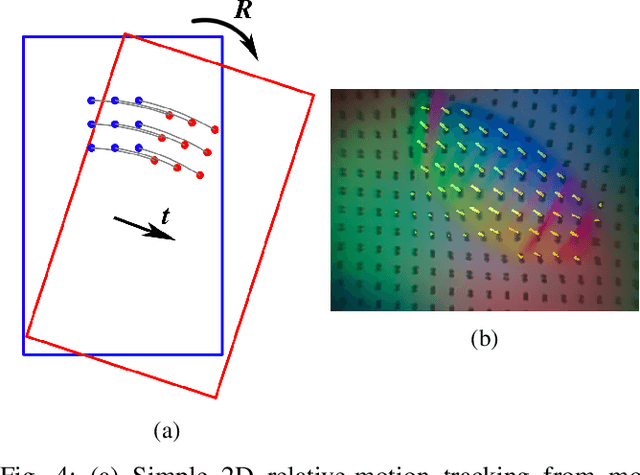

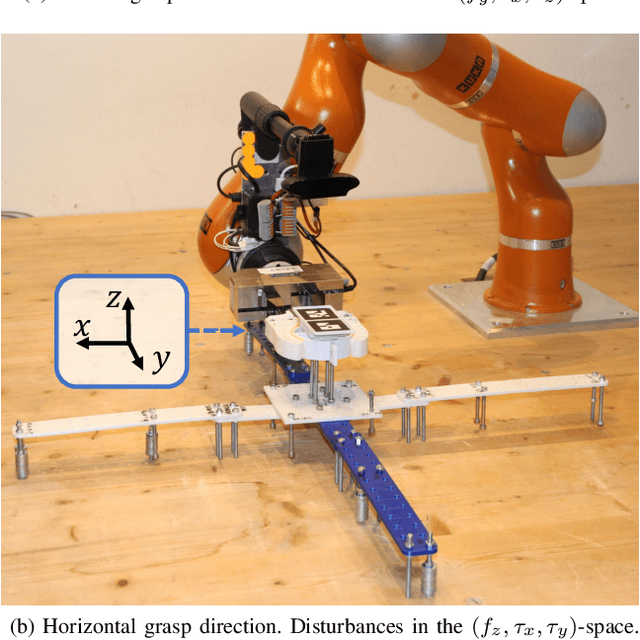

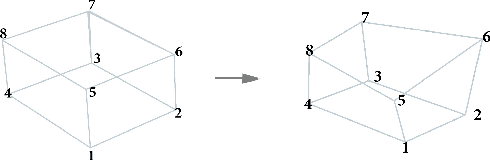

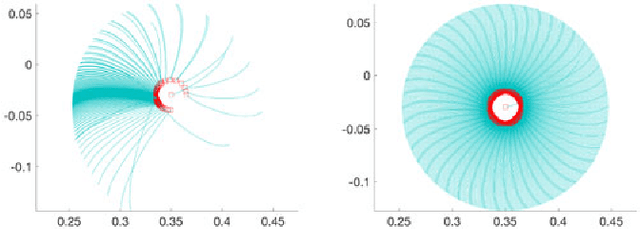

Abstract:This paper addresses the localization of contacts of an unknown grasped rigid object with its environment, i.e., extrinsic to the robot. We explore the key role that distributed tactile sensing plays in localizing contacts external to the robot, in contrast to the role that aggregated force/torque measurements play in localizing contacts on the robot. When in contact with the environment, an object will move in accordance with the kinematic and possibly frictional constraints imposed by that contact. Small motions of the object, which are observable with tactile sensors, indirectly encode those constraints and the geometry that defines them. We formulate the extrinsic contact sensing problem as a constraint-based estimation. The estimation is subject to the kinematic constraints imposed by the tactile measurements of object motion, as well as the kinematic (e.g., non-penetration) and possibly frictional (e.g., sticking) constraints imposed by rigid-body mechanics. We validate the approach in simulation and with real experiments on the case studies of fixed point and line contacts. This paper discusses the theoretical basis for the value of distributed tactile sensing in contrast to aggregated force/torque measurements. It also provides an estimation framework for localizing environmental contacts with potential impact in contact-rich manipulation scenarios such as assembling or packing.

Non-Planar Frictional Surface Contacts: Modeling and Application to Grasping

Sep 15, 2019

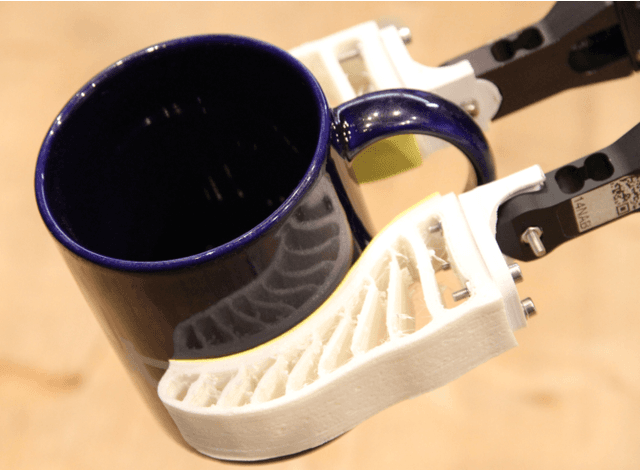

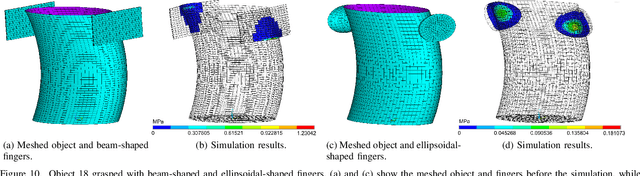

Abstract:Contact modeling is essential for robotic grasping and manipulation. The relation between friction and relative body motion is fundamental for controlled pushing. An accurate friction model is indispensable for grasp analysis as the stability heavily relies on friction. To increase the grasp stability, soft fingers are widely deployed for manipulation tasks as they adapt to the object geometry, where the deformability results in a curved contact area. The friction of such curved surfaces is in six dimensions and its model is yet not well defined. To address this issue, we derive the friction computation for curved surfaces by combining concepts of differential geometry and Coulomb friction law. We further generalize two classical limit surface models from three to six dimensions, which describe the friction-motion constraints for a single contact. To analyze multi contacts for grasping, we build the grasp wrench space by merging the normal wrench and the fitted limit surfaces of each contact. The performance of the two limit surface models is evaluated with six parametric surfaces and 2473 meshed contacts obtained from simulations using the finite element method. Results indicate that the proposed models yield 1.81% fitting error of the 6D friction wrench samples. We demonstrate the applicability of the proposed models to predict grasp success for a parallel-jaw gripper. Robotic experiments suggest that a prediction accuracy of up to 92.6% can be achieved with the presented frictional contact modeling.

Dense Tactile Force Distribution Estimation using GelSlim and inverse FEM

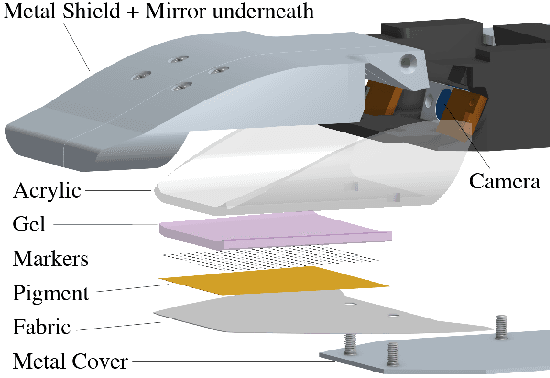

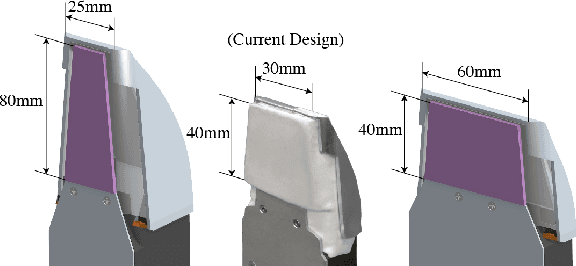

Apr 08, 2019

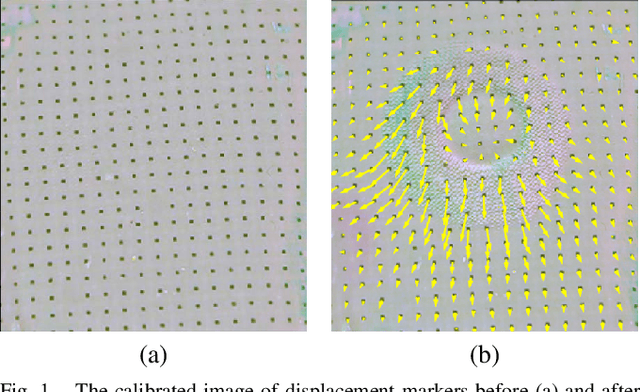

Abstract:In this paper, we present a new version of tactile sensor GelSlim 2.0 with the capability to estimate the contact force distribution in real time. The sensor is vision-based and uses an array of markers to track deformations on a gel pad due to contact. A new hardware design makes the sensor more rugged, parametrically adjustable and improves illumination. Leveraging the sensor's increased functionality, we propose to use inverse Finite Element Method (iFEM), a numerical method to reconstruct the contact force distribution based on marker displacements. The sensor is able to provide force distribution of contact with high spatial density. Experiments and comparison with ground truth show that the reconstructed force distribution is physically reasonable with good accuracy.

Maintaining Grasps within Slipping Bound by Monitoring Incipient Slip

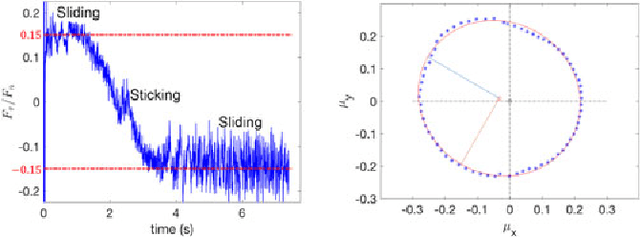

Oct 31, 2018

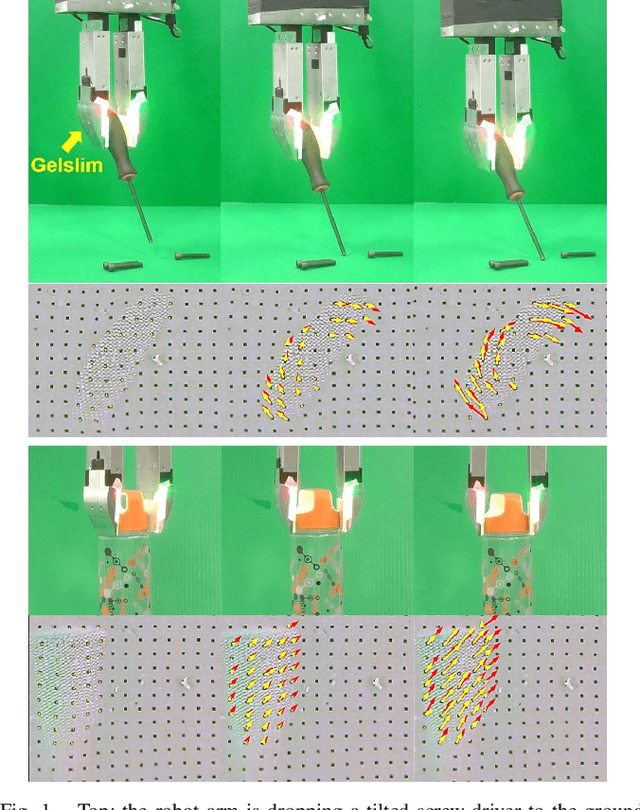

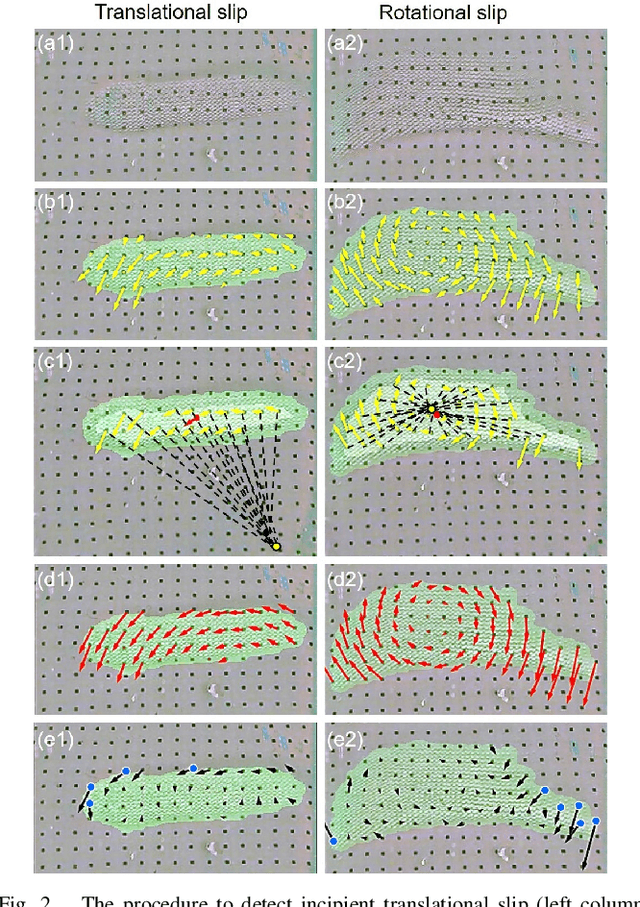

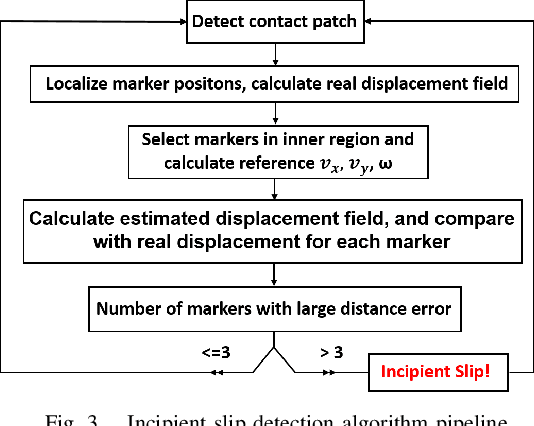

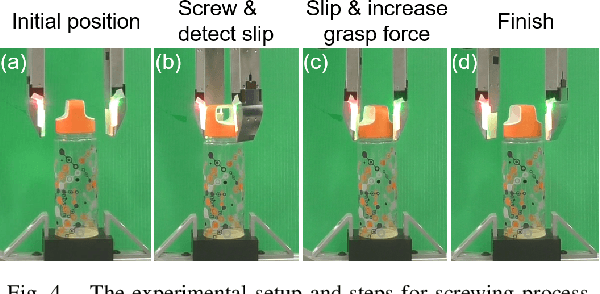

Abstract:In this paper, we propose an approach to detect incipient slip, i.e. predict slip, by using a high-resolution vision-based tactile sensor, GelSlim. The sensor dynamically captures the tactile imprints of the contact object and their changes with a soft gel pad. The method assumes the object is mostly rigid and treats the motion of object's imprint on sensor surface as a 2D rigid-body motion. We use the deviation of the true motion field from that of a 2D planar rigid transformation as a measure of slip. The output is a dense slip field which we use to detect when small areas of the contact patch start to slip (incipient slip). The method can detect both translational and rotational incipient slip without any prior knowledge of the object at 24 Hz. We test the method on 10 objects 240 times and achieve 86.25% detection accuracy. We further show how the slip feedback can be used to monitor the gripping force to avoid slip with a closed-loop bottle-cap screwing and unscrewing experiment with incipient slip detection feedback. The method was demonstrated to be useful for the robot to apply proper gripping force and stop screwing at the right point before breaking objects. The method can be applied to many manipulation tasks in both structured and unstructured environments.
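The slip measure described in this abstract, the deviation of the observed motion field from a 2D rigid transformation, can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the authors' implementation: it assumes the motion field is given as matched marker positions before and after a time step, fits the rigid motion by least squares, and flags markers whose residual exceeds a hypothetical threshold. The function names and the threshold value are invented for illustration.

```python
import math


def fit_rigid_2d(before, after):
    """Least-squares 2D rigid transform (rotation theta, translation tx, ty)
    mapping the `before` points onto the `after` points."""
    n = len(before)
    cbx = sum(x for x, _ in before) / n
    cby = sum(y for _, y in before) / n
    cax = sum(x for x, _ in after) / n
    cay = sum(y for _, y in after) / n
    s_dot = 0.0
    s_cross = 0.0
    for (px, py), (qx, qy) in zip(before, after):
        ax, ay = px - cbx, py - cby
        bx, by = qx - cax, qy - cay
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    tx = cax - (c * cbx - s * cby)
    ty = cay - (s * cbx + c * cby)
    return theta, tx, ty


def slip_field(before, after):
    """Per-marker deviation of the observed motion from the fitted rigid motion."""
    theta, tx, ty = fit_rigid_2d(before, after)
    c, s = math.cos(theta), math.sin(theta)
    residuals = []
    for (px, py), (qx, qy) in zip(before, after):
        pred_x = c * px - s * py + tx
        pred_y = s * px + c * py + ty
        residuals.append(math.hypot(qx - pred_x, qy - pred_y))
    return residuals


def detect_incipient_slip(before, after, threshold=0.2):
    """Flag markers whose deviation exceeds a (hypothetical) threshold."""
    return [r > threshold for r in slip_field(before, after)]
```

When every marker moves with the same rigid motion the residuals are near zero; a small region that moves differently from the rest of the contact patch shows up as a cluster of large residuals, which corresponds to the notion of incipient slip in the abstract.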

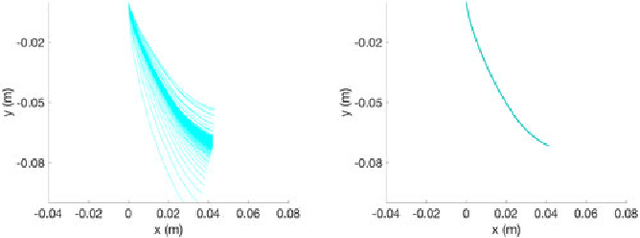

Friction Variability in Planar Pushing Data: Anisotropic Friction and Data-collection Bias

Jun 22, 2018

Abstract:Friction plays a key role in manipulating objects. Most of what we do with our hands, and most of what robots do with their grippers, is based on the ability to control frictional forces. This paper aims to better understand the variability and predictability of planar friction. In particular, we focus on the analysis of a recent dataset on planar pushing by Yu et al. [1] devised to create a data-driven footprint of planar friction. We show in this paper how we can explain a significant fraction of the observed unconventional phenomena, e.g., stochasticity and multi-modality, by combining the effects of material non-homogeneity, anisotropy of friction and biases due to data collection dynamics, hinting that the variability is explainable but inevitable in practice. We introduce an anisotropic friction model and conduct simulation experiments comparing with more standard isotropic friction models. The anisotropic friction between object and supporting surface results in convergence of initial condition during the automated data collection. Numerical results confirm that the anisotropic friction model explains the bias in the dataset and the apparent stochasticity in the outcome of a push. The fact that the data collection process itself can originate biases in the collected datasets, resulting in deterioration of trained models, calls attention to the data collection dynamics.

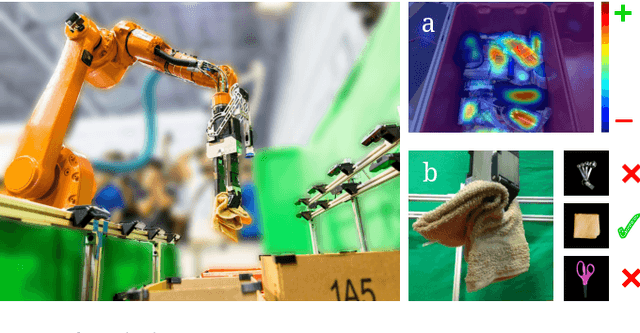

Robotic Pick-and-Place of Novel Objects in Clutter with Multi-Affordance Grasping and Cross-Domain Image Matching

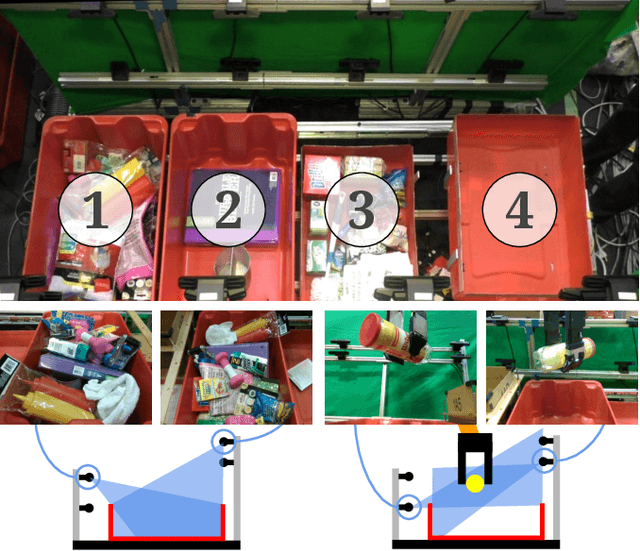

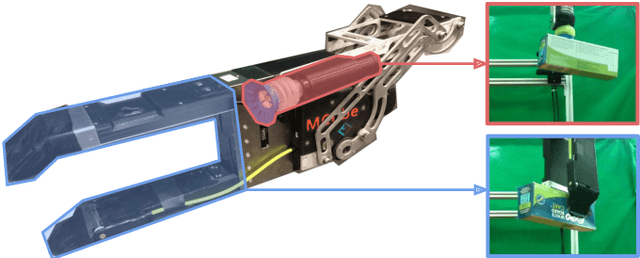

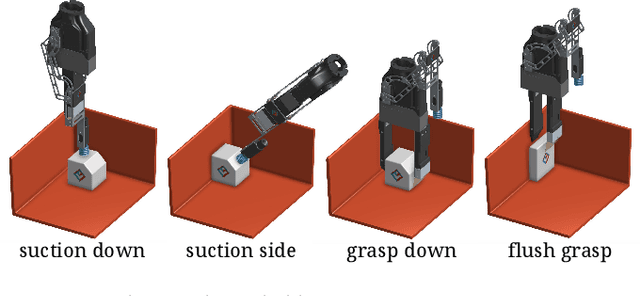

Apr 01, 2018

Abstract:This paper presents a robotic pick-and-place system that is capable of grasping and recognizing both known and novel objects in cluttered environments. The key new feature of the system is that it handles a wide range of object categories without needing any task-specific training data for novel objects. To achieve this, it first uses a category-agnostic affordance prediction algorithm to select and execute among four different grasping primitive behaviors. It then recognizes picked objects with a cross-domain image classification framework that matches observed images to product images. Since product images are readily available for a wide range of objects (e.g., from the web), the system works out-of-the-box for novel objects without requiring any additional training data. Exhaustive experimental results demonstrate that our multi-affordance grasping achieves high success rates for a wide variety of objects in clutter, and our recognition algorithm achieves high accuracy for both known and novel grasped objects. The approach was part of the MIT-Princeton Team system that took 1st place in the stowing task at the 2017 Amazon Robotics Challenge. All code, datasets, and pre-trained models are available online at http://arc.cs.princeton.edu
