Orion Taylor

Object manipulation through contact configuration regulation: multiple and intermittent contacts

Oct 01, 2023

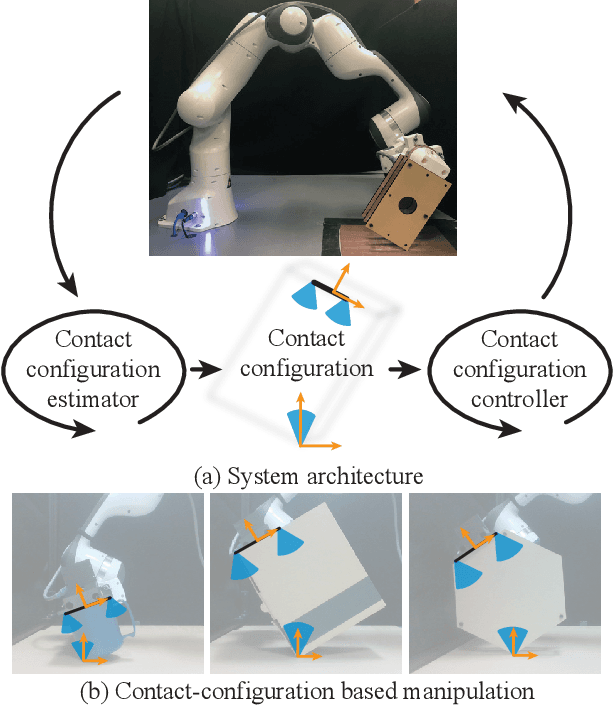

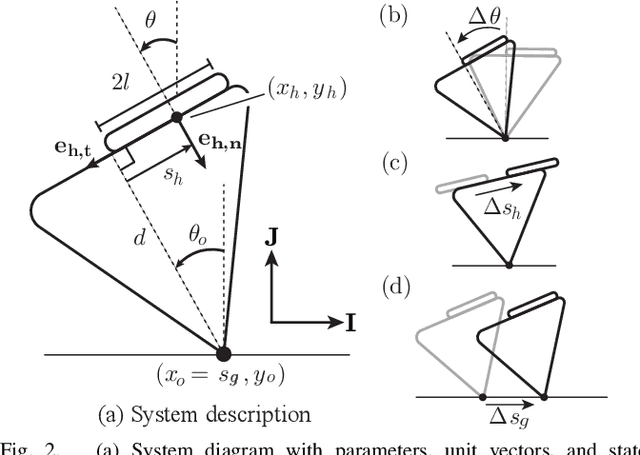

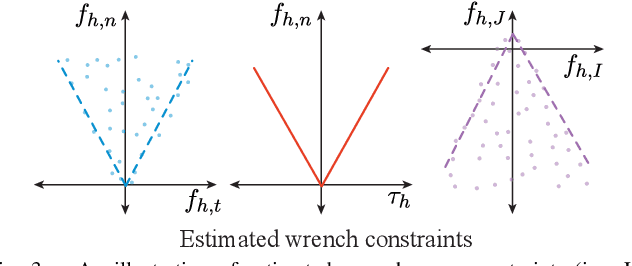

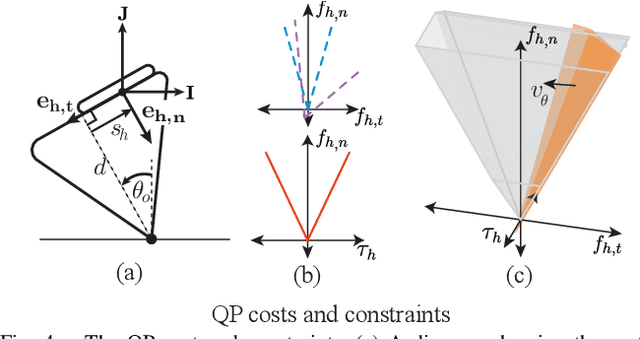

Abstract: In this work, we build on our method for manipulating unknown objects via contact configuration regulation: the estimation and control of the location, geometry, and mode of all contacts between the robot, object, and environment. We further develop our estimator and controller to enable manipulation through more complex contact interactions, including intermittent contact between the robot and object, and multiple contacts between the object and environment. In addition, we support a larger set of contact geometries at each interface. This is accomplished through a factor-graph-based estimation framework that reasons about the complementary kinematic and wrench constraints of contact to predict the current contact configuration. We are aided by the incorporation of a limited amount of visual feedback, which, when combined with the available F/T sensing and robot proprioception, allows us to differentiate contact modes that were previously indistinguishable. We implement this revamped framework on our manipulation platform and demonstrate that it allows the robot to perform a wider set of manipulation tasks. These include using a wall as a support to re-orient an object and regulating the contact geometry between the object and the ground. Finally, we conduct ablation studies to understand the contributions from visual and tactile feedback in our manipulation framework. Our code can be found at: https://github.com/mcubelab/pbal.

Manipulation of unknown objects via contact configuration regulation

Mar 02, 2022

Abstract: We present an approach to robotic manipulation of unknown objects through regulation of the object's contact configuration: the location, geometry, and mode of all contacts between the object, robot, and environment. A contact configuration constrains the forces and motions that can be applied to the object; however, synthesizing these constraints generally requires knowledge of the object's pose and geometry. We develop an object-agnostic approach for estimation and control that circumvents this need. Our framework directly estimates a set of wrench and motion constraints which it uses to regulate the contact configuration. We use this to reactively manipulate unknown planar objects in the gravity plane. A video describing our work can be found on our project page: http://mcube.mit.edu/research/contactConfig.html.

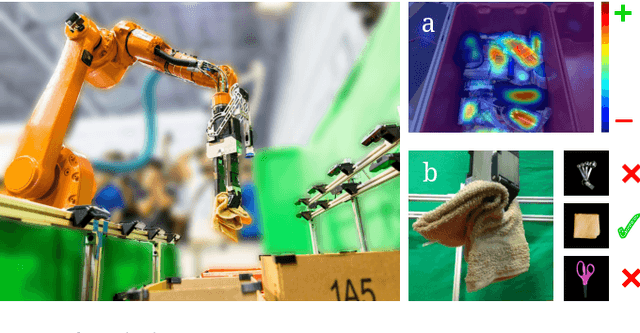

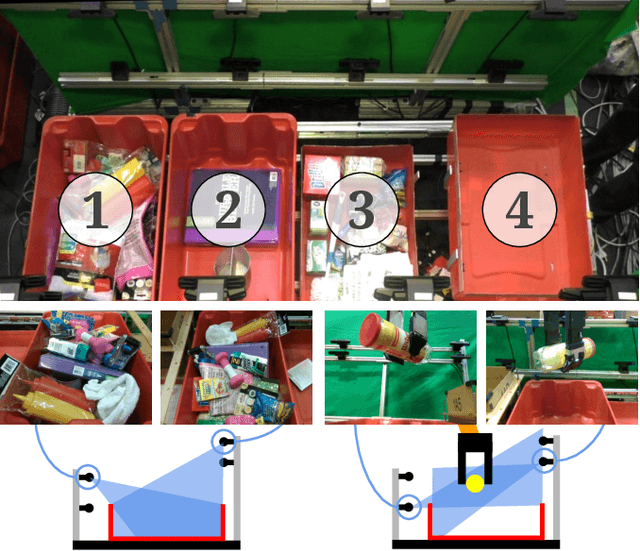

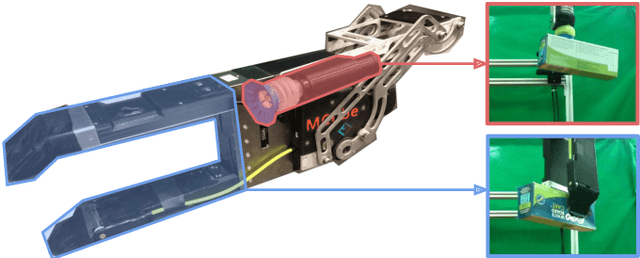

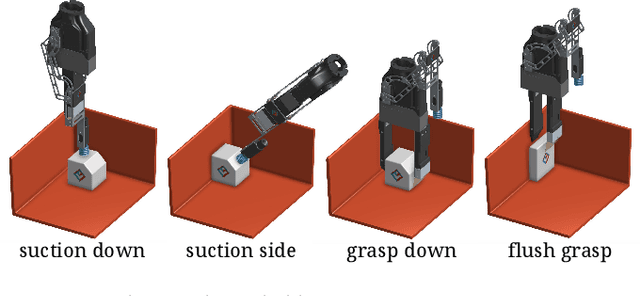

Robotic Pick-and-Place of Novel Objects in Clutter with Multi-Affordance Grasping and Cross-Domain Image Matching

Apr 01, 2018

Abstract: This paper presents a robotic pick-and-place system that is capable of grasping and recognizing both known and novel objects in cluttered environments. The key new feature of the system is that it handles a wide range of object categories without needing any task-specific training data for novel objects. To achieve this, it first uses a category-agnostic affordance prediction algorithm to select and execute among four different grasping primitive behaviors. It then recognizes picked objects with a cross-domain image classification framework that matches observed images to product images. Since product images are readily available for a wide range of objects (e.g., from the web), the system works out-of-the-box for novel objects without requiring any additional training data. Exhaustive experimental results demonstrate that our multi-affordance grasping achieves high success rates for a wide variety of objects in clutter, and our recognition algorithm achieves high accuracy for both known and novel grasped objects. The approach was part of the MIT-Princeton Team system that took 1st place in the stowing task at the 2017 Amazon Robotics Challenge. All code, datasets, and pre-trained models are available online at http://arc.cs.princeton.edu.

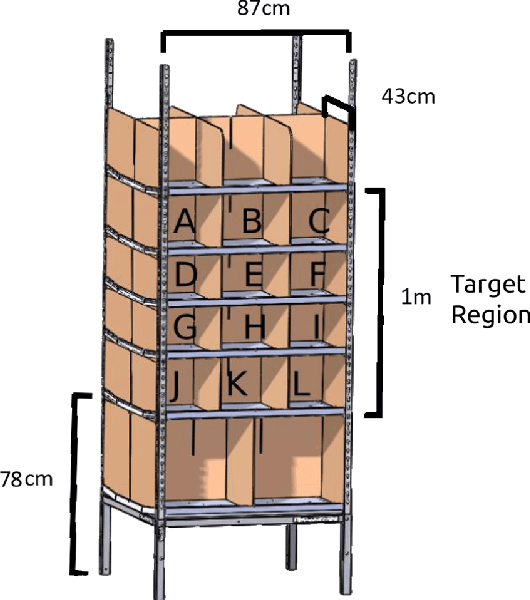

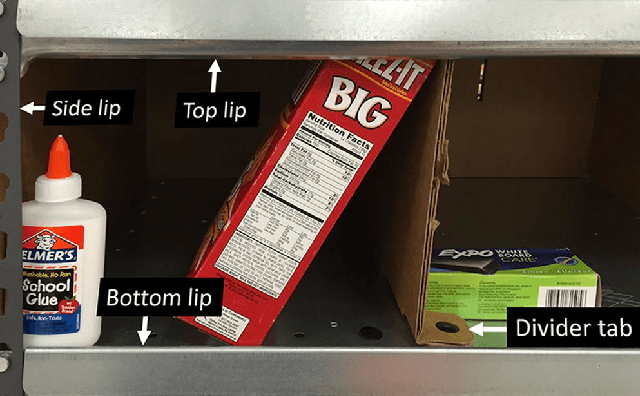

A Summary of Team MIT's Approach to the Amazon Picking Challenge 2015

Apr 13, 2016

Abstract: The Amazon Picking Challenge (APC), held alongside the International Conference on Robotics and Automation in May 2015 in Seattle, challenged roboticists from academia and industry to demonstrate fully automated solutions to the problem of picking objects from shelves in a warehouse fulfillment scenario. Packing density, object variability, speed, and reliability are the main complexities of the task. The picking challenge serves both as a motivation and an instrument to focus research efforts on a specific manipulation problem. In this document, we describe Team MIT's approach to the competition, including design considerations, contributions, and performance, and we compile the lessons learned. We also describe what we think are the main remaining challenges.
