Christoffer Heckman

Department of Computer Science, University of Colorado Boulder, USA

RMap: Millimeter-Wave Radar Mapping Through Volumetric Upsampling

Oct 19, 2023

Abstract: Millimeter-wave radar is being adopted as a viable alternative to lidar and cameras in adverse, visually degraded conditions, such as the presence of fog and dust. However, this sensor modality suffers from severe sparsity and noise under nominal conditions, which makes it difficult to use in precise applications such as mapping. This work presents a novel solution to generate accurate 3D maps from sparse radar point clouds. RMap uses a custom generative transformer architecture, UpPoinTr, which upsamples, denoises, and fills the incomplete radar maps to resemble lidar maps. We test this method on the ColoRadar dataset to demonstrate its efficacy.

A Population-Level Analysis of Neural Dynamics in Robust Legged Robots

Jun 27, 2023

Abstract: Recurrent neural network-based reinforcement learning systems are capable of complex motor control tasks such as locomotion and manipulation; however, many of their underlying mechanisms remain difficult to interpret. Our aim is to leverage computational neuroscience methodologies to understand the population-level activity of robust robot locomotion controllers. Our investigation begins by analyzing topological structure, discovering that fragile controllers have a higher number of fixed points with unstable directions, resulting in poorer balance when instructed to stand in place. Next, we analyze the forced response of the system by applying targeted neural perturbations along directions of dominant population-level activity. We find evidence that recurrent state dynamics are structured and low-dimensional during walking, which aligns with primate studies. Additionally, when recurrent states are perturbed to zero, fragile agents continue to walk, which is indicative of a stronger reliance on sensory input and weaker recurrence.

Tell Me Where to Go: A Composable Framework for Context-Aware Embodied Robot Navigation

Jun 19, 2023

Abstract: Humans have the remarkable ability to navigate through unfamiliar environments by relying solely on prior knowledge and descriptions of the environment. For robots to perform the same type of navigation, they need to be able to associate natural language descriptions with the corresponding physical environment using a limited amount of prior knowledge. Recently, Large Language Models (LLMs) have been able to reason over billions of parameters and utilize them in multi-modal chat-based natural language responses. However, LLMs lack real-world awareness and their outputs are not always predictable. In this work, we develop NavCon, a low-bandwidth framework that solves this lack of real-world generalization by creating an intermediate layer between an LLM and a robot navigation framework in the form of Python code. Our intermediate layer shoehorns the vast prior knowledge inherent in an LLM into a series of input and output API instructions that a mobile robot can understand. We evaluate our method across four different environments and command classes on a mobile robot and highlight NavCon's ability to interpret contextual commands.

Kalman Filter Auto-tuning through Enforcing Chi-Squared Normalized Error Distributions with Bayesian Optimization

Jun 12, 2023

Abstract: The nonlinear and stochastic relationship between noise covariance parameter values and state estimator performance makes optimal filter tuning a very challenging problem. Popular optimization-based tuning approaches can easily get trapped in local minima, leading to poor noise parameter identification and suboptimal state estimation. Recently, black box techniques based on Bayesian optimization with Gaussian processes (GPBO) have been shown to overcome many of these issues, using normalized estimation error squared (NEES) and normalized innovation squared (NIS) statistics to derive cost functions for Kalman filter auto-tuning. While reliable noise parameter estimates are obtained in many cases, GPBO solutions obtained with these conventional cost functions do not always converge to optimal filter noise parameters and lack robustness to parameter ambiguities in time-discretized system models. This paper addresses these issues by making two main contributions. First, we show that NIS and NEES errors are only chi-squared distributed for tuned estimators. As a result, chi-square tests are not sufficient to ensure that an estimator has been correctly tuned. We use this to extend the familiar consistency tests for NIS and NEES to penalize distributions that are not chi-squared. Second, this cost measure is applied within a Student-t process Bayesian optimization (TPBO) to achieve robust estimator performance for time-discretized state space models. The robustness, accuracy, and reliability of our approach are illustrated on classical state estimation problems.
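The NIS statistic mentioned in this abstract can be illustrated with a minimal sketch. It is not the paper's method: it only runs a scalar Kalman filter on a simulated random walk and reports the time-averaged NIS, which should sit near 1 (the mean of a chi-squared variable with one degree of freedom) when the filter's noise parameters match the simulation. The function name `mean_nis`, the random-walk model, and all numeric values are assumptions chosen for illustration.

```python
import random


def mean_nis(q_true, r_true, q_filter, r_filter, steps=2000, seed=0):
    """Time-averaged NIS of a scalar Kalman filter on a simulated random walk.

    Hypothetical illustration: the state follows x[k+1] = x[k] + w with
    w ~ N(0, q_true), and is measured as z = x + v with v ~ N(0, r_true).
    The filter assumes noise variances q_filter and r_filter.
    """
    rng = random.Random(seed)
    x_true = 0.0
    x_est, p_est = 0.0, 0.0  # the filter starts with exact knowledge of the state
    total = 0.0
    for _ in range(steps):
        # Simulate the true system and a noisy measurement.
        x_true += rng.gauss(0.0, q_true ** 0.5)
        z = x_true + rng.gauss(0.0, r_true ** 0.5)
        # Predict: the state estimate is unchanged, the covariance grows by q.
        p_pred = p_est + q_filter
        # Innovation and the innovation variance the filter believes in.
        nu = z - x_est
        s = p_pred + r_filter
        total += nu * nu / s  # scalar NIS for this step
        # Measurement update.
        gain = p_pred / s
        x_est += gain * nu
        p_est = (1.0 - gain) * p_pred
    return total / steps


if __name__ == "__main__":
    print("matched noise parameters:", mean_nis(0.01, 1.0, 0.01, 1.0))
    print("measurement noise underestimated:", mean_nis(0.01, 1.0, 0.01, 0.01))
```

An average near 1 is the kind of conventional consistency check the abstract refers to; the abstract's argument is that passing such a check is not by itself sufficient evidence of a correctly tuned estimator.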

Looking Around Corners: Generative Methods in Terrain Extension

Jun 12, 2023

Abstract: In this paper, we provide an early look at our model for generating terrain that is occluded in the initial lidar scan or out of range of the sensor. As a proof of concept, we show that a transformer-based framework can be overfit to predict the geometries of unobserved roads around intersections or corners. We discuss our method for generating training data, as well as a unique loss function for training our terrain extension network. The framework is tested on data from the SemanticKitti [1] dataset. Unlabeled point clouds measured from an onboard lidar are used as input data to generate predicted road points that are out of range or occluded in the original point cloud scan. The input point cloud and predicted terrain are then concatenated to form the terrain-extended point cloud. We show promising qualitative results from these methods, as well as a discussion of potential quantitative metrics to evaluate the overall success of our framework. Finally, we discuss improvements that can be made to the framework for successful generalization to test sets.

Towards Decentralized Heterogeneous Multi-Robot SLAM and Target Tracking

Jun 07, 2023

Abstract: In many robotics problems, there is a significant gain in collaborative information sharing between multiple robots, for exploration, search and rescue, tracking multiple targets, or mapping large environments. One of the key implicit assumptions when solving cooperative multi-robot problems is that all robots use the same (homogeneous) underlying algorithm. However, in practice, we want to allow collaboration between robots that possess different capabilities and therefore must rely on heterogeneous algorithms. We present a system architecture and the supporting theory to enable collaboration in a decentralized network of robots, where each robot relies on different estimation algorithms. To develop our approach, we focus on multi-robot simultaneous localization and mapping (SLAM) with multi-target tracking. Our theoretical framework builds on our idea of exploiting the conditional independence structure inherent to many robotics applications to separate each robot's local inference (estimation) tasks and fuse only relevant parts of their non-equal, but overlapping probability density functions (pdfs). We present a new decentralized graph-based approach to the multi-robot SLAM and tracking problem. We leverage factor graphs to split the problem into parts for efficient data sharing between robots in the network while enabling robots to use different local sparse landmark/dense/metric-semantic SLAM algorithms.

A New Wave in Robotics: Survey on Recent mmWave Radar Applications in Robotics

May 02, 2023

Abstract: We survey the current state of millimeter-wave (mmWave) radar applications in robotics with a focus on unique capabilities, and discuss future opportunities based on the state of the art. Frequency Modulated Continuous Wave (FMCW) mmWave radars operating in the 76-81 GHz range are an appealing alternative to lidars, cameras, and other sensors operating in the near visual spectrum. Radar has been made more widely available in new packaging classes that are more convenient for robotics, and its longer wavelengths have the ability to bypass visual clutter such as fog, dust, and smoke. We begin by covering radar principles as they relate to robotics. We then review the relevant new research across a broad spectrum of robotics applications beginning with motion estimation, localization, and mapping. We then cover object detection and classification, and then close with an analysis of current datasets and calibration techniques that provide entry points into radar research.
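As a rough numerical illustration of the "longer wavelengths" point in this abstract, the sketch below converts the band edges quoted there into wavelengths and, under the assumption that a chirp sweeps 4 GHz of bandwidth, evaluates the standard FMCW range-resolution relation c / (2B). The 4 GHz sweep and the function names are illustrative assumptions, not values taken from the survey.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact by definition of the metre


def wavelength_mm(frequency_hz):
    """Free-space wavelength in millimetres for a given frequency."""
    return SPEED_OF_LIGHT_M_S / frequency_hz * 1e3


def range_resolution_m(sweep_bandwidth_hz):
    """Standard FMCW range resolution, c / (2 * B), in metres."""
    return SPEED_OF_LIGHT_M_S / (2.0 * sweep_bandwidth_hz)


if __name__ == "__main__":
    # Band edges as quoted in the abstract.
    for f_ghz in (76.0, 81.0):
        print(f"{f_ghz:.0f} GHz -> {wavelength_mm(f_ghz * 1e9):.2f} mm")
    # Assumed 4 GHz chirp bandwidth, for illustration only.
    print(f"range resolution: {range_resolution_m(4e9) * 100:.2f} cm")
```

Wavelengths of a few millimetres are thousands of times longer than those of near-infrared lidar or visible light, which is consistent with the abstract's remark about passing through fog, dust, and smoke.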

BO-ICP: Initialization of Iterative Closest Point Based on Bayesian Optimization

Apr 25, 2023

Abstract: Typical algorithms for point cloud registration such as Iterative Closest Point (ICP) require a favorable initial transform estimate between two point clouds in order to perform a successful registration. State-of-the-art methods for choosing this starting condition rely on stochastic sampling or global optimization techniques such as branch and bound. In this work, we present a new method based on Bayesian optimization for finding the critical initial ICP transform. We provide three different configurations for our method, which highlight the versatility of the algorithm to both find rapid results and refine them in situations where more runtime is available, such as offline map building. Experiments are run on popular data sets and we show that our approach outperforms state-of-the-art methods when given similar computation time. Furthermore, it is compatible with other improvements to ICP, as it focuses solely on the selection of an initial transform, a starting point for all ICP-based methods.

Flexible Supervised Autonomy for Exploration in Subterranean Environments

Jan 02, 2023

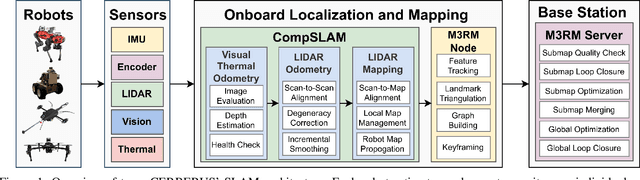

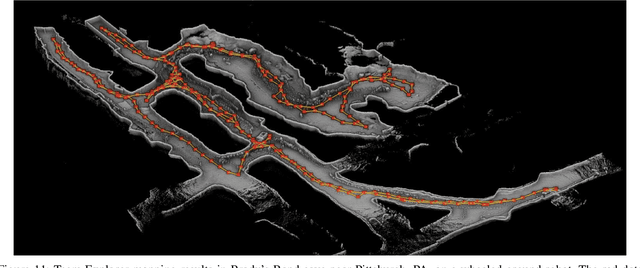

Abstract: While the capabilities of autonomous systems have been steadily improving in recent years, these systems still struggle to rapidly explore previously unknown environments without the aid of GPS-assisted navigation. The DARPA Subterranean (SubT) Challenge aimed to fast-track the development of autonomous exploration systems by evaluating their performance in real-world underground search-and-rescue scenarios. Subterranean environments present a plethora of challenges for robotic systems, such as limited communications, complex topology, visually degraded sensing, and harsh terrain. The presented solution enables long-term autonomy with minimal human supervision by combining a powerful and independent single-agent autonomy stack with higher-level mission management operating over a flexible mesh network. The autonomy suite deployed on quadruped and wheeled robots was fully independent, freeing the human supervisor to loosely supervise the mission and make high-impact strategic decisions. We also discuss lessons learned from fielding our system at the SubT Final Event, relating to vehicle versatility, system adaptability, and re-configurable communications.

Present and Future of SLAM in Extreme Underground Environments

Aug 02, 2022

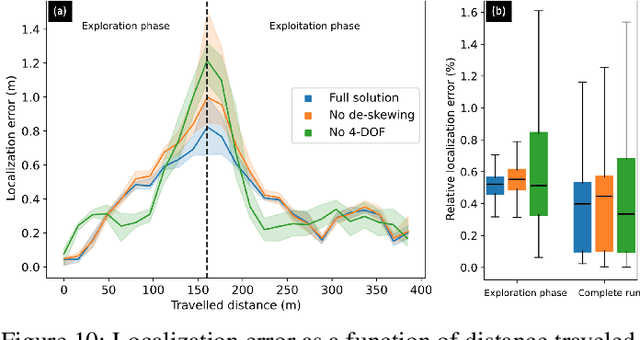

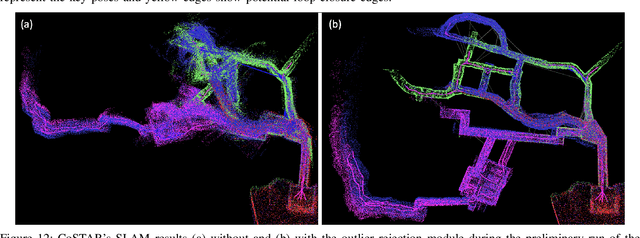

Abstract: This paper reports on the state of the art in underground SLAM by discussing different SLAM strategies and results across six teams that participated in the three-year-long SubT competition. In particular, the paper has four main goals. First, we review the algorithms, architectures, and systems adopted by the teams; particular emphasis is put on lidar-centric SLAM solutions (the go-to approach for virtually all teams in the competition), heterogeneous multi-robot operation (including both aerial and ground robots), and real-world underground operation (from the presence of obscurants to the need to handle tight computational constraints). We do not shy away from discussing the dirty details behind the different SubT SLAM systems, which are often omitted from technical papers. Second, we discuss the maturity of the field by highlighting what is possible with the current SLAM systems and what we believe is within reach with some good systems engineering. Third, we outline what we believe are fundamental open problems that are likely to require further research to break through. Finally, we provide a list of open-source SLAM implementations and datasets that have been produced during the SubT challenge and related efforts, and that constitute a useful resource for researchers and practitioners.
