"Time": models, code, and papers

ALF: Autoencoder-based Low-rank Filter-sharing for Efficient Convolutional Neural Networks

Jul 27, 2020

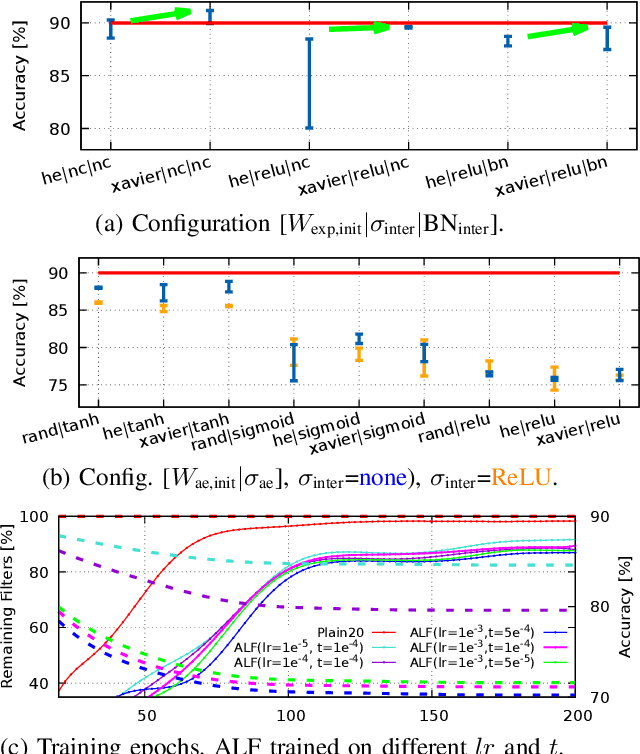

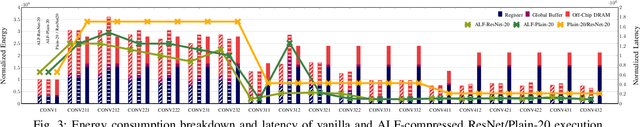

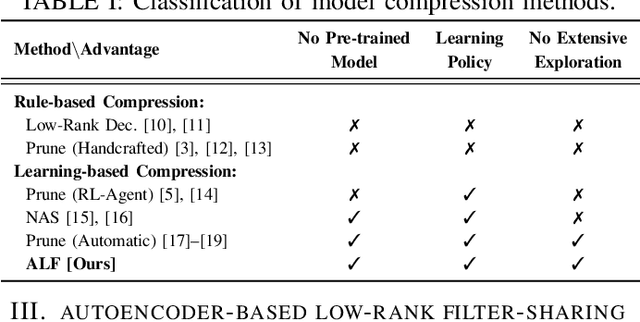

Closing the gap between the hardware requirements of state-of-the-art convolutional neural networks and the limited resources of embedded applications is the next big challenge in deep learning research. The computational complexity and memory footprint of such networks are typically daunting for deployment in resource-constrained environments. Model compression techniques, such as pruning, are prominent among the optimization methods for solving this problem. However, most existing techniques require domain expertise or produce irregular sparse representations, which increase the burden of deploying deep learning applications on embedded hardware accelerators. In this paper, we propose the autoencoder-based low-rank filter-sharing technique (ALF). We apply ALF to various networks and compare it with state-of-the-art pruning methods, demonstrating its compression capabilities on theoretical metrics as well as on an accurate, deterministic hardware model. In our experiments, ALF reduced network parameters by 70%, operations by 61%, and execution time by 41%, with minimal loss in accuracy.
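
To make the idea of filter sharing concrete, the sketch below counts the weights of one convolutional layer when every output filter is expressed as a linear combination of a small set of shared basis filters. This is a generic low-rank filter-sharing scheme assumed for illustration, not ALF's autoencoder-based training procedure, and the layer sizes (64 input channels, 128 filters, 3×3 kernels, rank 32) are hypothetical.

```python
def dense_conv_params(in_channels: int, out_channels: int, kernel: int) -> int:
    """Weight count of a standard conv layer (biases ignored)."""
    return out_channels * in_channels * kernel * kernel


def shared_basis_params(in_channels: int, out_channels: int, kernel: int, rank: int) -> int:
    """Weight count when each output filter is a linear mix of `rank` shared basis filters.

    Hypothetical scheme: `rank` basis filters of shape (in_channels, kernel, kernel)
    plus an (out_channels x rank) mixing matrix. Biases are ignored.
    """
    if not 1 <= rank <= out_channels:
        raise ValueError("rank must lie in 1..out_channels")
    basis = rank * in_channels * kernel * kernel
    mixing = out_channels * rank
    return basis + mixing


dense = dense_conv_params(64, 128, 3)
shared = shared_basis_params(64, 128, 3, rank=32)
print(dense, shared, f"{1 - shared / dense:.1%}")  # 73728 22528 69.4%
```

Because convolution is linear, such a layer can run as a convolution with `rank` filters followed by a 1×1 convolution that applies the mixing matrix, so the computation stays dense and regular instead of sparse. The toy reduction for one layer is not comparable to the network-level figures reported above.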

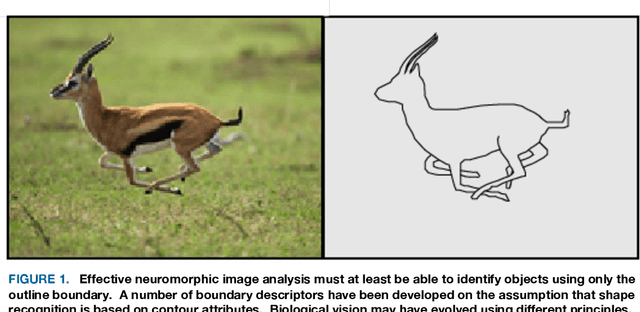

An evolutionary perspective on the design of neuromorphic shape filters

Aug 30, 2020

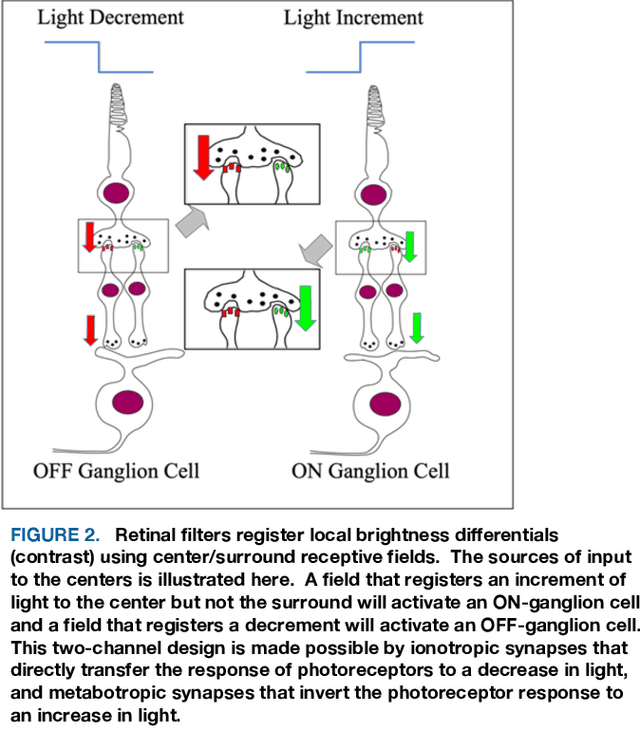

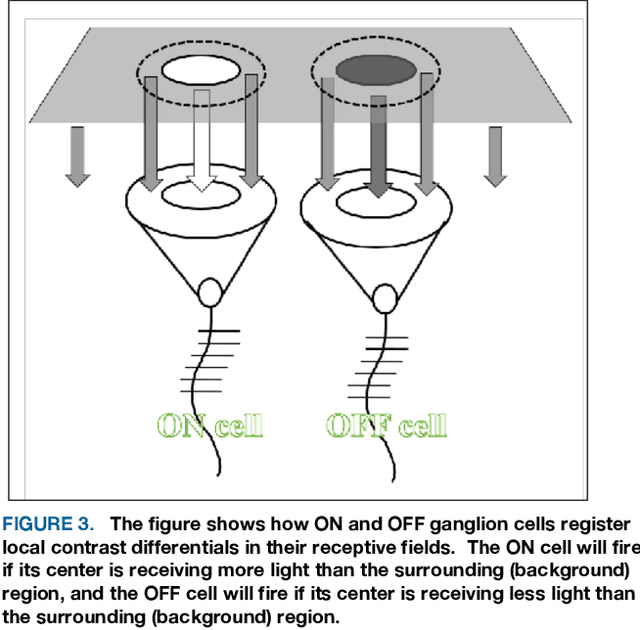

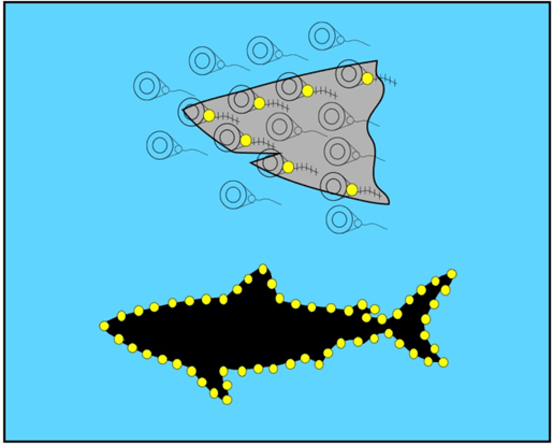

A substantial amount of time and energy has been invested in developing machine vision using connectionist (neural network) principles. Most of that work has been inspired by theories advanced by neuroscientists and behaviorists about how cortical systems store stimulus information. Those theories call for information to flow through connections among several neuron populations, with the initial connections being random (or at least non-functional). The strength or location of the connections is then modified through training trials to achieve an effective output, such as the ability to identify an object. Those theories ignore the fact that animals without a cortex, e.g., fish, can demonstrate visual skills that outpace the best neural network models. Neural circuits that allow for immediate, effective vision and quick learning have been preprogrammed by hundreds of millions of years of evolution, and these visual skills are available shortly after hatching. Cortical systems may provide advanced image processing, but most likely use design principles that had already proven effective in simpler systems. The present article provides a brief overview of retinal and cortical mechanisms for registering shape information, in the hope that it might contribute to the design of shape-encoding circuits that more closely match the mechanisms of biological vision.

Investigations on the inference optimization techniques and their impact on multiple hardware platforms for Semantic Segmentation

Nov 29, 2019

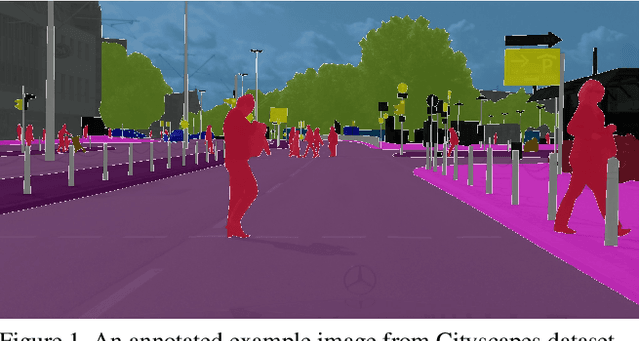

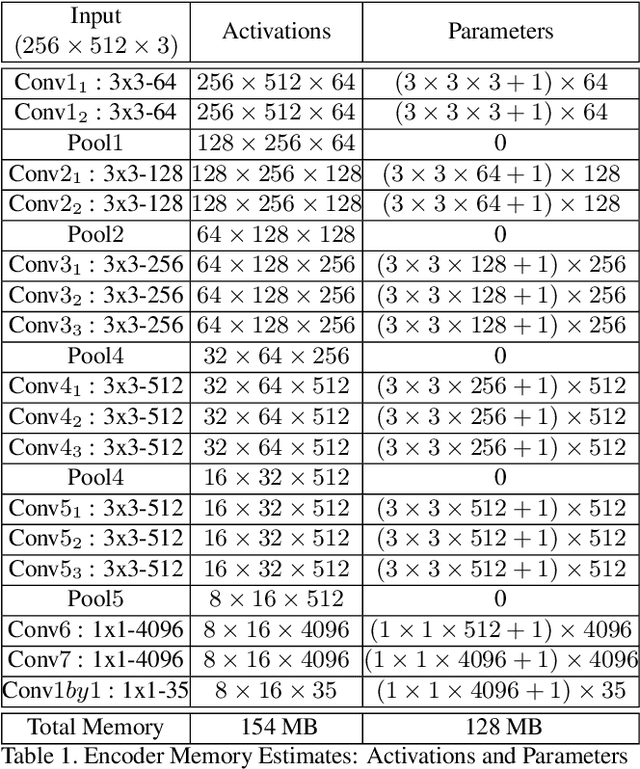

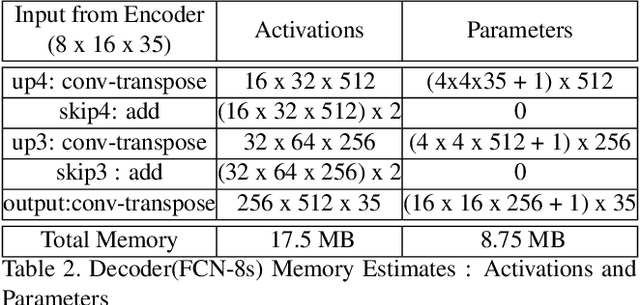

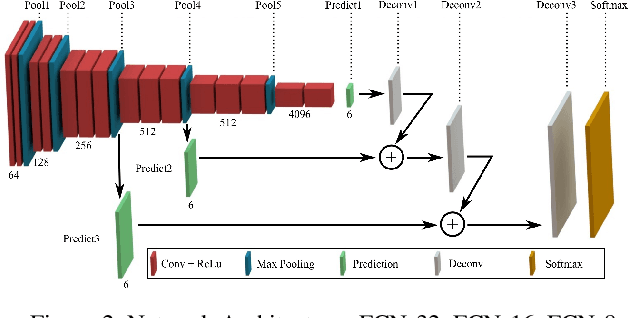

In this work, we explore pixel-wise semantic segmentation for self-driving, with the goal of reducing inference time. Fully Convolutional Networks (FCN-8s, FCN-16s, and FCN-32s) with a VGG16 encoder and skip connections are trained and validated on the Cityscapes dataset. Numerical investigations are carried out for several inference optimization techniques built into TensorFlow and TensorRT to quantify their impact on inference time and network size. Finally, the trained network is ported to an embedded platform (Nvidia Jetson TX1), and the inference time and the total energy consumed for inference are compared across hardware platforms.
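
Comparing inference time and energy across platforms needs a consistent measurement procedure. The sketch below shows one common, framework-agnostic approach: discard a few warm-up calls, time repeated calls with a monotonic clock, report the median, and estimate energy as average power times latency. The `infer` callable is a hypothetical stand-in for an optimized TensorFlow or TensorRT forward pass, and the constant-power assumption is a simplification; the paper's own measurement setup is not described here.

```python
import statistics
import time
from typing import Callable


def median_latency(infer: Callable[[], object], warmup: int = 5, runs: int = 20) -> float:
    """Median wall-clock latency of `infer()` in seconds, measured after warm-up calls."""
    for _ in range(warmup):
        infer()  # warm-up: lazy initialisation, caches, clock ramp-up
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def energy_per_inference(avg_power_watts: float, latency_s: float) -> float:
    """Energy in joules, assuming constant average power during one inference."""
    return avg_power_watts * latency_s


calls = []
latency = median_latency(lambda: calls.append(1), warmup=5, runs=20)  # dummy workload
print(len(calls), energy_per_inference(10.0, 0.05))
```

The median is used instead of the mean so that occasional scheduler hiccups do not dominate the comparison.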

Dynamic Fusion based Federated Learning for COVID-19 Detection

Sep 26, 2020

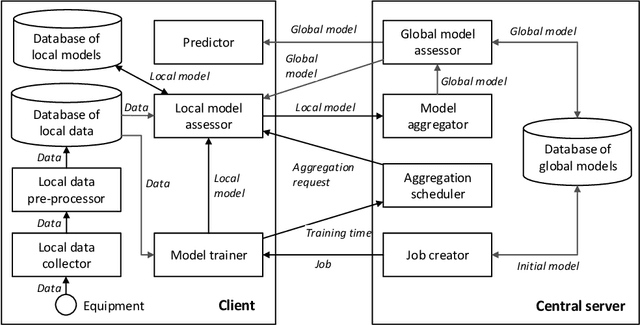

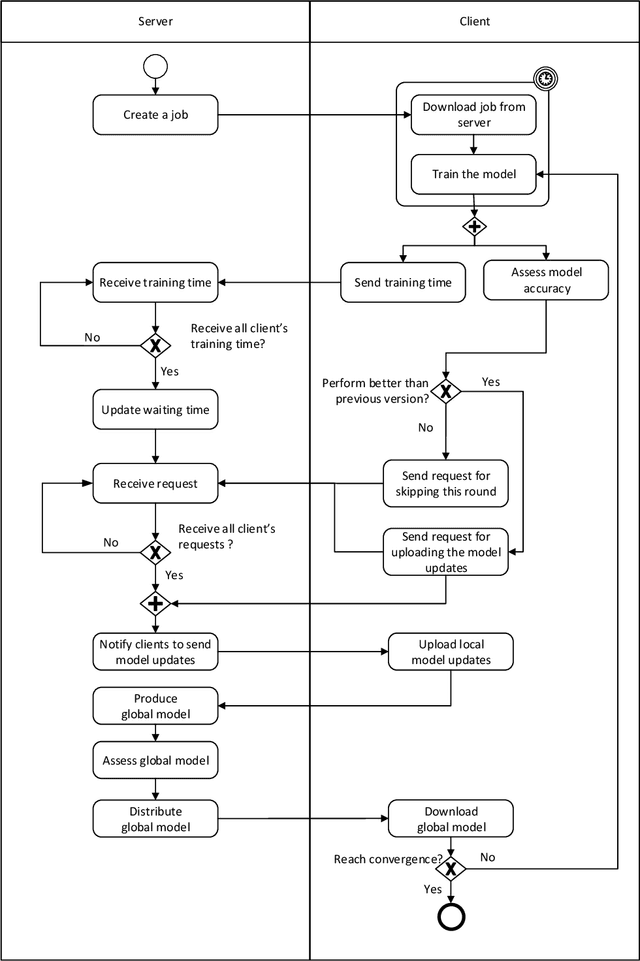

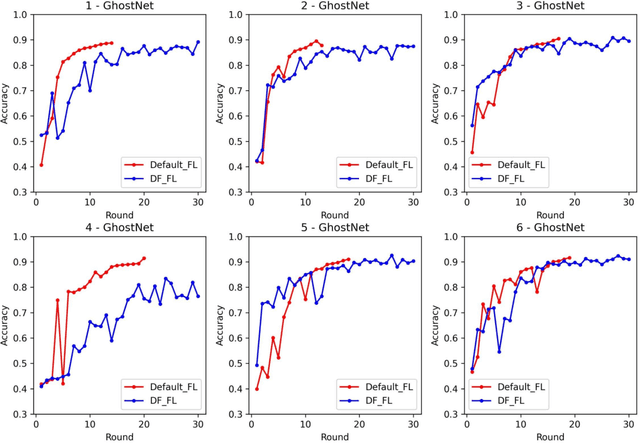

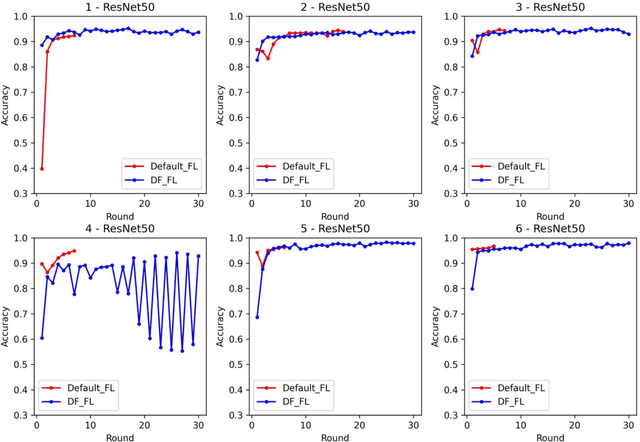

Medical diagnostic image analysis (e.g., of CT scans or X-rays) using machine learning is an efficient and accurate way to detect COVID-19 infections. However, sharing diagnostic images across medical institutions is usually not allowed because of patient privacy concerns, which leaves insufficient data for training image classification models. Federated learning is an emerging privacy-preserving machine learning paradigm that produces an unbiased global model from the updates of local models trained by clients, without exchanging the clients' local data. Nevertheless, the default setting of federated learning incurs a high communication cost for transferring model updates and can hardly ensure model performance when client data are highly heterogeneous. To improve communication efficiency and model performance, we propose a novel dynamic fusion-based federated learning approach for medical diagnostic image analysis to detect COVID-19 infections. First, we design an architecture for dynamic fusion-based federated learning systems to analyse medical diagnostic images. Further, we present a dynamic fusion method that decides which clients participate according to their local model performance and schedules model fusion based on the participating clients' training time. In addition, we summarise a collection of medical diagnostic image datasets for COVID-19 detection that can be used by the machine learning community for image analysis. The evaluation results show that the proposed approach is feasible and outperforms the default setting of federated learning in terms of model performance, communication efficiency, and fault tolerance.
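
The client-selection step can be pictured with a small sketch: filter clients by a local performance score, then fuse the surviving models with a sample-weighted average in the style of FedAvg. The threshold rule, the `ClientUpdate` fields, and `select_and_fuse` are hypothetical illustrations; the abstract does not specify the paper's exact selection criterion, and the training-time-based scheduling is not modeled.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class ClientUpdate:
    weights: Sequence[float]  # flattened local model parameters
    num_samples: int          # size of the client's local training set
    val_accuracy: float       # local validation accuracy reported by the client


def select_and_fuse(updates: List[ClientUpdate], min_accuracy: float) -> List[float]:
    """Keep clients whose local accuracy meets `min_accuracy`, then take a
    sample-weighted average of their parameters (FedAvg-style fusion)."""
    selected = [u for u in updates if u.val_accuracy >= min_accuracy]
    if not selected:
        raise ValueError("no client met the performance threshold")
    total = sum(u.num_samples for u in selected)
    dim = len(selected[0].weights)
    return [sum(u.weights[i] * u.num_samples for u in selected) / total for i in range(dim)]


clients = [
    ClientUpdate([1.0, 2.0], num_samples=100, val_accuracy=0.90),
    ClientUpdate([3.0, 4.0], num_samples=300, val_accuracy=0.85),
    ClientUpdate([100.0, 100.0], num_samples=50, val_accuracy=0.40),  # poorly performing client
]
print(select_and_fuse(clients, min_accuracy=0.8))  # [2.5, 3.5]
```

Excluding the weak client keeps its outlying parameters out of the global model and also saves the bandwidth its upload would have consumed.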

Stochastic properties of an inverted pendulum on a wheel on a soft surface

Jun 11, 2020

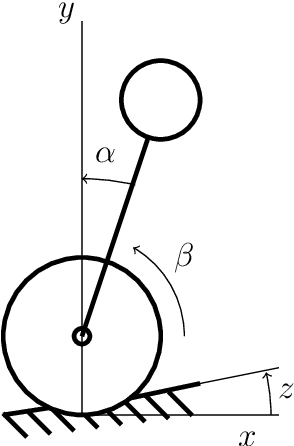

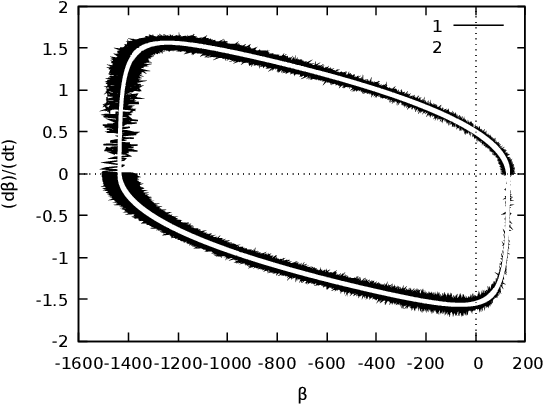

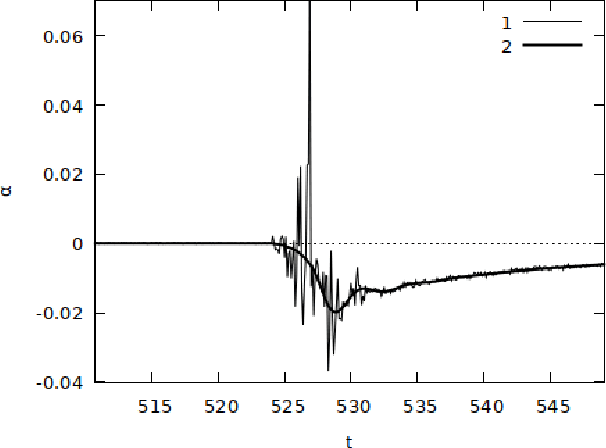

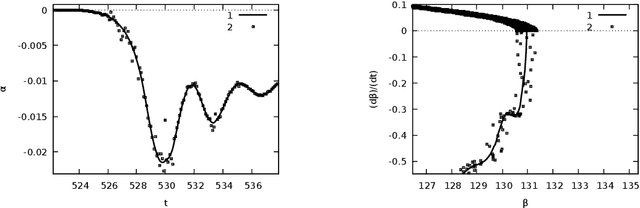

We study the dynamics of an inverted pendulum on a wheel on a soft surface under a proportional-integral-derivative (PID) controller. The behaviour of such a pendulum is modelled by a system with a differential inclusion. If the system has a sensor for the rotational velocity of the pendulum, a tilt sensor, and an encoder for the wheel, then the system is observable. Using the observed data in the controller introduces stochastic perturbations into the system. The properties of the differential inclusion under stochastic control are studied for the upper position of the pendulum, and a formula is derived for the time the pendulum spends near the upper position.
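
For readers less familiar with PID control, here is a minimal discrete-time PID update of the kind such a controller could apply to the measured tilt error. The gains, the sampling period, and the rectangle-rule integral with a backward-difference derivative are assumptions made for illustration; the sketch ignores the wheel, the soft surface, and the differential-inclusion model studied in the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    kp: float  # proportional gain
    ki: float  # integral gain
    kd: float  # derivative gain
    dt: float  # sampling period in seconds
    integral: float = 0.0
    prev_error: Optional[float] = None

    def step(self, error: float) -> float:
        """Return the control signal for the current error (e.g. tilt angle minus upright setpoint)."""
        self.integral += error * self.dt  # rectangle-rule integral
        if self.prev_error is None:
            derivative = 0.0  # no history on the first sample
        else:
            derivative = (error - self.prev_error) / self.dt  # backward difference
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


pid = PID(kp=2.0, ki=1.0, kd=0.5, dt=0.5)
print(pid.step(1.0))  # 2.5
print(pid.step(0.0))  # -0.5
```

In the paper's setting, the error fed to the controller comes from observed sensor data, which is the source of the stochastic perturbations; this sketch does not model that noise.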

Dynamic backup workers for parallel machine learning

Apr 30, 2020

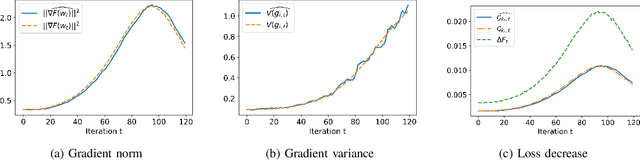

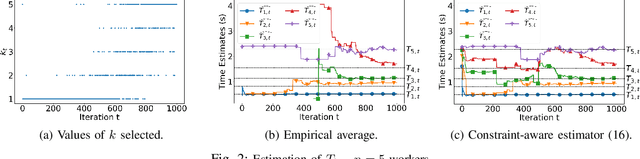

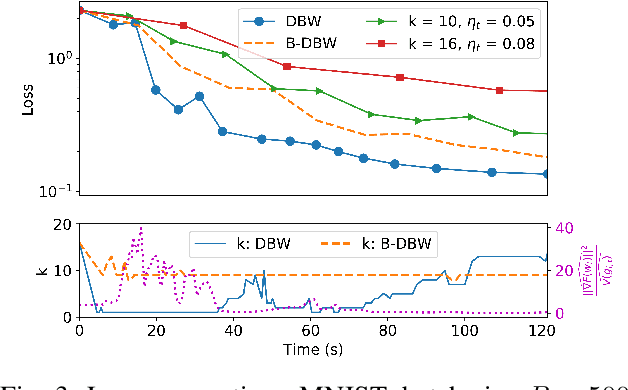

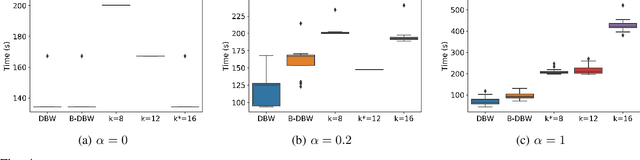

The most popular framework for distributed training of machine learning models is the (synchronous) parameter server (PS). This paradigm consists of $n$ workers, which iteratively compute updates of the model parameters, and a stateful PS, which waits for and aggregates all updates to generate a new estimate of the model parameters and sends it back to the workers for a new iteration. Transient computation slowdowns or transmission delays can intolerably lengthen each iteration. An efficient way to mitigate this problem is to let the PS wait only for the fastest $n-b$ updates before generating the new parameters. The slowest $b$ workers are called backup workers. The optimal number $b$ of backup workers depends on the cluster configuration and workload, but also (as we show in this paper) on the hyper-parameters of the learning algorithm and the current stage of training. We propose DBW, an algorithm that dynamically decides the number of backup workers during training to maximize the convergence speed at each iteration. Our experiments show that DBW 1) removes the need to tune $b$ through time-consuming preliminary experiments, and 2) makes training up to 3 times faster than the optimal static configuration.
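
The backup-worker mechanism itself is simple to simulate: given each worker's arrival time and update, the PS averages only the $n-b$ earliest updates and waits only until the last of those arrives. The function below is a hypothetical sketch of one synchronous iteration with a fixed $b$; it does not implement DBW's rule for choosing $b$ dynamically.

```python
from typing import List, Sequence, Tuple

Update = Tuple[float, Sequence[float]]  # (arrival time in seconds, update vector)


def aggregate_fastest(updates: List[Update], b: int) -> Tuple[float, List[float]]:
    """Average the n - b earliest updates, treating the b slowest workers as backups.

    Returns (time the PS waits before it can aggregate, averaged update).
    """
    n = len(updates)
    if not 0 <= b < n:
        raise ValueError("b must satisfy 0 <= b < n")
    fastest = sorted(updates, key=lambda u: u[0])[: n - b]
    wait_time = fastest[-1][0]  # arrival of the (n - b)-th update
    dim = len(fastest[0][1])
    avg = [sum(g[i] for _, g in fastest) / len(fastest) for i in range(dim)]
    return wait_time, avg


updates = [(1.0, [1.0, 0.0]), (5.0, [100.0, 100.0]), (1.5, [3.0, 2.0]), (2.0, [2.0, 4.0])]
print(aggregate_fastest(updates, b=0))  # (5.0, [26.5, 26.5])
print(aggregate_fastest(updates, b=1))  # (2.0, [2.0, 2.0])
```

With one backup worker the iteration ends at 2.0 s instead of 5.0 s, at the price of averaging fewer updates. That trade-off between iteration time and update quality is what makes the best $b$ workload- and stage-dependent.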

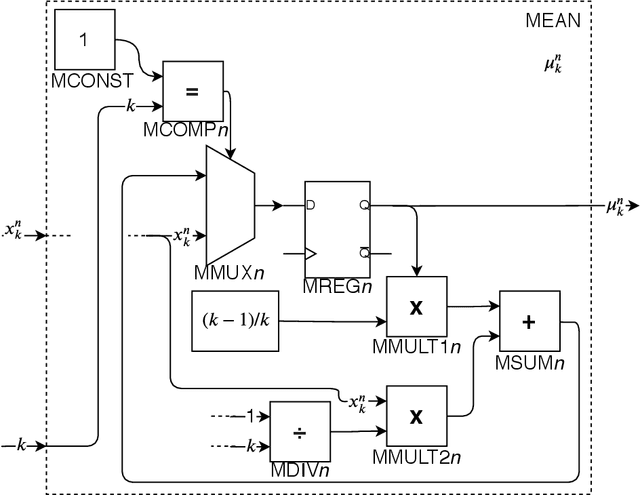

Hardware Architecture Proposal for TEDA algorithm to Data Streaming Anomaly Detection

Mar 08, 2020

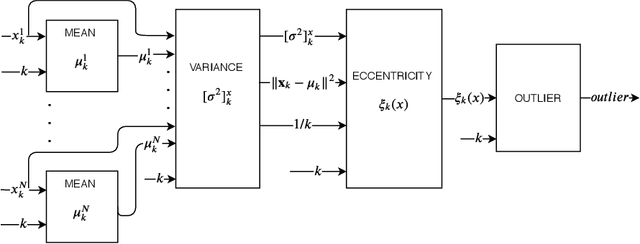

The amount of real-time data available today, such as time series and streaming data, continues to grow. Being able to analyze this data the moment it arrives can bring immense added value. However, it also requires a lot of computational effort and new acceleration techniques. As a possible solution to this problem, this paper proposes a hardware architecture for the Typicality and Eccentricity Data Analytics (TEDA) algorithm, implemented on Field Programmable Gate Arrays (FPGA), for data streaming anomaly detection. TEDA is based on a new approach to outlier detection in the data stream context. To validate the proposal, results for the resource occupation and throughput of the proposed hardware are presented, along with bit-accurate simulation results. The design targets the Xilinx Virtex-6 xc6vlx240t-1ff1156 FPGA.
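
TEDA suits hardware because every sample updates a few running statistics in constant time and memory. The floating-point sketch below assumes the recursive formulation commonly used for TEDA: running mean and variance, eccentricity $\xi_k = 1/k + \lVert x_k - \mu_k \rVert^2 / (k \sigma_k^2)$, normalized eccentricity $\zeta_k = \xi_k / 2$, and an outlier flag when $\zeta_k > (m^2 + 1)/(2k)$. The class name and the default $m = 3$ are illustrative choices, and this is not the paper's fixed-point FPGA datapath.

```python
from dataclasses import dataclass, field
from typing import List, Sequence


@dataclass
class TedaDetector:
    m: float = 3.0  # sensitivity, analogous to the "m" in an m-sigma rule
    k: int = 0      # number of samples seen so far
    mean: List[float] = field(default_factory=list)
    var: float = 0.0

    def update(self, x: Sequence[float]) -> bool:
        """Consume one sample and return True if it is flagged as an outlier."""
        self.k += 1
        k = self.k
        if k == 1:
            self.mean = list(x)
            self.var = 0.0
            return False
        # Recursive mean and variance updates (constant work per sample).
        self.mean = [((k - 1) / k) * mu + xi / k for mu, xi in zip(self.mean, x)]
        dist2 = sum((xi - mu) ** 2 for xi, mu in zip(x, self.mean))
        self.var = ((k - 1) / k) * self.var + dist2 / (k - 1)
        if self.var == 0.0:
            return False  # all samples identical so far: eccentricity undefined
        eccentricity = 1.0 / k + dist2 / (k * self.var)
        normalized = eccentricity / 2.0
        return normalized > (self.m ** 2 + 1) / (2 * k)


detector = TedaDetector()
stream = [[1.0], [1.2]] * 10 + [[10.0]]  # 20 regular samples, then a spike
flags = [detector.update(x) for x in stream]
print(flags.index(True), sum(flags))  # 20 1
```

Only the final spike is flagged. Because the threshold shrinks as $1/k$, the detector needs some history before it can flag anything, which also applies to a hardware implementation.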

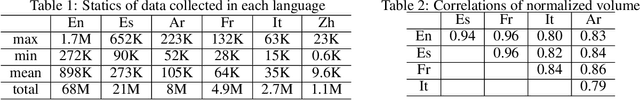

SenWave: Monitoring the Global Sentiments under the COVID-19 Pandemic

Jun 18, 2020

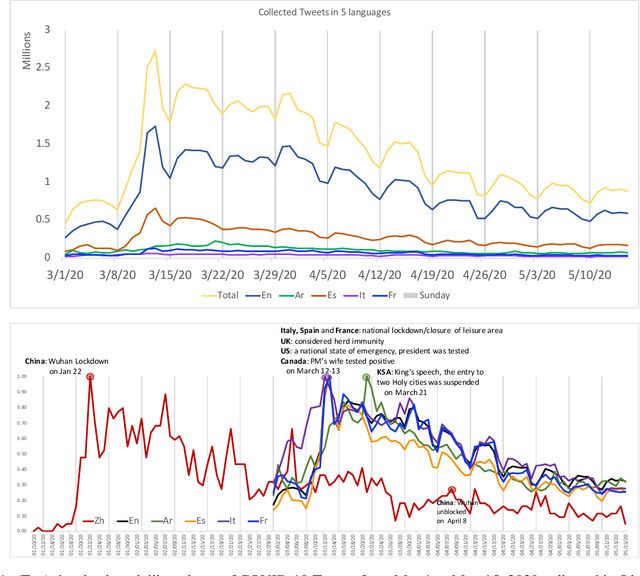

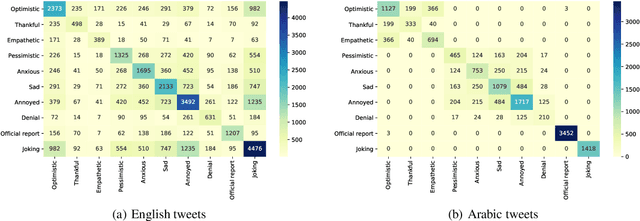

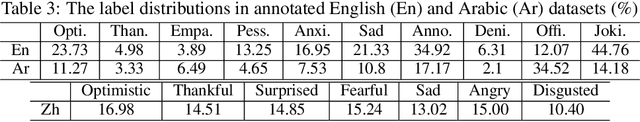

Since the first alert issued by the World Health Organization (5 January 2020), COVID-19 has spread to over 180 countries and territories. As of June 18, 2020, there are in total over 8,400,000 cases and over 450,000 related deaths. The pandemic has caused massive economic and job losses globally and has confined about 58% of the global population. In this paper, we introduce SenWave, a novel sentiment analysis work using 105+ million collected tweets and Weibo messages to evaluate the global rise and fall of sentiments during the COVID-19 pandemic. To enable a fine-grained analysis of how people feel when facing this global health crisis, we annotate 10K tweets in English and 10K tweets in Arabic in 10 categories: optimistic, thankful, empathetic, pessimistic, anxious, sad, annoyed, denial, official report, and joking. We then use an integrated transformer framework, called simpletransformer, to conduct multi-label sentiment classification by fine-tuning a pre-trained language model on the labeled data. Meanwhile, for a more complete analysis, we also translate the annotated English tweets into other languages (Spanish, Italian, and French) to generate training data for building sentiment analysis models for these languages. SenWave thus reveals the sentiment of the global conversation on COVID-19 in six languages (English, Spanish, French, Italian, Arabic, and Chinese), following the spread of the epidemic. The conversation showed a remarkably similar pattern of rapid rise and slow decline over time across all nations, as well as on special topics such as herd immunity strategies, to which the global conversation reacted strongly negatively. Overall, SenWave shows that optimistic and positive sentiments increased over time, foretelling a desire to seek, together, a reset for an improved COVID-19 world.
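
In multi-label classification, a tweet may carry several sentiments at once, so each of the 10 categories gets its own independent probability instead of a single softmax choice. The decoding step below is a hypothetical sketch: it assumes the classifier emits one probability per label in a fixed order and uses a uniform 0.5 threshold; it does not use or reproduce the simpletransformer API.

```python
from typing import List, Sequence

LABELS = [
    "optimistic", "thankful", "empathetic", "pessimistic", "anxious",
    "sad", "annoyed", "denial", "official report", "joking",
]


def decode_multilabel(probs: Sequence[float], threshold: float = 0.5) -> List[str]:
    """Return every label whose independent (sigmoid) probability reaches `threshold`."""
    if len(probs) != len(LABELS):
        raise ValueError(f"expected {len(LABELS)} probabilities, got {len(probs)}")
    return [label for label, p in zip(LABELS, probs) if p >= threshold]


# Hypothetical model output for one tweet.
probs = [0.9, 0.1, 0.6, 0.0, 0.0, 0.0, 0.7, 0.0, 0.0, 0.2]
print(decode_multilabel(probs))  # ['optimistic', 'empathetic', 'annoyed']
```

Per-label thresholds tuned on validation data are a common refinement when some categories are rare.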

MementoML: Performance of selected machine learning algorithm configurations on OpenML100 datasets

Aug 30, 2020

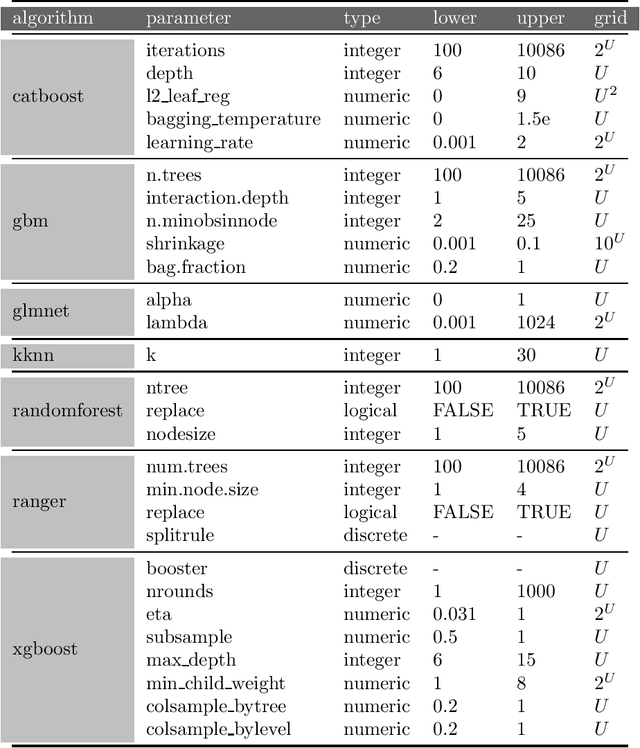

Finding optimal hyperparameters for a machine learning algorithm can often significantly improve its performance. But how can they be chosen in a time-efficient way? In this paper we present the protocol for generating benchmark data describing the performance of different ML algorithms with different hyperparameter configurations. Data collected in this way is used to study the factors influencing an algorithm's performance. This collection was prepared for the EPP study. We tested the algorithms' performance on a dense grid of hyperparameters. The tested datasets and hyperparameters were chosen before any algorithm was run and were not changed. This differs from the approach usually used in hyperparameter tuning, where the selection of candidate hyperparameters depends on previously obtained results. However, such a selection allows for a systematic analysis of performance sensitivity to individual hyperparameters. The result is a comprehensive dataset of benchmarks that we would like to share, in the hope that the computed and collected results may be helpful to other researchers. This paper describes how the data was collected. The collection contains benchmarks of 7 popular machine learning algorithms on 39 OpenML datasets. The detailed data forming this benchmark are available at: https://www.kaggle.com/mi2datalab/mementoml.
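
The difference between a fixed grid and adaptive tuning is easy to see in code: the whole set of configurations is materialized before any model is trained. The helper below is a minimal sketch; the hyperparameter names and values are hypothetical and are not the grids used in MementoML.

```python
from itertools import product
from typing import Any, Dict, List, Sequence


def hyperparameter_grid(space: Dict[str, Sequence[Any]]) -> List[Dict[str, Any]]:
    """Expand a fixed search space into every configuration (full Cartesian product).

    The grid is built once, before any training run, so no configuration depends
    on earlier results (unlike adaptive tuning such as Bayesian optimization).
    """
    names = sorted(space)  # deterministic ordering of hyperparameter names
    return [dict(zip(names, values)) for values in product(*(space[n] for n in names))]


grid = hyperparameter_grid({"max_depth": [2, 4, 8], "eta": [0.1, 0.3]})  # hypothetical space
print(len(grid), grid[0])  # 6 {'eta': 0.1, 'max_depth': 2}
```

Each configuration is then evaluated on every dataset, so this toy grid would already require 6 × 39 = 234 runs for a single algorithm. Dense grids therefore become expensive quickly, which is why sharing the precomputed results is useful.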

Fast, Composable Rescue Mission Planning for UAVs using Metric Temporal Logic

Dec 17, 2019

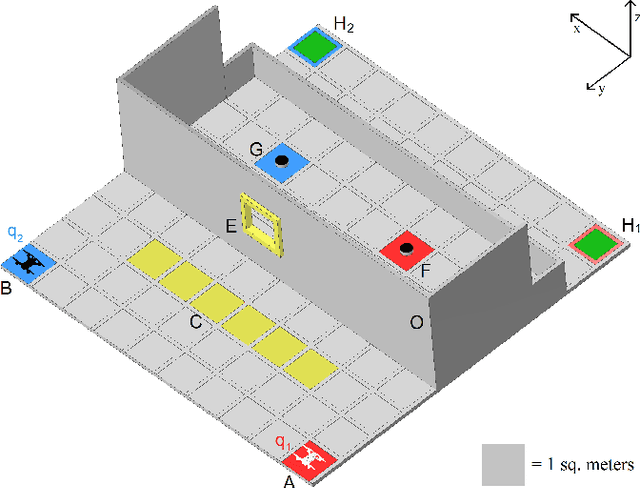

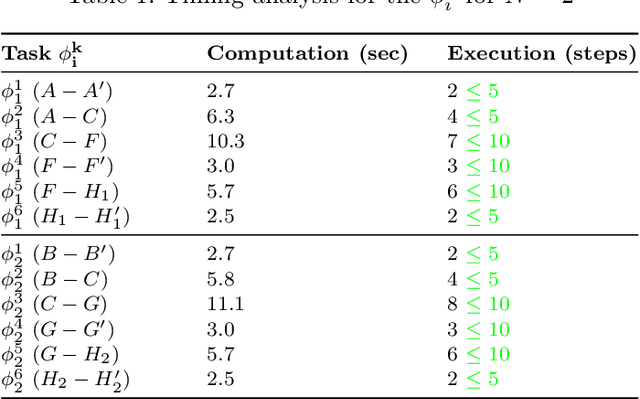

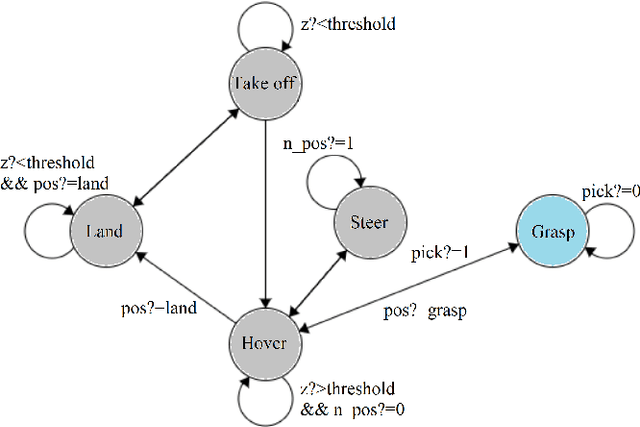

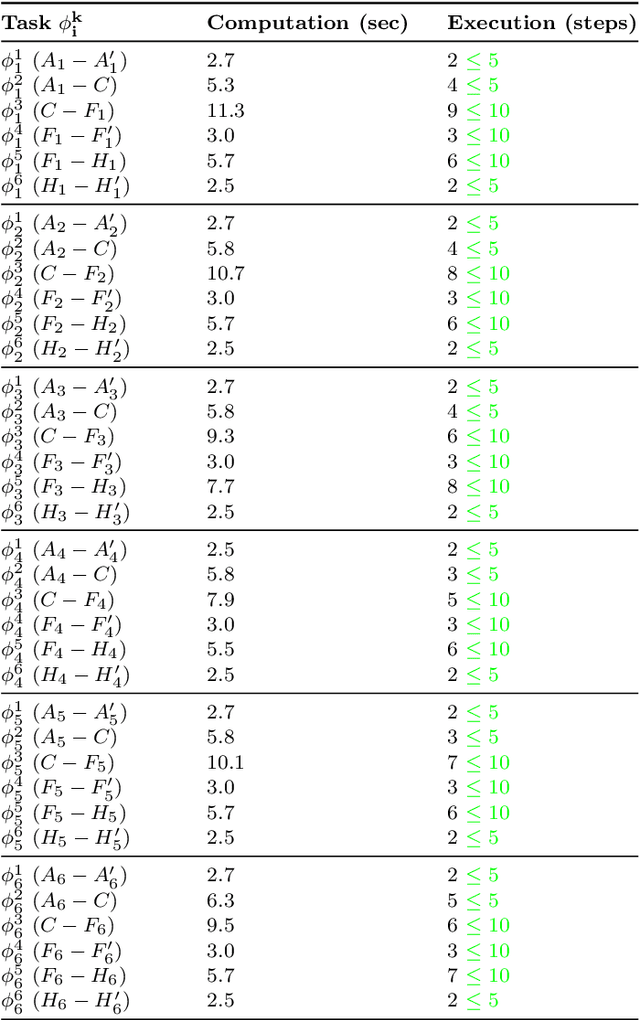

We present a hybrid compositional approach for real-time mission planning for multi-rotor unmanned aerial vehicles (UAVs) in a time-critical search and rescue scenario. Starting with a known environment, we specify the mission using Metric Temporal Logic (MTL) and use a hybrid dynamical model to capture the various modes of UAV operation. We then divide the mission into several sub-tasks by exploiting the invariant nature of safety and timing constraints along the way and the different modes (i.e., dynamics) of the UAV. For each sub-task, we translate the MTL specifications into linear constraints and solve the associated optimal control problem for the desired path using a Mixed Integer Linear Program (MILP) solver. The complete path for the mission is constructed recursively by composing the individual optimal sub-paths. We show by simulations that the resulting suboptimal trajectories satisfy the mission specifications, and that the proposed approach significantly reduces the computational complexity of the problem, making a real-time implementation possible. Our proposed method ensures the safety of UAVs at all times and guarantees mission completion in finite time. It is also shown that our approach scales well to a large number of UAVs.
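
To show what MTL specifications of this kind express, the sketch below checks two typical clauses on a sampled 2-D trajectory: "eventually, within the time window [a, b], reach the target region" and "always stay outside the obstacle". It is a hypothetical discrete-time monitor with axis-aligned boxes, not the paper's MILP encoding, and it checks only the sampled points, not the motion between samples.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x_min, x_max, y_min, y_max)


def in_box(p: Point, box: Box) -> bool:
    x_min, x_max, y_min, y_max = box
    return x_min <= p[0] <= x_max and y_min <= p[1] <= y_max


def eventually_within(traj: Sequence[Point], dt: float, a: float, b: float, target: Box) -> bool:
    """F_[a,b] target: some sample with time t = i * dt in [a, b] lies inside `target`."""
    return any(a <= i * dt <= b and in_box(p, target) for i, p in enumerate(traj))


def always_outside(traj: Sequence[Point], obstacle: Box) -> bool:
    """G (not obstacle): no sample of the trajectory lies inside `obstacle`."""
    return all(not in_box(p, obstacle) for p in traj)


traj = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (3.0, 1.0)]  # one sample per second
target = (2.5, 3.5, 0.5, 1.5)    # hypothetical rescue location
obstacle = (0.5, 1.5, 0.5, 1.5)  # hypothetical no-fly zone
print(eventually_within(traj, 1.0, 0.0, 4.0, target))  # True (reached at t = 4 s)
print(eventually_within(traj, 1.0, 0.0, 3.0, target))  # False (deadline too early)
print(always_outside(traj, obstacle))                  # True
```

A MILP formulation encodes the same conditions as linear constraints with binary variables, which lets the solver search for a path that satisfies them instead of merely checking a given one.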