"Time": models, code, and papers

A 3D Non-stationary MmWave Channel Model for Vacuum Tube Ultra-High-Speed Train Channels

Jan 17, 2021

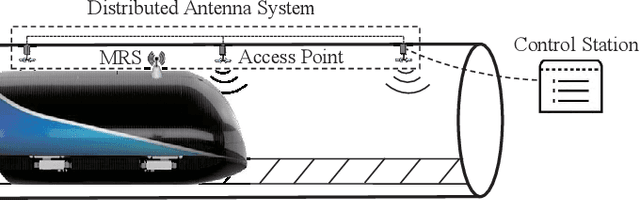

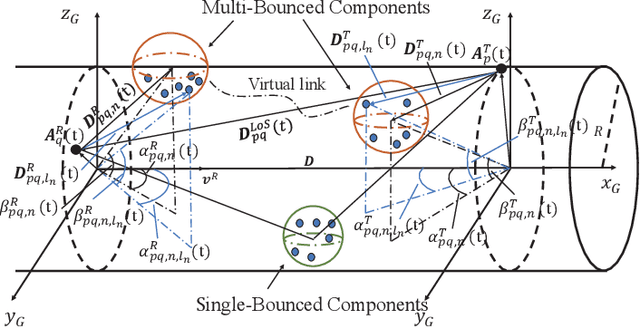

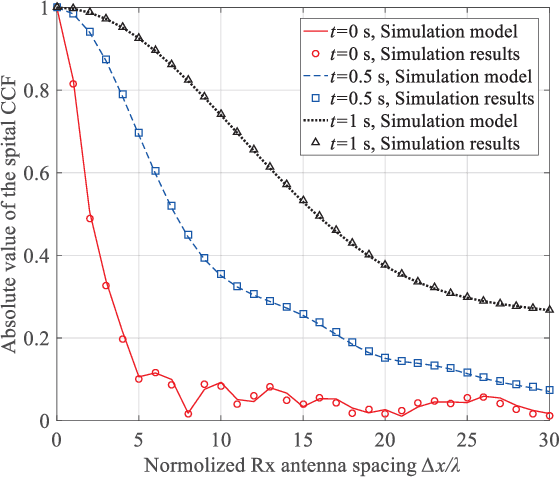

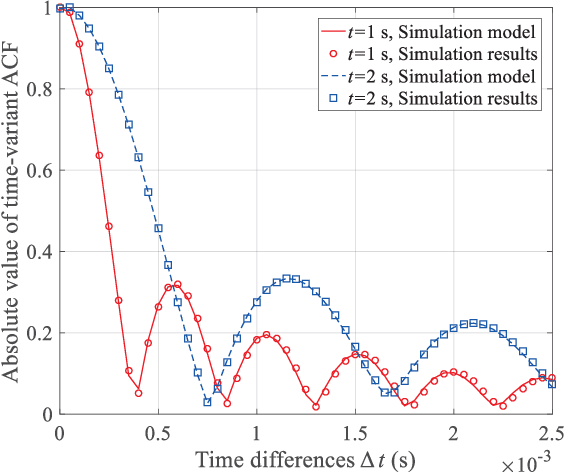

As a potential development direction for future transportation, vacuum tube ultra-high-speed train (UHST) wireless communication systems have channel characteristics that differ from those of existing high-speed train (HST) scenarios. In this paper, a three-dimensional non-stationary millimeter wave (mmWave) geometry-based stochastic model (GBSM) is proposed to investigate the channel characteristics of UHST channels in vacuum tube scenarios, taking into account the waveguide effect and the impact of tube wall roughness on the channel. Then, based on the proposed model, some important time-variant channel statistical properties are studied and compared with those in existing HST and tunnel channels. The results show that the multipath effect in vacuum tube scenarios is more pronounced than in tunnel scenarios but less pronounced than in existing HST scenarios, which provides some insights for future research on vacuum tube UHST wireless communications.

School of hard knocks: Curriculum analysis for Pommerman with a fixed computational budget

Feb 23, 2021

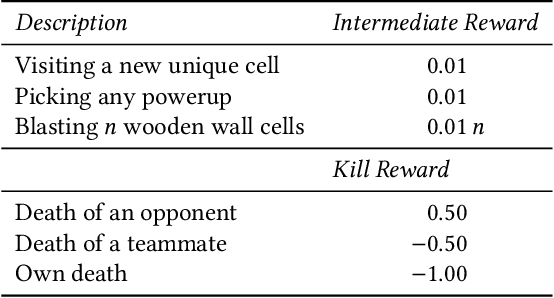

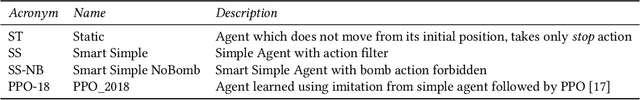

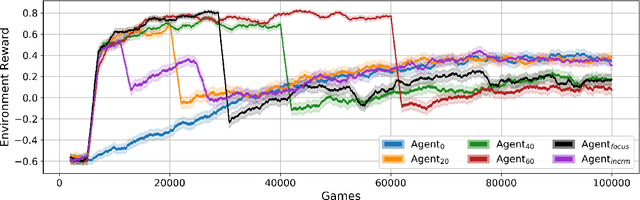

Pommerman is a hybrid cooperative/adversarial multi-agent environment, with challenging characteristics in terms of partial observability, limited or no communication, sparse and delayed rewards, and restrictive computational time limits. This makes it a challenging environment for reinforcement learning (RL) approaches. In this paper, we focus on developing a curriculum for learning a robust and promising policy in a constrained computational budget of 100,000 games, starting from a fixed base policy (which is itself trained to imitate a noisy expert policy). All RL algorithms starting from the base policy use vanilla proximal-policy optimization (PPO) with the same reward function, and the only difference between their training is the mix and sequence of opponent policies. One expects that beginning training with simpler opponents and then gradually increasing the opponent difficulty will facilitate faster learning, leading to more robust policies compared against a baseline where all available opponent policies are introduced from the start. We test this hypothesis and show that within constrained computational budgets, it is in fact better to "learn in the school of hard knocks", i.e., against all available opponent policies nearly from the start. We also include ablation studies where we study the effect of modifying the base environment properties of ammo and bomb blast strength on the agent performance.

Performance Improvement of Path Planning algorithms with Deep Learning Encoder Model

Aug 05, 2020

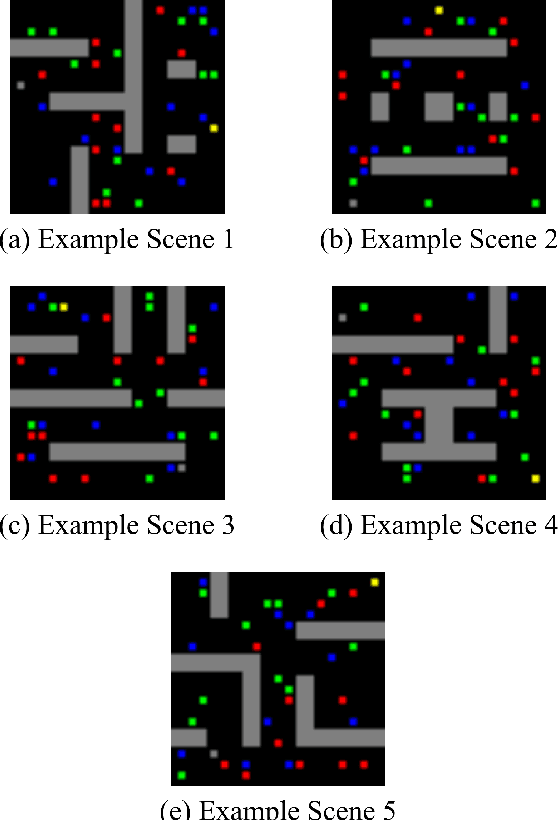

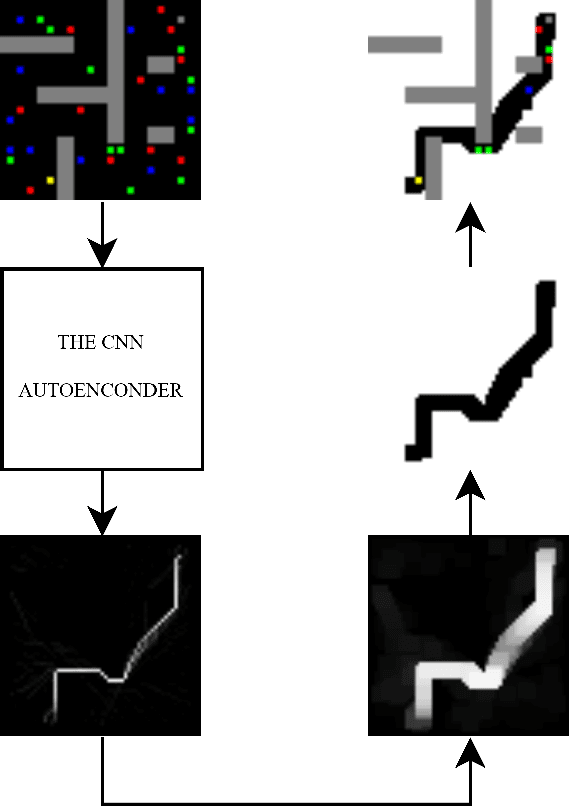

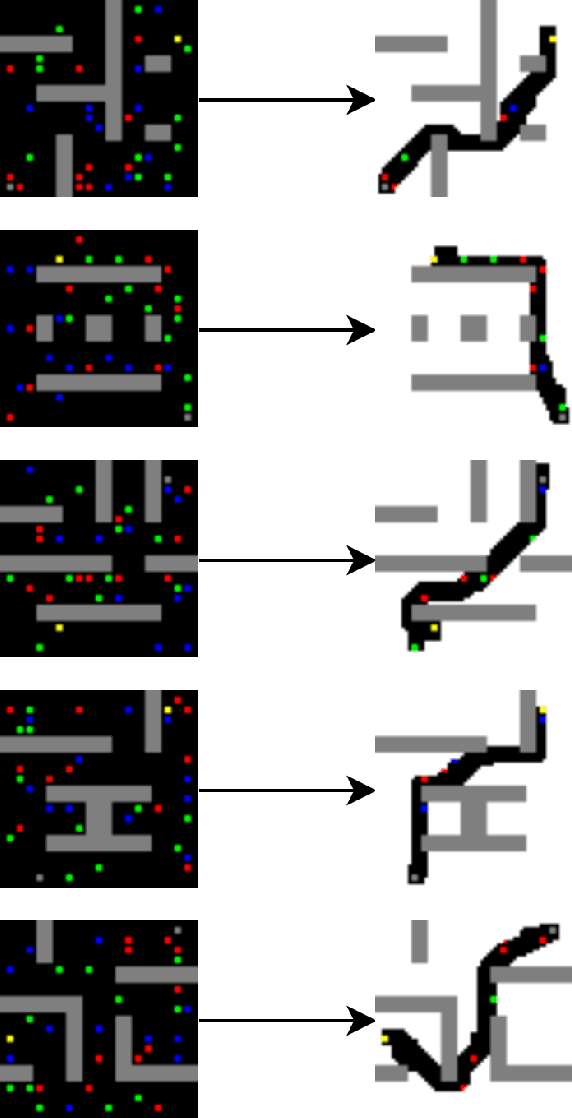

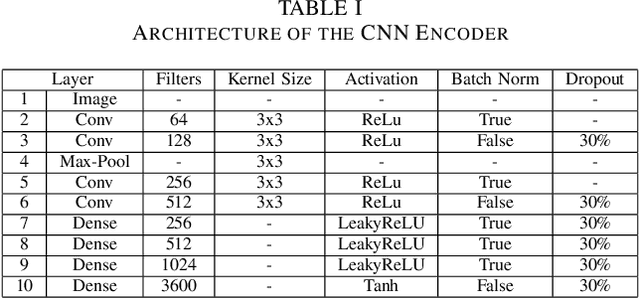

Path planning algorithms are currently used in many daily tasks. They are used to find the best route in traffic and to enable autonomous robots to navigate. Path planning presents some issues in large and dynamic environments. In large environments, these algorithms spend considerable time finding the shortest path. Dynamic environments, on the other hand, require a new execution of the algorithm each time the environment changes, which increases the execution time. Dimensionality reduction appears as a solution to this problem; in this context, it means removing useless paths from those environments. Most dimensionality reduction algorithms are limited to the linear correlation of the input data. Recently, a Convolutional Neural Network (CNN) Encoder was used to overcome this limitation, since it can use both linear and non-linear information for data reduction. This paper analyzes in depth the performance of this CNN Encoder model at eliminating useless paths. To measure the model's efficiency, we combined it with different path planning algorithms. The resulting algorithms (combined and not combined) are then evaluated on a database composed of five scenarios. Each scenario contains fixed and dynamic obstacles. The proposed model, the CNN Encoder, combined with existing path planning algorithms from the literature, reduced the time needed to find the shortest path in comparison with all path planning algorithms analyzed. The average time reduction was 54.43%.

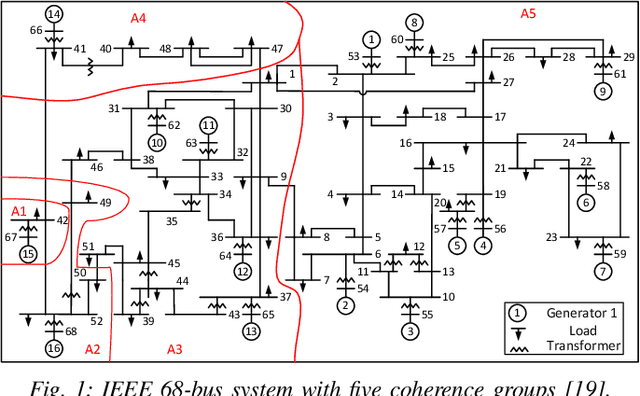

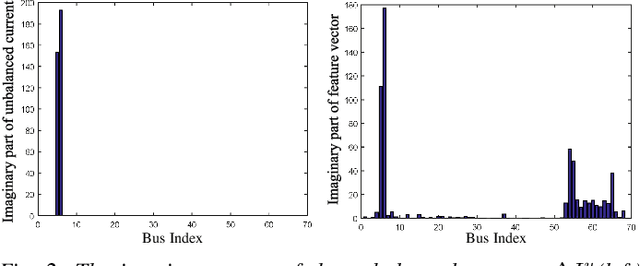

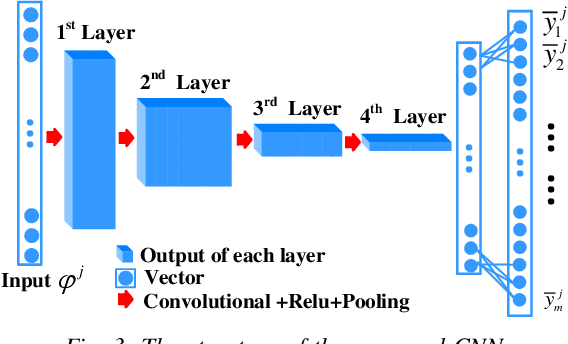

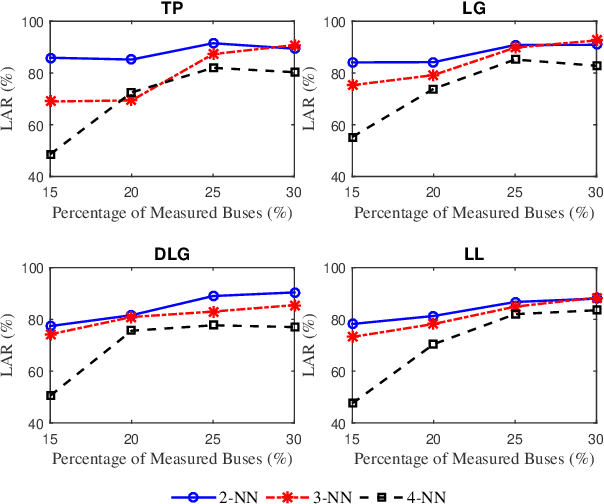

Real-time Fault Localization in Power Grids With Convolutional Neural Networks

Oct 11, 2018

Diverse fault types, fast re-closures and complicated transient states after a fault event make real-time fault location in power grids challenging. Existing localization techniques in this area rely on simplistic assumptions, such as static loads, or require much higher sampling rates or total measurement availability. This paper proposes a data-driven localization method based on a Convolutional Neural Network (CNN) classifier using bus voltages. Unlike prior data-driven methods, the proposed classifier is based on features with physical interpretations that are described in detail. The accuracy of our CNN-based localization tool is demonstrably superior to other machine learning classifiers in the literature. To further improve the location performance, a novel phasor measurement unit (PMU) placement strategy is proposed and validated against other methods. A significant aspect of our methodology is that under very low observability (7% of buses), the algorithm is still able to localize the faulted line to a small neighborhood with high probability. The performance of our scheme is validated through simulations of faults of various types in the IEEE 68-bus power system under varying load conditions, system observability and measurement quality.

WRSE -- a non-parametric weighted-resolution ensemble for predicting individual survival distributions in the ICU

Nov 02, 2020

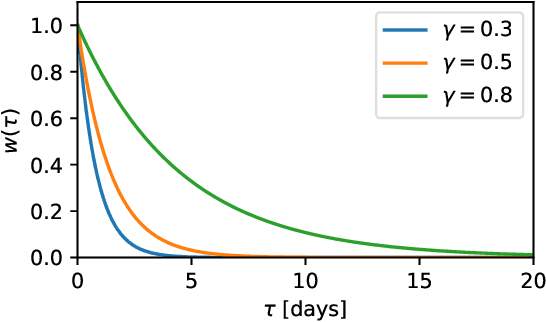

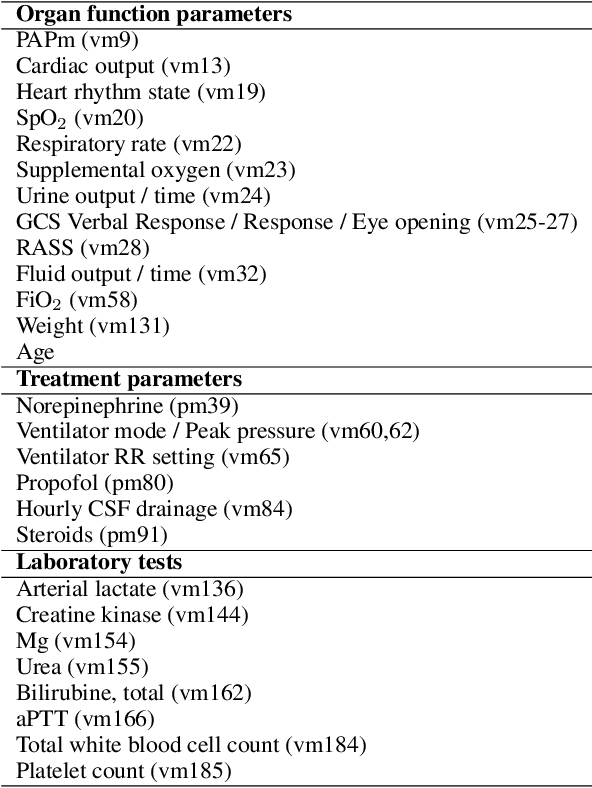

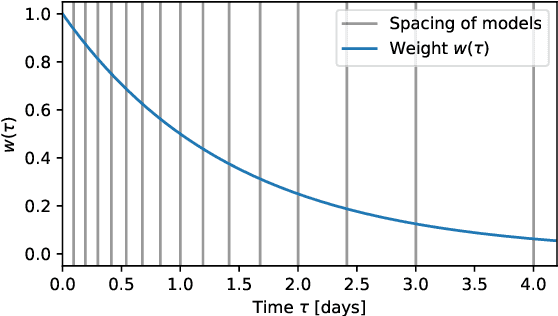

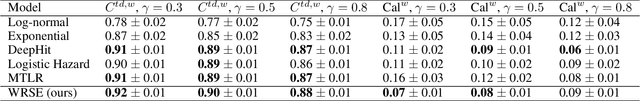

Dynamic assessment of mortality risk in the intensive care unit (ICU) can be used to stratify patients, inform about treatment effectiveness or serve as part of an early-warning system. Static risk scoring systems, such as APACHE or SAPS, have recently been supplemented with data-driven approaches that track the dynamic mortality risk over time. Recent works have focused on enhancing the information delivered to clinicians even further by producing full survival distributions instead of point predictions or fixed horizon risks. In this work, we propose a non-parametric ensemble model, Weighted Resolution Survival Ensemble (WRSE), tailored to estimate such dynamic individual survival distributions. Inspired by the simplicity and robustness of ensemble methods, the proposed approach combines a set of binary classifiers spaced according to a decay function reflecting the relevance of short-term mortality predictions. Models and baselines are evaluated under weighted calibration and discrimination metrics for individual survival distributions which closely reflect the utility of a model in ICU practice. We show competitive results with state-of-the-art probabilistic models, while greatly reducing training time by factors of 2-9x.

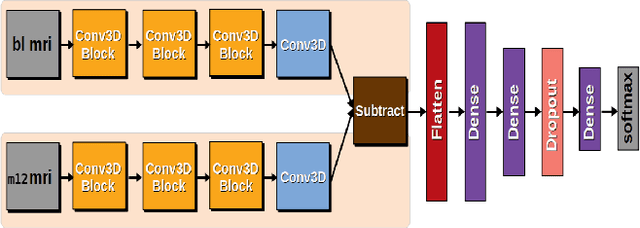

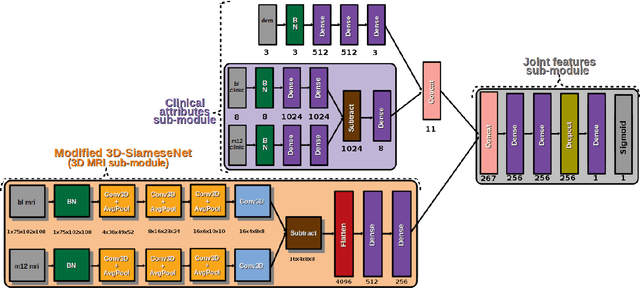

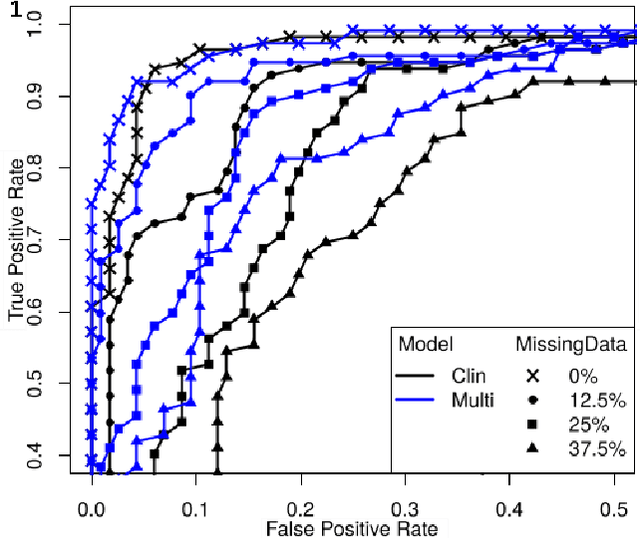

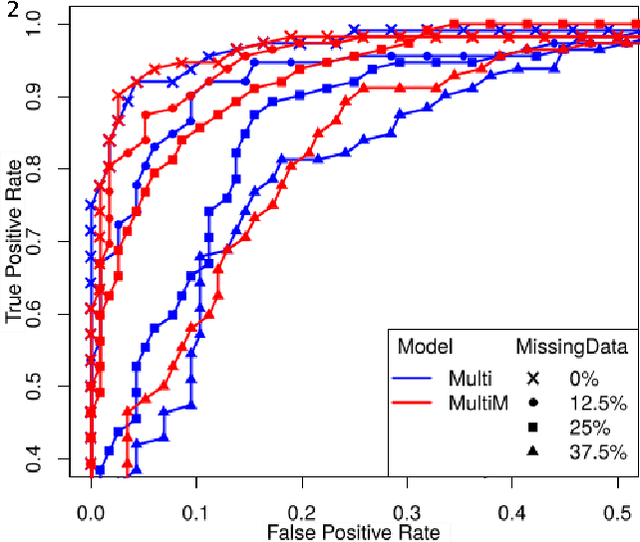

Predicting Brain Degeneration with a Multimodal Siamese Neural Network

Nov 02, 2020

To study neurodegenerative diseases, longitudinal studies are carried out on volunteer patients. Over a time span of several months to several years, they go through regular medical visits to acquire data from different modalities, such as biological samples, cognitive tests, and structural and functional imaging. These variables are heterogeneous but they all depend on the patient's health condition, meaning that there are possibly unknown relationships between all modalities. Some information may be specific to some modalities, some may be complementary, and some may be redundant. Some data may also be missing. In this work we present a neural network architecture for multimodal learning that is able to use imaging and clinical data from two time points to predict the evolution of a neurodegenerative disease and is robust to missing values. Our multimodal network achieves 92.5% accuracy and an AUC score of 0.978 over a test set of 57 subjects. We also show the superiority of the multimodal architecture, for up to 37.5% of missing values in test set subjects' clinical measurements, compared to a model using only the clinical modality.

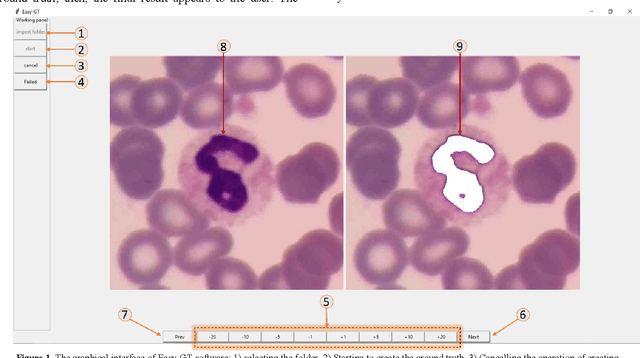

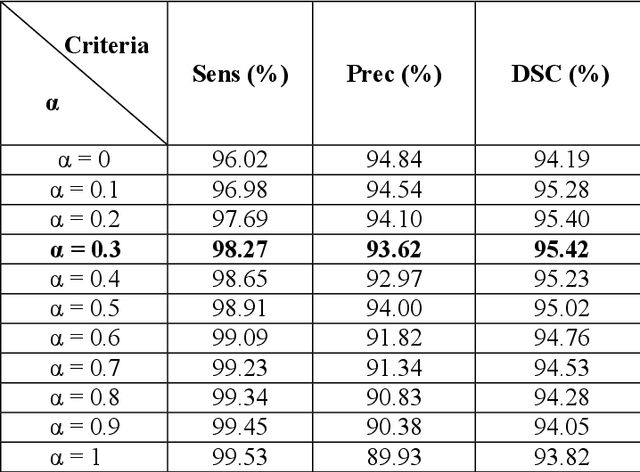

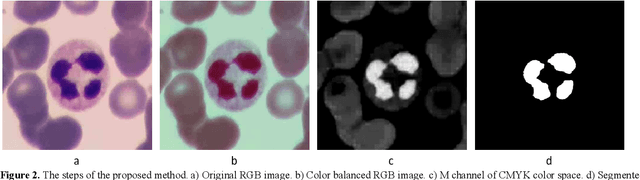

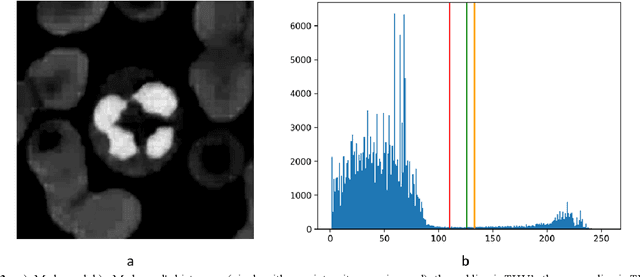

Easy-GT: Open-Source Software to Facilitate Making the Ground Truth for White Blood Cells Nucleus

Jan 27, 2021

The nucleus of white blood cells (WBCs) plays a significant role in their detection and classification. Appropriate feature extraction of the nucleus is necessary to fit a suitable artificial intelligence model to classify WBCs. Therefore, a method is needed to segment the nucleus accurately. The detected nuclei should be compared with the ground truths identified by a hematologist to obtain a proper performance evaluation of the nucleus segmentation method. Establishing the ground truth manually is a time-consuming and tedious task for experts. This paper presents an intelligent open-source software called Easy-GT to create the ground truth of WBC nuclei faster and more easily. This software first detects the nucleus by employing a new method based on Otsu's thresholding, with a Dice similarity coefficient (DSC) of 95.42%; the hematologist can then create a more accurate ground truth, using the designed buttons to modify the threshold value. This software can speed up the ground-truth creation process by more than six times.
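The two quantities named in this abstract can be illustrated with a minimal sketch. It shows classic Otsu thresholding and the Dice similarity coefficient on a flat list of 8-bit gray values. It is not the Easy-GT method itself, which the abstract describes only as a new Otsu-based variant. The function names `otsu_threshold` and `dice` and the toy pixel values are assumptions made for illustration.

```python
def otsu_threshold(pixels, levels=256):
    """Classic Otsu: pick the threshold t that maximizes between-class variance.

    Pixels with value <= t form one class, pixels with value > t the other.
    """
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_t, best_var = 0, -1.0
    w0 = 0      # number of pixels in the lower class
    sum0 = 0    # sum of gray values in the lower class
    for t in range(levels):
        w0 += hist[t]
        sum0 += t * hist[t]
        w1 = total - w0
        if w0 == 0:
            continue
        if w1 == 0:
            break
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        # Between-class variance up to a constant factor 1 / total**2.
        var_between = w0 * w1 * (mu0 - mu1) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def dice(mask_a, mask_b):
    """Dice similarity coefficient 2|A and B| / (|A| + |B|) for two binary masks."""
    inter = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    size = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    return 1.0 if size == 0 else 2.0 * inter / size


# Hypothetical toy image: four dark pixels and four bright pixels.
pixels = [10, 12, 11, 200, 205, 198, 13, 202]
t = otsu_threshold(pixels)
predicted = [p > t for p in pixels]
reference = [False, False, False, True, True, True, False, True]
score = dice(predicted, reference)
```

In a tool of the kind described, the automatically chosen `t` would only be a starting point that the expert then adjusts by hand.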

Learning adaptive differential evolution algorithm from optimization experiences by policy gradient

Feb 06, 2021

Differential evolution is one of the most prestigious population-based stochastic optimization algorithms for black-box problems. The performance of a differential evolution algorithm depends highly on its mutation and crossover strategy and associated control parameters. However, determining the most suitable parameter setting is troublesome and time-consuming. Adaptive control parameter methods that can adapt to the problem landscape and optimization environment are preferable to fixed parameter settings. This paper proposes a novel adaptive parameter control approach based on learning from the optimization experiences over a set of problems. In the approach, the parameter control is modeled as a finite-horizon Markov decision process. A reinforcement learning algorithm, named policy gradient, is applied to learn an agent (i.e., a parameter controller) that can provide the control parameters of a proposed differential evolution adaptively during the search procedure. The differential evolution algorithm based on the learned agent is compared against nine well-known evolutionary algorithms on the CEC'13 and CEC'17 test suites. Experimental results show that the proposed algorithm performs competitively against these compared algorithms on the test suites.
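To make the role of the control parameters concrete, here is a minimal sketch of one generation of the textbook DE/rand/1/bin scheme. The abstract does not say which mutation and crossover strategy the paper uses, so this variant is an assumption, as are the names `de_step`, `F` (scale factor) and `CR` (crossover rate). In an adaptive scheme of the kind described, a learned controller would supply `F` and `CR` at each generation; here they are fixed constants.

```python
import random


def de_step(pop, fitness, f_obj, F, CR, rng):
    """One generation of DE/rand/1/bin for minimization (needs at least 4 individuals)."""
    n, d = len(pop), len(pop[0])
    new_pop, new_fit = [], []
    for i in range(n):
        # Three distinct individuals, all different from the target i.
        r1, r2, r3 = rng.sample([j for j in range(n) if j != i], 3)
        j_rand = rng.randrange(d)  # guarantees at least one mutated coordinate
        trial = []
        for k in range(d):
            if rng.random() < CR or k == j_rand:
                # Mutation: base vector plus scaled difference vector.
                trial.append(pop[r1][k] + F * (pop[r2][k] - pop[r3][k]))
            else:
                # Binomial crossover keeps the target's coordinate.
                trial.append(pop[i][k])
        trial_fit = f_obj(trial)
        # Greedy selection: the trial replaces the target only if it is no worse.
        if trial_fit <= fitness[i]:
            new_pop.append(trial)
            new_fit.append(trial_fit)
        else:
            new_pop.append(pop[i])
            new_fit.append(fitness[i])
    return new_pop, new_fit


def sphere(x):
    """Simple test objective with its minimum at the origin."""
    return sum(v * v for v in x)


rng = random.Random(0)
pop = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(8)]
fit = [sphere(x) for x in pop]
for _ in range(30):
    # A learned parameter controller would choose F and CR here instead.
    pop, fit = de_step(pop, fit, sphere, F=0.5, CR=0.9, rng=rng)
```

Because selection is greedy, the best fitness in the population never gets worse from one generation to the next, whatever values of `F` and `CR` are supplied.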

Surrogate-assisted cooperative signal optimization for large-scale traffic networks

Mar 03, 2021

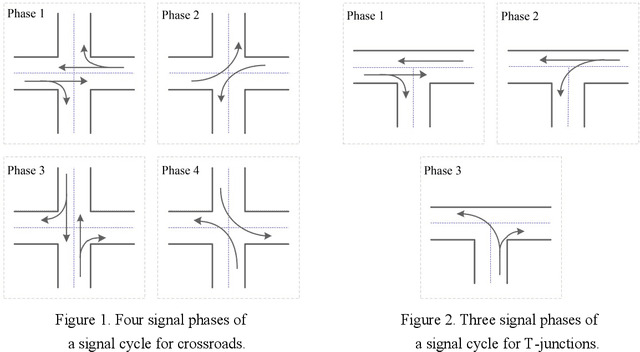

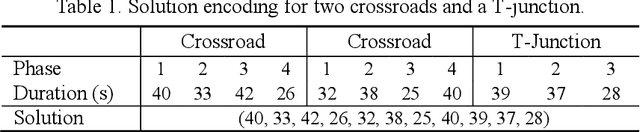

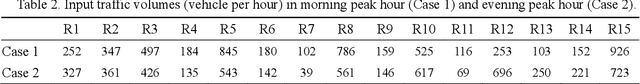

Reasonable setting of traffic signals can be very helpful in alleviating congestion in urban traffic networks. Meta-heuristic optimization algorithms have proved able to find high-quality signal timing plans. However, they generally suffer from performance deterioration when solving large-scale traffic signal optimization problems due to the huge search space and limited computational budget. To address this issue, this study proposes a surrogate-assisted cooperative signal optimization (SCSO) method. Unlike existing methods that directly deal with the entire traffic network, SCSO first decomposes it into a set of tractable sub-networks, and then achieves signal setting by cooperatively optimizing these sub-networks with a surrogate-assisted optimizer. The decomposition operation significantly narrows the search space of the whole traffic network, and the surrogate-assisted optimizer greatly lowers the computational burden by reducing the number of expensive traffic simulations. By taking the Newman fast algorithm, a radial basis function and a modified estimation of distribution algorithm as decomposer, surrogate model and optimizer, respectively, this study develops a concrete SCSO algorithm. To evaluate its effectiveness and efficiency, a large-scale traffic network involving crossroads and T-junctions is generated based on a real traffic network. Comparison with several existing meta-heuristic algorithms specially designed for traffic signal optimization demonstrates the superiority of SCSO in reducing the average delay time of vehicles.

MAPS-X: Explainable Multi-Robot Motion Planning via Segmentation

Nov 02, 2020

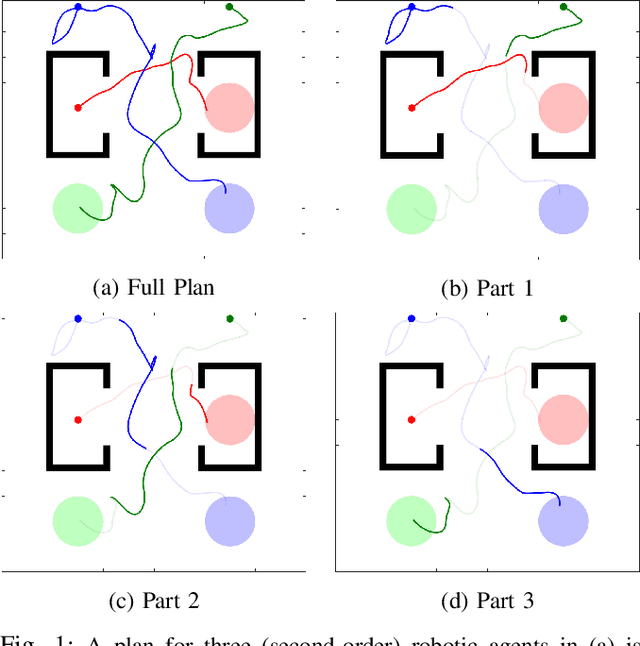

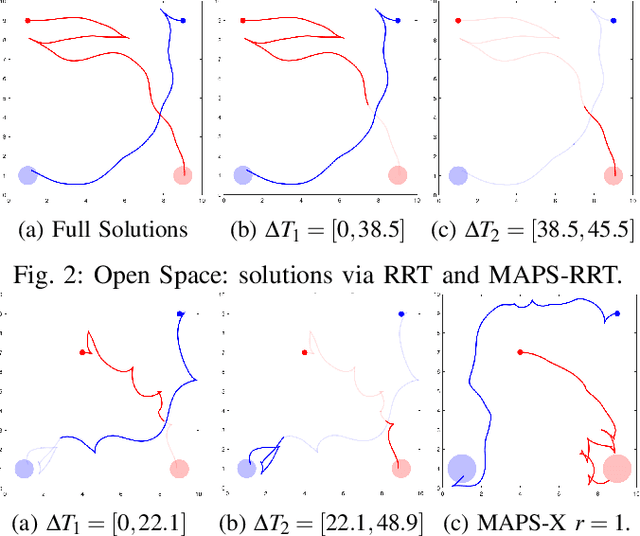

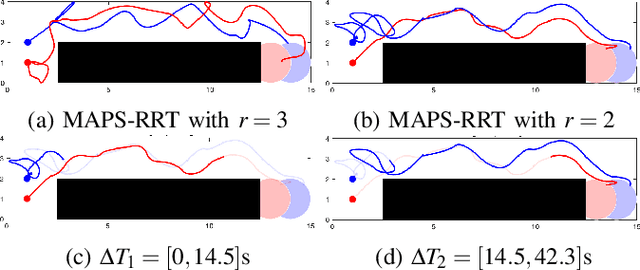

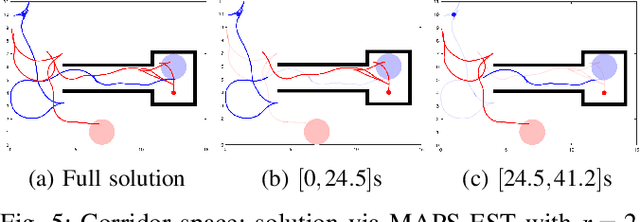

Traditional multi-robot motion planning (MMP) focuses on computing trajectories for multiple robots acting in an environment, such that the robots do not collide when the trajectories are taken simultaneously. In safety-critical applications, a human supervisor may want to verify that the plan is indeed collision-free. In this work, we propose a notion of explanation for a plan of MMP, based on visualization of the plan as a short sequence of images representing time segments, where in each time segment the trajectories of the agents are disjoint, clearly illustrating the safety of the plan. We show that standard notions of optimality (e.g., makespan) may create conflict with short explanations. Thus, we propose meta-algorithms, namely multi-agent plan segmenting-X (MAPS-X) and its lazy variant, that can be plugged on existing centralized sampling-based tree planners X to produce plans with good explanations using a desirable number of images. We demonstrate the efficacy of this explanation-planning scheme and extensively evaluate the performance of MAPS-X
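The notion of explanation described here, time segments in which the robots' trajectories are pairwise disjoint, can be illustrated with a small sketch. It is not MAPS-X, which the abstract says is plugged into a tree planner. It is a greedy post-hoc segmentation of an already computed plan, under the assumptions that trajectories are discretized into grid cells, that all paths have the same length, and that the plan is collision-free at each time step. The function name `segment_plan` is hypothetical.

```python
def segment_plan(paths):
    """Greedily split a multi-robot plan into time segments with disjoint footprints.

    paths: one equal-length list of hashable cells per robot (assumed collision-free
    at every single time step). Returns inclusive (start, end) time index pairs.
    Within each returned segment, no cell is visited by two different robots.
    """
    horizon = len(paths[0])
    n = len(paths)
    segments = []
    start = 0
    visited = [set() for _ in range(n)]  # cells each robot touched in the current segment
    for t in range(horizon):
        cells = [p[t] for p in paths]
        # A robot stepping onto a cell another robot already used in this segment
        # would make the two drawn trajectories intersect in the image.
        conflict = any(
            cells[i] in visited[j]
            for i in range(n)
            for j in range(n)
            if i != j
        )
        if conflict:
            segments.append((start, t - 1))  # close the segment before time t
            start = t
            visited = [set() for _ in range(n)]
        for i, c in enumerate(cells):
            visited[i].add(c)
    segments.append((start, horizon - 1))
    return segments


# Hypothetical plan: robot B later drives over cells that robot A used earlier.
path_a = [0, 1, 2, 3]
path_b = [10, 11, 1, 0]
images = segment_plan([path_a, path_b])
```

Each returned pair corresponds to one image in the explanation, so a plan with fewer segments yields a shorter explanation.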
