"Time": models, code, and papers

A Novel Approach to Vehicle Pose Estimation using Automotive Radar

Jul 20, 2021

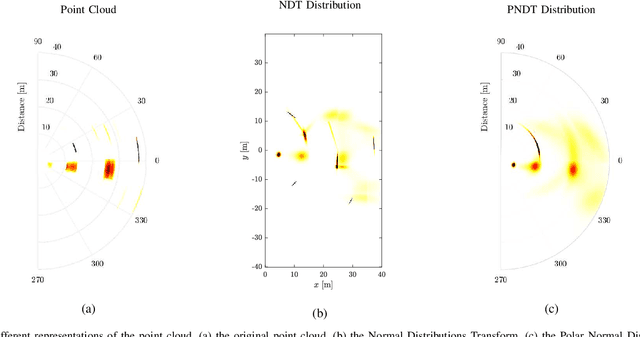

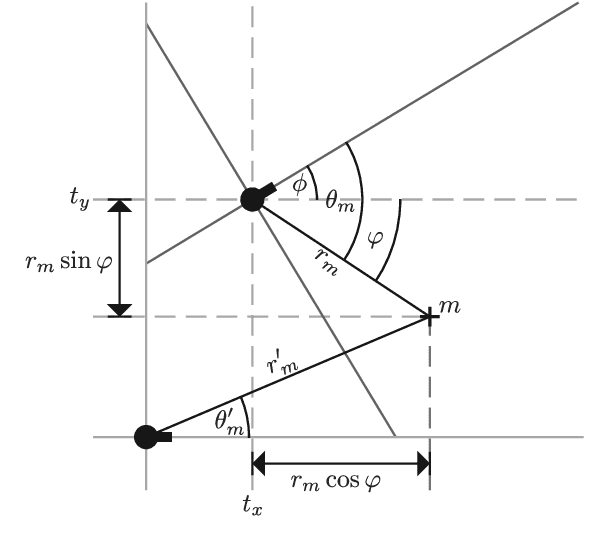

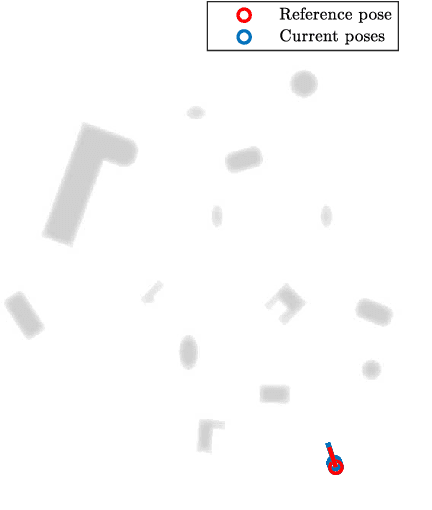

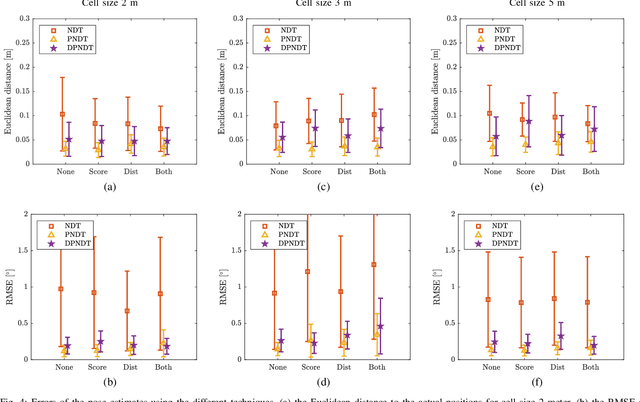

This paper presents a set of novel scan-matching techniques for vehicle pose estimation using automotive radar measurements. The proposed approach modifies the Normal Distributions Transform (NDT) -- a state-of-the-art scan-matching SLAM technique, widely used in lidar-based localization -- to account for particular aspects of radar environment perception. First, the polar NDT (PNDT) is introduced by solving the NDT problem in the polar coordinate system, natural for radar measurements. A better agreement between the measurement uncertainties and their representation in the scan-matching algorithm is achieved. Second, the extension of PNDT to take into account the Doppler measurements -- Doppler polar NDT (DPNDT) -- is proposed. Third, the SNR of detected targets is added to the optimization procedure to minimize the impact of RCS fluctuation. The improvement over the conventional NDT is demonstrated in numerical simulations and real data processing, showing the ability to decrease the localization error by a factor of 3 to 5, depending on the scenario, with a negligible increase in computational complexity. Finally, a DPNDT extension with the capability to compensate angular bias in array beam-forming is presented. Simulation results and real data processing show the possibility to correct it with the accuracy of $0.1^\circ$ almost in real-time.
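
As a rough illustration of the polar formulation described above, the sketch below fits a single Gaussian to reference detections expressed in (range, azimuth) and scores a candidate scan against it. It is a minimal single-cell sketch under stated assumptions, not the authors' PNDT: the function names `to_polar`, `fit_cell`, and `ndt_score` are hypothetical, azimuth wrap-around is ignored, and the Doppler and SNR terms are omitted.

```python
import numpy as np


def to_polar(points_xy):
    """Convert an (N, 2) array of Cartesian points to (range, azimuth)."""
    x, y = points_xy[:, 0], points_xy[:, 1]
    return np.column_stack((np.hypot(x, y), np.arctan2(y, x)))


def fit_cell(points):
    """Fit one Gaussian (mean, covariance) to the points in a single cell."""
    mean = points.mean(axis=0)
    # A small diagonal term keeps the covariance invertible (assumed value).
    cov = np.cov(points, rowvar=False) + 1e-6 * np.eye(points.shape[1])
    return mean, cov


def ndt_score(points, mean, cov):
    """Sum of unnormalised Gaussian likelihoods; higher means better alignment."""
    diff = points - mean
    inv = np.linalg.inv(cov)
    mahalanobis_sq = np.einsum("ij,jk,ik->i", diff, inv, diff)
    return float(np.exp(-0.5 * mahalanobis_sq).sum())
```

A scan matcher would search over candidate poses and keep the one that maximises this score; a scan shifted away from the reference should score lower than an aligned one.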

Unsupervised learning with sparse space-and-time autoencoders

Nov 26, 2018

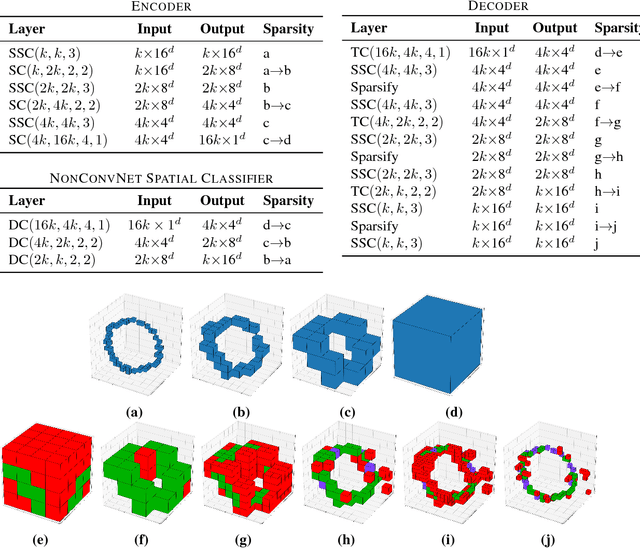

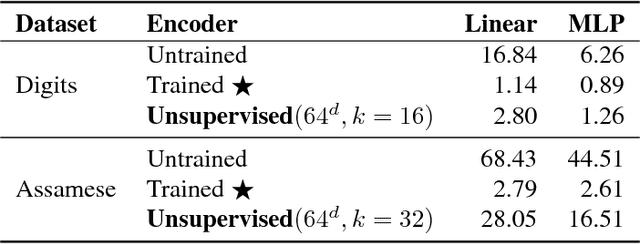

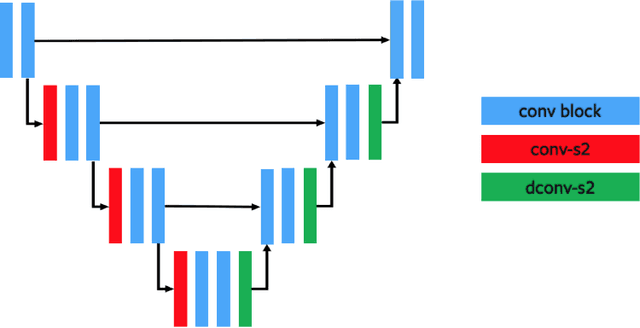

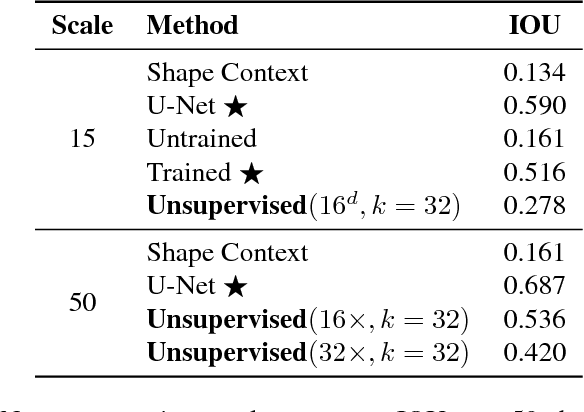

We use spatially-sparse two-, three-, and four-dimensional convolutional autoencoder networks to model sparse structures in 2D space, 3D space, and 3+1=4 dimensional space-time. We evaluate the resulting latent spaces by testing their usefulness for downstream tasks. Applications are to handwriting recognition in 2D, segmentation for parts in 3D objects, segmentation for objects in 3D scenes, and body-part segmentation for 4D wire-frame models generated from motion capture data.

Interactive query expansion for professional search applications

Jun 25, 2021

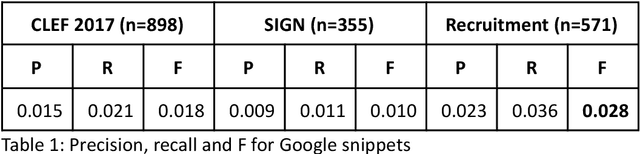

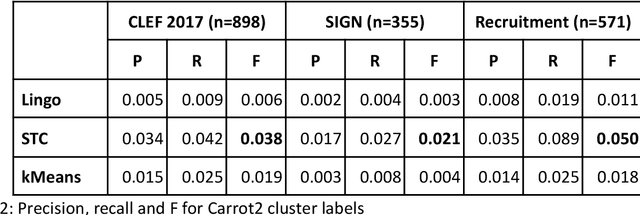

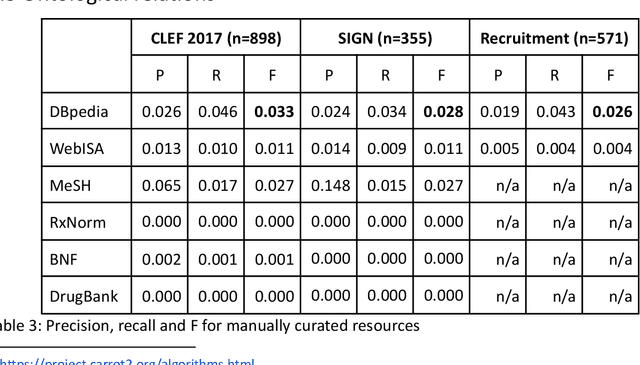

Knowledge workers (such as healthcare information professionals, patent agents and recruitment professionals) undertake work tasks where search forms a core part of their duties. In these instances, the search task is often complex and time-consuming and requires specialist expert knowledge to formulate accurate search strategies. Interactive features such as query expansion can play a key role in supporting these tasks. However, generating query suggestions within a professional search context requires that consideration be given to the specialist, structured nature of the search strategies these professionals employ. In this paper, we investigate a variety of query expansion methods applied to a collection of Boolean search strategies used in a variety of real-world professional search tasks. The results demonstrate the utility of context-free distributional language models and the value of using linguistic cues such as n-gram order to optimise the balance between precision and recall.
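
To make the idea of distributional query expansion concrete, the sketch below suggests the nearest terms to a query term by cosine similarity and joins them into a Boolean OR clause. Everything here is an assumption for illustration: the toy vectors in `TOY_VECTORS` are invented, and the helper names `suggest_terms` and `expand_clause` are hypothetical rather than taken from the paper.

```python
import math

# Hypothetical toy embeddings; a real system would load vectors
# trained on a domain corpus.
TOY_VECTORS = {
    "heart": [0.9, 0.1, 0.0],
    "cardiac": [0.85, 0.15, 0.05],
    "myocardial": [0.8, 0.2, 0.1],
    "patent": [0.0, 0.1, 0.95],
    "recruitment": [0.1, 0.9, 0.2],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def suggest_terms(term, vectors, top_k=2):
    """Return the top_k terms most similar to `term`, best first."""
    query = vectors[term]
    scored = [
        (cosine(query, vec), other)
        for other, vec in vectors.items()
        if other != term
    ]
    scored.sort(reverse=True)
    return [other for _, other in scored[:top_k]]


def expand_clause(term, vectors, top_k=2):
    """Build a Boolean OR clause from the term and its suggestions."""
    return "(" + " OR ".join([term] + suggest_terms(term, vectors, top_k)) + ")"
```

With these toy vectors, `expand_clause("heart", TOY_VECTORS)` yields `(heart OR cardiac OR myocardial)`. Raising `top_k` tends to trade precision for recall.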

Improving Global Forest Mapping by Semi-automatic Sample Labeling with Deep Learning on Google Earth Images

Aug 06, 2021

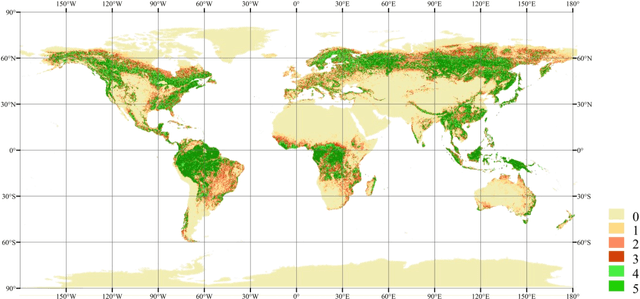

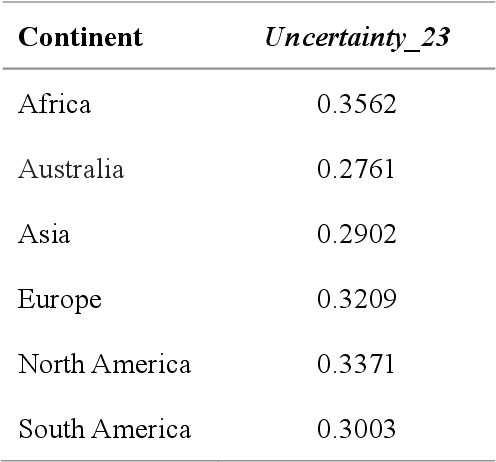

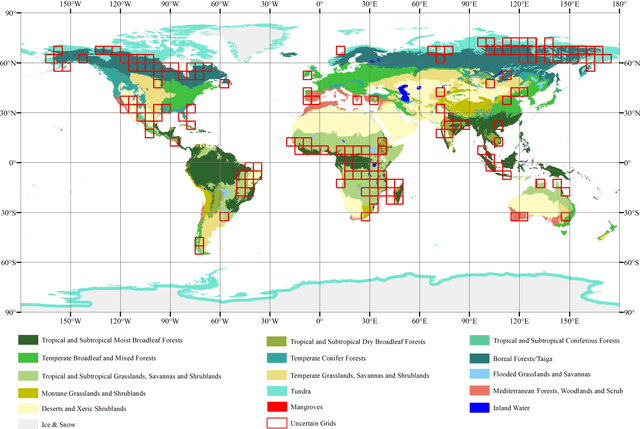

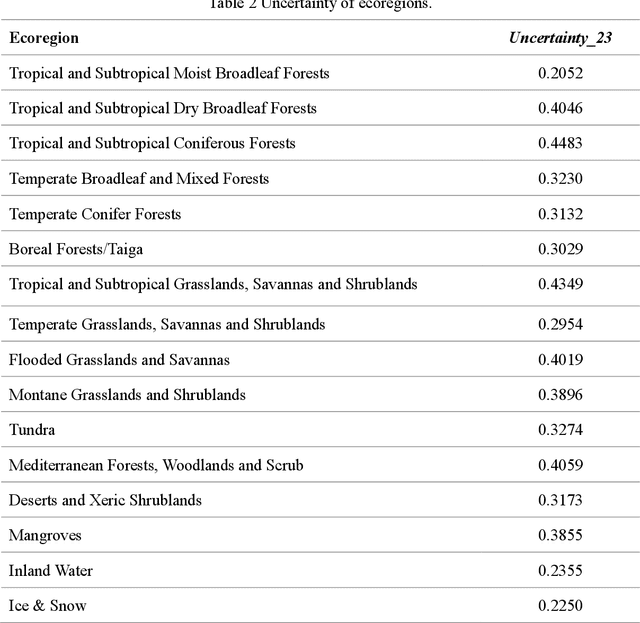

Global forest cover is critical to the provision of certain ecosystem services. With the advent of the Google Earth Engine cloud platform, fine-resolution global land cover mapping can be accomplished in a matter of days instead of years. The number of global forest cover (GFC) products has been steadily increasing in recent decades. However, it is hard for users to select a suitable one because of the great differences between these products, and the accuracy of these GFC products has not been verified on a global scale. To provide guidelines for users and producers, a validation sample set at the global level is urgently needed. However, this labeling task is time- and labor-consuming, which has been the main obstacle to the progress of global land cover mapping. In this research, a labor-efficient semi-automatic framework is introduced to build the largest-ever Forest Sample Set (FSS), containing 395,280 scattered samples categorized as forest, shrubland, grassland, impervious surface, etc. To provide guidelines for users, we comprehensively validated the local and global mapping accuracy of all existing 30 m GFC products, and analyzed and mapped their agreement. Moreover, to provide guidelines for producers, an optimal sampling strategy is proposed to improve global forest classification. Furthermore, a new global forest cover product named GlobeForest2020 has been generated, which improves on the previous highest state-of-the-art accuracies (obtained by Gong et al., 2017) by 2.77% in uncertain grids and by 1.11% in certain grids.

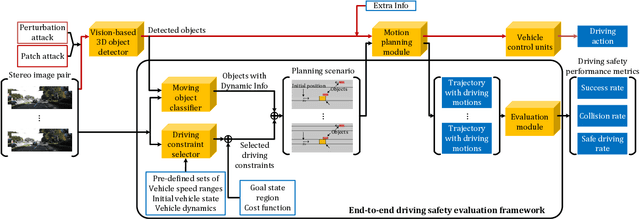

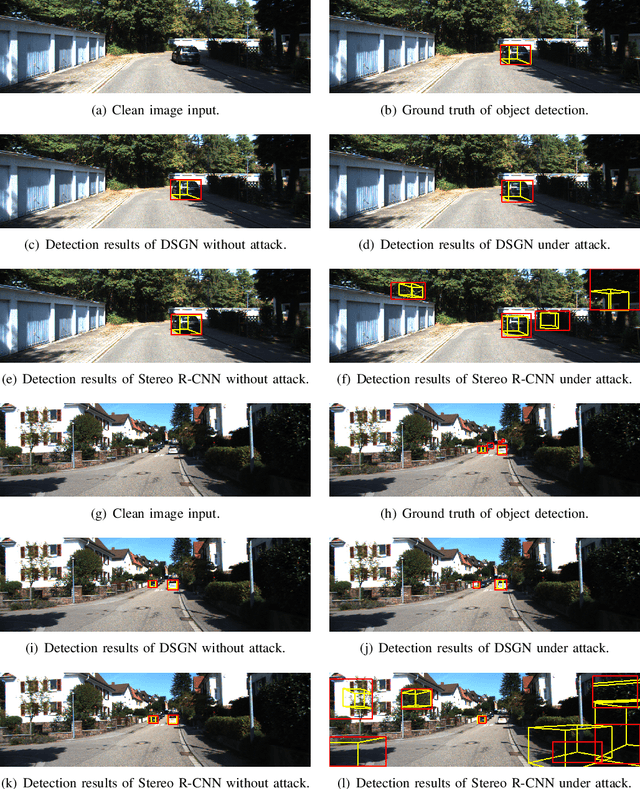

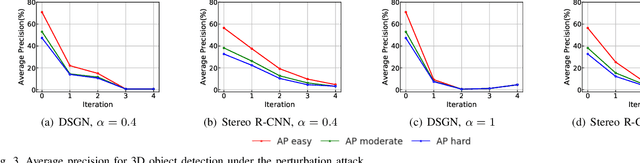

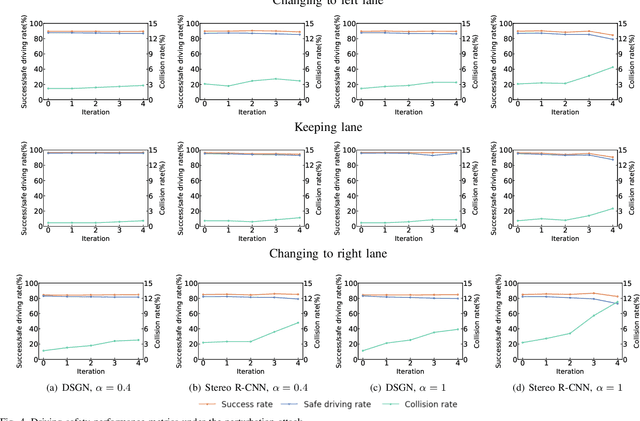

Evaluating Adversarial Attacks on Driving Safety in Vision-Based Autonomous Vehicles

Aug 06, 2021

In recent years, many deep learning models have been adopted in autonomous driving. At the same time, these models introduce new vulnerabilities that may compromise the safety of autonomous vehicles. Specifically, recent studies have demonstrated that adversarial attacks can cause a significant decline in detection precision of deep learning-based 3D object detection models. Although driving safety is the ultimate concern for autonomous driving, there is no comprehensive study on the linkage between the performance of deep learning models and the driving safety of autonomous vehicles under adversarial attacks. In this paper, we investigate the impact of two primary types of adversarial attacks, perturbation attacks and patch attacks, on the driving safety of vision-based autonomous vehicles rather than the detection precision of deep learning models. In particular, we consider two state-of-the-art models in vision-based 3D object detection, Stereo R-CNN and DSGN. To evaluate driving safety, we propose an end-to-end evaluation framework with a set of driving safety performance metrics. By analyzing the results of our extensive evaluation experiments, we find that (1) the attack's impact on the driving safety of autonomous vehicles and the attack's impact on the precision of 3D object detectors are decoupled, and (2) the DSGN model demonstrates stronger robustness to adversarial attacks than the Stereo R-CNN model. In addition, we further investigate the causes behind the two findings with an ablation study. The findings of this paper provide a new perspective to evaluate adversarial attacks and guide the selection of deep learning models in autonomous driving.

SynthSeg: Domain Randomisation for Segmentation of Brain MRI Scans of any Contrast and Resolution

Jul 20, 2021

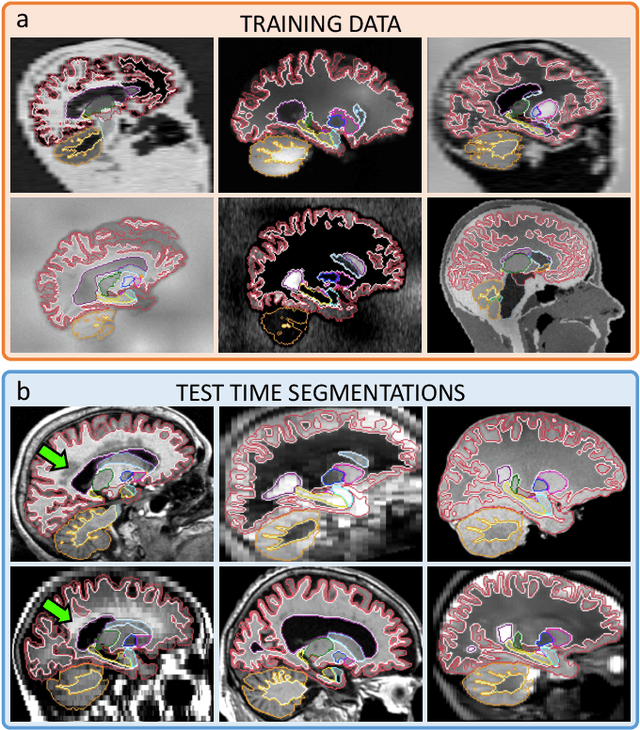

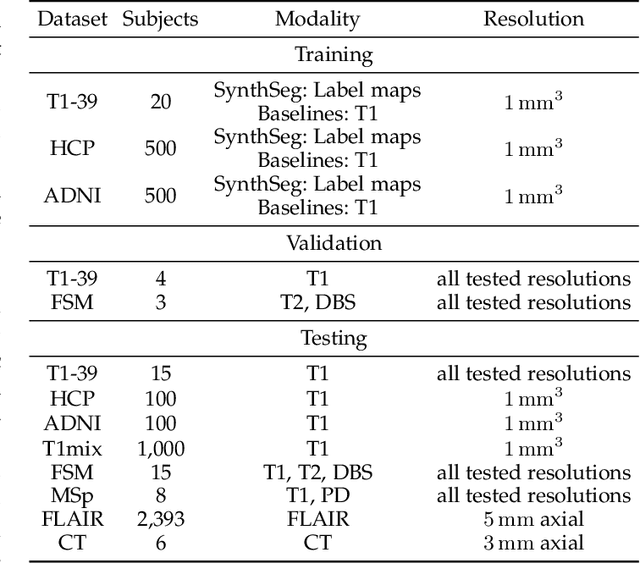

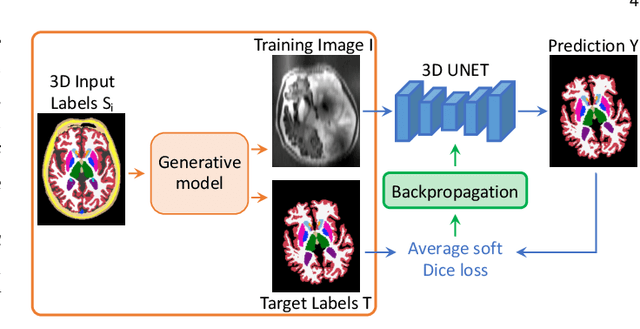

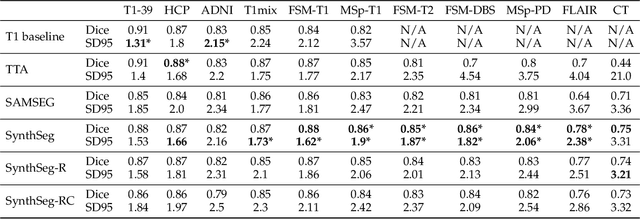

Despite advances in data augmentation and transfer learning, convolutional neural networks (CNNs) have difficulties generalising to unseen target domains. When applied to segmentation of brain MRI scans, CNNs are highly sensitive to changes in resolution and contrast: even within the same MR modality, decreases in performance can be observed across datasets. We introduce SynthSeg, the first segmentation CNN agnostic to brain MRI scans of any contrast and resolution. SynthSeg is trained with synthetic data sampled from a generative model inspired by Bayesian segmentation. Crucially, we adopt a *domain randomisation* strategy where we fully randomise the generation parameters to maximise the variability of the training data. Consequently, SynthSeg can segment preprocessed and unpreprocessed real scans of any target domain, without retraining or fine-tuning. Because SynthSeg only requires segmentations to be trained (no images), it can learn from label maps obtained automatically from existing datasets of different populations (e.g., with atrophy and lesions), thus achieving robustness to a wide range of morphological variability. We demonstrate SynthSeg on 5,500 scans of 6 modalities and 10 resolutions, where it exhibits unparalleled generalisation compared to supervised CNNs, test time adaptation, and Bayesian segmentation. The code and trained model are available at https://github.com/BBillot/SynthSeg.
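
The core of generating training images from label maps alone can be sketched as follows: each label receives a randomly drawn intensity distribution, so every synthetic image has a different contrast. This is a heavily simplified illustration, not the SynthSeg generative model (which the abstract says randomises the generation parameters more fully); the function name `synthesise_image` and the sampling ranges are assumptions.

```python
import numpy as np


def synthesise_image(label_map, rng):
    """Draw a synthetic image by sampling a random Gaussian per label.

    The intensity ranges below are arbitrary, assumed values.
    """
    label_map = np.asarray(label_map)
    image = np.zeros(label_map.shape, dtype=float)
    for label in np.unique(label_map):
        mean = rng.uniform(0.0, 255.0)  # random tissue contrast
        std = rng.uniform(1.0, 25.0)    # random within-tissue noise
        mask = label_map == label
        image[mask] = rng.normal(mean, std, size=int(mask.sum()))
    return image
```

Calling the function repeatedly with one generator produces a differently contrasted image from the same label map each time, and the label map itself serves as the segmentation target.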

Interpreting Criminal Charge Prediction and Its Algorithmic Bias via Quantum-Inspired Complex Valued Networks

Jul 14, 2021

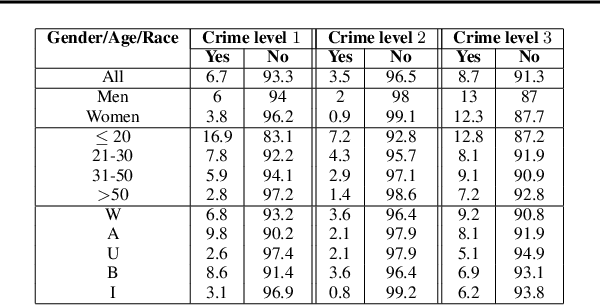

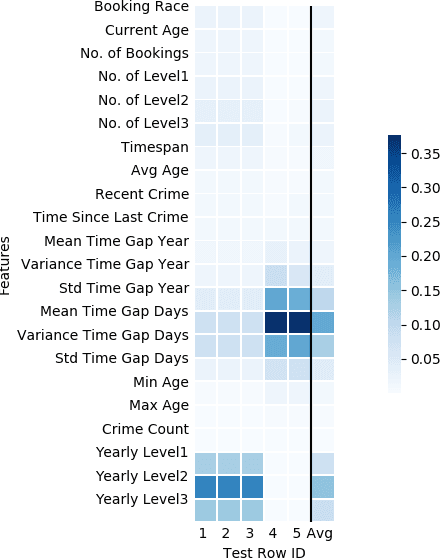

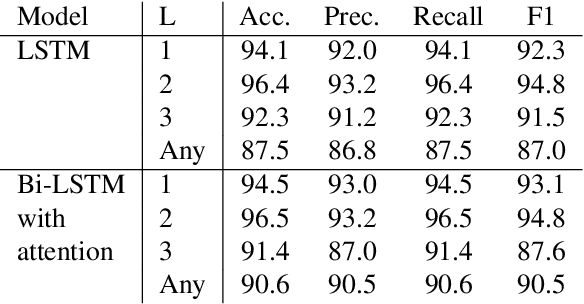

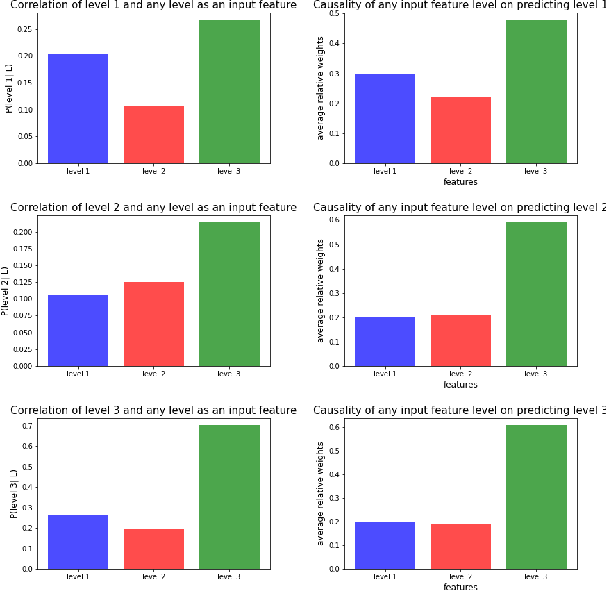

While predictive policing has become increasingly common in assisting with decisions in the criminal justice system, the use of these results is still controversial. Some software based on deep learning lacks accuracy (e.g., in F1 score), and, importantly, many decision processes are not transparent, causing doubt about decision bias, such as perceived racial and age disparities. This paper addresses bias issues with post-hoc explanations to provide a trustworthy prediction of whether a person will receive future criminal charges given their previous criminal records, by learning temporal behavior patterns over twenty years. Bi-LSTM relieves the vanishing gradient problem, attentional mechanisms allow learning and interpretation of feature importance, and complex-valued networks inspired by quantum physics facilitate a certain level of transparency in modeling the decision process. Our approach shows consistent and reliable prediction precision and recall on a real-life dataset. Our analysis of the importance of each input feature shows the critical causal impact on decision-making, suggesting that criminal histories are statistically significant factors, while identifiers, such as race and age, are not. Finally, our algorithm indicates that a suspect tends to increase crime severity level gradually over time rather than suddenly.

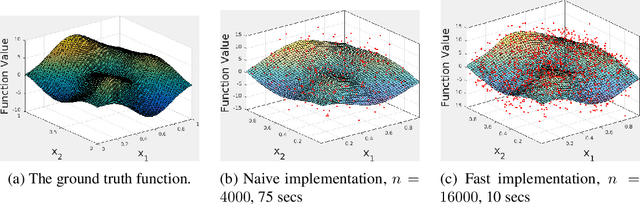

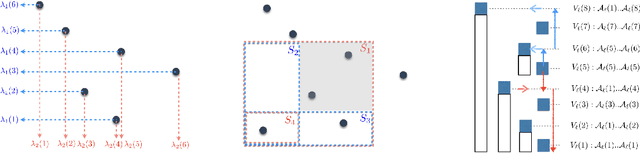

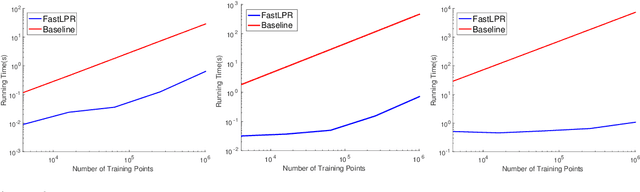

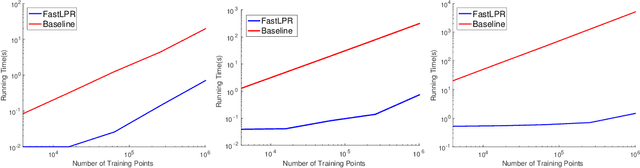

Near-Linear Time Local Polynomial Nonparametric Estimation

Feb 26, 2018

Local polynomial regression (Fan & Gijbels, 1996) is an important class of methods for nonparametric density estimation and regression problems. However, straightforward implementation of local polynomial regression has quadratic time complexity which hinders its applicability in large-scale data analysis. In this paper, we significantly accelerate the computation of local polynomial estimates by novel applications of multi-dimensional binary indexed trees (Fenwick, 1994). Both time and space complexities of our proposed algorithm are nearly linear in the number of inputs. Simulation results confirm the efficiency and effectiveness of our approach.
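
The data structure named above can be sketched in one dimension. The example below implements a binary indexed (Fenwick) tree and uses its range sums to evaluate a box-kernel local-constant estimate, the simplest member of the local polynomial family. It is a minimal sketch under stated assumptions, not the paper's multi-dimensional algorithm: the names `FenwickTree` and `box_kernel_estimate` are hypothetical, and the inputs are assumed to be sorted by `x`.

```python
import bisect


class FenwickTree:
    """Binary indexed tree supporting point updates and prefix sums in O(log n)."""

    def __init__(self, size):
        self.size = size
        self.tree = [0.0] * (size + 1)

    def add(self, index, value):
        """Add `value` to the element at 0-based `index`."""
        i = index + 1
        while i <= self.size:
            self.tree[i] += value
            i += i & (-i)

    def prefix_sum(self, count):
        """Sum of the first `count` elements."""
        total = 0.0
        i = count
        while i > 0:
            total += self.tree[i]
            i -= i & (-i)
        return total

    def range_sum(self, lo, hi):
        """Sum of elements with 0-based index in [lo, hi)."""
        return self.prefix_sum(hi) - self.prefix_sum(lo)


def box_kernel_estimate(xs_sorted, y_tree, x0, h):
    """Mean of y over points with |x - x0| <= h, or None if the window is empty."""
    lo = bisect.bisect_left(xs_sorted, x0 - h)
    hi = bisect.bisect_right(xs_sorted, x0 + h)
    if hi == lo:
        return None
    return y_tree.range_sum(lo, hi) / (hi - lo)
```

Each query costs two binary searches and two prefix sums, so evaluating the estimate at every input takes O(n log n) time in total rather than the quadratic cost of summing over all pairs.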

Impact of Rotary-Wing UAV Wobbling on Millimeter-wave Air-to-Ground Wireless Channel

Jul 14, 2021

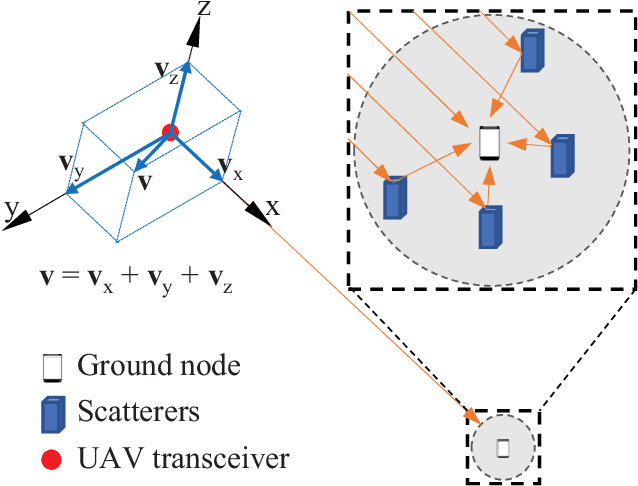

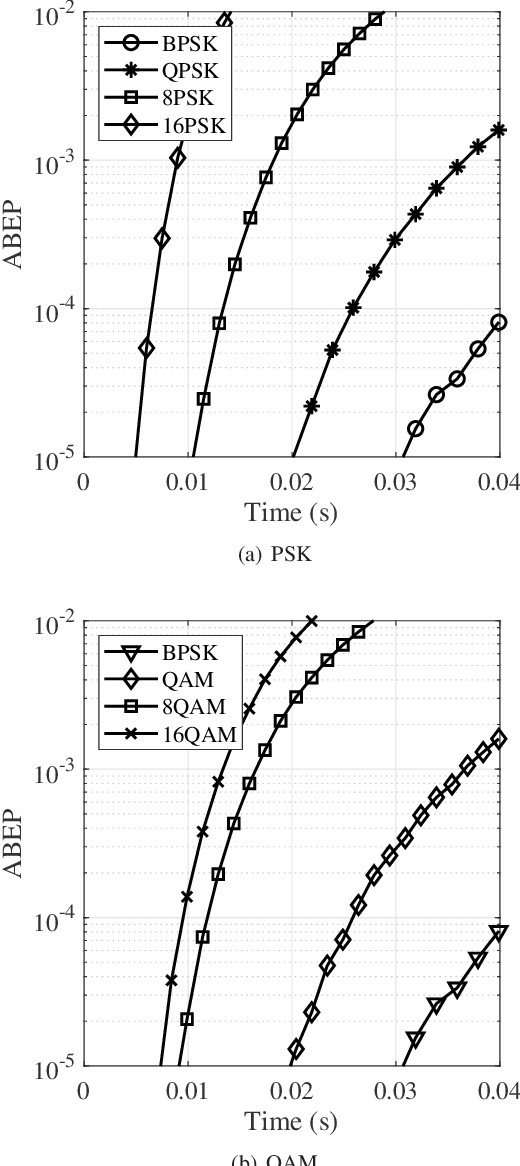

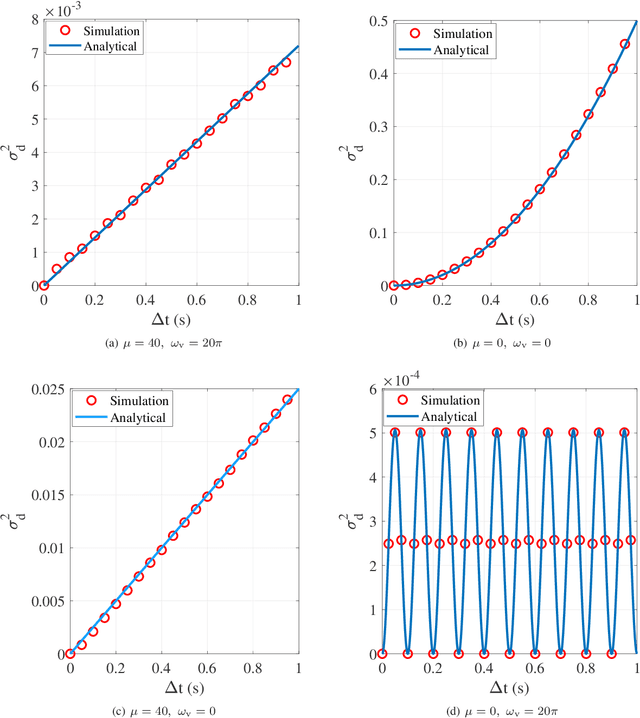

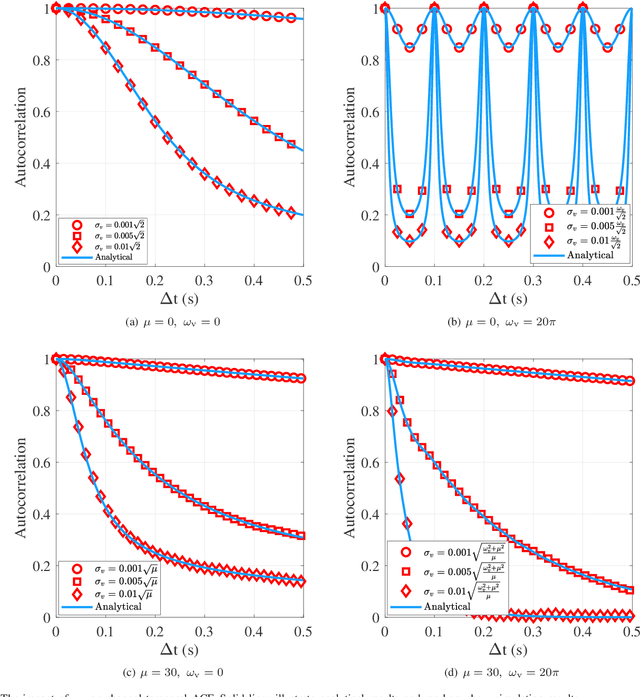

Millimeter-wave rotary-wing (RW) unmanned aerial vehicle (UAV) air-to-ground (A2G) links face an unpredictable Doppler effect arising from the inevitable wobbling of the RW UAV. Moreover, the time-varying channel characteristics during transmission lead to inaccurate channel estimation, which in turn degrades the bit error probability performance of the UAV A2G link. This paper studies the impact of mechanical wobbling on the Doppler effect of the millimeter-wave wireless channel between a hovering RW UAV and a ground node. The contributions of this paper are: i) modeling the wobbling process of a hovering RW UAV; ii) developing an analytical model to derive the channel temporal autocorrelation function (ACF) for the millimeter-wave RW UAV A2G link in a closed-form expression; and iii) investigating how RW UAV wobbling impacts the Doppler effect on the millimeter-wave RW UAV A2G link. Numerical results show that different RW UAV wobbling patterns impact the amplitude and the frequency of ACF oscillation in the millimeter-wave RW UAV A2G link. Under UAV wobbling, the channel temporal ACF decreases quickly, and the Doppler effect has a significant impact on the millimeter-wave A2G link.
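
For readers unfamiliar with the channel temporal ACF, the sketch below estimates it empirically from complex channel samples. It does not reproduce the paper's closed-form expression or its wobbling model; the function name `temporal_acf` is hypothetical, and any test signal fed to it (for example a sinusoidal phase wobble) is a toy assumption.

```python
import numpy as np


def temporal_acf(h, max_lag):
    """Empirical normalised autocorrelation of complex channel samples.

    Returns an array of length max_lag + 1 whose entry at lag 0 equals 1.
    """
    h = np.asarray(h, dtype=complex)
    power = np.mean(np.abs(h) ** 2)
    acf = np.empty(max_lag + 1, dtype=complex)
    for lag in range(max_lag + 1):
        # Correlate each sample with the one `lag` steps earlier.
        acf[lag] = np.mean(h[lag:] * np.conj(h[: len(h) - lag])) / power
    return acf
```

A static channel gives an ACF of 1 at every lag, whereas a channel whose phase oscillates over time produces an ACF that drops and oscillates with the lag.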

Nonstationary Portfolios: Diversification in the Spectral Domain

Jan 31, 2021

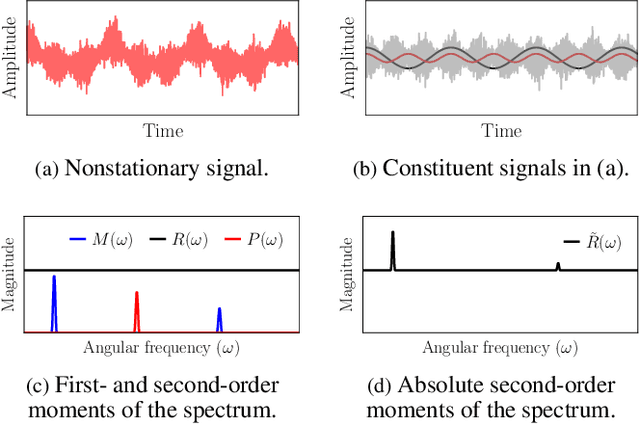

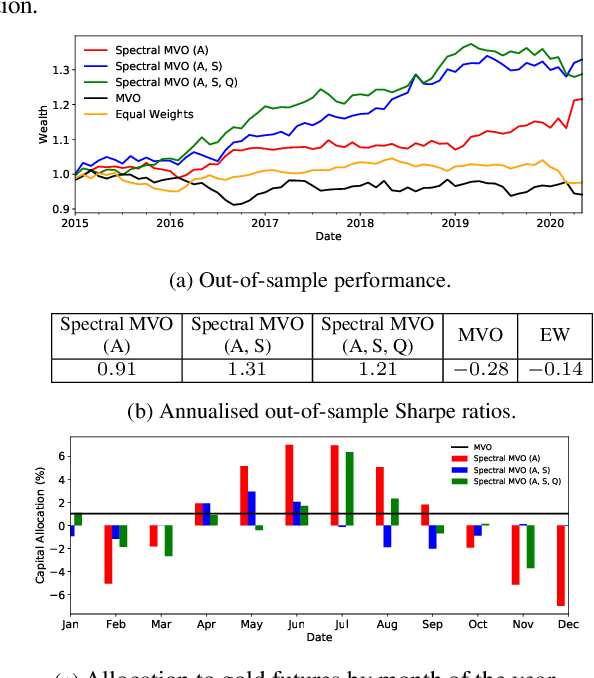

Classical portfolio optimization methods typically determine an optimal capital allocation through the implicit, yet critical, assumption of statistical time-invariance. Such models are inadequate for real-world markets as they employ standard time-averaging based estimators which suffer significant information loss if the market observables are non-stationary. To this end, we reformulate the portfolio optimization problem in the spectral domain to cater for the nonstationarity inherent to asset price movements and, in this way, allow for optimal capital allocations to be time-varying. Unlike existing spectral portfolio techniques, the proposed framework employs augmented complex statistics in order to exploit the interactions between the real and imaginary parts of the complex spectral variables, which in turn allows for the modelling of both harmonics and cyclostationarity in the time domain. The advantages of the proposed framework over traditional methods are demonstrated through numerical simulations using real-world price data.
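
The augmented complex statistics mentioned above combine the ordinary covariance with the pseudo-covariance, which captures the interaction between real and imaginary parts. The sketch below computes the standard augmented covariance matrix of a complex data matrix. It illustrates the statistic only, not the authors' portfolio optimisation; the function name `augmented_covariance` is hypothetical, and the input could be, for instance, spectral coefficients of asset returns (an assumption).

```python
import numpy as np


def augmented_covariance(z):
    """Augmented covariance of complex samples z with shape (n_samples, n_vars).

    Returns the (2d, 2d) block matrix [[C, P], [conj(P), conj(C)]], where
    C = E[z z^H] is the covariance and P = E[z z^T] the pseudo-covariance.
    """
    z = np.asarray(z, dtype=complex)
    z = z - z.mean(axis=0)
    n = z.shape[0]
    cov = z.T @ z.conj() / n   # entry (i, j) = mean of z_i * conj(z_j)
    pcov = z.T @ z / n         # entry (i, j) = mean of z_i * z_j
    return np.block([[cov, pcov], [pcov.conj(), cov.conj()]])
```

For purely real data the pseudo-covariance equals the covariance, while for circular complex data it vanishes in expectation. Methods that use only `C` therefore discard whatever information `P` carries.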
