"Time": models, code, and papers

Hierarchical Sketch Induction for Paraphrase Generation

Mar 21, 2022

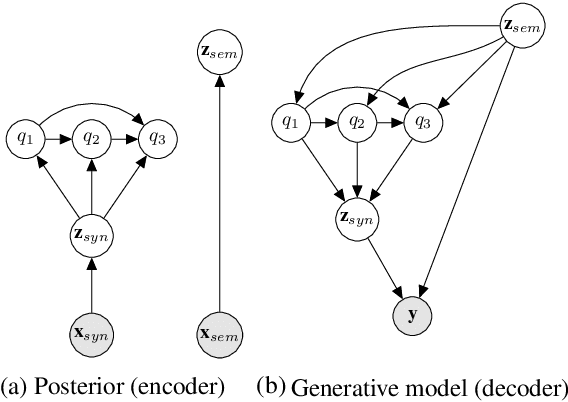

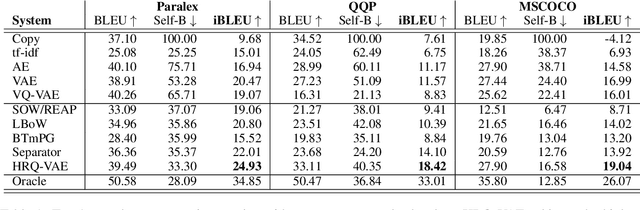

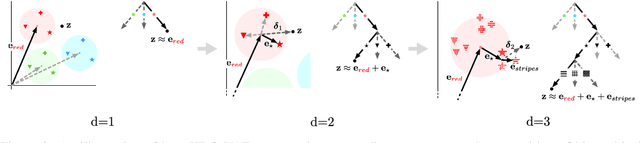

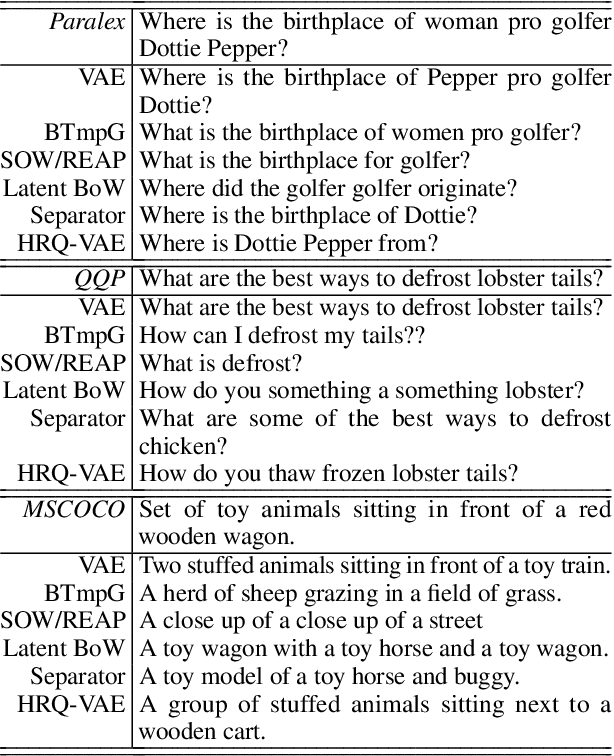

We propose a generative model of paraphrase generation that encourages syntactic diversity by conditioning on an explicit syntactic sketch. We introduce Hierarchical Refinement Quantized Variational Autoencoders (HRQ-VAE), a method for learning decompositions of dense encodings as a sequence of discrete latent variables that make iterative refinements of increasing granularity. This hierarchy of codes is learned through end-to-end training, and represents fine-to-coarse grained information about the input. We use HRQ-VAE to encode the syntactic form of an input sentence as a path through the hierarchy, allowing us to more easily predict syntactic sketches at test time. Extensive experiments, including a human evaluation, confirm that HRQ-VAE learns a hierarchical representation of the input space and generates paraphrases of higher quality than previous systems.
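The idea of encoding a dense vector as a path of discrete codes, each refining what the previous ones left unexplained, can be illustrated with a minimal residual-quantization sketch. This is not the HRQ-VAE training procedure: the codebooks below are hand-picked rather than learned end to end, the nearest-code lookup is greedy, and the function names `encode` and `decode` are hypothetical.

```python
def nearest(codebook, vec):
    """Index of the codebook entry closest to vec (squared Euclidean distance)."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((c - v) ** 2 for c, v in zip(codebook[i], vec)),
    )


def encode(vec, codebooks):
    """Greedily quantize vec level by level; return the path of code indices."""
    residual = list(vec)
    path = []
    for codebook in codebooks:
        idx = nearest(codebook, residual)
        path.append(idx)
        # Subtract the chosen code so the next level only sees what is left.
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return path


def decode(path, codebooks):
    """Reconstruct an approximation by summing the chosen code at every level."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(path, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out


# Hand-picked toy codebooks (an assumption): each level covers a smaller scale.
CODEBOOKS = [
    [[0.0, 0.0], [10.0, 10.0]],
    [[-1.0, -1.0], [1.0, 1.0]],
    [[0.25, 0.0], [-0.25, 0.0]],
]

path = encode([11.2, 10.9], CODEBOOKS)
approx = decode(path, CODEBOOKS)
print(path, approx)
```

Truncating the path after the first one or two indices still decodes to a coarser approximation of the same vector, which is what makes a path through such a hierarchy easier to predict than a single flat code.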

Accurate Molecular-Orbital-Based Machine Learning Energies via Unsupervised Clustering of Chemical Space

Apr 21, 2022

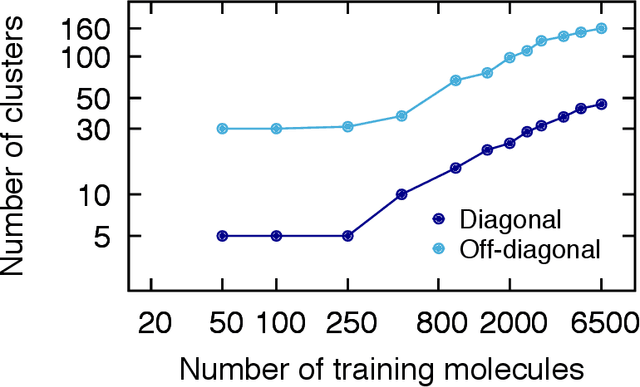

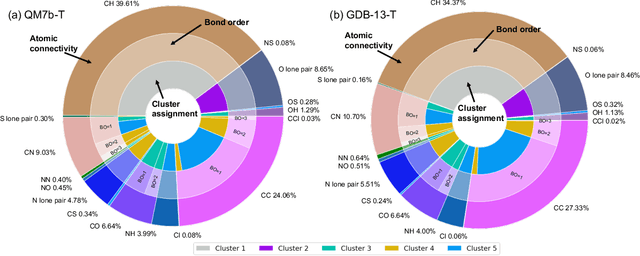

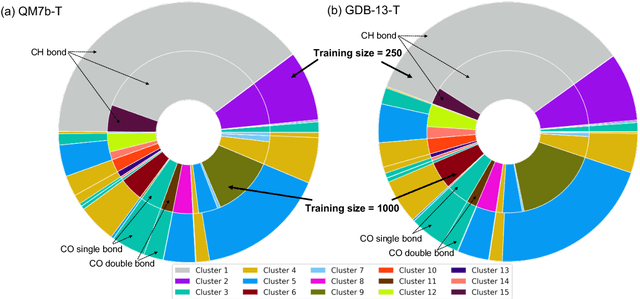

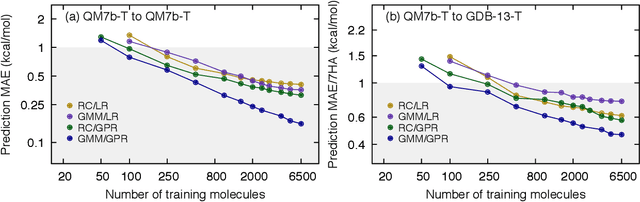

We introduce an unsupervised clustering algorithm to improve training efficiency and accuracy in predicting energies using molecular-orbital-based machine learning (MOB-ML). This work determines clusters via the Gaussian mixture model (GMM) in an entirely automatic manner and simplifies an earlier supervised clustering approach [J. Chem. Theory Comput., 15, 6668 (2019)] by eliminating both the necessity for user-specified parameters and the training of an additional classifier. Unsupervised clustering results from GMM have the advantage of accurately reproducing chemically intuitive groupings of frontier molecular orbitals and of improving in performance as the number of training examples increases. The resulting clusters from supervised or unsupervised clustering are further combined with scalable Gaussian process regression (GPR) or linear regression (LR) to learn molecular energies accurately by generating a local regression model in each cluster. Among all four combinations of regressors and clustering methods, GMM combined with scalable exact Gaussian process regression (GMM/GPR) is the most efficient training protocol for MOB-ML. The numerical tests of molecular energy learning on thermalized datasets of drug-like molecules demonstrate the improved accuracy, transferability, and learning efficiency of GMM/GPR over other training protocols for MOB-ML, i.e., supervised regression-clustering combined with GPR (RC/GPR) and GPR without clustering. GMM/GPR also provides the best molecular energy predictions compared with those from the literature on the same benchmark datasets. With a lower scaling, GMM/GPR has a 10.4-fold speedup in wall-clock training time compared with scalable exact GPR with a training size of 6500 QM7b-T molecules.
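The cluster-then-regress pattern described above can be sketched in a few lines. This is a toy illustration under strong assumptions, not the MOB-ML pipeline: the features are one-dimensional, the mixture parameters in `COMPONENTS` are fixed by hand instead of being fitted, each point is hard-assigned to its most probable component, and the local model is an ordinary least-squares line (the LR option) rather than GPR. All names are hypothetical.

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of a 1D normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def assign_cluster(x, components):
    """Hard-assign x to the mixture component with the largest weighted density."""
    scores = [w * gaussian_pdf(x, m, s) for (w, m, s) in components]
    return max(range(len(scores)), key=scores.__getitem__)


def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def fit_local_models(xs, ys, components):
    """Group the training points by cluster and fit one line per cluster."""
    groups = {}
    for x, y in zip(xs, ys):
        gx, gy = groups.setdefault(assign_cluster(x, components), ([], []))
        gx.append(x)
        gy.append(y)
    return {k: fit_line(gx, gy) for k, (gx, gy) in groups.items()}


def predict(x, components, models):
    """Route x to its cluster, then apply that cluster's local model."""
    slope, intercept = models[assign_cluster(x, components)]
    return slope * x + intercept


# Assumed mixture: (weight, mean, std) per component, chosen by hand.
COMPONENTS = [(0.5, 0.0, 1.0), (0.5, 10.0, 1.0)]
XS = [-1.0, 0.0, 1.0, 9.0, 10.0, 11.0]
YS = [-1.0, 1.0, 3.0, -4.0, -5.0, -6.0]  # y = 2x + 1 near 0, y = -x + 5 near 10

MODELS = fit_local_models(XS, YS, COMPONENTS)
print(MODELS, predict(0.5, COMPONENTS, MODELS), predict(10.5, COMPONENTS, MODELS))
```

Because each local model only sees the points in its own cluster, the per-cluster fits are smaller than one global fit, which is the intuition behind the training-time savings the abstract reports.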

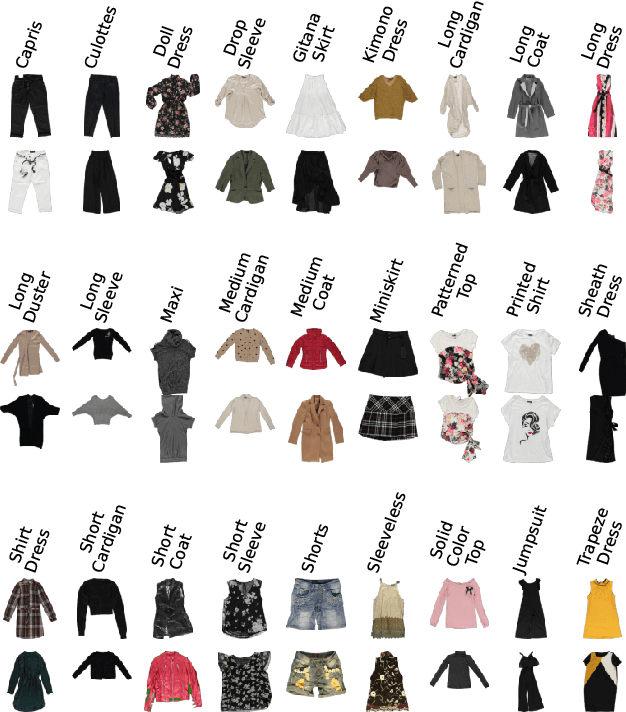

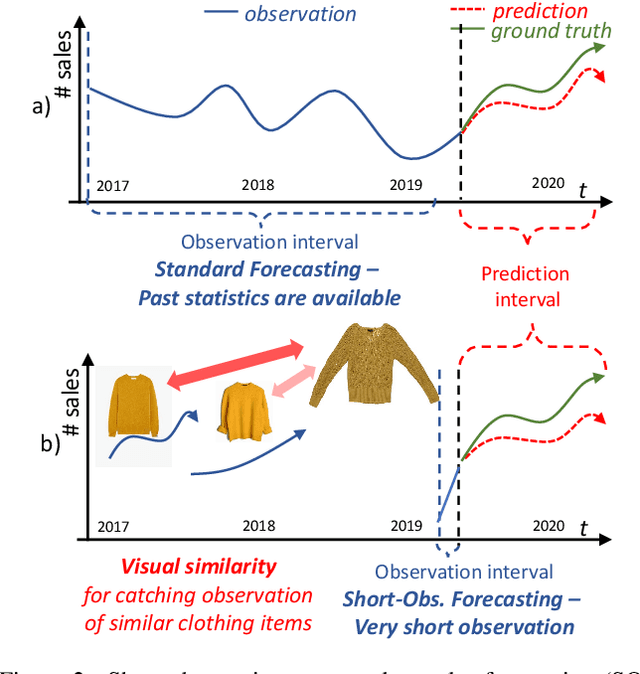

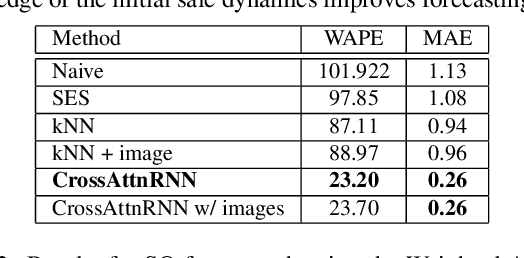

The multi-modal universe of fast-fashion: the Visuelle 2.0 benchmark

Apr 14, 2022

We present Visuelle 2.0, the first dataset useful for facing diverse prediction problems that a fast-fashion company has to manage routinely. Furthermore, we demonstrate how the use of computer vision is substantial in this scenario. Visuelle 2.0 contains data for 6 seasons / 5355 clothing products of Nuna Lie, a famous Italian company with hundreds of shops located in different areas within the country. In particular, we focus on a specific prediction problem, namely short-observation new product sale forecasting (SO-fore). SO-fore assumes that the season has started and a set of new products is on the shelves of the different stores. The goal is to forecast the sales for a particular horizon, given a short available past (a few weeks), since no earlier statistics are available. To be successful, SO-fore approaches should capture this short past and exploit other modalities or exogenous data. To these aims, Visuelle 2.0 is equipped with disaggregated data at the item-shop level and multi-modal information for each clothing item, allowing computer vision approaches to come into play. The main message that we deliver is that the use of image data with deep networks boosts the performance obtained when using the time series in long-term forecasting scenarios, improving the WAPE by 8.2% and the MAE by 7.7%. The dataset is available at: https://humaticslab.github.io/forecasting/visuelle.
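For readers unfamiliar with the two metrics quoted above, the following sketch computes them using their common definitions: MAE as the mean absolute error, and WAPE as the sum of absolute errors divided by the sum of absolute actual values, expressed as a percentage. The sales figures are made up for illustration and are not taken from Visuelle 2.0; the benchmark's exact evaluation protocol may differ.

```python
def mae(actual, forecast):
    """Mean absolute error between actual and forecast sales."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def wape(actual, forecast):
    """Weighted absolute percentage error: total absolute error over total actual volume."""
    total_error = sum(abs(a - f) for a, f in zip(actual, forecast))
    total_actual = sum(abs(a) for a in actual)
    return 100.0 * total_error / total_actual


# Made-up weekly sales for one product (illustrative only).
ACTUAL = [10, 20, 30, 40]
FORECAST = [12, 18, 33, 35]

print(mae(ACTUAL, FORECAST), wape(ACTUAL, FORECAST))
```

Unlike a per-item percentage error, WAPE weights each error by sales volume, so low-selling items with near-zero actual sales do not dominate the score.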

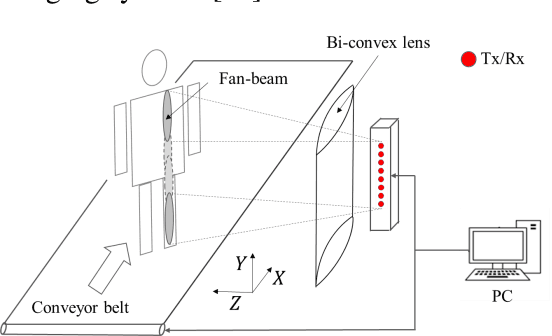

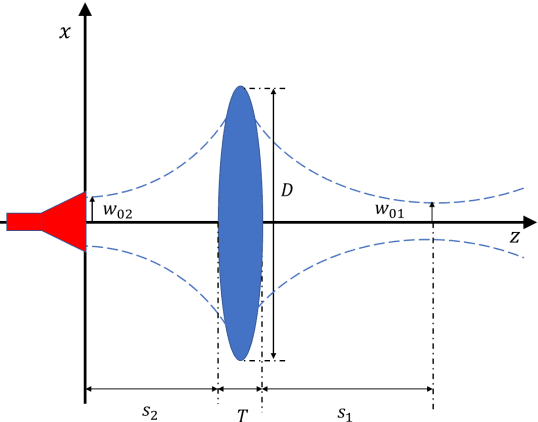

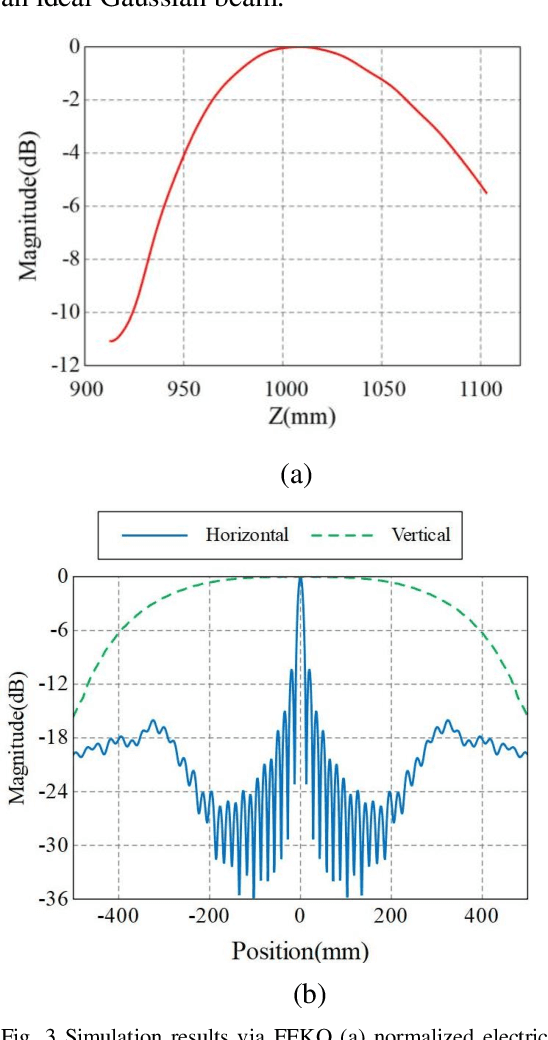

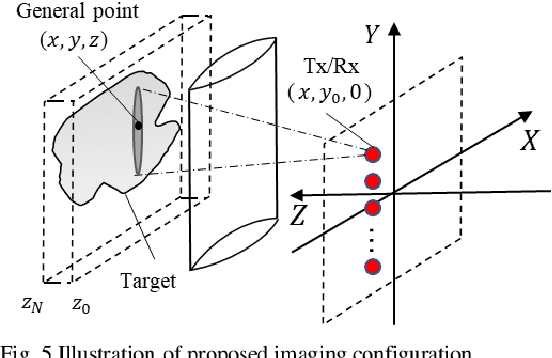

Active millimeter wave three-dimensional scan real-time imaging mechanism with a line antenna array

Feb 08, 2021

Active millimeter wave (AMMW) imaging is of interest because it plays important roles in a wide variety of applications, from nondestructive testing to medical diagnosis. Current AMMW imaging systems have a high spatial resolution and can realize three-dimensional (3D) imaging. However, conventional AMMW imaging systems based on a synthetic aperture require either time-consuming acquisition or time-consuming reconstruction. AMMW imaging systems based on a real aperture are capable of real-time imaging, but they need a large aperture and a complex two-dimensional (2D) scan structure to obtain 3D images. In addition, most AMMW imaging systems need the targets to keep still and hold a special posture while being screened, which limits throughput. Here, by using beam control techniques and fast post-processing algorithms, we demonstrate an AMMW 3D scan real-time imaging mechanism with a line antenna array, which realizes 3D real-time imaging through a simple one-dimensional (1D) linear movement while achieving a satisfactory throughput (over 2000 people per hour, 10 times that of commercial AMMW imaging systems) and a low system cost. First, the original spherical beam lines generated by the linear antenna array are modulated into fan beam lines via a bi-convex cylindrical lens. Then the holographic imaging algorithm is used to preliminarily focus the echo data of the imaged object. Finally, the defocus blur is corrected rapidly by deconvolution to obtain high-resolution images. Because our method does not need targets to keep still, has a low system cost, and achieves 3D real-time imaging with a satisfactory throughput, this work has the potential to serve as a foundation for future short-range AMMW imaging systems, which can be used in a variety of fields such as security inspection and medical diagnosis.

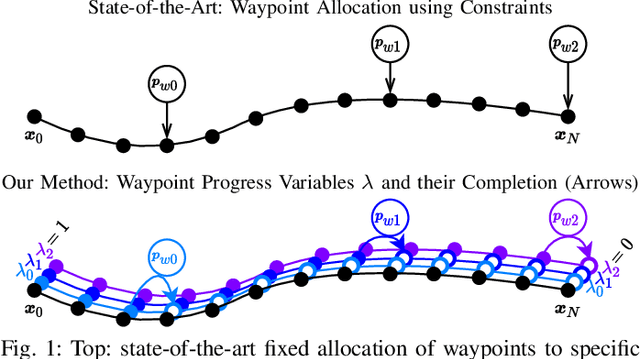

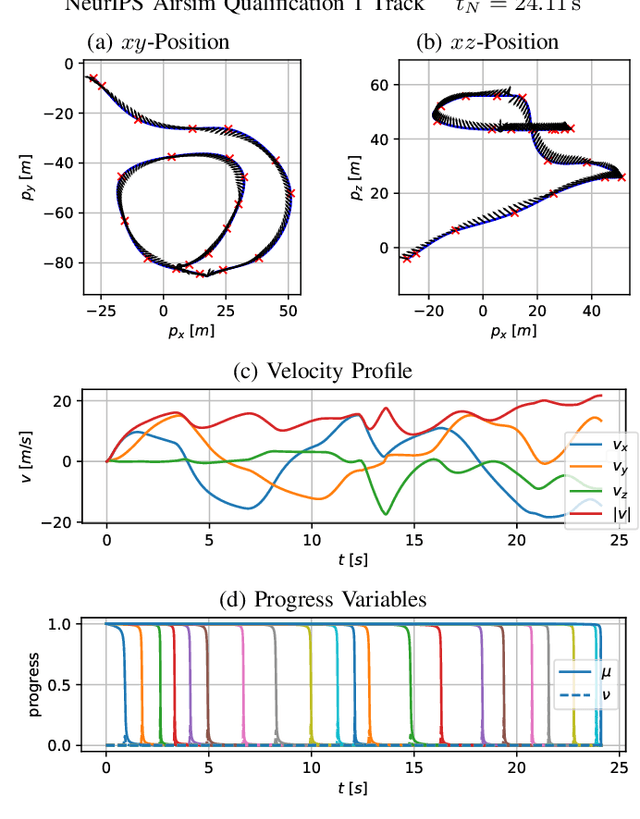

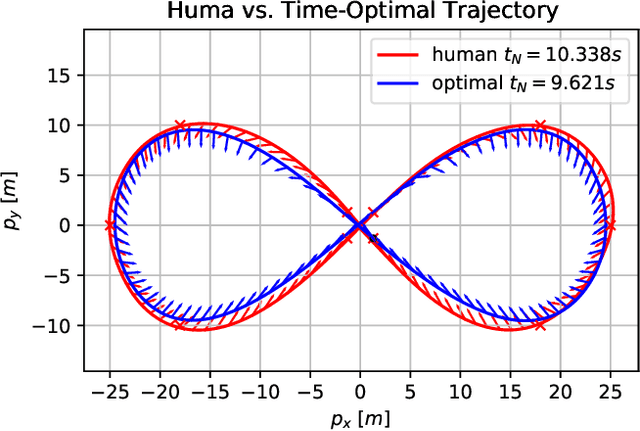

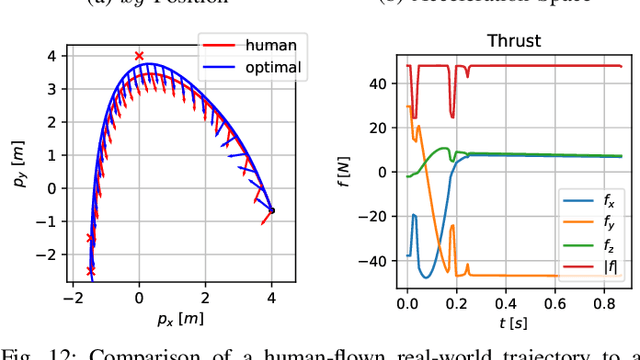

CPC: Complementary Progress Constraints for Time-Optimal Quadrotor Trajectories

Jul 13, 2020

In many mobile robotics scenarios, such as drone racing, the goal is to generate a trajectory that passes through multiple waypoints in minimal time. This problem is referred to as time-optimal planning. State-of-the-art approaches either use polynomial trajectory formulations, which are suboptimal due to their smoothness, or numerical optimization, which requires waypoints to be allocated as costs or constraints to specific discrete-time nodes. For time-optimal planning, this time-allocation is a priori unknown and renders traditional approaches incapable of producing truly time-optimal trajectories. We introduce a novel formulation of progress bound to waypoints by a complementarity constraint. While the progress variables indicate the completion of a waypoint, change of this progress is only allowed in local proximity to the waypoint via complementarity constraints. This enables the simultaneous optimization of the trajectory and the time-allocation of the waypoints. To the best of our knowledge, this is the first approach allowing for truly time-optimal trajectory planning for quadrotors and other systems. We perform and discuss evaluations on optimality and convexity, compare to other related approaches, and qualitatively to an expert-human baseline.

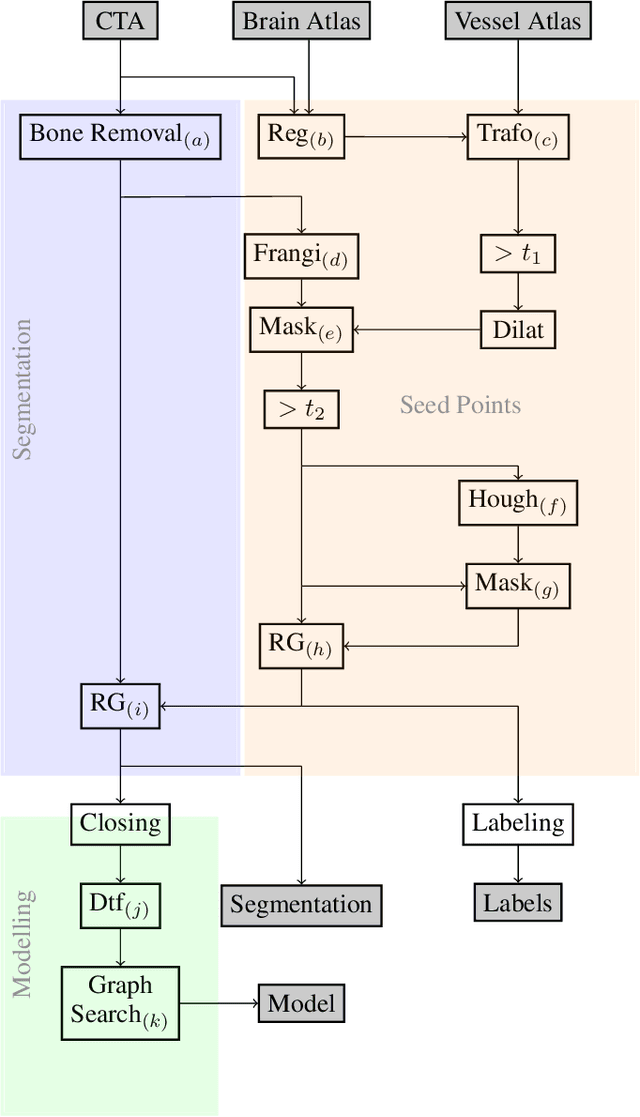

An Algorithm for the Labeling and Interactive Visualization of the Cerebrovascular System of Ischemic Strokes

Apr 26, 2022

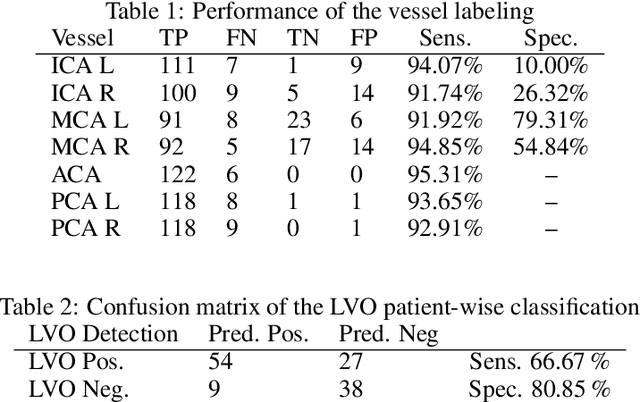

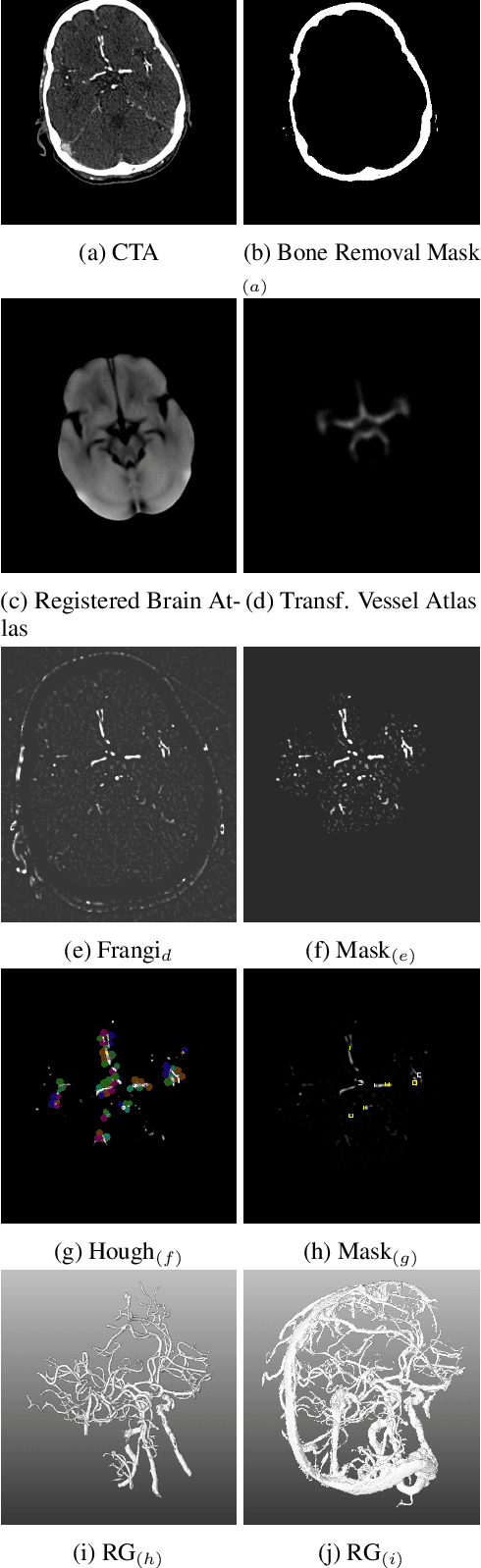

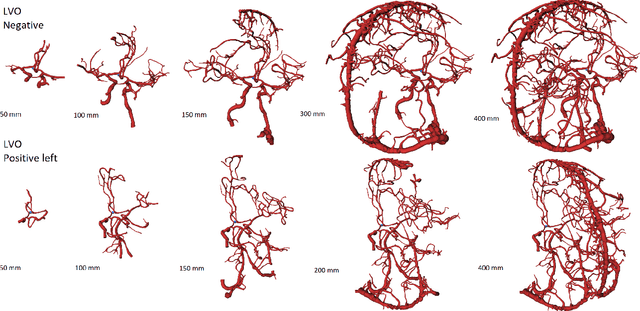

During the diagnosis of ischemic strokes, the Circle of Willis and its surrounding vessels are the arteries of interest. Their visualization in case of an acute stroke is often enabled by Computed Tomography Angiography (CTA). Still, the identification and analysis of the cerebral arteries remain time-consuming in such scans due to the large number of peripheral vessels, which may disturb the visual impression. In previous work we proposed VirtualDSA++, an algorithm designed to segment and label the cerebrovascular tree on CTA scans. Especially with stroke patients, labeling is a delicate procedure, as in the worst case whole hemispheres may not be present due to impeded perfusion. Hence, we extended the labeling mechanism for the cerebral arteries to identify occluded vessels. In the work at hand, we place the algorithm in a clinical context by evaluating the labeling and occlusion detection on stroke patients, where we have achieved labeling sensitivities between 92% and 95%, comparable to other works. To the best of our knowledge, ours is the first work to address labeling and occlusion detection at once, whereby a sensitivity of 67% and a specificity of 81% were obtained for the latter. VirtualDSA++ also automatically segments and models the intracranial system, which we further used in a deep-learning-driven follow-up work. We present the generic concept of iterative systematic search for pathways on all nodes of said model, which enables new interactive features. As examples, we derive in detail, firstly, the interactive planning of vascular interventions such as mechanical thrombectomy and, secondly, the interactive suppression of vessel structures that are not of interest in diagnosing strokes (such as veins). We discuss both features as well as further possibilities emerging from the proposed concept.

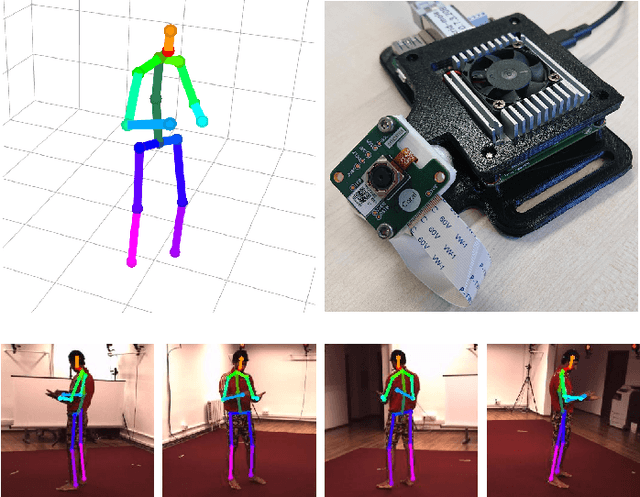

Real-Time Multi-View 3D Human Pose Estimation using Semantic Feedback to Smart Edge Sensors

Jun 28, 2021

We present a novel method for estimation of 3D human poses from a multi-camera setup, employing distributed smart edge sensors coupled with a backend through a semantic feedback loop. 2D joint detection for each camera view is performed locally on a dedicated embedded inference processor. Only the semantic skeleton representation is transmitted over the network and raw images remain on the sensor board. 3D poses are recovered from 2D joints on a central backend, based on triangulation and a body model which incorporates prior knowledge of the human skeleton. A feedback channel from backend to individual sensors is implemented on a semantic level. The allocentric 3D pose is backprojected into the sensor views where it is fused with 2D joint detections. The local semantic model on each sensor can thus be improved by incorporating global context information. The whole pipeline is capable of real-time operation. We evaluate our method on three public datasets, where we achieve state-of-the-art results and show the benefits of our feedback architecture, as well as in our own setup for multi-person experiments. Using the feedback signal improves the 2D joint detections and in turn the estimated 3D poses.

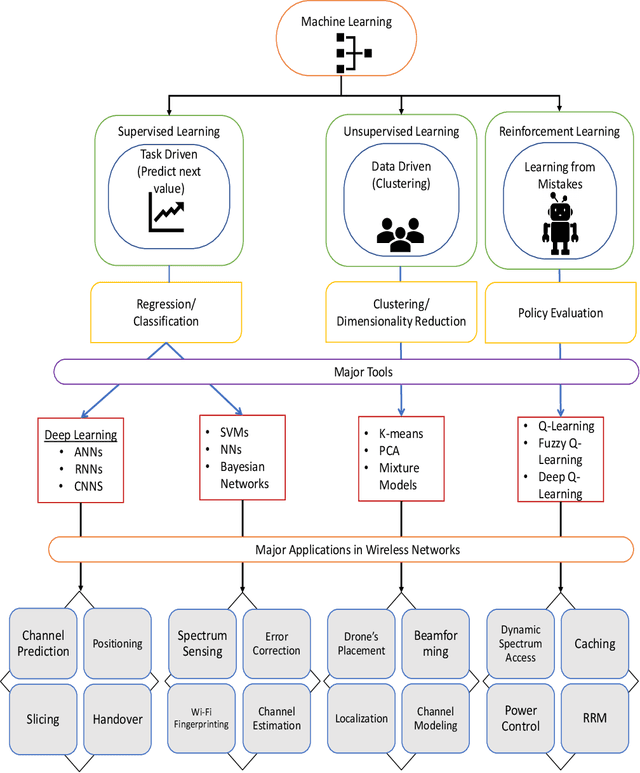

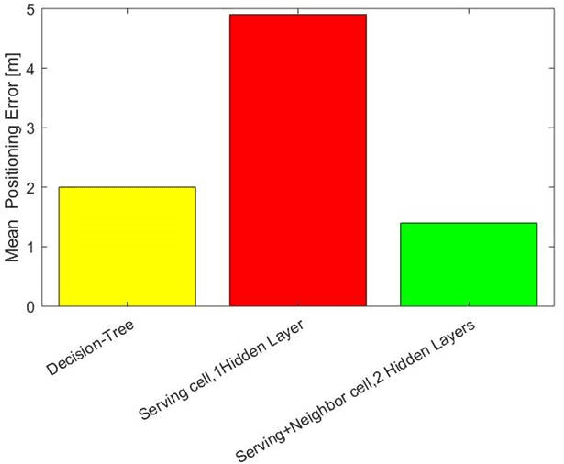

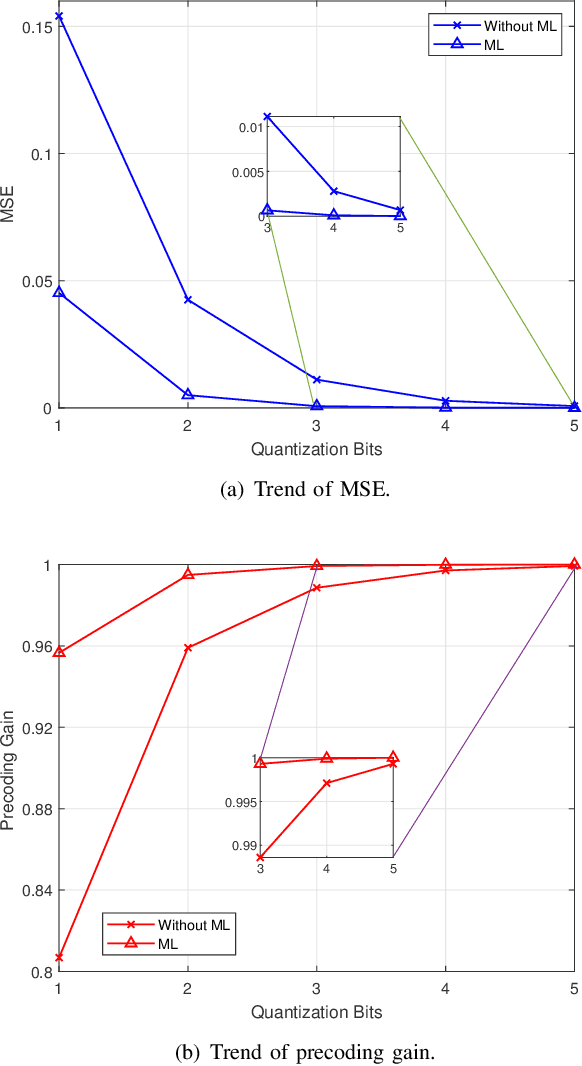

Artificial Intelligence for 6G Networks: Technology Advancement and Standardization

Apr 02, 2022

With the deployment of 5G networks, standards organizations have started working on the design phase for sixth-generation (6G) networks. 6G networks will be immensely complex, requiring more deployment time, cost, and management effort. On the other hand, mobile network operators demand that these networks be intelligent, self-organizing, and cost-effective to reduce operating expenses (OPEX). Machine learning (ML), a branch of artificial intelligence (AI), is the answer to many of these challenges, providing pragmatic solutions that can entirely change the future of wireless network technologies. Using some case study examples, we briefly examine the most compelling problems, particularly at the physical (PHY) and link layers in cellular networks, where ML can bring significant gains. We also review standardization activities related to the use of ML in wireless networks and the future timeline for the readiness of standardization bodies to adapt to these changes. Finally, we highlight major issues in the use of ML in wireless technology and provide potential directions to mitigate some of them in 6G wireless networks.

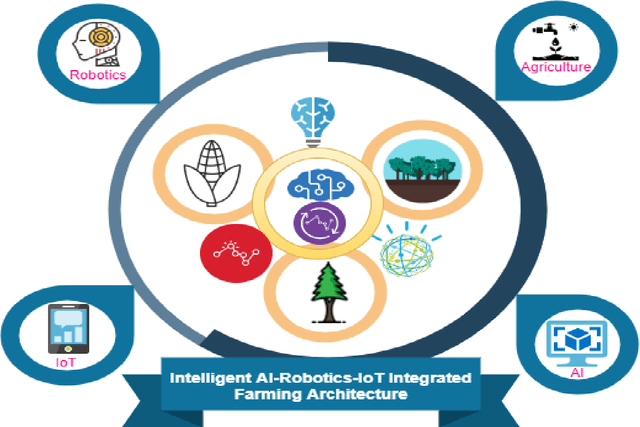

Towards technological adaptation of advanced farming through AI, IoT, and Robotics: A Comprehensive overview

Feb 21, 2022

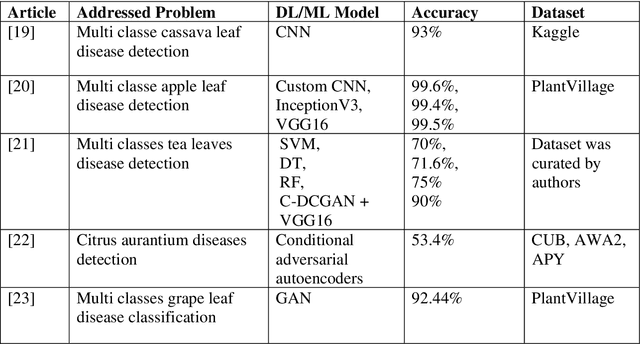

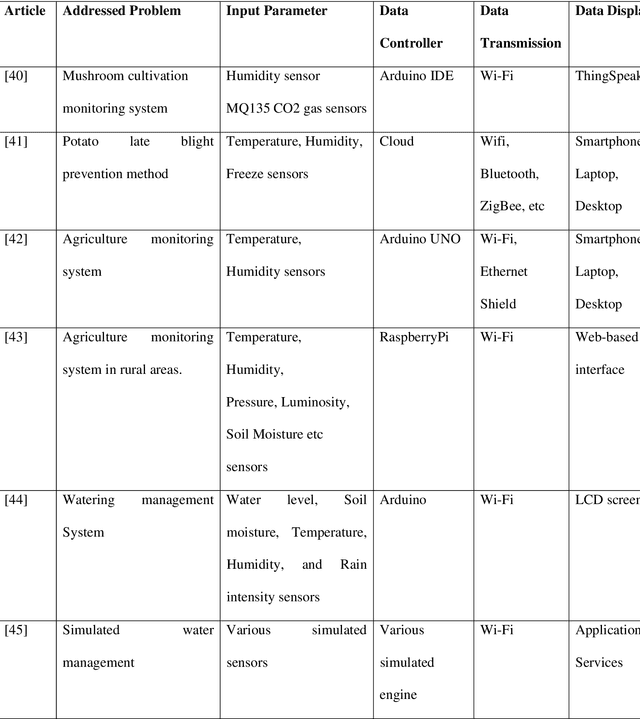

The population explosion of the 21st century has adversely affected natural resources: cultivable land is restricted, average temperatures have increased due to global warming, and the carbon footprint has resulted in a drastic increase in floods and droughts, making food security a significant concern for most countries. Traditional methods are no longer sufficient, which has paved the way for technological advances such as Artificial Intelligence (AI), the Internet of Things (IoT), and Robotics, which provide high productivity, functional efficiency, flexibility, and cost-effectiveness in agriculture. AI-, IoT-, and Robotics-based devices and methods have produced new paradigms and opportunities in agriculture. Existing applications of AI include soil management, crop disease identification, and weed identification and management in collaboration with IoT devices. IoT has enabled automated agricultural operations and real-time monitoring with few personnel. The major existing applications of agricultural robotics are soil preparation, planting, monitoring, harvesting, and storage. In this paper, the researchers present a comprehensive overview of recent implementations, scope, opportunities, challenges, limitations, and future research directions of AI-, IoT-, and Robotics-based methodologies in the agriculture sector.

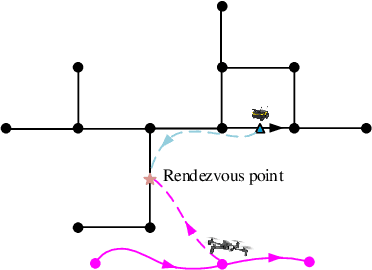

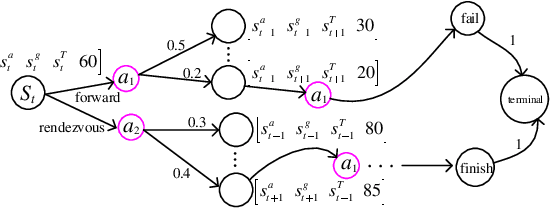

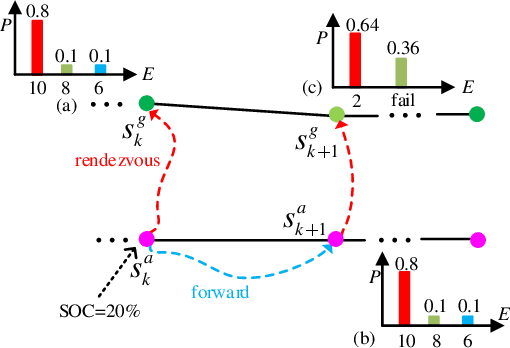

Risk-aware UAV-UGV Rendezvous with Chance-Constrained Markov Decision Process

Apr 10, 2022

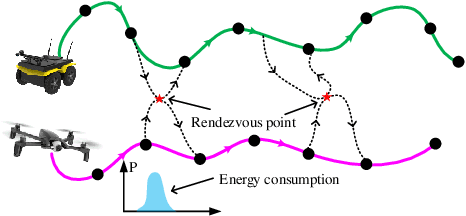

We study a chance-constrained variant of the cooperative aerial-ground vehicle routing problem, in which an Unmanned Aerial Vehicle (UAV) with limited battery capacity and an Unmanned Ground Vehicle (UGV) that can also act as a mobile recharging station need to jointly accomplish a mission such as monitoring a set of points. Due to the limited battery capacity of the UAV, the two vehicles sometimes have to deviate from their task to rendezvous and recharge the UAV. Unlike prior work that has focused on the deterministic case, we address the challenge of stochastic energy consumption of the UAV. We are interested in finding the optimal policy that decides when and where to rendezvous such that the expected travel time of the UAV is minimized and the probability of running out of charge is less than a user-defined tolerance. We formulate this problem as a Chance Constrained Markov Decision Process (CCMDP). To the best knowledge of the authors, this is the first CMDP-based formulation for the UAV-UGV routing problems under power consumption uncertainty. We adopt a Linear Programming (LP) based approach to solve the problem optimally. We demonstrate the effectiveness of our formulation in the context of an Intelligence, Surveillance, and Reconnaissance (ISR) mission.
