"Time": models, code, and papers

Generative Adversarial Learning for Intelligent Trust Management in 6G Wireless Networks

Aug 02, 2022

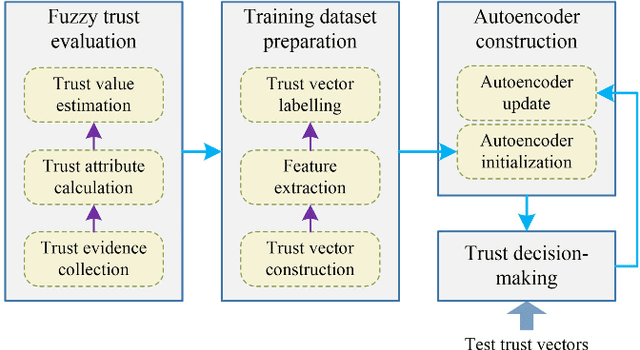

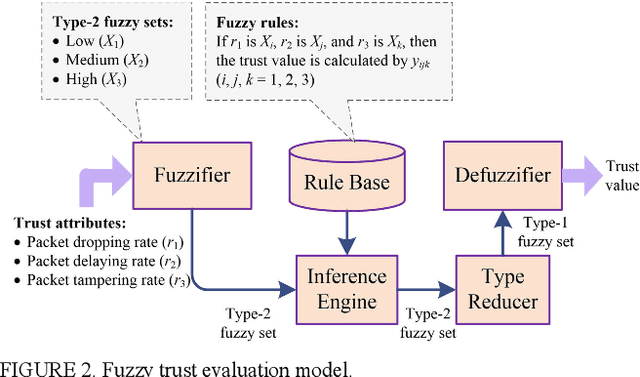

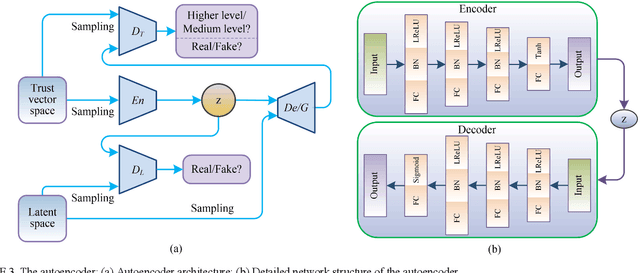

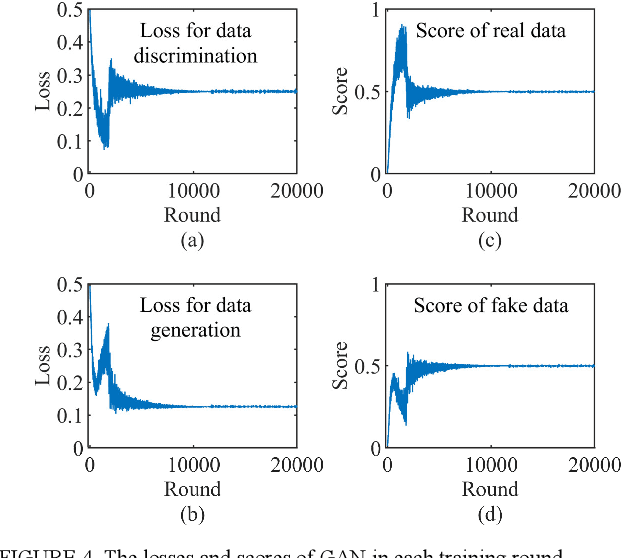

The emerging sixth generation (6G) integrates heterogeneous wireless networks and can seamlessly support anywhere, anytime networking. However, 6G must offer a high Quality-of-Trust to meet mobile user expectations. Artificial intelligence (AI) is considered one of the most important components of 6G, which makes AI-based trust management a promising paradigm for providing trusted and reliable services. In this article, a generative adversarial learning-enabled trust management method is presented for 6G wireless networks. Some typical AI-based trust management schemes are first reviewed, and then a potential heterogeneous and intelligent 6G architecture is introduced. Next, the integration of AI and trust management is developed to optimize intelligence and security. Finally, the presented AI-based trust management method is applied to secure clustering to achieve reliable and real-time communications. Simulation results demonstrate its excellent performance in guaranteeing network security and service quality.

Orthogonal Time Frequency Space Modulation: A Discrete Zak Transform Approach

Jun 24, 2021

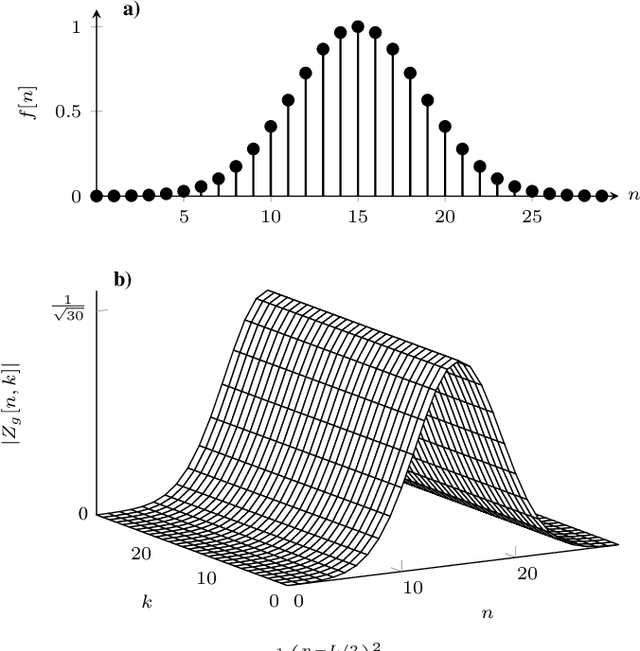

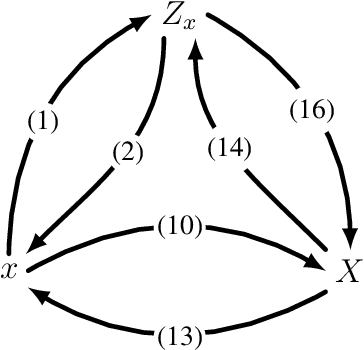

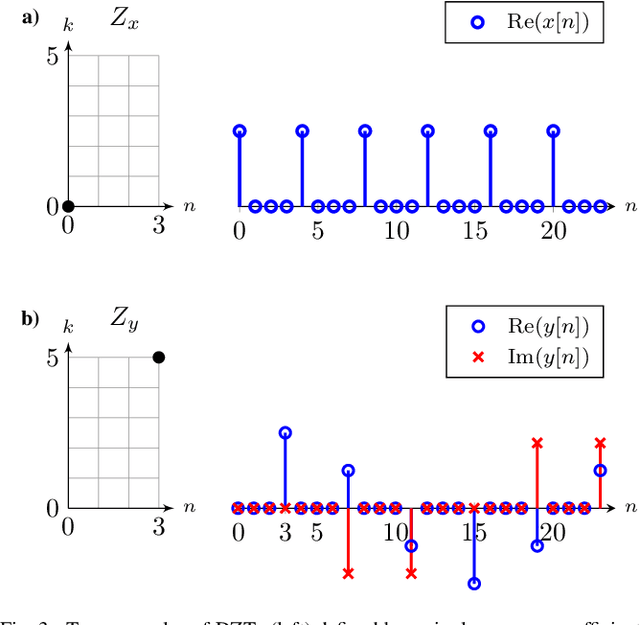

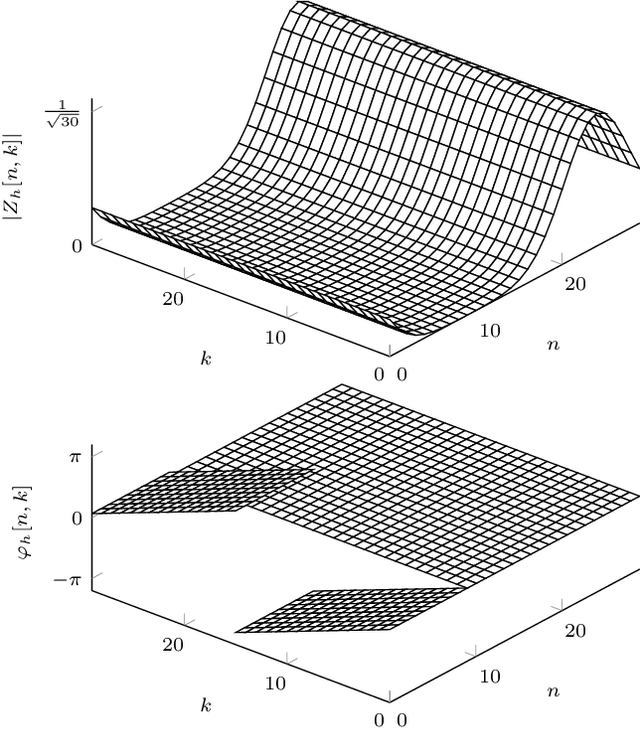

In orthogonal time frequency space (OTFS) modulation, information-carrying symbols reside in the delay-Doppler (DD) domain. Operating in the DD domain yields an appealing property for communication: time-frequency (TF) dispersive channels encountered in high-mobility environments become time-invariant. The time-invariance of the channel in the DD domain enables efficient equalizers for TF dispersive channels. In this paper, we propose an OTFS system based on the discrete Zak transform. The presented formulation not only allows an efficient implementation of OTFS but also simplifies the derivation and analysis of the input-output relation of TF dispersive channels in the DD domain.
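To make the central tool concrete, here is a minimal sketch of a discrete Zak transform (DZT) and its inverse, using one common convention: for a length-`L*K` sequence, `Z[n, k] = (1/sqrt(K)) * sum_l x[n + l*L] * exp(-2j*pi*k*l/K)`. The function names, the normalization, and the reading of `n` as a delay index and `k` as a Doppler index are assumptions made for illustration; the paper's exact definitions may differ.

```python
import numpy as np

def dzt(x, L, K):
    """Discrete Zak transform of a length L*K sequence (assumed convention).

    Returns Z with shape (L, K), where Z[n, k] sums the samples x[n + l*L]
    over l with a DFT kernel in k.
    """
    x = np.asarray(x, dtype=complex)
    if x.size != L * K:
        raise ValueError("len(x) must equal L * K")
    blocks = x.reshape(K, L)              # blocks[l, n] = x[n + l*L]
    # DFT along the block index l, then transpose to (n, k) ordering.
    return np.fft.fft(blocks, axis=0).T / np.sqrt(K)

def idzt(Z):
    """Inverse of dzt: recover the length L*K sequence from Z[n, k]."""
    L, K = Z.shape
    blocks = np.fft.ifft(Z.T, axis=0) * np.sqrt(K)
    return blocks.reshape(L * K)

rng = np.random.default_rng(0)
x = rng.standard_normal(12) + 1j * rng.standard_normal(12)
Z = dzt(x, L=4, K=3)
x_rec = idzt(Z)
```

With this normalization the transform is unitary, so the round trip recovers `x` and the energy of `Z` equals the energy of `x`. Because it reduces to `L` DFTs of length `K`, it can be computed with FFTs.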

Minkowski Tracker: A Sparse Spatio-Temporal R-CNN for Joint Object Detection and Tracking

Aug 23, 2022

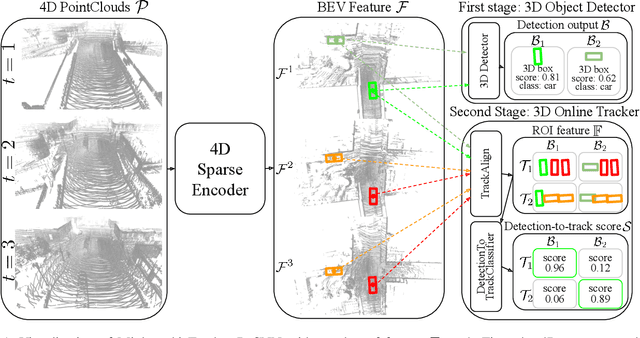

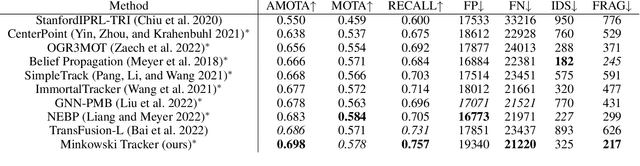

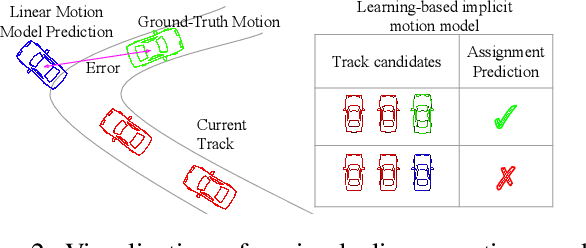

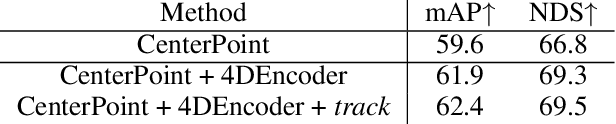

Recent research in multi-task learning reveals the benefit of solving related problems in a single neural network. 3D object detection and multi-object tracking (MOT) are two heavily intertwined problems that predict and associate object instance locations across time. However, most previous works in 3D MOT treat the detector as a separate preceding pipeline, disjointly taking the output of the detector as an input to the tracker. In this work, we present Minkowski Tracker, a sparse spatio-temporal R-CNN that jointly solves object detection and tracking. Inspired by region-based CNN (R-CNN), we propose to solve tracking as a second stage of the object detector R-CNN that predicts assignment probability to tracks. First, Minkowski Tracker takes 4D point clouds as input to generate a spatio-temporal bird's-eye-view (BEV) feature map through a 4D sparse convolutional encoder network. Then, our proposed TrackAlign aggregates the track region-of-interest (ROI) features from the BEV features. Finally, Minkowski Tracker updates the track and its confidence score based on the detection-to-track match probability predicted from the ROI features. We show in large-scale experiments that the overall performance gain of our method is due to four factors: (1) the temporal reasoning of the 4D encoder improves detection performance; (2) the multi-task learning of object detection and MOT jointly enhances both tasks; (3) the detection-to-track match score learns an implicit motion model that enhances track assignment; and (4) the detection-to-track match score improves the quality of the track confidence score. As a result, Minkowski Tracker achieves state-of-the-art performance on the nuScenes tracking task without hand-designed motion models.

Patch Selection for Melanoma Classification

Jun 27, 2022

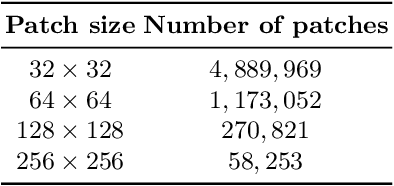

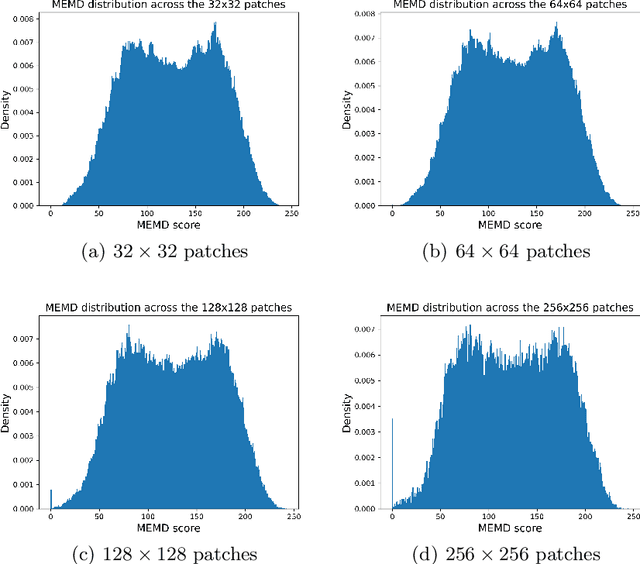

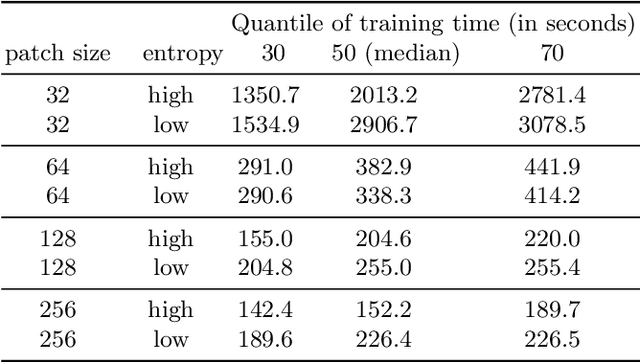

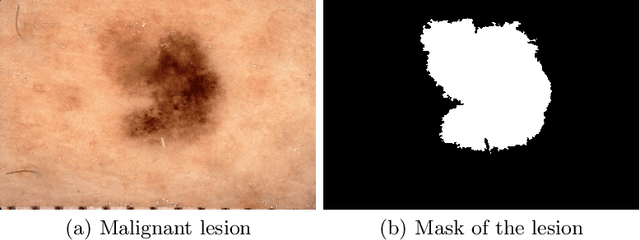

In medical image processing, the most important information is often located on small parts of the image. Patch-based approaches aim at using only the most relevant parts of the image. Finding ways to automatically select the patches is a challenge. In this paper, we investigate two criteria to choose patches: entropy and a spectral similarity criterion. We perform experiments at different levels of patch size. We train a Convolutional Neural Network on the subsets of patches and analyze the training time. We find that, in addition to requiring less preprocessing time, the classifiers trained on the datasets of patches selected based on entropy converge faster than on those selected based on the spectral similarity criterion and, furthermore, lead to higher accuracy. Moreover, patches of high entropy lead to faster convergence and better accuracy than patches of low entropy.
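The entropy criterion can be sketched as follows. This is a minimal illustration that assumes 8-bit grayscale images, non-overlapping square patches, and Shannon entropy of the intensity histogram; the helper names `patch_entropy` and `select_patches` are hypothetical, and the paper's exact patch extraction and scoring may differ.

```python
import numpy as np

def patch_entropy(patch, bins=256):
    """Shannon entropy (in bits) of the intensity histogram of one patch."""
    hist, _ = np.histogram(patch, bins=bins, range=(0, 256))
    p = hist[hist > 0] / patch.size       # drop empty bins: 0 * log(0) := 0
    return float(-(p * np.log2(p)).sum())

def select_patches(image, patch_size, top_k):
    """Return the top_k (entropy, (row, col)) pairs over a non-overlapping grid."""
    h, w = image.shape
    scored = []
    for r in range(0, h - patch_size + 1, patch_size):
        for c in range(0, w - patch_size + 1, patch_size):
            patch = image[r:r + patch_size, c:c + patch_size]
            scored.append((patch_entropy(patch), (r, c)))
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:top_k]

# Toy image: flat background with one textured 4x4 region in the top-left corner.
image = np.zeros((8, 8), dtype=np.uint8)
image[:4, :4] = np.arange(16, dtype=np.uint8).reshape(4, 4)
best = select_patches(image, patch_size=4, top_k=1)
```

In the toy image the flat patches have zero entropy, so the textured corner is the one selected. Sorting in ascending order instead would give the low-entropy selection that the abstract compares against.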

Accelerating Numerical Solvers for Large-Scale Simulation of Dynamical System via NeurVec

Aug 07, 2022

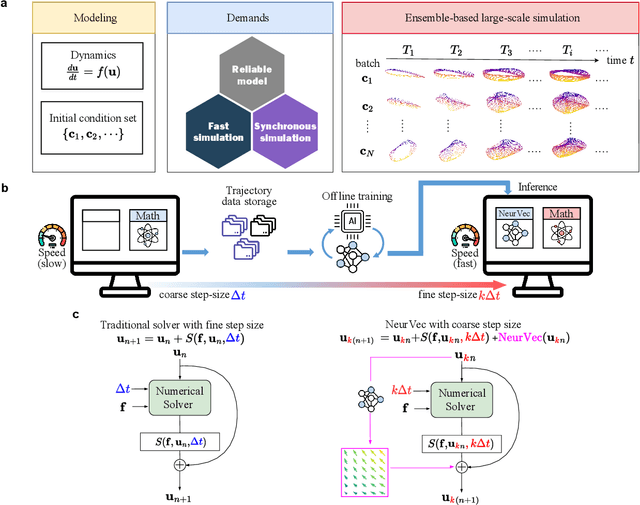

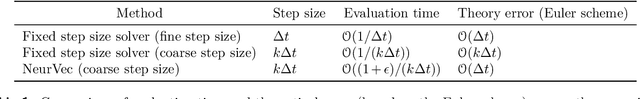

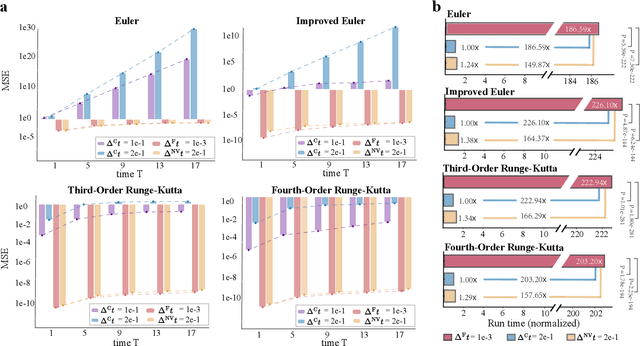

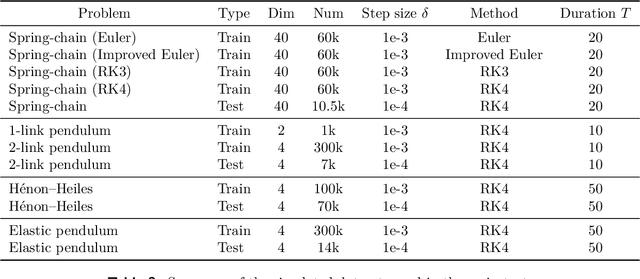

Ensemble-based large-scale simulation of dynamical systems is essential to a wide range of science and engineering problems. Conventional numerical solvers used in the simulation are significantly limited by the step size for time integration, which hampers efficiency and feasibility especially when high accuracy is desired. To overcome this limitation, we propose a data-driven corrector method that allows using large step sizes while compensating for the integration error for high accuracy. This corrector is represented in the form of a vector-valued function and is modeled by a neural network to regress the error in the phase space. Hence we name the corrector neural vector (NeurVec). We show that NeurVec can achieve the same accuracy as traditional solvers with much larger step sizes. We empirically demonstrate that NeurVec can accelerate a variety of numerical solvers significantly and overcome the stability restriction of these solvers. Our results on benchmark problems, ranging from high-dimensional problems to chaotic systems, suggest that NeurVec is capable of capturing the leading error term and maintaining the statistics of ensemble forecasts.
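The corrector idea can be illustrated on a toy problem. The sketch below integrates `du/dt = -u` with forward Euler at a large step size and adds a state-dependent correction after each step. In NeurVec this correction is a trained neural network; here the closed-form one-step Euler error stands in for it, which is an assumption made purely so the example stays self-contained. All function names are hypothetical.

```python
import math

def f(u):
    """Right-hand side of the toy ODE du/dt = -u."""
    return -u

def euler_step(u, h):
    """One forward Euler step of size h."""
    return u + h * f(u)

def corrector(u, h):
    """Stand-in for the learned corrector (assumption, not a trained model).

    For this ODE the exact one-step Euler error is u * (exp(-h) - (1 - h)).
    """
    return u * (math.exp(-h) - 1.0 + h)

def integrate(u0, h, n_steps, use_corrector):
    u = u0
    for _ in range(n_steps):
        nxt = euler_step(u, h)
        if use_corrector:
            nxt += corrector(u, h)        # compensate the integration error
        u = nxt
    return u

u0, h, n_steps = 1.0, 0.5, 4
exact = u0 * math.exp(-h * n_steps)
coarse = integrate(u0, h, n_steps, use_corrector=False)
corrected = integrate(u0, h, n_steps, use_corrector=True)
```

With the large step `h = 0.5`, plain Euler drifts well away from the exact solution, while the corrected scheme tracks it. A learned corrector would only approximate this error term, so its accuracy depends on how well the network regresses it.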

Graph Neural Networks Extract High-Resolution Cultivated Land Maps from Sentinel-2 Image Series

Aug 03, 2022

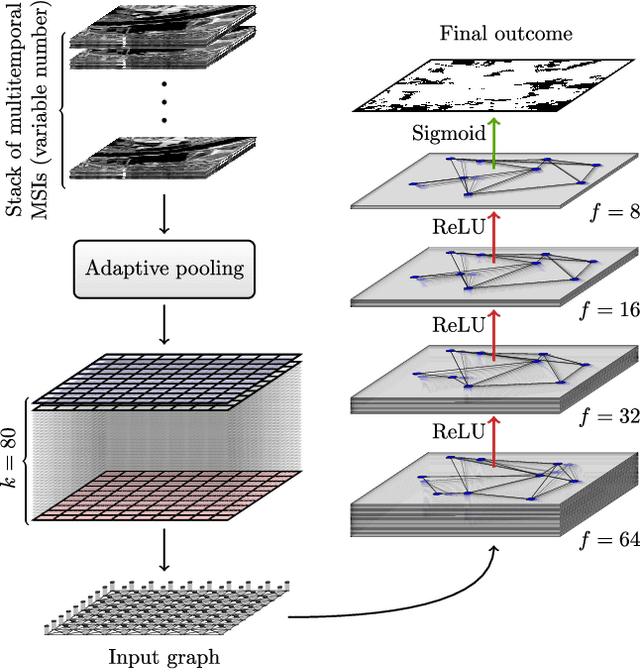

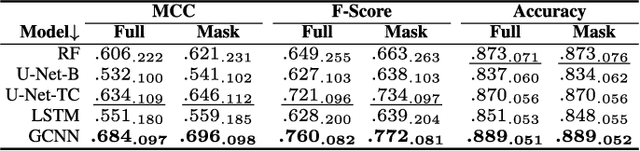

Maintaining farm sustainability by optimizing agricultural management practices helps build a more planet-friendly environment. Emerging satellite missions can acquire multi- and hyperspectral imagery that captures more detailed spectral information about the scanned area, allowing us to benefit from subtle spectral features during analysis in agricultural applications. We introduce an approach for extracting 2.5 m cultivated land maps from 10 m Sentinel-2 multispectral image series that benefits from a compact graph convolutional neural network. The experiments indicate that our models not only outperform classical and deep machine learning techniques by delivering higher-quality segmentation maps, but also dramatically reduce the memory footprint compared to U-Nets (almost 8k trainable parameters for our models versus up to 31M parameters for U-Nets). Such memory frugality is pivotal in missions that allow us to uplink a model to an AI-powered satellite once it is in orbit, as sending large nets is impossible due to time constraints.

* 7 pages (including supplementary material), published in IEEE Geoscience and Remote Sensing Letters
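As a rough back-of-the-envelope check of the memory claim, the sketch below converts the two parameter counts quoted in the abstract into storage sizes. It assumes 32-bit floating-point weights and ignores any file-format overhead; both are assumptions, since the abstract does not state the numeric precision.

```python
BYTES_PER_FLOAT32 = 4  # assumption: weights stored as 32-bit floats

def model_size_bytes(n_params, bytes_per_param=BYTES_PER_FLOAT32):
    """Approximate raw weight storage for a model with n_params parameters."""
    return n_params * bytes_per_param

gnn_bytes = model_size_bytes(8_000)          # "almost 8k" parameters
unet_bytes = model_size_bytes(31_000_000)    # "up to 31M" parameters
ratio = unet_bytes / gnn_bytes

print(f"graph network: ~{gnn_bytes / 1e3:.0f} kB")
print(f"U-Net:         ~{unet_bytes / 1e6:.0f} MB")
print(f"ratio:         ~{ratio:.0f}x")
```

Under these assumptions the compact model needs tens of kilobytes while the largest U-Net needs on the order of a hundred megabytes, which is the gap that matters for a time-limited uplink.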

Looking for a Needle in a Haystack: A Comprehensive Study of Hallucinations in Neural Machine Translation

Aug 10, 2022

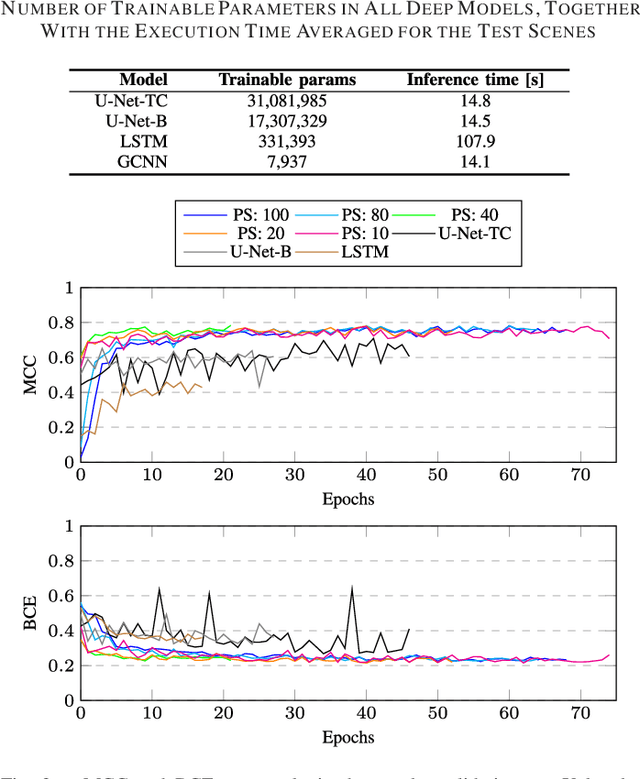

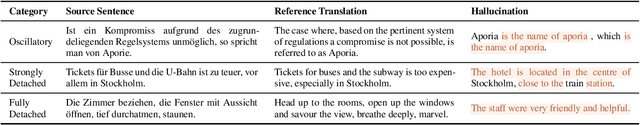

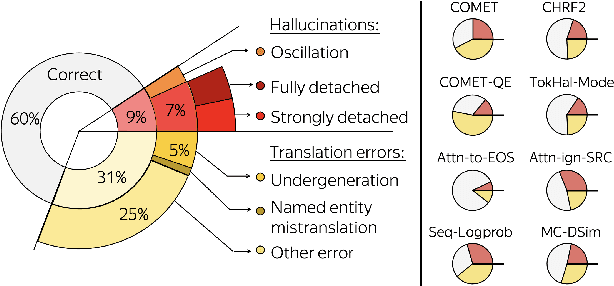

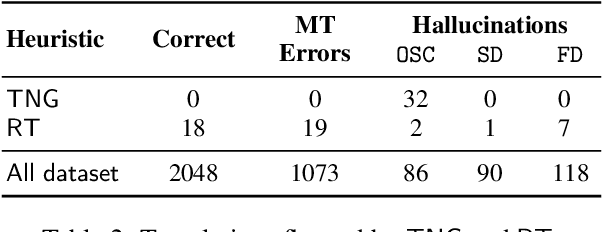

Although the problem of hallucinations in neural machine translation (NMT) has received some attention, research on this highly pathological phenomenon lacks solid ground. Previous work has been limited in several ways: it often resorts to artificial settings where the problem is amplified, it disregards some (common) types of hallucinations, and it does not validate the adequacy of detection heuristics. In this paper, we set foundations for the study of NMT hallucinations. First, we work in a natural setting, i.e., in-domain data without artificial noise in either training or inference. Next, we annotate a dataset of over 3.4k sentences indicating different kinds of critical errors and hallucinations. Then, we turn to detection methods: we revisit methods used previously and propose using glass-box uncertainty-based detectors. Overall, we show that for preventive settings, (i) previously used methods are largely inadequate, and (ii) sequence log-probability works best and performs on par with reference-based methods. Finally, we propose DeHallucinator, a simple method for alleviating hallucinations at test time that significantly reduces the hallucinatory rate. To ease future research, we release our annotated dataset for WMT18 German-English data, along with the model, training data, and code.
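A sequence log-probability detector can be sketched in a few lines. The version below assumes the score is the length-normalized sum of the token log-probabilities that the model assigned to its own translation, and that translations scoring below a threshold are flagged. The token probabilities and the threshold are made-up values for illustration, and the function names are hypothetical.

```python
import math

def seq_logprob(token_probs):
    """Length-normalized log-probability of a generated sequence."""
    if not token_probs:
        raise ValueError("token_probs must not be empty")
    return sum(math.log(p) for p in token_probs) / len(token_probs)

def flag_hallucinations(scores, threshold):
    """Flag translations whose score falls below the threshold."""
    return [score < threshold for score in scores]

# Hypothetical per-token probabilities for two translations.
confident = [0.9, 0.8, 0.95]   # model was sure of each token
uncertain = [0.2, 0.1, 0.3]    # model was unsure throughout

scores = [seq_logprob(confident), seq_logprob(uncertain)]
flags = flag_hallucinations(scores, threshold=-1.0)
```

The detector is "glass-box" in the sense that it only needs probabilities the translation model already computes, with no reference translation or external model. In practice the threshold would be tuned on annotated data.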

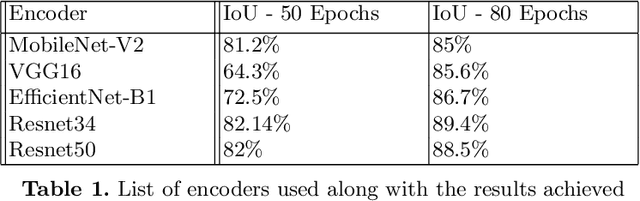

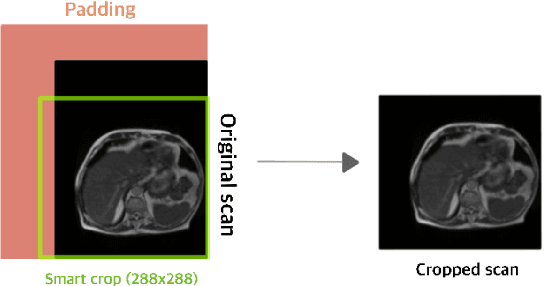

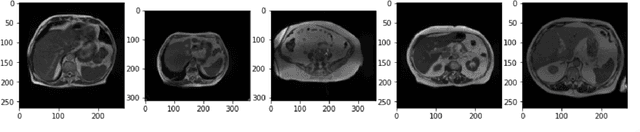

Automated GI tract segmentation using deep learning

Jun 29, 2022

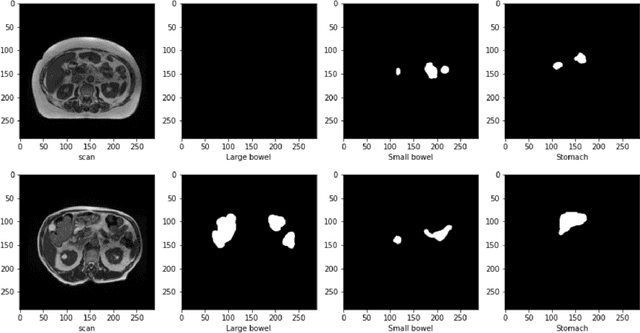

The job of radiation oncologists is to deliver X-ray beams pointed toward the tumor while avoiding the stomach and intestines. With MR-Linacs (magnetic resonance imaging and linear accelerator systems), oncologists can visualize the position of the tumor and deliver a precise dose according to tumor cell presence, which can vary from day to day. Currently, oncologists must manually outline the position of the stomach and intestines in order to adjust the direction of the X-ray beams so that the dose reaches the tumor while avoiding these organs. This is a time-consuming and labor-intensive process that can easily prolong treatments from 15 minutes to an hour a day unless deep learning methods can automate the segmentation. This paper discusses an automated segmentation process using deep learning to make this step faster and allow more patients to get effective treatment.

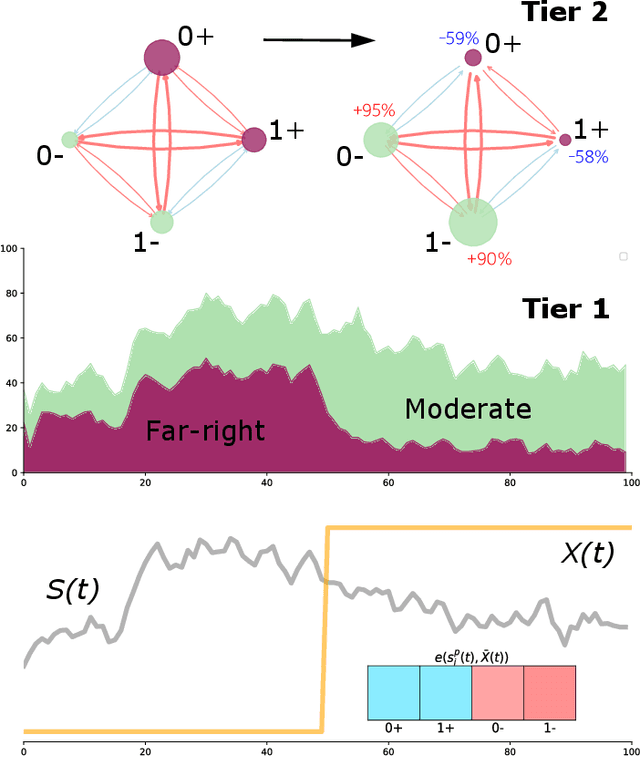

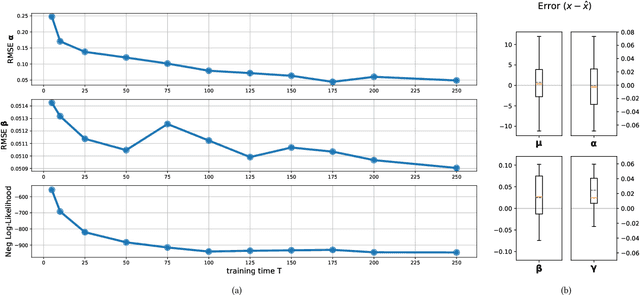

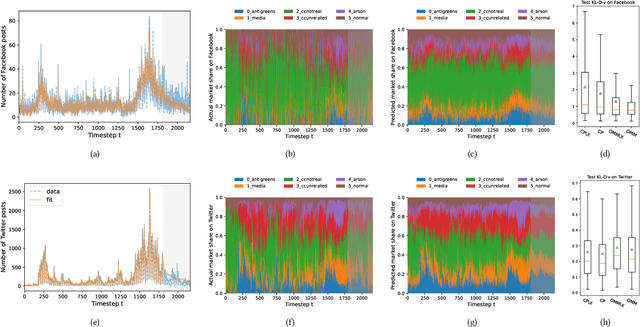

Opinion Market Model: Stemming Far-Right Opinion Spread using Positive Interventions

Aug 13, 2022

Recent years have seen the rise of extremist views in the opinion ecosystem we call social media. Allowing online extremism to persist has dire societal consequences, and efforts to mitigate it are continuously explored. Positive interventions, controlled signals that add attention to the opinion ecosystem with the aim of boosting certain opinions, are one such pathway for mitigation. This work proposes a platform to test the effectiveness of positive interventions, through the Opinion Market Model (OMM), a two-tier model of the online opinion ecosystem jointly accounting for both inter-opinion interactions and the role of positive interventions. The first tier models the size of the opinion attention market using the multivariate discrete-time Hawkes process; the second tier leverages the market share attraction model to model opinions cooperating and competing for market share given limited attention. On a synthetic dataset, we show the convergence of our proposed estimation scheme. On a dataset of Facebook and Twitter discussions containing moderate and far-right opinions about bushfires and climate change, we show superior predictive performance over the state-of-the-art and the ability to uncover latent opinion interactions. Lastly, we use OMM to demonstrate the effectiveness of mainstream media coverage as a positive intervention in suppressing far-right opinions.
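The second tier can be illustrated with the generic market share attraction form, in which each opinion's share is its attraction divided by the total attraction. The sketch below is not the paper's parameterization: the attraction values, the multiplicative effect of the intervention, and the fixed total attention (which the paper models with a Hawkes process in the first tier) are all hypothetical.

```python
import numpy as np

def market_shares(attractions):
    """Share of each opinion: attraction_i / sum_j attraction_j."""
    a = np.asarray(attractions, dtype=float)
    if np.any(a <= 0):
        raise ValueError("attractions must be positive")
    return a / a.sum()

def opinion_volumes(total_attention, attractions):
    """Split a fixed attention budget across opinions by market share."""
    return total_attention * market_shares(attractions)

# Opinions in order: [moderate, far-right]. Values are illustrative only.
baseline = [2.0, 1.0]
boosted = [2.0 * 1.5, 1.0]    # positive intervention boosts the moderate opinion

shares_before = market_shares(baseline)
shares_after = market_shares(boosted)
volumes_after = opinion_volumes(total_attention=1200, attractions=boosted)
```

Because shares always sum to one, boosting one opinion necessarily shrinks the share of the others. That competition under limited attention is what makes positive interventions a plausible way to suppress a competing opinion.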

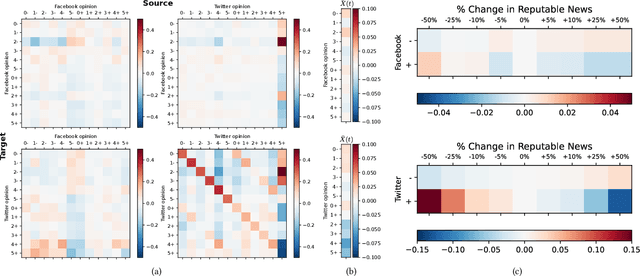

Delay-Doppler Reversal for OTFS System in Doubly-selective Fading Channels

Jul 22, 2022

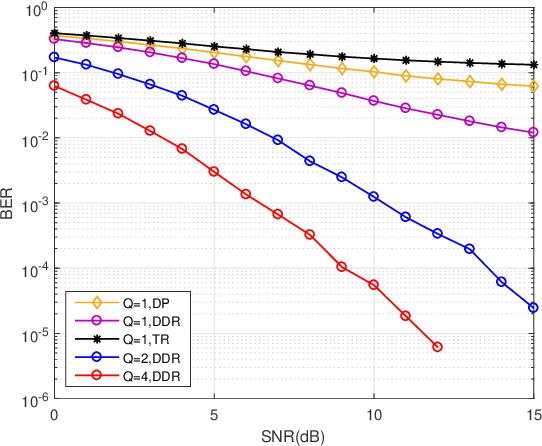

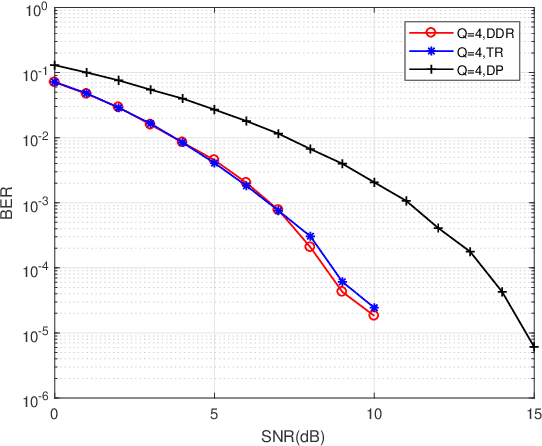

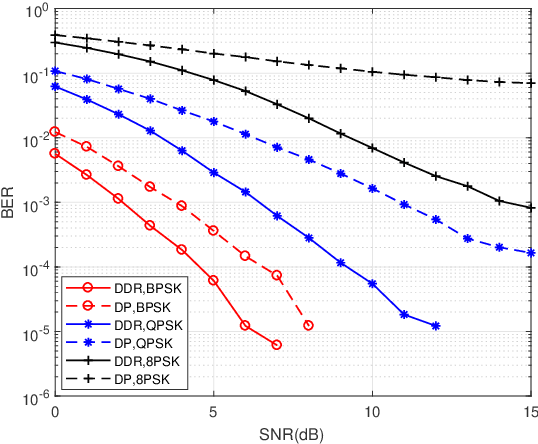

The recently proposed orthogonal time frequency space (OTFS) modulation shows significant advantages over conventional orthogonal frequency division multiplexing (OFDM) for high-mobility wireless communications. However, a challenging problem is the development of efficient, low-complexity receivers for practical OTFS systems. In this paper, we propose a novel delay-Doppler reversal (DDR) technology for OTFS systems that offers the desired performance at low complexity. We present the DDR technology from the perspective of a two-dimensional cascaded channel model, analyze its computational complexity, and analyze its performance gain compared to the direct processing (DP) receiver without DDR. Simulation results demonstrate that our proposed DDR receiver outperforms traditional receivers in doubly-selective fading channels.
