Zhenyu Liang

TransmissiveGS: Residual-Guided Disentangled Gaussian Splatting for Transmissive Scene Reconstruction and Rendering

May 11, 2026

Abstract:Transmissive scenes are ubiquitous in daily life, yet reconstructing and rendering them remains highly challenging due to the inherent entanglement between near-field reflections from the surrounding environment on the transmissive surface, and the transmitted content of the scene behind it. This coupling gives rise to dual surface geometries and dual radiance components within each observation, posing ambiguities for standard methods. We present TransmissiveGS, a novel framework for disentangled reconstruction and rendering of transmissive scenes. Specifically, we model the scene with a dual-Gaussian representation and introduce a deferred shading function to jointly render the two Gaussian components. To separate reflection and transmission, we exploit the inherent multi-view inconsistency of reflections and leverage the residuals from reconstructing multi-view consistent content as cues for disentangled geometry and appearance modeling. We further propose a reflection light field that enables high-fidelity estimation of near-field reflections. During training, we introduce a high-frequency regularization to preserve fine details. We also contribute a new synthetic dataset for evaluating transmissive surface reconstruction. Experiments on both synthetic and real-world scenes demonstrate that TransmissiveGS consistently outperforms prior Gaussian Splatting-based methods in both reconstruction and rendering quality for transmissive scenes.

UAV-Assisted Scan-to-Simulation for Landslides Using Physics-Informed Gaussian Splatting

May 11, 2026

Abstract:Landslide monitoring and simulation play an important role in urban safety assessment and disaster prevention. Existing landslide simulation pipelines typically rely on digital elevation model and mesh-based representations, which are suitable for geometric analysis, but often lack visual realism. This limitation reduces their effectiveness in interactive applications, hazard communication, and public education. In this paper, we propose a UAV-based scan-to-simulation framework that bridges photorealistic scene capture and physics-based landslide simulation through 3DGS. Specifically, our pipeline includes four stages: (1) UAV-based acquisition of slope imagery, (2) reconstruction of a low-anisotropy 3DGS scene representation, (3) volumetric conversion of the target simulation region by filling the interior of the surface-based model, and (4) integration with the Material Point Method (MPM) for landslide simulation. We validate the proposed framework on a real landslide site in Hong Kong that experienced a severe landslide event. The results show that our method supports both realistic visual reconstruction and effective simulation.

Industrial3D: A Terrestrial LiDAR Point Cloud Dataset and CrossParadigm Benchmark for Industrial Infrastructure

Mar 30, 2026

Abstract:Automated semantic understanding of dense point clouds is a prerequisite for Scan-to-BIM pipelines, digital twin construction, and as-built verification--core tasks in the digital transformation of the construction industry. Yet for industrial mechanical, electrical, and plumbing (MEP) facilities, this challenge remains largely unsolved: TLS acquisitions of water treatment plants, chiller halls, and pumping stations exhibit extreme geometric ambiguity, severe occlusion, and extreme class imbalance that architectural benchmarks (e.g., S3DIS or ScanNet) cannot adequately represent. We present Industrial3D, a terrestrial LiDAR dataset comprising 612 million expertly labelled points at 6 mm resolution from 13 water treatment facilities. At 6.6x the scale of the closest comparable MEP dataset, Industrial3D provides the largest and most demanding testbed for industrial 3D scene understanding to date. We further establish the first industrial cross-paradigm benchmark, evaluating nine representative methods across fully supervised, weakly supervised, unsupervised, and foundation model settings under a unified benchmark protocol. The best supervised method achieves 55.74% mIoU, whereas zero-shot Point-SAM reaches only 15.79%--a 39.95 percentage-point gap that quantifies the unresolved domain-transfer challenge for industrial TLS data. Systematic analysis reveals that this gap originates from a dual crisis: statistical rarity (215:1 imbalance, 3.5x more severe than S3DIS) and geometric ambiguity (tail-class points share cylindrical primitives with head-class pipes) that frequency-based re-weighting alone cannot resolve. Industrial3D, along with benchmark code and pre-trained models, will be publicly available at https://github.com/pointcloudyc/Industrial3D.
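As a rough illustration of the mIoU metric quoted in this abstract, the following sketch computes mean intersection-over-union on a tiny, made-up label set. The function name `mean_iou` and the toy labels are assumptions for illustration only; they are not taken from the Industrial3D benchmark code. The example is meant to show why a rare "tail" class can pull mIoU well below overall accuracy.

```python
def mean_iou(pred, truth, num_classes):
    """Mean intersection-over-union over classes present in pred or truth."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, truth) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, truth) if p == c or t == c)
        if union > 0:  # skip classes that appear in neither list
            ious.append(inter / union)
    return sum(ious) / len(ious)

# Hypothetical toy labels: class 0 is a frequent "head" class,
# class 1 is a rare "tail" class with one of its two points mislabelled.
truth = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
pred = [0, 0, 0, 0, 0, 0, 0, 0, 0, 1]

miou = mean_iou(pred, truth, num_classes=2)
accuracy = sum(p == t for p, t in zip(pred, truth)) / len(truth)

# Gap between the two mIoU figures reported in the abstract, in percentage points.
reported_gap = round(55.74 - 15.79, 2)
```

Because mIoU averages per-class scores with equal weight, a single error on the rare class costs far more than the same error would cost in overall accuracy.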

2K Retrofit: Entropy-Guided Efficient Sparse Refinement for High-Resolution 3D Geometry Prediction

Mar 23, 2026

Abstract:High-resolution geometric prediction is essential for robust perception in autonomous driving, robotics, and AR/MR, but current foundation models are fundamentally limited by their scalability to real-world, high-resolution scenarios. Direct inference on 2K images with these models incurs prohibitive computational and memory demands, making practical deployment challenging. To tackle the issue, we present 2K Retrofit, a novel framework that enables efficient 2K-resolution inference for any geometric foundation model, without modifying or retraining the backbone. Our approach leverages fast coarse predictions and an entropy-based sparse refinement to selectively enhance high-uncertainty regions, achieving precise and high-fidelity 2K outputs with minimal overhead. Extensive experiments on widely used benchmarks demonstrate that 2K Retrofit consistently achieves state-of-the-art accuracy and speed, bridging the gap between research advances and scalable deployment in high-resolution 3D vision applications. Code will be released upon acceptance.
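The abstract does not say how the entropy is computed or how regions are chosen, so the following is only a minimal sketch of the general idea of entropy-guided sparse selection. It assumes each image patch carries a discrete probability distribution from the coarse pass (for example over depth bins) and that a fixed budget of patches is refined. The names `shannon_entropy`, `select_uncertain`, and `budget` are hypothetical and not from 2K Retrofit.

```python
import math

def shannon_entropy(probs):
    """Entropy in nats of a discrete distribution; zero-probability bins add nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_uncertain(patch_probs, budget):
    """Return indices of the `budget` highest-entropy patches, most uncertain first."""
    scores = [shannon_entropy(p) for p in patch_probs]
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:budget]

# Hypothetical coarse predictions for four patches (assumed 4-bin distributions).
patch_probs = [
    [0.97, 0.01, 0.01, 0.01],  # confident prediction, low entropy
    [0.25, 0.25, 0.25, 0.25],  # uniform, maximally uncertain
    [0.70, 0.10, 0.10, 0.10],
    [0.40, 0.40, 0.10, 0.10],
]

# Only these patches would be passed to the (more expensive) refinement step.
to_refine = select_uncertain(patch_probs, budget=2)
```

In this sketch only the selected patches would be refined, so the extra cost scales with the budget rather than with the full 2K image.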

GPU-accelerated Evolutionary Many-objective Optimization Using Tensorized NSGA-III

Apr 08, 2025

Abstract:NSGA-III is one of the most widely adopted algorithms for tackling many-objective optimization problems. However, its CPU-based design severely limits scalability and computational efficiency. To address the limitations, we propose TensorNSGA-III, a fully tensorized implementation of NSGA-III that leverages GPU parallelism for large-scale many-objective optimization. Unlike conventional GPU-accelerated evolutionary algorithms that rely on heuristic approximations to improve efficiency, TensorNSGA-III maintains the exact selection and variation mechanisms of NSGA-III while achieving significant acceleration. By reformulating the selection process with tensorized data structures and an optimized caching strategy, our approach effectively eliminates computational bottlenecks inherent in traditional CPU-based and naïve GPU implementations. Experimental results on widely used numerical benchmarks show that TensorNSGA-III achieves speedups of up to 3629x over the CPU version of NSGA-III. Additionally, we validate its effectiveness in multiobjective robotic control tasks, where it discovers diverse and high-quality behavioral solutions. Furthermore, we investigate the critical role of large population sizes in many-objective optimization and demonstrate the scalability of TensorNSGA-III in such scenarios. The source code is available at https://github.com/EMI-Group/evomo

Bridging Evolutionary Multiobjective Optimization and GPU Acceleration via Tensorization

Mar 27, 2025

Abstract:Evolutionary multiobjective optimization (EMO) has made significant strides over the past two decades. However, as problem scales and complexities increase, traditional EMO algorithms face substantial performance limitations due to insufficient parallelism and scalability. While most work has focused on algorithm design to address these challenges, little attention has been given to hardware acceleration, thereby leaving a clear gap between EMO algorithms and advanced computing devices, such as GPUs. To bridge the gap, we propose to parallelize EMO algorithms on GPUs via the tensorization methodology. By employing tensorization, the data structures and operations of EMO algorithms are transformed into concise tensor representations, which seamlessly enables automatic utilization of GPU computing. We demonstrate the effectiveness of our approach by applying it to three representative EMO algorithms: NSGA-III, MOEA/D, and HypE. To comprehensively assess our methodology, we introduce a multiobjective robot control benchmark using a GPU-accelerated physics engine. Our experiments show that the tensorized EMO algorithms achieve speedups of up to 1113x compared to their CPU-based counterparts, while maintaining solution quality and effectively scaling population sizes to hundreds of thousands. Furthermore, the tensorized EMO algorithms efficiently tackle complex multiobjective robot control tasks, producing high-quality solutions with diverse behaviors. Source codes are available at https://github.com/EMI-Group/evomo.
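To make the notion of tensorization concrete, here is a small sketch of one operation that EMO algorithms commonly need, the pairwise Pareto-dominance test, written as a single broadcast expression instead of nested loops. This is an illustration only: it uses NumPy on the CPU as a stand-in for a GPU tensor library, the function names are hypothetical, and it is not taken from the evomo code base.

```python
import numpy as np

def dominance_matrix(F):
    """dom[i, j] is True when solution i Pareto-dominates solution j (minimization)."""
    a = F[:, None, :]  # shape (n, 1, m): each solution as a row operand
    b = F[None, :, :]  # shape (1, n, m): each solution as a column operand
    # i dominates j if it is no worse in every objective and better in at least one.
    return np.all(a <= b, axis=-1) & np.any(a < b, axis=-1)

def nondominated_mask(F):
    """True for solutions that are not dominated by any other solution."""
    return ~dominance_matrix(F).any(axis=0)

# Hypothetical population of four solutions with two objectives each.
F = np.array([[1.0, 4.0], [2.0, 2.0], [3.0, 3.0], [4.0, 1.0]])
mask = nondominated_mask(F)  # [3, 3] is dominated by [2, 2]
```

Expressing the comparison over an (n, n, m) tensor removes the per-pair Python loop. The trade-off is memory that grows quadratically with population size, which is one reason a real implementation may need chunking or caching.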

A Multi-objective Optimization Benchmark Test Suite for Real-time Semantic Segmentation

Apr 29, 2024

Abstract:As one of the emerging challenges in Automated Machine Learning, the Hardware-aware Neural Architecture Search (HW-NAS) tasks can be treated as black-box multi-objective optimization problems (MOPs). An important application of HW-NAS is real-time semantic segmentation, which plays a pivotal role in autonomous driving scenarios. The HW-NAS for real-time semantic segmentation inherently needs to balance multiple optimization objectives, including model accuracy, inference speed, and hardware-specific considerations. Despite its importance, benchmarks have yet to be developed to frame such a challenging task as multi-objective optimization. To bridge the gap, we introduce a tailored streamline to transform the task of HW-NAS for real-time semantic segmentation into standard MOPs. Building upon the streamline, we present a benchmark test suite, CitySeg/MOP, comprising fifteen MOPs derived from the Cityscapes dataset. The CitySeg/MOP test suite is integrated into the EvoXBench platform to provide seamless interfaces with various programming languages (e.g., Python and MATLAB) for instant fitness evaluations. We comprehensively assessed the CitySeg/MOP test suite on various multi-objective evolutionary algorithms, showcasing its versatility and practicality. Source codes are available at https://github.com/EMI-Group/evoxbench.

GPU-accelerated Evolutionary Multiobjective Optimization Using Tensorized RVEA

Apr 11, 2024

Abstract:Evolutionary multiobjective optimization has witnessed remarkable progress during the past decades. However, existing algorithms often encounter computational challenges in large-scale scenarios, primarily attributed to the absence of hardware acceleration. In response, we introduce a Tensorized Reference Vector Guided Evolutionary Algorithm (TensorRVEA) for harnessing the advancements of GPU acceleration. In TensorRVEA, the key data structures and operators are fully transformed into tensor forms for leveraging GPU-based parallel computing. In numerical benchmark tests involving large-scale populations and problem dimensions, TensorRVEA consistently demonstrates high computational performance, achieving speedups of over 1000x. Then, we applied TensorRVEA to the domain of multiobjective neuroevolution for addressing complex challenges in robotic control tasks. Furthermore, we assessed TensorRVEA's extensibility by altering several tensorized reproduction operators. Experimental results demonstrate promising scalability and robustness of TensorRVEA. Source codes are available at https://github.com/EMI-Group/tensorrvea.

Large Scale Many-Objective Optimization Driven by Distributional Adversarial Networks

Mar 16, 2020

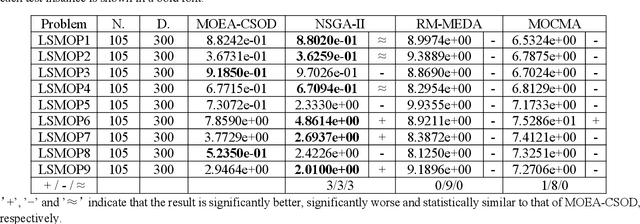

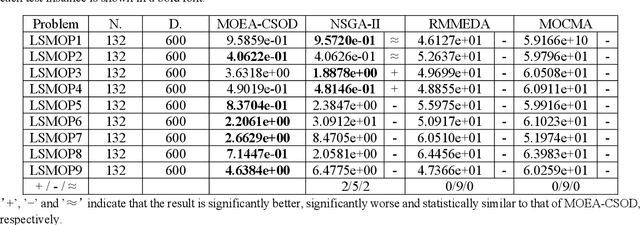

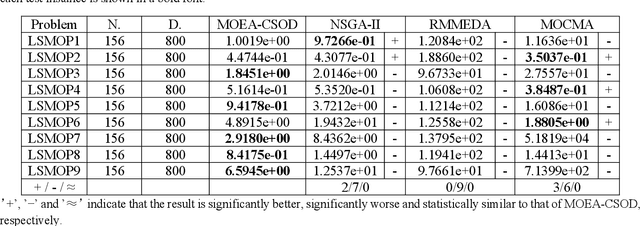

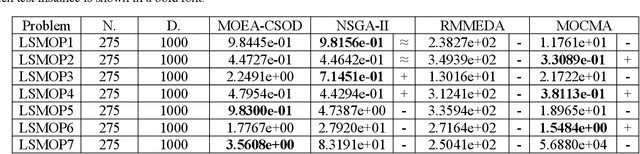

Abstract:Estimation of distribution algorithms (EDAs), a class of evolutionary algorithms (EAs), are stochastic optimization algorithms that build a probability model of the distribution of solutions and randomly sample that model to create offspring, updating both the model and the population. The Reference Vector Guided Evolutionary Algorithm (RVEA), based on the EDA framework, performs better on many-objective optimization problems (MaOPs). Using generative adversarial networks to generate offspring solutions, instead of crossover and mutation, is also a state-of-the-art idea in EAs. In this paper, we propose a novel algorithm based on the RVEA [1] framework that uses Distributional Adversarial Networks (DAN) [2] to generate new offspring. DAN uses a new distributional framework for adversarial training of neural networks and operates on genuine samples rather than a single point, which leads to more stable training and markedly better mode coverage than single-point-sample methods. DAN can therefore quickly generate offspring with high convergence that follow the same distribution as the data. In addition, we use Large-Scale Multi-Objective Optimization Based on a Competitive Swarm Optimizer (LMOCSO) [3], which adopts a new two-stage strategy to update positions, to significantly increase the efficiency of searching for optimal solutions in a huge decision space. The proposed algorithm will be tested on 9 benchmark problems from the large-scale multi-objective problems (LSMOP) test suite. To measure its performance, we will compare the proposed algorithm with some state-of-the-art EAs, e.g., RM-MEDA [4], MO-CMA [10], and NSGA-II.

Many-Objective Estimation of Distribution Optimization Algorithm Based on WGAN-GP

Mar 16, 2020

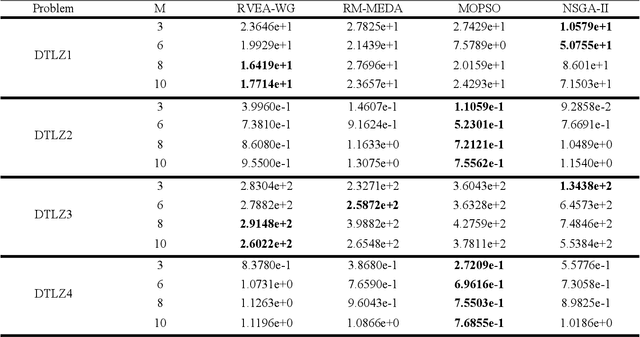

Abstract:Estimation of distribution algorithms (EDAs) are stochastic optimization algorithms. An EDA uses statistical learning to build a probability model that describes the distribution of solutions at the population level, and then randomly samples the model to generate a new population. EDAs can solve multi-objective optimization problems (MOPs) well. However, their performance decreases on many-objective optimization problems (MaOPs), which contain more than three objectives. The Reference Vector Guided Evolutionary Algorithm (RVEA), based on the EDA framework, can better solve MaOPs. In our paper, we use the framework of RVEA. However, we generate the new population with a Wasserstein Generative Adversarial Network with Gradient Penalty (WGAN-GP) instead of using crossover and mutation. WGAN-GP has the advantages of fast convergence, good stability, and high sample quality. Given a data set drawn from a single distribution, WGAN-GP learns the mapping from the standard normal distribution to the distribution of that data set. It can quickly generate populations with high diversity and good convergence. To measure the performance, RM-MEDA, MOPSO, and NSGA-II are selected for comparison experiments on the DTLZ and LSMOP test suites with 3, 5, 8, 10, and 15 objectives.
