Yanxiong Lu

LFD: Layer Fused Decoding to Exploit External Knowledge in Retrieval-Augmented Generation

Aug 27, 2025

Abstract: Retrieval-augmented generation (RAG) incorporates external knowledge into large language models (LLMs), improving their adaptability to downstream tasks and enabling information updates. Surprisingly, recent empirical evidence demonstrates that injecting noise into retrieved relevant documents paradoxically facilitates exploitation of external knowledge and improves generation quality. Although counterintuitive and challenging to apply in practice, this phenomenon enables granular control and rigorous analysis of how LLMs integrate external knowledge. Therefore, in this paper, we intervene on noise injection and establish a layer-specific functional demarcation within the LLM: shallow layers specialize in local context modeling, intermediate layers focus on integrating long-range external factual knowledge, and deeper layers primarily rely on parametric internal knowledge. Building on this insight, we propose Layer Fused Decoding (LFD), a simple decoding strategy that directly combines representations from an intermediate layer with final-layer decoding outputs to fully exploit the external factual knowledge. To identify the optimal intermediate layer, we introduce an internal knowledge score (IKS) criterion that selects the layer with the lowest IKS value in the latter half of layers. Experimental results across multiple benchmarks demonstrate that LFD helps RAG systems more effectively surface retrieved context knowledge with minimal cost.
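The layer-selection and fusion steps described in the abstract can be illustrated with a minimal sketch. The abstract does not define how IKS is computed or how the two layers are combined, so both are assumptions here: `iks_scores` is a hypothetical list of precomputed per-layer scores, "latter half" is taken to mean 0-based indices from `n // 2` onward, and `fuse` uses a simple sum of log-probabilities as a stand-in for whatever combination rule the paper actually uses.

```python
import math


def select_layer(iks_scores):
    """Pick the layer with the lowest IKS among the latter half of layers.

    `iks_scores` is a hypothetical list of precomputed scores, one per layer.
    """
    n = len(iks_scores)
    start = n // 2  # assumption: "latter half" means indices n//2 .. n-1
    return min(range(start, n), key=lambda i: iks_scores[i])


def log_softmax(logits):
    """Numerically stable log-softmax over a list of floats."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]


def fuse(intermediate_logits, final_logits):
    """Illustrative fusion rule (an assumption, not the paper's formula):
    add the two log-probability vectors and renormalise."""
    combined = [
        a + b
        for a, b in zip(log_softmax(intermediate_logits), log_softmax(final_logits))
    ]
    return log_softmax(combined)


# Toy example with 8 layers and a 3-token vocabulary.
scores = [0.9, 0.1, 0.5, 0.3, 0.7, 0.2, 0.6, 0.8]
layer = select_layer(scores)  # layer 1 has the global minimum but is in the first half
fused = fuse([2.0, 0.0, 0.0], [0.0, 1.0, 0.0])
next_token = max(range(len(fused)), key=lambda i: fused[i])
```

Restricting the search to the latter half means a very low score in an early layer is ignored, which matches the abstract's statement that the selection is made only among later layers.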

Seg2Act: Global Context-aware Action Generation for Document Logical Structuring

Oct 09, 2024

Abstract: Document logical structuring aims to extract the underlying hierarchical structure of documents, which is crucial for document intelligence. Traditional approaches often fall short in handling the complexity and the variability of lengthy documents. To address these issues, we introduce Seg2Act, an end-to-end, generation-based method for document logical structuring, revisiting logical structure extraction as an action generation task. Specifically, given the text segments of a document, Seg2Act iteratively generates the action sequence via a global context-aware generative model, and simultaneously updates its global context and current logical structure based on the generated actions. Experiments on ChCatExt and HierDoc datasets demonstrate the superior performance of Seg2Act in both supervised and transfer learning settings.

USER: A Unified Information Search and Recommendation Model based on Integrated Behavior Sequence

Sep 30, 2021

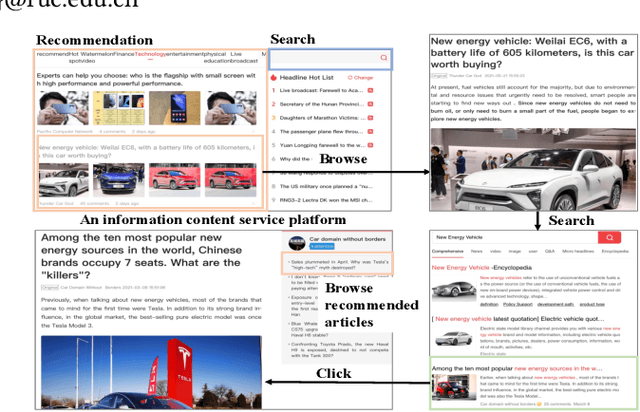

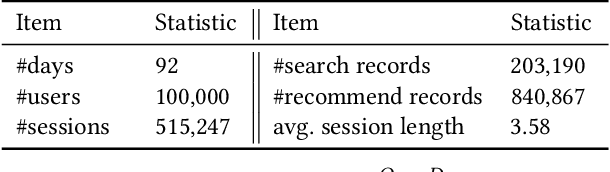

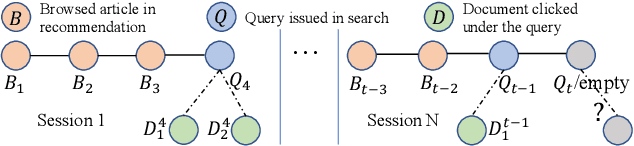

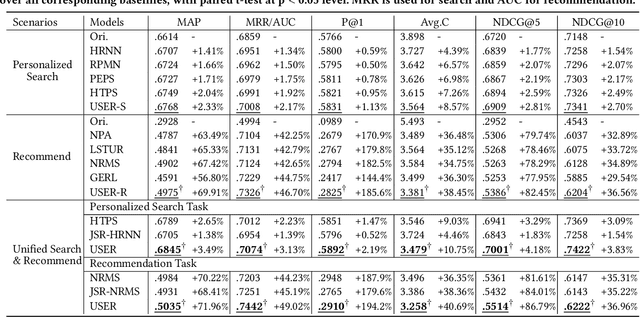

Abstract: Search and recommendation are the two most common approaches used by people to obtain information. They share the same goal -- satisfying the user's information need at the right time. There are already a lot of Internet platforms and apps providing both search and recommendation services, showing us the demand and opportunity to simultaneously handle both tasks. However, most platforms consider these two tasks independently -- they tend to train separate search and recommendation models, without exploiting the relatedness and dependency between them. In this paper, we argue that jointly modeling these two tasks will benefit both of them and finally improve overall user satisfaction. We investigate the interactions between these two tasks in the specific information content service domain. We propose first integrating the user's behaviors in search and recommendation into a heterogeneous behavior sequence, then utilizing a joint model for handling both tasks based on the unified sequence. More specifically, we design the Unified Information Search and Recommendation model (USER), which mines user interests from the integrated sequence and accomplishes the two tasks in a unified way.
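The first step the abstract describes, integrating search and recommendation behaviors into one heterogeneous sequence, can be sketched as a chronological merge of two logs. The `Behavior` record, its field names, and the use of timestamps as the ordering key are assumptions made for illustration; the abstract does not specify the sequence format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Behavior:
    """Hypothetical record for one user action (field names are illustrative)."""
    timestamp: int
    kind: str                    # "search" or "browse"
    item_id: str
    query: Optional[str] = None  # present only for search behaviors


def integrate(search: List[Behavior], browse: List[Behavior]) -> List[Behavior]:
    """Merge both logs into a single sequence ordered by time.

    `sorted` is stable, so behaviors with equal timestamps keep their
    input order (search entries first).
    """
    return sorted(search + browse, key=lambda b: b.timestamp)


search_log = [
    Behavior(10, "search", "doc7", query="rag decoding"),
    Behavior(40, "search", "doc2", query="layer fusion"),
]
browse_log = [
    Behavior(5, "browse", "doc1"),
    Behavior(25, "browse", "doc9"),
]

sequence = integrate(search_log, browse_log)
kinds = [b.kind for b in sequence]
```

A joint model would then consume `sequence` as a single input, with the `kind` field distinguishing the two behavior types.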
