Xi Peng

Rutgers University

Deep Multiview Clustering by Contrasting Cluster Assignments

Apr 21, 2023

Abstract:Multiview clustering (MVC) aims to reveal the underlying structure of multiview data by categorizing data samples into clusters. Deep learning-based methods exhibit strong feature learning capabilities on large-scale datasets. For most existing deep MVC methods, exploring the invariant representations of multiple views is still an intractable problem. In this paper, we propose a cross-view contrastive learning (CVCL) method that learns view-invariant representations and produces clustering results by contrasting the cluster assignments among multiple views. Specifically, we first employ deep autoencoders to extract view-dependent features in the pretraining stage. Then, a cluster-level CVCL strategy is presented to explore consistent semantic label information among the multiple views in the fine-tuning stage. Thus, the proposed CVCL method is able to produce more discriminative cluster assignments by virtue of this learning strategy. Moreover, we provide a theoretical analysis of soft cluster assignment alignment. Extensive experimental results obtained on several datasets demonstrate that the proposed CVCL method outperforms several state-of-the-art approaches.

Are Data-driven Explanations Robust against Out-of-distribution Data?

Mar 29, 2023Abstract:As black-box models increasingly power high-stakes applications, a variety of data-driven explanation methods have been introduced. Meanwhile, machine learning models are constantly challenged by distributional shifts. A question naturally arises: Are data-driven explanations robust against out-of-distribution data? Our empirical results show that even though predict correctly, the model might still yield unreliable explanations under distributional shifts. How to develop robust explanations against out-of-distribution data? To address this problem, we propose an end-to-end model-agnostic learning framework Distributionally Robust Explanations (DRE). The key idea is, inspired by self-supervised learning, to fully utilizes the inter-distribution information to provide supervisory signals for the learning of explanations without human annotation. Can robust explanations benefit the model's generalization capability? We conduct extensive experiments on a wide range of tasks and data types, including classification and regression on image and scientific tabular data. Our results demonstrate that the proposed method significantly improves the model's performance in terms of explanation and prediction robustness against distributional shifts.

GLOW: Global Layout Aware Attacks on Object Detection

Mar 08, 2023

Abstract:Adversarial attacks aim to perturb images such that a predictor outputs incorrect results. Due to the limited research in structured attacks, imposing consistency checks on natural multi-object scenes is a promising yet practical defense against conventional adversarial attacks. More desired attacks, to this end, should be able to fool defenses with such consistency checks. Therefore, we present the first approach GLOW that copes with various attack requests by generating global layout-aware adversarial attacks, in which both categorical and geometric layout constraints are explicitly established. Specifically, we focus on object detection task and given a victim image, GLOW first localizes victim objects according to target labels. And then it generates multiple attack plans, together with their context-consistency scores. Our proposed GLOW, on the one hand, is capable of handling various types of requests, including single or multiple victim objects, with or without specified victim objects. On the other hand, it produces a consistency score for each attack plan, reflecting the overall contextual consistency that both semantic category and global scene layout are considered. In experiment, we design multiple types of attack requests and validate our ideas on MS COCO and Pascal. Extensive experimental results demonstrate that we can achieve about 30$\%$ average relative improvement compared to state-of-the-art methods in conventional single object attack request; Moreover, our method outperforms SOTAs significantly on more generic attack requests by about 20$\%$ in average; Finally, our method produces superior performance under challenging zero-query black-box setting, or 20$\%$ better than SOTAs. Our code, model and attack requests would be made available.

Incomplete Multi-view Clustering via Prototype-based Imputation

Jan 30, 2023

Abstract:In this paper, we study how to achieve two characteristics highly-expected by incomplete multi-view clustering (IMvC). Namely, i) instance commonality refers to that within-cluster instances should share a common pattern, and ii) view versatility refers to that cross-view samples should own view-specific patterns. To this end, we design a novel dual-stream model which employs a dual attention layer and a dual contrastive learning loss to learn view-specific prototypes and model the sample-prototype relationship. When the view is missed, our model performs data recovery using the prototypes in the missing view and the sample-prototype relationship inherited from the observed view. Thanks to our dual-stream model, both cluster- and view-specific information could be captured, and thus the instance commonality and view versatility could be preserved to facilitate IMvC. Extensive experiments demonstrate the superiority of our method on six challenging benchmarks compared with 11 approaches. The code will be released.

Graph Matching with Bi-level Noisy Correspondence

Dec 22, 2022

Abstract:In this paper, we study a novel and widely existing problem in graph matching (GM), namely, Bi-level Noisy Correspondence (BNC), which refers to node-level noisy correspondence (NNC) and edge-level noisy correspondence (ENC). In brief, on the one hand, due to the poor recognizability and viewpoint differences between images, it is inevitable to inaccurately annotate some keypoints with offset and confusion, leading to the mismatch between two associated nodes, i.e., NNC. On the other hand, the noisy node-to-node correspondence will further contaminate the edge-to-edge correspondence, thus leading to ENC. For the BNC challenge, we propose a novel method termed Contrastive Matching with Momentum Distillation. Specifically, the proposed method is with a robust quadratic contrastive loss which enjoys the following merits: i) better exploring the node-to-node and edge-to-edge correlations through a GM customized quadratic contrastive learning paradigm; ii) adaptively penalizing the noisy assignments based on the confidence estimated by the momentum teacher. Extensive experiments on three real-world datasets show the robustness of our model compared with 12 competitive baselines.

Relationship Quantification of Image Degradations

Dec 08, 2022Abstract:In this paper, we study two challenging but less-touched problems in image restoration, namely, i) how to quantify the relationship between different image degradations and ii) how to improve the performance of a specific restoration task using the quantified relationship. To tackle the first challenge, Degradation Relationship Index (DRI) is proposed to measure the degradation relationship, which is defined as the drop rate difference in the validation loss between two models, i.e., one is trained using the anchor task only and another is trained using the anchor and the auxiliary tasks. Through quantifying the relationship between different degradations using DRI, we empirically observe that i) the degradation combination proportion is crucial to the image restoration performance. In other words, the combinations with only appropriate degradation proportions could improve the performance of the anchor restoration; ii) a positive DRI always predicts the performance improvement of image restoration. Based on the observations, we propose an adaptive Degradation Proportion Determination strategy (DPD) which could improve the performance of the anchor restoration task by using another restoration task as auxiliary. Extensive experimental results verify the effective of our method by taking image dehazing as the anchor task and denoising, desnowing, and deraining as the auxiliary tasks. The code will be released after acceptance.

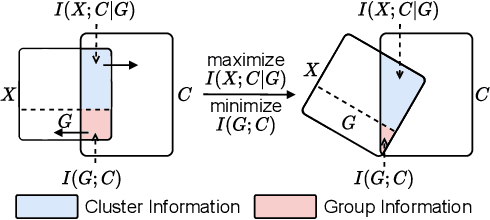

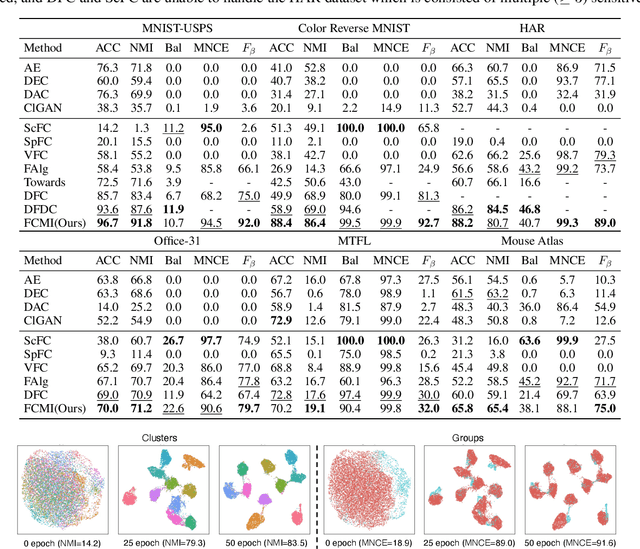

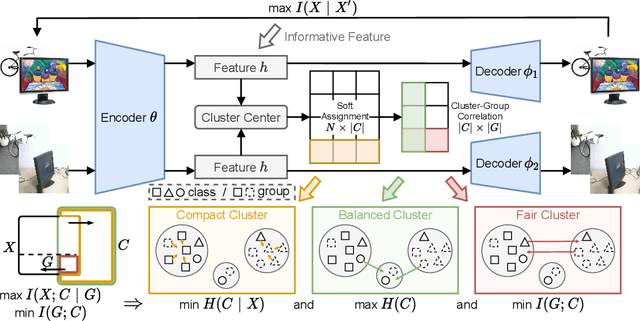

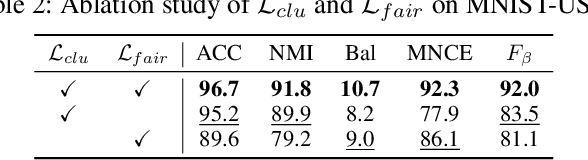

Deep Fair Clustering via Maximizing and Minimizing Mutual Information

Sep 26, 2022

Abstract:Fair clustering aims to divide data into distinct clusters, while preventing sensitive attributes (e.g., gender, race, RNA sequencing technique) from dominating the clustering. Although a number of works have been conducted and achieved huge success in recent, most of them are heuristical, and there lacks a unified theory for algorithm design. In this work, we fill this blank by developing a mutual information theory for deep fair clustering and accordingly designing a novel algorithm, dubbed FCMI. In brief, through maximizing and minimizing mutual information, FCMI is designed to achieve four characteristics highly expected by deep fair clustering, i.e., compact, balanced, and fair clusters, as well as informative features. Besides the contributions to theory and algorithm, another contribution of this work is proposing a novel fair clustering metric built upon information theory as well. Unlike existing evaluation metrics, our metric measures the clustering quality and fairness in a whole instead of separate manner. To verify the effectiveness of the proposed FCMI, we carry out experiments on six benchmarks including a single-cell RNA-seq atlas compared with 11 state-of-the-art methods in terms of five metrics. Code will be released after the acceptance.

Robust Domain Adaptation for Machine Reading Comprehension

Sep 23, 2022

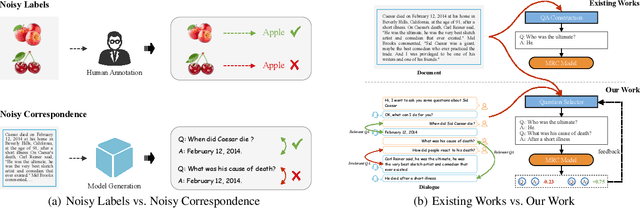

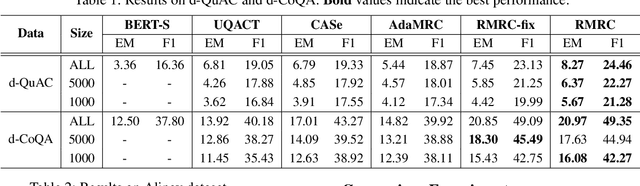

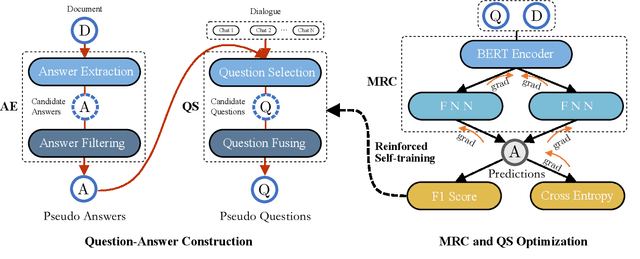

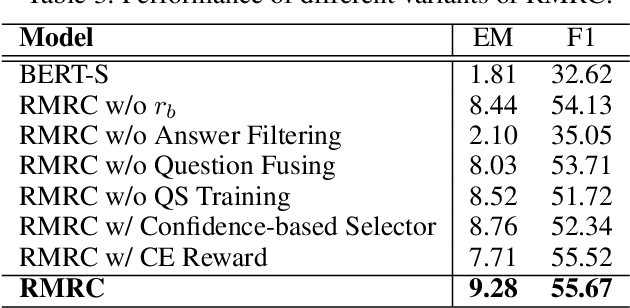

Abstract:Most domain adaptation methods for machine reading comprehension (MRC) use a pre-trained question-answer (QA) construction model to generate pseudo QA pairs for MRC transfer. Such a process will inevitably introduce mismatched pairs (i.e., noisy correspondence) due to i) the unavailable QA pairs in target documents, and ii) the domain shift during applying the QA construction model to the target domain. Undoubtedly, the noisy correspondence will degenerate the performance of MRC, which however is neglected by existing works. To solve such an untouched problem, we propose to construct QA pairs by additionally using the dialogue related to the documents, as well as a new domain adaptation method for MRC. Specifically, we propose Robust Domain Adaptation for Machine Reading Comprehension (RMRC) method which consists of an answer extractor (AE), a question selector (QS), and an MRC model. Specifically, RMRC filters out the irrelevant answers by estimating the correlation to the document via the AE, and extracts the questions by fusing the candidate questions in multiple rounds of dialogue chats via the QS. With the extracted QA pairs, MRC is fine-tuned and provides the feedback to optimize the QS through a novel reinforced self-training method. Thanks to the optimization of the QS, our method will greatly alleviate the noisy correspondence problem caused by the domain shift. To the best of our knowledge, this could be the first study to reveal the influence of noisy correspondence in domain adaptation MRC models and show a feasible way to achieve robustness to mismatched pairs. Extensive experiments on three datasets demonstrate the effectiveness of our method.

FALCON: Sound and Complete Neural Semantic Entailment over ALC Ontologies

Aug 16, 2022

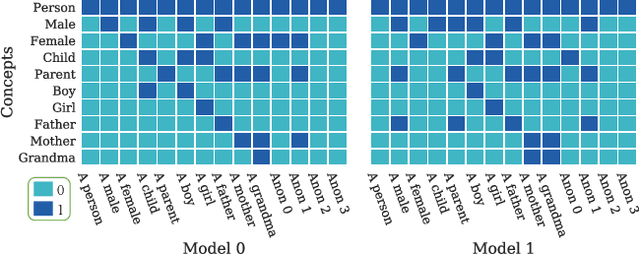

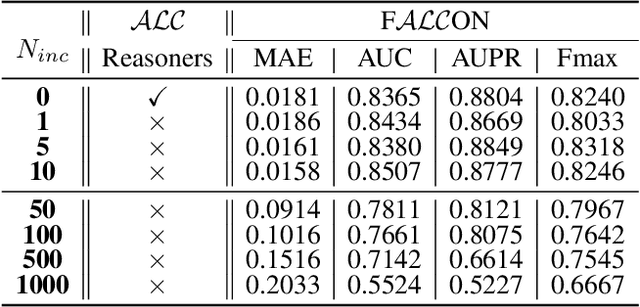

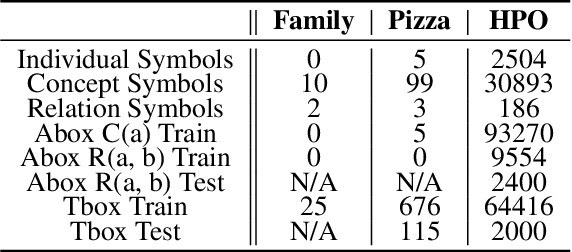

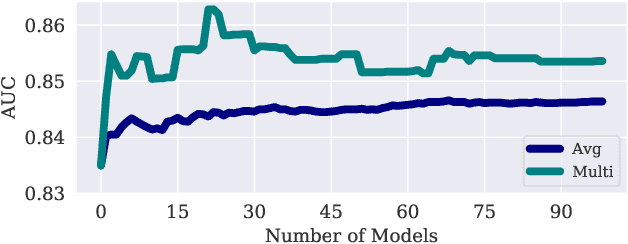

Abstract:Many ontologies, i.e., Description Logic (DL) knowledge bases, have been developed to provide rich knowledge about various domains, and a lot of them are based on ALC, i.e., a prototypical and expressive DL, or its extensions. The main task that explores ALC ontologies is to compute semantic entailment. Symbolic approaches can guarantee sound and complete semantic entailment but are sensitive to inconsistency and missing information. To this end, we propose FALCON, a Fuzzy ALC Ontology Neural reasoner. FALCON uses fuzzy logic operators to generate single model structures for arbitrary ALC ontologies, and uses multiple model structures to compute semantic entailments. Theoretical results demonstrate that FALCON is guaranteed to be a sound and complete algorithm for computing semantic entailments over ALC ontologies. Experimental results show that FALCON enables not only approximate reasoning (reasoning over incomplete ontologies) and paraconsistent reasoning (reasoning over inconsistent ontologies), but also improves machine learning in the biomedical domain by incorporating background knowledge from ALC ontologies.

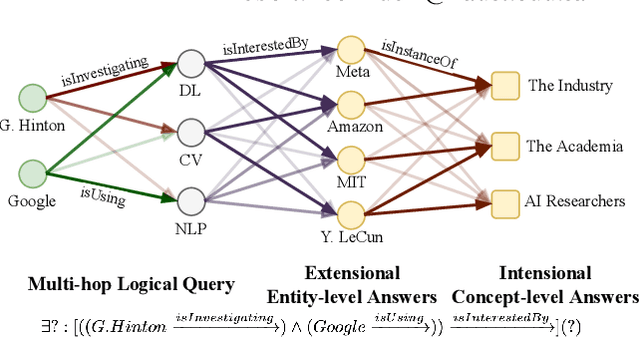

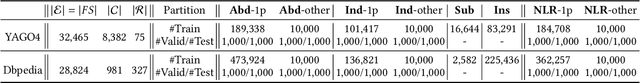

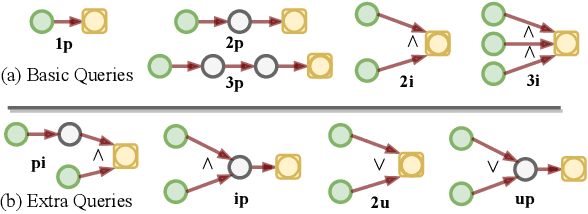

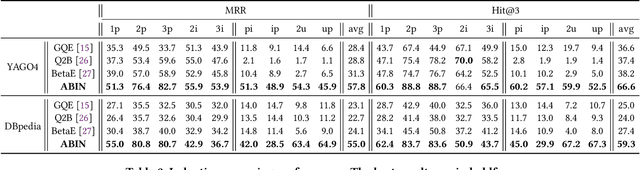

Joint Abductive and Inductive Neural Logical Reasoning

May 29, 2022

Abstract:Neural logical reasoning (NLR) is a fundamental task in knowledge discovery and artificial intelligence. NLR aims at answering multi-hop queries with logical operations on structured knowledge bases based on distributed representations of queries and answers. While previous neural logical reasoners can give specific entity-level answers, i.e., perform inductive reasoning from the perspective of logic theory, they are not able to provide descriptive concept-level answers, i.e., perform abductive reasoning, where each concept is a summary of a set of entities. In particular, the abductive reasoning task attempts to infer the explanations of each query with descriptive concepts, which make answers comprehensible to users and is of great usefulness in the field of applied ontology. In this work, we formulate the problem of the joint abductive and inductive neural logical reasoning (AI-NLR), solving which needs to address challenges in incorporating, representing, and operating on concepts. We propose an original solution named ABIN for AI-NLR. Firstly, we incorporate description logic-based ontological axioms to provide the source of concepts. Then, we represent concepts and queries as fuzzy sets, i.e., sets whose elements have degrees of membership, to bridge concepts and queries with entities. Moreover, we design operators involving concepts on top of the fuzzy set representation of concepts and queries for optimization and inference. Extensive experimental results on two real-world datasets demonstrate the effectiveness of ABIN for AI-NLR.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge