Shaoshan Liu

Timely Fusion of Surround Radar/Lidar for Object Detection in Autonomous Driving Systems

Sep 09, 2023

Abstract: Fusing Radar and Lidar sensor data can fully utilize their complementary advantages and provide a more accurate reconstruction of the surroundings for autonomous driving systems. Surround Radar and Lidar provide 360-degree view sampling at minimal cost, making them promising sensing hardware solutions for autonomous driving systems. However, due to intrinsic physical constraints, the rotating speed of surround Radar, and thus the frequency at which it generates Radar data frames, is much lower than that of surround Lidar. Existing Radar/Lidar fusion methods have to work at the low frequency of surround Radar, which cannot meet the high responsiveness requirement of autonomous driving systems. This paper develops techniques to fuse surround Radar/Lidar with a working frequency limited only by the faster surround Lidar instead of the slower surround Radar, based on the state-of-the-art object detection model MVDNet. The basic idea of our approach is simple: we let MVDNet work with temporally unaligned data from Radar/Lidar, so that fusion can take place whenever a new Lidar data frame arrives, instead of waiting for the slow Radar data frame. However, directly applying MVDNet to temporally unaligned Radar/Lidar data greatly degrades its object detection accuracy. The key insight of this paper is that we can achieve high output frequency with little accuracy loss by enhancing the training procedure to explore the temporal redundancy in MVDNet so that it can tolerate the temporal misalignment of input data. We explore several ways of enhancing training and compare them quantitatively with experiments.
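The scheduling idea in this abstract, fusing on every Lidar frame with whatever Radar frame is most recent, can be illustrated with a minimal sketch. This is not the paper's implementation: the function name `pair_frames`, the millisecond timestamps, and the 20 Hz / 4 Hz frame rates are all assumptions chosen for illustration, and the MVDNet model itself is not involved.

```python
from bisect import bisect_right
from typing import List, Tuple


def pair_frames(lidar_ts_ms: List[int], radar_ts_ms: List[int]) -> List[Tuple[int, int, int]]:
    """Pair each Lidar frame with the latest Radar frame at or before it.

    Both inputs are sorted timestamps in milliseconds (hypothetical units).
    Returns (lidar_time, radar_time, staleness) triples, where staleness is
    how old the Radar frame is when fusion is triggered. Lidar frames that
    arrive before any Radar frame exists are skipped.
    """
    pairs = []
    for t in lidar_ts_ms:
        i = bisect_right(radar_ts_ms, t) - 1  # index of latest Radar frame <= t
        if i < 0:
            continue
        pairs.append((t, radar_ts_ms[i], t - radar_ts_ms[i]))
    return pairs


# Assumed rates for illustration only: Lidar every 50 ms, Radar every 250 ms.
lidar_ts_ms = list(range(0, 500, 50))
radar_ts_ms = [0, 250]
pairs = pair_frames(lidar_ts_ms, radar_ts_ms)
max_staleness_ms = max(staleness for _, _, staleness in pairs)
```

In this toy setup every Lidar frame triggers a fusion step, and the Radar input is up to one Radar period minus one Lidar period old. That staleness is the temporal misalignment the detector would need to tolerate.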

A Comprehensive Review and Systematic Analysis of Artificial Intelligence Regulation Policies

Jul 23, 2023

Abstract: Due to the cultural and governance differences among countries around the world, there currently exists a wide spectrum of AI regulation policy proposals, which has created chaos in the global AI regulatory space. Properly regulating AI technologies is extremely challenging, as it requires a delicate balance between legal restrictions and technological developments. In this article, we first present a comprehensive review of AI regulation proposals from different geographical locations and cultural backgrounds. Then, drawing from historical lessons, we develop a framework to facilitate a thorough analysis of AI regulation proposals. Finally, we perform a systematic analysis of these AI regulation proposals to understand how each proposal may fail. This study, containing historical lessons and analysis methods, aims to help governing bodies untangle the AI regulatory chaos in a divide-and-conquer manner.

Autonomy 2.0: The Quest for Economies of Scale

Jul 08, 2023

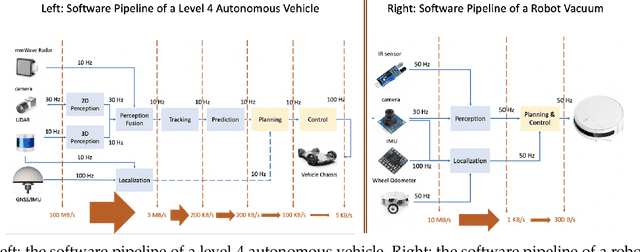

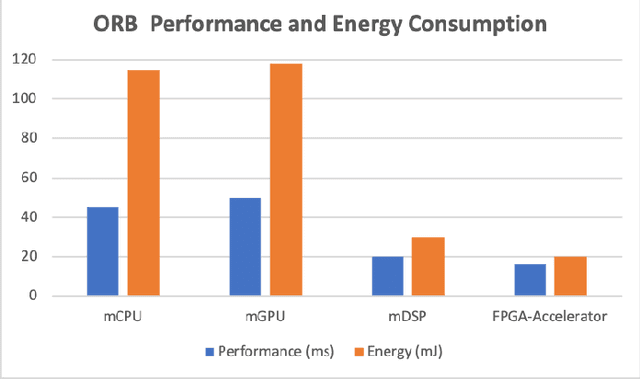

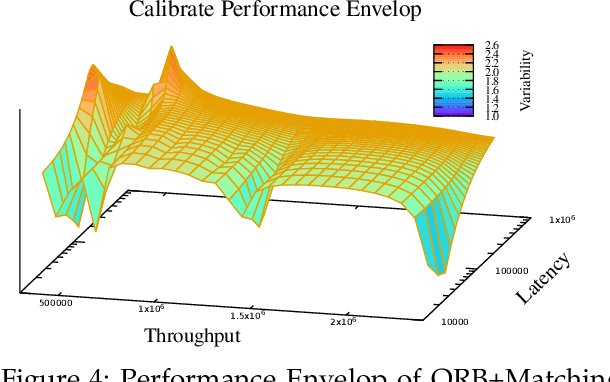

Abstract: With the advancement of robotics and AI technologies in the past decade, we have now entered the age of autonomous machines. In this new age of information technology, autonomous machines, such as service robots, autonomous drones, delivery robots, and autonomous vehicles, rather than humans, will provide services. In this article, by examining the technical challenges and economic impact of the digital economy, we argue that scalability is both highly necessary from a technical perspective and significantly advantageous from an economic perspective, and is thus the key for the autonomy industry to achieve its full potential. Nonetheless, the current development paradigm, dubbed Autonomy 1.0, scales with the number of engineers instead of with the amount of data or compute resources, hence preventing the autonomy industry from fully benefiting from economies of scale, especially the exponentially cheapening compute cost and the explosion of available data. We further analyze the key scalability blockers and explain how a new development paradigm, dubbed Autonomy 2.0, can address these problems to greatly boost the autonomy industry.

AI Clinics on Mobile: Universal AI Doctors for the Underserved and Hard-to-Reach

Jun 17, 2023

Abstract: This paper introduces Artificial Intelligence Clinics on Mobile (AICOM), an open-source project devoted to answering the United Nations Sustainable Development Goal 3 (SDG3) on health, which represents a universal recognition that health is fundamental to human capital and social and economic development. The core motivation for the AICOM project is the fact that over 80% of the people in the least developed countries (LDCs) own a mobile phone, even though less than 40% of these people have internet access. Hence, enabling AI-based disease diagnostics and screening capability on affordable mobile phones without connectivity will be a critical first step toward addressing healthcare access problems. The technologies developed in the AICOM project achieve exactly this goal, and we have demonstrated the effectiveness of AICOM on monkeypox screening tasks. We plan to continue expanding and open-sourcing the AICOM platform, aiming for it to evolve into a universal AI doctor for the Underserved and Hard-to-Reach.

Compliance Costs of AI Technology Commercialization: A Field Deployment Perspective

Jan 31, 2023

Abstract: While Artificial Intelligence (AI) technologies are progressing fast, compliance costs have become a huge financial burden for AI startups, which are already constrained in their research and development budgets. This situation creates a compliance trap, as many AI startups are not financially prepared to cope with a broad spectrum of regulatory requirements. In particular, the complex and varying regulatory processes across the globe subtly give advantages to well-established and resourceful technology firms over resource-constrained AI startups [1]. The continuation of this trend may phase out the majority of AI startups and lead to giant technology firms' monopolies of AI technologies. To demonstrate the reality of the compliance trap, we delve into the details of the compliance costs of AI commercial operations from a field deployment perspective.

Thales: Formulating and Estimating Architectural Vulnerability Factors for DNN Accelerators

Dec 05, 2022

Abstract: As Deep Neural Networks (DNNs) are increasingly deployed in safety-critical and privacy-sensitive applications such as autonomous driving and biometric authentication, it is critical to understand the fault-tolerance nature of DNNs. Prior work primarily focuses on metrics such as the Failures In Time (FIT) rate and the Silent Data Corruption (SDC) rate, which quantify how often a device fails. Instead, this paper focuses on quantifying the DNN accuracy given that a transient error has occurred, which tells us how well a network behaves when a transient error occurs. We call this metric Resiliency Accuracy (RA). We show that the existing RA formulation is fundamentally inaccurate, because it incorrectly assumes that software variables (model weights/activations) have an equal probability of being faulty under hardware transient faults. We present an algorithm that captures the faulty probabilities of DNN variables under transient faults and, thus, provides correct RA estimations validated by hardware. To accelerate RA estimation, we reformulate RA calculation as a Monte Carlo integration problem, and solve it using importance sampling driven by DNN-specific heuristics. Using our lightweight RA estimation method, we show that transient faults lead to far greater accuracy degradation than what today's DNN resiliency tools estimate. We show how our RA estimation tool can help design more resilient DNNs by integrating it with a Network Architecture Search framework.
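To make the phrase "Monte Carlo integration solved with importance sampling" concrete, here is a generic sketch of the estimator on a toy discrete problem. It is not the Thales algorithm: the fault probabilities `p`, the per-variable accuracies `acc`, and both proposal distributions are made-up numbers, and the paper's DNN-specific heuristics for choosing the proposal are not reproduced here.

```python
import random


def importance_sampling_estimate(p, q, f, n_samples, seed=0):
    """Estimate sum_i p[i] * f(i) by drawing i ~ q and weighting by p[i] / q[i].

    p: target probabilities (here: chance that variable i is the faulty one)
    q: proposal probabilities, must be > 0 wherever p[i] * f(i) != 0
    f: function mapping an index to the quantity being averaged
    """
    rng = random.Random(seed)
    indices = list(range(len(p)))
    draws = rng.choices(indices, weights=q, k=n_samples)
    return sum(p[i] / q[i] * f(i) for i in draws) / n_samples


# Hypothetical toy numbers: four variables with unequal fault probabilities,
# and the model accuracy observed when each one is corrupted.
p = [0.7, 0.2, 0.08, 0.02]
acc = [0.9, 0.8, 0.3, 0.1]
exact = sum(pi * ai for pi, ai in zip(p, acc))

# A uniform proposal gives an unbiased but noisy estimate.
uniform_q = [0.25] * 4
est_uniform = importance_sampling_estimate(p, uniform_q, lambda i: acc[i], 20000)

# A proposal proportional to p[i] * acc[i] makes every weighted sample equal
# to the exact answer, so the estimator has zero variance in this toy case.
ideal_q = [pi * ai / exact for pi, ai in zip(p, acc)]
est_ideal = importance_sampling_estimate(p, ideal_q, lambda i: acc[i], 100)
```

The point of the comparison is that the closer the proposal tracks the product of fault probability and impact, the fewer samples are needed. In practice the ideal proposal is unknown, which is where heuristics come in.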

AICOM-MP: an AI-based Monkeypox Detector for Resource-Constrained Environments

Nov 21, 2022

Abstract: Under the Autonomous Mobile Clinics (AMCs) initiative, we are developing, open sourcing, and standardizing health AI technologies to enable healthcare access in least developed countries (LDCs). We regard AMCs as the next generation of health care delivery platforms, whereas health AI engines are applications on these platforms, similar to how various applications expand the usage scenarios of smartphones. In response to the recent global monkeypox outbreak, in this article we introduce AICOM-MP, an AI-based monkeypox detector specifically aimed at handling images taken from resource-constrained devices. Compared to existing AI-based monkeypox detectors, AICOM-MP has achieved state-of-the-art (SOTA) performance. We have hosted AICOM-MP as a web service to allow universal access to monkeypox screening technology. We have also open sourced both the source code and the dataset of AICOM-MP to allow health AI professionals to integrate AICOM-MP into their services. Also, through the AICOM-MP project, we have generalized a methodology for developing health AI technologies for AMCs to allow universal access even in resource-constrained environments.

INTERNEURON: A Middleware with Multi-Network Communication Reliability for Infrastructure Vehicle Cooperative Autonomous Driving

Oct 28, 2022

Abstract: Infrastructure-Vehicle Cooperative Autonomous Driving (IVCAD) is a new paradigm of autonomous driving, which relies on the cooperation between intelligent roads and autonomous vehicles. This paradigm has been shown to be safer and more efficient compared to the on-vehicle-only autonomous driving paradigm. Our real-world deployment data indicates that the effectiveness of IVCAD is constrained by the reliability and performance of commercial communication networks. This paper targets this exact problem, and proposes INTERNEURON, a middleware to achieve high communication reliability between intelligent roads and autonomous vehicles, in the context of IVCAD. Specifically, INTERNEURON dynamically matches IVCAD applications and the underlying communication technologies based on varying communication performance and quality needs. Evaluation results confirm that INTERNEURON reduces deadline violations by more than 95%, significantly improving the reliability of IVCAD systems.

Factor Graph Accelerator for LiDAR-Inertial Odometry

Sep 06, 2022

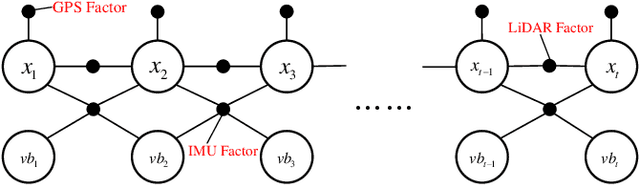

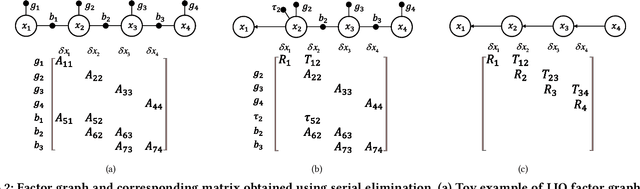

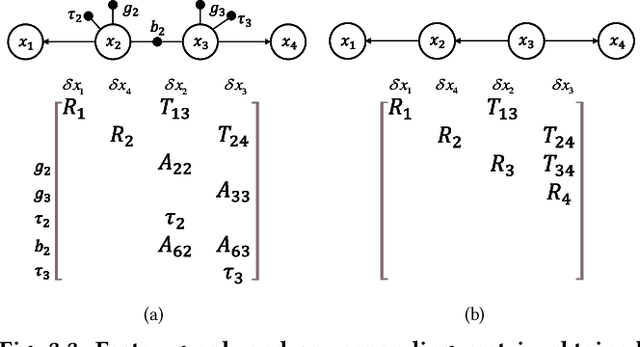

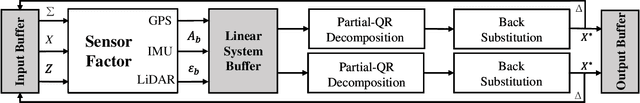

Abstract: A factor graph is a graph representing the factorization of a probability distribution function, and has been utilized in many autonomous machine computing tasks, such as localization, tracking, planning, and control. We are developing an architecture with the goal of using the factor graph as a common abstraction for most, if not all, autonomous machine computing tasks. If successful, the architecture would provide a very simple interface for mapping autonomous machine functions to the underlying compute hardware. As a first step of such an attempt, this paper presents our most recent work on developing a factor graph accelerator for LiDAR-Inertial Odometry (LIO), an essential task in many autonomous machines, such as autonomous vehicles and mobile robots. By modeling LIO as a factor graph, the proposed accelerator not only supports multi-sensor fusion of LiDAR, inertial measurement unit (IMU), GPS, and other sensors, but also solves the global optimization problem of robot navigation in batch or incremental modes. Our evaluation demonstrates that the proposed design significantly improves the real-time performance and energy efficiency of autonomous machine navigation systems. The initial success suggests the potential of generalizing the factor graph architecture as a common abstraction for autonomous machine computing, including tracking, planning, and control.
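For readers unfamiliar with the term, the factorization mentioned in the abstract is commonly written as follows. This is the standard textbook formulation under a Gaussian noise assumption, not notation taken from the paper; the symbols $X$, $\phi_i$, $h_i$, $z_i$, and $\Sigma_i$ are introduced here for illustration.

```latex
% Joint density over the unknown states X as a product of local factors,
% each depending only on a subset X_i of the variables:
p(X) \;\propto\; \prod_{i} \phi_i(X_i)

% With Gaussian measurement factors (an assumption), where h_i predicts
% measurement z_i with covariance \Sigma_i:
\phi_i(X_i) \;\propto\; \exp\!\left(-\tfrac{1}{2}\,\lVert h_i(X_i) - z_i \rVert^{2}_{\Sigma_i}\right)

% Maximizing p(X) is then a nonlinear least-squares problem:
X^{\ast} \;=\; \arg\min_{X} \sum_{i} \lVert h_i(X_i) - z_i \rVert^{2}_{\Sigma_i}
```

Under this reading, each sensor (LiDAR, IMU, GPS) contributes its own factors, and batch or incremental solving refers to how the least-squares problem is re-solved as new factors arrive.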

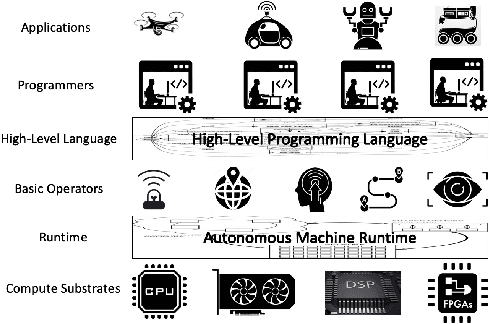

Programming Autonomous Machines

Sep 06, 2022

Abstract: One key technical challenge in the age of autonomous machines is the programming of autonomous machines, which demands synergy across multiple domains, including fundamental computer science, computer architecture, and robotics, and requires expertise from both academia and industry. This paper discusses the programming theory and practices tied to producing real-life autonomous machines, and covers aspects from high-level concepts down to low-level code generation in the context of the specific functional requirements, performance expectations, and implementation constraints of autonomous machines.
