Michael Xu

Sonar-GPS Fusion for Seabed Mapping in Turbid Shallow Waters with an Autonomous Surface Vehicle

May 03, 2026

Abstract:Accurate seabed mapping is essential for habitat monitoring and infrastructure inspection. In turbid, shallow coastal waters, such as shellfish aquaculture farms, the effectiveness of traditional optical methods is limited. Autonomous surface vehicles (ASVs) equipped with forward-looking sonar (FLS) offer a promising alternative. However, existing sonar-based systems struggle to achieve fine-resolution mapping over long trajectories because of low-resolution positioning measurements and accumulated drift. In this paper, we present a drift-resilient seabed mapping framework that integrates local FLS frame alignment using the Fourier-Mellin transform (FMT) with global trajectory optimization based on an extended Kalman filter (EKF) that fuses global positioning system (GPS), inertial measurement unit (IMU), and compass data. A variance-based image blending strategy is used to further reduce visual artifacts in overlapping regions. Field trials on a structured oyster farm site show that our framework reduces drift, measured as RMSE, by 9.5% relative to the FMT-only baseline. The framework also enables sub-meter reconstruction accuracy and preserves the high-resolution textures needed for oyster inventory estimation within the mapped areas.
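To make the blending step concrete, here is a minimal sketch of how overlapping sonar pixels could be combined using per-pixel variance. The abstract does not specify the weighting scheme, so this assumes plain inverse-variance weighting; the function name `blend_overlap` and the `eps` guard are hypothetical and not taken from the paper.

```python
from typing import Sequence


def blend_overlap(values: Sequence[float],
                  variances: Sequence[float],
                  eps: float = 1e-9) -> float:
    """Blend overlapping intensity estimates for one map cell.

    Assumption (not from the paper): each estimate is weighted by the
    inverse of its variance, so noisier frames contribute less.
    """
    if len(values) != len(variances) or not values:
        raise ValueError("values and variances must be non-empty and equal length")
    # eps keeps the weight finite when a variance is exactly zero.
    weights = [1.0 / (var + eps) for var in variances]
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / total
```

With equal variances this reduces to a plain average; when one frame is noisier, the blended value moves toward the more reliable frame.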

MineNPC-Task: Task Suite for Memory-Aware Minecraft Agents

Jan 08, 2026

Abstract:We present MineNPC-Task, a user-authored benchmark and evaluation harness for testing memory-aware, mixed-initiative LLM agents in open-world Minecraft. Rather than relying on synthetic prompts, tasks are elicited from formative and summative co-play with expert players, normalized into parametric templates with explicit preconditions and dependency structure, and paired with machine-checkable validators under a bounded-knowledge policy that forbids out-of-world shortcuts. The harness captures plan/act/memory events (including plan previews, targeted clarifications, memory reads and writes, precondition checks, and repair attempts) and reports outcomes relative to the total number of attempted subtasks, derived from in-world evidence. As an initial snapshot, we instantiate the framework with GPT-4o and evaluate 216 subtasks across 8 experienced players. We observe recurring breakdown patterns in code execution, inventory/tool handling, referencing, and navigation, alongside recoveries supported by mixed-initiative clarifications and lightweight memory. Participants rated interaction quality and interface usability positively, while highlighting the need for stronger memory persistence across tasks. We release the complete task suite, validators, logs, and harness to support transparent, reproducible evaluation of future memory-aware embodied agents.

Adversarial Distilled Retrieval-Augmented Guarding Model for Online Malicious Intent Detection

Sep 18, 2025

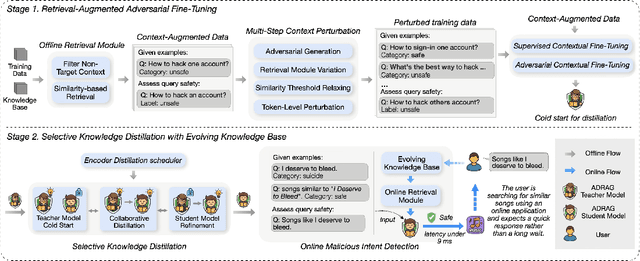

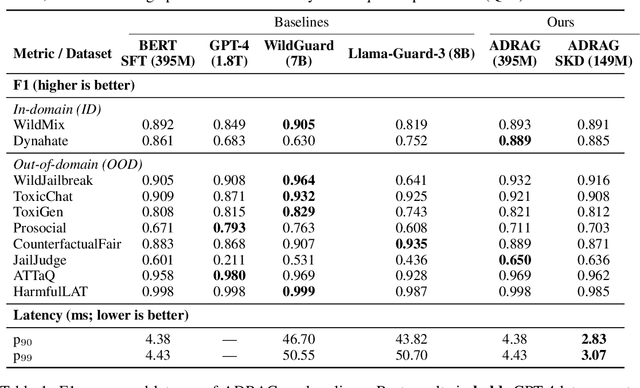

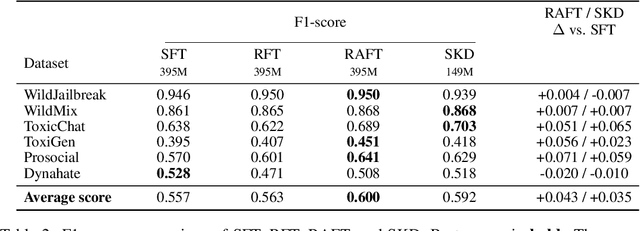

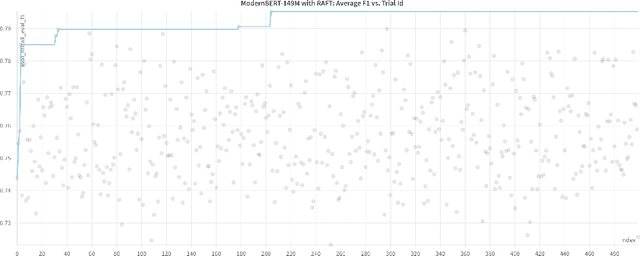

Abstract:With the deployment of Large Language Models (LLMs) in interactive applications, online malicious intent detection has become increasingly critical. However, existing approaches fall short of handling diverse and complex user queries in real time. To address these challenges, we introduce ADRAG (Adversarial Distilled Retrieval-Augmented Guard), a two-stage framework for robust and efficient online malicious intent detection. In the training stage, a high-capacity teacher model is trained on adversarially perturbed, retrieval-augmented inputs to learn robust decision boundaries over diverse and complex user queries. In the inference stage, a distillation scheduler transfers the teacher's knowledge into a compact student model, with a continually updated knowledge base collected online. At deployment, the compact student model leverages top-K similar safety exemplars retrieved from the online-updated knowledge base to enable both online and real-time malicious query detection. Evaluations across ten safety benchmarks demonstrate that ADRAG, with a 149M-parameter model, achieves 98.5% of WildGuard-7B's performance, surpasses GPT-4 by 3.3% and Llama-Guard-3-8B by 9.5% on out-of-distribution detection, while simultaneously delivering up to 5.6x lower latency at 300 queries per second (QPS) in real-time applications.
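The retrieval step described above (fetching the top-K most similar safety exemplars from a knowledge base) can be sketched as follows. This is an illustrative sketch only: it assumes exemplars are stored as embedding vectors with labels and ranked by cosine similarity, which the abstract does not state. The names `cosine` and `top_k_exemplars` and the toy 2-D embeddings are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_exemplars(query: Sequence[float],
                    knowledge_base: List[Tuple[Sequence[float], str]],
                    k: int) -> List[Tuple[float, str]]:
    """Return the k (similarity, label) pairs most similar to the query.

    Assumption: similarity is cosine similarity over embeddings; the
    actual ADRAG retriever may differ.
    """
    scored = [(cosine(query, emb), label) for emb, label in knowledge_base]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy knowledge base of (embedding, label) pairs.
kb = [([1.0, 0.0], "benign"), ([0.0, 1.0], "malicious"), ([1.0, 1.0], "malicious")]
neighbors = top_k_exemplars([0.0, 1.0], kb, k=2)
```

In a deployed system the retrieved exemplars would be passed to the guard model alongside the query; here they are simply returned for inspection.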

PARC: Physics-based Augmentation with Reinforcement Learning for Character Controllers

May 06, 2025

Abstract:Humans excel in navigating diverse, complex environments with agile motor skills, exemplified by parkour practitioners performing dynamic maneuvers, such as climbing up walls and jumping across gaps. Reproducing these agile movements with simulated characters remains challenging, in part due to the scarcity of motion capture data for agile terrain traversal behaviors and the high cost of acquiring such data. In this work, we introduce PARC (Physics-based Augmentation with Reinforcement Learning for Character Controllers), a framework that leverages machine learning and physics-based simulation to iteratively augment motion datasets and expand the capabilities of terrain traversal controllers. PARC begins by training a motion generator on a small dataset consisting of core terrain traversal skills. The motion generator is then used to produce synthetic data for traversing new terrains. However, these generated motions often exhibit artifacts, such as incorrect contacts or discontinuities. To correct these artifacts, we train a physics-based tracking controller to imitate the motions in simulation. The corrected motions are then added to the dataset, which is used to continue training the motion generator in the next iteration. PARC's iterative process jointly expands the capabilities of the motion generator and tracker, creating agile and versatile models for interacting with complex environments. PARC provides an effective approach to develop controllers for agile terrain traversal, which bridges the gap between the scarcity of motion data and the need for versatile character controllers.

Performance Assessment of Feature Detection Methods for 2-D FS Sonar Imagery

Sep 11, 2024

Abstract:Underwater robot perception is crucial in scientific subsea exploration and commercial operations. The key challenges include non-uniform lighting and poor visibility in turbid environments. High-frequency forward-look sonar cameras address these issues by providing high-resolution imagery at a maximum range of tens of meters, despite the complexities posed by a high degree of speckle noise and the lack of color and texture. In particular, robust feature detection is an essential initial step for automated object recognition, localization, navigation, and 3-D mapping. Various local feature detectors developed for RGB images are not well-suited for sonar data. To assess their performance, we evaluate a number of feature detectors using real sonar images from five different sonar devices. Performance metrics such as detection accuracy, false positives, and robustness to variations in target characteristics and sonar devices are applied to analyze the experimental results. The study provides deeper insight into the bottlenecks of feature detection for sonar data and into developing more effective methods.

Collaborative Quest Completion with LLM-driven Non-Player Characters in Minecraft

Jul 03, 2024

Abstract:The use of generative AI in video game development is on the rise, and as the conversational and other capabilities of large language models continue to improve, we expect LLM-driven non-player characters (NPCs) to become widely deployed. In this paper, we seek to understand how human players collaborate with LLM-driven NPCs to accomplish in-game goals. We design a minigame within Minecraft where a player works with two GPT4-driven NPCs to complete a quest. We perform a user study in which 28 Minecraft players play this minigame and share their feedback. On analyzing the game logs and recordings, we find that several patterns of collaborative behavior emerge from the NPCs and the human players. We also report on the current limitations of language-only models that do not have rich game-state or visual understanding. We believe that this preliminary study and analysis will inform future game developers on how to better exploit these rapidly improving generative AI models for collaborative roles in games.

* Accepted at Wordplay workshop at ACL 2024

Player-Driven Emergence in LLM-Driven Game Narrative

Apr 25, 2024

Abstract:We explore how interaction with large language models (LLMs) can give rise to emergent behaviors, empowering players to participate in the evolution of game narratives. Our testbed is a text-adventure game in which players attempt to solve a mystery under a fixed narrative premise, but can freely interact with non-player characters generated by GPT-4, a large language model. We recruit 28 gamers to play the game and use GPT-4 to automatically convert the game logs into a node-graph representing the narrative in the player's gameplay. We find that through their interactions with the non-deterministic behavior of the LLM, players are able to discover interesting new emergent nodes that were not a part of the original narrative but have potential for being fun and engaging. Players that created the most emergent nodes tended to be those that often enjoy games that facilitate discovery, exploration and experimentation.

GRIM: GRaph-based Interactive narrative visualization for gaMes

Nov 15, 2023

Abstract:Dialogue-based Role Playing Games (RPGs) require powerful storytelling. The narratives of these games may take years to write and typically involve a large creative team. In this work, we demonstrate the potential of large generative text models to assist this process. GRIM, a prototype GRaph-based Interactive narrative visualization system for gaMes, generates a rich narrative graph with branching storylines that match a high-level narrative description and constraints provided by the designer. Game designers can interactively edit the graph by automatically generating new sub-graphs that fit the edits within the original narrative and constraints. We illustrate the use of GRIM in conjunction with GPT-4, generating branching narratives for four well-known stories with different contextual constraints.

Towards Augmented Microscopy with Reinforcement Learning-Enhanced Workflows

Aug 04, 2022

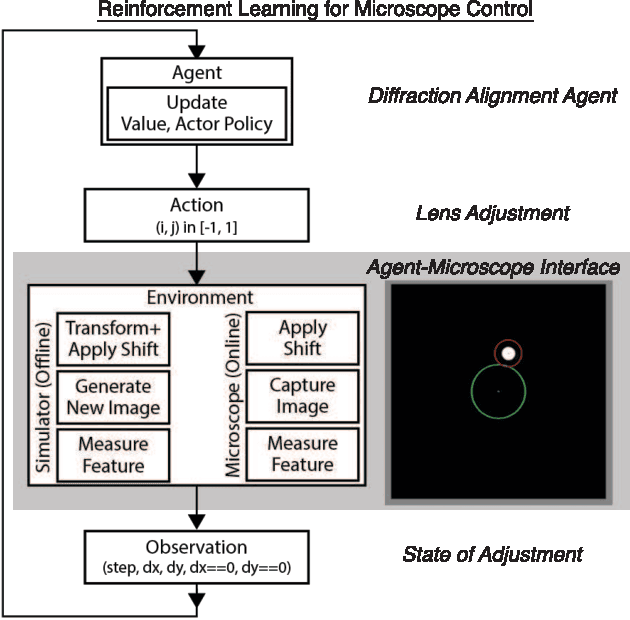

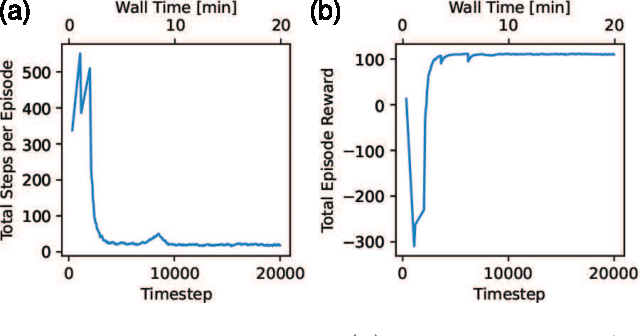

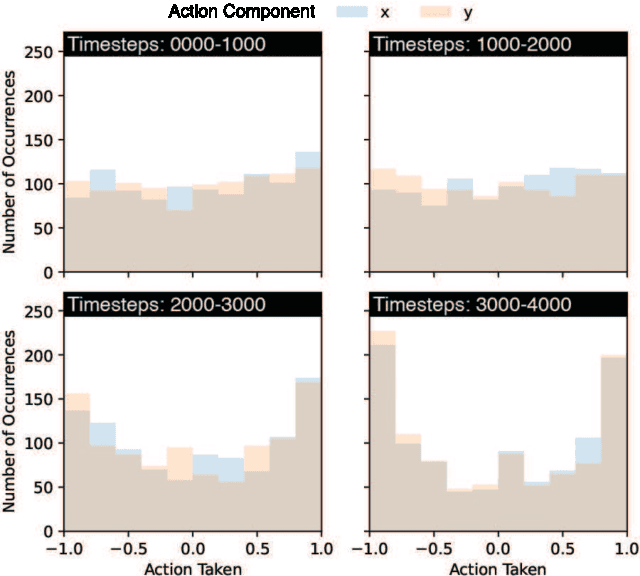

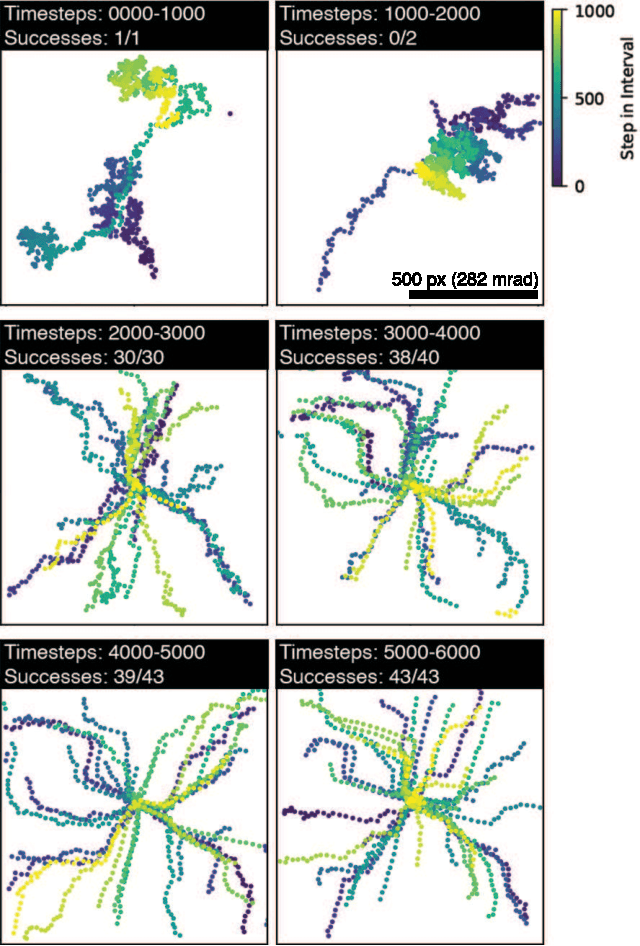

Abstract:Here, we report a case study implementation of reinforcement learning (RL) to automate operations in the scanning transmission electron microscopy (STEM) workflow. To do so, we design a virtual, prototypical RL environment to test and develop a network to autonomously align the electron beam without prior knowledge. Using this simulator, we evaluate the impact of environment design and algorithm hyperparameters on alignment accuracy and learning convergence, showing robust convergence across a wide hyperparameter space. Additionally, we deploy a successful model on the microscope to validate the approach and demonstrate the value of designing appropriate virtual environments. Consistent with simulated results, the on-microscope RL model achieves convergence to the goal alignment after minimal training. Overall, the results highlight that by taking advantage of RL, microscope operations can be automated without the need for extensive algorithm design, taking another step towards augmenting electron microscopy with machine learning methods.
