Maria Bauza

Experience-Embedded Visual Foresight

Nov 17, 2019

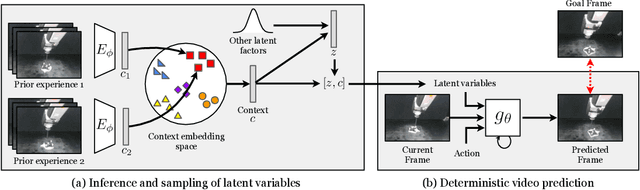

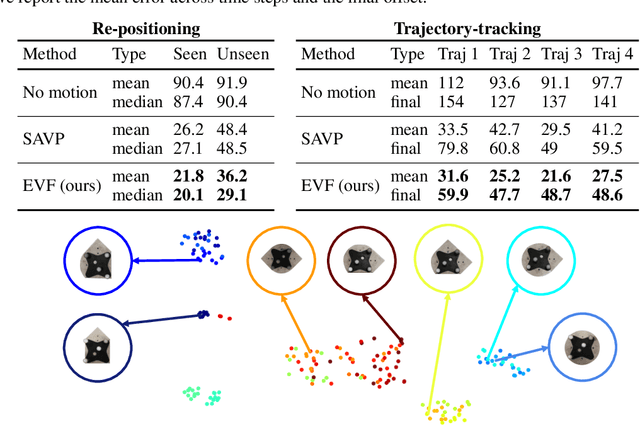

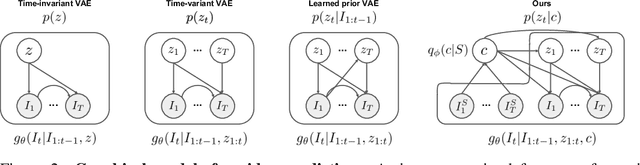

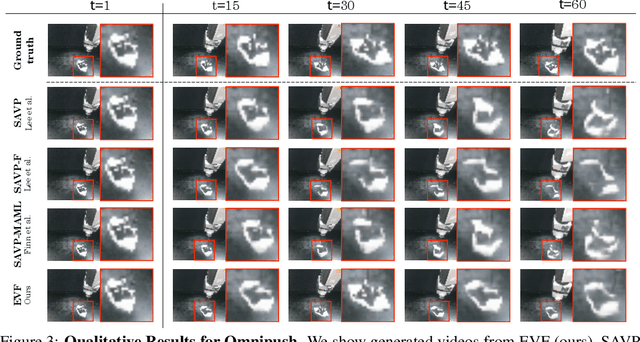

Abstract: Visual foresight gives an agent a window into the future, which it can use to anticipate events before they happen and plan strategic behavior. Although impressive results have been achieved on video prediction in constrained settings, these models fail to generalize when confronted with unfamiliar real-world objects. In this paper, we tackle the generalization problem via fast adaptation, where we train a prediction model to quickly adapt to the observed visual dynamics of a novel object. Our method, Experience-embedded Visual Foresight (EVF), jointly learns a fast adaptation module, which encodes observed trajectories of the new object into a vector embedding, and a visual prediction model, which conditions on this embedding to generate physically plausible predictions. For evaluation, we compare our method against baselines on video prediction and benchmark its utility on two real-world control tasks. We show that our method is able to quickly adapt to new visual dynamics and achieves lower error than the baselines when manipulating novel objects.

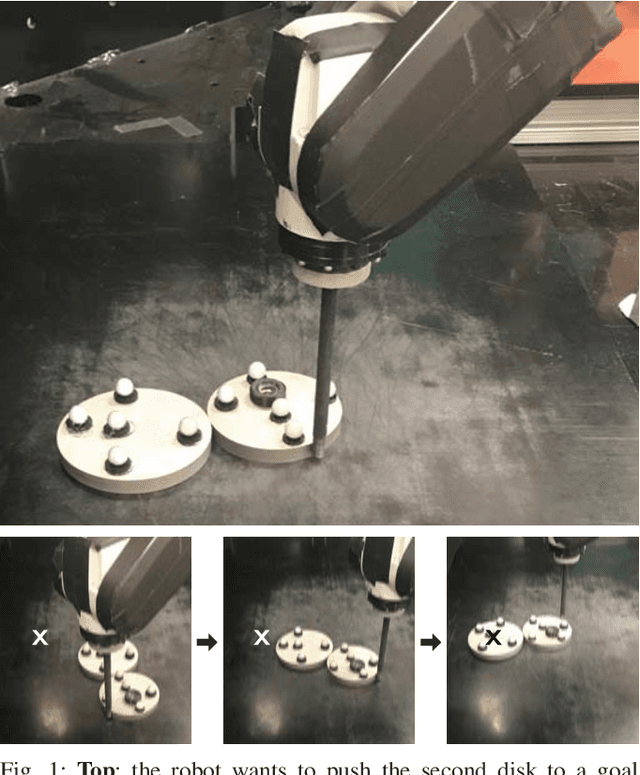

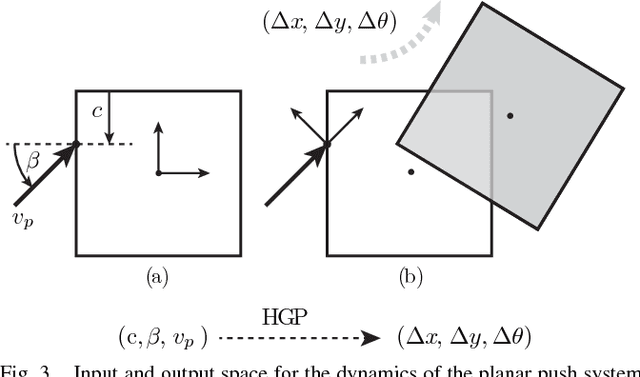

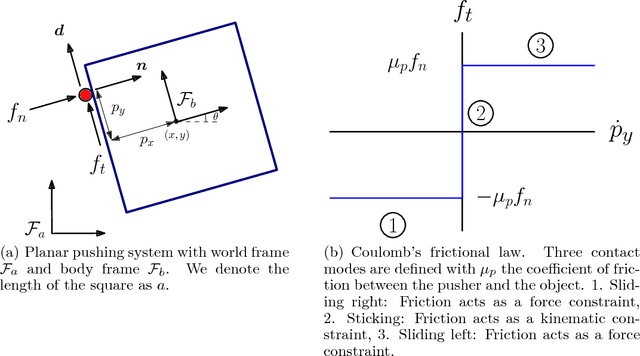

Accurate Vision-based Manipulation through Contact Reasoning

Nov 08, 2019

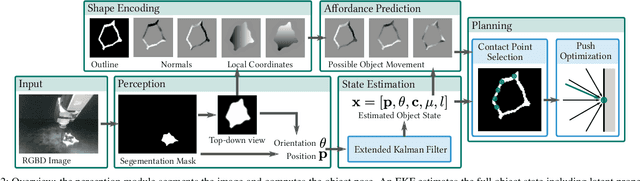

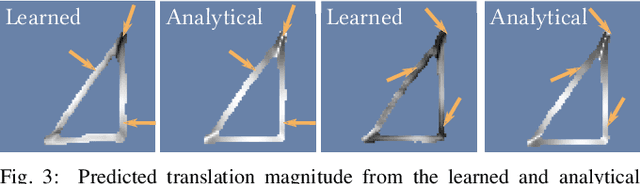

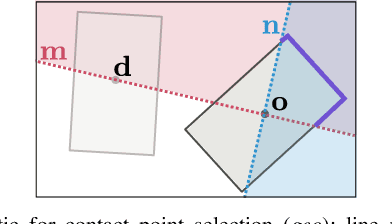

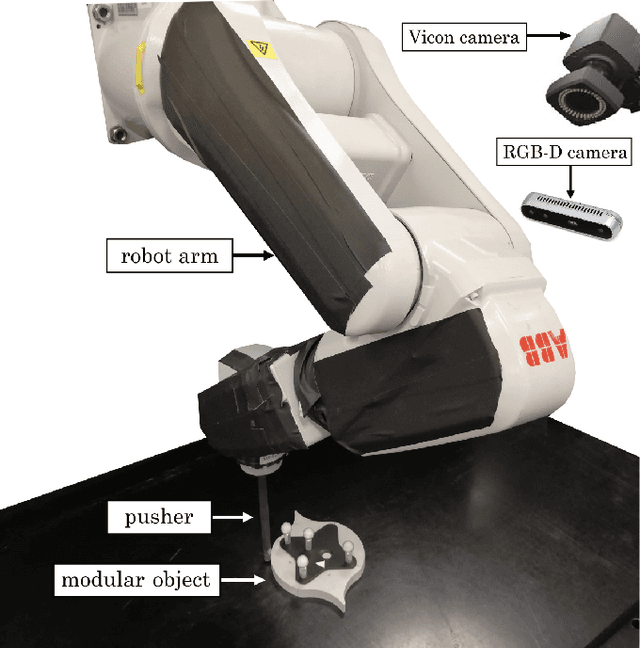

Abstract: Planning contact interactions is one of the core challenges of many robotic tasks. Optimizing contact locations while taking dynamics into account is computationally costly, and in partially observed environments, executing contact-based tasks often suffers from low accuracy. We present an approach that addresses these two challenges for the problem of vision-based manipulation. First, we propose to disentangle contact from motion optimization, which improves planning efficiency by focusing computation on promising contact locations. Second, we use a hybrid approach for perception and state estimation that combines neural networks with a physically meaningful state representation. In simulation and real-world experiments on the task of planar pushing, we show that our method is more efficient and achieves higher manipulation accuracy than previous vision-based approaches.

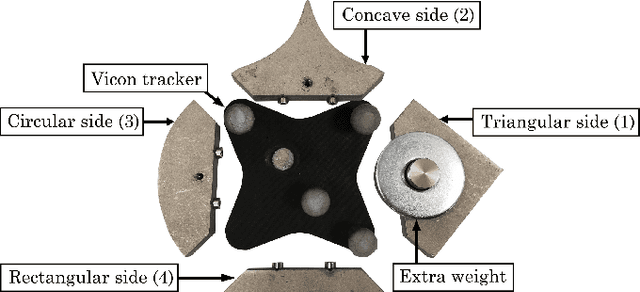

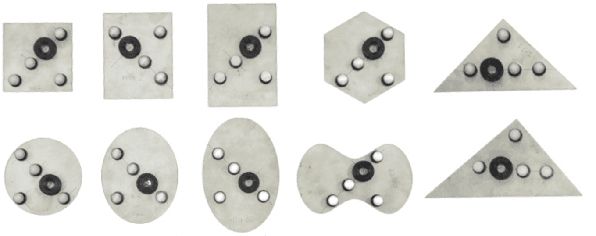

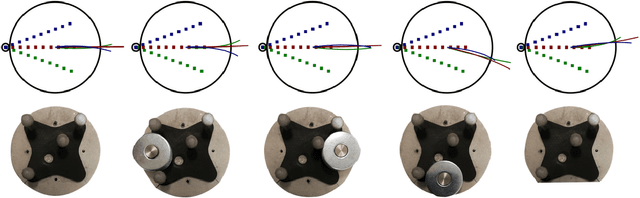

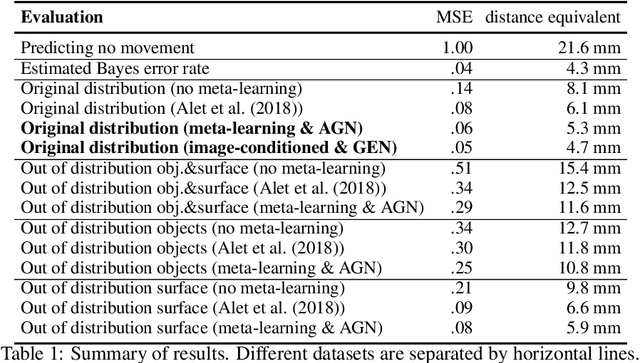

Omnipush: accurate, diverse, real-world dataset of pushing dynamics with RGB-D video

Oct 01, 2019

Abstract: Pushing is a fundamental robotic skill. Existing work has shown how to exploit models of pushing to achieve a variety of tasks, including grasping under uncertainty, in-hand manipulation and clearing clutter. Such models, however, are approximate, which limits their applicability. Learning-based methods can reason directly from raw sensory data with accuracy, and have the potential to generalize to a wider diversity of scenarios. However, developing and testing such methods requires sufficiently rich datasets. In this paper we introduce Omnipush, a dataset with a high variety of planar pushing behavior. In particular, we provide 250 pushes for each of 250 objects, all recorded with RGB-D and a high-precision tracking system. The objects are constructed to systematically explore key factors that affect pushing (the shape of the object and its mass distribution), which have not been broadly explored in previous datasets, and to allow the study of generalization in model learning. Omnipush includes a benchmark for meta-learning dynamic models, which requires algorithms that make good predictions and estimate their own uncertainty. We also provide an RGB video prediction benchmark and propose other relevant tasks for which this dataset is suited. Data and code are available at https://web.mit.edu/mcube/omnipush-dataset/.

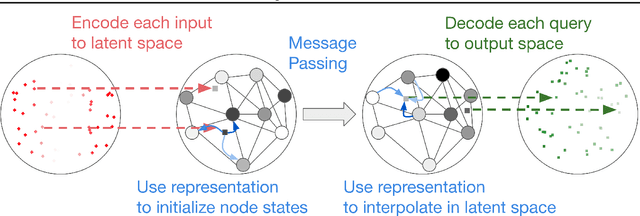

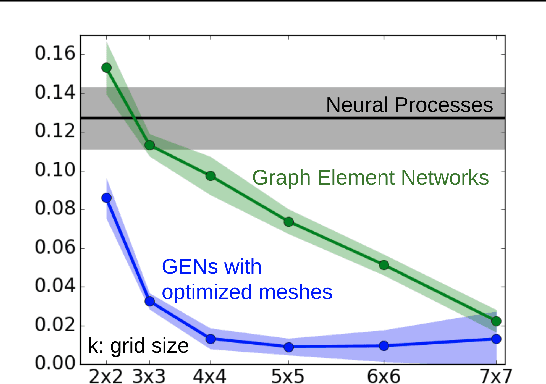

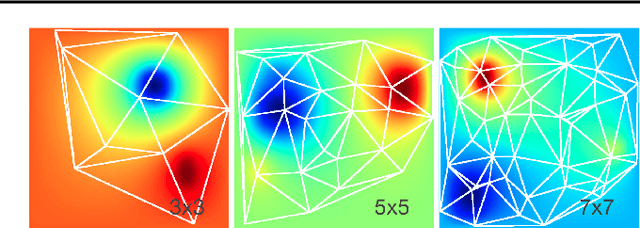

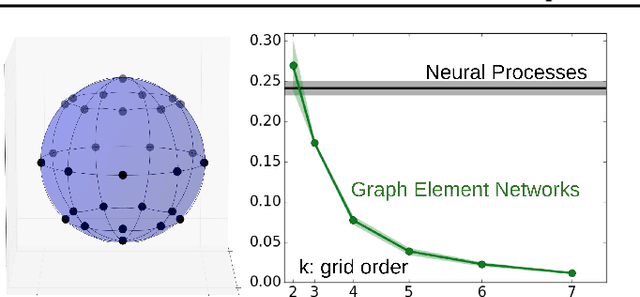

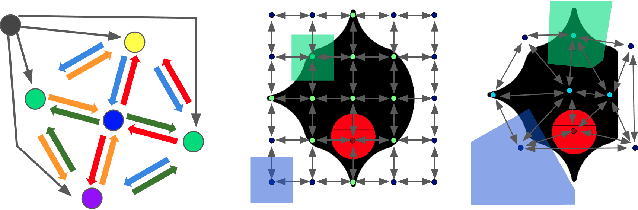

Graph Element Networks: adaptive, structured computation and memory

May 13, 2019

Abstract: We explore the use of graph neural networks (GNNs) to model spatial processes in which there is no a priori graphical structure. Similar to finite element analysis, we assign nodes of a GNN to spatial locations and use a computational process defined on the graph to model the relationship between an initial function defined over a space and a resulting function in the same space. We use GNNs as a computational substrate, and show that the locations of the nodes in space as well as their connectivity can be optimized to focus on the most complex parts of the space. Moreover, this representational strategy allows the learned input-output relationship to generalize over the size of the underlying space and run the same model at different levels of precision, trading computation for accuracy. We demonstrate this method on a traditional PDE problem, a physical prediction problem from robotics, and learning to predict scene images from novel viewpoints.
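To make the finite-element analogy concrete, here is a minimal one-dimensional sketch of the encode, propagate, decode pattern: input samples are scattered onto nodes placed at chosen positions using piecewise-linear "hat" weights, node states are updated by message passing on a chain graph, and query points are read out by interpolating node states with the same weights. In the paper the update and readout functions are learned and node positions and connectivity can be optimized; here a fixed neighbor-averaging rule stands in for the learned networks, and every function name is hypothetical.

```python
def hat_weights(x, nodes):
    """Finite-element style piecewise-linear weights of x w.r.t. sorted 1-D node positions."""
    w = [0.0] * len(nodes)
    if x <= nodes[0]:
        w[0] = 1.0
        return w
    if x >= nodes[-1]:
        w[-1] = 1.0
        return w
    for i in range(len(nodes) - 1):
        a, b = nodes[i], nodes[i + 1]
        if a <= x <= b:
            t = (x - a) / (b - a)
            w[i], w[i + 1] = 1.0 - t, t
            return w
    return w

def encode(samples, nodes):
    """Scatter (position, value) samples onto nodes as hat-weighted averages."""
    num = [0.0] * len(nodes)
    den = [0.0] * len(nodes)
    for x, v in samples:
        for i, wi in enumerate(hat_weights(x, nodes)):
            num[i] += wi * v
            den[i] += wi
    return [n / d if d > 0 else 0.0 for n, d in zip(num, den)]

def propagate(states, steps, mix=0.5):
    """Message passing on a chain graph (assumes at least two nodes).
    A fixed neighbor-averaging update stands in for learned message/update networks."""
    for _ in range(steps):
        new = []
        for i, s in enumerate(states):
            nbrs = [states[j] for j in (i - 1, i + 1) if 0 <= j < len(states)]
            new.append((1 - mix) * s + mix * sum(nbrs) / len(nbrs))
        states = new
    return states

def decode(x, nodes, states):
    """Read out a query position by interpolating node states with the same hat weights."""
    return sum(wi * s for wi, s in zip(hat_weights(x, nodes), states))

# Usage: the same functions run at two resolutions (3 nodes vs. 5 nodes).
samples = [(i / 10, 2.0) for i in range(11)]
for nodes in ([0.0, 0.5, 1.0], [0.0, 0.25, 0.5, 0.75, 1.0]):
    states = propagate(encode(samples, nodes), steps=3)
    print(len(nodes), decode(0.3, nodes, states))
```

Because node positions enter only through `hat_weights`, moving or adding nodes changes where computation is concentrated without changing the rest of the code, which is the property the abstract exploits to trade computation for accuracy.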

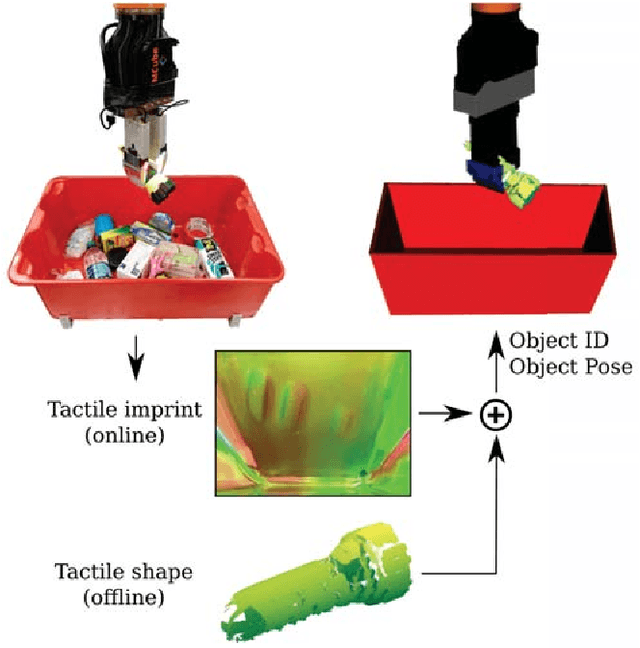

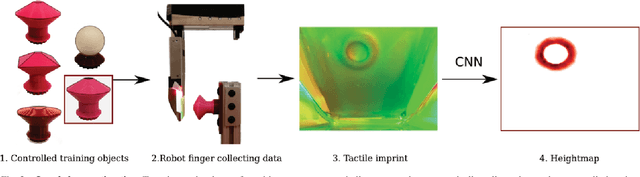

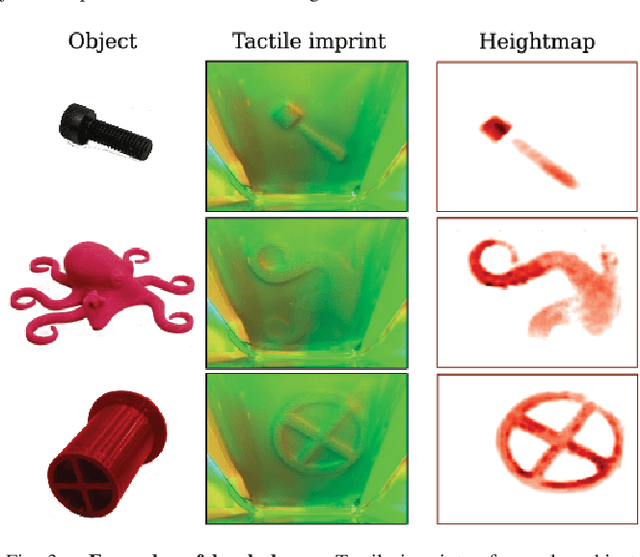

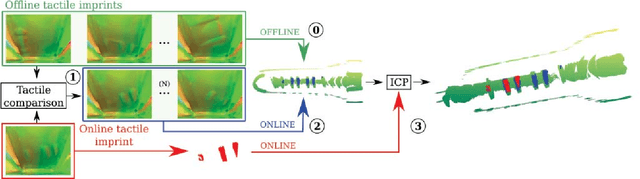

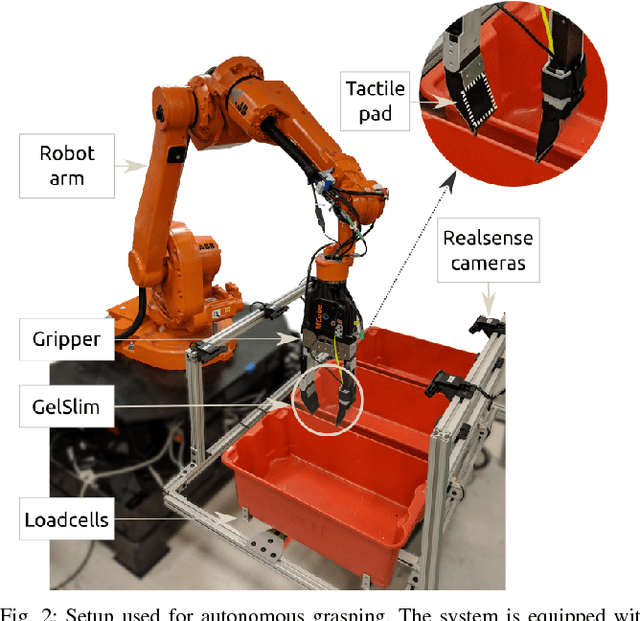

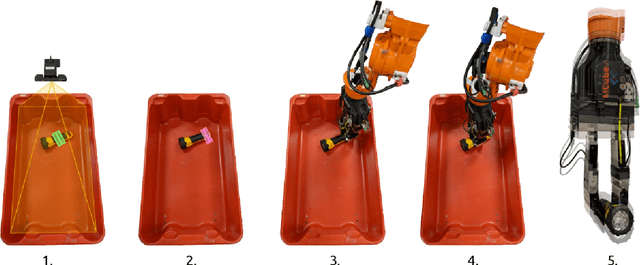

Tactile Mapping and Localization from High-Resolution Tactile Imprints

Apr 24, 2019

Abstract: This work studies the problem of shape reconstruction and object localization using a vision-based tactile sensor, GelSlim. The main contributions are the recovery of local shapes from contact, an approach to reconstruct the tactile shape of objects from tactile imprints, and an accurate method for object localization of previously reconstructed objects. The algorithms can be applied to a large variety of 3D objects and provide accurate tactile feedback for in-hand manipulation. Results show that by exploiting the dense tactile information we can reconstruct the shape of objects with high accuracy and perform online object identification and localization, opening the door to reactive manipulation guided by tactile sensing. We provide videos and supplemental information on the project's website: http://web.mit.edu/mcube/research/tactile_localization.html.

Combining Physical Simulators and Object-Based Networks for Control

Apr 13, 2019

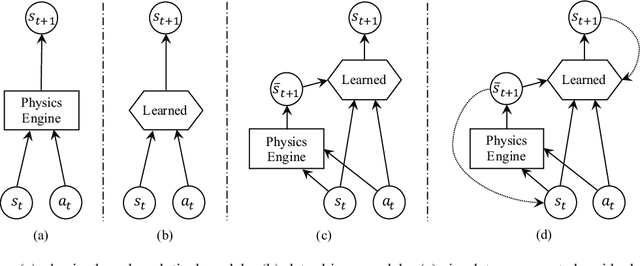

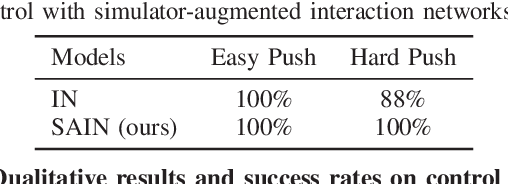

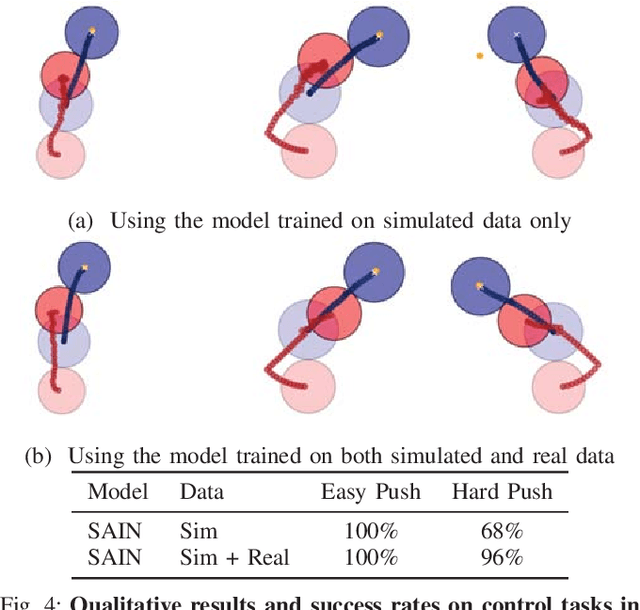

Abstract: Physics engines play an important role in robot planning and control; however, many real-world control problems involve complex contact dynamics that cannot be characterized analytically. Most physics engines therefore employ approximations that lead to a loss in precision. In this paper, we propose a hybrid dynamics model, simulator-augmented interaction networks (SAIN), combining a physics engine with an object-based neural network for dynamics modeling. Compared with existing models that are purely analytical or purely data-driven, our hybrid model captures the dynamics of interacting objects in a more accurate and data-efficient manner. Experiments both in simulation and on a real robot suggest that it also leads to better performance when used in complex control tasks. Finally, we show that our model generalizes to novel environments with varying object shapes and materials.
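One common way to combine an analytic engine with a learned model, and the one assumed here, is to let the learned part predict a correction to the engine's output. The sketch below shows only that residual idea in one dimension: a hypothetical `toy_simulator` plays the physics engine, and a single least-squares gain stands in for the object-based neural network. The function names and the synthetic "real" data are assumptions made for illustration, not SAIN itself.

```python
def toy_simulator(x, u):
    """Hypothetical analytic model: the pushed object moves by exactly the commanded push u."""
    return x + u

def fit_residual_gain(transitions):
    """Least-squares fit of residual = k * u (no intercept), where
    residual = observed next state - simulator prediction."""
    num = sum((x_next - toy_simulator(x, u)) * u for x, u, x_next in transitions)
    den = sum(u * u for _, u, _ in transitions)
    return num / den

def hybrid_predict(x, u, k):
    """Simulator prediction plus the learned correction term."""
    return toy_simulator(x, u) + k * u

# Usage: synthetic "real" data in which friction makes the object travel only 70% of the push.
transitions = [(0.1 * i, 0.5 + 0.05 * i, 0.1 * i + 0.7 * (0.5 + 0.05 * i)) for i in range(10)]
k = fit_residual_gain(transitions)
print(k, hybrid_predict(2.0, 0.4, k))
```

The learned part only has to capture what the engine gets wrong, which is one intuition for why such hybrids can be more data-efficient than a purely data-driven model.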

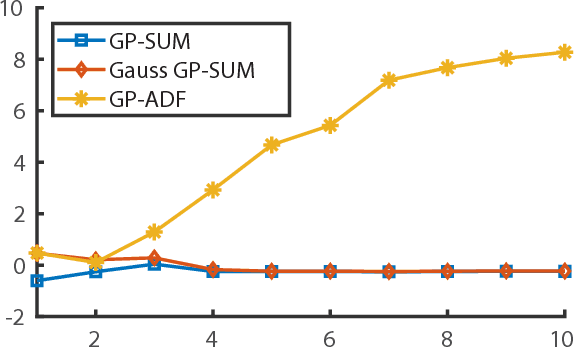

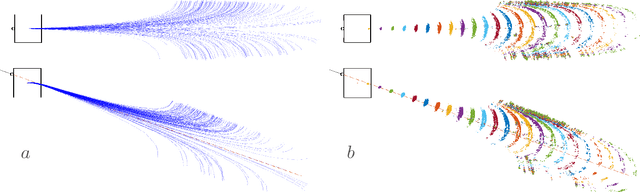

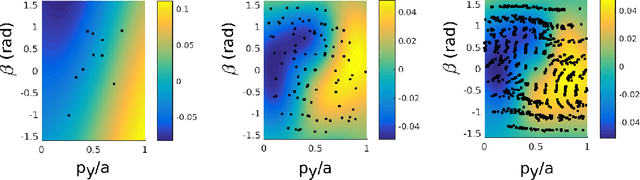

GP-SUM. Gaussian Processes Filtering of non-Gaussian Beliefs

Jan 30, 2019

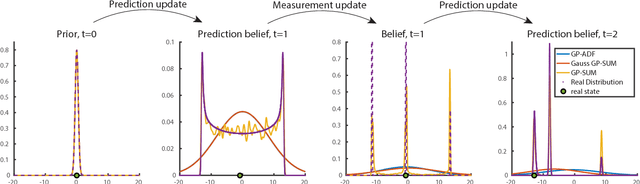

Abstract: This work studies the problem of stochastic dynamic filtering and state propagation with complex beliefs. The main contribution is GP-SUM, a filtering algorithm tailored to dynamic systems and observation models expressed as Gaussian Processes (GP), and to states represented as a weighted sum of Gaussians. The key attribute of GP-SUM is that it does not rely on linearizations of the dynamic or observation models, or on unimodal Gaussian approximations of the belief, and hence it can track complex state distributions. The algorithm can be seen as a combination of a sampling-based filter and a probabilistic Bayes filter. On the one hand, GP-SUM operates by sampling the state distribution and propagating each sample through the dynamic system and observation models. On the other hand, it achieves effective sampling and accurate probabilistic propagation by relying on the GP form of the system and the sum-of-Gaussians form of the belief. We show that GP-SUM outperforms several GP-Bayes and particle filters on a standard benchmark. We also demonstrate its use in a pushing task, predicting with experimental accuracy the naturally occurring non-Gaussian distributions.
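The prediction and update cycle described in this abstract can be sketched in one dimension. The belief is a list of weighted Gaussians; the prediction step samples states from it and maps each sample to the Gaussian returned by the dynamics model, so the propagated belief is again a sum of Gaussians. Assumptions, not taken from the paper: `toy_gp_dynamics` is a hypothetical closed-form stand-in for a trained GP's predictive mean and variance, and the observation model is taken to be linear with Gaussian noise so that each component can be corrected in closed form (GP-SUM itself uses GP observation models).

```python
import math
import random

# A belief is a list of (weight, mean, variance) triples: a weighted sum of 1-D Gaussians.

def toy_gp_dynamics(x):
    """Hypothetical stand-in for a GP dynamics model: Gaussian predictive
    (mean, variance) of the next state given the current state x."""
    return 0.9 * x + 0.1 * math.sin(x), 0.05

def propagate(belief, n_samples, rng):
    """Prediction step: sample states from the mixture and map each sample to the
    Gaussian predicted by the dynamics model; the result is again a sum of Gaussians."""
    weights = [w for w, _, _ in belief]
    new_belief = []
    for _ in range(n_samples):
        _, mu, var = rng.choices(belief, weights=weights)[0]
        x = rng.gauss(mu, math.sqrt(var))
        m, v = toy_gp_dynamics(x)
        new_belief.append((1.0 / n_samples, m, v))
    return new_belief

def observe(belief, z, obs_var):
    """Update step, assuming a linear observation z = x + N(0, obs_var): each component
    gets a Kalman correction and is reweighted by its evidence N(z; m, v + obs_var)."""
    updated = []
    for w, m, v in belief:
        s = v + obs_var  # predicted observation variance for this component
        evidence = math.exp(-0.5 * (z - m) ** 2 / s) / math.sqrt(2 * math.pi * s)
        gain = v / s
        updated.append((w * evidence, m + gain * (z - m), (1 - gain) * v))
    total = sum(w for w, _, _ in updated)
    return [(w / total, m, v) for w, m, v in updated]

# Usage: a bimodal prior stays bimodal after prediction; the observation selects a mode.
rng = random.Random(0)
belief = [(0.5, -2.0, 0.1), (0.5, 2.0, 0.1)]
belief = propagate(belief, n_samples=50, rng=rng)
belief = observe(belief, z=1.8, obs_var=0.2)
print(sum(w * m for w, m, _ in belief))
```

No component is ever linearized or collapsed into a single Gaussian, which is the property the abstract highlights; the number of components equals the number of samples drawn.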

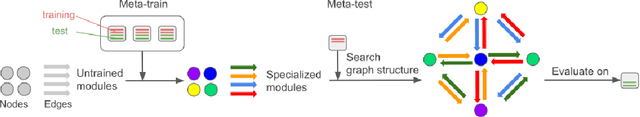

Modular meta-learning in abstract graph networks for combinatorial generalization

Dec 19, 2018

Abstract: Modular meta-learning is a new framework that generalizes to unseen datasets by combining a small set of neural modules in different ways. In this work we propose abstract graph networks: using graphs as abstractions of a system's subparts without a fixed assignment of nodes to system subparts, for which we would need supervision. We combine this idea with modular meta-learning to get a flexible framework with combinatorial generalization to new tasks built in. We then use it to model the pushing of arbitrarily shaped objects from little or no training data.

A Data-Efficient Approach to Precise and Controlled Pushing

Oct 09, 2018

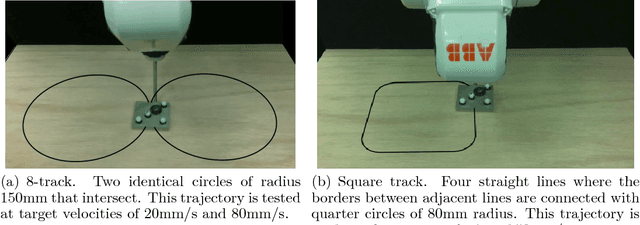

Abstract: Decades of research in control theory have shown that simple controllers, when provided with timely feedback, can control complex systems. Pushing is an example of a complex mechanical system that is difficult to model accurately due to unknown system parameters such as coefficients of friction and pressure distributions. In this paper, we explore the data-complexity required for controlling, rather than modeling, such a system. Results show that a model-based control approach, where the dynamical model is learned from data, is capable of performing complex pushing trajectories with a minimal amount of training data (10 data points). The dynamics of pushing interactions are modeled using a Gaussian process (GP) and are leveraged within a model predictive control approach that linearizes the GP and imposes actuator and task constraints for a planar manipulation task.

* Maria Bauza and Francois R. Hogan contributed equally to this work. 10 pages, 5 figures
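The abstract above models pushing dynamics with a GP and linearizes it inside model predictive control. As a toy illustration only, the sketch below fits a one-dimensional GP with a squared-exponential kernel to 10 points of a made-up function (`sin`, standing in for pushing data) and computes the analytic gradient of the posterior mean, which is the quantity a first-order linearization would use. The kernel, hyperparameters, and the names `TinyGP` and `mean_gradient` are assumptions; the MPC problem and its actuator and task constraints are omitted.

```python
import math

def se_kernel(a, b, length=1.0, signal_var=1.0):
    """Squared-exponential (RBF) kernel on scalars."""
    return signal_var * math.exp(-0.5 * (a - b) ** 2 / length ** 2)

def cholesky(A):
    """Lower-triangular L with A = L L^T, for a symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L

def cholesky_solve(L, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(L)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

class TinyGP:
    """GP regression posterior mean with a fixed SE kernel and Gaussian observation noise."""

    def __init__(self, xs, ys, length=1.0, noise_var=1e-4):
        self.xs, self.length = xs, length
        K = [[se_kernel(a, b, length) + (noise_var if i == j else 0.0)
              for j, b in enumerate(xs)] for i, a in enumerate(xs)]
        self.alpha = cholesky_solve(cholesky(K), ys)  # alpha = (K + noise * I)^-1 y

    def mean(self, x):
        return sum(a * se_kernel(x, xi, self.length) for a, xi in zip(self.alpha, self.xs))

    def mean_gradient(self, x):
        # For the SE kernel, d/dx k(x, xi) = -(x - xi) / length^2 * k(x, xi).
        return sum(a * (-(x - xi) / self.length ** 2) * se_kernel(x, xi, self.length)
                   for a, xi in zip(self.alpha, self.xs))

# Usage: 10 training points, then a local linear model f(x0 + dx) ~ f0 + slope * dx.
xs = [0.5 * i for i in range(10)]
ys = [math.sin(x) for x in xs]
gp = TinyGP(xs, ys)
x0 = 1.3
f0, slope = gp.mean(x0), gp.mean_gradient(x0)
print(f0, slope, f0 + slope * 0.05)
```

Because the GP mean is a sum of smooth kernels, its gradient is available in closed form, so the linearization needed at each MPC step does not require finite differences.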

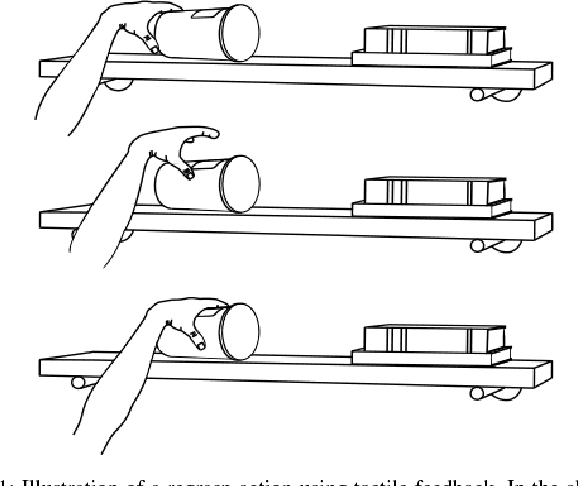

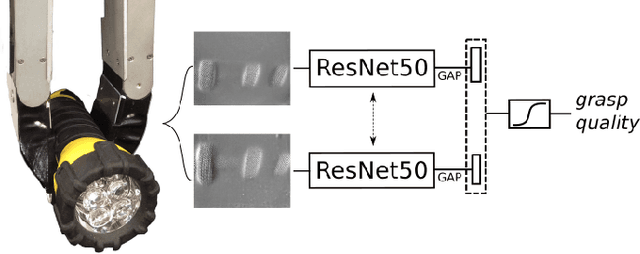

Tactile Regrasp: Grasp Adjustments via Simulated Tactile Transformations

Oct 09, 2018

Abstract: This paper presents a novel regrasp control policy that makes use of tactile sensing to plan local grasp adjustments. Our approach determines regrasp actions by virtually searching for local transformations of tactile measurements that improve the quality of the grasp. First, we construct a tactile-based grasp quality metric using a deep convolutional neural network trained on over 2800 grasps. The quality of each grasp, a continuous value between 0 and 1, is determined experimentally by measuring its resistance to external perturbations. Second, we simulate the tactile imprints associated with robot motions relative to the initial grasp by performing rigid-body transformations of the given tactile measurements. The newly generated tactile imprints are evaluated with the learned grasp quality network, and the regrasp action is chosen to maximize the grasp quality. Results show that the grasp quality network can predict the outcome of grasps with an average accuracy of 85% on known objects and 75% on a cross-validation set of 12 objects. The regrasp control policy improves the success rate of grasp actions by an average relative increase of 70% on a test set of 8 objects.

* Francois R. Hogan and Maria Bauza contributed equally to this work. 8 pages, 7 figures
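The regrasp search in the abstract above can be sketched as a loop over candidate transformations of the current tactile imprint: simulate each transformed imprint, score it, and keep the best. This sketch makes two loud simplifications: only integer pixel translations are searched (the paper uses general rigid-body transformations), and `toy_quality` is a hypothetical hand-written score in [0, 1] standing in for the learned CNN grasp-quality network. All function names are illustrative.

```python
import math

def shift(img, dx, dy):
    """Translate a 2-D tactile image by (dx, dy) pixels; uncovered pixels become 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            sr, sc = r - dy, c - dx
            if 0 <= sr < h and 0 <= sc < w:
                out[r][c] = img[sr][sc]
    return out

def toy_quality(img):
    """Hypothetical stand-in for the learned grasp-quality network: 1.0 when the contact
    centroid sits at the sensor center, decreasing linearly with distance; 0.0 if no contact."""
    h, w = len(img), len(img[0])
    total = sum(sum(row) for row in img)
    if total == 0:
        return 0.0
    r_c = sum(r * v for r, row in enumerate(img) for v in row) / total
    c_c = sum(c * v for row in img for c, v in enumerate(row)) / total
    cr, cc = (h - 1) / 2, (w - 1) / 2
    return 1.0 - math.hypot(r_c - cr, c_c - cc) / math.hypot(cr, cc)

def choose_regrasp(img, max_shift):
    """Score every translated imprint within +/- max_shift pixels; return (dx, dy, quality)."""
    best = (0, 0, toy_quality(img))
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            q = toy_quality(shift(img, dx, dy))
            if q > best[2]:
                best = (dx, dy, q)
    return best

# Usage: an off-center contact patch on a 7x7 sensor image.
img = [[0.0] * 7 for _ in range(7)]
img[1][1] = 1.0
print(choose_regrasp(img, max_shift=3))
```

The search itself is cheap because no robot motion happens until the best simulated imprint is chosen; in the paper, the cost is dominated by evaluating the quality network on each candidate.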
