Magnus Egerstedt

Georgia Institute of Technology, USA

Disentangled Control of Multi-Agent Systems

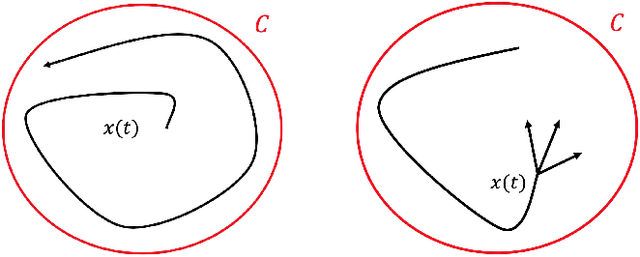

Nov 08, 2025

Abstract: This paper develops a general framework for multi-agent control synthesis, which applies to a wide range of problems with convergence guarantees, regardless of the complexity of the underlying graph topology and the explicit time dependence of the objective function. The proposed framework systematically addresses a particularly challenging problem in multi-agent systems, i.e., decentralization of entangled dynamics among different agents, and it naturally supports multi-objective robotics and real-time implementations. To demonstrate its generality and effectiveness, the framework is implemented across three experiments, namely time-varying leader-follower formation control, decentralized coverage control for time-varying density functions without any approximations, which is a long-standing open problem, and safe formation navigation in dense environments.

Online Learning and Coverage of Unknown Fields Using Random-Feature Gaussian Processes

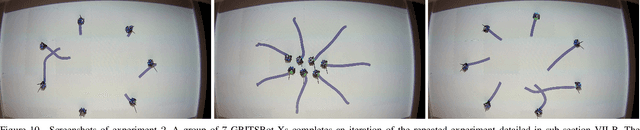

Sep 09, 2025

Abstract: This paper proposes a framework for multi-robot systems to perform simultaneous learning and coverage of a domain of interest characterized by an unknown and potentially time-varying density function. To overcome the limitations of Gaussian Process (GP) regression, we employ Random Feature GP (RFGP) and its online variant (O-RFGP), which enables online and incremental inference. By integrating these with Voronoi-based coverage control and an Upper Confidence Bound (UCB) sampling strategy, a team of robots can adaptively focus on important regions while refining the learned spatial field for efficient coverage. Under mild assumptions, we provide theoretical guarantees and evaluate the framework through simulations in time-invariant scenarios. Furthermore, its effectiveness in time-varying settings is demonstrated through additional simulations and a physical experiment.
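The ingredients named in this abstract (random features, online updates, UCB sampling) can be illustrated with a generic sketch. The code below is not the paper's O-RFGP: it uses random Fourier features for an RBF kernel with a recursive Bayesian linear-regression update, and every name and default (`OnlineRandomFeatureGP`, `lengthscale`, `noise`, `beta`) is an assumption made for illustration.

```python
import numpy as np

class OnlineRandomFeatureGP:
    """Illustrative random-Fourier-feature regressor with rank-one online updates."""

    def __init__(self, dim, n_features=100, lengthscale=0.2, noise=0.1, seed=0):
        rng = np.random.default_rng(seed)
        # Random frequencies and phases approximating an RBF kernel (assumed kernel choice).
        self.W = rng.normal(scale=1.0 / lengthscale, size=(n_features, dim))
        self.b = rng.uniform(0.0, 2.0 * np.pi, size=n_features)
        self.D = n_features
        self.noise2 = noise ** 2
        # Gaussian posterior over feature weights, starting from the prior N(0, I).
        self.mean = np.zeros(n_features)
        self.P = np.eye(n_features)

    def features(self, x):
        """Map points of shape (n, dim) to random features of shape (n, D)."""
        x = np.atleast_2d(x)
        return np.sqrt(2.0 / self.D) * np.cos(x @ self.W.T + self.b)

    def update(self, x, y):
        """Incorporate one observation (x, y) without revisiting past data."""
        phi = self.features(x)[0]
        P_phi = self.P @ phi
        gain = P_phi / (self.noise2 + phi @ P_phi)
        self.mean = self.mean + gain * (y - phi @ self.mean)
        self.P = self.P - np.outer(gain, P_phi)

    def predict(self, x):
        """Return the posterior mean and standard deviation at points x of shape (n, dim)."""
        Phi = self.features(x)
        mu = Phi @ self.mean
        var = np.einsum("ij,jk,ik->i", Phi, self.P, Phi)
        return mu, np.sqrt(np.maximum(var, 0.0))

    def ucb(self, x, beta=2.0):
        """Upper confidence bound: mean plus beta standard deviations."""
        mu, sd = self.predict(x)
        return mu + beta * sd
```

In a coverage loop, a robot could evaluate `ucb` over candidate locations and sample where it is largest, so that both high estimated density and high uncertainty attract measurements. The cost of each update depends on the number of features rather than on the number of past observations, which is the usual motivation for a random-feature approximation.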

RaccoonBot: An Autonomous Wire-Traversing Solar-Tracking Robot for Persistent Environmental Monitoring

Jan 24, 2025

Abstract: Environmental monitoring is used to characterize the health of, and relationships between, organisms and their environments. In forest ecosystems, robots can serve as platforms to acquire such data, even in hard-to-reach places where wire-traversing platforms are particularly promising due to their efficient displacement. This paper presents the RaccoonBot, a novel autonomous wire-traversing robot for persistent environmental monitoring, featuring a fail-safe mechanical design with a self-locking mechanism in case of electrical shortage. The robot also features energy-aware mobility through a novel solar-tracking algorithm that lets the robot find a position on the wire with direct exposure to sunlight, increasing the energy harvested. Experimental results validate the electro-mechanical features of the RaccoonBot, showing that it is able to handle wire perturbations and different inclinations and to achieve energy autonomy.

Range Limited Coverage Control using Air-Ground Multi-Robot Teams

Jun 12, 2023

Abstract: In this paper, we investigate how heterogeneous multi-robot systems with different sensing capabilities can observe a domain with an a priori unknown density function. Common coverage control techniques are targeted towards homogeneous teams of robots and do not consider what happens when the sensing capabilities of the robots are vastly different. This work proposes an extension to Lloyd's algorithm that fuses coverage information from heterogeneous robots with differing sensing capabilities to effectively observe a domain. Namely, we study a bimodal team of robots consisting of aerial and ground agents. In our problem formulation, we use aerial robots with coarse domain sensors to approximate the number of ground robots needed within their sensing region to effectively cover it. This information is relayed to ground robots, which perform an extension to Lloyd's algorithm that balances a locally focused coverage controller with a globally focused distribution controller. The stability of the Lloyd's algorithm extension is proven and its performance is evaluated through simulation and experiments using the Robotarium, a remotely accessible multi-robot testbed.
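For readers unfamiliar with the baseline that this work extends, here is a minimal sketch of the standard Lloyd's algorithm on a sampled domain. It does not include the paper's heterogeneous air-ground extension; the grid resolution, the Gaussian density, and all function names are assumptions chosen for illustration.

```python
import numpy as np

def _squared_distances(positions, samples):
    """Squared distance from every sample point to every robot, shape (n_samples, n_robots)."""
    return ((samples[:, None, :] - positions[None, :, :]) ** 2).sum(axis=2)

def lloyd_step(positions, samples, density):
    """Move each robot to the density-weighted centroid of its (sampled) Voronoi cell."""
    owner = _squared_distances(positions, samples).argmin(axis=1)
    weights = density(samples)
    new_positions = positions.copy()
    for i in range(len(positions)):
        mask = owner == i
        mass = weights[mask].sum()
        if mass > 0.0:  # a robot with an empty or zero-mass cell stays where it is
            new_positions[i] = (weights[mask, None] * samples[mask]).sum(axis=0) / mass
    return new_positions

def coverage_cost(positions, samples, density):
    """Density-weighted mean squared distance from each sample to its nearest robot."""
    return float((density(samples) * _squared_distances(positions, samples).min(axis=1)).mean())

def run_demo(n_robots=5, n_iters=30, seed=0):
    """Run Lloyd's algorithm on the unit square with a hypothetical Gaussian density."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 40)
    samples = np.stack(np.meshgrid(grid, grid), axis=-1).reshape(-1, 2)
    peak = np.array([0.7, 0.3])  # assumed location of the density peak
    density = lambda q: np.exp(-20.0 * ((q - peak) ** 2).sum(axis=1))
    positions = rng.random((n_robots, 2))
    costs = [coverage_cost(positions, samples, density)]
    for _ in range(n_iters):
        positions = lloyd_step(positions, samples, density)
        costs.append(coverage_cost(positions, samples, density))
    return positions, costs
```

Calling `run_demo()` returns the final robot positions and the cost history. On a fixed sample set, each Lloyd step cannot increase the cost, which is the property that convergence arguments for this family of controllers build on.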

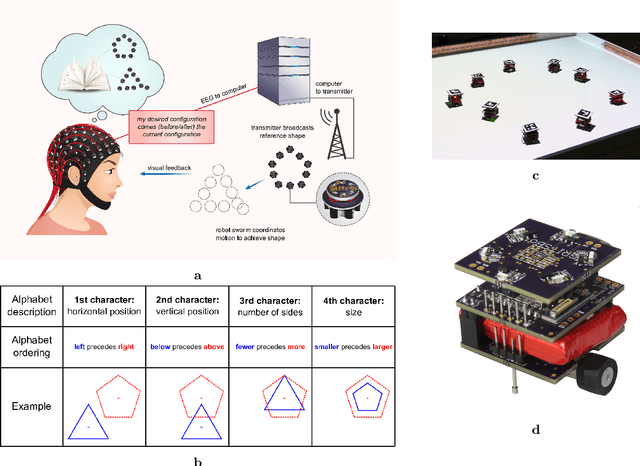

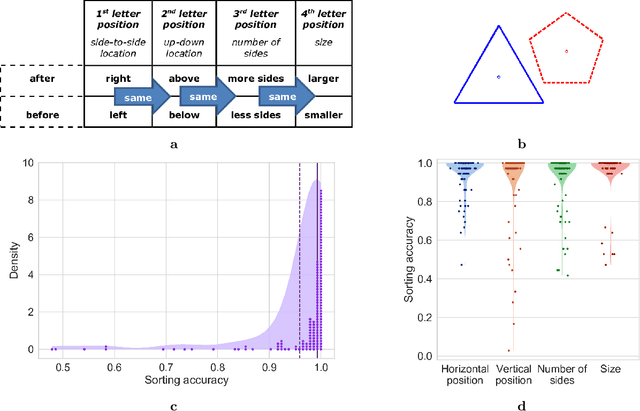

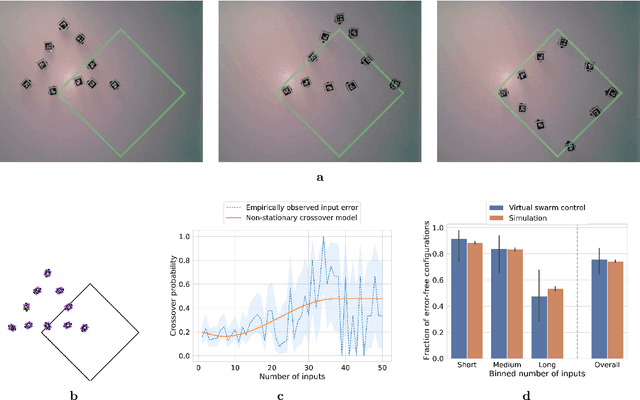

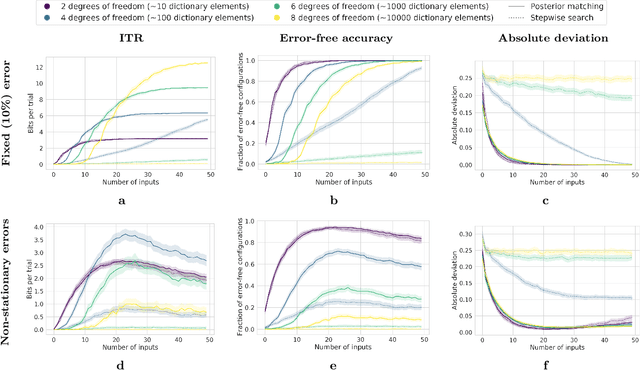

A Low-complexity Brain-computer Interface for High-complexity Robot Swarm Control

May 27, 2022

Abstract: A brain-computer interface (BCI) is a system that allows a human operator to use only mental commands in controlling end effectors that interact with the world around them. Such a system consists of a measurement device to record the human user's brain activity, which is then processed into commands that drive a system end effector. BCIs involve either invasive measurements, which allow for high-complexity control but are generally infeasible, or noninvasive measurements, which offer lower quality signals but are more practical to use. In general, BCI systems have not been developed that efficiently, robustly, and scalably perform high-complexity control while retaining the practicality of noninvasive measurements. Here we leverage recent results from feedback information theory to fill this gap by modeling BCIs as a communications system and deploying a human-implementable interaction algorithm for noninvasive control of a high-complexity robot swarm. We construct a scalable dictionary of robotic behaviors that can be searched simply and efficiently by a BCI user, as we demonstrate through a large-scale user study testing the feasibility of our interaction algorithm, a user test of the full BCI system on (virtual and real) robot swarms, and simulations that verify our results against theoretical models. Our results provide a proof of concept for how a large class of high-complexity effectors (even beyond robotics) can be effectively controlled by a BCI system with low-complexity and noisy inputs.

Safe Model-Based Reinforcement Learning Using Robust Control Barrier Functions

Oct 11, 2021

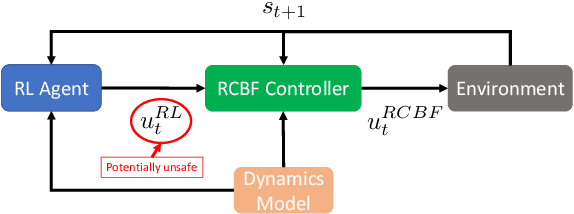

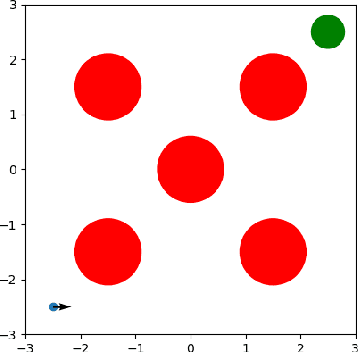

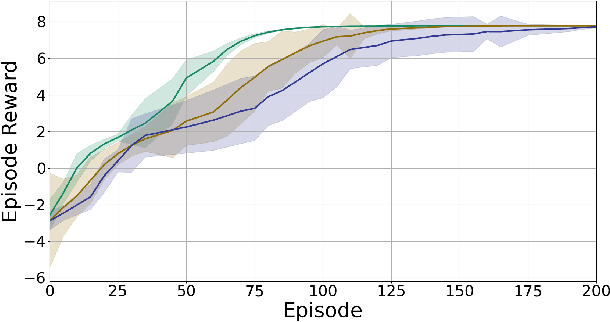

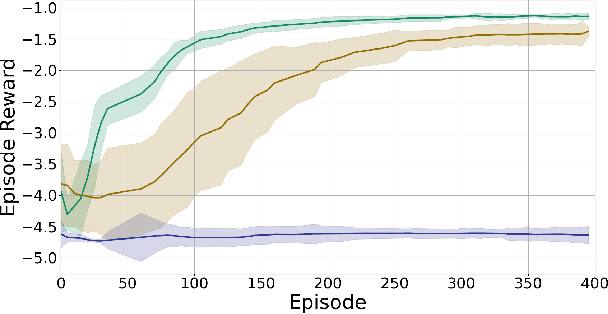

Abstract: Reinforcement Learning (RL) is effective in many scenarios. However, it typically requires the exploration of a sufficiently large number of state-action pairs, some of which may be unsafe. Consequently, its application to safety-critical systems remains a challenge. Towards this end, an increasingly common approach to address safety involves the addition of a safety layer that projects the RL actions onto a safe set of actions. In turn, a challenge for such frameworks is how to effectively couple RL with the safety layer to improve the learning performance. In the context of leveraging control barrier functions for safe RL training, prior work focuses on a restricted class of barrier functions and utilizes an auxiliary neural net to account for the effects of the safety layer, which inherently results in an approximation. In this paper, we frame safety as a differentiable robust-control-barrier-function layer in a model-based RL framework. As such, this approach both ensures safety and effectively guides exploration during training, resulting in increased sample efficiency as demonstrated in the experiments.
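The idea of a safety layer that projects an action onto a safe set can be sketched for the simplest case. The example below assumes a single-integrator robot (velocity is the control input), one circular obstacle, and a barrier function h(x) = ||x - x_obs||^2 - r^2; with a single affine constraint, the projection has a closed form. It is neither robust nor differentiable in the paper's sense, and the names `cbf_filter` and `simulate` are hypothetical.

```python
import numpy as np

def cbf_filter(u_nom, x, x_obs, radius, alpha=1.0):
    """Return the action closest to u_nom that satisfies grad_h(x) . u >= -alpha * h(x)."""
    diff = x - x_obs
    h = diff @ diff - radius ** 2      # h >= 0 means the robot is outside the obstacle
    a = 2.0 * diff                     # gradient of h with respect to x
    b = -alpha * h                     # constraint reads a . u >= b
    violation = b - a @ u_nom
    if violation <= 0.0:
        return u_nom                   # nominal action is already safe
    # Euclidean projection onto the half-space {u : a . u >= b}.
    return u_nom + (violation / max(a @ a, 1e-12)) * a

def simulate(x0, goal, x_obs, radius, dt=0.05, steps=400):
    """Euler rollout in which a go-to-goal action stands in for the RL policy."""
    x = np.array(x0, dtype=float)
    min_h = np.inf
    for _ in range(steps):
        u_nom = goal - x               # placeholder for an action proposed by a learner
        x = x + dt * cbf_filter(u_nom, x, x_obs, radius)
        min_h = min(min_h, float((x - x_obs) @ (x - x_obs) - radius ** 2))
    return x, min_h
```

Because the filter changes the action only when the constraint would be violated, the learner's proposals pass through untouched in the safe interior. For several constraints or input bounds, the closed form would be replaced by a small quadratic program.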

Optimal Stochastic Evasive Maneuvers Using the Schrodinger's Equation

Oct 11, 2021

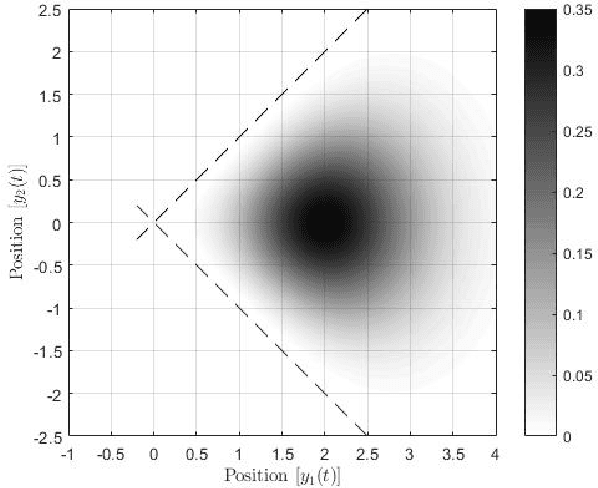

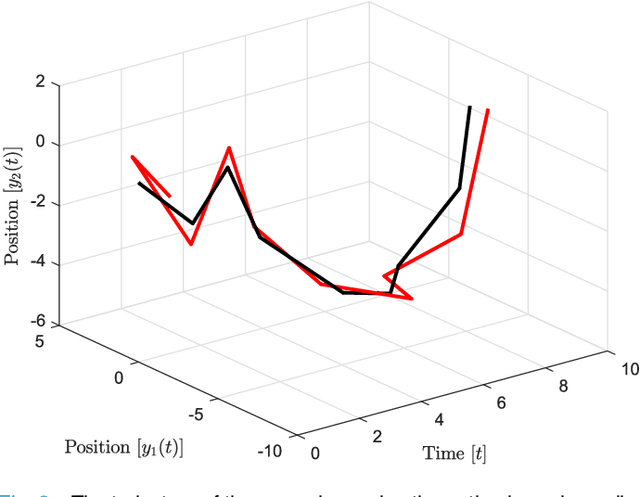

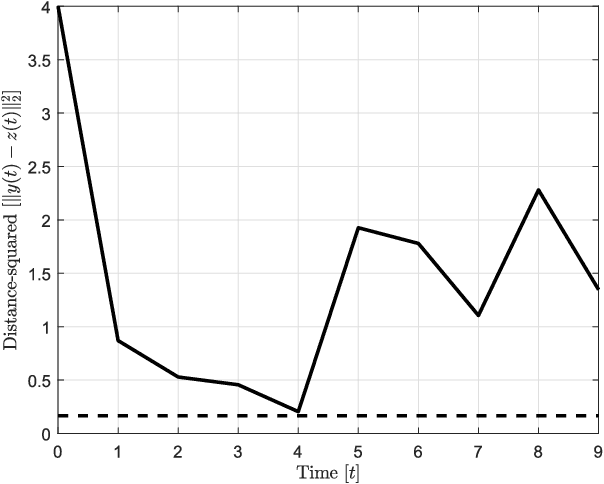

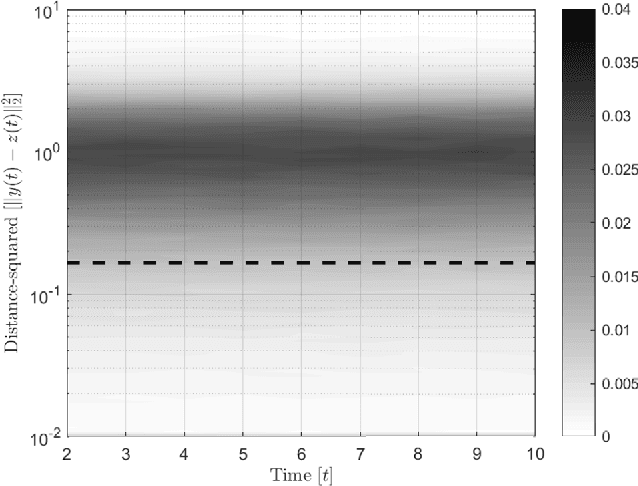

Abstract: In this paper, prey with stochastic evasion policies are considered. The stochasticity adds unpredictable changes to the prey's path to avoid a predator's attacks. The prey's cost function is composed of two terms balancing unpredictability (using stochasticity to make it difficult for the predator to forecast the prey's future positions) and energy consumption (the least amount of energy required to perform a maneuver). The optimal probability density functions of the prey's actions, which trade off unpredictability against energy consumption, are shown to be characterized by the stationary Schrödinger equation.

Model Free Barrier Functions via Implicit Evading Maneuvers

Jul 27, 2021

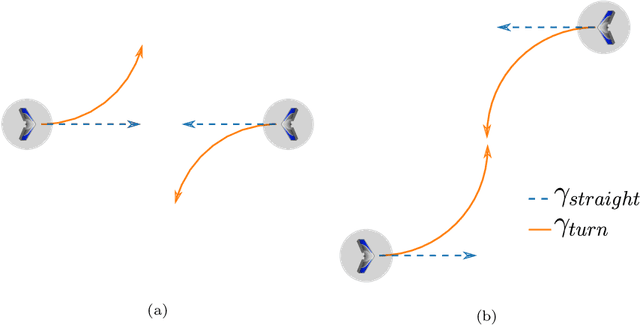

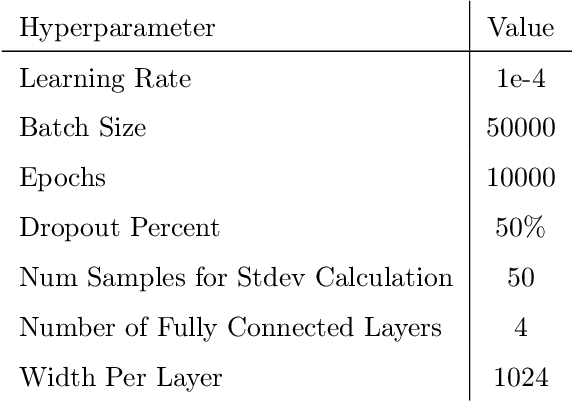

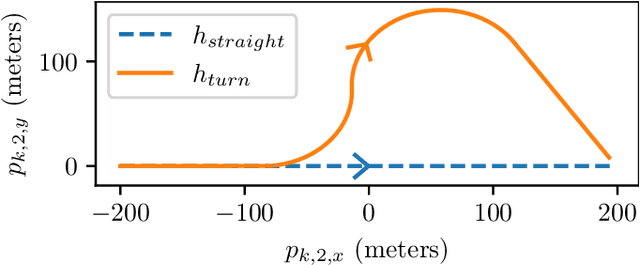

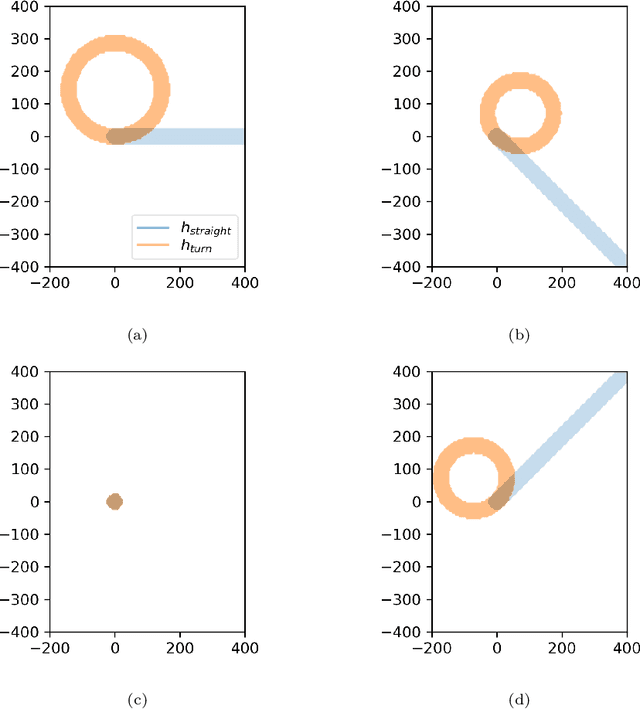

Abstract: This paper demonstrates that in some cases the safety override arising from the use of a barrier function can be needlessly restrictive. In particular, we examine the case of fixed-wing collision avoidance and show that when using a barrier function, there are cases where two fixed-wing aircraft can come closer to colliding than if there were no barrier function at all. In addition, we construct cases where the barrier function labels the system as unsafe even when the vehicles start arbitrarily far apart. In other words, the barrier function ensures safety but with unnecessary costs to performance. We therefore introduce model-free barrier functions, which take a data-driven approach to creating a barrier function. We demonstrate the effectiveness of model-free barrier functions in a collision avoidance simulation of two fixed-wing aircraft.

A Resilient and Energy-Aware Task Allocation Framework for Heterogeneous Multi-Robot Systems

May 12, 2021

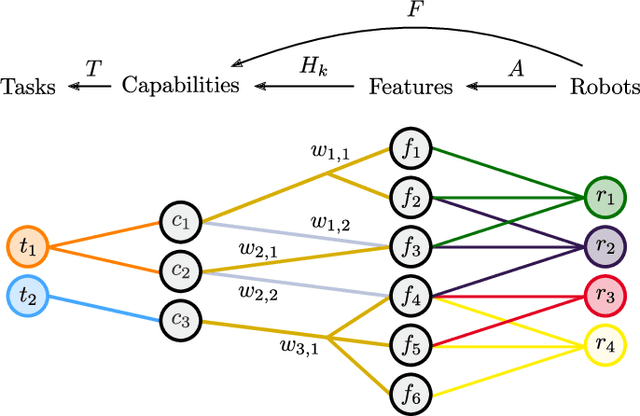

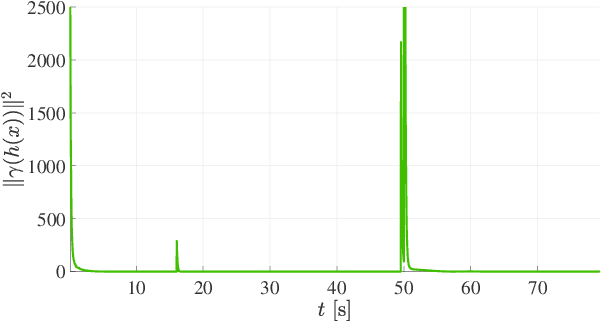

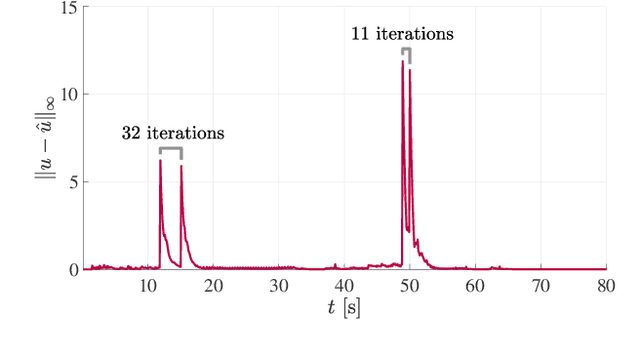

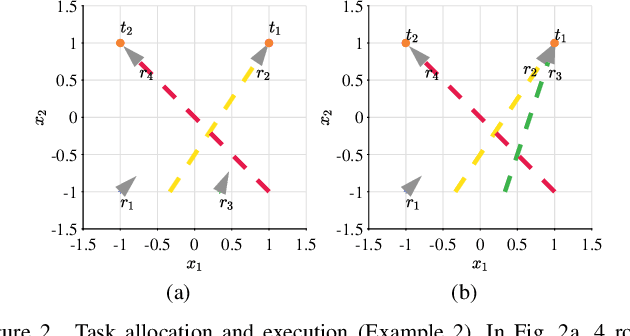

Abstract: In the context of heterogeneous multi-robot teams deployed for executing multiple tasks, this paper develops an energy-aware framework for allocating tasks to robots in an online fashion. With a primary focus on long-duration autonomy applications, we opt for a survivability-focused approach. Towards this end, the task prioritization and execution -- through which the allocation of tasks to robots is effectively realized -- are encoded as constraints within an optimization problem aimed at minimizing the energy consumed by the robots at each point in time. In this context, an allocation is interpreted as a prioritization of a task over all others by each of the robots. Furthermore, we present a novel framework to represent the heterogeneous capabilities of the robots, by distinguishing between the features available on the robots, and the capabilities enabled by these features. By embedding these descriptions within the optimization problem, we make the framework resilient to situations where environmental conditions make certain features unsuitable to support a capability and when component failures on the robots occur. We demonstrate the efficacy and resilience of the proposed approach in a variety of use-case scenarios, consisting of simulations and real robot experiments.

Data-Driven Robust Barrier Functions for Safe, Long-Term Operation

Apr 15, 2021

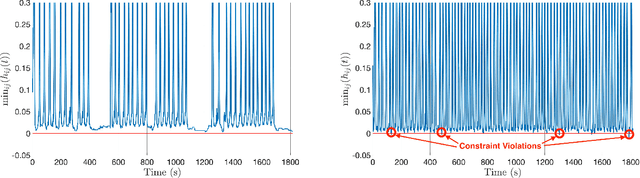

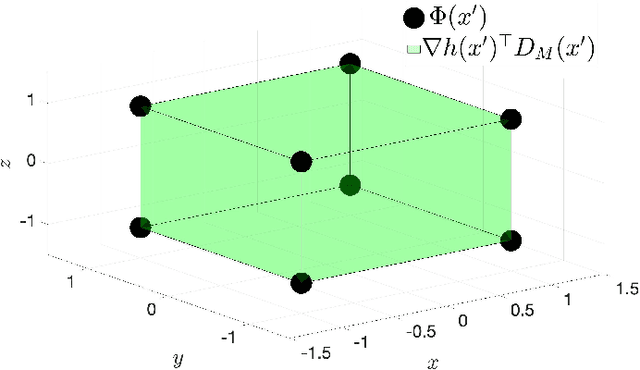

Abstract: Applications that require multi-robot systems to operate independently for extended periods of time in unknown or unstructured environments face a broad set of challenges, such as hardware degradation, changing weather patterns, or unfamiliar terrain. To operate effectively under these changing conditions, algorithms developed for long-term autonomy applications require a stronger focus on robustness. Consequently, this work considers the ability to satisfy the operation-critical constraints of a disturbed system in a modular fashion, which means compatibility with different system objectives and disturbance representations. Toward this end, this paper introduces a controller-synthesis approach to constraint satisfaction for disturbed control-affine dynamical systems by utilizing Control Barrier Functions (CBFs). The aforementioned framework is constructed by modeling the disturbance as a union of convex hulls and leveraging previous work on CBFs for differential inclusions. This method of disturbance modeling grants compatibility with different disturbance-estimation methods. For example, this work demonstrates how a disturbance learned via a Gaussian process may be utilized in the proposed framework. These estimated disturbances are incorporated into the proposed controller-synthesis framework, which is then tested on a fleet of robots in different scenarios.
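One reason a union-of-convex-hulls disturbance model is convenient can be shown in a few lines: the barrier condition is affine in the disturbance, so its worst case over each hull is attained at a vertex. The sketch below assumes a single-integrator robot with an additive disturbance, a circular keep-out region, and hulls given as arrays of vertices. It illustrates that vertex argument only and is not the paper's synthesis procedure; all names are hypothetical.

```python
import numpy as np

def worst_case_drift(grad_h, hulls):
    """Smallest value of grad_h . d over a union of convex hulls, found by checking vertices."""
    return min(float(np.min(vertices @ grad_h)) for vertices in hulls)

def robust_cbf_filter(u_nom, x, x_obs, radius, hulls, alpha=1.0):
    """Minimally modify u_nom so that grad_h . (u + d) >= -alpha * h for every admissible d."""
    diff = x - x_obs
    h = diff @ diff - radius ** 2          # barrier: nonnegative outside the keep-out disk
    a = 2.0 * diff                         # gradient of h
    # Tighten the nominal CBF bound by the worst-case effect of the disturbance.
    b = -alpha * h - worst_case_drift(a, hulls)
    violation = b - a @ u_nom
    if violation <= 0.0:
        return u_nom
    # Closed-form projection onto the half-space {u : a . u >= b}.
    return u_nom + (violation / max(a @ a, 1e-12)) * a
```

Each entry of `hulls` is an array with one vertex per row. A learned disturbance estimate, for example a confidence region from a Gaussian process, would first have to be over-approximated by such vertex sets before it could be passed to a filter of this kind.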
