Lin Liu

University of South Australia

Time and Frequency Synergy for Source-Free Time-Series Domain Adaptations

Oct 23, 2024

Abstract:The issue of source-free time-series domain adaptations still gains scarce research attentions. On the other hand, existing approaches rely solely on time-domain features ignoring frequency components providing complementary information. This paper proposes Time Frequency Domain Adaptation (TFDA), a method to cope with the source-free time-series domain adaptation problems. TFDA is developed with a dual branch network structure fully utilizing both time and frequency features in delivering final predictions. It induces pseudo-labels based on a neighborhood concept where predictions of a sample group are aggregated to generate reliable pseudo labels. The concept of contrastive learning is carried out in both time and frequency domains with pseudo label information and a negative pair exclusion strategy to make valid neighborhood assumptions. In addition, the time-frequency consistency technique is proposed using the self-distillation strategy while the uncertainty reduction strategy is implemented to alleviate uncertainties due to the domain shift problem. Last but not least, the curriculum learning strategy is integrated to combat noisy pseudo labels. Our experiments demonstrate the advantage of our approach over prior arts with noticeable margins in benchmark problems.

Linking Model Intervention to Causal Interpretation in Model Explanation

Oct 21, 2024

Abstract:Intervention intuition is often used in model explanation where the intervention effect of a feature on the outcome is quantified by the difference of a model prediction when the feature value is changed from the current value to the baseline value. Such a model intervention effect of a feature is inherently association. In this paper, we will study the conditions when an intuitive model intervention effect has a causal interpretation, i.e., when it indicates whether a feature is a direct cause of the outcome. This work links the model intervention effect to the causal interpretation of a model. Such an interpretation capability is important since it indicates whether a machine learning model is trustworthy to domain experts. The conditions also reveal the limitations of using a model intervention effect for causal interpretation in an environment with unobserved features. Experiments on semi-synthetic datasets have been conducted to validate theorems and show the potential for using the model intervention effect for model interpretation.

Multi-Cause Deconfounding for Recommender Systems with Latent Confounders

Oct 16, 2024

Abstract:In recommender systems, various latent confounding factors (e.g., user social environment and item public attractiveness) can affect user behavior, item exposure, and feedback in distinct ways. These factors may directly or indirectly impact user feedback and are often shared across items or users, making them multi-cause latent confounders. However, existing methods typically fail to account for latent confounders between users and their feedback, as well as those between items and user feedback simultaneously. To address the problem of multi-cause latent confounders, we propose a multi-cause deconfounding method for recommender systems with latent confounders (MCDCF). MCDCF leverages multi-cause causal effect estimation to learn substitutes for latent confounders associated with both users and items, using user behaviour data. Specifically, MCDCF treats the multiple items that users interact with and the multiple users that interact with items as treatment variables, enabling it to learn substitutes for the latent confounders that influence the estimation of causality between users and their feedback, as well as between items and user feedback. Additionally, we theoretically demonstrate the soundness of our MCDCF method. Extensive experiments on three real-world datasets demonstrate that our MCDCF method effectively recovers latent confounders related to users and items, reducing bias and thereby improving recommendation accuracy.

Mitigating Dual Latent Confounding Biases in Recommender Systems

Oct 16, 2024

Abstract:Recommender systems are extensively utilised across various areas to predict user preferences for personalised experiences and enhanced user engagement and satisfaction. Traditional recommender systems, however, are complicated by confounding bias, particularly in the presence of latent confounders that affect both item exposure and user feedback. Existing debiasing methods often fail to capture the complex interactions caused by latent confounders in interaction data, especially when dual latent confounders affect both the user and item sides. To address this, we propose a novel debiasing method that jointly integrates the Instrumental Variables (IV) approach and identifiable Variational Auto-Encoder (iVAE) for Debiased representation learning in Recommendation systems, referred to as IViDR. Specifically, IViDR leverages the embeddings of user features as IVs to address confounding bias caused by latent confounders between items and user feedback, and reconstructs the embedding of items to obtain debiased interaction data. Moreover, IViDR employs an Identifiable Variational Auto-Encoder (iVAE) to infer identifiable representations of latent confounders between item exposure and user feedback from both the original and debiased interaction data. Additionally, we provide theoretical analyses of the soundness of using IV and the identifiability of the latent representations. Extensive experiments on both synthetic and real-world datasets demonstrate that IViDR outperforms state-of-the-art models in reducing bias and providing reliable recommendations.

Archilles' Heel in Semi-open LLMs: Hiding Bottom against Recovery Attacks

Oct 15, 2024

Abstract:Closed-source large language models deliver strong performance but have limited downstream customizability. Semi-open models, combining both closed-source and public layers, were introduced to improve customizability. However, parameters in the closed-source layers are found vulnerable to recovery attacks. In this paper, we explore the design of semi-open models with fewer closed-source layers, aiming to increase customizability while ensuring resilience to recovery attacks. We analyze the contribution of closed-source layer to the overall resilience and theoretically prove that in a deep transformer-based model, there exists a transition layer such that even small recovery errors in layers before this layer can lead to recovery failure. Building on this, we propose \textbf{SCARA}, a novel approach that keeps only a few bottom layers as closed-source. SCARA employs a fine-tuning-free metric to estimate the maximum number of layers that can be publicly accessible for customization. We apply it to five models (1.3B to 70B parameters) to construct semi-open models, validating their customizability on six downstream tasks and assessing their resilience against various recovery attacks on sixteen benchmarks. We compare SCARA to baselines and observe that it generally improves downstream customization performance and offers similar resilience with over \textbf{10} times fewer closed-source parameters. We empirically investigate the existence of transition layers, analyze the effectiveness of our scheme and finally discuss its limitations.

Towards Fair Graph Representation Learning in Social Networks

Oct 15, 2024

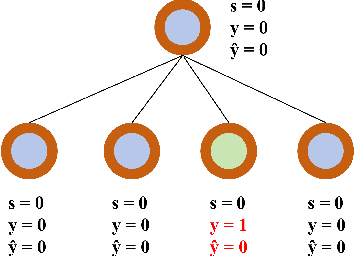

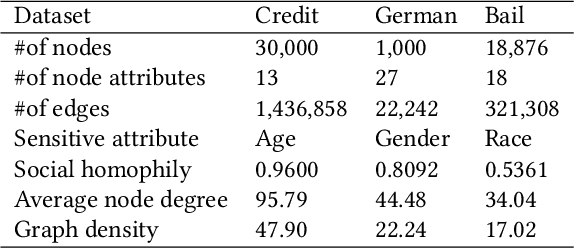

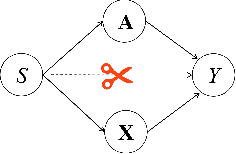

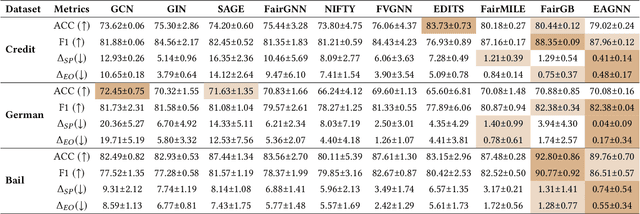

Abstract:With the widespread use of Graph Neural Networks (GNNs) for representation learning from network data, the fairness of GNN models has raised great attention lately. Fair GNNs aim to ensure that node representations can be accurately classified, but not easily associated with a specific group. Existing advanced approaches essentially enhance the generalisation of node representation in combination with data augmentation strategy, and do not directly impose constraints on the fairness of GNNs. In this work, we identify that a fundamental reason for the unfairness of GNNs in social network learning is the phenomenon of social homophily, i.e., users in the same group are more inclined to congregate. The message-passing mechanism of GNNs can cause users in the same group to have similar representations due to social homophily, leading model predictions to establish spurious correlations with sensitive attributes. Inspired by this reason, we propose a method called Equity-Aware GNN (EAGNN) towards fair graph representation learning. Specifically, to ensure that model predictions are independent of sensitive attributes while maintaining prediction performance, we introduce constraints for fair representation learning based on three principles: sufficiency, independence, and separation. We theoretically demonstrate that our EAGNN method can effectively achieve group fairness. Extensive experiments on three datasets with varying levels of social homophily illustrate that our EAGNN method achieves the state-of-the-art performance across two fairness metrics and offers competitive effectiveness.

Mitigating Propensity Bias of Large Language Models for Recommender Systems

Sep 30, 2024

Abstract:The rapid development of Large Language Models (LLMs) creates new opportunities for recommender systems, especially by exploiting the side information (e.g., descriptions and analyses of items) generated by these models. However, aligning this side information with collaborative information from historical interactions poses significant challenges. The inherent biases within LLMs can skew recommendations, resulting in distorted and potentially unfair user experiences. On the other hand, propensity bias causes side information to be aligned in such a way that it often tends to represent all inputs in a low-dimensional subspace, leading to a phenomenon known as dimensional collapse, which severely restricts the recommender system's ability to capture user preferences and behaviours. To address these issues, we introduce a novel framework named Counterfactual LLM Recommendation (CLLMR). Specifically, we propose a spectrum-based side information encoder that implicitly embeds structural information from historical interactions into the side information representation, thereby circumventing the risk of dimension collapse. Furthermore, our CLLMR approach explores the causal relationships inherent in LLM-based recommender systems. By leveraging counterfactual inference, we counteract the biases introduced by LLMs. Extensive experiments demonstrate that our CLLMR approach consistently enhances the performance of various recommender models.

TSI: A Multi-View Representation Learning Approach for Time Series Forecasting

Sep 30, 2024

Abstract:As the growing demand for long sequence time-series forecasting in real-world applications, such as electricity consumption planning, the significance of time series forecasting becomes increasingly crucial across various domains. This is highlighted by recent advancements in representation learning within the field. This study introduces a novel multi-view approach for time series forecasting that innovatively integrates trend and seasonal representations with an Independent Component Analysis (ICA)-based representation. Recognizing the limitations of existing methods in representing complex and high-dimensional time series data, this research addresses the challenge by combining TS (trend and seasonality) and ICA (independent components) perspectives. This approach offers a holistic understanding of time series data, going beyond traditional models that often miss nuanced, nonlinear relationships. The efficacy of TSI model is demonstrated through comprehensive testing on various benchmark datasets, where it shows superior performance over current state-of-the-art models, particularly in multivariate forecasting. This method not only enhances the accuracy of forecasting but also contributes significantly to the field by providing a more in-depth understanding of time series data. The research which uses ICA for a view lays the groundwork for further exploration and methodological advancements in time series forecasting, opening new avenues for research and practical applications.

CWT-Net: Super-resolution of Histopathology Images Using a Cross-scale Wavelet-based Transformer

Sep 11, 2024Abstract:Super-resolution (SR) aims to enhance the quality of low-resolution images and has been widely applied in medical imaging. We found that the design principles of most existing methods are influenced by SR tasks based on real-world images and do not take into account the significance of the multi-level structure in pathological images, even if they can achieve respectable objective metric evaluations. In this work, we delve into two super-resolution working paradigms and propose a novel network called CWT-Net, which leverages cross-scale image wavelet transform and Transformer architecture. Our network consists of two branches: one dedicated to learning super-resolution and the other to high-frequency wavelet features. To generate high-resolution histopathology images, the Transformer module shares and fuses features from both branches at various stages. Notably, we have designed a specialized wavelet reconstruction module to effectively enhance the wavelet domain features and enable the network to operate in different modes, allowing for the introduction of additional relevant information from cross-scale images. Our experimental results demonstrate that our model significantly outperforms state-of-the-art methods in both performance and visualization evaluations and can substantially boost the accuracy of image diagnostic networks.

Few-shot Multi-Task Learning of Linear Invariant Features with Meta Subspace Pursuit

Sep 04, 2024

Abstract:Data scarcity poses a serious threat to modern machine learning and artificial intelligence, as their practical success typically relies on the availability of big datasets. One effective strategy to mitigate the issue of insufficient data is to first harness information from other data sources possessing certain similarities in the study design stage, and then employ the multi-task or meta learning framework in the analysis stage. In this paper, we focus on multi-task (or multi-source) linear models whose coefficients across tasks share an invariant low-rank component, a popular structural assumption considered in the recent multi-task or meta learning literature. Under this assumption, we propose a new algorithm, called Meta Subspace Pursuit (abbreviated as Meta-SP), that provably learns this invariant subspace shared by different tasks. Under this stylized setup for multi-task or meta learning, we establish both the algorithmic and statistical guarantees of the proposed method. Extensive numerical experiments are conducted, comparing Meta-SP against several competing methods, including popular, off-the-shelf model-agnostic meta learning algorithms such as ANIL. These experiments demonstrate that Meta-SP achieves superior performance over the competing methods in various aspects.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge