Leszek Rutkowski

Distillation Traps and Guards: A Calibration Knob for LLM Distillability

Apr 21, 2026

Abstract:Knowledge distillation (KD) transfers capabilities from large language models (LLMs) to smaller students, yet it can fail unpredictably and also underpins model leakage risks. Our analysis revealed several distillation traps: tail noise, off-policy instability, and, most fundamentally, the teacher-student gap, that distort training signals. These traps manifest as overconfident hallucinations, self-correction collapse, and local decoding degradation, causing distillation to fail. Motivated by these findings, we propose a post-hoc calibration method that, to the best of our knowledge, for the first time enables control over a teacher's distillability via reinforcement fine-tuning (RFT). Our objective combines task utility, KL anchor, and across-tokenizer calibration reward. This makes distillability a practical safety lever for foundation models, connecting robust teacher-student transfer with deployment-aware model protection. Experiments across math, knowledge QA, and instruction-following tasks show that students distilled from distillable calibrated teachers outperform SFT and KD baselines, while undistillable calibrated teachers retain their task performance but cause distilled students to collapse, offering a practical knob for both better KD and model IP protection.
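For readers unfamiliar with the signal that KD trains on, the following is a minimal sketch of the standard temperature-softened KL distillation loss between a teacher and a student over one token's logits. It is a generic textbook formulation, not the calibration objective proposed in the abstract; the function names and the default temperature are illustrative assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_kl_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    The temperature value is an assumption for illustration only.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

# The loss is zero when the student matches the teacher and positive otherwise.
same = kd_kl_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
different = kd_kl_loss([1.0, 2.0, 3.0], [3.0, 2.0, 1.0])
```

A large teacher-student gap shows up here as a large KL value that the student may be unable to reduce, which is one intuition behind the traps the abstract describes.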

SVGS: Single-View to 3D Object Editing via Gaussian Splatting

Mar 30, 2026

Abstract:Text-driven 3D scene editing has attracted considerable interest due to its convenience and user-friendliness. However, methods that rely on implicit 3D representations, such as Neural Radiance Fields (NeRF), while effective in rendering complex scenes, are hindered by slow processing speeds and limited control over specific regions of the scene. Moreover, existing approaches, including Instruct-NeRF2NeRF and GaussianEditor, which utilize multi-view editing strategies, frequently produce inconsistent results across different views when executing text instructions. This inconsistency can adversely affect the overall performance of the model, complicating the task of balancing the consistency of editing results with editing efficiency. To address these challenges, we propose a novel method termed Single-View to 3D Object Editing via Gaussian Splatting (SVGS), which is a single-view text-driven editing technique based on 3D Gaussian Splatting (3DGS). Specifically, in response to text instructions, we introduce a single-view editing strategy grounded in multi-view diffusion models, which reconstructs 3D scenes by leveraging only those views that yield consistent editing results. Additionally, we employ sparse 3D Gaussian Splatting as the 3D representation, which significantly enhances editing efficiency. We conducted a comparative analysis of SVGS against existing baseline methods across various scene settings, and the results indicate that SVGS outperforms its counterparts in both editing capability and processing speed, representing a significant advancement in 3D editing technology. For further details, please visit our project page at: https://amateurc.github.io/svgs.github.io.

Alternating Gradient Flow Utility: A Unified Metric for Structural Pruning and Dynamic Routing in Deep Networks

Mar 17, 2026

Abstract:Efficient deep learning traditionally relies on static heuristics like weight magnitude or activation awareness (e.g., Wanda, RIA). While successful in unstructured settings, we observe a critical limitation when applying these metrics to the structural pruning of deep vision networks. These contemporary metrics suffer from a magnitude bias, failing to preserve critical functional pathways. To overcome this, we propose a decoupled kinetic paradigm inspired by Alternating Gradient Flow (AGF), utilizing an absolute feature-space Taylor expansion to accurately capture the network's structural "kinetic utility". First, we uncover a topological phase transition at extreme sparsity, where AGF successfully preserves baseline functionality and exhibits topological implicit regularization, avoiding the collapse seen in models trained from scratch. Second, transitioning to architectures without strict structural priors, we reveal a phenomenon of Sparsity Bottleneck in Vision Transformers (ViTs). Through a gradient-magnitude decoupling analysis, we discover that dynamic signals suffer from signal compression in converged models, rendering them suboptimal for real-time routing. Finally, driven by these empirical constraints, we design a hybrid routing framework that decouples AGF-guided offline structural search from online execution via zero-cost physical priors. We validate our paradigm on large-scale benchmarks: under a 75% compression stress test on ImageNet-1K, AGF effectively avoids the structural collapse where traditional metrics aggressively fall below random sampling. Furthermore, when systematically deployed for dynamic inference on ImageNet-100, our hybrid approach achieves Pareto-optimal efficiency. It reduces the usage of the heavy expert by approximately 50% (achieving an estimated overall cost of 0.92$\times$) without sacrificing the full-model accuracy.
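To make the contrast between magnitude-based and Taylor-based channel scoring concrete, here is a minimal sketch of the generic first-order Taylor criterion, which scores a channel by the mean of |activation × gradient| over samples. This is not the AGF utility metric from the abstract, whose exact form is not given here; all function names and the toy numbers are hypothetical.

```python
def magnitude_score(weights):
    """Static heuristic: L1 norm of one channel's weights."""
    return sum(abs(w) for w in weights)

def taylor_score(activations, gradients):
    """Generic first-order Taylor criterion: mean |a * dL/da| for one channel."""
    return sum(abs(a * g) for a, g in zip(activations, gradients)) / len(activations)

def channels_to_keep(scores, keep_ratio):
    """Indices of the highest-scoring channels, returned in ascending order."""
    k = max(1, round(len(scores) * keep_ratio))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])

# Toy example: four channels, keep 25% (i.e. 75% structural compression).
scores = [0.1, 0.9, 0.5, 0.3]
kept = channels_to_keep(scores, keep_ratio=0.25)
```

A channel with small weights can still receive a high Taylor score if the loss is sensitive to its activations, which is the kind of pathway a pure magnitude ranking would discard.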

Resource-constrained Amazons chess decision framework integrating large language models and graph attention

Mar 11, 2026

Abstract:Artificial intelligence has advanced significantly through the development of intelligent game-playing systems, providing rigorous testbeds for decision-making, strategic planning, and adaptive learning. However, resource-constrained environments pose critical challenges, as conventional deep learning methods heavily rely on extensive datasets and computational resources. In this paper, we propose a lightweight hybrid framework for the Game of the Amazons, which explores the paradigm of weak-to-strong generalization by integrating the structural reasoning of graph-based learning with the generative capabilities of large language models. Specifically, we leverage a Graph Attention Autoencoder to inform a multi-step Monte Carlo Tree Search, utilize a Stochastic Graph Genetic Algorithm to optimize evaluation signals, and harness GPT-4o-mini to generate synthetic training data. Unlike traditional approaches that rely on expert demonstrations, our framework learns from noisy and imperfect supervision. We demonstrate that the Graph Attention mechanism effectively functions as a structural filter, denoising the LLM's outputs. Experiments on a 10$\times$10 Amazons board show that our hybrid approach not only achieves a 15% to 56% improvement in decision accuracy over baselines but also significantly outperforms its teacher model (GPT-4o-mini), achieving a competitive win rate of 45.0% at N=30 nodes and a decisive 66.5% at only N=50 nodes. These results verify the feasibility of evolving specialized, high-performance game AI from general-purpose foundation models under stringent computational constraints.

DM-CFO: A Diffusion Model for Compositional 3D Tooth Generation with Collision-Free Optimization

Mar 04, 2026

Abstract:The automatic design of a 3D tooth model plays a crucial role in dental digitization. However, current approaches face challenges in compositional 3D tooth generation because both the layouts and shapes of missing teeth need to be optimized. In addition, collision conflicts are often omitted in 3D Gaussian-based compositional 3D generation, where objects may intersect with each other due to the absence of explicit geometric information on the object surfaces. Motivated by graph generation through diffusion models and collision detection using 3D Gaussians, we propose an approach named DM-CFO for compositional tooth generation, where the layout of missing teeth is progressively restored during the denoising phase under both text and graph constraints. Then, the Gaussian parameters of each layout-guided tooth and the entire jaw are alternately updated using score distillation sampling (SDS). Furthermore, a regularization term based on the distances between the 3D Gaussians of neighboring teeth and the anchor tooth is introduced to penalize tooth intersections. Experimental results on three tooth-design datasets demonstrate that our approach significantly improves the multiview consistency and realism of the generated teeth compared with existing methods. Project page: https://amateurc.github.io/CF-3DTeeth/.

Sequential, Parallel and Consecutive Hybrid Evolutionary-Swarm Optimization Metaheuristics

Aug 01, 2025

Abstract:The goal of this paper is twofold. First, it explores hybrid evolutionary-swarm metaheuristics that combine the features of Particle Swarm Optimization (PSO) and the Genetic Algorithm (GA) in a sequential, parallel, and consecutive manner, and compares them with the standard basic forms of GA and PSO. The algorithms were tested on a set of benchmark functions, including Ackley, Griewank, Levy, Michalewicz, Rastrigin, Schwefel, and Shifted Rotated Weierstrass, across multiple dimensions. The experimental results demonstrate that the hybrid approaches achieve superior convergence and consistency, especially in higher-dimensional search spaces. The second goal of this paper is to introduce a novel consecutive hybrid PSO-GA evolutionary algorithm that ensures continuity between PSO and GA steps through explicit information transfer mechanisms, specifically by modifying GA's variation operators to inherit velocity and personal best information.

* 16 pages, 2 figures, 5 tables, 5 algorithms, conference
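As a rough illustration of what alternating PSO and GA steps can look like, the sketch below runs a standard PSO velocity update followed by a simple GA-style variation step (uniform crossover between personal bests plus Gaussian mutation) on the Rastrigin benchmark. It is not the paper's algorithm: the coefficients, the 20% variation rate, the mutation scale, and the choice to let a replaced particle keep its velocity are all assumptions made for illustration.

```python
import math
import random

def rastrigin(x):
    """Rastrigin benchmark; global minimum 0 at the origin."""
    return 10.0 * len(x) + sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x)

def hybrid_pso_ga(dim=5, swarm=20, iters=100, seed=0):
    """Alternate a PSO phase and a GA-style phase; returns (initial_best, final_best)."""
    rng = random.Random(seed)
    w, c1, c2 = 0.7, 1.5, 1.5            # assumed inertia and acceleration coefficients
    lo, hi = -5.12, 5.12                 # usual Rastrigin search bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(swarm)]
    vel = [[0.0] * dim for _ in range(swarm)]
    pbest = [p[:] for p in pos]
    pbest_val = [rastrigin(p) for p in pos]
    g = min(range(swarm), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    initial_best = gbest_val
    for _ in range(iters):
        # PSO phase: standard velocity and position update, clamped to the bounds.
        for i in range(swarm):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
        # GA phase: crossover of two personal bests plus mutation; velocity is kept.
        for i in range(swarm):
            if rng.random() < 0.2:
                j = rng.randrange(swarm)
                child = [pbest[i][d] if rng.random() < 0.5 else pbest[j][d] for d in range(dim)]
                pos[i] = [min(hi, max(lo, c + rng.gauss(0.0, 0.1))) for c in child]
        # Evaluate and update personal and global bests.
        for i in range(swarm):
            val = rastrigin(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return initial_best, gbest_val

initial_best, final_best = hybrid_pso_ga()
```

Because personal and global bests are only replaced on improvement, the returned final value can never be worse than the initial one; how much it improves depends on the assumed settings.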

Global Nash Equilibrium in Non-convex Multi-player Game: Theory and Algorithms

Jan 19, 2023

Abstract:A wide range of machine learning tasks can be formulated as non-convex multi-player games, where Nash equilibrium (NE) is an acceptable solution to all players, since no one can benefit from changing its strategy unilaterally. Owing to the non-convexity, obtaining the existence condition of global NE is challenging, let alone designing theoretically guaranteed realization algorithms. This paper applies a conjugate transformation to the formulation of non-convex multi-player games, and casts the complementary problem into a variational inequality (VI) problem with a continuous pseudo-gradient mapping. We then prove the existence condition of global NE: the solution to the VI problem satisfies a duality relation. Based on this VI formulation, we design a conjugate-based ordinary differential equation (ODE) to approach global NE, which is proved to have an exponential convergence rate. To make the dynamics more implementable, we further derive a discretized algorithm. We apply our algorithm to two typical scenarios: multi-player generalized monotone game and multi-player potential game. In the two settings, we prove that the step-size setting is required to be $\mathcal{O}(1/k)$ and $\mathcal{O}(1/\sqrt k)$ to yield the convergence rates of $\mathcal{O}(1/ k)$ and $\mathcal{O}(1/\sqrt k)$, respectively. Extensive experiments in robust neural network training and sensor localization are in full agreement with our theory.

Applying Autonomous Hybrid Agent-based Computing to Difficult Optimization Problems

Oct 24, 2022

Abstract:Evolutionary multi-agent systems (EMASs) are very good at dealing with difficult, multi-dimensional problems; their efficacy was proven theoretically through analysis of the relevant Markov-chain-based model. Research now continues on introducing autonomous hybridization into EMAS. This paper focuses on a proposed hybrid version of the EMAS, and covers the selection and introduction of a number of hybrid operators and the definition of rules for starting the hybrid steps of the main algorithm. Those hybrid steps leverage existing, well-known metaheuristics that have proven efficient, and integrate their results into the main algorithm. The discussed modifications are evaluated on a number of difficult continuous-optimization benchmarks.

Spatial-Temporal-Fusion BNN: Variational Bayesian Feature Layer

Dec 12, 2021

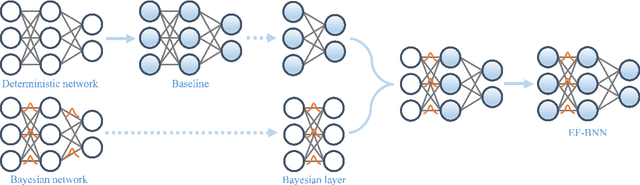

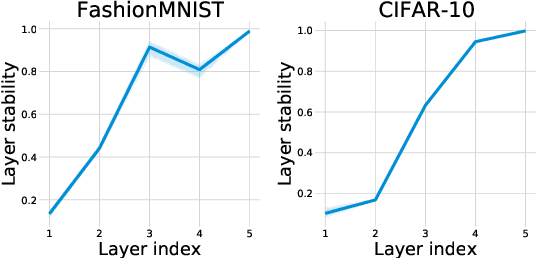

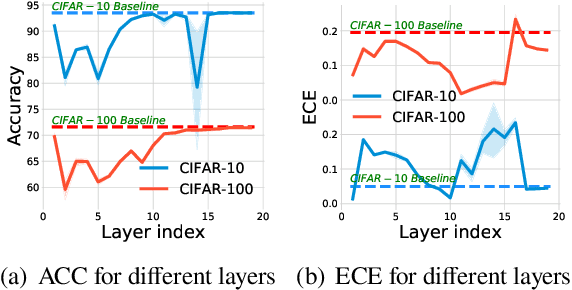

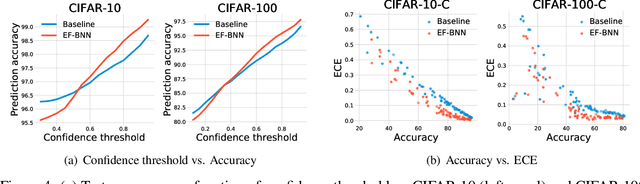

Abstract:Bayesian neural networks (BNNs) have become a principal approach to alleviate overconfident predictions in deep learning, but they often suffer from scaling issues due to a large number of distribution parameters. In this paper, we discover that the first layer of a deep network possesses multiple disparate optima when solely retrained. This indicates a large posterior variance when the first layer is replaced by a Bayesian layer, which motivates us to design a spatial-temporal-fusion BNN (STF-BNN) for efficiently scaling BNNs to large models: (1) a neural network is first trained normally from scratch to realize fast training; and (2) the first layer is converted to a Bayesian layer and inferred by stochastic variational inference, while the other layers are fixed. Compared to vanilla BNNs, our approach can greatly reduce the training time and the number of parameters, which helps scale BNNs efficiently. We further provide theoretical guarantees on the generalizability and the capability of mitigating overconfidence of STF-BNN. Comprehensive experiments demonstrate that STF-BNN (1) achieves the state-of-the-art performance on prediction and uncertainty quantification; (2) significantly improves adversarial robustness and privacy preservation; and (3) considerably reduces training time and memory costs.
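The idea of making only the first layer Bayesian can be sketched with a mean-field Gaussian linear layer trained by stochastic variational inference. The snippet below shows the two ingredients such a layer typically needs: reparameterized weight sampling and the closed-form KL term against a prior. The softplus parameterization, the standard normal prior, and all function names are assumptions for illustration, not details taken from the paper; the frozen downstream layers are omitted.

```python
import math
import random

def softplus(x):
    """Maps an unconstrained parameter rho to a positive standard deviation."""
    return math.log1p(math.exp(x))

def sample_weight(mu, rho, rng):
    """Reparameterization trick: w = mu + sigma * eps with eps ~ N(0, 1)."""
    return mu + softplus(rho) * rng.gauss(0.0, 1.0)

def kl_to_standard_normal(mu, rho):
    """KL( N(mu, sigma^2) || N(0, 1) ) for a single weight (assumed prior)."""
    sigma = softplus(rho)
    return 0.5 * (sigma * sigma + mu * mu - 1.0) - math.log(sigma)

def bayesian_first_layer(x, mus, rhos, rng):
    """One stochastic forward pass through a mean-field Gaussian linear layer."""
    return [sum(sample_weight(m, r, rng) * xi for m, r, xi in zip(row_mu, row_rho, x))
            for row_mu, row_rho in zip(mus, rhos)]

# Two inputs, one output unit; each call draws a fresh set of weights.
rng = random.Random(0)
out = bayesian_first_layer([1.0, 2.0], [[1.0, 1.0]], [[-3.0, -3.0]], rng)
```

Only the means and rho values of this one layer would be trained, with the KL term added to the data loss, which is why the parameter count stays close to that of the deterministic network.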

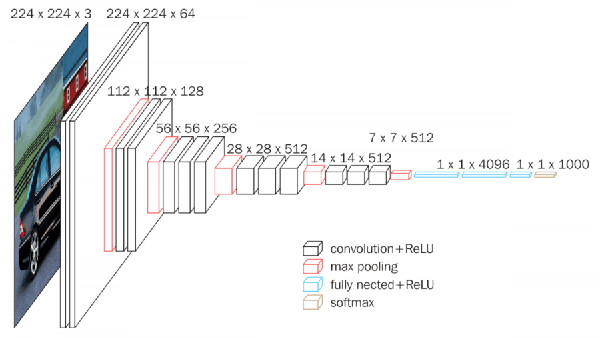

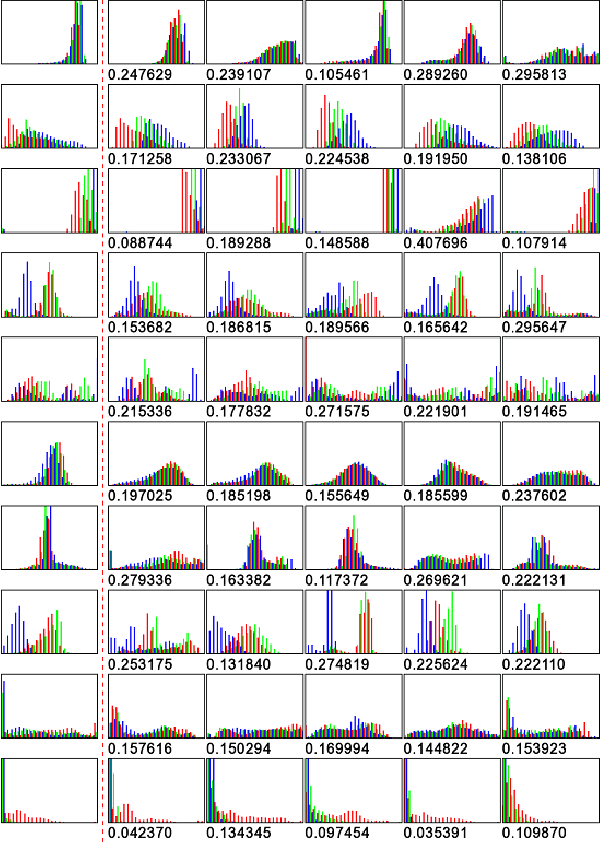

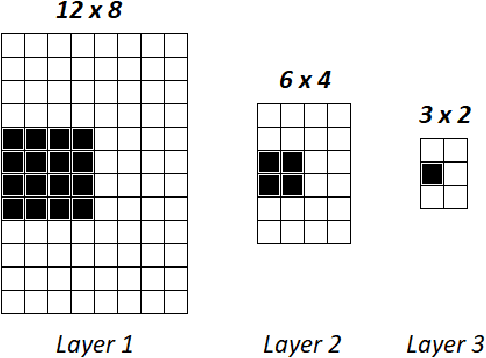

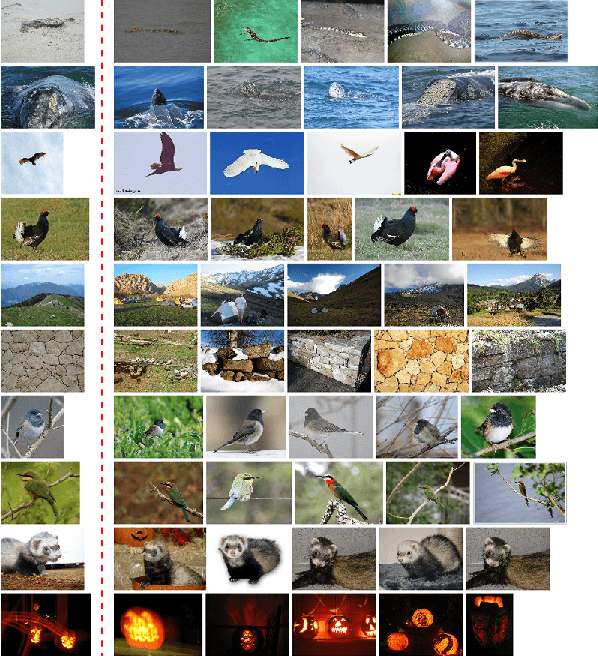

A new approach to descriptors generation for image retrieval by analyzing activations of deep neural network layers

Jul 13, 2020

Abstract:In this paper, we consider the problem of descriptor construction for the task of content-based image retrieval using deep neural networks. The idea of neural codes, based on fully connected layer activations, is extended by incorporating the information contained in convolutional layers. It is known that the total number of neurons in the convolutional part of the network is large and the majority of them have little influence on the final classification decision. Therefore, in the paper we propose a novel algorithm that allows us to extract the most significant neuron activations and utilize this information to construct effective descriptors. The descriptors consisting of values taken from both the fully connected and convolutional layers perfectly represent the whole image content. The images retrieved using these descriptors match the query image very well semantically, and they are also similar in other secondary image characteristics, such as background, textures, or color distribution. These features of the proposed descriptors are verified experimentally based on the IMAGENET1M dataset using the VGG16 neural network.
